Kernel (statistics)

In statistical analysis, the term kernel most often refers to a window function, but it has several distinct meanings in different branches of statistics.

Bayesian statistics

In statistics, especially in Bayesian statistics, the kernel of a probability density function (pdf) or probability mass function (pmf) is the form of the pdf or pmf in which any factors that are not functions of any of the variables in the domain are omitted.[1] Such factors may well be functions of the parameters of the pdf or pmf. They form part of the normalization factor of the probability distribution and are unnecessary in many situations. For example, in pseudo-random number sampling, most sampling algorithms ignore the normalization factor. In addition, in Bayesian analysis of conjugate prior distributions, the normalization factors are generally ignored during the calculations, and only the kernel is considered. At the end, the form of the kernel is examined, and if it matches a known distribution, the normalization factor can be reinstated. Otherwise, reinstating it may be unnecessary (for example, if the distribution only needs to be sampled from).

For many distributions, the kernel can be written in closed form, but not the normalization constant.

An example is the normal distribution. Its probability density function is

- [math]\displaystyle{ p(x|\mu,\sigma^2) = \frac{1}{\sqrt{2\pi\sigma^2}} e^{-\frac{(x-\mu)^2}{2\sigma^2}} }[/math]

and the associated kernel is

- [math]\displaystyle{ p(x|\mu,\sigma^2) \propto e^{-\frac{(x-\mu)^2}{2\sigma^2}} }[/math]

Note that the factor in front of the exponential has been omitted, even though it contains the parameter [math]\displaystyle{ \sigma^2 }[/math] , because it is not a function of the domain variable [math]\displaystyle{ x }[/math] .
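As an illustration of why the kernel alone is often enough, the following minimal sketch draws samples from a normal distribution using only its kernel. The choice of a random-walk Metropolis sampler, the function names, and the parameter values are assumptions made for this example and are not taken from the cited sources. The last lines also recover the omitted normalization factor by numerical integration.

```python
import math
import random


def normal_kernel(x, mu, sigma2):
    """Kernel of the normal pdf: the factor 1/sqrt(2*pi*sigma2) is omitted."""
    return math.exp(-(x - mu) ** 2 / (2.0 * sigma2))


def metropolis(kernel, n_samples, x0, step=1.0, seed=0):
    """Random-walk Metropolis sampler (illustrative choice of algorithm)."""
    rng = random.Random(seed)
    x = x0
    samples = []
    for _ in range(n_samples):
        proposal = x + rng.gauss(0.0, step)
        # Only the ratio of kernel values is used, so any constant
        # normalization factor would cancel and is never needed.
        if rng.random() < kernel(proposal) / kernel(x):
            x = proposal
        samples.append(x)
    return samples


def integrate(f, a, b, n=20000):
    """Midpoint-rule approximation of the integral of f over [a, b]."""
    dx = (b - a) / n
    return sum(f(a + (i + 0.5) * dx) for i in range(n)) * dx


mu, sigma2 = 2.0, 0.25  # hypothetical parameter values


def kernel(x):
    return normal_kernel(x, mu, sigma2)


draws = metropolis(kernel, 20000, x0=mu)
mean = sum(draws) / len(draws)
var = sum((d - mean) ** 2 for d in draws) / len(draws)
print(mean, var)  # should be close to mu and sigma2

# Integrating the kernel recovers the omitted constant sqrt(2*pi*sigma2).
sigma = math.sqrt(sigma2)
z = integrate(kernel, mu - 10 * sigma, mu + 10 * sigma)
print(z, math.sqrt(2 * math.pi * sigma2))
```

The sampler never evaluates the normalized density, which mirrors the statement that most sampling algorithms ignore the normalization factor.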

Pattern analysis

The kernel of a reproducing kernel Hilbert space is used in the suite of techniques known as kernel methods to perform tasks such as statistical classification, regression analysis, and cluster analysis on data in an implicit space. This usage is particularly common in machine learning.
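A small sketch may make this sense of the word more concrete. It assumes the Gaussian (radial basis function) kernel, which is one common positive-definite kernel but is not named in the text above, and checks numerically that the resulting matrix of pairwise kernel values (the Gram matrix) gives non-negative quadratic forms. All names and values are hypothetical.

```python
import math
import random


def gaussian_rbf(x, y, gamma=1.0):
    """Gaussian (RBF) kernel k(x, y) = exp(-gamma * ||x - y||^2)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * sq_dist)


def gram_matrix(points, k):
    """Matrix of pairwise kernel evaluations G[i][j] = k(points[i], points[j])."""
    return [[k(p, q) for q in points] for p in points]


def quadratic_form(G, z):
    """Compute z^T G z for a square matrix G given as nested lists."""
    n = len(z)
    return sum(z[i] * G[i][j] * z[j] for i in range(n) for j in range(n))


rng = random.Random(0)
points = [(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(8)]
G = gram_matrix(points, gaussian_rbf)

# For a positive-definite kernel, z^T G z >= 0 for every vector z.
# Here this is spot-checked on random vectors rather than proved.
min_q = min(
    quadratic_form(G, [rng.gauss(0.0, 1.0) for _ in points])
    for _ in range(200)
)
print(min_q >= -1e-12)
```

Kernel methods work with such pairwise values only, which is what allows them to operate in an implicit space.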

Nonparametric statistics

In nonparametric statistics, a kernel is a weighting function used in non-parametric estimation techniques. Kernels are used in kernel density estimation to estimate the density functions of random variables, and in kernel regression to estimate the conditional expectation of a random variable. Kernels are also used in time series analysis, where they are known as window functions, when the periodogram is used to estimate the spectral density. An additional use is in estimating a time-varying intensity for a point process, where window functions (kernels) are convolved with time-series data.

Commonly, kernel widths must also be specified when running a non-parametric estimation.
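The two main uses can be sketched in a few lines. The example below assumes a Gaussian kernel and, for regression, the Nadaraya-Watson form of the estimator, which is one standard choice rather than the only one. The data values and the width h are made up for illustration.

```python
import math


def gaussian_kernel(u):
    """Standard normal density, used here as the weighting function."""
    return math.exp(-0.5 * u * u) / math.sqrt(2.0 * math.pi)


def kde(x, sample, h, kernel=gaussian_kernel):
    """Kernel density estimate at x, with kernel width h."""
    return sum(kernel((x - xi) / h) for xi in sample) / (len(sample) * h)


def kernel_regression(x, xs, ys, h, kernel=gaussian_kernel):
    """Nadaraya-Watson estimate of E[Y | X = x]: a kernel-weighted mean of ys."""
    weights = [kernel((x - xi) / h) for xi in xs]
    return sum(w * y for w, y in zip(weights, ys)) / sum(weights)


# Hypothetical data for the density estimate.
sample = [1.0, 1.5, 2.0, 2.2, 3.1, 4.0]
for h in (0.2, 0.5, 1.0):
    # A small width follows the data closely; a large width smooths more.
    print(h, kde(2.0, sample, h))

# Hypothetical (x, y) pairs for the regression estimate.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [0.1, 0.9, 2.1, 2.9, 4.2]
fitted = kernel_regression(2.5, xs, ys, h=0.5)
print(fitted)
```

In both functions the width h is a free parameter, which is why it has to be specified before the estimate can be computed.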

Definition

A kernel is a non-negative real-valued integrable function K. For most applications, it is desirable to define the function to satisfy two additional requirements:

- Normalization:

- [math]\displaystyle{ \int_{-\infty}^{+\infty}K(u)\,du = 1\,; }[/math]

- Symmetry:

- [math]\displaystyle{ K(-u) = K(u) \mbox{ for all values of } u\,. }[/math]

The first requirement ensures that the method of kernel density estimation results in a probability density function. The second requirement ensures that the average of the corresponding distribution is equal to that of the sample used.
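Both consequences can be checked numerically. The sketch below assumes the Epanechnikov kernel, a small made-up sample, and a hypothetical width h; it integrates the resulting density estimate with a simple midpoint rule.

```python
def epanechnikov(u):
    """Epanechnikov kernel: non-negative, symmetric, and integrates to 1."""
    return 0.75 * (1.0 - u * u) if abs(u) <= 1.0 else 0.0


def integrate(f, a, b, n=20000):
    """Midpoint-rule approximation of the integral of f over [a, b]."""
    dx = (b - a) / n
    return sum(f(a + (i + 0.5) * dx) for i in range(n)) * dx


sample = [0.3, 1.1, 1.9, 2.4, 4.0]  # hypothetical data
h = 0.8                             # hypothetical kernel width


def density(x):
    """Kernel density estimate built from the sample."""
    return sum(epanechnikov((x - xi) / h) for xi in sample) / (len(sample) * h)


# The estimate vanishes outside [min(sample) - h, max(sample) + h].
lower, upper = min(sample) - h, max(sample) + h

total = integrate(density, lower, upper)
mean = integrate(lambda x: x * density(x), lower, upper)
sample_mean = sum(sample) / len(sample)

print(total)              # close to 1, by the normalization requirement
print(mean, sample_mean)  # close to each other, by the symmetry requirement
```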

If K is a kernel, then so is the function K* defined by K*(u) = λK(λu), where λ > 0. This can be used to select a scale that is appropriate for the data.
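The rescaling can be written as a small helper. The sketch below assumes the triangular kernel and a few arbitrary values of λ, and confirms that each rescaled function still integrates to 1 over its support, which shrinks or widens to |u| ≤ 1/λ.

```python
def triangular(u):
    """Triangular kernel with support |u| <= 1."""
    return 1.0 - abs(u) if abs(u) <= 1.0 else 0.0


def rescale(kernel, lam):
    """Return the function K*(u) = lam * K(lam * u), for lam > 0."""
    if lam <= 0:
        raise ValueError("lam must be positive")
    return lambda u: lam * kernel(lam * u)


def integrate(f, a, b, n=20000):
    """Midpoint-rule approximation of the integral of f over [a, b]."""
    dx = (b - a) / n
    return sum(f(a + (i + 0.5) * dx) for i in range(n)) * dx


areas = {}
for lam in (0.5, 1.0, 4.0):
    k_star = rescale(triangular, lam)
    # K* is supported on |u| <= 1 / lam.
    areas[lam] = integrate(k_star, -1.0 / lam, 1.0 / lam)
    print(lam, areas[lam])
```

Larger values of λ concentrate the weight near zero, and smaller values spread it out.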

Kernel functions in common use

Several types of kernel functions are commonly used: uniform, triangle, Epanechnikov,[2] quartic (biweight), tricube,[3] triweight, Gaussian, quadratic[4] and cosine.

In the table below, if [math]\displaystyle{ K }[/math] is given with a bounded support, then [math]\displaystyle{ K(u) = 0 }[/math] for values of u lying outside the support.

| Kernel Functions, K(u) | [math]\displaystyle{ \textstyle \int u^2K(u)du }[/math] | [math]\displaystyle{ \textstyle \int K(u)^2 du }[/math] | Efficiency[5] relative to the Epanechnikov kernel | ||

|---|---|---|---|---|---|

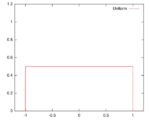

| Uniform ("rectangular window") | [math]\displaystyle{ K(u) = \frac12 }[/math]

Support: [math]\displaystyle{ |u|\leq1 }[/math] |

|

[math]\displaystyle{ \frac13 }[/math] | [math]\displaystyle{ \frac12 }[/math] | 92.9% |

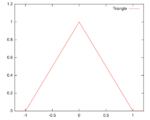

| Triangular | [math]\displaystyle{ K(u) = (1-|u|) }[/math]

Support: [math]\displaystyle{ |u|\leq1 }[/math] |

|

[math]\displaystyle{ \frac{1}{6} }[/math] | [math]\displaystyle{ \frac{2}{3} }[/math] | 98.6% |

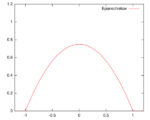

| Epanechnikov

(parabolic) |

[math]\displaystyle{ K(u) = \frac{3}{4}(1-u^2) }[/math]

Support: [math]\displaystyle{ |u|\leq1 }[/math] |

|

[math]\displaystyle{ \frac{1}{5} }[/math] | [math]\displaystyle{ \frac{3}{5} }[/math] | 100% |

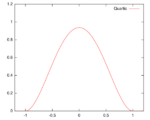

| Quartic (biweight) |

[math]\displaystyle{ K(u) = \frac{15}{16}(1-u^2)^2 }[/math]

Support: [math]\displaystyle{ |u|\leq1 }[/math] |

|

[math]\displaystyle{ \frac{1}{7} }[/math] | [math]\displaystyle{ \frac{5}{7} }[/math] | 99.4% |

| Triweight | [math]\displaystyle{ K(u) = \frac{35}{32}(1-u^2)^3 }[/math]

Support: [math]\displaystyle{ |u|\leq1 }[/math] |

|

[math]\displaystyle{ \frac{1}{9} }[/math] | [math]\displaystyle{ \frac{350}{429} }[/math] | 98.7% |

| Tricube | [math]\displaystyle{ K(u) = \frac{70}{81}(1- {\left| u \right|}^3)^3 }[/math]

Support: [math]\displaystyle{ |u|\leq1 }[/math] |

|

[math]\displaystyle{ \frac{35}{243} }[/math] | [math]\displaystyle{ \frac{175}{247} }[/math] | 99.8% |

| Gaussian | [math]\displaystyle{ K(u) = \frac{1}{\sqrt{2\pi}}e^{-\frac{1}{2}u^2} }[/math] |

|

[math]\displaystyle{ 1\, }[/math] | [math]\displaystyle{ \frac{1}{2\sqrt\pi} }[/math] | 95.1% |

| Cosine | [math]\displaystyle{ K(u) = \frac{\pi}{4}\cos\left(\frac{\pi}{2}u\right) }[/math]

Support: [math]\displaystyle{ |u|\leq1 }[/math] |

|

[math]\displaystyle{ 1-\frac{8}{\pi^2} }[/math] | [math]\displaystyle{ \frac{\pi^2}{16} }[/math] | 99.9% |

| Logistic | [math]\displaystyle{ K(u) = \frac{1}{e^{u}+2+e^{-u}} }[/math] |

|

[math]\displaystyle{ \frac{\pi^2}{3} }[/math] | [math]\displaystyle{ \frac{1}{6} }[/math] | 88.7% |

| Sigmoid function | [math]\displaystyle{ K(u) = \frac{2}{\pi}\frac{1}{e^{u}+e^{-u}} }[/math] |

|

[math]\displaystyle{ \frac{\pi^2}{4} }[/math] | [math]\displaystyle{ \frac{2}{\pi^2} }[/math] | 84.3% |

| Silverman kernel[6] | [math]\displaystyle{ K(u) = \frac{1}{2} e^{-\frac{|u|}{\sqrt{2}}} \cdot \sin\left( \frac{|u|}{\sqrt{2}}+\frac{\pi}{4}\right) }[/math] |

|

[math]\displaystyle{ 0 }[/math] | [math]\displaystyle{ \frac{3\sqrt{2}}{16} }[/math] | not applicable |
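The tabulated quantities can be checked numerically. The sketch below covers four of the kernels and uses a midpoint rule; the Gaussian kernel is truncated to |u| ≤ 10, which is an approximation made for this example. It assumes that the relative efficiency is the value of [math]\displaystyle{ \sqrt{\int u^2 K(u)\,du} \int K(u)^2\,du }[/math] for the Epanechnikov kernel divided by the value for the kernel in question, which reproduces the percentages listed.

```python
import math


def integrate(f, a, b, n=20000):
    """Midpoint-rule approximation of the integral of f over [a, b]."""
    dx = (b - a) / n
    return sum(f(a + (i + 0.5) * dx) for i in range(n)) * dx


# Each entry: (kernel restricted to its support, half-width of the
# integration range). The Gaussian range is a truncation, not a true support.
KERNELS = {
    "uniform": (lambda u: 0.5, 1.0),
    "triangular": (lambda u: 1.0 - abs(u), 1.0),
    "epanechnikov": (lambda u: 0.75 * (1.0 - u * u), 1.0),
    "gaussian": (lambda u: math.exp(-0.5 * u * u) / math.sqrt(2.0 * math.pi), 10.0),
}


def summary(kernel, half_width):
    """Return (second moment, integral of K^2, sqrt(second moment) * integral of K^2)."""
    second_moment = integrate(lambda u: u * u * kernel(u), -half_width, half_width)
    roughness = integrate(lambda u: kernel(u) ** 2, -half_width, half_width)
    return second_moment, roughness, math.sqrt(second_moment) * roughness


reference = summary(*KERNELS["epanechnikov"])[2]

efficiency = {}
for name, (kernel, half_width) in KERNELS.items():
    m2, r, c = summary(kernel, half_width)
    # Relative efficiency in percent (assumed definition, see the text above).
    efficiency[name] = 100.0 * reference / c
    print(f"{name:13s} {m2:.4f} {r:.4f} {efficiency[name]:.1f}%")
```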

See also

- Kernel density estimation

- Kernel smoother

- Stochastic kernel

- Positive-definite kernel

- Density estimation

- Multivariate kernel density estimation

- Kernel method

References

- [1] Schuster, Eugene (August 1969). "Estimation of a probability density function and its derivatives". The Annals of Mathematical Statistics 40 (4): 1187–1195. doi:10.1214/aoms/1177697495.

- [2] Named for Epanechnikov, V. A. (1969). "Non-Parametric Estimation of a Multivariate Probability Density". Theory Probab. Appl. 14 (1): 153–158. doi:10.1137/1114019.

- [3] Altman, N. S. (1992). "An introduction to kernel and nearest neighbor nonparametric regression". The American Statistician 46 (3): 175–185. doi:10.1080/00031305.1992.10475879.

- [4] Cleveland, W. S.; Devlin, S. J. (1988). "Locally weighted regression: An approach to regression analysis by local fitting". Journal of the American Statistical Association 83 (403): 596–610. doi:10.1080/01621459.1988.10478639.

- [5] Efficiency is defined as [math]\displaystyle{ \sqrt{\int u^2 K(u) \, d u} \int K(u)^2 \, d u }[/math]. The percentage given for each kernel is the value of this quantity for the Epanechnikov kernel divided by its value for that kernel.

- [6] Silverman, B. W. (1986). Density Estimation for Statistics and Data Analysis. Chapman and Hall, London.

- Li, Qi; Racine, Jeffrey S. (2007). Nonparametric Econometrics: Theory and Practice. Princeton University Press. ISBN 978-0-691-12161-1.

- Zucchini, Walter. "APPLIED SMOOTHING TECHNIQUES Part 1: Kernel Density Estimation". http://staff.ustc.edu.cn/~zwp/teach/Math-Stat/kernel.pdf.

- Comaniciu, D; Meer, P (2002). "Mean shift: A robust approach toward feature space analysis". IEEE Transactions on Pattern Analysis and Machine Intelligence 24 (5): 603–619. doi:10.1109/34.1000236.
