DMelt:IO/1 File Input and Output

File input/output

DataMelt supports many types of I/O (input/output). In most cases, the I/O part of DataMelt is based on self-descriptive file formats. The supported file types are listed in the category of files supported by DataMelt.

Here is the list of I/O supported by DataMelt:

- The native Java I/O, accessed through the java.io package;

- The native Python I/O methods and classes;

- Native DataMelt I/O classes built into the jhplot package. Several of them are based on the standard Java serialization and on XML-type serialization. Access them from jhplot.io. We will discuss some of them below;

- Native DataMelt I/O classes built around XML syntax. The file format is cross-platform;

- External databases such as:

- SQL-type (Derby and SQLite based on SQLjet). Starting from v3.8, Derby is excluded from the package since it comes with JDK as JavaDB (see the JAVAHOME/db/ directory).

- Several object-based databases (like NeoDatis)

- Maps with disk backends to store large data

- External file formats native to C++, such as ROOT and AIDA

- Google's Protocol Buffers library, which is fully integrated. All DataMelt Java data containers can therefore be read or written by a C++ program (or a program in any other language supported by Protocol Buffers).

- Data (mainly time series) can be read and saved using ASCII, Gauss, Matlab, Excel formats (PRO edition).

- DIF - Data Interchange Format (see the DIF description)

- CSV - comma-separated values format.

- EDN - an extensible data notation format.
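As an illustration of the native Python I/O item in the list above, here is a minimal sketch that stores a Python dictionary on disk and restores it with the standard pickle module. It does not use any DataMelt class; the helper names save_object and load_object, the sample data and the file name are all hypothetical. The code sticks to syntax that is valid in both Python 2.7 (the dialect used by Jython) and Python 3.

```python
import os
import pickle
import tempfile


def save_object(obj, path):
    """Serialize obj to a file (hypothetical helper, not a DataMelt API)."""
    with open(path, "wb") as f:
        # Protocol 2 can be read by both Python 2.7 and Python 3.
        pickle.dump(obj, f, 2)


def load_object(path):
    """Restore an object previously written by save_object."""
    with open(path, "rb") as f:
        return pickle.load(f)


# Hypothetical sample data: a map holding a list and a string.
data = {"x": [1.0, 2.0, 3.0], "label": "run1"}

# Write to a temporary directory so that the sketch leaves no files behind
# in the working directory.
path = os.path.join(tempfile.mkdtemp(), "data.pkl")
save_object(data, path)
restored = load_object(path)

print(restored == data)
```

Files written this way can only be read back from Python, so the DataMelt formats described below are the better choice when data must be shared with Java or C++.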

DataMelt is 100% Java, but the unique feature is that it fully supports many ways to share data between Java and C++ or other programming languages.

Input and Output

DataMelt contains several powerful classes for persistent storage of objects (data) in files. It should be noted that many DataMelt objects described before have their own methods for file input/output (with or without compression). Any data container for arrays can be initialized from a file (on disk or from a URL).

For example, let's create a PND object representing a multidimensional matrix. We will initialize this object from a URL. The number of columns and rows can be arbitrary.

>>> from jhplot import *

>>> pn=PND('data','http://jwork.org/dmelt/examples/data/pnd.d')

>>> print pn.toString()

Run this script and you will see the contents of this container. Now you can project this matrix into 1D, or make an X-Y plot using any column or row.

Still, one can use external classes from the jhplot.io package to write and read data in a persistent form. This will be considered in this section.

Here is a short summary of the input-output classes for data:

jhplot.IO - Write/read any Java object (lists, maps, etc.) using the Java serialization.

jhplot.io.Serialized - Write/read any Java object (lists, maps, etc.) using the Java serialization. Kept for backward compatibility with ScaVis.

jhplot.io.HFile - Write/read any Java object in sequential order using the Java serialization.

jhplot.io.HFileXML - Write/read any Java object in sequential order using the XML serialization.

jhplot.io.PFile - Write/read DataMelt data structures (P1D, P2D, H1D, H2D) in files using Google's Protocol Buffers (cross-platform).

jhplot.io.EFile - Write/read DataMelt data structures (P1D, P2D, H1D, H2D) in sequential order into ntuples using Google's Protocol Buffers (cross-platform).

DataMelt file formats

DataMelt supports the following formats:

- Files based on the Java serialization, which include a compressed binary format and human-readable XML formats. The extension is ".jser". Such files are generated by the HFile class. Generally, all graphical attributes can be saved (which can make the files larger than expected). Read about this in Java serialization IO.

- File formats based on Google's Protocol Buffers. The files can be generated by the PFile class. The extension is ".jpbu". This is a cross-platform data format, but the data are not human readable. It is also the fastest engine and produces small output files. Read about it in Cross-platform IO.

- XML-based format with the extension ".jdat". Such files can be generated by the HBook class. Generally, files in this format are significantly smaller than those produced by the usual XML serialization, since data blocks do not have XML tags.

- External ROOT and AIDA file formats. DataMelt can read and display data from such files, but does not write data in such formats. Read about this in Other file format.

Using a data browser, you can list and read data stored in files with the following extensions:

- ".jser" (HFile compressed Java serialization). Any Java object can be saved and restored as long as it implements java.io.Serializable. All DataMelt data objects can be saved/restored in this format. Files are JVM independent, meaning an object can be serialized on one platform and deserialized on an entirely different platform.

- ".jxml" (HFileXML XML serialization). Human readable, but the file size is 5 times larger than for *.jser.

- ".jpbu" (PFile compressed Google Protocol Buffers serialization with cross-platform support). All data structures from the jhplot package are supported.

- ".jdat" (HBook human-readable XML format). Good cross-platform compatibility.

- ".root" (zip-compressed ROOT format)

- ".aida" / ".xml" (AIDA XML format)

You can open such data files and plot their contents (or show them as a table) as follows:

- Start DataMelt IDE.

- In the toolbar, go to "Plots" and then "HPlot" (or "HPlot3D" in the case of 3D objects). You will see a canvas.

- In the canvas, select "File" → "Open data file". Then look for files with the extensions listed above. When you click on a file, you will see a table to the left of the browser. Then select an object and plot it.

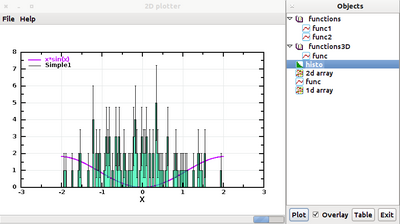

You can open the data browser for any supported file from inside a Java or Jython script. In this case, the program executes the script and brings up two windows: one is a canvas and the other shows the objects inside the input file. The file should have the extension *.jdat, *.jpbu, *.jser, *.root or *.aida. The file can also be located at a URL.

Data objects can be organized in directories and shown in the data browser as a tree. Below is a simple script which brings up the file browser and the canvas exactly as shown in the figure above:

from jhplot import *

from jhplot.io import *

c1=HPlot()

c1.visible()

c1.setAutoRange()

BrowserData("output.jdat",c1) # show the browser for existing files

BrowserData("output.jpbu",c1)

ASCII formats

The Data Interchange Format (extension .dif) and comma-separated values (CSV, extension .csv) are text file formats used to import/export single spreadsheets. This small example shows how to read DIF files:

import dif

f=open("nature04632-s16-2.dif",'r')

d = dif.DIF(f)

print d.header

print d.vectors

print d.data

Note that this module is pure Python, so this example will not work using Java.
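CSV files can be read in a similar pure-Python way. The sketch below uses the standard csv module and is not based on any DataMelt class. The helper name read_csv_columns, the file name and the sample values are hypothetical, and the file is assumed to contain a header line followed by purely numeric rows.

```python
import csv
import os
import tempfile


def read_csv_columns(path):
    """Read a CSV file with one header line followed by numeric rows.

    Hypothetical helper: returns (header, rows), where rows is a list
    of lists of floats.
    """
    with open(path, "r") as f:
        reader = csv.reader(f)
        header = next(reader)  # first line holds the column names
        rows = [[float(v) for v in row] for row in reader if row]
    return header, rows


# Create a small sample file (hypothetical data) so that the sketch
# is self-contained.
sample_path = os.path.join(tempfile.mkdtemp(), "sample.csv")
with open(sample_path, "w") as f:
    f.write("time,value\n0.0,1.5\n1.0,2.5\n2.0,4.0\n")

header, rows = read_csv_columns(sample_path)
values = [r[1] for r in rows]  # extract the second column

print(header)
print(values)
```

The resulting lists of numbers are ordinary Python lists, so they can be processed further in the same script.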