Quadratic unconstrained binary optimization

Quadratic unconstrained binary optimization (QUBO), also known as unconstrained binary quadratic programming (UBQP), is a combinatorial optimization problem with a wide range of applications from finance and economics to machine learning.[1] QUBO is NP-hard, and embeddings into QUBO have been formulated for many classical problems from theoretical computer science, such as maximum cut, graph coloring and the partition problem.[2][3] Embeddings for machine learning models include support-vector machines, clustering and probabilistic graphical models.[4] Moreover, due to its close connection to Ising models, QUBO constitutes a central problem class for adiabatic quantum computation, where it is solved through a physical process called quantum annealing.[5]

Definition

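Let $\mathbb{B}=\{0,1\}$ be the set of binary digits (or bits); then $\mathbb{B}^n$ is the set of binary vectors of fixed length $n>0$. Given a symmetric or upper triangular matrix $Q\in\mathbb{R}^{n\times n}$, whose entries $Q_{ij}$ define a weight for each pair of indices $i,j\in\{1,\dots,n\}$, we can define the function $f_Q:\mathbb{B}^n\to\mathbb{R}$ that assigns a value to each binary vector $x$ through

$$f_Q(x) = x^\top Q x = \sum_{i=1}^{n}\sum_{j=1}^{n} Q_{ij}\,x_i x_j.$$

Alternatively, the linear and quadratic parts can be separated as

$$f_{Q',q}(x) = x^\top Q' x + q^\top x,$$

where $Q'\in\mathbb{R}^{n\times n}$ and $q\in\mathbb{R}^n$. This is equivalent to the previous definition through $Q = Q' + \operatorname{diag}[q]$ using the diag operator, exploiting that $x_i^2 = x_i$ for all binary values $x_i\in\mathbb{B}$.

Intuitively, the weight $Q_{ij}$ is added if both $x_i=1$ and $x_j=1$. The QUBO problem consists of finding a binary vector $x^*$ that minimizes $f_Q$, i.e., $f_Q(x^*)\le f_Q(x)$ for all $x\in\mathbb{B}^n$.

In general, $x^*$ is not unique, meaning there may be a set of minimizing vectors with equal value w.r.t. $f_Q$. The complexity of QUBO arises from the number of candidate binary vectors to be evaluated, as $|\mathbb{B}^n| = 2^n$ grows exponentially in $n$.

Sometimes, QUBO is defined as the problem of maximizing $f_Q$, which is equivalent to minimizing $f_{-Q} = -f_Q$.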
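The definition can be illustrated with a minimal brute-force sketch in Python. The function names `qubo_value` and `brute_force_minimize` and the two-variable matrix `Q_example` are hypothetical, chosen only for this illustration; enumeration is feasible only for very small numbers of variables, since all candidate vectors are visited.

```python
from itertools import product

def qubo_value(Q, x):
    """Evaluate sum_ij Q[i][j] * x[i] * x[j] for a 0/1 vector x."""
    n = len(x)
    return sum(Q[i][j] * x[i] * x[j] for i in range(n) for j in range(n))

def brute_force_minimize(Q):
    """Enumerate all binary vectors; return (minimum value, list of all minimizers)."""
    best, argmins = None, []
    for x in product((0, 1), repeat=len(Q)):
        value = qubo_value(Q, x)
        if best is None or value < best:
            best, argmins = value, [x]
        elif value == best:
            argmins.append(x)
    return best, argmins

# Hypothetical instance in upper-triangular form:
# the objective is -x1 - x2 + 2*x1*x2.
Q_example = [
    [-1, 2],
    [0, -1],
]
best_value, minimizers = brute_force_minimize(Q_example)
# best_value is -1, attained by both (0, 1) and (1, 0)
```

The example has two minimizers, which shows that the optimum need not be unique.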

Properties

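QUBO is scale invariant for positive factors $\alpha>0$, which leave the optimum $x^*$ unchanged:

- $f_{\alpha Q}(x) = \sum_{i,j} (\alpha Q_{ij})\,x_i x_j = \alpha\sum_{i,j} Q_{ij}\,x_i x_j = \alpha f_Q(x)$.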

In its general form, QUBO is NP-hard, so no polynomial-time algorithm for it is known, and none exists unless P = NP.[6] However, there are polynomially solvable special cases, where $Q$ has certain properties,[7] for example:

- If all coefficients are positive, the optimum is trivially $x^*=(0,\dots,0)^\top$. Similarly, if all coefficients are negative, the optimum is $x^*=(1,\dots,1)^\top$.

- If $Q$ is diagonal, the bits can be optimized independently, and the problem is solvable in $\mathcal{O}(n)$. The optimal variable assignments are simply $x_i^*=1$ if $Q_{ii}<0$, and $x_i^*=0$ otherwise.

- If all off-diagonal elements of $Q$ are non-positive, the corresponding QUBO problem is solvable in polynomial time.[8]
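As a small illustration of the diagonal special case, the following Python sketch sets each bit independently from the sign of its diagonal weight and compares the result with exhaustive enumeration. The helper names and the example weights are hypothetical.

```python
from itertools import product

def solve_diagonal_qubo(diagonal):
    """Set bit i to 1 exactly when its diagonal weight is negative."""
    return tuple(1 if q < 0 else 0 for q in diagonal)

def diagonal_value(diagonal, x):
    """Objective of a diagonal QUBO: sum_i Q_ii * x_i."""
    return sum(q * xi for q, xi in zip(diagonal, x))

# Hypothetical diagonal weights Q_11, ..., Q_44.
diag_example = [3, -2, 0, -5]
x_star = solve_diagonal_qubo(diag_example)  # (0, 1, 0, 1)

# Cross-check against exhaustive enumeration of all 16 vectors.
brute_best = min(
    diagonal_value(diag_example, x)
    for x in product((0, 1), repeat=len(diag_example))
)
```

A zero weight leaves the objective unchanged either way, so the sketch follows the rule stated above and sets that bit to 0.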

QUBO can be solved using integer linear programming solvers like CPLEX or Gurobi Optimizer. This is possible since QUBO can be reformulated as a linearly constrained binary optimization problem. To achieve this, substitute each product $x_i x_j$ by an additional binary variable $z_{ij}\in\{0,1\}$ and add the constraints $x_i\ge z_{ij}$, $x_j\ge z_{ij}$ and $x_i+x_j-1\le z_{ij}$. Note that $z_{ij}$ can also be relaxed to continuous variables within the bounds zero and one.
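To see why this linearization is exact for binary variables, the short Python sketch below enumerates, for each pair of bit values, which values of the auxiliary variable satisfy the three constraints. The function name `feasible_z` is hypothetical, and no ILP solver is involved; the sketch only checks the constraint logic.

```python
from itertools import product

def feasible_z(xi, xj):
    """All z in {0, 1} with z <= xi, z <= xj and z >= xi + xj - 1."""
    return [z for z in (0, 1) if z <= xi and z <= xj and z >= xi + xj - 1]

# Map every pair of bit values to the feasible values of the auxiliary variable.
linearization_table = {
    (xi, xj): feasible_z(xi, xj) for xi, xj in product((0, 1), repeat=2)
}
# Each entry contains exactly one value, namely the product xi * xj.
```

Because the only feasible value always equals the product of the two bits, the linear program over binary variables has the same objective values as the original quadratic problem.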

Applications

QUBO is a structurally simple, yet computationally hard optimization problem. It can be used to encode a wide range of optimization problems from various scientific areas.[9]

Maximum Cut

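Given a graph $G=(V,E)$ with vertex set $V=\{1,\dots,n\}$ and edges $E\subseteq V\times V$, the maximum cut (max-cut) problem consists of finding two subsets $S,T\subseteq V$ with $T=V\setminus S$, such that the number of edges between $S$ and $T$ is maximized.

The more general weighted max-cut problem assumes edge weights $w_{ij}\ge 0$ for all $i,j\in V$, with $w_{ij}=0$ whenever $(i,j)\notin E$, and asks for a partition $S,T\subseteq V$ that maximizes the sum of edge weights between $S$ and $T$, i.e.,

$$\max_{S\subseteq V}\ \sum_{i\in S,\ j\in V\setminus S} w_{ij}.$$

By setting $w_{ij}=1$ for all $(i,j)\in E$ this becomes equivalent to the original max-cut problem above, which is why we focus on this more general form in the following.

For every vertex $i\in V$ we introduce a binary variable $x_i$ with the interpretation $x_i=0$ if $i\in S$ and $x_i=1$ if $i\in T$. As $T=V\setminus S$, every $i$ is in exactly one set, meaning there is a 1:1 correspondence between binary vectors $x\in\mathbb{B}^n$ and partitions of $V$ into two subsets.

We observe that, for any $i,j\in V$, the expression $x_i(1-x_j)+(1-x_i)x_j$ evaluates to 1 if and only if $i$ and $j$ are in different subsets, equivalent to logical XOR. Let $W\in\mathbb{R}_{\ge 0}^{n\times n}$ with $W_{ij}=w_{ij}$ for all $i,j\in V$. By extending the above expression to matrix-vector form we find that

$$x^\top W(\mathbf{1}-x) + (\mathbf{1}-x)^\top W x = -2\,x^\top W x + (W\mathbf{1}+W^\top\mathbf{1})^\top x$$

is the sum of the weights $W_{ij}$ over all ordered pairs $(i,j)$ whose endpoints lie in different subsets, where $\mathbf{1}=(1,\dots,1)^\top\in\mathbb{R}^n$. For an undirected graph with symmetric $W$, every edge between $S$ and $T$ is counted twice, which does not change the maximizer. As this is a quadratic function over $x$, it is a QUBO problem whose parameter matrix we can read from the above expression as

$$Q = 2W - \operatorname{diag}[W\mathbf{1}+W^\top\mathbf{1}]$$

after flipping the sign to make it a minimization problem.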
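The construction can be checked numerically with a minimal Python sketch. It assumes the parameter matrix Q = 2W − diag[W1 + Wᵀ1] and stores each undirected edge once in an upper-triangular weight matrix so that every cut edge is counted once. The function names and the 4-cycle example are hypothetical.

```python
from itertools import product

def maxcut_qubo(W):
    """Build Q = 2W - diag(W1 + W^T 1) from a weight matrix W."""
    n = len(W)
    Q = [[2 * W[i][j] for j in range(n)] for i in range(n)]
    for i in range(n):
        row_sum = sum(W[i][j] for j in range(n))
        col_sum = sum(W[j][i] for j in range(n))
        Q[i][i] -= row_sum + col_sum
    return Q

def cut_weight(W, x):
    """Sum of W[i][j] over ordered pairs (i, j) with x[i] != x[j]."""
    n = len(W)
    return sum(
        W[i][j] * (x[i] * (1 - x[j]) + (1 - x[i]) * x[j])
        for i in range(n) for j in range(n)
    )

def qubo_value(Q, x):
    """Evaluate x^T Q x for a 0/1 vector x."""
    n = len(x)
    return sum(Q[i][j] * x[i] * x[j] for i in range(n) for j in range(n))

# Hypothetical unweighted 4-cycle 0-1-2-3-0, each edge stored once.
W_cycle = [
    [0, 1, 0, 1],
    [0, 0, 1, 0],
    [0, 0, 0, 1],
    [0, 0, 0, 0],
]
Q_cycle = maxcut_qubo(W_cycle)
all_x = list(product((0, 1), repeat=4))
best_x = min(all_x, key=lambda x: qubo_value(Q_cycle, x))
best_cut = cut_weight(W_cycle, best_x)  # all 4 edges of the cycle are cut
```

For every binary vector the QUBO value equals the negated cut weight, so minimizing the QUBO maximizes the cut.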

Cluster Analysis

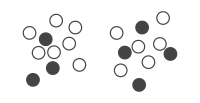

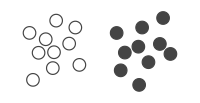

Next, we consider the problem of cluster analysis, where we are given a set of $N$ points in $d$-dimensional space and want to assign each point to one of two classes or clusters, such that points in the same cluster are similar to each other. For this example we set $N=20$ and $d=2$. The data is given as a matrix $X\in\mathbb{R}^{20\times 2}$, where each row contains two Cartesian coordinates. For two clusters, we can assign a binary variable $x_i\in\mathbb{B}$ to the point corresponding to the $i$-th row in $X$, indicating whether it belongs to the first ($x_i=0$) or second cluster ($x_i=1$). Consequently, we have 20 binary variables, which form a binary vector $x\in\mathbb{B}^{20}$ that corresponds to a cluster assignment of all points (see figure).

One way to derive a clustering is to consider the pairwise distances between points. Given a cluster assignment $x$, the expression $x_i x_j + (1-x_i)(1-x_j)$ evaluates to 1 if points $i$ and $j$ are in the same cluster. Similarly, $x_i(1-x_j)+(1-x_i)x_j=1$ indicates that they are in different clusters. Let $d_{ij}\ge 0$ denote the Euclidean distance between the points $i$ and $j$, i.e.,

- $d_{ij} = \lVert X_{i:}-X_{j:}\rVert_2 = \sqrt{(X_{i1}-X_{j1})^2+(X_{i2}-X_{j2})^2}$,

where $X_{i:}$ is the $i$-th row of $X$.

In order to define a cost function to minimize, when points $i$ and $j$ are in the same cluster we add their positive distance $d_{ij}$, and subtract it when they are in different clusters. This way, an optimal solution tends to place points which are far apart into different clusters, and points that are close into the same cluster.

Let $D\in\mathbb{R}^{N\times N}$ with $D_{ij}=d_{ij}/2$ for all $i,j\in\{1,\dots,N\}$. Given an assignment $x$, such a cost function is given by

$$\begin{aligned} f(x) &= x^\top D x - x^\top D(\mathbf{1}-x) - (\mathbf{1}-x)^\top D x + (\mathbf{1}-x)^\top D(\mathbf{1}-x) \\ &= 4\,x^\top D x - 4\cdot\mathbf{1}^\top D x + \mathbf{1}^\top D\mathbf{1}, \end{aligned}$$

where $\mathbf{1}=(1,\dots,1)^\top\in\mathbb{R}^N$ and the second line uses the symmetry of $D$.

From the second line we can see that this expression can be re-arranged to a QUBO problem by defining

$$Q = 4D - 4\operatorname{diag}[D\mathbf{1}]$$

and ignoring the constant term $\mathbf{1}^\top D\mathbf{1}$. Using these parameters, a binary vector minimizing this QUBO instance will correspond to an optimal cluster assignment w.r.t. the above cost function.
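The following Python sketch illustrates the construction on a tiny data set. It assumes that the matrix D holds half the pairwise Euclidean distances and that Q = 4D − 4 diag[D1]. The four points and all function names are hypothetical and are not the 20-point example from the figure.

```python
from itertools import product
from math import dist

def clustering_qubo(points):
    """Return (Q, constant) with cost(x) = x^T Q x + constant."""
    n = len(points)
    D = [[dist(points[i], points[j]) / 2 for j in range(n)] for i in range(n)]
    Q = [[4 * D[i][j] for j in range(n)] for i in range(n)]
    for i in range(n):
        Q[i][i] -= 4 * sum(D[i])           # -4 * (D 1)_i on the diagonal
    constant = sum(sum(row) for row in D)  # 1^T D 1
    return Q, constant

def clustering_cost(points, x):
    """Add half the distance per ordered pair in the same cluster, subtract it otherwise."""
    n = len(points)
    total = 0.0
    for i in range(n):
        for j in range(n):
            sign = 1 if x[i] == x[j] else -1
            total += sign * dist(points[i], points[j]) / 2
    return total

def qubo_value(Q, x):
    """Evaluate x^T Q x for a 0/1 vector x."""
    n = len(x)
    return sum(Q[i][j] * x[i] * x[j] for i in range(n) for j in range(n))

# Hypothetical data: two points near the origin, two points far away.
points_example = [(0.0, 0.0), (0.0, 1.0), (5.0, 5.0), (5.0, 6.0)]
Q_cluster, const_cluster = clustering_qubo(points_example)
assignments = list(product((0, 1), repeat=len(points_example)))
best_assignment = min(assignments, key=lambda x: qubo_value(Q_cluster, x))
```

On this data the minimizer separates the two nearby pairs, and the QUBO value plus the constant reproduces the cost function for every assignment.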

Connection to Ising models

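QUBO is very closely related and computationally equivalent to the Ising model, whose Hamiltonian function is defined as

$$H(\sigma) = \sigma^\top J\sigma + h^\top\sigma = \sum_{i,j} J_{ij}\,\sigma_i\sigma_j + \sum_{j} h_j\sigma_j$$

with real-valued parameters $h_j, J_{ij}$ for all $i,j$. The spin variables $\sigma_j$ are binary with values from $\{-1,+1\}$ instead of $\mathbb{B}$. Note that this formulation is simplified, since, in a physics context, $\sigma_j$ are typically Pauli operators, which are complex-valued matrices of size $2\times 2$, whereas here we treat them as binary variables. Many formulations of the Ising model Hamiltonian further assume that the variables are arranged in a lattice, where only neighboring pairs of variables can have non-zero coefficients; here, we simply assume that $J_{ij}=0$ if $i$ and $j$ are not neighbors.

Applying the substitution $\sigma = 2x-\mathbf{1}$ yields an equivalent QUBO problem[10]

$$\sigma^\top J\sigma + h^\top\sigma = (2x-\mathbf{1})^\top J(2x-\mathbf{1}) + h^\top(2x-\mathbf{1}) = 4\,x^\top J x + (2h-2J\mathbf{1}-2J^\top\mathbf{1})^\top x + \mathbf{1}^\top J\mathbf{1} - h^\top\mathbf{1},$$

whose weight matrix $Q$ is given by

$$Q = 4J + \operatorname{diag}[2h-2J\mathbf{1}-2J^\top\mathbf{1}],$$

again ignoring the constant term, which does not affect the minimization. Using the substitution $x = (\sigma+\mathbf{1})/2$, a QUBO problem with matrix $Q$ can be converted to an equivalent Ising model using the same technique, yielding

$$J = \tfrac{1}{4}Q, \qquad h = \tfrac{1}{4}\,(Q\mathbf{1}+Q^\top\mathbf{1})$$

and a constant offset of $\mathbf{1}^\top Q\mathbf{1}/4$ (diagonal entries of $J$ contribute only a further constant, since $\sigma_i^2=1$).[10]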
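Both conversions can be sketched in a few lines of Python. The sketch assumes the sign convention H(σ) = σᵀJσ + hᵀσ with spins in {−1, +1}, the substitution σ = 2x − 1, and that J may carry diagonal entries. All function names and the two-spin example are hypothetical.

```python
from itertools import product

def ising_energy(J, h, sigma):
    """Evaluate sigma^T J sigma + h^T sigma for spins in {-1, +1}."""
    n = len(sigma)
    quad = sum(J[i][j] * sigma[i] * sigma[j] for i in range(n) for j in range(n))
    return quad + sum(h[i] * sigma[i] for i in range(n))

def qubo_value(Q, x):
    """Evaluate x^T Q x for a 0/1 vector x."""
    n = len(x)
    return sum(Q[i][j] * x[i] * x[j] for i in range(n) for j in range(n))

def ising_to_qubo(J, h):
    """Return (Q, constant) with H(2x - 1) = x^T Q x + constant."""
    n = len(h)
    Q = [[4 * J[i][j] for j in range(n)] for i in range(n)]
    for i in range(n):
        row_sum = sum(J[i][j] for j in range(n))
        col_sum = sum(J[j][i] for j in range(n))
        Q[i][i] += 2 * h[i] - 2 * row_sum - 2 * col_sum
    constant = sum(map(sum, J)) - sum(h)
    return Q, constant

def qubo_to_ising(Q):
    """Return (J, h, offset) with x^T Q x = H(2x - 1) + offset."""
    n = len(Q)
    J = [[Q[i][j] / 4 for j in range(n)] for i in range(n)]
    h = [(sum(Q[i]) + sum(Q[k][i] for k in range(n))) / 4 for i in range(n)]
    offset = sum(map(sum, Q)) / 4
    return J, h, offset

# Hypothetical two-spin instance.
J_example = [[0, 1], [0, 0]]
h_example = [0.5, -1]
Q_from_ising, constant = ising_to_qubo(J_example, h_example)
J_back, h_back, offset_back = qubo_to_ising(Q_from_ising)
```

Converting back does not reproduce the original parameters entry by entry, because the diagonal of the returned coupling matrix absorbs part of the constant, but the energies agree for every spin configuration once the offsets are included.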

References

- [1] Kochenberger, Gary; Hao, Jin-Kao; Glover, Fred; Lewis, Mark; Lu, Zhipeng; Wang, Haibo; Wang, Yang (2014). "The unconstrained binary quadratic programming problem: a survey". Journal of Combinatorial Optimization 28: 58–81. doi:10.1007/s10878-014-9734-0. https://leeds-faculty.colorado.edu/glover/454%20-%20xQx%20survey%20article%20as%20published%202014.pdf.

- [2] Glover, Fred; Kochenberger, Gary (2019). "A Tutorial on Formulating and Using QUBO Models". arXiv:1811.11538 [cs.DS].

- [3] Lucas, Andrew (2014). "Ising formulations of many NP problems". Frontiers in Physics 2: 5. doi:10.3389/fphy.2014.00005. Bibcode: 2014FrP.....2....5L.

- [4] Mücke, Sascha; Piatkowski, Nico; Morik, Katharina (2019). "Learning Bit by Bit: Extracting the Essence of Machine Learning". LWDA. https://pdfs.semanticscholar.org/f484/b4a789e1563b91a416a7cfabbf72f0aa3b2a.pdf.

- [5] Simonite, Tom (8 May 2013). "D-Wave's Quantum Computer Goes to the Races, Wins". MIT Technology Review. http://www.technologyreview.com/view/514686/d-waves-quantum-computer-goes-to-the-races-wins/.

- [6] Punnen, A. P. (ed.) (2022). The Quadratic Unconstrained Binary Optimization Problem: Theory, Algorithms, and Applications. Springer.

- [7] Çela, E.; Punnen, A. P. (2022). "Complexity and Polynomially Solvable Special Cases of QUBO". In: Punnen, A. P. (ed.), The Quadratic Unconstrained Binary Optimization Problem. Springer, Cham. https://doi.org/10.1007/978-3-031-04520-2_3

- [8] See Theorem 3.16 in Punnen (2022); note that the authors assume the maximization version of QUBO.

- [9] Ratke, Daniel (2021-06-10). "List of QUBO formulations". https://blog.xa0.de/post/List-of-QUBO-formulations/.

- [10] Mücke, S. (2025). Quantum-Classical Optimization in Machine Learning. Shaker Verlag. https://d-nb.info/1368090214

External links

- QUBO Benchmark (Benchmark of software packages for the exact solution of QUBOs; part of the well-known Mittelmann benchmark collection)

- Endre Boros, Peter L Hammer & Gabriel Tavares (April 2007). "Local search heuristics for Quadratic Unconstrained Binary Optimization (QUBO)". Journal of Heuristics (Association for Computing Machinery) 13 (2): 99–132. doi:10.1007/s10732-007-9009-3. http://portal.acm.org/citation.cfm?id=1231283. Retrieved 12 May 2013.

- Di Wang & Robert Kleinberg (November 2009). "Analyzing quadratic unconstrained binary optimization problems via multicommodity flows". Discrete Applied Mathematics (Elsevier) 157 (18): 3746–3753. doi:10.1016/j.dam.2009.07.009. PMID 20161596.

- Hiroshima University and NTT DATA Group Corporation: "QUBO++ with ABS2 GPU QUBO Solver" (software).
