Multivariate stable distribution

|

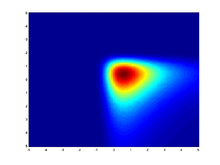

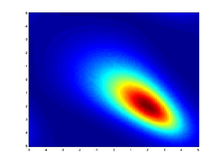

Probability density function  Heatmap showing a Multivariate (bivariate) stable distribution with α = 1.1 | |||

| Parameters |

[math]\displaystyle{ \alpha \in (0,2] }[/math] — exponent [math]\displaystyle{ \delta \in \mathbb{R}^d }[/math] - shift/location vector [math]\displaystyle{ \Lambda(s) }[/math] - a spectral finite measure on the sphere | ||

|---|---|---|---|

| Support | [math]\displaystyle{ u \in \mathbb{R}^d }[/math] | ||

| (no analytic expression) | |||

| CDF | (no analytic expression) | ||

| Variance | Infinite when [math]\displaystyle{ \alpha \lt 2 }[/math] | ||

| CF | see text | ||

The multivariate stable distribution is a multivariate generalisation of the univariate stable distribution: its marginals are stable, and every linear combination of its components is univariate stable with the same index α. As in the univariate case, the distribution is defined in terms of its characteristic function.

The multivariate stable distribution can also be thought of as an extension of the multivariate normal distribution. It has a parameter α, defined over the range 0 < α ≤ 2, and the case α = 2 is equivalent to the multivariate normal distribution. It has an additional skewness parameter that allows for non-symmetric distributions, whereas the multivariate normal distribution is always symmetric.

Definition

Let [math]\displaystyle{ \mathbb{S} }[/math] be the unit sphere in [math]\displaystyle{ \mathbb R^d\colon \mathbb{S} = \{u \in \mathbb R^d\colon|u| = 1\} }[/math]. A random vector [math]\displaystyle{ X }[/math] has a multivariate stable distribution, denoted [math]\displaystyle{ X \sim S(\alpha, \Lambda, \delta) }[/math], if the joint characteristic function of [math]\displaystyle{ X }[/math] is[1]

- [math]\displaystyle{ \operatorname{E} \exp(i u^T X) = \exp \left\{-\int \limits_{s \in \mathbb S}\left\{|u^Ts|^\alpha + i \nu (u^Ts, \alpha) \right\} \, \Lambda(ds) + i u^T\delta\right\} }[/math]

where 0 < α < 2, and for [math]\displaystyle{ y\in\mathbb R }[/math]

- [math]\displaystyle{ \nu(y,\alpha) =\begin{cases} -\mathbf{sign}(y) \tan(\pi \alpha / 2)|y|^\alpha & \alpha \ne 1, \\ (2/\pi)y \ln |y| & \alpha=1. \end{cases} }[/math]

This is essentially the result of Feldheim:[2] any stable random vector can be characterized by a spectral measure [math]\displaystyle{ \Lambda }[/math] (a finite measure on [math]\displaystyle{ \mathbb S }[/math]) and a shift vector [math]\displaystyle{ \delta \in \mathbb R^d }[/math].
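The defining integral can be evaluated numerically when Λ is approximated by finitely many point masses on the sphere, which turns the integral into a weighted sum. The sketch below assumes NumPy and such an atomic approximation; `nu` and `stable_cf` are hypothetical names, not functions from any stable-distribution package.

```python
import numpy as np


def nu(y, alpha):
    """nu(y, alpha) from the definition; uses the convention 0 * ln 0 = 0 when alpha == 1."""
    y = np.asarray(y, dtype=float)
    if alpha != 1:
        return -np.sign(y) * np.tan(np.pi * alpha / 2) * np.abs(y) ** alpha
    with np.errstate(divide="ignore", invalid="ignore"):
        out = (2 / np.pi) * y * np.log(np.abs(y))
    return np.where(y == 0, 0.0, out)


def stable_cf(u, alpha, atoms, masses, delta):
    """E exp(i u^T X) when Lambda is approximated by point masses `masses` at unit vectors `atoms`."""
    u = np.asarray(u, dtype=float)
    proj = np.asarray(atoms, dtype=float) @ u            # u^T s for every atom s
    integrand = np.abs(proj) ** alpha + 1j * nu(proj, alpha)
    integral = np.sum(np.asarray(masses, dtype=float) * integrand)  # the integral over the sphere
    return np.exp(-integral + 1j * (u @ np.asarray(delta, dtype=float)))


# Example: unit masses at the two standard basis vectors of R^2, alpha = 1.5.
atoms = np.eye(2)
print(stable_cf([0.3, -0.4], 1.5, atoms, [1.0, 1.0], [0.0, 0.0]))
```

Because ν(y, 2) vanishes, α = 2 gives a real, Gaussian-type characteristic function; with the unit masses above it equals exp(-|u|²).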

Parametrization using projections

Another way to describe a stable random vector is in terms of projections. For any vector [math]\displaystyle{ u }[/math], the projection [math]\displaystyle{ u^TX }[/math] is univariate [math]\displaystyle{ \alpha }[/math]-stable with some skewness [math]\displaystyle{ \beta(u) }[/math], scale [math]\displaystyle{ \gamma(u) }[/math] and shift [math]\displaystyle{ \delta(u) }[/math]. The notation [math]\displaystyle{ X \sim S(\alpha,\beta(\cdot),\gamma(\cdot),\delta(\cdot)) }[/math] is used if X is stable with [math]\displaystyle{ u^TX \sim S(\alpha,\beta(u),\gamma(u),\delta(u)) }[/math] for every [math]\displaystyle{ u \in \mathbb R^d }[/math]. This is called the projection parametrization.

The spectral measure determines the projection parameter functions by:

- [math]\displaystyle{ \gamma(u) = \Bigl( \int_{s \in \mathbb{S}} |u^Ts|^\alpha \Lambda(ds) \Bigr)^{1/\alpha} }[/math]

- [math]\displaystyle{ \beta(u) = \int_{s \in \mathbb{S}}|u^Ts|^\alpha \mathbf{sign}(u^Ts)\Lambda(ds)/ \gamma(u)^\alpha }[/math]

- [math]\displaystyle{ \delta(u)=\begin{cases}u^T \delta & \alpha \ne 1\\u^T \delta -\int_{s \in \mathbb{S}}\tfrac{2}{\pi} u^Ts \ln|u^Ts|\Lambda(ds)&\alpha=1\end{cases} }[/math]
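For a spectral measure made of point masses, the three projection functions reduce to finite sums. The sketch below assumes NumPy, a discrete Λ and γ(u) > 0; `projection_params` is a hypothetical name. For α = 1 the extra shift uses the factor 2/π, which is what the definition's ν(y, 1) = (2/π) y ln|y| produces when ln|t u^Ts| is split into ln|t| + ln|u^Ts|.

```python
import numpy as np


def projection_params(u, alpha, atoms, masses, delta):
    """(beta(u), gamma(u), delta(u)) of u^T X when Lambda has point masses `masses` at `atoms`.

    Assumes gamma(u) > 0, i.e. u is not orthogonal to every atom.
    """
    u = np.asarray(u, dtype=float)
    w = np.asarray(masses, dtype=float)
    proj = np.asarray(atoms, dtype=float) @ u            # u^T s_j for each atom
    gamma_alpha = np.sum(w * np.abs(proj) ** alpha)      # gamma(u)^alpha
    beta = np.sum(w * np.abs(proj) ** alpha * np.sign(proj)) / gamma_alpha
    shift = u @ np.asarray(delta, dtype=float)
    if alpha == 1:
        # alpha = 1 adds -(2/pi) * sum_j w_j (u^T s_j) ln|u^T s_j|; zero projections contribute 0.
        nz = proj != 0
        shift -= (2 / np.pi) * np.sum(w[nz] * proj[nz] * np.log(np.abs(proj[nz])))
    return beta, gamma_alpha ** (1 / alpha), shift
```

As expected from the formulas, γ is homogeneous of degree one (γ(cu) = cγ(u) for c > 0) and β(u) always lies in [-1, 1].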

Special cases

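There are special cases where the multivariate characteristic function takes a simpler form. They are written in terms of the exponent of the characteristic function of a univariate stable marginal: if [math]\displaystyle{ Z \sim S(\alpha,\beta,1,0) }[/math], then [math]\displaystyle{ E \exp(iyZ) = \exp\{-\omega(y|\alpha,\beta)\} }[/math], where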

- [math]\displaystyle{ \omega(y|\alpha,\beta) = \begin{cases}|y|^\alpha\left[1-i \beta(\tan \tfrac{\pi\alpha}{2})\mathbf{sign}(y)\right]& \alpha \ne 1\\ |y|\left[1+i \beta \tfrac{2}{\pi} \mathbf{sign}(y)\ln |y|\right] & \alpha = 1\end{cases} }[/math]
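A direct transcription of ω, assuming NumPy; `omega` is a hypothetical name. It uses the convention 0 · ln 0 = 0 so that ω(0 | 1, β) = 0.

```python
import numpy as np


def omega(y, alpha, beta):
    """omega(y | alpha, beta): minus the log characteristic function of S(alpha, beta, 1, 0) at y."""
    y = np.asarray(y, dtype=float)
    if alpha != 1:
        return np.abs(y) ** alpha * (1 - 1j * beta * np.tan(np.pi * alpha / 2) * np.sign(y))
    # alpha == 1: the ln|y| term is replaced by 0 at y == 0
    with np.errstate(divide="ignore", invalid="ignore"):
        log_term = np.where(y == 0, 0.0, np.log(np.abs(y)))
    return np.abs(y) * (1 + 1j * beta * (2 / np.pi) * np.sign(y) * log_term)
```

Two sanity checks follow from the formula: with β = 0 the function is the symmetric |y|^α, and ω(-y) is the complex conjugate of ω(y), as it must be for the characteristic function of a real random variable.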

Isotropic multivariate stable distribution

The characteristic function is [math]\displaystyle{ E \exp(i u^T X)=\exp\{-\gamma_0^\alpha|u|^\alpha+i u^T \delta\} }[/math]. The spectral measure is continuous and uniform, leading to radial (isotropic) symmetry.[3] For the multinormal case [math]\displaystyle{ \alpha=2 }[/math], this corresponds to independent components, but this is not the case when [math]\displaystyle{ \alpha\lt 2 }[/math]. Isotropy is a special case of ellipticity (see the next paragraph): just take [math]\displaystyle{ \Sigma }[/math] to be a multiple of the identity matrix, namely [math]\displaystyle{ \Sigma = \gamma_0^2 I }[/math].
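The isotropic characteristic function depends on u only through |u|, so rotating u leaves it unchanged. Comparing the joint characteristic function with the product of the two marginal ones shows why the components are independent only for α = 2. This is a sketch assuming NumPy, with δ = 0 and γ₀ = 1; `isotropic_cf` and `rotation` are hypothetical names.

```python
import numpy as np


def isotropic_cf(u, alpha, gamma0, delta):
    """exp(-gamma0^alpha |u|^alpha + i u^T delta): depends on u only through its length |u|."""
    u = np.asarray(u, dtype=float)
    return np.exp(-(gamma0 ** alpha) * np.linalg.norm(u) ** alpha
                  + 1j * (u @ np.asarray(delta, dtype=float)))


def rotation(theta):
    """2 x 2 rotation matrix through angle theta (radians)."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s], [s, c]])


u = np.array([0.6, -0.8])
zero = [0.0, 0.0]
# Rotating u leaves the characteristic function unchanged.
print(isotropic_cf(rotation(1.0) @ u, 1.5, 1.0, zero), isotropic_cf(u, 1.5, 1.0, zero))
# Joint CF versus the product of the two marginal CFs (equal only when alpha = 2).
for alpha in (2.0, 1.5):
    joint = isotropic_cf(u, alpha, 1.0, zero)
    product = isotropic_cf([u[0], 0.0], alpha, 1.0, zero) * isotropic_cf([0.0, u[1]], alpha, 1.0, zero)
    print(alpha, joint, product)
```

With |u| = 1, the α = 1.5 joint value is exp(-1), while the product is exp(-(0.6^1.5 + 0.8^1.5)), so the joint law is not the product of its marginals.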

Elliptically contoured multivariate stable distribution

The elliptically contoured multivariate stable distribution is a special symmetric case of the multivariate stable distribution. If X is α-stable and elliptically contoured, then it has joint characteristic function [math]\displaystyle{ E \exp(i u^T X)=\exp\{-(u^T\Sigma u)^{\alpha/2}+i u^T \delta\} }[/math] for some shift vector [math]\displaystyle{ \delta \in \mathbb{R}^d }[/math] (equal to the mean when it exists, that is, when α > 1) and some positive definite matrix [math]\displaystyle{ \Sigma }[/math] (akin to a correlation matrix, although the usual definition of correlation is not meaningful because second moments are infinite for α < 2). Note the relation to the characteristic function of the multivariate normal distribution, [math]\displaystyle{ E \exp(i u^T X)=\exp\{-(u^T\Sigma u)+i u^T \delta\} }[/math], obtained when α = 2; this is the normal distribution with mean δ and covariance matrix 2Σ.
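A numerical check of the α = 2 relation, assuming NumPy and a positive definite Σ; `elliptical_cf` and `gaussian_cf` are hypothetical names. Setting α = 2 should reproduce the characteristic function of a normal distribution with mean δ and covariance 2Σ, and taking Σ = γ₀²I should reproduce the isotropic case exp(-γ₀^α |u|^α).

```python
import numpy as np


def elliptical_cf(u, alpha, sigma, delta):
    """exp(-(u^T Sigma u)^(alpha/2) + i u^T delta)."""
    u = np.asarray(u, dtype=float)
    quad = u @ np.asarray(sigma, dtype=float) @ u        # u^T Sigma u
    return np.exp(-quad ** (alpha / 2) + 1j * (u @ np.asarray(delta, dtype=float)))


def gaussian_cf(u, mean, cov):
    """Characteristic function of N(mean, cov): exp(i u^T mean - u^T cov u / 2)."""
    u = np.asarray(u, dtype=float)
    return np.exp(1j * (u @ np.asarray(mean, dtype=float)) - 0.5 * (u @ np.asarray(cov, dtype=float) @ u))


sigma = np.array([[2.0, 0.5], [0.5, 1.0]])
delta = np.array([1.0, -1.0])
u = np.array([0.3, 0.7])
print(elliptical_cf(u, 2.0, sigma, delta), gaussian_cf(u, delta, 2 * sigma))
```

The factor of 2 appears because the stable parametrization has no 1/2 in front of the quadratic form.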

Independent components

If the marginals are independent with [math]\displaystyle{ X_j \sim S(\alpha, \beta_j, \gamma_j, \delta_j) }[/math], [math]\displaystyle{ j = 1,\ldots,d }[/math], then the characteristic function is

- [math]\displaystyle{ E \exp(i u^T X) = \exp\left\{-\sum_{j=1}^d \omega(u_j|\alpha,\beta_j)\gamma_j^\alpha +i u^T \delta\right\} }[/math]

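Observe that when α = 2 this again reduces to the multivariate normal; note that the i.i.d. case and the isotropic case do not coincide when α < 2. Independent components are a special case of a discrete spectral measure (see the next paragraph): the spectral measure is supported on the standard unit vectors and their negatives, with mass [math]\displaystyle{ \gamma_j^\alpha(1+\beta_j)/2 }[/math] at [math]\displaystyle{ e_j }[/math] and [math]\displaystyle{ \gamma_j^\alpha(1-\beta_j)/2 }[/math] at [math]\displaystyle{ -e_j }[/math].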
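The claim that independent components correspond to a discrete spectral measure can be checked numerically. The sketch below, assuming NumPy and hypothetical function names, compares the independent-components formula with the defining integral for point masses γ_j^α(1 + β_j)/2 at e_j and γ_j^α(1 - β_j)/2 at -e_j; for point masses that integral is Σ_j mass_j · ω(u^Ts_j | α, 1).

```python
import numpy as np


def omega(y, alpha, beta):
    """omega(y | alpha, beta): minus the log characteristic function of S(alpha, beta, 1, 0)."""
    y = np.asarray(y, dtype=float)
    if alpha != 1:
        return np.abs(y) ** alpha * (1 - 1j * beta * np.tan(np.pi * alpha / 2) * np.sign(y))
    with np.errstate(divide="ignore"):
        log_term = np.where(y == 0, 0.0, np.log(np.abs(y)))
    return np.abs(y) * (1 + 1j * beta * (2 / np.pi) * np.sign(y) * log_term)


def independent_cf(u, alpha, betas, gammas, deltas):
    """Characteristic function when the components X_j ~ S(alpha, beta_j, gamma_j, delta_j) are independent."""
    u = np.asarray(u, dtype=float)
    total = sum(omega(u[j], alpha, betas[j]) * gammas[j] ** alpha for j in range(len(u)))
    return np.exp(-total + 1j * (u @ np.asarray(deltas, dtype=float)))


def independent_as_discrete(alpha, betas, gammas):
    """Atoms +e_j, -e_j with masses gamma_j^alpha (1 + beta_j) / 2 and gamma_j^alpha (1 - beta_j) / 2."""
    d = len(gammas)
    atoms, masses = [], []
    for j in range(d):
        e = np.zeros(d)
        e[j] = 1.0
        scale = gammas[j] ** alpha
        atoms += [e, -e]
        masses += [scale * (1 + betas[j]) / 2, scale * (1 - betas[j]) / 2]
    return np.array(atoms), np.array(masses)


def discrete_cf(u, alpha, atoms, masses, delta):
    """Defining integral for point masses: exp(-sum_j masses_j omega(u^T s_j | alpha, 1) + i u^T delta)."""
    u = np.asarray(u, dtype=float)
    proj = np.asarray(atoms, dtype=float) @ u
    total = np.sum(np.asarray(masses, dtype=float) * omega(proj, alpha, 1.0))
    return np.exp(-total + 1j * (u @ np.asarray(delta, dtype=float)))
```

The two characteristic functions agree for α ≠ 1 and for α = 1, since the masses at ±e_j add up to γ_j^α and differ by β_j γ_j^α.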

Discrete

If the spectral measure is discrete with mass [math]\displaystyle{ \lambda_j }[/math] at [math]\displaystyle{ s_j \in \mathbb{S},j=1,\ldots,m }[/math], the characteristic function is

- [math]\displaystyle{ E \exp(i u^T X)= \exp\left\{-\sum_{j=1}^m \omega(u^Ts_j|\alpha,1)\lambda_j +i u^T \delta\right\} }[/math]
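A discrete spectral measure also suggests a simple simulation scheme for α ≠ 1: if Z_1, ..., Z_m are independent totally skewed S(α, 1, 1, 0) variables, then X = Σ_j λ_j^{1/α} Z_j s_j + δ has characteristic function exp{-Σ_j λ_j ω(u^Ts_j | α, 1) + iu^Tδ}, because ω(cy | α, 1) = c^α ω(y | α, 1) for c > 0. The sketch below draws the Z_j with the Chambers-Mallows-Stuck method in Weron's form, a standard technique that this article does not describe; NumPy and all function names are assumptions. It then compares the empirical characteristic function of the draws with the closed form.

```python
import numpy as np


def sample_totally_skewed(alpha, size, rng):
    """Chambers-Mallows-Stuck draws of S(alpha, 1, 1, 0); valid only for alpha != 1."""
    v = rng.uniform(-np.pi / 2, np.pi / 2, size)
    w = rng.exponential(1.0, size)
    t = np.tan(np.pi * alpha / 2)                         # beta = 1
    b = np.arctan(t) / alpha
    s = (1 + t ** 2) ** (1 / (2 * alpha))
    return (s * np.sin(alpha * (v + b)) / np.cos(v) ** (1 / alpha)
            * (np.cos(v - alpha * (v + b)) / w) ** ((1 - alpha) / alpha))


def sample_discrete(alpha, atoms, masses, delta, n, rng):
    """n draws of X = sum_j masses_j^(1/alpha) Z_j s_j + delta, with Z_j i.i.d. S(alpha, 1, 1, 0)."""
    atoms = np.asarray(atoms, dtype=float)                # shape (m, d)
    z = sample_totally_skewed(alpha, (n, len(masses)), rng)
    scales = np.asarray(masses, dtype=float) ** (1 / alpha)
    return (z * scales) @ atoms + np.asarray(delta, dtype=float)


def discrete_cf(u, alpha, atoms, masses, delta):
    """exp(-sum_j masses_j omega(u^T s_j | alpha, 1) + i u^T delta), for alpha != 1."""
    u = np.asarray(u, dtype=float)
    proj = np.asarray(atoms, dtype=float) @ u
    om = np.abs(proj) ** alpha * (1 - 1j * np.tan(np.pi * alpha / 2) * np.sign(proj))
    return np.exp(-np.sum(np.asarray(masses, dtype=float) * om) + 1j * (u @ np.asarray(delta, dtype=float)))


rng = np.random.default_rng(0)
atoms = np.array([[1.0, 0.0], [0.0, 1.0], [-0.6, 0.8]])
masses = np.array([0.5, 0.3, 0.2])
x = sample_discrete(1.5, atoms, masses, [0.1, -0.2], 200_000, rng)
u = np.array([0.3, -0.7])
print(np.mean(np.exp(1j * (x @ u))), discrete_cf(u, 1.5, atoms, masses, [0.1, -0.2]))
```

The empirical characteristic function is bounded, so it converges at the usual 1/√n rate even though the samples themselves are heavy-tailed.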

Linear properties

If [math]\displaystyle{ X \sim S(\alpha, \beta(\cdot), \gamma(\cdot), \delta(\cdot)) }[/math] is d-dimensional, A is an m × d matrix, and [math]\displaystyle{ b \in \mathbb{R}^m, }[/math] then AX + b is m-dimensional [math]\displaystyle{ \alpha }[/math]-stable with scale function [math]\displaystyle{ \gamma(A^T\cdot), }[/math] skewness function [math]\displaystyle{ \beta(A^T\cdot), }[/math] and location function [math]\displaystyle{ u \mapsto \delta(A^Tu) + b^Tu. }[/math] This follows from [math]\displaystyle{ u^T(AX+b) = (A^Tu)^TX + b^Tu }[/math].
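The linear property can be checked numerically for a discrete spectral measure: the characteristic function of u^T(AX + b) at t, computed from the defining formula, should equal the univariate stable characteristic function with parameters β(A^Tu), γ(A^Tu) and δ(A^Tu) + b^Tu. This sketch assumes NumPy and α ≠ 1 (so that δ(u) = u^Tδ); all function names are hypothetical.

```python
import numpy as np


def projection_params(v, alpha, atoms, masses, delta):
    """(beta(v), gamma(v), delta(v)) of v^T X for a discrete spectral measure, alpha != 1."""
    proj = np.asarray(atoms, dtype=float) @ np.asarray(v, dtype=float)
    w = np.asarray(masses, dtype=float)
    g_alpha = np.sum(w * np.abs(proj) ** alpha)
    beta = np.sum(w * np.abs(proj) ** alpha * np.sign(proj)) / g_alpha
    return beta, g_alpha ** (1 / alpha), np.dot(v, delta)


def affine_params(u, A, b, alpha, atoms, masses, delta):
    """Parameters of u^T (A X + b): evaluate X's functions at A^T u, add b^T u to the shift."""
    u = np.asarray(u, dtype=float)
    beta, gamma, shift = projection_params(np.asarray(A, dtype=float).T @ u, alpha, atoms, masses, delta)
    return beta, gamma, shift + np.dot(np.asarray(b, dtype=float), u)


def univariate_cf(t, alpha, beta, gamma, shift):
    """CF of S(alpha, beta, gamma, shift) in the parametrization used by omega, alpha != 1."""
    return np.exp(-(gamma * abs(t)) ** alpha * (1 - 1j * beta * np.tan(np.pi * alpha / 2) * np.sign(t))
                  + 1j * shift * t)


def multivariate_cf(u, alpha, atoms, masses, delta):
    """Defining characteristic function for a discrete spectral measure, alpha != 1."""
    proj = np.asarray(atoms, dtype=float) @ np.asarray(u, dtype=float)
    expo = np.sum(np.asarray(masses, dtype=float) * np.abs(proj) ** alpha
                  * (1 - 1j * np.tan(np.pi * alpha / 2) * np.sign(proj)))
    return np.exp(-expo + 1j * np.dot(u, delta))
```

For any t, `univariate_cf(t, alpha, *affine_params(u, A, b, ...))` should match `exp(i t u^T b) * multivariate_cf(t * A.T @ u, ...)`.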

Inference in the independent component model

In 2010, Bickson and Guestrin[4] showed how to compute inference in closed form in a linear model (or, equivalently, a factor analysis model) involving independent component models.

More specifically, let [math]\displaystyle{ X_i \sim S(\alpha, \beta_{x_i}, \gamma_{x_i}, \delta_{x_i}), i=1,\ldots,n }[/math] be a set of independent, unobserved univariate random variables, each drawn from a stable distribution with the same α. Given a known linear relation matrix A of size [math]\displaystyle{ n \times n }[/math], the observations [math]\displaystyle{ Y_i = \sum_{j=1}^n A_{ij}X_j }[/math] are assumed to be distributed as a convolution of the hidden factors [math]\displaystyle{ X_j }[/math], so that [math]\displaystyle{ Y_i \sim S(\alpha, \beta_{y_i}, \gamma_{y_i}, \delta_{y_i}) }[/math]. The inference task is to compute the most probable [math]\displaystyle{ X_i }[/math], given the linear relation matrix A and the observations [math]\displaystyle{ Y_i }[/math]. This task can be computed in closed form in [math]\displaystyle{ O(n^3) }[/math].
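The closed-form inference algorithm of [4] is not reproduced here. As a smaller illustration of the forward model only, the sketch below computes the parameters of Y = AX from those of the hidden X_j when α ≠ 1, using the independent-components characteristic function: γ_{y_i}^α = Σ_j |A_ij|^α γ_{x_j}^α, β_{y_i} = Σ_j sign(A_ij) |A_ij|^α β_{x_j} γ_{x_j}^α / γ_{y_i}^α and δ_{y_i} = Σ_j A_ij δ_{x_j}. NumPy and the name `forward_params` are assumptions, and every row of A is assumed to have a nonzero entry.

```python
import numpy as np


def forward_params(A, alpha, beta_x, gamma_x, delta_x):
    """Parameters of Y = A X for independent X_j ~ S(alpha, beta_j, gamma_j, delta_j), alpha != 1."""
    A = np.asarray(A, dtype=float)
    beta_x, gamma_x, delta_x = (np.asarray(v, dtype=float) for v in (beta_x, gamma_x, delta_x))
    weights = np.abs(A) ** alpha * gamma_x ** alpha       # |A_ij|^alpha gamma_j^alpha, row i, column j
    gamma_y = weights.sum(axis=1) ** (1 / alpha)
    beta_y = (weights * np.sign(A) * beta_x).sum(axis=1) / gamma_y ** alpha
    delta_y = A @ delta_x
    return beta_y, gamma_y, delta_y


# Sum and difference of two i.i.d. totally skewed S(1.5, 1, 1, 0) variables.
print(forward_params([[1.0, 1.0], [1.0, -1.0]], 1.5, [1.0, 1.0], [1.0, 1.0], [0.0, 0.0]))
```

In the example, the sum keeps skewness 1 while the difference is symmetric (skewness 0), and both have scale 2^(1/1.5).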

An application for this construction is multiuser detection with stable, non-Gaussian noise.

See also

- Multivariate Cauchy distribution

- Multivariate normal distribution

Resources

- Mark Veillette's stable distribution MATLAB package http://www.mathworks.com/matlabcentral/fileexchange/37514

- The plots on this page were produced using Danny Bickson's inference in linear-stable model MATLAB package: https://www.cs.cmu.edu/~bickson/stable

Notes

- [1] J. Nolan. Multivariate stable densities and distribution functions: general and elliptical case. BundesBank Conference, Eltville, Germany, 11 November 2005. See also http://academic2.american.edu/~jpnolan/stable/stable.html

- [2] E. Feldheim. Etude de la stabilité des lois de probabilité. Ph.D. thesis, Faculté des Sciences de Paris, Paris, France, 1937.

- [3] User manual for STABLE 5.1 Matlab version. Robust Analysis Inc. http://www.RobustAnalysis.com

- [4] D. Bickson and C. Guestrin. Inference in linear models with multivariate heavy-tails. In Neural Information Processing Systems (NIPS) 2010, Vancouver, Canada, Dec. 2010. https://www.cs.cmu.edu/~bickson/stable/
