Chow test

The Chow test (Chinese: 鄒檢定), proposed by econometrician Gregory Chow in 1960, is a test of whether the true coefficients in two linear regressions on different data sets are equal. In econometrics, it is most commonly used in time series analysis to test for the presence of a structural break at a period which can be assumed to be known a priori (for instance, a major historical event such as a war). In program evaluation, the Chow test is often used to determine whether the independent variables have different impacts on different subgroups of the population.

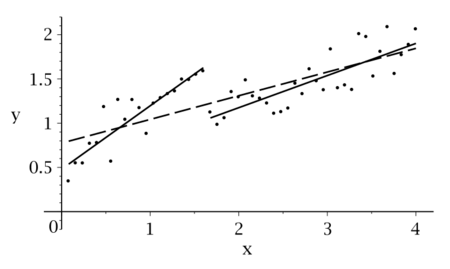

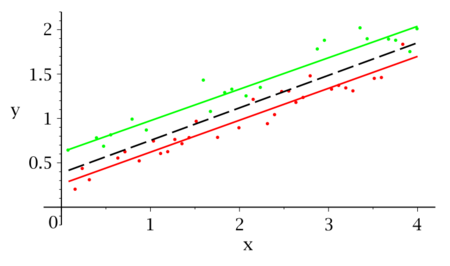

Illustrations

First Chow Test

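Suppose that we model our data as

$$y_t = a + b x_{1t} + c x_{2t} + \varepsilon_t.$$

If we split our data into two groups, then we have

$$y_t = a_1 + b_1 x_{1t} + c_1 x_{2t} + \varepsilon_t$$

and

$$y_t = a_2 + b_2 x_{1t} + c_2 x_{2t} + \varepsilon_t.$$

The null hypothesis of the Chow test asserts that $a_1 = a_2$, $b_1 = b_2$, and $c_1 = c_2$, under the assumption that the model errors $\varepsilon_t$ are independent and identically distributed from a normal distribution with unknown variance.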

Let $S_C$ be the sum of squared residuals from the combined data, $S_1$ be the sum of squared residuals from the first group, and $S_2$ be the sum of squared residuals from the second group. $N_1$ and $N_2$ are the numbers of observations in each group and $k$ is the total number of parameters (in this case 3: the coefficients of the two independent variables plus the intercept). Then the Chow test statistic is

$$F = \frac{\left(S_C - (S_1 + S_2)\right)/k}{(S_1 + S_2)/(N_1 + N_2 - 2k)}.$$

Under the null hypothesis, the test statistic follows the F-distribution with $k$ and $N_1 + N_2 - 2k$ degrees of freedom.
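The computation described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the helper names (`ols_ssr`, `chow_statistic`, `simulate`), the synthetic data, and the coefficient values are all assumptions made for the example. It uses only the standard library and solves the normal equations directly, which is adequate for small, well-conditioned problems.

```python
import random

def ols_ssr(X, y):
    """Sum of squared residuals from an OLS fit of y on the columns of X.

    Assumes X has full column rank (illustrative helper, not a library call).
    """
    k = len(X[0])
    # Normal equations (X'X) b = X'y, stored as an augmented matrix.
    A = [[sum(r[i] * r[j] for r in X) for j in range(k)]
         + [sum(r[i] * yi for r, yi in zip(X, y))] for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting.
    for c in range(k):
        p = max(range(c, k), key=lambda r: abs(A[r][c]))
        A[c], A[p] = A[p], A[c]
        for r in range(k):
            if r != c:
                f = A[r][c] / A[c][c]
                A[r] = [a - f * b for a, b in zip(A[r], A[c])]
    beta = [A[i][k] / A[i][i] for i in range(k)]
    return sum((yi - sum(b * x for b, x in zip(beta, r))) ** 2
               for r, yi in zip(X, y))

def chow_statistic(X1, y1, X2, y2):
    """Return the Chow F statistic and its (numerator, denominator) d.o.f."""
    k = len(X1[0])                       # parameters, intercept included
    n1, n2 = len(y1), len(y2)
    s_c = ols_ssr(X1 + X2, y1 + y2)      # combined data
    s_1 = ols_ssr(X1, y1)                # first group
    s_2 = ols_ssr(X2, y2)                # second group
    f = ((s_c - (s_1 + s_2)) / k) / ((s_1 + s_2) / (n1 + n2 - 2 * k))
    return f, (k, n1 + n2 - 2 * k)

def simulate(n, a, b, c, rng):
    """Draw n observations from y = a + b*x1 + c*x2 + N(0, 1) noise."""
    X, y = [], []
    for _ in range(n):
        x1, x2 = rng.uniform(0, 10), rng.uniform(0, 10)
        X.append([1.0, x1, x2])          # leading 1.0 is the intercept column
        y.append(a + b * x1 + c * x2 + rng.gauss(0, 1))
    return X, y

rng = random.Random(0)
X1, y1 = simulate(40, 1.0, 2.0, -1.0, rng)
X2, y2 = simulate(40, 1.0, 2.0, -1.0, rng)   # same coefficients as group 1
X3, y3 = simulate(40, 4.0, 0.5, -1.0, rng)   # different intercept and slope

f_same, dof = chow_statistic(X1, y1, X2, y2)
f_break, _ = chow_statistic(X1, y1, X3, y3)
print("degrees of freedom:", dof)
print("F without break:", f_same)
print("F with break:", f_break)
```

To turn the statistic into a decision, it would be compared with a critical value of the F-distribution for the reported degrees of freedom; one option, assuming SciPy is available, is the survival function `scipy.stats.f.sf(f, dfn, dfd)`, which gives the p-value.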

The same result can be achieved via dummy variables.

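Consider the two data sets which are being compared. First there is the 'primary' data set $i = \{1, \ldots, n_1\}$ and then the 'secondary' data set $i = \{n_1 + 1, \ldots, n\}$. The union of these two sets is $i = \{1, \ldots, n\}$. If there is no structural change between the primary and secondary data sets, a regression can be run over the union without the issue of biased estimators arising.

Consider the regression

$$y_i = \beta_0 + \sum_{j=1}^{k} \beta_j x_{ji} + \gamma_0 D_i + \sum_{j=1}^{k} \gamma_j x_{ji} D_i + \varepsilon_i,$$

which is run over $i = \{1, \ldots, n\}$. Here $k$ counts the regressors excluding the intercept, so each data set has $k + 1$ parameters.

$D$ is a dummy variable taking a value of 1 for $i = \{n_1 + 1, \ldots, n\}$ and 0 otherwise.

If both data sets can be explained fully by $(\beta_0, \beta_1, \ldots, \beta_k)$, then there is no use for the dummy variable, as the data are explained fully by the restricted equation. That is, under the assumption of no structural change we have the null and alternative hypotheses

$$H_0: \gamma_0 = \gamma_1 = \cdots = \gamma_k = 0, \qquad H_1: \text{otherwise}.$$

The null hypothesis of joint insignificance of the terms involving $D$ can be tested with an F-test with $k + 1$ numerator degrees of freedom and $n - 2(k + 1)$ denominator degrees of freedom (DoF). That is:

$$F = \frac{(RSS^R - RSS^U)/(k + 1)}{RSS^U/\mathrm{DoF}},$$

where $RSS^R$ is the residual sum of squares of the restricted regression (without the terms involving $D$) and $RSS^U$ is that of the unrestricted regression.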
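The equivalence between the dummy-variable F-test and the split-sample Chow statistic can be checked numerically. The sketch below is illustrative only: the data-generating process, sample sizes, and the helper `ols_ssr` are assumptions for the example. It fits the restricted and unrestricted regressions on synthetic data, then recomputes the statistic from two separate subsample regressions.

```python
import random

def ols_ssr(X, y):
    """Sum of squared residuals from an OLS fit of y on the columns of X.

    Assumes X has full column rank (illustrative helper, not a library call).
    """
    k = len(X[0])
    # Normal equations (X'X) b = X'y, stored as an augmented matrix.
    A = [[sum(r[i] * r[j] for r in X) for j in range(k)]
         + [sum(r[i] * yi for r, yi in zip(X, y))] for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting.
    for c in range(k):
        p = max(range(c, k), key=lambda r: abs(A[r][c]))
        A[c], A[p] = A[p], A[c]
        for r in range(k):
            if r != c:
                f = A[r][c] / A[c][c]
                A[r] = [a - f * b for a, b in zip(A[r], A[c])]
    beta = [A[i][k] / A[i][i] for i in range(k)]
    return sum((yi - sum(b * x for b, x in zip(beta, r))) ** 2
               for r, yi in zip(X, y))

rng = random.Random(1)
n1, n2, k = 30, 50, 2            # k regressors, intercept not counted
n = n1 + n2

rows, y = [], []
for i in range(n):
    d = 1.0 if i >= n1 else 0.0  # dummy: 1 in the secondary data set
    x = [rng.uniform(0, 5) for _ in range(k)]
    # Hypothetical process with a shift in intercept and first slope when d = 1.
    y.append(1.0 + 2.0 * x[0] - x[1] + d * (0.5 + 0.8 * x[0]) + rng.gauss(0, 1))
    rows.append((d, x))

# Restricted design: intercept and regressors only.
X_r = [[1.0] + x for d, x in rows]
# Unrestricted design: adds the dummy and its interactions with each regressor.
X_u = [[1.0] + x + [d] + [d * xj for xj in x] for d, x in rows]

dof = n - 2 * (k + 1)
rss_r, rss_u = ols_ssr(X_r, y), ols_ssr(X_u, y)
f_dummy = ((rss_r - rss_u) / (k + 1)) / (rss_u / dof)

# Split-sample version: separate regressions on each data set.
s_1 = ols_ssr(X_r[:n1], y[:n1])
s_2 = ols_ssr(X_r[n1:], y[n1:])
f_chow = ((rss_r - (s_1 + s_2)) / (k + 1)) / ((s_1 + s_2) / dof)

print("F from dummy-variable regression:", f_dummy)
print("F from separate regressions:     ", f_chow)
```

The two statistics agree up to floating-point error because the fully interacted regression fits each data set with its own coefficients, so its residual sum of squares equals the sum of the two subsample residual sums of squares.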

Remarks

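- The sum of squared residuals from the combined (pooled) regression, sometimes labelled SSE, is often called the Restricted Sum of Squares (RSSM), because the pooled regression is a constrained model: it imposes $k$ equality restrictions across the two groups, with $k$ the number of estimated parameters per regression (regressors plus intercept).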

- Some software like SAS will use a predictive Chow test when the size of a subsample is less than the number of regressors.

References

- Chow, Gregory C. (1960). "Tests of Equality Between Sets of Coefficients in Two Linear Regressions". Econometrica 28 (3): 591–605. doi:10.2307/1910133. http://pdfs.semanticscholar.org/0f70/219160c8ad2f9db02e226d3f7d7320e729b8.pdf.

- Doran, Howard E. (1989). Applied Regression Analysis in Econometrics. CRC Press. p. 146. ISBN 978-0-8247-8049-4.

- Dougherty, Christopher (2007). Introduction to Econometrics. Oxford University Press. p. 194. ISBN 978-0-19-928096-4.

- Kmenta, Jan (1986). Elements of Econometrics (Second ed.). New York: Macmillan. pp. 412–423. ISBN 978-0-472-10886-2. https://archive.org/details/elementsofeconom0003kmen.

- Wooldridge, Jeffrey M. (2009). Introduction to Econometrics: A Modern Approach (Fourth ed.). Mason: South-Western. pp. 243–246. ISBN 978-0-324-66054-8.

External links

- Computing the Chow statistic, Chow and Wald tests, Chow tests: Series of FAQ explanations from the Stata Corporation at https://www.stata.com/support/faqs/

- [1]: Series of FAQ explanations from the SAS Corporation
