Gauss–Legendre method

In numerical analysis and scientific computing, the Gauss–Legendre methods are a family of numerical methods for ordinary differential equations. Gauss–Legendre methods are implicit Runge–Kutta methods. More specifically, they are collocation methods based on the points of Gauss–Legendre quadrature. The Gauss–Legendre method based on s points has order 2s.[1]

All Gauss–Legendre methods are A-stable.[2]

The Gauss–Legendre method of order two is the implicit midpoint rule. Its Butcher tableau is:

1/2 1/2 1

The Gauss–Legendre method of order four has Butcher tableau:

The Gauss–Legendre method of order six has Butcher tableau:

The computational cost of higher-order Gauss–Legendre methods is usually excessive, and thus, they are rarely used.[3]

Intuition

Gauss-Legendre Runge-Kutta (GLRK) methods solve an ordinary differential equation with . The distinguishing feature of GLRK is the estimation of with Gaussian quadrature.

where are the sampled velocities, are the quadrature weights, are the abscissas, and are the roots of the Legendre polynomial of degree . A further approximation is needed, as is still impossible to evaluate. To maintain truncation error of order , we only need to order . The Runge-Kutta implicit definition is invoked to accomplish this. This is an implicit constraint that must be solved by a root finding algorithm like Newton's method. The values of the Runge-Kutta parameters can be determined from a Taylor series expansion in .

Practical example

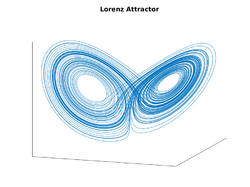

The Gauss-Legendre methods are implicit, so in general they cannot be applied exactly. Instead one makes an educated guess of , and then uses Newton's method to converge arbitrarily close to the true solution. Below is a Matlab function which implements the Gauss-Legendre method of order four.

% starting point

x = [ 10.5440; 4.1124; 35.8233];

dt = 0.01;

N = 10000;

x_series = [x];

for i = 1:N

x = gauss_step(x, @lorenz_dynamics, dt, 1e-7, 1, 100);

x_series = [x_series x];

end

plot3( x_series(1,:), x_series(2,:), x_series(3,:) );

set(gca,'xtick',[],'ytick',[],'ztick',[]);

title('Lorenz Attractor');

return;

function [td, j] = lorenz_dynamics(state)

% return a time derivative and a Jacobian of that time derivative

x = state(1);

y = state(2);

z = state(3);

sigma = 10;

beta = 8/3;

rho = 28;

td = [sigma*(y-x); x*(rho-z)-y; x*y-beta*z];

j = [-sigma, sigma, 0;

rho-z, -1, -x;

y, x, -beta];

end

function x_next = gauss_step( x, dynamics, dt, threshold, damping, max_iterations )

[d,~] = size(x);

sq3 = sqrt(3);

if damping > 1 || damping <= 0

error('damping should be between 0 and 1.')

end

% Use explicit Euler steps as initial guesses

[k,~] = dynamics(x);

x1_guess = x + (1/2-sq3/6)*dt*k;

x2_guess = x + (1/2+sq3/6)*dt*k;

[k1,~] = dynamics(x1_guess);

[k2,~] = dynamics(x2_guess);

a11 = 1/4;

a12 = 1/4 - sq3/6;

a21 = 1/4 + sq3/6;

a22 = 1/4;

error = @(k1, k2) [k1 - dynamics(x+(a11*k1+a12*k2)*dt); k2 - dynamics(x+(a21*k1+a22*k2)*dt)];

er = error(k1, k2);

iteration=1;

while (norm(er) > threshold && iteration < max_iterations)

fprintf('Newton iteration %d: error is %f.\n', iteration, norm(er) );

iteration = iteration + 1;

[~, j1] = dynamics(x+(a11*k1+a12*k2)*dt);

[~, j2] = dynamics(x+(a21*k1+a22*k2)*dt);

j = [eye(d) - dt*a11*j1, -dt*a12*j1;

-dt*a21*j2, eye(d) - dt*a22*j2];

k_next = [k1;k2] - damping * linsolve(j, er);

k1 = k_next(1:d);

k2 = k_next(d+(1:d));

er = error(k1, k2);

end

if norm(er) > threshold

error('Newton did not converge by %d iterations.', max_iterations);

end

x_next = x + dt / 2 * (k1 + k2);

end

This algorithm is surprisingly cheap. The error in can fall below in as few as 2 Newton steps. The only extra work compared to explicit Runge-Kutta methods is the computation of the Jacobian.

Time-symmetric variants

At the cost of adding an additional implicit relation, these methods can be adapted to have time reversal symmetry. In these methods, the averaged position is used in computing instead of just the initial position in standard Runge-Kutta methods. The method of order 2 is just an implicit midpoint method.

The method of order 4 with 2 stages is as follows.

The method of order 6 with 3 stages is as follows.

Notes

- ↑ Iserles 1996, p. 47

- ↑ Iserles 1996, p. 63

- ↑ Iserles 1996, p. 47

References

- Iserles, Arieh (1996), A First Course in the Numerical Analysis of Differential Equations, Cambridge University Press, ISBN 978-0-521-55655-2, https://books.google.com/books?id=7Zofw3SFTWIC&q=%22Gauss%E2%80%93Legendre%22.

|