Runge–Kutta methods

In numerical analysis, the Runge–Kutta methods (English: /ˈrʊŋəˈkʊtɑː/ RUUNG-ə-KUUT-tah[1]) are a family of implicit and explicit iterative methods, which include the Euler method, used in temporal discretization for the approximate solution of ordinary differential equations.[2] These methods were developed around 1900 by the German mathematicians Carl Runge and Wilhelm Kutta.

The Runge–Kutta method

The most widely known member of the Runge–Kutta family is generally referred to as "RK4", the "classic Runge–Kutta method" or simply as "the Runge–Kutta method".

Let an initial value problem be specified as follows:

$$\frac{dy}{dt} = f(t, y), \quad y(t_0) = y_0.$$

Here $y$ is an unknown function (scalar or vector) of time $t$, which we would like to approximate; we are told that $\frac{dy}{dt}$, the rate at which $y$ changes, is a function of $t$ and of $y$ itself. At the initial time $t_0$ the corresponding $y$ value is $y_0$. The function $f$ and the initial conditions $t_0$, $y_0$ are given.

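Now we pick a step-size $h > 0$ and define:

$$y_{n+1} = y_n + \frac{h}{6}\left(k_1 + 2k_2 + 2k_3 + k_4\right), \qquad t_{n+1} = t_n + h$$

for $n = 0, 1, 2, 3, \ldots$, using[3]

$$\begin{aligned}
k_1 &= f(t_n, y_n), \\
k_2 &= f\left(t_n + \tfrac{h}{2},\; y_n + \tfrac{h}{2} k_1\right), \\
k_3 &= f\left(t_n + \tfrac{h}{2},\; y_n + \tfrac{h}{2} k_2\right), \\
k_4 &= f\left(t_n + h,\; y_n + h k_3\right).
\end{aligned}$$

(Note: the above equations have different but equivalent definitions in different texts.[4])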

Here $y_{n+1}$ is the RK4 approximation of $y(t_{n+1})$, and the next value ($y_{n+1}$) is determined by the present value ($y_n$) plus the weighted average of four increments, where each increment is the product of the size of the interval, $h$, and an estimated slope specified by function $f$ on the right-hand side of the differential equation.

- $k_1$ is the slope at the beginning of the interval, using $y$ (Euler's method);

- $k_2$ is the slope at the midpoint of the interval, using $y$ and $k_1$;

- $k_3$ is again the slope at the midpoint, but now using $y$ and $k_2$;

- $k_4$ is the slope at the end of the interval, using $y$ and $k_3$.

In averaging the four slopes, greater weight is given to the slopes at the midpoint. If $f$ is independent of $y$, so that the differential equation is equivalent to a simple integral, then RK4 is Simpson's rule.[5]
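As a worked check of the Simpson's rule remark, assume that $f$ depends on $t$ only, written here as $f(t)$. The two midpoint slopes then coincide and the RK4 update collapses as follows:

```latex
\begin{aligned}
k_1 &= f(t_n), \qquad k_2 = k_3 = f\!\left(t_n + \tfrac{h}{2}\right), \qquad k_4 = f(t_n + h), \\
y_{n+1} &= y_n + \frac{h}{6}\left[ f(t_n) + 4 f\!\left(t_n + \tfrac{h}{2}\right) + f(t_n + h) \right].
\end{aligned}
```

The bracketed term is Simpson's rule for $\int_{t_n}^{t_n + h} f(t)\,dt$, added to $y_n$.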

The RK4 method is a fourth-order method, meaning that the local truncation error is on the order of $O(h^5)$, while the total accumulated error is on the order of $O(h^4)$.

In many practical applications the function $f$ is independent of $t$ (a so-called autonomous system, or time-invariant system, especially in physics). In that case the time increments need not be computed or passed to $f$ at all, and only the final formula for $t_{n+1}$ is used.
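The RK4 update translates almost line for line into code. The following is a minimal sketch in Python for a scalar problem; the names `rk4_step` and `rk4_solve` and the test problem $y' = y$, $y(0) = 1$ are illustrative choices, not part of any library.

```python
import math


def rk4_step(f, t, y, h):
    """Advance y' = f(t, y) by one classic RK4 step of size h."""
    k1 = f(t, y)                          # slope at the start of the interval
    k2 = f(t + h / 2, y + h * k1 / 2)     # slope at the midpoint, using k1
    k3 = f(t + h / 2, y + h * k2 / 2)     # slope at the midpoint, using k2
    k4 = f(t + h, y + h * k3)             # slope at the end of the interval
    return y + h * (k1 + 2 * k2 + 2 * k3 + k4) / 6


def rk4_solve(f, t0, y0, h, n_steps):
    """Return the list of (t_n, y_n) pairs for n = 0, ..., n_steps."""
    t, y = t0, y0
    path = [(t, y)]
    for n in range(n_steps):
        y = rk4_step(f, t, y, h)
        t = t0 + (n + 1) * h              # avoids accumulating rounding in t
        path.append((t, y))
    return path


# Illustrative test problem: y' = y, y(0) = 1, exact solution exp(t).
path = rk4_solve(lambda t, y: y, 0.0, 1.0, 0.1, 10)
error_at_one = abs(path[-1][1] - math.e)
```

For this test problem, halving $h$ should reduce the error at $t = 1$ by roughly a factor of 16, in line with the $O(h^4)$ accumulated error.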

Explicit Runge–Kutta methods

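The family of explicit Runge–Kutta methods is a generalization of the RK4 method mentioned above. It is given by

$$y_{n+1} = y_n + h \sum_{i=1}^{s} b_i k_i,$$

where[6]

$$\begin{aligned}
k_1 &= f(t_n, y_n), \\
k_2 &= f(t_n + c_2 h,\; y_n + h\, a_{21} k_1), \\
k_3 &= f(t_n + c_3 h,\; y_n + h\,(a_{31} k_1 + a_{32} k_2)), \\
&\;\;\vdots \\
k_s &= f(t_n + c_s h,\; y_n + h\,(a_{s1} k_1 + a_{s2} k_2 + \cdots + a_{s,s-1} k_{s-1})).
\end{aligned}$$

(Note: the above equations may have different but equivalent definitions in some texts.[4])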

To specify a particular method, one needs to provide the integer $s$ (the number of stages), and the coefficients $a_{ij}$ (for $1 \le j < i \le s$), $b_i$ (for $i = 1, 2, \ldots, s$) and $c_i$ (for $i = 2, 3, \ldots, s$). The matrix $[a_{ij}]$ is called the Runge–Kutta matrix, while the $b_i$ and $c_i$ are known as the weights and the nodes.[7] These data are usually arranged in a mnemonic device, known as a Butcher tableau (after John C. Butcher):

$$\begin{array}{c|ccccc}
0 \\
c_2 & a_{21} \\
c_3 & a_{31} & a_{32} \\
\vdots & \vdots & & \ddots \\
c_s & a_{s1} & a_{s2} & \cdots & a_{s,s-1} \\
\hline
& b_1 & b_2 & \cdots & b_{s-1} & b_s
\end{array}$$

A Taylor series expansion shows that the Runge–Kutta method is consistent if and only if

$$\sum_{i=1}^{s} b_i = 1.$$

There are also accompanying requirements if one requires the method to have a certain order $p$, meaning that the local truncation error is $O(h^{p+1})$. These can be derived from the definition of the truncation error itself. For example, a two-stage method has order 2 if $b_1 + b_2 = 1$, $b_2 c_2 = 1/2$, and $b_2 a_{21} = 1/2$.[8] Note that a popular condition for determining coefficients is[8]

$$\sum_{j=1}^{i-1} a_{ij} = c_i \quad \text{for } i = 2, \ldots, s.$$

This condition alone, however, is neither sufficient nor necessary for consistency.[9]
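The general explicit scheme can be driven directly by the entries of a Butcher tableau. The sketch below is one possible Python rendering for scalar problems, assuming the matrix is stored as full rows with zeros on and above the diagonal; the helper names (`explicit_rk_step`, `is_consistent`, `satisfies_row_sum`) are hypothetical, and the classic RK4 coefficients are used as sample data.

```python
from fractions import Fraction as Fr

# Classic RK4 tableau, stored as full s-by-s rows
# (entries on and above the diagonal are zero for an explicit method).
RK4_A = [
    [0, 0, 0, 0],
    [Fr(1, 2), 0, 0, 0],
    [0, Fr(1, 2), 0, 0],
    [0, 0, 1, 0],
]
RK4_B = [Fr(1, 6), Fr(1, 3), Fr(1, 3), Fr(1, 6)]
RK4_C = [0, Fr(1, 2), Fr(1, 2), 1]


def is_consistent(b):
    """Consistency condition: the weights sum to one."""
    return sum(b) == 1


def satisfies_row_sum(a, c):
    """The popular (but not required) condition sum_j a_ij = c_i for i >= 2."""
    return all(sum(a[i][:i]) == c[i] for i in range(1, len(c)))


def explicit_rk_step(f, t, y, h, a, b, c):
    """One explicit Runge-Kutta step for y' = f(t, y) with tableau (a, b, c)."""
    k = []
    for i in range(len(b)):
        # Stage i only uses the previously computed slopes k[0..i-1].
        y_stage = y + h * sum(a[i][j] * k[j] for j in range(i))
        k.append(f(t + c[i] * h, y_stage))
    return y + h * sum(b_i * k_i for b_i, k_i in zip(b, k))


y1 = explicit_rk_step(lambda t, y: y, 0.0, 1.0, 0.1, RK4_A, RK4_B, RK4_C)
```

Storing the coefficients as exact fractions keeps the two algebraic checks free of rounding, while the step itself still runs in floating point.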

In general, if an explicit $s$-stage Runge–Kutta method has order $p$, then it can be proven that the number of stages must satisfy $s \ge p$, and if $p \ge 5$, then $s \ge p + 1$.[10] However, it is not known whether these bounds are sharp in all cases. In some cases, it is proven that the bound cannot be achieved. For instance, Butcher proved that for $p > 6$, there is no explicit method with $s = p + 1$ stages.[11] Butcher also proved that for $p > 7$, there is no explicit Runge–Kutta method with $p + 2$ stages.[12] In general, however, it remains an open problem what the precise minimum number of stages $s$ is for an explicit Runge–Kutta method to have order $p$. Some values which are known are:[13]

| $p$ | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
|---|---|---|---|---|---|---|---|---|
| $\min s$ | 1 | 2 | 3 | 4 | 6 | 7 | 9 | 11 |

The provable bounds above then imply that we cannot find methods of orders $p = 1, 2, \ldots, 6$ that require fewer stages than the methods we already know for these orders. The work of Butcher also proves that 7th and 8th order methods have a minimum of 9 and 11 stages, respectively.[11][12] An example of an explicit method of order 6 with 7 stages can be found in Ref.[14] Explicit methods of order 7 with 9 stages[11] and explicit methods of order 8 with 11 stages[15] are also known. See Refs.[16][17] for a summary.

Examples

The RK4 method falls in this framework. Its tableau is[18]

$$\begin{array}{c|cccc}
0 \\
1/2 & 1/2 \\
1/2 & 0 & 1/2 \\
1 & 0 & 0 & 1 \\
\hline
& 1/6 & 1/3 & 1/3 & 1/6
\end{array}$$

A slight variation of "the" Runge–Kutta method is also due to Kutta in 1901 and is called the 3/8-rule.[19] The primary advantage this method has is that almost all of the error coefficients are smaller than in the popular method, but it requires slightly more floating-point operations per time step. Its Butcher tableau is

$$\begin{array}{c|cccc}
0 \\
1/3 & 1/3 \\
2/3 & -1/3 & 1 \\
1 & 1 & -1 & 1 \\
\hline
& 1/8 & 3/8 & 3/8 & 1/8
\end{array}$$

However, the simplest Runge–Kutta method is the (forward) Euler method, given by the formula $y_{n+1} = y_n + h f(t_n, y_n)$. This is the only consistent explicit Runge–Kutta method with one stage. The corresponding tableau is

$$\begin{array}{c|c}
0 \\
\hline
& 1
\end{array}$$

Second-order methods with two stages

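An example of a second-order method with two stages is provided by the explicit midpoint method:

$$y_{n+1} = y_n + h f\left(t_n + \tfrac{1}{2} h,\; y_n + \tfrac{1}{2} h f(t_n, y_n)\right).$$

The corresponding tableau is

$$\begin{array}{c|cc}
0 \\
1/2 & 1/2 \\
\hline
& 0 & 1
\end{array}$$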

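The midpoint method is not the only second-order Runge–Kutta method with two stages; there is a family of such methods, parameterized by $\alpha$ and given by the formula[20]

$$y_{n+1} = y_n + h\left(\left(1 - \tfrac{1}{2\alpha}\right) f(t_n, y_n) + \tfrac{1}{2\alpha} f\left(t_n + \alpha h,\; y_n + \alpha h f(t_n, y_n)\right)\right).$$

Its Butcher tableau is

$$\begin{array}{c|cc}
0 \\
\alpha & \alpha \\
\hline
& 1 - \tfrac{1}{2\alpha} & \tfrac{1}{2\alpha}
\end{array}$$

In this family, $\alpha = \tfrac{1}{2}$ gives the midpoint method, $\alpha = 1$ is Heun's method,[5] and $\alpha = \tfrac{2}{3}$ is Ralston's method.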
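The one-parameter family can be written as a single function of $\alpha$. The following Python sketch is illustrative only: the function names and the test problem $y' = -y^2$, $y(0) = 1$ (exact solution $1/(1+t)$) are assumptions chosen here so that the second-order behaviour can be observed for several values of $\alpha$.

```python
def rk2_alpha_step(f, t, y, h, alpha):
    """One step of the two-stage second-order method with parameter alpha."""
    k1 = f(t, y)
    k2 = f(t + alpha * h, y + alpha * h * k1)
    w2 = 1.0 / (2.0 * alpha)              # weight of the second slope
    return y + h * ((1.0 - w2) * k1 + w2 * k2)


def endpoint_error(alpha, n_steps):
    """Error at t = 1 for y' = -y**2, y(0) = 1, using n_steps equal steps."""
    h = 1.0 / n_steps
    y = 1.0
    for n in range(n_steps):
        y = rk2_alpha_step(lambda t, u: -u * u, n * h, y, h, alpha)
    return abs(y - 0.5)                   # exact value is 1 / (1 + 1)


# alpha = 1/2 (midpoint), 2/3 (Ralston), 1 (Heun)
errors = {a: endpoint_error(a, 20) for a in (0.5, 2.0 / 3.0, 1.0)}
```

Doubling the number of steps should cut each of these errors by about a factor of four, whichever member of the family is chosen.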

Use

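As an example, consider the two-stage second-order Runge–Kutta method with $\alpha = 2/3$, also known as Ralston's method. It is given by the tableau

$$\begin{array}{c|cc}
0 \\
2/3 & 2/3 \\
\hline
& 1/4 & 3/4
\end{array}$$

with the corresponding equations

$$\begin{aligned}
k_1 &= f(t_n, y_n), \\
k_2 &= f\left(t_n + \tfrac{2}{3} h,\; y_n + \tfrac{2}{3} h k_1\right), \\
y_{n+1} &= y_n + h\left(\tfrac{1}{4} k_1 + \tfrac{3}{4} k_2\right).
\end{aligned}$$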

This method is used to solve the initial-value problem

$$\frac{dy}{dt} = \tan(y) + 1, \quad y_0 = 1, \quad t \in [1, 1.1]$$

with step size $h = 0.025$, so the method needs to take four steps.

The method proceeds as follows:

The numerical solutions correspond to the underlined values.
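The four steps can be reproduced with a short script. This is a minimal sketch of Ralston's method with $h = 0.025$; the right-hand side $f(t, y) = \tan(y) + 1$ and the starting point $t_0 = 1$, $y_0 = 1$ are taken as assumptions for the illustration, and the function names are hypothetical.

```python
import math


def ralston_step(f, t, y, h):
    """One step of the two-stage method with alpha = 2/3 (Ralston's method)."""
    k1 = f(t, y)
    k2 = f(t + 2 * h / 3, y + 2 * h * k1 / 3)
    return y + h * (k1 / 4 + 3 * k2 / 4)


def rhs(t, y):
    # Assumed right-hand side for this illustration: dy/dt = tan(y) + 1.
    return math.tan(y) + 1.0


def run(t0=1.0, y0=1.0, h=0.025, n_steps=4):
    """Return the list of (t_n, y_n) pairs, starting with (t0, y0)."""
    values = [(t0, y0)]
    y = y0
    for n in range(n_steps):
        y = ralston_step(rhs, t0 + n * h, y, h)
        values.append((t0 + (n + 1) * h, y))
    return values


if __name__ == "__main__":
    for t, y in run():
        print(f"t = {t:.3f}   y = {y:.9f}")
```

Each printed $y$ value is the numerical solution at the corresponding grid point $t_n = 1 + 0.025\,n$.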

Implicit Runge–Kutta methods

Explicit Runge–Kutta methods are generally unsuitable for the solution of stiff equations because their region of absolute stability is small; in particular, it is bounded.[21] This issue is especially important in the solution of partial differential equations.

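The instability of explicit Runge–Kutta methods motivates the development of implicit methods. An implicit Runge–Kutta method has the form

$$y_{n+1} = y_n + h \sum_{i=1}^{s} b_i k_i,$$

where

$$k_i = f\left(t_n + c_i h,\; y_n + h \sum_{j=1}^{s} a_{ij} k_j\right), \quad i = 1, \ldots, s.$$[22]

The difference with an explicit method is that in an explicit method, the sum over $j$ only goes up to $i - 1$.[23] This also shows up in the Butcher tableau: the coefficient matrix $a_{ij}$ of an explicit method is strictly lower triangular. In an implicit method, the sum over $j$ goes up to $s$ and the coefficient matrix is not necessarily triangular, yielding a Butcher tableau of the form[18]

$$\begin{array}{c|cccc}
c_1 & a_{11} & a_{12} & \cdots & a_{1s} \\
c_2 & a_{21} & a_{22} & \cdots & a_{2s} \\
\vdots & \vdots & \vdots & \ddots & \vdots \\
c_s & a_{s1} & a_{s2} & \cdots & a_{ss} \\
\hline
& b_1 & b_2 & \cdots & b_s
\end{array}
\;=\;
\begin{array}{c|c}
\mathbf{c} & A \\
\hline
& \mathbf{b}^T
\end{array}$$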

The consequence of this difference is that at every step, a system of algebraic equations has to be solved. This increases the computational cost considerably. If a method with s stages is used to solve a differential equation with m components, then the system of algebraic equations has ms components. This can be contrasted with implicit linear multistep methods (the other big family of methods for ODEs): an implicit s-step linear multistep method needs to solve a system of algebraic equations with only m components, so the size of the system does not increase as the number of steps increases.[24]

Examples

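The simplest example of an implicit Runge–Kutta method is the backward Euler method:

$$y_{n+1} = y_n + h f(t_n + h,\; y_{n+1}).$$

The Butcher tableau for this is simply:

$$\begin{array}{c|c}
1 & 1 \\
\hline
& 1
\end{array}$$

This Butcher tableau corresponds to the formulae

$$k_1 = f(t_n + h,\; y_n + h k_1) \quad \text{and} \quad y_{n+1} = y_n + h k_1,$$

which can be re-arranged to get the formula for the backward Euler method listed above.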
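Because the stage $k_1$ appears on both sides of its defining equation, each backward Euler step requires solving an algebraic equation. The sketch below does this for a scalar problem with Newton's method; the function name, the tolerance, and the requirement that the caller supplies the partial derivative `dfdy` are all assumptions made for this illustration.

```python
def backward_euler_step(f, dfdy, t, y, h, tol=1e-12, max_iter=50):
    """One backward Euler step: solve k = f(t + h, y + h*k), return y + h*k.

    f(t, y) is the right-hand side and dfdy(t, y) its partial derivative
    with respect to y (both scalar-valued).
    """
    k = f(t, y)                               # explicit Euler slope as first guess
    for _ in range(max_iter):
        residual = k - f(t + h, y + h * k)    # g(k) = k - f(t + h, y + h k)
        slope = 1.0 - h * dfdy(t + h, y + h * k)   # g'(k)
        delta = residual / slope
        k -= delta                            # Newton update
        if abs(delta) <= tol * (1.0 + abs(k)):
            break
    return y + h * k


# Stiff linear example: y' = -15 y with a step size at which forward Euler blows up.
y_next = backward_euler_step(lambda t, y: -15.0 * y, lambda t, y: -15.0, 0.0, 1.0, 0.25)
```

For a linear right-hand side the Newton iteration reaches the exact stage value after one update; for a system of $m$ equations the scalar division would become an $m \times m$ linear solve.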

Another example of an implicit Runge–Kutta method is the trapezoidal rule. Its Butcher tableau is:

$$\begin{array}{c|cc}
0 & 0 & 0 \\
1 & 1/2 & 1/2 \\
\hline
& 1/2 & 1/2
\end{array}$$

The trapezoidal rule is a collocation method (as discussed in that article). All collocation methods are implicit Runge–Kutta methods, but not all implicit Runge–Kutta methods are collocation methods.[25]

The Gauss–Legendre methods form a family of collocation methods based on Gauss quadrature. A Gauss–Legendre method with s stages has order 2s (thus, methods with arbitrarily high order can be constructed).[26] The method with two stages (and thus order four) has Butcher tableau:

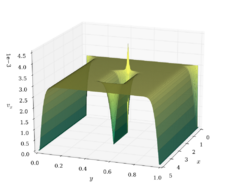

Stability

The advantage of implicit Runge–Kutta methods over explicit ones is their greater stability, especially when applied to stiff equations. Consider the linear test equation $y' = \lambda y$. A Runge–Kutta method applied to this equation reduces to the iteration $y_{n+1} = r(h\lambda)\, y_n$, with $r$ given by[27]

$$r(z) = 1 + z\, b^T (I - zA)^{-1} e = \frac{\det(I - zA + z e b^T)}{\det(I - zA)},$$

where $e$ stands for the vector of ones. The function $r$ is called the stability function.[28] It follows from the formula that $r$ is the quotient of two polynomials of degree $s$ if the method has $s$ stages. Explicit methods have a strictly lower triangular matrix $A$, which implies that $\det(I - zA) = 1$ and that the stability function is a polynomial.[29]

The numerical solution to the linear test equation decays to zero if $|r(z)| < 1$ with $z = h\lambda$. The set of such $z$ is called the domain of absolute stability. In particular, the method is said to be A-stable if all $z$ with $\operatorname{Re}(z) < 0$ are in the domain of absolute stability. The stability function of an explicit Runge–Kutta method is a polynomial, so explicit Runge–Kutta methods can never be A-stable.[29]
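For an explicit method the stability function can be evaluated without any matrix inversion: since one step on $y' = \lambda y$ multiplies $y_n$ by $r(h\lambda)$, taking $h = 1$, $\lambda = z$ and $y_n = 1$ returns $r(z)$ directly. The Python sketch below uses this observation for the classic RK4 coefficients and compares with the closed form $r(z) = 1/(1 - z)$ of backward Euler; the function names are hypothetical.

```python
def stability_explicit(a, b, z):
    """r(z) of an explicit method: one step on y' = z*y with h = 1, y_n = 1."""
    k = []
    for i in range(len(b)):
        stage = 1 + sum(a[i][j] * k[j] for j in range(i))
        k.append(z * stage)
    return 1 + sum(b_i * k_i for b_i, k_i in zip(b, k))


def stability_backward_euler(z):
    """r(z) = 1 / (1 - z), obtained from y_{n+1} = y_n + z * y_{n+1}."""
    return 1 / (1 - z)


RK4_A = [[0, 0, 0, 0], [0.5, 0, 0, 0], [0, 0.5, 0, 0], [0, 0, 1, 0]]
RK4_B = [1 / 6, 1 / 3, 1 / 3, 1 / 6]

z = -3.0
rk4_growth = abs(stability_explicit(RK4_A, RK4_B, z))   # larger than 1: unstable
be_growth = abs(stability_backward_euler(z))            # smaller than 1: stable
```

Both functions also accept complex arguments, so the same code can be used to scan the complex plane for the domain of absolute stability.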

If the method has order $p$, then the stability function satisfies $r(z) = e^z + O(z^{p+1})$ as $z \to 0$. Thus, it is of interest to study quotients of polynomials of given degrees that approximate the exponential function the best. These are known as Padé approximants. A Padé approximant with numerator of degree $m$ and denominator of degree $n$ is A-stable if and only if $m \le n \le m + 2$.[30]

The Gauss–Legendre method with $s$ stages has order $2s$, so its stability function is the Padé approximant with $m = n = s$. It follows that the method is A-stable.[31] This shows that A-stable Runge–Kutta methods can have arbitrarily high order. In contrast, the order of A-stable linear multistep methods cannot exceed two.[32]

Adaptive Runge–Kutta methods

Adaptive methods are designed to produce an estimate of the local truncation error of a single Runge–Kutta step. This is done by having two methods, one with order $p$ and one with order $p - 1$. These methods are interwoven, i.e., they have common intermediate steps. Thanks to this, estimating the error has little or negligible computational cost compared to a step with the higher-order method.

During the integration, the step size is adapted such that the estimated error stays below a user-defined threshold: if the error is too high, a step is repeated with a lower step size; if the error is much smaller, the step size is increased to save time. This results in an (almost) optimal step size, which saves computation time. Moreover, the user does not have to spend time on finding an appropriate step size.

The lower-order step is given by

$$y^*_{n+1} = y_n + h \sum_{i=1}^{s} b^*_i k_i,$$

where $k_i$ are the same as for the higher-order method. Then the error is

$$e_{n+1} = y_{n+1} - y^*_{n+1} = h \sum_{i=1}^{s} (b_i - b^*_i) k_i,$$

which is $O(h^p)$. The Butcher tableau for this kind of method is extended to give the values of $b^*_i$:

$$\begin{array}{c|cccc}
0 \\
c_2 & a_{21} \\
\vdots & \vdots & \ddots \\
c_s & a_{s1} & \cdots & a_{s,s-1} \\
\hline
& b_1 & b_2 & \cdots & b_s \\
& b^*_1 & b^*_2 & \cdots & b^*_s
\end{array}$$

The Runge–Kutta–Fehlberg method has two methods of orders 5 and 4. Its extended Butcher tableau is:

$$\begin{array}{c|cccccc}
0 \\
1/4 & 1/4 \\
3/8 & 3/32 & 9/32 \\
12/13 & 1932/2197 & -7200/2197 & 7296/2197 \\
1 & 439/216 & -8 & 3680/513 & -845/4104 \\
1/2 & -8/27 & 2 & -3544/2565 & 1859/4104 & -11/40 \\
\hline
& 16/135 & 0 & 6656/12825 & 28561/56430 & -9/50 & 2/55 \\
& 25/216 & 0 & 1408/2565 & 2197/4104 & -1/5 & 0
\end{array}$$

However, the simplest adaptive Runge–Kutta method involves combining Heun's method, which is order 2, with the Euler method, which is order 1. Its extended Butcher tableau is:

$$\begin{array}{c|cc}
0 \\
1 & 1 \\
\hline
& 1/2 & 1/2 \\
& 1 & 0
\end{array}$$

Other adaptive Runge–Kutta methods are the Bogacki–Shampine method (orders 3 and 2), the Cash–Karp method and the Dormand–Prince method (both with orders 5 and 4).
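The step-size control described in this section can be sketched with the Heun–Euler pair. The Python code below is an illustration under stated assumptions rather than a reference implementation: the safety factor 0.9, the growth limits 0.2 and 2, and the function names are choices made here. The square root in the step-size update reflects that the error estimate of this pair is $O(h^2)$.

```python
import math


def heun_euler_step(f, t, y, h):
    """Return the Heun (order 2) value and the estimated error of one step."""
    k1 = f(t, y)
    k2 = f(t + h, y + h * k1)
    y_high = y + h * (k1 + k2) / 2        # weights 1/2, 1/2 (Heun)
    y_low = y + h * k1                    # weights 1, 0 (Euler)
    return y_high, abs(y_high - y_low)


def integrate_adaptive(f, t0, y0, t_end, tol=1e-6, h=0.1):
    """Integrate y' = f(t, y) from t0 to t_end with error-controlled steps."""
    t, y = t0, y0
    steps = [(t, y)]
    while t_end - t > 1e-12 * max(1.0, abs(t_end)):
        h = min(h, t_end - t)             # do not step past the end point
        y_new, err = heun_euler_step(f, t, y, h)
        if err <= tol:                    # accept the step
            t, y = t + h, y_new
            steps.append((t, y))
        # Rescale h for the next attempt (or for the retry after a rejection).
        factor = 0.9 * math.sqrt(tol / err) if err > 0 else 2.0
        h *= min(2.0, max(0.2, factor))
    return steps


steps = integrate_adaptive(lambda t, y: -y, 0.0, 1.0, 2.0)
```

A rejected step costs two extra evaluations of $f$, but no separate computation is needed for the error estimate itself, because both methods share $k_1$ and $k_2$.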

Nonconfluent Runge–Kutta methods

A Runge–Kutta method is said to be nonconfluent[33] if all the $c_i$, $i = 1, 2, \ldots, s$, are distinct.

Runge–Kutta–Nyström methods

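Runge–Kutta–Nyström (RKN) methods are a family of methods based on the same principles as Runge–Kutta methods but for second-order initial value problems,[34][35] hence problems of the form:

$$\frac{d^2 y}{dt^2} = f(t, y, y'), \quad y(t_0) = y_0, \quad y'(t_0) = y'_0.$$

Since both $y$ and its derivative $y'$ must be approximated, a Runge–Kutta–Nyström method uses two Runge–Kutta matrices $\bar{A}$, $A$ and two sets of weights $\bar{b}$, $b$, but still only needs one set of nodes $c$. This yields a Butcher tableau of the form:

$$\begin{array}{c|c|c}
c & \bar{A} & A \\
\hline
& \bar{b}^T & b^T
\end{array}$$

Assume the approximations have been carried out up to $t_n$, with $y_n$ the approximation of $y(t_n)$ and $y'_n$ the approximation of $y'(t_n)$. The approximations at $t_{n+1}$ are the solutions of the following system:

$$\begin{aligned}
g_i &= y_n + c_i h\, y'_n + h^2 \sum_{j=1}^{s} \bar{a}_{ij}\, f(t_n + c_j h,\, g_j,\, g'_j), \quad i = 1, \ldots, s, \\
g'_i &= y'_n + h \sum_{j=1}^{s} a_{ij}\, f(t_n + c_j h,\, g_j,\, g'_j), \quad i = 1, \ldots, s, \\
y_{n+1} &= y_n + h\, y'_n + h^2 \sum_{j=1}^{s} \bar{b}_j\, f(t_n + c_j h,\, g_j,\, g'_j), \\
y'_{n+1} &= y'_n + h \sum_{j=1}^{s} b_j\, f(t_n + c_j h,\, g_j,\, g'_j).
\end{aligned}$$

Here $g_i$, $g'_i$ are the intermediate approximations of $y$ and $y'$. It is strictly equivalent to work with the values $k_i = f(t_n + c_i h, g_i, g'_i)$, where the $g_i$, $g'_i$ have been replaced with their formulas, instead of working with $g_i$, $g'_i$, similarly to what was done above for Runge–Kutta methods, but the system is easier to write this way.

A Runge–Kutta–Nyström method is said to be explicit if both $\bar{A}$, $A$ are strictly lower triangular, and in this case, the sums $\sum_{j=1}^{s}$ in the expressions of $g_i$, $g'_i$ may be replaced with $\sum_{j=1}^{i-1}$.[36] Additionally, a Runge–Kutta–Nyström method is said to be of order $p$ if the local truncation error of both $y_{n+1}$, $y'_{n+1}$ is $O(h^{p+1})$.

If the function $f$ of the considered initial value problem is independent of $y'$, one does not need to approximate the intermediate values $g'_i$ to compute the approximations; the coefficients $a_{ij}$ are hence unnecessary, and instead we write a method made only for this special case using a tableau of the form:

$$\begin{array}{c|c}
c & \bar{A} \\
\hline
& \bar{b}^T \\
& b^T
\end{array}$$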

This special case is particularly interesting since it allows for greater order than what a Runge-Kutta-Nyström can achieve in general. For example, two fourth-order explicit RKN methods are given by the following Butcher tableau:

These two schemes also preserve the symplectic structure when the original equation is derived from a conservative classical mechanical system, i.e. when

$$f_i(y_1, \ldots, y_n) = \frac{\partial V}{\partial y_i}(y_1, \ldots, y_n)$$

for some scalar function $V$.[37]

B-stability

The A-stability concept for the solution of differential equations is related to the linear autonomous equation $y' = \lambda y$. Dahlquist (1963) proposed the investigation of stability of numerical schemes when applied to nonlinear systems that satisfy a monotonicity condition. The corresponding concepts were defined as G-stability for multistep methods (and the related one-leg methods) and B-stability (Butcher, 1975) for Runge–Kutta methods. A Runge–Kutta method applied to the non-linear system $y' = f(y)$, which verifies $\langle f(y) - f(z),\, y - z \rangle \le 0$, is called B-stable, if this condition implies $\|y_{n+1} - z_{n+1}\| \le \|y_n - z_n\|$ for two numerical solutions.

Let $B$, $M$ and $Q$ be three $s \times s$ matrices defined by

$$B = \operatorname{diag}(b_1, b_2, \ldots, b_s), \qquad M = BA + A^T B - b b^T, \qquad Q = B A^{-1} + A^{-T} B - A^{-T} b b^T A^{-1}.$$

A Runge–Kutta method is said to be algebraically stable[38] if the matrices $B$ and $M$ are both non-negative definite. A sufficient condition for B-stability[39] is: $B$ and $Q$ are non-negative definite.

Derivation of the Runge–Kutta fourth-order method

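In general a Runge–Kutta method of order $s$ can be written as:

$$y_{t+h} = y_t + h \cdot \sum_{i=1}^{s} a_i k_i + \mathcal{O}(h^{s+1}),$$

where:

$$k_i = f\left(y_t + h \sum_{j=1}^{s} \beta_{ij} k_j,\; t + \alpha_i h\right)$$

are increments obtained evaluating the derivatives of $y_t$ at the $i$-th order.

We develop the derivation[40] for the Runge–Kutta fourth-order method using the general formula with $s = 4$ evaluated, as explained above, at the starting point, the midpoint and the end point of any interval $(t,\ t + h)$; thus, we choose:

$$\alpha_1 = 0, \quad \alpha_2 = \tfrac{1}{2}, \quad \alpha_3 = \tfrac{1}{2}, \quad \alpha_4 = 1, \qquad \beta_{21} = \tfrac{1}{2}, \quad \beta_{32} = \tfrac{1}{2}, \quad \beta_{43} = 1,$$

and $\beta_{ij} = 0$ otherwise. We begin by defining the following quantities:

$$\begin{aligned}
y^1_{t+h} &= y_t + h f(y_t,\ t), \\
y^2_{t+h} &= y_t + h f\left(y^1_{t+h/2},\ t + \tfrac{h}{2}\right), \\
y^3_{t+h} &= y_t + h f\left(y^2_{t+h/2},\ t + \tfrac{h}{2}\right),
\end{aligned}$$

where $y^1_{t+h/2} = \dfrac{y_t + y^1_{t+h}}{2}$ and $y^2_{t+h/2} = \dfrac{y_t + y^2_{t+h}}{2}$. If we define:

$$\begin{aligned}
k_1 &= f(y_t,\ t), \\
k_2 &= f\left(y^1_{t+h/2},\ t + \tfrac{h}{2}\right) = f\left(y_t + \tfrac{h}{2} k_1,\ t + \tfrac{h}{2}\right), \\
k_3 &= f\left(y^2_{t+h/2},\ t + \tfrac{h}{2}\right) = f\left(y_t + \tfrac{h}{2} k_2,\ t + \tfrac{h}{2}\right), \\
k_4 &= f\left(y^3_{t+h},\ t + h\right) = f\left(y_t + h k_3,\ t + h\right),
\end{aligned}$$

then for the previous relations we can show that the following equalities hold up to $\mathcal{O}(h^2)$:

$$\begin{aligned}
k_2 &= f(y_t,\ t) + \frac{h}{2} \frac{d}{dt} f(y_t,\ t), \\
k_3 &= f(y_t,\ t) + \frac{h}{2} \frac{d}{dt}\left[ f(y_t,\ t) + \frac{h}{2} \frac{d}{dt} f(y_t,\ t) \right], \\
k_4 &= f(y_t,\ t) + h \frac{d}{dt}\left[ f(y_t,\ t) + \frac{h}{2} \frac{d}{dt}\left[ f(y_t,\ t) + \frac{h}{2} \frac{d}{dt} f(y_t,\ t) \right] \right],
\end{aligned}$$

where:

$$\frac{d}{dt} f(y_t,\ t) = \frac{\partial}{\partial y} f(y_t,\ t)\, \dot{y}_t + \frac{\partial}{\partial t} f(y_t,\ t) = \ddot{y}_t$$

is the total derivative of $f$ with respect to time.

If we now express the general formula using what we just derived, writing $a, b, c, d$ for the weights $a_1, a_2, a_3, a_4$ and abbreviating $f = f(y_t,\ t)$, we obtain:

$$\begin{aligned}
y_{t+h} = {} & y_t + h \left\{ a f + b \left[ f + \frac{h}{2} \frac{df}{dt} \right] + c \left[ f + \frac{h}{2} \frac{d}{dt}\left[ f + \frac{h}{2} \frac{df}{dt} \right] \right] + d \left[ f + h \frac{d}{dt}\left[ f + \frac{h}{2} \frac{d}{dt}\left[ f + \frac{h}{2} \frac{df}{dt} \right] \right] \right] \right\} + \mathcal{O}(h^5) \\
= {} & y_t + a h f + b h f + b \frac{h^2}{2} \frac{df}{dt} + c h f + c \frac{h^2}{2} \frac{df}{dt} + c \frac{h^3}{4} \frac{d^2 f}{dt^2} \\
& + d h f + d h^2 \frac{df}{dt} + d \frac{h^3}{2} \frac{d^2 f}{dt^2} + d \frac{h^4}{4} \frac{d^3 f}{dt^3} + \mathcal{O}(h^5),
\end{aligned}$$

and comparing this with the Taylor series of $y_{t+h}$ around $t$:

$$\begin{aligned}
y_{t+h} &= y_t + h \dot{y}_t + \frac{h^2}{2} \ddot{y}_t + \frac{h^3}{6} y^{(3)}_t + \frac{h^4}{24} y^{(4)}_t + \mathcal{O}(h^5) \\
&= y_t + h f + \frac{h^2}{2} \frac{df}{dt} + \frac{h^3}{6} \frac{d^2 f}{dt^2} + \frac{h^4}{24} \frac{d^3 f}{dt^3} + \mathcal{O}(h^5),
\end{aligned}$$

we obtain a system of constraints on the coefficients:

$$\begin{cases}
a + b + c + d = 1, \\
\tfrac{1}{2} b + \tfrac{1}{2} c + d = \tfrac{1}{2}, \\
\tfrac{1}{4} c + \tfrac{1}{2} d = \tfrac{1}{6}, \\
\tfrac{1}{4} d = \tfrac{1}{24},
\end{cases}$$

which when solved gives $a = \tfrac{1}{6}$, $b = \tfrac{1}{3}$, $c = \tfrac{1}{3}$, $d = \tfrac{1}{6}$ as stated above.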

See also

- Euler's method

- List of Runge–Kutta methods

- Numerical methods for ordinary differential equations

- Runge–Kutta method (SDE)

- General linear methods

- Lie group integrator

Notes

- ↑ "Runge-Kutta method" (in en). https://www.dictionary.com/browse/runge-kutta-method.

- ↑ DEVRIES, Paul L.; HASBUN, Javier E. A first course in computational physics. Second edition. Jones and Bartlett Publishers: 2011. p. 215.

- ↑ Press et al. 2007, p. 908; Süli & Mayers 2003, p. 328

- ↑ 4.0 4.1 Atkinson (1989), Hairer, Nørsett & Wanner (1993), Kaw & Kalu (2008) and Stoer & Bulirsch (2002) leave out the factor h in the definition of the stages. Ascher & Petzold (1998), Butcher (2008) and Iserles (1996) use the y values as stages.

- ↑ 5.0 5.1 Süli & Mayers 2003, p. 328

- ↑ Press et al. 2007, p. 907

- ↑ Iserles 1996, p. 38

- ↑ 8.0 8.1 Iserles 1996, p. 39

- ↑ As a counterexample, consider any explicit 2-stage Runge–Kutta scheme with $b_1 = b_2 = 1/2$ and $c_2$ and $a_{21}$ randomly chosen. This method is consistent and (in general) first-order convergent. On the other hand, the 1-stage method with $b_1 = 1/2$ is inconsistent and fails to converge, even though it trivially holds that $\sum_{j=1}^{i-1} a_{ij} = c_i$.

- ↑ Butcher 2008, p. 187

- ↑ 11.0 11.1 11.2 Butcher 1965, p. 408

- ↑ 12.0 12.1 Butcher 1985

- ↑ Butcher 2008, pp. 187–196

- ↑ Butcher 1964

- ↑ Curtis 1970, p. 268

- ↑ Hairer, Nørsett & Wanner 1993, p. 179

- ↑ Butcher 1996, p. 247

- ↑ 18.0 18.1 Süli & Mayers 2003, p. 352

- ↑ Hairer, Nørsett & Wanner (1993) refer to Kutta (1901).

- ↑ Süli & Mayers 2003, p. 327

- ↑ Süli & Mayers 2003, pp. 349–351

- ↑ Iserles 1996, p. 41; Süli & Mayers 2003, pp. 351–352

- ↑ Butcher 2008, p. 94

- ↑ 24.0 24.1 Süli & Mayers 2003, p. 353

- ↑ Iserles 1996, pp. 43–44

- ↑ Iserles 1996, p. 47

- ↑ Hairer & Wanner 1996, pp. 40–41

- ↑ Hairer & Wanner 1996, p. 40

- ↑ 29.0 29.1 Iserles 1996, p. 60

- ↑ Iserles 1996, pp. 62–63

- ↑ Iserles 1996, p. 63

- ↑ This result is due to Dahlquist (1963).

- ↑ Lambert 1991, p. 278

- ↑ Dormand, J. R.; Prince, P. J. (October 1978). "New Runge–Kutta Algorithms for Numerical Simulation in Dynamical Astronomy". Celestial Mechanics 18 (3): 223–232. doi:10.1007/BF01230162. Bibcode: 1978CeMec..18..223D.

- ↑ Fehlberg, E. (October 1974). Classical seventh-, sixth-, and fifth-order Runge–Kutta–Nyström formulas with stepsize control for general second-order differential equations (Report) (NASA TR R-432 ed.). Marshall Space Flight Center, AL: National Aeronautics and Space Administration.

- ↑ Butcher 2008, p. 94

- ↑ Qin, Meng-Zhao; Zhu, Wen-Jie (1991-01-01). "Canonical Runge-Kutta-Nyström (RKN) methods for second order ordinary differential equations". Computers & Mathematics with Applications 22 (9): 85–95. doi:10.1016/0898-1221(91)90209-M. ISSN 0898-1221. https://dx.doi.org/10.1016/0898-1221%2891%2990209-M.

- ↑ Lambert 1991, p. 275

- ↑ Lambert 1991, p. 274

- ↑ Lyu, Ling-Hsiao (August 2016). "Appendix C. Derivation of the Numerical Integration Formulae". Institute of Space Science, National Central University. http://www.ss.ncu.edu.tw/~lyu/lecture_files_en/lyu_NSSP_Notes/Lyu_NSSP_AppendixC.pdf.

References

- Runge, Carl David Tolmé (1895), "Über die numerische Auflösung von Differentialgleichungen", Mathematische Annalen (Springer) 46 (2): 167–178, doi:10.1007/BF01446807, https://zenodo.org/record/2178704.

- Kutta, Wilhelm (1901), "Beitrag zur näherungsweisen Integration totaler Differentialgleichungen", Zeitschrift für Mathematik und Physik 46: 435–453, https://archive.org/details/zeitschriftfrma12runggoog/page/434/.

- Ascher, Uri M.; Petzold, Linda R. (1998), Computer Methods for Ordinary Differential Equations and Differential-Algebraic Equations, Philadelphia: Society for Industrial and Applied Mathematics, ISBN 978-0-89871-412-8.

- Atkinson, Kendall A. (1989), An Introduction to Numerical Analysis (2nd ed.), New York: John Wiley & Sons, ISBN 978-0-471-50023-0.

- Butcher, John C. (May 1963), "Coefficients for the study of Runge-Kutta integration processes", Journal of the Australian Mathematical Society 3 (2): 185–201, doi:10.1017/S1446788700027932.

- Butcher, John C. (May 1964), "On Runge-Kutta processes of high order", Journal of the Australian Mathematical Society 4 (2): 179–194, doi:10.1017/S1446788700023387

- Butcher, John C. (1975), "A stability property of implicit Runge-Kutta methods", BIT 15 (4): 358–361, doi:10.1007/bf01931672.

- Butcher, John C. (2000), "Numerical methods for ordinary differential equations in the 20th century", J. Comput. Appl. Math. 125 (1–2): 1–29, doi:10.1016/S0377-0427(00)00455-6, Bibcode: 2000JCoAM.125....1B.

- Butcher, John C. (2008), Numerical Methods for Ordinary Differential Equations, New York: John Wiley & Sons, ISBN 978-0-470-72335-7.

- Cellier, F.; Kofman, E. (2006), Continuous System Simulation, Springer Verlag, ISBN 0-387-26102-8.

- Dahlquist, Germund (1963), "A special stability problem for linear multistep methods", BIT 3: 27–43, doi:10.1007/BF01963532, ISSN 0006-3835.

- Forsythe, George E.; Malcolm, Michael A.; Moler, Cleve B. (1977), Computer Methods for Mathematical Computations, Prentice-Hall (see Chapter 6).

- Hairer, Ernst; Nørsett, Syvert Paul; Wanner, Gerhard (1993), Solving ordinary differential equations I: Nonstiff problems, Berlin, New York: Springer-Verlag, ISBN 978-3-540-56670-0.

- Hairer, Ernst; Wanner, Gerhard (1996), Solving ordinary differential equations II: Stiff and differential-algebraic problems (2nd ed.), Berlin, New York: Springer-Verlag, ISBN 978-3-540-60452-5.

- Iserles, Arieh (1996), A First Course in the Numerical Analysis of Differential Equations, Cambridge University Press, ISBN 978-0-521-55655-2, Bibcode: 1996fcna.book.....I.

- Lambert, J.D (1991), Numerical Methods for Ordinary Differential Systems. The Initial Value Problem, John Wiley & Sons, ISBN 0-471-92990-5

- Kaw, Autar; Kalu, Egwu (2008), Numerical Methods with Applications (1st ed.), autarkaw.com, http://numericalmethods.eng.usf.edu/topics/textbook_index.html.

- Press, William H.; Teukolsky, Saul A.; Vetterling, William T.; Flannery, Brian P. (2007), "Section 17.1 Runge-Kutta Method", Numerical Recipes: The Art of Scientific Computing (3rd ed.), Cambridge University Press, ISBN 978-0-521-88068-8, http://apps.nrbook.com/empanel/index.html#pg=907. Also, Section 17.2. Adaptive Stepsize Control for Runge-Kutta.

- Stoer, Josef; Bulirsch, Roland (2002), Introduction to Numerical Analysis (3rd ed.), Berlin, New York: Springer-Verlag, ISBN 978-0-387-95452-3.

- Süli, Endre; Mayers, David (2003), An Introduction to Numerical Analysis, Cambridge University Press, ISBN 0-521-00794-1.

- Tan, Delin; Chen, Zheng (2012), "On A General Formula of Fourth Order Runge-Kutta Method", Journal of Mathematical Science & Mathematics Education 7 (2): 1–10, http://msme.us/2012-2-1.pdf.

- John C. Butcher: "B-Series : Algebraic Analysis of Numerical Methods", Springer(SSCM, volume 55), ISBN 978-3030709556 (April, 2021).

- Butcher, J.C. (1985), "The non-existence of ten stage eighth order explicit Runge-Kutta methods", BIT Numerical Mathematics 25 (3): 521–540, doi:10.1007/BF01935372, https://link.springer.com/article/10.1007/BF01935372.

- Butcher, J.C. (1965), "On the attainable order of Runge-Kutta methods", Mathematics of Computation 19 (91): 408–417, doi:10.1090/S0025-5718-1965-0179943-X, https://www.ams.org/journals/mcom/1965-19-091/S0025-5718-1965-0179943-X/.

- Curtis, A.R. (1970), "An eighth order Runge-Kutta process with eleven function evaluations per step", Numerische Mathematik 16 (3): 268–277, doi:10.1007/BF02219778, https://link.springer.com/article/10.1007/BF02219778.

- Cooper, G.J.; Verner, J.H. (1972), "Some Explicit Runge–Kutta Methods of High Order", SIAM Journal on Numerical Analysis 9 (3): 389–405, doi:10.1137/0709037, Bibcode: 1972SJNA....9..389C, https://epubs.siam.org/doi/abs/10.1137/0709037?journalCode=sjnaam.

- Butcher, J.C. (1996), "A History of Runge-Kutta Methods", Applied Numerical Mathematics 20 (3): 247–260, doi:10.1016/0168-9274(95)00108-5, https://dx.doi.org/10.1016/0168-9274%2895%2900108-5.

External links

- Hazewinkel, Michiel, ed. (2001), "Runge-Kutta method", Encyclopedia of Mathematics, Springer Science+Business Media B.V. / Kluwer Academic Publishers, ISBN 978-1-55608-010-4, https://www.encyclopediaofmath.org/index.php?title=p/r082810

- Runge–Kutta 4th-Order Method

- Tracker Component Library Implementation in Matlab: implements 32 embedded Runge–Kutta algorithms in RungeKStep, 24 embedded Runge–Kutta–Nyström algorithms in RungeKNystroemSStep and 4 general Runge–Kutta–Nyström algorithms in RungeKNystroemGStep.
