Hadamard code

This article's lead section may be too long for the length of the article. (May 2020) |

| Hadamard code | |

|---|---|

| Named after | Jacques Hadamard |

| Classification | |

| Type | Linear block code |

| Block length | |

| Message length | |

| Rate | |

| Distance | |

| Alphabet size | |

| Notation | -code |

| Augmented Hadamard code | |

|---|---|

| Named after | Jacques Hadamard |

| Classification | |

| Type | Linear block code |

| Block length | |

| Message length | |

| Rate | |

| Distance | |

| Alphabet size | |

| Notation | -code |

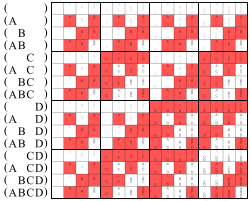

Here the white fields stand for 0

and the red fields for 1

The Hadamard code is an error-correcting code named after the French mathematician Jacques Hadamard that is used for error detection and correction when transmitting messages over very noisy or unreliable channels. In 1971, the code was used to transmit photos of Mars back to Earth from the NASA space probe Mariner 9.[1] Because of its unique mathematical properties, the Hadamard code is not only used by engineers, but also intensely studied in coding theory, mathematics, and theoretical computer science. The Hadamard code is also known under the names Walsh code, Walsh family,[2] and Walsh–Hadamard code[3] in recognition of the American mathematician Joseph Leonard Walsh.

The Hadamard code's mathematical specification is rather involved, and is described under Constructions. It is an example of a linear code of length over a binary alphabet. Unfortunately, this term is somewhat ambiguous as some references assume a message length while others assume a message length of . In this article, the first case is called the Hadamard code while the second is called the augmented Hadamard code.

The Hadamard code is unique in that each non-zero codeword has a Hamming weight of exactly , which implies that the distance of the code is also . In standard coding theory notation for block codes, the Hadamard code is a -code, that is, it is a linear code over a binary alphabet, has block length , message length (or dimension) , and minimum distance . The block length is very large compared to the message length, but on the other hand, errors can be corrected even in extremely noisy conditions.

The augmented Hadamard code is a slightly improved version of the Hadamard code; it is a -code and thus has a slightly better rate while maintaining the relative distance of , and is thus preferred in practical applications. In communication theory, this is simply called the Hadamard code and it is the same as the first order Reed–Muller code over the binary alphabet.[4]

Normally, Hadamard codes are based on Sylvester's construction of Hadamard matrices, but the term “Hadamard code” is also used to refer to codes constructed from arbitrary Hadamard matrices, which are not necessarily of Sylvester type. In general, such a code is not linear. Such codes were first constructed by Raj Chandra Bose and Sharadchandra Shankar Shrikhande in 1959.[5] If n is the size of the Hadamard matrix, the code has parameters , meaning it is a not-necessarily-linear binary code with 2n codewords of block length n and minimal distance n/2. The construction and decoding scheme described below apply for general n, but the property of linearity and the identification with Reed–Muller codes require that n be a power of 2 and that the Hadamard matrix be equivalent to the matrix constructed by Sylvester's method.

The Hadamard code is a locally decodable code, which provides a way to recover parts of the original message with high probability, while only looking at a small fraction of the received word. This gives rise to applications in computational complexity theory and particularly in the design of probabilistically checkable proofs. Since the relative distance of the Hadamard code is 1/2, normally one can only hope to recover from at most a 1/4 fraction of error. Using list decoding, however, it is possible to compute a short list of possible candidate messages as long as fewer than of the bits in the received word have been corrupted.

In code-division multiple access (CDMA) communication, the Hadamard code is referred to as Walsh Code, and is used to define individual communication channels. It is usual in the CDMA literature to refer to codewords as “codes”. Each user will use a different codeword, or “code”, to modulate their signal. Because Walsh codewords are mathematically orthogonal, a Walsh-encoded signal appears as random noise to a CDMA capable mobile terminal, unless that terminal uses the same codeword as the one used to encode the incoming signal.[6]

History

Hadamard code is the name that is most commonly used for this code in the literature. However, in modern use these error correcting codes are referred to as Walsh–Hadamard codes.

There is a reason for this:

Jacques Hadamard did not invent the code himself, but he defined Hadamard matrices around 1893, long before the first error-correcting code, the Hamming code, was developed in the 1940s.

The Hadamard code is based on Hadamard matrices, and while there are many different Hadamard matrices that could be used here, normally only Sylvester's construction of Hadamard matrices is used to obtain the codewords of the Hadamard code.

James Joseph Sylvester developed his construction of Hadamard matrices in 1867, which actually predates Hadamard's work on Hadamard matrices. Hence the name Hadamard code is disputed and sometimes the code is called Walsh code, honoring the American mathematician Joseph Leonard Walsh.

An augmented Hadamard code was used during the 1971 Mariner 9 mission to correct for picture transmission errors. The binary values used during this mission were 6 bits long, which represented 64 grayscale values.

Because of limitations of the quality of the alignment of the transmitter at the time (due to Doppler Tracking Loop issues) the maximum useful data length was about 30 bits. Instead of using a repetition code, a [32, 6, 16] Hadamard code was used.

Errors of up to 7 bits per 32-bit word could be corrected using this scheme. Compared to a 5-repetition code, the error correcting properties of this Hadamard code are much better, yet its rate is comparable. The efficient decoding algorithm was an important factor in the decision to use this code.

The circuitry used was called the "Green Machine". It employed the fast Fourier transform which can increase the decoding speed by a factor of three. Since the 1990s use of this code by space programs has more or less ceased, and the NASA Deep Space Network does not support this error correction scheme for its dishes that are greater than 26 m.

Constructions

While all Hadamard codes are based on Hadamard matrices, the constructions differ in subtle ways for different scientific fields, authors, and uses. Engineers, who use the codes for data transmission, and coding theorists, who analyse extremal properties of codes, typically want the rate of the code to be as high as possible, even if this means that the construction becomes mathematically slightly less elegant.

On the other hand, for many applications of Hadamard codes in theoretical computer science it is not so important to achieve the optimal rate, and hence simpler constructions of Hadamard codes are preferred since they can be analyzed more elegantly.

Construction using inner products

When given a binary message of length , the Hadamard code encodes the message into a codeword using an encoding function This function makes use of the inner product of two vectors , which is defined as follows:

Then the Hadamard encoding of is defined as the sequence of all inner products with :

As mentioned above, the augmented Hadamard code is used in practice since the Hadamard code itself is somewhat wasteful. This is because, if the first bit of is zero, , then the inner product contains no information whatsoever about , and hence, it is impossible to fully decode from those positions of the codeword alone. On the other hand, when the codeword is restricted to the positions where , it is still possible to fully decode . Hence it makes sense to restrict the Hadamard code to these positions, which gives rise to the augmented Hadamard encoding of ; that is, .

Construction using a generator matrix

The Hadamard code is a linear code, and all linear codes can be generated by a generator matrix . This is a matrix such that holds for all , where the message is viewed as a row vector and the vector-matrix product is understood in the vector space over the finite field . In particular, an equivalent way to write the inner product definition for the Hadamard code arises by using the generator matrix whose columns consist of all strings of length , that is,

where is the -th binary vector in lexicographical order. For example, the generator matrix for the Hadamard code of dimension is:

The matrix is a -matrix and gives rise to the linear operator .

The generator matrix of the augmented Hadamard code is obtained by restricting the matrix to the columns whose first entry is one. For example, the generator matrix for the augmented Hadamard code of dimension is:

Then is a linear mapping with .

For general , the generator matrix of the augmented Hadamard code is a parity-check matrix for the extended Hamming code of length and dimension , which makes the augmented Hadamard code the dual code of the extended Hamming code. Hence an alternative way to define the Hadamard code is in terms of its parity-check matrix: the parity-check matrix of the Hadamard code is equal to the generator matrix of the Hamming code.

Construction using general Hadamard matrices

Hadamard codes are obtained from an n-by-n Hadamard matrix H. In particular, the 2n codewords of the code are the rows of H and the rows of −H. To obtain a code over the alphabet {0,1}, the mapping −1 ↦ 1, 1 ↦ 0, or, equivalently, x ↦ (1 − x)/2, is applied to the matrix elements. That the minimum distance of the code is n/2 follows from the defining property of Hadamard matrices, namely that their rows are mutually orthogonal. This implies that two distinct rows of a Hadamard matrix differ in exactly n/2 positions, and, since negation of a row does not affect orthogonality, that any row of H differs from any row of −H in n/2 positions as well, except when the rows correspond, in which case they differ in n positions.

To get the augmented Hadamard code above with , the chosen Hadamard matrix H has to be of Sylvester type, which gives rise to a message length of .

Distance

The distance of a code is the minimum Hamming distance between any two distinct codewords, i.e., the minimum number of positions at which two distinct codewords differ. Since the Walsh–Hadamard code is a linear code, the distance is equal to the minimum Hamming weight among all of its non-zero codewords. All non-zero codewords of the Walsh–Hadamard code have a Hamming weight of exactly by the following argument.

Let be a non-zero message. Then the following value is exactly equal to the fraction of positions in the codeword that are equal to one:

The fact that the latter value is exactly is called the random subsum principle. To see that it is true, assume without loss of generality that . Then, when conditioned on the values of , the event is equivalent to for some depending on and . The probability that happens is exactly . Thus, in fact, all non-zero codewords of the Hadamard code have relative Hamming weight , and thus, its relative distance is .

The relative distance of the augmented Hadamard code is as well, but it no longer has the property that every non-zero codeword has weight exactly since the all s vector is a codeword of the augmented Hadamard code. This is because the vector encodes to . Furthermore, whenever is non-zero and not the vector , the random subsum principle applies again, and the relative weight of is exactly .

Local decodability

A locally decodable code is a code that allows a single bit of the original message to be recovered with high probability by only looking at a small portion of the received word.

A code is -query locally decodable if a message bit, , can be recovered by checking bits of the received word. More formally, a code, , is -locally decodable, if there exists a probabilistic decoder, , such that (Note: represents the Hamming distance between vectors and ):

, implies that

Theorem 1: The Walsh–Hadamard code is -locally decodable for all .

Lemma 1: For all codewords, in a Walsh–Hadamard code, , , where represent the bits in in positions and respectively, and represents the bit at position .

Proof of lemma 1

Let be the codeword in corresponding to message .

Let be the generator matrix of .

By definition, . From this, . By the construction of , . Therefore, by substitution, .

Proof of theorem 1

To prove theorem 1 we will construct a decoding algorithm and prove its correctness.

Algorithm

Input: Received word

For each :

- Pick uniformly at random.

- Pick such that , where is the -th standard basis vector and is the bitwise xor of and .

- .

Output: Message

Proof of correctness

For any message, , and received word such that differs from on at most fraction of bits, can be decoded with probability at least .

By lemma 1, . Since and are picked uniformly, the probability that is at most . Similarly, the probability that is at most . By the union bound, the probability that either or do not match the corresponding bits in is at most . If both and correspond to , then lemma 1 will apply, and therefore, the proper value of will be computed. Therefore, the probability is decoded properly is at least . Therefore, and for to be positive, .

Therefore, the Walsh–Hadamard code is locally decodable for .

Optimality

For k ≤ 7 the linear Hadamard codes have been proven optimal in the sense of minimum distance.[7]

See also

- Zadoff–Chu sequence — an improvement over the Walsh–Hadamard codes

References

- ↑ "Hadamard Codes". Coding Theory. 2006. http://www.mcs.csueastbay.edu/~malek/TeX/Hadamard.pdf.

- ↑ "On the assignment of Walsh and quasi-orthogonal codes in a multicarrier DS-CDMA system with multiple classes of users". Global Telecommunications Conference, 2002. GLOBECOM'02. IEEE. 1. IEEE. 2002-11-17. pp. 841–845. doi:10.1109/GLOCOM.2002.1188196. ISBN 0-7803-7632-3.

- ↑ "Section 19.2.2". Computational Complexity: A Modern Approach. Cambridge University Press. 2009. ISBN 978-0-521-42426-4. http://www.cs.princeton.edu/theory/complexity/.

- ↑ List decoding of binary codes. 2009. p. 3. https://www.cs.cmu.edu/~venkatg/pubs/papers/ld-binary-ms.pdf.

- ↑ "A note on a result in the theory of code construction". Information and Control 2 (2): 183–194. June 1959. doi:10.1016/S0019-9958(59)90376-6.

- ↑ "CDMA Tutorial: Intuitive Guide to Principles of Communications". Complex to Real. 2002. http://complextoreal.com/wp-content/uploads/2013/01/CDMA.pdf.

- ↑ "Optimal binary linear codes of dimension at most seven". http://www.math.unl.edu/~djaffe2/papers/sevens.html.

Further reading

- "Hamming code and Hamming bound". Lecture notes. http://www.cse.buffalo.edu/faculty/atri/courses/coding-theory/lectures/lect4.pdf.

- "46.4. Hadamard– oder Walsh–Codes" (in de). Modulationsverfahren. Cottbus, Germany: Brandenburg University of Technology (BTU). 2011-04-12. p. 214. http://www.diru-beze.de/modulationen/skripte/Modulationsverfahren.pdf. Retrieved 2021-06-14. (xiv+225 pages)

|