Reinforcement learning from human feedback

In machine learning, reinforcement learning from human feedback (RLHF) is a technique to align an intelligent agent with human preferences. It involves training a reward model to represent preferences, which can then be used to train other models through reinforcement learning.[1]

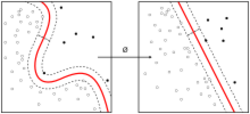

In classical reinforcement learning, an intelligent agent's goal is to learn a function that guides its behavior, called a policy.[2] The function is iteratively optimized to increase the reward signal derived from the agent's task performance.[3] However, explicitly defining a reward function that accurately approximates human preferences is challenging. Therefore, RLHF seeks to train a "reward model" directly from human feedback.[4] The reward model is first trained in a supervised manner to predict whether a response to a given prompt is good (high reward) or bad (low reward) based on ranking data collected from human annotators. This model then serves as a reward function to improve an agent's policy through an optimization algorithm like proximal policy optimization.[5][6][7]

RLHF has applications in various domains in machine learning, including natural language processing tasks such as text summarization and conversational agents, computer vision tasks like text-to-image models, and the development of video game bots. While RLHF is an effective method of training models to act better in accordance with human preferences, it also faces challenges due to the way the human preference data is collected. Though RLHF does not require massive amounts of data to improve performance, sourcing high-quality preference data is still an expensive process. Furthermore, if the data is not carefully collected from a representative sample, the resulting model may exhibit unwanted biases.

Background and motivation

Optimizing a model based on human feedback is desirable when a task is difficult to specify yet easy to judge.[8] For example, one may want to train a model to generate safe text that is both helpful and harmless (such as lacking bias, toxicity, or otherwise harmful content). Asking humans to manually create examples of harmless and harmful text would be difficult and time-consuming. However, humans are adept at swiftly assessing and comparing the harmfulness of different AI-generated text. Therefore, a more practical objective would be to allow the model to use this type of human feedback to improve its text generation.[9]

Despite the clear benefits of incorporating human feedback in training models, prior efforts—including some that leverage reinforcement learning (RL)—have encountered significant challenges. Most attempts were either narrow and difficult to generalize, breaking down on more complex tasks,[10][11][12][13] or they faced difficulties learning from sparse (lacking specific information and relating to large amounts of text at a time) or noisy (inconsistently rewarding similar outputs) reward functions.[14][15]

RLHF was not the first successful method of using human feedback for reinforcement learning, but it is one of the most widely used. The foundation for RLHF was introduced as an attempt to create a general algorithm for learning from a practical amount of human feedback.[8][5] The algorithm as used today was introduced by OpenAI in a paper on enhancing text continuation or summarization based on human feedback, and it began to gain popularity when the same method was reused in their paper on InstructGPT.[4][16][17] RLHF has also been shown to improve the robustness of RL agents and their capacity for exploration, which results in an optimization process more adept at handling uncertainty and efficiently exploring its environment in search of the highest reward.[18]

Collecting human feedback

Human feedback is commonly collected by prompting humans to rank instances of the agent's behavior.[17][19][20] These rankings can then be used to score outputs, for example, using the Elo rating system, which is an algorithm for calculating the relative skill levels of players in a game based only on the outcome of each game.[5] While ranking outputs is the most widely adopted form of feedback, recent research has explored other forms, such as numerical feedback, natural language feedback, and prompting for direct edits to the model's output.[21]
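The Elo update can be sketched in a few lines of Python. This is a minimal illustration, assuming the standard logistic Elo formula with a K-factor of 32 and a starting rating of 1000; those constants, the function names and the output labels are illustrative choices rather than details from the cited work.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def elo_scores(comparisons, k_factor=32.0, initial=1000.0):
    """Turn (winner, loser) pairs into Elo ratings, processed in order."""
    ratings = {}
    for winner, loser in comparisons:
        r_w = ratings.setdefault(winner, initial)
        r_l = ratings.setdefault(loser, initial)
        e_w = expected_score(r_w, r_l)  # winner's expected score before the update
        # The winner scored 1 and the loser 0; both move by k * (actual - expected).
        ratings[winner] = r_w + k_factor * (1.0 - e_w)
        ratings[loser] = r_l - k_factor * (1.0 - e_w)
    return ratings


# Hypothetical annotator judgements over three candidate outputs.
judgements = [("output_a", "output_b"), ("output_a", "output_c"), ("output_b", "output_c")]
print(elo_scores(judgements))
```

Because each update moves the winner up and the loser down by the same amount, the ratings stay zero-sum around the starting value, and only their differences carry meaning.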

One initial motivation of RLHF was that it requires relatively small amounts of comparison data to be effective.[8] It has been shown that a small amount of data can yield results comparable to those obtained with a larger amount. In addition, increasing the amount of data tends to be less effective than proportionally increasing the size of the reward model.[16] Nevertheless, a larger and more diverse amount of data can be crucial for tasks where it is important to avoid bias from a partially representative group of annotators.[17]

When learning from human feedback through pairwise comparisons under the Bradley–Terry–Luce model (or the Plackett–Luce model for K-wise comparisons among more than two options), the maximum likelihood estimator (MLE) for linear reward functions has been shown to converge if the comparison data is generated under a well-specified linear model. This implies that, under certain conditions, a model trained to predict which choice people prefer within pairs (or groups) of choices becomes more accurate at predicting future preferences as it sees more comparisons. This improvement is expected as long as the comparisons it learns from are based on a consistent and simple rule.[22][23]
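The convergence result can be illustrated on synthetic data. The sketch below assumes a two-dimensional linear reward $r(x) = w \cdot x$, draws comparisons from a Bradley–Terry model with hypothetical true weights, and recovers them by plain gradient ascent on the average log-likelihood. It illustrates the well-specified setting only and is not a reproduction of the cited analyses.

```python
import math
import random


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def fit_linear_bt(pairs, dim, lr=1.0, steps=300):
    """Maximum-likelihood fit of a linear reward r(x) = w . x under Bradley-Terry.

    Each pair is (features_of_preferred, features_of_rejected); the model says
    P(preferred beats rejected) = sigmoid(w . (preferred - rejected)).
    """
    diffs = [[a - b for a, b in zip(win, lose)] for win, lose in pairs]
    w = [0.0] * dim
    for _ in range(steps):
        grad = [0.0] * dim
        for d in diffs:
            margin = sum(wi * di for wi, di in zip(w, d))
            # Gradient of log sigmoid(w . d) with respect to w is (1 - sigmoid) * d.
            scale = 1.0 - sigmoid(margin)
            for i in range(dim):
                grad[i] += scale * d[i]
        # Gradient ascent on the average log-likelihood.
        w = [wi + lr * gi / len(diffs) for wi, gi in zip(w, grad)]
    return w


# Synthetic comparisons from a well-specified linear model (true weights are assumed).
rng = random.Random(0)
true_w = [2.0, -1.0]
pairs = []
for _ in range(2000):
    a = [rng.uniform(-1, 1) for _ in range(2)]
    b = [rng.uniform(-1, 1) for _ in range(2)]
    p_a = sigmoid(sum(t * (ai - bi) for t, ai, bi in zip(true_w, a, b)))
    pairs.append((a, b) if rng.random() < p_a else (b, a))

w_hat = fit_linear_bt(pairs, dim=2)
print(w_hat)  # should land close to true_w
```

With more comparisons drawn from the same model, the estimate typically moves closer to the true weights; if the data came from a different (misspecified) rule, no such guarantee would apply.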

Both offline data collection, where the model learns from a static dataset and updates its policy in batches, and online data collection, where the model interacts directly with a dynamic environment and updates its policy immediately, have been studied mathematically, yielding sample complexity bounds for RLHF under different feedback models.[22][24]

In the offline data collection model, when the objective is policy training, a pessimistic MLE that incorporates a lower confidence bound as the reward estimate is most effective. Moreover, when applicable, it has been shown that considering K-wise comparisons directly is asymptotically more efficient than converting them into pairwise comparisons for prediction purposes.[24][25][17]

In the online scenario, when human feedback is collected through pairwise comparisons under the Bradley–Terry–Luce model and the objective is to minimize the algorithm's regret (the difference in performance compared to an optimal agent), it has been shown that an optimistic MLE that incorporates an upper confidence bound as the reward estimate can be used to design sample efficient algorithms (meaning that they require relatively little training data). A key challenge in RLHF when learning from pairwise (or dueling) comparisons is associated with the non-Markovian nature of its optimal policies. Unlike simpler scenarios where the optimal strategy does not require memory of past actions, in RLHF, the best course of action often depends on previous events and decisions, making the strategy inherently memory-dependent.[23]
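A simplified, bandit-style sketch of the pessimism and optimism principles follows. It assumes each candidate option has an estimated reward and a comparison count, and uses a confidence width of $c/\sqrt{n}$; this width and the option names are illustrative assumptions, not the estimators analyzed in the cited papers.

```python
import math


def confidence_bounds(mean, count, c=1.0):
    """Return (lower, upper) confidence bounds with a c / sqrt(n) width."""
    width = c / math.sqrt(count)
    return mean - width, mean + width


def pessimistic_choice(estimates, c=1.0):
    """Offline: pick the option whose lower confidence bound is highest."""
    return max(estimates, key=lambda k: confidence_bounds(*estimates[k], c)[0])


def optimistic_choice(estimates, c=1.0):
    """Online: pick the option whose upper confidence bound is highest."""
    return max(estimates, key=lambda k: confidence_bounds(*estimates[k], c)[1])


# option -> (estimated reward, number of comparisons observed); values are made up.
estimates = {"well_studied": (0.6, 100), "rarely_seen": (0.7, 1)}
print(pessimistic_choice(estimates))  # well_studied
print(optimistic_choice(estimates))   # rarely_seen
```

The pessimistic rule avoids options whose high estimate rests on little data, which suits a fixed offline dataset; the optimistic rule deliberately tries them, which drives exploration when new feedback can still be collected.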

Applications

RLHF has been applied to various domains of natural language processing (NLP), such as conversational agents, text summarization, and natural language understanding.[26][16] Ordinary reinforcement learning, in which agents learn from their actions based on a predefined "reward function", is difficult to apply to NLP tasks because the rewards tend to be difficult to define or measure, especially when dealing with complex tasks that involve human values or preferences.[8] RLHF can steer NLP models, in particular language models, to provide answers that align with human preferences with regard to such tasks by capturing their preferences beforehand in the reward model. This results in a model capable of generating more relevant responses and rejecting inappropriate or irrelevant queries.[17][27] Some notable examples of RLHF-trained language models are OpenAI's ChatGPT (and its predecessor InstructGPT),[19][28][29] DeepMind's Sparrow,[30][31][32] Google's Gemini,[33] and Anthropic's Claude.[34]

In computer vision, RLHF has also been used to align text-to-image models. Studies that successfully used RLHF for this goal have noted that the use of KL regularization in RLHF, which aims to prevent the learned policy from straying too far from the unaligned model, helped to stabilize the training process by reducing overfitting to the reward model. The final image outputs from models trained with KL regularization were noted to be of significantly higher quality than those trained without.[35][36] Other methods tried to incorporate the feedback through more direct training—based on maximizing the reward without the use of reinforcement learning—but conceded that an RLHF-based approach would likely perform better due to the online sample generation used in RLHF during updates as well as the aforementioned KL regularization over the prior model, which mitigates overfitting to the reward function.[37]

RLHF was initially applied in other areas, such as the development of video game bots and tasks in simulated robotics. For example, OpenAI and DeepMind trained agents to play Atari games based on human preferences. In classical RL-based training of such bots, the reward function is simply correlated with how well the agent is performing in the game, usually using metrics like the in-game score. In contrast, in RLHF, a human is periodically presented with two clips of the agent's behavior in the game and must decide which one looks better. This approach can teach agents to perform at a competitive level without ever having access to their score. In fact, it was shown that RLHF can sometimes lead to better performance than RL with score metrics, because the human's preferences can contain more useful information than performance-based metrics.[8][38] The agents achieved strong performance in many of the environments tested, often surpassing human performance.[39]

Training

In RLHF, two different models are trained: a reward model and a reinforcement learning policy. The reward model learns to determine what behavior is desirable based on human feedback, while the policy is guided by the reward model to determine the agent's actions. Both models are commonly initialized using a pre-trained autoregressive language model. This model is then customarily trained in a supervised manner on a relatively small dataset of pairs of prompts to an assistant and their accompanying responses, written by human annotators.

Reward model

The reward model is a function that takes a string (piece of text) as input, and produces a single number, which is the "reward".[40]

It is usually initialized with a pre-trained model, as this initializes it with an understanding of language and focuses training explicitly on learning human preferences. In addition to being used to initialize the reward model and the RL policy, the model is then also used to sample data to be compared by annotators.[17][16]

The reward model is then trained by replacing the final layer of the previous model with a randomly initialized regression head. This change shifts the model from its original classification task over its vocabulary to simply outputting a number corresponding to the score of any given prompt and response. This model is trained on the human preference comparison data collected earlier from the supervised model. In particular, it is trained to minimize the following cross-entropy loss function:

$$\mathcal{L}(\theta) = -\frac{1}{\binom{K}{2}}\,\mathbb{E}_{(x,y_w,y_l)}\left[\log\sigma\left(r_\theta(x,y_w) - r_\theta(x,y_l)\right)\right] = -\frac{1}{\binom{K}{2}}\,\mathbb{E}_{(x,y_w,y_l)}\left[\log\frac{e^{r_\theta(x,y_w)}}{e^{r_\theta(x,y_w)} + e^{r_\theta(x,y_l)}}\right]$$

where $K$ is the number of responses the labelers ranked, $r_\theta(x,y)$ is the output of the reward model for prompt $x$ and completion $y$, $y_w$ is the preferred completion over $y_l$, $\sigma(x)$ denotes the sigmoid function, and $\mathbb{E}[X]$ denotes the expected value.[17] This can be thought of as a form of logistic regression, where the model predicts the probability that a response $y_w$ is preferred over $y_l$.

This loss function essentially measures the difference between the reward model's predictions and the decisions made by humans. The goal is to make the model's guesses as close as possible to the humans' preferences by minimizing the difference measured by this equation. In the case of only pairwise comparisons, $K = 2$, so the factor $1/\binom{K}{2} = 1$.[16] In general, all $\binom{K}{2}$ comparisons from each prompt are used for training as a single batch.[17]
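For a single prompt with $K$ ranked responses, the loss can be computed as sketched below, assuming the reward model's scores are already available as plain numbers and are listed in order of preference. The function names are hypothetical; a real implementation would compute the same quantity on tensors and backpropagate through $r_\theta$.

```python
import math
from itertools import combinations


def log_sigmoid(z: float) -> float:
    # Numerically stable log(sigmoid(z)).
    return -math.log1p(math.exp(-z)) if z >= 0 else z - math.log1p(math.exp(z))


def ranking_loss(rewards_in_rank_order):
    """Pairwise reward-model loss for one prompt.

    rewards_in_rank_order[i] is r_theta(x, y_i), listed from most to least
    preferred, so in every pair (i, j) with i < j, y_i plays the role of y_w.
    All C(K, 2) pairs are averaged, matching the 1 / C(K, 2) factor.
    """
    pairs = list(combinations(range(len(rewards_in_rank_order)), 2))
    total = sum(
        -log_sigmoid(rewards_in_rank_order[w] - rewards_in_rank_order[l])
        for w, l in pairs
    )
    return total / len(pairs)


print(ranking_loss([0.0, 0.0]))        # log(2) ~= 0.693: rewards express no preference
print(ranking_loss([2.0, 1.0, -1.0]))  # small: rewards agree with the ranking
print(ranking_loss([-1.0, 1.0, 2.0]))  # large: rewards contradict the ranking
```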

After training, the outputs of the model are normalized such that the reference completions have a mean score of 0; that is, $\sum_{(x,y)} r_\theta(x,y) = 0$ over the query and reference-completion pairs $(x,y)$ of the training dataset.[16] This is done by calculating the mean reward across the training dataset and subtracting it through the bias of the reward head.
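A minimal sketch of this normalization, assuming the reward head's output is a linear function of the final hidden state plus a scalar bias, so that shifting the bias shifts every reward by the same amount. The function name is hypothetical.

```python
def normalize_reward_bias(raw_rewards, bias=0.0):
    """Shift the reward head's bias so reference completions average to zero.

    raw_rewards are r_theta(x, y) for reference (x, y) pairs in the training set.
    Returns the new bias and the correspondingly shifted rewards.
    """
    mean = sum(raw_rewards) / len(raw_rewards)
    new_bias = bias - mean
    return new_bias, [r - mean for r in raw_rewards]


new_bias, normalized = normalize_reward_bias([1.5, 2.5, 0.5])
print(new_bias, normalized)  # -1.5 [0.0, 1.0, -1.0]
```

Because the shift is the same for every input, it does not change which of two responses the reward model prefers; it only fixes the reward scale's zero point.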

Policy

The policy model is a function that takes a string as input, and produces another string. Usually in language modeling, the output string is not produced in one forward pass, but by multiple forward passes, generated autoregressively. Similarly to the reward model, the human feedback policy is also initialized from a pre-trained model.[16]

The key idea is to treat language generation as a game to be learned by RL. In RL, a policy is a function that maps a game state to a game action. In RLHF, the "game" is the game of replying to prompts. A prompt and all previously generated tokens form the game state, and generating a new token is a game action.[41]
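The game view can be made concrete with a toy rollout loop. The sketch assumes a hypothetical `policy` function that maps a state (a tuple of tokens) to a dictionary of next-token probabilities; it records each (state, action) pair as an RL algorithm would see it.

```python
import random


def rollout(policy, prompt, max_tokens=10, eos="<eos>", seed=0):
    """Generate a reply token by token, recording (state, action) pairs.

    The state is the prompt plus all tokens generated so far; the action is
    the next token, sampled from the policy's distribution for that state.
    """
    rng = random.Random(seed)
    state = tuple(prompt)
    trajectory = []
    for _ in range(max_tokens):
        probs = policy(state)  # dict: token -> probability
        tokens, weights = zip(*probs.items())
        action = rng.choices(tokens, weights=weights)[0]
        trajectory.append((state, action))
        if action == eos:
            break
        state = state + (action,)  # the chosen token becomes part of the next state
    return trajectory


def toy_policy(state):
    # Hypothetical policy: emits words until three tokens follow a two-token prompt.
    return {"<eos>": 1.0} if len(state) >= 5 else {"hi": 0.9, "there": 0.1}


for state, action in rollout(toy_policy, ["hello", "bot"]):
    print(state, "->", action)
```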

The first step in its training is supervised fine-tuning (SFT). This step does not require the reward model. Instead, the pre-trained model is trained on a dataset $D_{SFT}$ that contains prompt-response pairs $(x,y)$. During SFT, the model is trained to auto-regressively generate the corresponding response $y$ when given a random prompt $x$. The original paper recommends performing SFT for only one epoch, since more than that causes overfitting.

The dataset is usually written by human contractors, who write both the prompts and responses.
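A common way to implement the SFT objective is the average negative log-likelihood of the response tokens, with the prompt used only as context. The sketch below assumes a hypothetical `next_token_probs` interface and a four-token vocabulary; it is not the training code of any cited paper.

```python
import math


def sft_loss(next_token_probs, prompt, response):
    """Average negative log-likelihood of the response tokens given the prompt.

    next_token_probs(context) returns a dict token -> probability. Prompt
    tokens serve as context only; the loss is computed on response tokens.
    """
    context = list(prompt)
    nll = 0.0
    for token in response:
        nll -= math.log(next_token_probs(tuple(context))[token])
        context.append(token)  # teacher forcing: condition on the true token
    return nll / len(response)


VOCAB = ["yes", "no", "maybe", "<eos>"]


def uniform_model(context):
    # Hypothetical untrained model: every token is equally likely.
    return {t: 1.0 / len(VOCAB) for t in VOCAB}


print(sft_loss(uniform_model, ["is", "it", "ok", "?"], ["yes", "<eos>"]))  # log(4) ~= 1.386
```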

The second step uses a policy gradient method to optimize the policy against the reward model. It uses a dataset $D_{RL}$, which contains prompts but not responses. Like most policy gradient methods, this algorithm has an outer loop and two inner loops:

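- Initialize the policy $\pi^{RL}_\phi$ to $\pi^{SFT}$, the policy output from SFT.

- Loop for many steps.

  - Initialize a new empty dataset $D_{\pi^{RL}_\phi}$.

  - Loop for many steps:

    - Sample a random prompt $x$ from $D_{RL}$.

    - Generate a response $y$ from the policy $\pi^{RL}_\phi$.

    - Calculate the reward signal $r_\theta(x,y)$ from the reward model $r_\theta$.

    - Add the triple $(x, y, r_\theta(x,y))$ to $D_{\pi^{RL}_\phi}$.

  - Update $\phi$ by a policy gradient method to increase the objective function

    $$\text{objective}(\phi) = \mathbb{E}_{(x,y)\sim D_{\pi^{RL}_\phi}}\left[r_\theta(x,y) - \beta\log\frac{\pi^{RL}_\phi(y|x)}{\pi^{SFT}(y|x)}\right]$$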

Note that $(x,y)\sim D_{\pi^{RL}_\phi}$ is equivalent to $x\sim D_{RL},\ y\sim\pi^{RL}_\phi(\cdot|x)$, which means "sample a prompt from $D_{RL}$, then sample a response from the policy".

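The objective function has two parts. The first part is simply the expected reward $\mathbb{E}[r]$, and is standard for any RL algorithm. The second part is a "penalty term" involving the KL divergence: averaged over responses sampled from $\pi^{RL}_\phi$, the term $\log\left(\pi^{RL}_\phi(y|x)/\pi^{SFT}(y|x)\right)$ equals $D_{KL}\left(\pi^{RL}_\phi(\cdot|x)\,\|\,\pi^{SFT}(\cdot|x)\right)$. The strength of the penalty term is determined by the hyperparameter $\beta$.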
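Given sampled triples, the objective can be estimated by a sample average. The sketch assumes each sample stores the reward-model score and the log-probabilities of the response under the current and SFT policies; the field names and values are hypothetical.

```python
def kl_penalized_objective(samples, beta):
    """Monte Carlo estimate of the RLHF objective from sampled (x, y) pairs.

    Each sample holds the reward-model score r_theta(x, y) and the
    log-probabilities of y under the current policy and the SFT policy.
    """
    total = 0.0
    for s in samples:
        log_ratio = s["logp_rl"] - s["logp_sft"]  # log(pi_RL(y|x) / pi_SFT(y|x))
        total += s["reward"] - beta * log_ratio
    return total / len(samples)


samples = [
    {"reward": 1.0, "logp_rl": -2.0, "logp_sft": -3.0},  # policy drifted from SFT
    {"reward": 0.5, "logp_rl": -4.0, "logp_sft": -4.0},  # unchanged from SFT
]
print(kl_penalized_objective(samples, beta=0.1))  # ~= 0.7
```

The first sample loses 0.1 of its reward because the policy now assigns its response more probability than the SFT model did; the second keeps its full reward.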

This KL term works by penalizing the KL divergence (a measure of statistical distance between distributions) between the model being fine-tuned and the initial supervised model. By choosing an appropriate $\beta$, the training can balance learning from new data while retaining useful information from the initial model, increasing generalization by avoiding fitting too closely to the new data. Aside from preventing the new model from producing outputs too dissimilar from those of the initial model, a second motivation for including the KL term is to encourage the model to output high-entropy text, so as to prevent it from collapsing to a small number of canned responses.[16]

In simpler terms, the objective function calculates how well the policy's responses are expected to align with human feedback. The policy generates responses to prompts, and each response is evaluated both on how well it matches human preferences (as measured by the reward model) and how similar it is to responses the model would naturally generate. The goal is to balance improving alignment with human preferences while ensuring the model's responses remain diverse and not too far removed from what it has learned during its initial training. This helps the model not only to provide answers that people find useful or agreeable but also to maintain a broad understanding and avoid overly narrow or repetitive responses.
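The balance between reward and KL penalty can be seen on a toy problem with one prompt and three candidate responses. To keep the sketch deterministic, it computes the exact expected gradient for a softmax policy instead of estimating it from sampled responses as RLHF does in practice; the reward values and $\beta$ are assumptions. For this objective, the optimum is known to be proportional to $\pi^{SFT}(y|x)\exp(r(x,y)/\beta)$, so the result can be checked against that closed form.

```python
import math


def softmax(logits):
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def optimize_policy(rewards, ref_probs, beta, lr=1.0, steps=2000):
    """Gradient ascent on E_y[r(y) - beta * log(pi(y) / pi_ref(y))] for one prompt.

    The policy is a softmax over logits for a small fixed set of candidate
    responses, so the expectation and its gradient can be computed exactly.
    """
    logits = [0.0] * len(rewards)
    for _ in range(steps):
        pi = softmax(logits)
        # Per-response KL-penalized reward f(y).
        f = [r - beta * math.log(p / q) for r, p, q in zip(rewards, pi, ref_probs)]
        baseline = sum(p * fy for p, fy in zip(pi, f))
        # Exact policy gradient for softmax logits: pi(k) * (f(k) - E_pi[f]).
        logits = [z + lr * p * (fy - baseline) for z, p, fy in zip(logits, pi, f)]
    return softmax(logits)


rewards = [1.0, 0.5, 0.0]    # hypothetical reward-model scores for three responses
ref = [1 / 3, 1 / 3, 1 / 3]  # SFT policy
print(optimize_policy(rewards, ref, beta=0.5))
```

With $\beta = 0.5$, the best response ends up with about two thirds of the probability mass rather than all of it: a larger $\beta$ keeps the policy closer to the SFT distribution, while a smaller one lets it concentrate on the highest-reward response.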

Proximal policy optimization

The policy function is usually trained by the proximal policy optimization (PPO) algorithm. That is, the parameter $\phi$ is trained by gradient ascent on the clipped surrogate function.[17][16]

Classically, the PPO algorithm employs generalized advantage estimation, which means that there is an extra value estimator $V_\xi(x)$ that is updated concurrently with the policy during PPO training: $\pi^{RL}_{\phi_t}, V_{\xi_t}, \pi^{RL}_{\phi_{t+1}}, V_{\xi_{t+1}}, \dots$.[42] The value estimator is used only during training, and not outside of training.

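PPO performs gradient ascent on the following clipped surrogate objective:

$$L_{\text{PPO}}(\phi) := \mathbb{E}_{x\sim D_{RL},\,y\sim\pi^{RL}_{\phi_t}(y|x)}\left[\min\left(\frac{\pi^{RL}_\phi(y|x)}{\pi^{RL}_{\phi_t}(y|x)}A(x,y),\ \mathrm{clip}\left(\frac{\pi^{RL}_\phi(y|x)}{\pi^{RL}_{\phi_t}(y|x)}, 1-\epsilon, 1+\epsilon\right)A(x,y)\right)\right]$$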

where $\epsilon$ is a small clipping hyperparameter and the advantage term $A(x,y)$ is defined as $r_\theta(x,y) - V_{\xi_t}(x)$. That is, the advantage is the difference between the reward actually obtained for the response and the value estimate (the expected return under the current policy), so it is positive when a response does better than expected. The surrogate is used to train the policy by gradient ascent, usually with a momentum-based optimizer such as the Adam optimizer.
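The clipped surrogate can be evaluated per sample as sketched below. This is a minimal illustration assuming sequence-level log-probabilities and precomputed advantages, with $\epsilon = 0.2$ chosen as an example value; in practice the objective is computed on tensors and differentiated automatically.

```python
import math


def ppo_clipped_surrogate(samples, epsilon=0.2):
    """Sample average of min(ratio * A, clip(ratio, 1 - eps, 1 + eps) * A).

    Each sample holds log-probabilities of y|x under the policy being updated
    (logp_new) and under the policy that generated the data (logp_old),
    plus the advantage A(x, y) = r_theta(x, y) - V(x).
    """
    total = 0.0
    for s in samples:
        ratio = math.exp(s["logp_new"] - s["logp_old"])
        clipped = min(max(ratio, 1.0 - epsilon), 1.0 + epsilon)
        total += min(ratio * s["advantage"], clipped * s["advantage"])
    return total / len(samples)


samples = [
    {"logp_new": math.log(0.6), "logp_old": math.log(0.4), "advantage": 1.0},   # ratio 1.5
    {"logp_new": math.log(0.2), "logp_old": math.log(0.4), "advantage": -1.0},  # ratio 0.5
]
print(ppo_clipped_surrogate(samples))  # ~= 0.2
```

Taking the minimum of the clipped and unclipped terms makes the objective a pessimistic bound, which removes the incentive to push the probability ratio outside $[1-\epsilon, 1+\epsilon]$ in a single update.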

The original paper initialized the value estimator from the trained reward model.[16] Since PPO is an actor-critic algorithm, the value estimator is updated concurrently with the policy by minimizing the squared TD-error, which in this case equals the squared advantage term:

$$L_{TD}(\xi) = \mathbb{E}_{(x,y)\sim D_{\pi^{RL}_{\phi_t}}}\left[\left(r_\theta(x,y) - V_\xi(x)\right)^2\right]$$

which is minimized by gradient descent. Loss functions other than the squared TD-error can also be used. See the actor-critic algorithm page for details.
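A tabular version of the value update shows what minimizing the squared advantage does. The sketch assumes one learnable value per prompt, a hypothetical simplification of the neural value estimator; gradient descent drives each $V(x)$ toward the average reward observed for that prompt.

```python
def fit_values(rollouts, lr=0.1, steps=200):
    """Tabular value estimator V(x) fitted by gradient descent on the squared advantage.

    rollouts is a list of (prompt, reward) pairs; the loss for a prompt is the
    mean of (r - V(x))^2 over its rollouts.
    """
    values = {x: 0.0 for x, _ in rollouts}
    for _ in range(steps):
        grads = {x: 0.0 for x in values}
        counts = {x: 0 for x in values}
        for x, r in rollouts:
            grads[x] += -2.0 * (r - values[x])  # derivative of (r - V)^2 with respect to V
            counts[x] += 1
        for x in values:
            values[x] -= lr * grads[x] / counts[x]
    return values


rollouts = [("prompt_a", 1.0), ("prompt_a", 3.0), ("prompt_b", -0.5)]
print(fit_values(rollouts))  # converges toward the mean reward per prompt
```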

Mixing pretraining gradients

A third term is commonly added to the objective function to prevent catastrophic forgetting. For example, if the model is trained only on customer service conversations, it might forget general knowledge in geography. To prevent this, the RLHF process incorporates the original language modeling objective. That is, some random texts $x$ are sampled from the original pretraining dataset $D_{\text{pretrain}}$, and the model is trained to maximize the log-likelihood of the text, $\log\pi^{RL}_\phi(x)$. The final objective function is written as:

$$L(\phi) = \mathbb{E}_{x\sim D_{RL},\,y\sim\pi^{RL}_\phi(y|x)}\left[r_\theta(x,y) - \beta\log\frac{\pi^{RL}_\phi(y|x)}{\pi^{SFT}(y|x)}\right] + \gamma\,\mathbb{E}_{x\sim D_{\text{pretrain}}}\left[\log\pi^{RL}_\phi(x)\right]$$

where $\gamma$ controls the strength of this pretraining term.[17] This combined objective function is called PPO-ptx, where "ptx" means "Mixing Pretraining Gradients".[9] It was first used in the InstructGPT paper.[17]

In total, this objective function defines the method for adjusting the RL policy, blending the aim of aligning with human feedback and maintaining the model's original language understanding.

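So, written out fully, the PPO-ptx objective function is:

$$L_{\text{PPO-ptx}}(\phi) := \mathbb{E}_{x\sim D_{RL},\,y\sim\pi^{RL}_{\phi_t}(y|x)}\left[\min\left(\frac{\pi^{RL}_\phi(y|x)}{\pi^{RL}_{\phi_t}(y|x)}A(x,y),\ \mathrm{clip}\left(\frac{\pi^{RL}_\phi(y|x)}{\pi^{RL}_{\phi_t}(y|x)}, 1-\epsilon, 1+\epsilon\right)A(x,y)\right) - \beta\log\frac{\pi^{RL}_\phi(y|x)}{\pi^{SFT}(y|x)}\right] + \gamma\,\mathbb{E}_{x\sim D_{\text{pretrain}}}\left[\log\pi^{RL}_\phi(x)\right]$$

which is maximized by gradient ascent.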
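The three terms can be combined into a single estimate, as sketched below. The sketch assumes the same per-sample quantities as a plain PPO implementation plus log-likelihoods of pretraining texts; all field and parameter names are hypothetical, and the values are made up.

```python
import math


def ppo_ptx_objective(samples, pretrain_logps, beta, gamma, epsilon=0.2):
    """Estimate of the PPO-ptx objective from sampled data.

    samples: dicts with logp_new, logp_old, logp_sft (log-probs of y|x under the
    policy being updated, the data-generating policy, and the SFT policy) and
    the advantage A(x, y).
    pretrain_logps: log pi_RL(x) for texts x drawn from the pretraining set.
    """
    rl_term = 0.0
    for s in samples:
        ratio = math.exp(s["logp_new"] - s["logp_old"])
        clipped = min(max(ratio, 1.0 - epsilon), 1.0 + epsilon)
        surrogate = min(ratio * s["advantage"], clipped * s["advantage"])
        kl_penalty = beta * (s["logp_new"] - s["logp_sft"])
        rl_term += surrogate - kl_penalty
    rl_term /= len(samples)
    ptx_term = gamma * sum(pretrain_logps) / len(pretrain_logps)
    return rl_term + ptx_term


samples = [{"logp_new": math.log(0.6), "logp_old": math.log(0.4),
            "logp_sft": math.log(0.5), "advantage": 1.0}]
print(ppo_ptx_objective(samples, pretrain_logps=[-2.0, -4.0], beta=0.1, gamma=0.5))
```

Raising $\gamma$ increases the weight on keeping pretraining text likely, at the cost of the reward-driven terms.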

Limitations

RLHF suffers from challenges with collecting human feedback, learning a reward model, and optimizing the policy.[43] Compared to data collection for techniques like unsupervised or self-supervised learning, collecting data for RLHF is less scalable and more expensive. Its quality and consistency may vary depending on the task, interface, and the preferences and biases of individual humans.[17][44]

The effectiveness of RLHF depends on the quality of human feedback. For instance, the model may become biased, favoring certain groups over others, if the feedback lacks impartiality, is inconsistent, or is incorrect.[5][45] There is a risk of overfitting, where the model memorizes specific feedback examples instead of learning to generalize. For instance, feedback predominantly from a specific demographic might lead the model to learn peculiarities or noise, along with the intended alignment. Excessive alignment to the specific feedback it received (that is, to the bias therein) can lead to the model performing sub-optimally in new contexts or when used by different groups.[46] A single reward function cannot always represent the opinions of diverse groups of people. Even with a representative sample, conflicting views and preferences may result in the reward model favoring the majority's opinion, potentially disadvantaging underrepresented groups.[43]

In some cases, as is possible in regular reinforcement learning, there may be a risk of the model learning to manipulate the feedback process or game the system to achieve higher rewards rather than genuinely improving its performance.[47] In the case of RLHF, a model may learn to exploit the fact that it is rewarded for what is evaluated positively and not necessarily for what is actually good, which can lead to it learning to persuade and manipulate. For example, models might learn that apparent confidence, even if inaccurate, garners higher rewards. Because studies have found that humans are not skilled at identifying mistakes in LLM outputs on complex tasks, such confident-sounding yet incorrect text can go unnoticed during training and cause significant problems when the model is deployed.[43]

Alternatives

Reinforcement learning from AI feedback

Similarly to RLHF, reinforcement learning from AI feedback (RLAIF) relies on training a preference model, except that the feedback is automatically generated.[48] This is notably used in Anthropic's constitutional AI, where the AI feedback is based on the conformance to the principles of a constitution.[49]

Direct alignment algorithms

Direct alignment algorithms (DAA) have been proposed as a new class of algorithms[50][51] that seek to directly optimize large language models (LLMs) on human feedback data in a supervised manner instead of the traditional policy-gradient methods.

These algorithms aim to align models with human intent more transparently by removing the intermediate step of training a separate reward model. Instead of first predicting human preferences and then optimizing against those predictions, direct alignment methods train models end-to-end on human-labeled or curated outputs. This reduces potential misalignment risks introduced by proxy objectives or reward hacking.

By directly optimizing for the behavior preferred by humans, these approaches often enable tighter alignment with human values, improved interpretability, and simpler training pipelines compared to RLHF.

Direct preference optimization

Direct preference optimization (DPO) is a technique to learn human preferences. Like RLHF, it has been applied to align pre-trained large language models using human-generated preference data. Unlike RLHF, however, which first trains a separate intermediate model to understand what good outcomes look like and then teaches the main model how to achieve those outcomes, DPO simplifies the process by directly adjusting the main model according to people's preferences. It uses a change of variables to define the "preference loss" directly as a function of the policy and uses this loss to fine-tune the model, helping it understand and prioritize human preferences without needing a separate step. Essentially, this approach directly shapes the model's decisions based on positive or negative human feedback.

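Recall that the pipeline of RLHF is as follows:

- We begin by gathering a human preference dataset $D$.

- We then fit a reward model $r^*$ to the data by maximum likelihood estimation using the Plackett–Luce model, with the responses $y_1, \dots, y_N$ listed from most to least preferred:

  $$r^* = \arg\max_r \mathbb{E}_{(x,y_1,\dots,y_N)\sim D}\left[\ln\prod_{k=1}^{N}\frac{e^{r(x,y_k)}}{\sum_{i=k}^{N}e^{r(x,y_i)}}\right]$$

- We finally train an optimal policy $\pi^*$ that maximizes the objective function:

  $$\pi^* = \arg\max_{\pi^{RL}}\mathbb{E}_{x\sim D,\,y\sim\pi^{RL}(\cdot|x)}\left[r^*(x,y) - \beta\log\frac{\pi^{RL}(y|x)}{\pi^{SFT}(y|x)}\right]$$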

However, instead of doing the intermediate step of the reward model, DPO directly optimizes for the final policy.

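First, solve directly for the optimal policy, which can be done by Lagrange multipliers, as is usual in statistical mechanics:

$$\pi^*(y|x) = \frac{\pi^{SFT}(y|x)\exp\left(r^*(x,y)/\beta\right)}{Z(x)}$$

where $Z(x)$ is the partition function. This is unfortunately not tractable, since it requires summing over all possible responses:

$$Z(x) = \sum_y \pi^{SFT}(y|x)\exp\left(r^*(x,y)/\beta\right) = \mathbb{E}_{y\sim\pi^{SFT}(\cdot|x)}\left[\exp\left(r^*(x,y)/\beta\right)\right]$$

Next, invert this relationship to express the reward implicitly in terms of the optimal policy:

$$r^*(x,y) = \beta\log\frac{\pi^*(y|x)}{\pi^{SFT}(y|x)} + \beta\log Z(x)$$

Finally, plugging this back into the maximum likelihood estimator, the intractable $\beta\log Z(x)$ terms cancel, because they depend only on the prompt and appear in both the numerator and the denominator of each Plackett–Luce factor. This gives[52]

$$\pi^* = \arg\max_{\pi}\mathbb{E}_{(x,y_1,\dots,y_N)\sim D}\left[\ln\prod_{k=1}^{N}\frac{e^{\beta\log\frac{\pi(y_k|x)}{\pi^{SFT}(y_k|x)}}}{\sum_{i=k}^{N}e^{\beta\log\frac{\pi(y_i|x)}{\pi^{SFT}(y_i|x)}}}\right]$$

Usually, DPO is used for modeling human preference in pairwise comparisons, so that $N = 2$. In that case, with $y_w$ the preferred and $y_l$ the rejected response, we have

$$\pi^* = \arg\max_{\pi}\mathbb{E}_{(x,y_w,y_l)\sim D}\left[\log\sigma\left(\beta\log\frac{\pi(y_w|x)}{\pi^{SFT}(y_w|x)} - \beta\log\frac{\pi(y_l|x)}{\pi^{SFT}(y_l|x)}\right)\right]$$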

DPO eliminates the need for a separate reward model or reinforcement learning loop, treating alignment as a supervised learning problem over preference data. This is simpler to implement and train than RLHF and has been shown to produce comparable and sometimes superior results.[52] Nevertheless, RLHF has also been shown to beat DPO on some datasets, for example, on benchmarks that attempt to measure truthfulness. Therefore, the choice of method may vary depending on the features of the human preference data and the nature of the task.[53]
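The pairwise DPO objective reduces to a per-example loss that only needs log-probabilities from the policy and the reference model. The sketch below is a minimal illustration, assuming sequence-level log-probabilities are already computed and using $\beta = 0.1$ as an example value; it returns the negative of the term inside the expectation, so minimizing it maximizes the objective.

```python
import math


def dpo_loss(logp_w, logp_l, ref_logp_w, ref_logp_l, beta=0.1):
    """Negative log-likelihood of one preference pair under the DPO objective.

    logp_*: log pi(y|x) under the policy being trained, for the preferred (w)
    and rejected (l) responses; ref_logp_*: the same under the SFT/reference policy.
    """
    margin = beta * ((logp_w - ref_logp_w) - (logp_l - ref_logp_l))
    # -log(sigmoid(margin)), written in a numerically stable form.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


print(dpo_loss(-5.0, -5.0, -5.0, -5.0))  # log(2): policy equals reference
print(dpo_loss(-4.0, -6.0, -5.0, -5.0))  # below log(2): preferred response up-weighted
print(dpo_loss(-6.0, -4.0, -5.0, -5.0))  # above log(2): rejected response up-weighted
```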

Identity preference optimization

Identity preference optimization (IPO)[54] is a modification to the original DPO objective that introduces a regularization term to reduce the chance of overfitting even when preference data is noisy.

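In practice, IPO minimizes the quadratic loss function

$$\mathcal{L}_{\text{IPO}}(\pi) = \mathbb{E}_{(x,y_w,y_l)\sim D}\left[\left(h_\pi(y_w,y_l,x) - \frac{1}{2\beta}\right)^2\right]$$

where $h_\pi(y_w,y_l,x) = \log\frac{\pi(y_w|x)\,\pi_{\text{ref}}(y_l|x)}{\pi(y_l|x)\,\pi_{\text{ref}}(y_w|x)}$ and $\beta$ is a regularization parameter.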

IPO can control the gap between the log-likelihood ratios of the policy model and the reference by always regularizing the solution towards the reference model. It allows learning directly from preferences without a reward modelling stage and without relying on the Bradley-Terry modelling assumption that assumes that pairwise preferences can be substituted with pointwise rewards.[54]
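A per-pair sketch of the IPO loss follows, assuming sequence-level log-probabilities are given; the names are hypothetical. Unlike the log-sigmoid in DPO, which keeps rewarding a larger gap between the preferred and rejected responses, the squared loss has a finite target of $1/(2\beta)$, which is how it limits overfitting to the preference data.

```python
def ipo_loss(logp_w, logp_l, ref_logp_w, ref_logp_l, beta=0.1):
    """Squared IPO loss for one preference pair.

    h is the difference of policy-vs-reference log-likelihood ratios for the
    preferred (w) and rejected (l) responses; IPO regresses h toward 1 / (2 beta).
    """
    h = (logp_w - ref_logp_w) - (logp_l - ref_logp_l)
    return (h - 1.0 / (2.0 * beta)) ** 2


print(ipo_loss(-5.0, -5.0, -5.0, -5.0, beta=0.5))  # 1.0: policy equals reference
print(ipo_loss(-4.0, -5.0, -5.0, -5.0, beta=0.5))  # 0.0: gap matches the target
```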

Kahneman-Tversky optimization

Kahneman-Tversky optimization (KTO)[55] is another direct alignment algorithm drawing from prospect theory to model uncertainty in human decisions. Unlike DPO, KTO requires only a binary feedback signal (desirable or undesirable) instead of explicit preference pairs.

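The value function is defined piecewise depending on whether $y$ is desirable (weighted by $\lambda_D$) or undesirable (weighted by $\lambda_U$):

$$v(x,y) = \begin{cases}\lambda_D\,\sigma\left(\beta\left(r_\theta(x,y) - z_0\right)\right) & \text{if } y \text{ is desirable given } x,\\ \lambda_U\,\sigma\left(\beta\left(z_0 - r_\theta(x,y)\right)\right) & \text{if } y \text{ is undesirable given } x,\end{cases}$$

where $r_\theta(x,y) = \log\frac{\pi_\theta(y|x)}{\pi_{\text{ref}}(y|x)}$ is the implied reward, and the model is trained to minimize $\mathbb{E}_{(x,y)\sim D}\left[\lambda_y - v(x,y)\right]$.

Here, $\beta$ controls how "risk-averse" the value function is (a larger $\beta$ means faster saturation of the logistic function $\sigma$), and $z_0$ is a baseline given by the Kullback–Leibler divergence between the policy and the reference model. Since many real-world feedback pipelines yield "like/dislike" data more easily than pairwise comparisons, KTO is designed to be data-efficient and to reflect "loss aversion" more directly by using a straightforward notion of "good vs. bad" at the example level.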
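A per-example sketch of the KTO loss follows, assuming sequence-level log-probabilities and treating the KL baseline $z_0$ as a given constant; in practice $z_0$ has to be estimated, and that step is omitted here by assumption. The weights $\lambda_D$ and $\lambda_U$ default to 1 for illustration, and the function name is hypothetical.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def kto_loss(logp, ref_logp, desirable, z0, beta=0.1, lambda_d=1.0, lambda_u=1.0):
    """KTO loss lambda_y - v(x, y) for a single example with a binary label.

    logp / ref_logp: log-probabilities of y given x under the policy and the
    reference model; z0 is the KL baseline, treated here as a given constant.
    """
    implied_reward = logp - ref_logp
    if desirable:
        value = lambda_d * sigmoid(beta * (implied_reward - z0))
        return lambda_d - value
    value = lambda_u * sigmoid(beta * (z0 - implied_reward))
    return lambda_u - value


print(kto_loss(-3.0, -3.0, desirable=True, z0=0.0))   # 0.5: reward equals the baseline
print(kto_loss(-1.0, -3.0, desirable=True, z0=0.0))   # below 0.5: desirable output up-weighted
print(kto_loss(-1.0, -3.0, desirable=False, z0=0.0))  # above 0.5: undesirable output up-weighted
```

Only a single label per example is needed, which is what lets KTO train on "like/dislike" data without constructing preference pairs.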

References

- ↑ Kongot, Aparna (2025). Human-Centered AI: An Illustrated Scientific Quest (Human–Computer Interaction Series). Springer. pp. 389. ISBN 978-3031613746. https://www.google.com/books/edition/Human_Centered_AI_An_Illustrated_Scienti/XmNSEQAAQBAJ?hl=en&gbpv=1&dq=%22reinforcement+learning+from+human+feedback%22&pg=PA389&printsec=frontcover.

- ↑ Lan, Xuguang (2025). Intelligent Robotics and Applications: 17th International Conference, ICIRA 2024, Xi'an, China, July 31 – August 2, 2024, Proceedings, Part VIII (Lecture Notes in Computer Science Book 15208). Springer. pp. 6. https://www.google.com/books/edition/Intelligent_Robotics_and_Applications/icVAEQAAQBAJ?hl=en&gbpv=1&dq=%22reinforcement+learning+from+human+feedback%22&pg=PA6&printsec=frontcover.

- ↑ Russell, Stuart J.; Norvig, Peter (2016). Artificial intelligence: a modern approach (Third, Global ed.). Boston Columbus Indianapolis New York San Francisco Upper Saddle River Amsterdam Cape Town Dubai London Madrid Milan Munich Paris Montreal Toronto Delhi Mexico City Sao Paulo Sydney Hong Kong Seoul Singapore Taipei Tokyo: Pearson. pp. 830–831. ISBN 978-0-13-604259-4.

- ↑ 4.0 4.1 Ziegler, Daniel M.; Stiennon, Nisan; Wu, Jeffrey; Brown, Tom B.; Radford, Alec; Amodei, Dario; Christiano, Paul; Irving, Geoffrey (2019). "Fine-Tuning Language Models from Human Preferences". arXiv:1909.08593 [cs.CL].

- ↑ 5.0 5.1 5.2 5.3 Lambert, Nathan; Castricato, Louis; von Werra, Leandro; Havrilla, Alex. "Illustrating Reinforcement Learning from Human Feedback (RLHF)". https://huggingface.co/blog/rlhf.

- ↑ Schulman, John; Wolski, Filip; Dhariwal, Prafulla; Radford, Alec; Klimov, Oleg (2017). "Proximal Policy Optimization Algorithms". arXiv:1707.06347 [cs.LG].

- ↑ Tuan, Yi-Lin; Zhang, Jinzhi; Li, Yujia; Lee, Hung-yi (2018). "Proximal Policy Optimization and its Dynamic Version for Sequence Generation". arXiv:1808.07982 [cs.CL].

- ↑ 8.0 8.1 8.2 8.3 8.4 Amodei, Dario; Christiano, Paul; Ray, Alex (13 June 2017). "Learning from human preferences". https://openai.com/research/learning-from-human-preferences.

- ↑ 9.0 9.1 Zheng, Rui; Dou, Shihan; Gao, Songyang; Hua, Yuan; Shen, Wei; Wang, Binghai; Liu, Yan; Jin, Senjie; Liu, Qin; Zhou, Yuhao; Xiong, Limao; Chen, Lu; Xi, Zhiheng; Xu, Nuo; Lai, Wenbin; Zhu, Minghao; Chang, Cheng; Yin, Zhangyue; Weng, Rongxiang; Cheng, Wensen; Huang, Haoran; Sun, Tianxiang; Yan, Hang; Gui, Tao; Zhang, Qi; Qiu, Xipeng; Huang, Xuanjing (2023). "Secrets of RLHF in Large Language Models Part I: PPO". arXiv:2307.04964 [cs.CL].

- ↑ Knox, W. Bradley; Stone, Peter; Breazeal, Cynthia (2013). "Training a Robot via Human Feedback: A Case Study" (in en). Social Robotics. Lecture Notes in Computer Science. 8239. Springer International Publishing. pp. 460–470. doi:10.1007/978-3-319-02675-6_46. ISBN 978-3-319-02674-9. https://link.springer.com/chapter/10.1007/978-3-319-02675-6_46. Retrieved 26 February 2024.

- ↑ Akrour, Riad; Schoenauer, Marc; Sebag, Michèle (2012). "APRIL: Active Preference Learning-Based Reinforcement Learning" (in en). Machine Learning and Knowledge Discovery in Databases. Lecture Notes in Computer Science. 7524. Springer. pp. 116–131. doi:10.1007/978-3-642-33486-3_8. ISBN 978-3-642-33485-6. https://link.springer.com/chapter/10.1007/978-3-642-33486-3_8. Retrieved 26 February 2024.

- ↑ Wilson, Aaron; Fern, Alan; Tadepalli, Prasad (2012). "A Bayesian Approach for Policy Learning from Trajectory Preference Queries". Advances in Neural Information Processing Systems (Curran Associates, Inc.) 25. https://papers.nips.cc/paper_files/paper/2012/hash/16c222aa19898e5058938167c8ab6c57-Abstract.html. Retrieved 26 February 2024.

- ↑ Schoenauer, Marc; Akrour, Riad; Sebag, Michele; Souplet, Jean-Christophe (18 June 2014). "Programming by Feedback" (in en). Proceedings of the 31st International Conference on Machine Learning (PMLR): 1503–1511. https://proceedings.mlr.press/v32/schoenauer14.html. Retrieved 26 February 2024.

- Warnell, Garrett; Waytowich, Nicholas; Lawhern, Vernon; Stone, Peter (25 April 2018). "Deep TAMER: Interactive Agent Shaping in High-Dimensional State Spaces". Proceedings of the AAAI Conference on Artificial Intelligence 32 (1). doi:10.1609/aaai.v32i1.11485.

- MacGlashan, James; Ho, Mark K.; Loftin, Robert; Peng, Bei; Wang, Guan; Roberts, David L.; Taylor, Matthew E.; Littman, Michael L. (6 August 2017). "Interactive learning from policy-dependent human feedback". Proceedings of the 34th International Conference on Machine Learning - Volume 70 (JMLR.org): 2285–2294. https://dl.acm.org/doi/10.5555/3305890.3305917.

- Stiennon, Nisan; Ouyang, Long; Wu, Jeffrey; Ziegler, Daniel; Lowe, Ryan; Voss, Chelsea; Radford, Alec; Amodei, Dario et al. (2020). "Learning to summarize with human feedback". Advances in Neural Information Processing Systems 33. https://proceedings.neurips.cc/paper/2020/hash/1f89885d556929e98d3ef9b86448f951-Abstract.html.

- Ouyang, Long; Wu, Jeffrey; Jiang, Xu; Almeida, Diogo; Wainwright, Carroll; Mishkin, Pamela; Zhang, Chong; Agarwal, Sandhini et al. (31 October 2022). "Training language models to follow instructions with human feedback". Thirty-Sixth Conference on Neural Information Processing Systems: NeurIPS 2022. https://openreview.net/forum?id=TG8KACxEON.

- Bai, Yuntao; Jones, Andy; Ndousse, Kamal; Askell, Amanda; Chen, Anna; DasSarma, Nova; Drain, Dawn; Fort, Stanislav; Ganguli, Deep; Henighan, Tom; Joseph, Nicholas; Kadavath, Saurav; Kernion, Jackson; Conerly, Tom; El-Showk, Sheer; Elhage, Nelson; Hatfield-Dodds, Zac; Hernandez, Danny; Hume, Tristan; Johnston, Scott; Kravec, Shauna; Lovitt, Liane; Nanda, Neel; Olsson, Catherine; Amodei, Dario; Brown, Tom; Clark, Jack; McCandlish, Sam; Olah, Chris; Mann, Ben; Kaplan, Jared (2022). "Training a Helpful and Harmless Assistant with Reinforcement Learning from Human Feedback". arXiv:2204.05862 [cs.CL].

- Edwards, Benj (1 December 2022). "OpenAI invites everyone to test ChatGPT, a new AI-powered chatbot—with amusing results". https://arstechnica.com/information-technology/2022/12/openai-invites-everyone-to-test-new-ai-powered-chatbot-with-amusing-results/.

- Gupta, Abhishek (5 February 2023). "Getting stakeholder engagement right in responsible AI". https://venturebeat.com/ai/getting-stakeholder-engagement-right-in-responsible-ai/.

- Fernandes, Patrick; Madaan, Aman; Liu, Emmy; Farinhas, António; Martins, Pedro Henrique; Bertsch, Amanda; de Souza, José G. C.; Zhou, Shuyan; Wu, Tongshuang; Neubig, Graham; Martins, André F. T. (2023). "Bridging the Gap: A Survey on Integrating (Human) Feedback for Natural Language Generation". arXiv:2305.00955 [cs.CL].

- Xie, Tengyang; Jiang, Nan; Wang, Huan; Xiong, Caiming; Bai, Yu (2021). "Policy Finetuning: Bridging Sample-Efficient Offline and Online Reinforcement Learning". Advances in Neural Information Processing Systems (Curran Associates, Inc.) 34: 27395–27407. https://proceedings.neurips.cc/paper/2021/hash/e61eaa38aed621dd776d0e67cfeee366-Abstract.html. Retrieved 10 March 2024.

- Pacchiano, Aldo; Saha, Aadirupa; Lee, Jonathan (3 March 2023). "Dueling RL: Reinforcement Learning with Trajectory Preferences". Proceedings of the 26th International Conference on Artificial Intelligence and Statistics (PMLR): 6263–6289. https://proceedings.mlr.press/v206/saha23a.html.

- Zhu, Banghua; Jordan, Michael; Jiao, Jiantao (3 July 2023). "Principled Reinforcement Learning with Human Feedback from Pairwise or K-wise Comparisons". Proceedings of the 40th International Conference on Machine Learning (PMLR): 43037–43067. https://proceedings.mlr.press/v202/zhu23f.html.

- Li, Zihao; Yang, Zhuoran; Wang, Mengdi (20 June 2023). "Reinforcement learning with Human Feedback: Learning Dynamic Choices via Pessimism". ILHF Workshop ICML 2023. https://openreview.net/forum?id=gxM2AUFMsK&referrer=%5Bthe%20profile%20of%20Zhuoran%20Yang%5D(%2Fprofile%3Fid%3D~Zhuoran_Yang1). Retrieved 10 March 2024.

- Ouyang, Long; Wu, Jeff; Jiang, Xu; Almeida, Diogo; Wainwright, Carroll L.; Mishkin, Pamela; Zhang, Chong; Agarwal, Sandhini; Slama, Katarina; Ray, Alex; Schulman, John; Hilton, Jacob; Kelton, Fraser; Miller, Luke; Simens, Maddie; Askell, Amanda; Welinder, Peter; Christiano, Paul; Leike, Jan; Lowe, Ryan (2022). "Training language models to follow instructions with human feedback". arXiv:2203.02155 [cs.CL].

- Wiggers, Kyle (24 February 2023). "Can AI really be protected from text-based attacks?". https://techcrunch.com/2023/02/24/can-language-models-really-be-protected-from-text-based-attacks/.

- Heikkilä, Melissa (21 February 2023). "How OpenAI is trying to make ChatGPT safer and less biased". https://www.technologyreview.com/2023/02/21/1068893/how-openai-is-trying-to-make-chatgpt-safer-and-less-biased/.

- Douglas Heaven, Will (30 November 2022). "ChatGPT is OpenAI's latest fix for GPT-3. It's slick but still spews nonsense". https://www.technologyreview.com/2022/11/30/1063878/openai-still-fixing-gpt3-ai-large-language-model/.

- Glaese, Amelia; McAleese, Nat; Trębacz, Maja; Aslanides, John; Firoiu, Vlad; Ewalds, Timo; Rauh, Maribeth; Weidinger, Laura; Chadwick, Martin; Thacker, Phoebe; Campbell-Gillingham, Lucy; Uesato, Jonathan; Huang, Po-Sen; Comanescu, Ramona; Yang, Fan; See, Abigail; Dathathri, Sumanth; Greig, Rory; Chen, Charlie; Fritz, Doug; Elias, Jaume Sanchez; Green, Richard; Mokrá, Soňa; Fernando, Nicholas; Wu, Boxi; Foley, Rachel; Young, Susannah; Gabriel, Iason; Isaac, William; Mellor, John; Hassabis, Demis; Kavukcuoglu, Koray; Hendricks, Lisa Anne; Irving, Geoffrey (2022). "Improving alignment of dialogue agents via targeted human judgements". arXiv:2209.14375 [cs.LG].

- Goldman, Sharon (23 September 2022). "Why DeepMind isn't deploying its new AI chatbot — and what it means for responsible AI". https://venturebeat.com/ai/why-deepmind-isnt-deploying-its-new-ai-chatbot.

- The Sparrow team (22 September 2022). "Building safer dialogue agents". https://www.deepmind.com/blog/building-safer-dialogue-agents.

- Pichai, Sundar; Hassabis, Demis (6 December 2023). "Introducing Gemini: our largest and most capable AI model". https://blog.google/technology/ai/google-gemini-ai/.

- Henshall, Will (18 July 2023). "What to Know About Claude 2, Anthropic's Rival to ChatGPT". TIME. https://time.com/6295523/claude-2-anthropic-chatgpt/. Retrieved 6 March 2024.

- Fan, Ying; Watkins, Olivia; Du, Yuqing; Liu, Hao; Ryu, Moonkyung; Boutilier, Craig; Abbeel, Pieter; Ghavamzadeh, Mohammad et al. (2 November 2023). "DPOK: Reinforcement Learning for Fine-tuning Text-to-Image Diffusion Models". NeurIPS 2023. https://openreview.net/forum?id=8OTPepXzeh&referrer=%5Bthe%20profile%20of%20Moonkyung%20Ryu%5D(%2Fprofile%3Fid%3D~Moonkyung_Ryu1). Retrieved 1 March 2024.

- Xu, Jiazheng; Liu, Xiao; Wu, Yuchen; Tong, Yuxuan; Li, Qinkai; Ding, Ming; Tang, Jie; Dong, Yuxiao (15 December 2023). "ImageReward: Learning and Evaluating Human Preferences for Text-to-Image Generation". Advances in Neural Information Processing Systems 36: 15903–15935. https://proceedings.neurips.cc/paper_files/paper/2023/hash/33646ef0ed554145eab65f6250fab0c9-Abstract-Conference.html. Retrieved 1 March 2024.

- Lee, Kimin; Liu, Hao; Ryu, Moonkyung; Watkins, Olivia; Du, Yuqing; Boutilier, Craig; Abbeel, Pieter; Ghavamzadeh, Mohammad; Gu, Shixiang Shane (2023). "Aligning Text-to-Image Models using Human Feedback". arXiv:2302.12192 [cs.LG].

- Leike, Jan; Martic, Miljan; Legg, Shane (12 June 2017). "Learning through human feedback". https://www.deepmind.com/blog/learning-through-human-feedback.

- Christiano, Paul F.; Leike, Jan; Brown, Tom; Martic, Miljan; Legg, Shane; Amodei, Dario (2017). "Deep Reinforcement Learning from Human Preferences". Advances in Neural Information Processing Systems (Curran Associates, Inc.) 30. https://papers.nips.cc/paper/2017/hash/d5e2c0adad503c91f91df240d0cd4e49-Abstract.html. Retrieved 4 March 2023.

- von Csefalvay, Chris (2026). "4. Reinforcement Learning: Better Each Time". Post-Training: A Practical Guide for AI Engineers and Developers. No Starch Press. pp. 114–116. ISBN 978-1-7185-0520-9.

- Iusztin, Paul; Labonne, Maxime (2024). LLM Engineer's Handbook: Master the art of engineering large language models from concept to production. Packt Publishing. p. 246. ISBN 978-1836200079. https://www.google.com/books/edition/LLM_Engineer_s_Handbook/jHEqEQAAQBAJ?hl=en&gbpv=1&dq=%22reinforcement+learning+from+human+feedback%22&pg=PA246&printsec=frontcover.

- von Csefalvay, Chris (2026). "5. Preference Optimization: Modern Alternatives to PPO". Post-Training: A Practical Guide for AI Engineers and Developers. No Starch Press. pp. 133–140. ISBN 978-1-7185-0520-9.

- Casper, Stephen; Davies, Xander; Shi, Claudia; Gilbert, Thomas Krendl; Scheurer, Jérémy; Rando, Javier; Freedman, Rachel; Korbak, Tomasz et al. (18 September 2023). "Open Problems and Fundamental Limitations of Reinforcement Learning from Human Feedback". Transactions on Machine Learning Research. https://openreview.net/forum?id=bx24KpJ4Eb.

- Christiano, Paul (25 January 2023). "Thoughts on the impact of RLHF research". https://www.alignmentforum.org/posts/vwu4kegAEZTBtpT6p/thoughts-on-the-impact-of-rlhf-research.

- Belenguer, Lorenzo (2022). "AI bias: exploring discriminatory algorithmic decision-making models and the application of possible machine-centric solutions adapted from the pharmaceutical industry". AI and Ethics 2 (4): 771–787. doi:10.1007/s43681-022-00138-8. PMID 35194591.

- Zhang, Chiyuan; Bengio, Samy; Hardt, Moritz; Recht, Benjamin; Vinyals, Oriol (4 November 2016). "Understanding deep learning requires rethinking generalization". International Conference on Learning Representations. https://openreview.net/forum?id=Sy8gdB9xx.

- Clark, Jack; Amodei, Dario (21 December 2016). "Faulty reward functions in the wild". OpenAI. https://openai.com/research/faulty-reward-functions.

- Lee, Harrison; Phatale, Samrat; Mansoor, Hassan; Lu, Kellie Ren; Mesnard, Thomas; Ferret, Johan; Bishop, Colton; Hall, Ethan et al. (13 October 2023). "RLAIF: Scaling Reinforcement Learning from Human Feedback with AI Feedback". ICLR. https://openreview.net/forum?id=AAxIs3D2ZZ.

- Edwards, Benj (9 May 2023). "AI gains 'values' with Anthropic's new Constitutional AI chatbot approach". https://arstechnica.com/information-technology/2023/05/ai-with-a-moral-compass-anthropic-outlines-constitutional-ai-in-its-claude-chatbot/.

- Rafailov, Rafael; Chittepu, Yaswanth; Park, Ryan; Sikchi, Harshit; Hejna, Joey; Knox, Bradley; Finn, Chelsea; Niekum, Scott (2024). "Scaling Laws for Reward Model Overoptimization in Direct Alignment Algorithms". arXiv:2406.02900 [cs.LG].

- Shi, Zhengyan; Land, Sander; Locatelli, Acyr; Geist, Matthieu; Bartolo, Max (2024). "Understanding Likelihood Over-optimisation in Direct Alignment Algorithms". arXiv:2410.11677 [cs.CL].

- Rafailov, Rafael; Sharma, Archit; Mitchell, Eric; Ermon, Stefano; Manning, Christopher D.; Finn, Chelsea (2023). "Direct Preference Optimization: Your Language Model is Secretly a Reward Model". arXiv:2305.18290 [cs.LG].

- Wang, Zhilin; Dong, Yi; Zeng, Jiaqi; Adams, Virginia; Sreedhar, Makesh Narsimhan; Egert, Daniel; Delalleau, Olivier; Scowcroft, Jane Polak; Kant, Neel; Swope, Aidan; Kuchaiev, Oleksii (2023). "HelpSteer: Multi-attribute Helpfulness Dataset for SteerLM". arXiv:2311.09528 [cs.CL].

- Gheshlaghi Azar, Mohammad; Rowland, Mark; Piot, Bilal; Guo, Daniel; Calandriello, Daniele; Valko, Michal; Munos, Rémi (2023). "A General Theoretical Paradigm to Understand Learning from Human Preferences". arXiv:2310.12036 [cs.AI].

- Ethayarajh, Kawin; Xu, Winnie; Muennighoff, Niklas; Jurafsky, Dan; Kiela, Douwe (2024). "KTO: Model Alignment as Prospect Theoretic Optimization". arXiv:2402.01306 [cs.LG].

Further reading

- "Deep reinforcement learning from human preferences". NeurIPS. 2017. https://arxiv.org/abs/1706.03741.

- "Training language models to follow instructions with human feedback". NeurIPS. 2022. https://arxiv.org/abs/2203.02155.

- "The N Implementation Details of RLHF with PPO". 2023-10-24. https://huggingface.co/blog/the_n_implementation_details_of_rlhf_with_ppo.

- "Proximal Policy Optimization — Spinning Up documentation". https://spinningup.openai.com/en/latest/algorithms/ppo.html.

- "The N+ Implementation Details of RLHF with PPO: A Case Study on TL;DR Summarization". COLM. 2024. https://arxiv.org/abs/2403.17031.
