Uniform integrability

In mathematics, uniform integrability is an important concept in real analysis, functional analysis and measure theory, and plays a vital role in the theory of martingales.

Measure-theoretic definition

Uniform integrability is an extension of the notion of a family of functions being dominated in [math]\displaystyle{ L_1 }[/math], which is central in dominated convergence. Several textbooks on real analysis and measure theory use the following definition:[1][2]

Definition A: Let [math]\displaystyle{ (X,\mathfrak{M}, \mu) }[/math] be a positive measure space. A set [math]\displaystyle{ \Phi\subset L^1(\mu) }[/math] is called uniformly integrable if [math]\displaystyle{ \sup_{f\in\Phi}\|f\|_{L_1(\mu)}\lt \infty }[/math], and to each [math]\displaystyle{ \varepsilon\gt 0 }[/math] there corresponds a [math]\displaystyle{ \delta\gt 0 }[/math] such that

- [math]\displaystyle{ \int_E |f| \, d\mu \lt \varepsilon }[/math]

whenever [math]\displaystyle{ f \in \Phi }[/math] and [math]\displaystyle{ \mu(E)\lt \delta. }[/math]

Definition A is rather restrictive for infinite measure spaces. A more general definition[3] of uniform integrability that works well in general measure spaces was introduced by G. A. Hunt.

Definition H: Let [math]\displaystyle{ (X,\mathfrak{M},\mu) }[/math] be a positive measure space. A set [math]\displaystyle{ \Phi\subset L^1(\mu) }[/math] is called uniformly integrable if and only if

- [math]\displaystyle{ \inf_{g\in L^1_+(\mu)}\sup_{f\in\Phi}\int_{\{|f|\gt g\}}|f|\, d\mu=0 }[/math]

where [math]\displaystyle{ L^1_+(\mu)=\{g\in L^1(\mu): g\geq0\} }[/math].

Since Hunt's definition is equivalent to Definition A when the underlying measure space is finite (see Theorem 2 below), Definition H is widely adopted in mathematics.

The following result[4] provides another notion equivalent to Hunt's. This equivalence is sometimes given as the definition of uniform integrability.

Theorem 1: If [math]\displaystyle{ (X,\mathfrak{M},\mu) }[/math] is a (positive) finite measure space, then a set [math]\displaystyle{ \Phi\subset L^1(\mu) }[/math] is uniformly integrable if and only if

- [math]\displaystyle{ \inf_{a\geq0}\sup_{f\in\Phi}\int_{\{|f|\gt a\}}|f|\, d\mu=0 }[/math]

Since [math]\displaystyle{ \mu(X)\lt \infty }[/math], uniform integrability is moreover equivalent to either of the following conditions:

1. [math]\displaystyle{ \inf_{a\gt 0}\sup_{f\in \Phi}\int(|f|-a)_+\,d\mu =0 }[/math].

2. [math]\displaystyle{ \inf_{a\gt 0}\sup_{f\in \Phi}\int_{\{|f|\gt a\}}|f|\,d\mu=0 }[/math]

When the underlying space [math]\displaystyle{ (X,\mathfrak{M},\mu) }[/math] is [math]\displaystyle{ \sigma }[/math]-finite, Hunt's definition is equivalent to the following:

Theorem 2: Let [math]\displaystyle{ (X,\mathfrak{M},\mu) }[/math] be a [math]\displaystyle{ \sigma }[/math]-finite measure space, and [math]\displaystyle{ h\in L^1(\mu) }[/math] be such that [math]\displaystyle{ h\gt 0 }[/math] almost everywhere. A set [math]\displaystyle{ \Phi\subset L^1(\mu) }[/math] is uniformly integrable if and only if [math]\displaystyle{ \sup_{f\in\Phi}\|f\|_{L_1(\mu)}\lt \infty }[/math], and for any [math]\displaystyle{ \varepsilon\gt 0 }[/math], there exists [math]\displaystyle{ \delta\gt 0 }[/math] such that

- [math]\displaystyle{ \sup_{f\in\Phi}\int_A|f|\, d\mu \lt \varepsilon }[/math]

whenever [math]\displaystyle{ \int_A h\,d\mu \lt \delta }[/math].

Theorems 1 and 2 together imply that Definitions A and H are equivalent for finite measures. Indeed, the statement in Definition A is obtained by taking [math]\displaystyle{ h\equiv1 }[/math] in Theorem 2.

Probability definition

In the theory of probability, Definition A or the statement of Theorem 1 is often presented as the definition of uniform integrability, written in terms of expectations of random variables;[5][6][7] that is,

1. A class [math]\displaystyle{ \mathcal{C} }[/math] of random variables is called uniformly integrable if:

- There exists a finite [math]\displaystyle{ M }[/math] such that, for every [math]\displaystyle{ X }[/math] in [math]\displaystyle{ \mathcal{C} }[/math], [math]\displaystyle{ \operatorname E(|X|)\leq M }[/math] and

- For every [math]\displaystyle{ \varepsilon \gt 0 }[/math] there exists [math]\displaystyle{ \delta \gt 0 }[/math] such that, for every measurable [math]\displaystyle{ A }[/math] such that [math]\displaystyle{ P(A)\leq \delta }[/math] and every [math]\displaystyle{ X }[/math] in [math]\displaystyle{ \mathcal{C} }[/math], [math]\displaystyle{ \operatorname E(|X|I_A)\leq\varepsilon }[/math].

or alternatively

2. A class [math]\displaystyle{ \mathcal{C} }[/math] of random variables is called uniformly integrable (UI) if for every [math]\displaystyle{ \varepsilon \gt 0 }[/math] there exists [math]\displaystyle{ K\in[0,\infty) }[/math] such that [math]\displaystyle{ \operatorname E(|X|I_{|X|\geq K})\le\varepsilon\ \text{ for all } X \in \mathcal{C} }[/math], where [math]\displaystyle{ I_{|X|\geq K} }[/math] is the indicator function [math]\displaystyle{ I_{|X|\geq K} = \begin{cases} 1 &\text{if } |X|\geq K, \\ 0 &\text{if } |X| \lt K. \end{cases} }[/math].
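The passage from the second formulation to the first can be made explicit with a short estimate. The following is a sketch of the standard argument, not a quotation from the cited texts; the choice of [math]\displaystyle{ \delta }[/math] is one convenient option among many. Given [math]\displaystyle{ \varepsilon\gt 0 }[/math], pick [math]\displaystyle{ K\gt 0 }[/math] with [math]\displaystyle{ \operatorname E(|X|I_{|X|\geq K})\le\varepsilon/2 }[/math] for all [math]\displaystyle{ X\in\mathcal{C} }[/math] (enlarging [math]\displaystyle{ K }[/math] only shrinks the tail), and set [math]\displaystyle{ \delta=\varepsilon/(2K) }[/math].

```latex
\begin{align*}
\operatorname E(|X|)
  &= \operatorname E\bigl(|X|\,I_{|X|<K}\bigr) + \operatorname E\bigl(|X|\,I_{|X|\geq K}\bigr)
   \le K + \tfrac{\varepsilon}{2}, \\
\operatorname E\bigl(|X|\,I_A\bigr)
  &= \operatorname E\bigl(|X|\,I_A\,I_{|X|<K}\bigr) + \operatorname E\bigl(|X|\,I_A\,I_{|X|\geq K}\bigr) \\
  &\le K\,P(A) + \tfrac{\varepsilon}{2}
   \le K\delta + \tfrac{\varepsilon}{2} = \varepsilon
   \qquad \text{whenever } P(A)\le\delta .
\end{align*}
```

The first line gives the uniform bound [math]\displaystyle{ M=K+\varepsilon/2 }[/math] required by the first clause, and the second gives the uniform smallness on sets of small probability.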

Tightness and uniform integrability

One consequence of uniform integrability of a class [math]\displaystyle{ \mathcal{C} }[/math] of random variables is that the family of laws or distributions [math]\displaystyle{ \{P\circ|X|^{-1}(\cdot):X\in\mathcal{C}\} }[/math] is tight. That is, for each [math]\displaystyle{ \delta \gt 0 }[/math], there exists [math]\displaystyle{ a \gt 0 }[/math] such that [math]\displaystyle{ P(|X|\gt a) \leq \delta }[/math] for all [math]\displaystyle{ X\in\mathcal{C} }[/math].[8]

This, however, does not mean that the family of measures [math]\displaystyle{ \mathcal{V}_{\mathcal{C}}:=\Big\{\mu_X:A\mapsto\int_A|X|\,dP,\,X\in\mathcal{C}\Big\} }[/math] is tight. (In any case, tightness would require a topology on [math]\displaystyle{ \Omega }[/math] in order to be defined.)
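The tightness of the laws of [math]\displaystyle{ |X| }[/math] stated above can be checked in one line from Markov's inequality. This is a sketch under the assumption that [math]\displaystyle{ M:=\sup_{X\in\mathcal{C}}\operatorname E(|X|) }[/math] is finite, which holds for a uniformly integrable class; the symbol [math]\displaystyle{ M }[/math] is introduced here only for illustration.

```latex
\[
P(|X| > a) \;\le\; \frac{\operatorname E(|X|)}{a} \;\le\; \frac{M}{a} \;\le\; \delta
\qquad \text{for all } X\in\mathcal{C}, \text{ as soon as } a>0 \text{ and } a \ge M/\delta .
\]
```

Note that only boundedness in [math]\displaystyle{ L^1 }[/math] is used, so this tightness is strictly weaker than uniform integrability.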

Uniform absolute continuity

There is another notion of uniformity, slightly different from uniform integrability, which also has many applications in probability and measure theory, and which does not require random variables to have a finite integral:[9]

Definition: Suppose [math]\displaystyle{ (\Omega,\mathcal{F},P) }[/math] is a probability space. A class [math]\displaystyle{ \mathcal{C} }[/math] of random variables is uniformly absolutely continuous with respect to [math]\displaystyle{ P }[/math] if for any [math]\displaystyle{ \varepsilon\gt 0 }[/math], there is [math]\displaystyle{ \delta\gt 0 }[/math] such that [math]\displaystyle{ E[|X|I_A]\lt \varepsilon }[/math] for every [math]\displaystyle{ X\in\mathcal{C} }[/math] whenever [math]\displaystyle{ P(A)\lt \delta }[/math].

It is equivalent to uniform integrability if the measure is finite and has no atoms.
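A small example shows why the absence of atoms matters. It is an illustration constructed for this purpose, not taken from the cited sources: on a one-point probability space every set of small probability is empty, so uniform absolute continuity holds trivially while boundedness in [math]\displaystyle{ L^1 }[/math] fails.

```latex
\[
\Omega=\{\omega\},\quad P(\{\omega\})=1,\quad X_n(\omega)=n:
\qquad
P(A)<\delta\le 1 \;\Longrightarrow\; A=\varnothing \;\Longrightarrow\; E[|X_n|I_A]=0<\varepsilon ,
\]
\[
\text{but}\qquad \sup_n E[|X_n|] \;=\; \sup_n n \;=\; \infty ,
\]
```

so the class [math]\displaystyle{ \{X_n\} }[/math] is uniformly absolutely continuous but not uniformly integrable.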

The term "uniform absolute continuity" is not standard,[citation needed] but is used by some authors.[10][11]

Related corollaries

The following results apply to the probabilistic definition.[12]

- Definition 2 can be restated as a limit: [math]\displaystyle{ \lim_{K \to \infty} \sup_{X \in \mathcal{C}} \operatorname E(|X|\,I_{|X|\geq K})=0. }[/math]

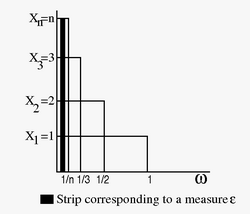

- A non-UI sequence. Let [math]\displaystyle{ \Omega = [0,1] \subset \mathbb{R} }[/math], and define [math]\displaystyle{ X_n(\omega) = \begin{cases} n, & \omega\in (0,1/n), \\ 0 , & \text{otherwise.} \end{cases} }[/math] Clearly [math]\displaystyle{ X_n\in L^1 }[/math], and indeed [math]\displaystyle{ \operatorname E(|X_n|)=1 }[/math] for all n. However, [math]\displaystyle{ \operatorname E(|X_n| I_{\{|X_n|\ge K \}})= 1\ \text{ for all } n \ge K, }[/math] and comparing with Definition 2, it is seen that the sequence is not uniformly integrable.

- Applying Definition 1 to the above example, the first clause is satisfied because the [math]\displaystyle{ L^1 }[/math] norm of every [math]\displaystyle{ X_n }[/math] is 1, so the sequence is bounded in [math]\displaystyle{ L^1 }[/math]. The second clause fails: given any positive [math]\displaystyle{ \delta }[/math], there is an interval [math]\displaystyle{ (0, 1/n) }[/math] with measure less than [math]\displaystyle{ \delta }[/math] and [math]\displaystyle{ E[|X_m|: (0, 1/n)] =1 }[/math] for all [math]\displaystyle{ m \ge n }[/math].

- If [math]\displaystyle{ X }[/math] is a UI random variable, by splitting [math]\displaystyle{ \operatorname E(|X|) = \operatorname E(|X| I_{\{|X| \geq K \}})+\operatorname E(|X| I_{\{|X| \lt K \}}) }[/math] and bounding each of the two, it can be seen that a uniformly integrable random variable is always bounded in [math]\displaystyle{ L^1 }[/math].

- If any sequence of random variables [math]\displaystyle{ X_n }[/math] is dominated by an integrable, non-negative [math]\displaystyle{ Y }[/math]: that is, for all ω and n, [math]\displaystyle{ |X_n(\omega)| \le Y(\omega),\ Y(\omega)\ge 0,\ \operatorname E(Y) \lt \infty, }[/math] then the class [math]\displaystyle{ \mathcal{C} }[/math] of random variables [math]\displaystyle{ \{X_n\} }[/math] is uniformly integrable.

- A class of random variables bounded in [math]\displaystyle{ L^p }[/math] ([math]\displaystyle{ p \gt 1 }[/math]) is uniformly integrable.
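The contrast between the non-UI sequence and an [math]\displaystyle{ L^p }[/math]-bounded class can be checked numerically. The sketch below is illustrative only: the second family [math]\displaystyle{ Y_n=\sqrt{n} }[/math] on [math]\displaystyle{ (0,1/n) }[/math] is a hypothetical example chosen because [math]\displaystyle{ \operatorname E(Y_n^2)=1 }[/math] for all n, the function names are invented here, and the supremum over n is truncated at `N_MAX`, so the output only suggests the behaviour of the true supremum.

```python
from fractions import Fraction
import math


def tail_x(n: int, K: int) -> Fraction:
    """E(|X_n| 1{|X_n| >= K}) for X_n = n on (0, 1/n), 0 elsewhere."""
    # X_n takes the value n with probability 1/n, so the tail is n * (1/n) when n >= K.
    return Fraction(n) * Fraction(1, n) if n >= K else Fraction(0)


def tail_y(n: int, K: int) -> float:
    """E(|Y_n| 1{|Y_n| >= K}) for the hypothetical Y_n = sqrt(n) on (0, 1/n)."""
    # sqrt(n) >= K is tested as n >= K*K to stay in integer arithmetic;
    # the tail equals sqrt(n) * (1/n) = 1/sqrt(n).
    return 1.0 / math.sqrt(n) if n >= K * K else 0.0


def sup_tail(tail, K: int, n_max: int):
    """Truncated supremum over n = 1..n_max of the tail expectation at level K."""
    return max(tail(n, K) for n in range(1, n_max + 1))


N_MAX = 10_000  # truncation level (an assumption of this sketch)
for K in (1, 10, 100):
    print(K, sup_tail(tail_x, K, N_MAX), sup_tail(tail_y, K, N_MAX))
```

For the sequence [math]\displaystyle{ X_n }[/math] the truncated supremum stays at 1 for every level K up to `N_MAX`, whereas for [math]\displaystyle{ Y_n }[/math] it is bounded by 1/K and so decreases as K grows, in line with Definition 2.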

Relevant theorems

The following theorems are stated in the probabilistic framework, but they hold regardless of the finiteness of the measure, provided the boundedness condition is added on the chosen subset of [math]\displaystyle{ L^1(\mu) }[/math].

- Dunford–Pettis theorem:[13][14] A class of random variables [math]\displaystyle{ \{X_n\} \subset L^1(\mu) }[/math] is uniformly integrable if and only if it is relatively compact for the weak topology [math]\displaystyle{ \sigma(L^1,L^\infty) }[/math].

- de la Vallée-Poussin theorem:[15][16] The family [math]\displaystyle{ \{X_{\alpha}\}_{\alpha\in\Alpha} \subset L^1(\mu) }[/math] is uniformly integrable if and only if there exists a non-negative increasing convex function [math]\displaystyle{ G(t) }[/math] such that [math]\displaystyle{ \lim_{t \to \infty} \frac{G(t)} t = \infty \text{ and } \sup_\alpha \operatorname E(G(|X_{\alpha}|)) \lt \infty. }[/math]
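The "if" direction of the de la Vallée-Poussin criterion follows from a direct estimate. The following is a sketch of the usual argument; the constant [math]\displaystyle{ M:=\sup_\alpha \operatorname E(G(|X_{\alpha}|)) }[/math] and the threshold [math]\displaystyle{ K }[/math] are notation introduced here. Given [math]\displaystyle{ \varepsilon\gt 0 }[/math], choose [math]\displaystyle{ K\gt 0 }[/math] such that [math]\displaystyle{ G(t)/t\ge (M+1)/\varepsilon }[/math] for all [math]\displaystyle{ t\ge K }[/math].

```latex
\[
|X_\alpha| \;\le\; \frac{\varepsilon}{M+1}\,G(|X_\alpha|) \quad \text{on } \{|X_\alpha|\ge K\},
\]
\[
\operatorname E\bigl(|X_\alpha|\,I_{|X_\alpha|\ge K}\bigr)
\;\le\; \frac{\varepsilon}{M+1}\,\operatorname E\bigl(G(|X_\alpha|)\bigr)
\;\le\; \frac{\varepsilon M}{M+1}
\;\le\; \varepsilon
\qquad \text{for all } \alpha .
\]
```

Taking [math]\displaystyle{ G(t)=t^p }[/math] with [math]\displaystyle{ p\gt 1 }[/math] recovers the statement that a class bounded in [math]\displaystyle{ L^p }[/math] is uniformly integrable.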

Relation to convergence of random variables

A sequence [math]\displaystyle{ \{X_n\} }[/math] converges to [math]\displaystyle{ X }[/math] in the [math]\displaystyle{ L_1 }[/math] norm if and only if it converges in measure to [math]\displaystyle{ X }[/math] and it is uniformly integrable. In probability terms, a sequence of random variables converging in probability also converges in the mean if and only if it is uniformly integrable.[17] This is a generalization of Lebesgue's dominated convergence theorem; see the Vitali convergence theorem.
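The role of uniform integrability in this equivalence can be seen on the non-UI sequence [math]\displaystyle{ X_n=n }[/math] on [math]\displaystyle{ (0,1/n) }[/math] from the section on related corollaries: it converges to 0 in probability but not in the mean. The sketch below computes both quantities exactly with rational arithmetic; the function names are hypothetical and chosen only for this illustration.

```python
from fractions import Fraction


def prob_exceeds(n: int, eps: Fraction) -> Fraction:
    """P(|X_n| > eps) for X_n = n on (0, 1/n) and 0 elsewhere, on [0, 1] with Lebesgue measure."""
    # |X_n| exceeds eps only on (0, 1/n), and only if the value n itself exceeds eps.
    return Fraction(1, n) if n > eps else Fraction(0)


def l1_distance_to_zero(n: int) -> Fraction:
    """E|X_n - 0| = n * P((0, 1/n))."""
    return Fraction(n) * Fraction(1, n)


for n in (1, 10, 100, 1000):
    # The first column shrinks like 1/n, the second stays constant.
    print(n, prob_exceeds(n, Fraction(1, 2)), l1_distance_to_zero(n))
```

The probabilities tend to 0 while the [math]\displaystyle{ L_1 }[/math] distance to the limit does not, which is consistent with the failure of uniform integrability for this sequence.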

Citations

- ↑ Rudin, Walter (1987). Real and Complex Analysis (3 ed.). Singapore: McGraw–Hill Book Co. p. 133. ISBN 0-07-054234-1.

- ↑ Royden, H.L.; Fitzpatrick, P.M. (2010). Real Analysis (4 ed.). Boston: Prentice Hall. p. 93. ISBN 978-0-13-143747-0.

- ↑ Hunt, G. A. (1966). Martingales et Processus de Markov. Paris: Dunod. p. 254.

- ↑ Klenke, A. (2008). Probability Theory: A Comprehensive Course. Berlin: Springer Verlag. pp. 134–137. ISBN 978-1-84800-047-6.

- ↑ Williams, David (1997). Probability with Martingales (Repr. ed.). Cambridge: Cambridge Univ. Press. pp. 126–132. ISBN 978-0-521-40605-5.

- ↑ Gut, Allan (2005). Probability: A Graduate Course. Springer. pp. 214–218. ISBN 0-387-22833-0.

- ↑ Bass, Richard F. (2011). Stochastic Processes. Cambridge: Cambridge University Press. pp. 356–357. ISBN 978-1-107-00800-7.

- ↑ Gut 2005, p. 236.

- ↑ Bass 2011, p. 356.

- ↑ Benedetto, J. J. (1976). Real Variable and Integration. Stuttgart: B. G. Teubner. p. 89. ISBN 3-519-02209-5.

- ↑ Burrill, C. W. (1972). Measure, Integration, and Probability. McGraw-Hill. p. 180. ISBN 0-07-009223-0.

- ↑ Gut 2005, pp. 215–216.

- ↑ Dunford, Nelson (1938). "Uniformity in linear spaces". Transactions of the American Mathematical Society 44 (2): 305–356. doi:10.1090/S0002-9947-1938-1501971-X. ISSN 0002-9947. https://www.ams.org/.

- ↑ Dunford, Nelson (1939). "A mean ergodic theorem". Duke Mathematical Journal 5 (3): 635–646. doi:10.1215/S0012-7094-39-00552-1. ISSN 0012-7094.

- ↑ Meyer, P.A. (1966). Probability and Potentials, Blaisdell Publishing Co, N. Y. (p.19, Theorem T22).

- ↑ Poussin, C. De La Vallee (1915). "Sur L'Integrale de Lebesgue". Transactions of the American Mathematical Society 16 (4): 435–501. doi:10.2307/1988879.

- ↑ Bogachev, Vladimir I. (2007). "The spaces Lp and spaces of measures". Measure Theory Volume I. Berlin Heidelberg: Springer-Verlag. p. 268. doi:10.1007/978-3-540-34514-5_4. ISBN 978-3-540-34513-8.

References

- Shiryaev, A.N. (1995). Probability (2 ed.). New York: Springer-Verlag. pp. 187–188. ISBN 978-0-387-94549-1.

- Diestel, J. and Uhl, J. (1977). Vector measures, Mathematical Surveys 15, American Mathematical Society, Providence, RI ISBN 978-0-8218-1515-1
