Rasch model

The Rasch model, named after Georg Rasch, is a psychometric model for analyzing categorical data, such as answers to questions on a reading assessment or questionnaire responses, as a function of the trade-off between the respondent's abilities, attitudes, or personality traits, and the item difficulty.[1][2] For example, it may be used to estimate a student's reading ability or the extremity of a person's attitude to capital punishment from responses on a questionnaire. In addition to psychometrics and educational research, the Rasch model and its extensions are used in other areas, including the health professions,[3] agriculture,[4] and market research.[5][6]

The mathematical theory underlying Rasch models is a special case of item response theory. However, there are important differences in the interpretation of the model parameters and its philosophical implications[7] that separate proponents of the Rasch model from the item response modeling tradition. A central aspect of this divide relates to the role of specific objectivity,[8] a defining property of the Rasch model according to Georg Rasch, as a requirement for successful measurement.

Overview

The Rasch model for measurement

In the Rasch model, the probability of a specified response (e.g. right/wrong answer) is modeled as a function of person and item parameters. Specifically, in the original Rasch model, the probability of a correct response is modeled as a logistic function of the difference between the person and item parameter. The mathematical form of the model is provided later in this article. In most contexts, the parameters of the model characterize the proficiency of the respondents and the difficulty of the items as locations on a continuous latent variable. For example, in educational tests, item parameters represent the difficulty of items while person parameters represent the ability or attainment level of people who are assessed. The higher a person's ability relative to the difficulty of an item, the higher the probability of a correct response on that item. When a person's location on the latent trait is equal to the difficulty of the item, there is by definition a 0.5 probability of a correct response in the Rasch model.
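The relationship described above can be sketched in a few lines of Python. This is only an illustration: the function name `rasch_probability` and the example values are assumptions introduced here, with person and item locations expressed on the same logit scale.

```python
import math

def rasch_probability(ability: float, difficulty: float) -> float:
    """Probability of a correct response: logistic function of (ability - difficulty)."""
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))

# When ability equals difficulty, the probability is 0.5 by definition.
print(rasch_probability(1.5, 1.5))  # 0.5

# Higher ability relative to difficulty gives a higher probability of success.
print(rasch_probability(2.0, 0.0) > rasch_probability(0.0, 0.0))  # True
```

Only the difference between the two locations enters the function, which is why shifting both by the same constant leaves the probability unchanged.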

A Rasch model is a model in one sense in that it represents the structure which data should exhibit in order to obtain measurements from the data; i.e. it provides a criterion for successful measurement. Beyond data, Rasch's equations model relationships we expect to obtain in the real world. For instance, education is intended to prepare children for the entire range of challenges they will face in life, and not just those that appear in textbooks or on tests. By requiring measures to remain the same (invariant) across different tests measuring the same thing, Rasch models make it possible to test the hypothesis that the particular challenges posed in a curriculum and on a test coherently represent the infinite population of all possible challenges in that domain. A Rasch model is therefore a model in the sense of an ideal or standard that provides a heuristic fiction serving as a useful organizing principle even when it is never actually observed in practice.

The perspective or paradigm underpinning the Rasch model is distinct from the perspective underpinning statistical modelling. Models are most often used with the intention of describing a set of data. Parameters are modified and accepted or rejected based on how well they fit the data. In contrast, when the Rasch model is employed, the objective is to obtain data which fit the model.[9][10][11] The rationale for this perspective is that the Rasch model embodies requirements which must be met in order to obtain measurement, in the sense that measurement is generally understood in the physical sciences.

A useful analogy for understanding this rationale is to consider objects measured on a weighing scale. Suppose the weight of an object A is measured as being substantially greater than the weight of an object B on one occasion, then immediately afterward the weight of object B is measured as being substantially greater than the weight of object A. A property we require of measurements is that the resulting comparison between objects should be the same, or invariant, irrespective of other factors. This key requirement is embodied within the formal structure of the Rasch model. Consequently, the Rasch model is not altered to suit data. Instead, the method of assessment should be changed so that this requirement is met, in the same way that a weighing scale should be rectified if it gives different comparisons between objects upon separate measurements of the objects.

Data analysed using the model are usually responses to conventional items on tests, such as educational tests with right/wrong answers. However, the model is a general one, and can be applied wherever discrete data are obtained with the intention of measuring a quantitative attribute or trait.

Scaling

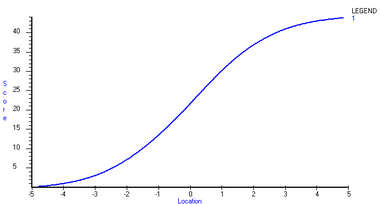

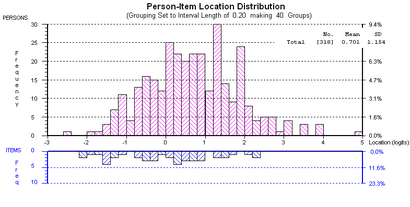

When all test-takers have an opportunity to attempt all items on a single test, each total score on the test maps to a unique estimate of ability and the greater the total, the greater the ability estimate. Total scores do not have a linear relationship with ability estimates. Rather, the relationship is non-linear as shown in Figure 1. The total score is shown on the vertical axis, while the corresponding person location estimate is shown on the horizontal axis. For the particular test on which the test characteristic curve (TCC) shown in Figure 1 is based, the relationship is approximately linear throughout the range of total scores from about 13 to 31. The shape of the TCC is generally somewhat sigmoid as in this example. However, the precise relationship between total scores and person location estimates depends on the distribution of items on the test. The TCC is steeper in ranges on the continuum in which there are more items, such as in the range on either side of 0 in Figures 1 and 2.
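A test characteristic curve of this kind can be sketched as the sum of the item success probabilities at each person location. The item locations below are hypothetical values chosen for illustration, not those of the test shown in the figures.

```python
import math

def rasch_probability(ability, difficulty):
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))

def expected_total_score(ability, difficulties):
    """Test characteristic curve: expected total score at a given person location."""
    return sum(rasch_probability(ability, d) for d in difficulties)

# Hypothetical item locations, clustered around 0 logits.
difficulties = [-2.0, -1.0, -0.5, 0.0, 0.0, 0.5, 1.0, 2.0]

for ability in (-4.0, -2.0, 0.0, 2.0, 4.0):
    print(ability, round(expected_total_score(ability, difficulties), 2))
```

With these assumed locations the curve rises fastest near 0, where the items are concentrated, and flattens toward the extremes, which gives the sigmoid shape described above.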

In applying the Rasch model, item locations are often scaled first, based on methods such as those described below. This part of the process of scaling is often referred to as item calibration. In educational tests, the smaller the proportion of correct responses, the higher the difficulty of an item and hence the higher the item's scale location. Once item locations are scaled, the person locations are measured on the scale. As a result, person and item locations are estimated on a single scale as shown in Figure 2.
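The link between proportion correct and item location can be illustrated with a crude first approximation: the log odds of an incorrect response, centred so that the mean item location is zero. This is an assumption made for illustration only; it is not one of the formal estimation methods used for calibration, and the function name is hypothetical.

```python
import math

def initial_item_locations(proportions_correct):
    """Centred log odds of an incorrect response, one value per item."""
    raw = [math.log((1.0 - p) / p) for p in proportions_correct]
    mean = sum(raw) / len(raw)
    return [value - mean for value in raw]

# Hypothetical proportions of correct responses for three items.
print(initial_item_locations([0.8, 0.5, 0.2]))
```

The item answered correctly least often receives the highest location, matching the ordering described in the paragraph above.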

Interpreting scale locations

For dichotomous data such as right/wrong answers, by definition, the location of an item on a scale corresponds with the person location at which there is a 0.5 probability of a correct response to the question. In general, the probability of a person responding correctly to a question with difficulty lower than that person's location is greater than 0.5, while the probability of responding correctly to a question with difficulty greater than the person's location is less than 0.5. The Item Characteristic Curve (ICC) or Item Response Function (IRF) shows the probability of a correct response as a function of the ability of persons. A single ICC is shown and explained in more detail in relation to Figure 4 in this article (see also the item response function). The leftmost ICCs in Figure 3 are the easiest items, the rightmost ICCs in the same figure are the most difficult items.

When responses of a person are sorted according to item difficulty, from lowest to highest, the most likely pattern is a Guttman pattern or vector; i.e. {1,1,...,1,0,0,0,...,0}. However, while this pattern is the most probable given the structure of the Rasch model, the model requires only probabilistic Guttman response patterns; that is, patterns which tend toward the Guttman pattern. It is unusual for responses to conform strictly to the pattern because there are many possible patterns. It is unnecessary for responses to conform strictly to the pattern in order for data to fit the Rasch model.
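The following sketch enumerates every response pattern for a small hypothetical test and checks which one is most probable. The item difficulties and the person location are made-up values, and brute-force enumeration is used only because the test is tiny.

```python
import itertools
import math

def rasch_probability(ability, difficulty):
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))

def pattern_probability(pattern, ability, difficulties):
    """Probability of a full response pattern, assuming independent responses."""
    prob = 1.0
    for x, d in zip(pattern, difficulties):
        p = rasch_probability(ability, d)
        prob *= p if x == 1 else 1.0 - p
    return prob

def most_likely_pattern(ability, difficulties):
    patterns = itertools.product((0, 1), repeat=len(difficulties))
    return max(patterns, key=lambda pat: pattern_probability(pat, ability, difficulties))

difficulties = [-2.0, -1.0, 0.0, 1.0, 2.0]  # sorted from easiest to hardest
best = most_likely_pattern(0.5, difficulties)
print(best)  # (1, 1, 1, 0, 0)
print(pattern_probability(best, 0.5, difficulties))
```

In this example the Guttman pattern is the single most probable pattern, yet its probability is well below one half, so most observed response vectors would be expected to depart from it.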

Each ability estimate has an associated standard error of measurement, which quantifies the degree of uncertainty associated with the ability estimate. Item estimates also have standard errors. Generally, the standard errors of item estimates are considerably smaller than the standard errors of person estimates because there are usually more response data for an item than for a person. That is, the number of people attempting a given item is usually greater than the number of items attempted by a given person. Standard errors of person estimates are smaller where the slope of the ICC is steeper, which is generally through the middle range of scores on a test. Thus, there is greater precision in this range since the steeper the slope, the greater the distinction between any two points on the line.
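As a rough sketch, the standard error of a maximum likelihood person estimate can be approximated by the inverse square root of the test information, which in the dichotomous Rasch model is the sum of p(1 - p) over items. The item locations are hypothetical, and this asymptotic approximation is an assumption of the sketch rather than a description of any particular software.

```python
import math

def rasch_probability(ability, difficulty):
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))

def person_standard_error(ability, difficulties):
    """Approximate standard error: 1 / sqrt(sum of p * (1 - p) over items)."""
    information = 0.0
    for d in difficulties:
        p = rasch_probability(ability, d)
        information += p * (1.0 - p)  # also the slope of this item's ICC at `ability`
    return 1.0 / math.sqrt(information)

difficulties = [-1.0, -0.5, 0.0, 0.5, 1.0]  # hypothetical item locations
for ability in (-3.0, 0.0, 3.0):
    print(ability, round(person_standard_error(ability, difficulties), 3))
```

Because p(1 - p) is largest where a person location is close to an item location, the standard error is smallest in the middle of this test and grows toward both extremes.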

Statistical and graphical tests are used to evaluate the correspondence of data with the model. Certain tests are global, while others focus on specific items or people. Certain tests of fit identify poorly functioning items, so that the reliability of a test can be increased by omitting or correcting them. In Rasch measurement, the person separation index is used in place of traditional reliability indices, to which it is analogous. The separation index is a summary of the genuine separation among persons as a ratio to the separation including measurement error. As mentioned earlier, the level of measurement error is not uniform across the range of a test, but is generally larger for more extreme scores (low and high).
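One common way to express this ratio, given here as an assumption because the article states no formula, is to subtract the mean squared standard error from the variance of the person estimates and divide by that variance. The function name and the input values are hypothetical, and implementations differ on details such as the choice of variance estimator.

```python
from statistics import pvariance

def person_separation_index(estimates, standard_errors):
    """(observed variance - mean error variance) / observed variance."""
    observed_variance = pvariance(estimates)
    error_variance = sum(se ** 2 for se in standard_errors) / len(standard_errors)
    return (observed_variance - error_variance) / observed_variance

# Hypothetical person estimates (logits) and their standard errors.
estimates = [-2.0, -1.0, 0.0, 1.0, 2.0]
standard_errors = [0.5, 0.5, 0.5, 0.5, 0.5]
print(person_separation_index(estimates, standard_errors))  # 0.875
```

Values near 1 indicate that little of the spread among person estimates is attributable to measurement error.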

Features of the Rasch model

The class of models is named after Georg Rasch, a Danish mathematician and statistician who advanced the epistemological case for the models based on their congruence with a core requirement of measurement in physics; namely the requirement of invariant comparison.[1] This is the defining feature of the class of models, as is elaborated upon in the following section. The Rasch model for dichotomous data has a close conceptual relationship to the law of comparative judgment (LCJ), a model formulated and used extensively by L. L. Thurstone,[12][13] and therefore also to the Thurstone scale.[14]

Prior to introducing the measurement model he is best known for, Rasch had applied the Poisson distribution to reading data as a measurement model, hypothesizing that in the relevant empirical context, the number of errors made by a given individual was governed by the ratio of the text difficulty to the person's reading ability. Rasch referred to this model as the multiplicative Poisson model. Rasch's model for dichotomous data – i.e. where responses are classifiable into two categories – is his most widely known and used model, and is the main focus here. This model has the form of a simple logistic function.

The brief outline above highlights certain distinctive and interrelated features of Rasch's perspective on social measurement, which are as follows:

- He was concerned principally with the measurement of individuals, rather than with distributions among populations.

- He was concerned with establishing a basis for meeting a priori requirements for measurement deduced from physics and, consequently, did not invoke any assumptions about the distribution of levels of a trait in a population.

- Rasch's approach explicitly recognizes that it is a scientific hypothesis that a given trait is both quantitative and measurable, as operationalized in a particular experimental context.

Thus, congruent with the perspective articulated by Thomas Kuhn in his 1961 paper The function of measurement in modern physical science, measurement was regarded both as being founded in theory, and as being instrumental to detecting quantitative anomalies incongruent with hypotheses related to a broader theoretical framework.[15] This perspective is in contrast to that generally prevailing in the social sciences, in which data such as test scores are directly treated as measurements without requiring a theoretical foundation for measurement. Although this contrast exists, Rasch's perspective is actually complementary to the use of statistical analysis or modelling that requires interval-level measurements, because the purpose of applying a Rasch model is to obtain such measurements. Applications of Rasch models are described in a wide variety of sources.[16]

Invariant comparison and sufficiency

The Rasch model for dichotomous data is often regarded as an item response theory (IRT) model with one item parameter. However, rather than being a particular IRT model, proponents of the model[17]: 265 regard it as a model that possesses a property which distinguishes it from other IRT models. Specifically, the defining property of Rasch models is their formal or mathematical embodiment of the principle of invariant comparison. Rasch summarised the principle of invariant comparison as follows:

- The comparison between two stimuli should be independent of which particular individuals were instrumental for the comparison; and it should also be independent of which other stimuli within the considered class were or might also have been compared.

- Symmetrically, a comparison between two individuals should be independent of which particular stimuli within the class considered were instrumental for the comparison; and it should also be independent of which other individuals were also compared, on the same or some other occasion.[18]

Rasch models embody this principle because their formal structure permits algebraic separation of the person and item parameters, in the sense that the person parameter can be eliminated during the process of statistical estimation of item parameters. This result is achieved through the use of conditional maximum likelihood estimation, in which the response space is partitioned according to person total scores. The consequence is that the raw score for an item or person is the sufficient statistic for the item or person parameter. That is to say, the person total score contains all information available within the specified context about the individual, and the item total score contains all information with respect to the item, with regard to the relevant latent trait. The Rasch model requires a specific structure in the response data, namely a probabilistic Guttman structure.
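The separation of parameters can be checked numerically. The sketch below computes the probability of a response pattern conditional on its total score by brute-force enumeration, for several person locations; the item difficulties and the pattern are hypothetical values chosen for illustration.

```python
import itertools
import math

def rasch_probability(ability, difficulty):
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))

def pattern_probability(pattern, ability, difficulties):
    prob = 1.0
    for x, d in zip(pattern, difficulties):
        p = rasch_probability(ability, d)
        prob *= p if x == 1 else 1.0 - p
    return prob

def conditional_pattern_probability(pattern, ability, difficulties):
    """P(pattern | total score), found by enumerating all patterns with that score."""
    score = sum(pattern)
    same_score = [
        pat for pat in itertools.product((0, 1), repeat=len(difficulties))
        if sum(pat) == score
    ]
    total = sum(pattern_probability(pat, ability, difficulties) for pat in same_score)
    return pattern_probability(pattern, ability, difficulties) / total

difficulties = [-1.0, 0.0, 1.5]
pattern = (1, 0, 1)
for ability in (-2.0, 0.0, 3.0):
    print(ability, conditional_pattern_probability(pattern, ability, difficulties))
```

The printed conditional probabilities agree up to floating-point rounding whatever the person location, which is the sense in which the total score is sufficient for the person parameter and conditioning on it removes that parameter from the estimation of item parameters.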

In somewhat more familiar terms, Rasch models provide a basis and justification for obtaining person locations on a continuum from total scores on assessments. Although it is not uncommon to treat total scores directly as measurements, they are actually counts of discrete observations rather than measurements. Each observation represents the observable outcome of a comparison between a person and item. Such outcomes are directly analogous to the observation of the tipping of a beam balance in one direction or another. This observation would indicate that one or other object has a greater mass, but counts of such observations cannot be treated directly as measurements.

Rasch pointed out that the principle of invariant comparison is characteristic of measurement in physics using, by way of example, a two-way experimental frame of reference in which each instrument exerts a mechanical force upon solid bodies to produce acceleration. Rasch[1]: 112–3 stated of this context: "Generally: If for any two objects we find a certain ratio of their accelerations produced by one instrument, then the same ratio will be found for any other of the instruments". It is readily shown that Newton's second law entails that such ratios are inversely proportional to the ratios of the masses of the bodies.
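The final step can be written out briefly. As a sketch, suppose instrument $j$ applies the same force $F_j$ to two bodies of masses $m_1$ and $m_2$, producing accelerations $a_{1j}$ and $a_{2j}$ (these symbols are introduced here for illustration). Newton's second law then gives

$$
a_{1j} = \frac{F_j}{m_1}, \qquad a_{2j} = \frac{F_j}{m_2}
\quad\Longrightarrow\quad
\frac{a_{1j}}{a_{2j}} = \frac{m_2}{m_1}.
$$

The force $F_j$ cancels, so the ratio of accelerations is the same for every instrument, and it is the inverse of the ratio of the masses.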

The mathematical form of the Rasch model for dichotomous data

Let $X_{ni} = x \in \{0, 1\}$ be a dichotomous random variable where, for example, $x = 1$ denotes a correct response and $x = 0$ an incorrect response to a given assessment item. In the Rasch model for dichotomous data, the probability of the outcome $X_{ni} = 1$ is given by:

$$\Pr\{X_{ni} = 1\} = \frac{e^{\beta_n - \delta_i}}{1 + e^{\beta_n - \delta_i}},$$

where $\beta_n$ is the ability of person $n$ and $\delta_i$ is the difficulty of item $i$. Thus, in the case of a dichotomous attainment item, $\Pr\{X_{ni} = 1\}$ is the probability of success upon interaction between the relevant person and assessment item. It is readily shown that the log odds, or logit, of correct response by a person to an item, based on the model, is equal to $\beta_n - \delta_i$. Given two examinees with different ability parameters $\beta_1$ and $\beta_2$ and an arbitrary item with difficulty $\delta_i$, compute the difference in logits for these two examinees by $(\beta_1 - \delta_i) - (\beta_2 - \delta_i)$. This difference becomes $\beta_1 - \beta_2$. Conversely, it can be shown that the log odds of a correct response by the same person to one item, conditional on a correct response to one of two items, is equal to the difference between the item locations. For example,

$$\operatorname{log-odds}\{X_{n1} = 1 \mid r_n = 1\} = \delta_2 - \delta_1,$$

where $r_n$ is the total score of person $n$ over the two items, which implies a correct response to one or other of the items.[1][19][20] Hence, the conditional log odds does not involve the person parameter $\beta_n$, which can therefore be eliminated by conditioning on the total score $r_n = 1$. That is, by partitioning the responses according to raw scores and calculating the log odds of a correct response, an estimate of $\delta_2 - \delta_1$ is obtained without involvement of $\beta_n$. More generally, a number of item parameters can be estimated iteratively through application of a process such as Conditional Maximum Likelihood estimation (see Rasch model estimation). While more involved, the same fundamental principle applies in such estimations.
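The conditional result for two items can be derived in a few lines. As a sketch, write $\beta_n$ for the location of person $n$, $\delta_1$ and $\delta_2$ for the locations of the two items, and $r_n$ for the person's total score over them, and assume the two responses are independent given $\beta_n$. The two ways of obtaining $r_n = 1$ have probabilities

$$
\Pr\{X_{n1} = 1, X_{n2} = 0\} = \frac{e^{\beta_n - \delta_1}}{\left(1 + e^{\beta_n - \delta_1}\right)\left(1 + e^{\beta_n - \delta_2}\right)},
\qquad
\Pr\{X_{n1} = 0, X_{n2} = 1\} = \frac{e^{\beta_n - \delta_2}}{\left(1 + e^{\beta_n - \delta_1}\right)\left(1 + e^{\beta_n - \delta_2}\right)}.
$$

Their ratio is the conditional odds of success on item 1 given $r_n = 1$:

$$
\frac{\Pr\{X_{n1} = 1 \mid r_n = 1\}}{\Pr\{X_{n1} = 0 \mid r_n = 1\}}
= \frac{e^{\beta_n - \delta_1}}{e^{\beta_n - \delta_2}}
= e^{\delta_2 - \delta_1},
$$

so the conditional log odds equals $\delta_2 - \delta_1$. The common denominator and the factor $e^{\beta_n}$ both cancel, which is why the person parameter does not appear in the result.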

The ICC of the Rasch model for dichotomous data is shown in Figure 4. The grey line maps the probability of the discrete outcome (that is, correctly answering the question) for persons with different locations on the latent continuum (that is, their level of abilities). The location of an item is, by definition, that location at which the probability of a correct response is equal to 0.5. In Figure 4, the black circles represent the actual or observed proportions of persons within Class Intervals for which the outcome was observed. For example, in the case of an assessment item used in the context of educational psychology, these could represent the proportions of persons who answered the item correctly. Persons are ordered by the estimates of their locations on the latent continuum and classified into Class Intervals on this basis in order to graphically inspect the accordance of observations with the model. There is a close conformity of the data with the model. In addition to graphical inspection of data, a range of statistical tests of fit are used to evaluate whether departures of observations from the model can be attributed to random effects alone, as required, or whether there are systematic departures from the model.
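The class-interval comparison can be sketched as follows. Persons are sorted by estimated location, split into roughly equal groups, and each group's observed proportion correct is set beside the mean model probability for its members. The function name, the equal-size grouping rule, and the data are assumptions made for illustration; the sketch also assumes there are at least as many persons as intervals.

```python
import math

def rasch_probability(ability, difficulty):
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))

def class_interval_summary(locations, responses, difficulty, n_intervals):
    """Return (mean location, observed proportion, expected proportion) per interval."""
    ordered = sorted(zip(locations, responses))
    size = len(ordered) // n_intervals
    summary = []
    for k in range(n_intervals):
        start = k * size
        # The last interval absorbs any persons left over after equal division.
        stop = (k + 1) * size if k < n_intervals - 1 else len(ordered)
        group = ordered[start:stop]
        mean_location = sum(loc for loc, _ in group) / len(group)
        observed = sum(x for _, x in group) / len(group)
        expected = sum(rasch_probability(loc, difficulty) for loc, _ in group) / len(group)
        summary.append((mean_location, observed, expected))
    return summary

# Hypothetical person estimates and their responses (1 = correct) to one item.
locations = [-2.0, -1.0, 0.0, 1.0, 2.0, 3.0]
responses = [0, 0, 1, 0, 1, 1]
for row in class_interval_summary(locations, responses, difficulty=0.5, n_intervals=3):
    print(row)
```

Plotting the observed proportions against the mean locations, over the model curve, gives a display of the kind described for Figure 4.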

Polytomous extensions of the Rasch model

There are multiple polytomous extensions to the Rasch model, which generalize the dichotomous model so that it can be applied in contexts in which successive integer scores represent categories of increasing level or magnitude of a latent trait, such as increasing ability, motor function, endorsement of a statement, and so forth. These polytomous extensions are, for example, applicable to the use of Likert scales, grading in educational assessment, and scoring of performances by judges.
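As an illustration of how such an extension works, the sketch below uses one widely used form, the partial credit parameterisation, in which an item with m ordered thresholds has m + 1 score categories. The article does not single out a particular extension, so this choice, the function name, and the threshold values are assumptions.

```python
import math

def category_probabilities(ability, thresholds):
    """Probabilities of scores 0..m for an item with m ordered thresholds."""
    # Exponent for score x is the sum of (ability - threshold_k) for k = 1..x;
    # the empty sum for score 0 is 0.
    exponents = [0.0]
    for tau in thresholds:
        exponents.append(exponents[-1] + (ability - tau))
    weights = [math.exp(e) for e in exponents]
    total = sum(weights)
    return [w / total for w in weights]

# Hypothetical four-category item (scores 0 to 3) with three thresholds.
print(category_probabilities(0.0, [-1.0, 0.0, 1.0]))

# With a single threshold the model reduces to the dichotomous case.
print(category_probabilities(0.0, [0.0]))  # [0.5, 0.5]
```

As the person location increases, probability mass shifts toward the higher score categories, which is the sense in which successive integer scores represent increasing levels of the trait.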

Other considerations

A criticism of the Rasch model is that it is overly restrictive or prescriptive because an assumption of the model is that all items have equal discrimination, whereas in practice, item discriminations vary, and thus no data set will ever show perfect data-model fit. A frequent misunderstanding is that the Rasch model does not permit each item to have a different discrimination, but equal discrimination is an assumption of invariant measurement, so differing item discriminations are not forbidden, but rather indicate that measurement quality does not equal a theoretical ideal. Just as in physical measurement, real world datasets will never perfectly match theoretical models, so the relevant question is whether a particular data set provides sufficient quality of measurement for the purpose at hand, not whether it perfectly matches an unattainable standard of perfection.

A criticism specific to the use of the Rasch model with response data from multiple choice items is that there is no provision in the model for guessing because the left asymptote always approaches a zero probability in the Rasch model. This implies that a person of low ability will always get an item wrong. However, low-ability individuals completing a multiple-choice exam have a substantially higher probability of choosing the correct answer by chance alone (for a k-option item, the likelihood is around 1/k).

The three-parameter logistic model relaxes both these assumptions and the two-parameter logistic model (2PL) allows varying slopes.[21] However, the specification of uniform discrimination and zero left asymptote are necessary properties of the model in order to sustain sufficiency of the simple, unweighted raw score. In practice, the non-zero lower asymptote found in multiple-choice datasets is less of a threat to measurement than commonly assumed and typically does not result in substantive errors in measurement when well-developed test items are used sensibly.[22]
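The difference between the models can be made concrete with a short sketch. The three-parameter function below adds a discrimination (slope) and a lower asymptote to the logistic curve; setting the slope to 1 and the asymptote to 0 recovers the Rasch form. The parameter names and the example item are assumptions chosen for illustration.

```python
import math

def rasch_probability(ability, difficulty):
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))

def three_pl_probability(ability, difficulty, discrimination=1.0, guessing=0.0):
    """Logistic curve with an item-specific slope and a non-zero lower asymptote."""
    logistic = 1.0 / (1.0 + math.exp(-discrimination * (ability - difficulty)))
    return guessing + (1.0 - guessing) * logistic

# A very low-ability person on a hypothetical four-option item (guessing = 1/4).
print(rasch_probability(-6.0, 0.0))
print(three_pl_probability(-6.0, 0.0, guessing=0.25))
```

For this person the Rasch probability is close to zero, while the three-parameter probability stays just above the assumed guessing level of 0.25.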

Verhelst & Glas (1995) derive Conditional Maximum Likelihood (CML) equations for a model they refer to as the One Parameter Logistic Model (OPLM). In algebraic form it appears to be identical with the 2PL model, but OPLM contains preset discrimination indexes rather than 2PL's estimated discrimination parameters. As noted by these authors, though, the problem one faces in estimation with estimated discrimination parameters is that the discriminations are unknown, meaning that the weighted raw score "is not a mere statistic, and hence it is impossible to use CML as an estimation method".[23]: 217 That is, sufficiency of the weighted "score" in the 2PL cannot be used according to the way in which a sufficient statistic is defined. If the weights are imputed instead of being estimated, as in OPLM, conditional estimation is possible and some of the properties of the Rasch model are retained.[24][23] In OPLM, the values of the discrimination index are restricted to between 1 and 15. A limitation of this approach is that in practice, values of discrimination indexes must be preset as a starting point. This means some type of estimation of discrimination is involved when the purpose is to avoid doing so.

The Rasch model for dichotomous data inherently entails a single discrimination parameter which, as noted by Rasch,[1]: 121 constitutes an arbitrary choice of the unit in terms of which magnitudes of the latent trait are expressed or estimated. However, the Rasch model requires that the discrimination is uniform across interactions between persons and items within a specified frame of reference (i.e. the assessment context given conditions for assessment).

Application of the model provides diagnostic information regarding how well the criterion is met. Application of the model can also provide information about how well items or questions on assessments work to measure the ability or trait. For instance, knowing the proportion of persons that engage in a given behavior, the Rasch model can be used to derive the relations between difficulty of behaviors, attitudes and behaviors.[25] Prominent advocates of Rasch models include Benjamin Drake Wright, David Andrich and Erling Andersen.

References

- ↑ Rasch, G. (1980). Probabilistic models for some intelligence and attainment tests. Foreword and afterword by B.D. Wright (Expanded ed.). Chicago: The University of Chicago Press. ISBN 978-0226705538. https://archive.org/details/probabilisticmod0000rasc.

- ↑ Istiqomah, Istiqomah; Hasanati, Nida (2022-10-27). "Development of Student Academic Performance Determinants Using Rasch Model Analysis". Psympathic: Jurnal Ilmiah Psikologi 9 (1): 17–30. doi:10.15575/psy.v9i1.7571. ISSN 2502-2903. https://journal.uinsgd.ac.id/index.php/psy/article/view/7571.

- ↑ Bezruczko, N. (2005). Rasch measurement in health sciences. Maple Grove, MN: JAM Press. ISBN 978-0975535134. https://archive.org/details/raschmeasurement0000bezr.

- ↑ Moral, F. J.; Rebollo, F. J. (2017). "Characterization of soil fertility using the Rasch model". Journal of Soil Science and Plant Nutrition (Springer Science and Business Media LLC) (ahead): 0. doi:10.4067/s0718-95162017005000035. ISSN 0718-9516.

- ↑ Bechtel, Gordon G. (1985). "Generalizing the Rasch Model for Consumer Rating Scales". Marketing Science (Institute for Operations Research and the Management Sciences (INFORMS)) 4 (1): 62–73. doi:10.1287/mksc.4.1.62. ISSN 0732-2399. https://archive.org/details/sim_marketing-science_winter-1985_4_1/page/62.

- ↑ Wright, B. D. (1977). "Solving measurement problems with the Rasch model". Journal of Educational Measurement 14 (2): 97–116. doi:10.1111/j.1745-3984.1977.tb00031.x.

- ↑ Linacre, J.M. (2005). "Rasch dichotomous model vs. One-parameter Logistic Model". Rasch Measurement Transactions 19 (3): 1032. https://www.rasch.org/rmt/rmt193.pdf.

- ↑ Rasch, G. (1977). "On Specific Objectivity: An attempt at formalizing the request for generality and validity of scientific statements". The Danish Yearbook of Philosophy 14: 58–93. doi:10.1163/24689300-01401006. https://www.rasch.org/memo18.htm.

- ↑ Andrich, D. (January 2004). "Controversy and the Rasch model: a characteristic of incompatible paradigms?". Medical Care (Lippincott Williams & Wilkins) 42 (1 Suppl): 107–116. doi:10.1097/01.mlr.0000103528.48582.7c. PMID 14707751.

- ↑ Wright, B. D. (1984). "Despair and hope for educational measurement". Contemporary Education Review 3 (1): 281–288. http://www.rasch.org/memo41.htm.

- ↑ Wright, B. D. (1999). "Fundamental measurement for psychology". in Embretson, S. E.. The new rules of measurement: What every educator and psychologist should know. Hillsdale: Lawrence Erlbaum Associates. pp. 65–104. ISBN 9781410603593. https://www.rasch.org/memo64.htm.

- ↑ Thurstone, L. L. (1927). "A law of comparative judgment". Psychological Review 34 (4): 273. doi:10.1037/h0070288. https://brocku.ca/MeadProject/Thurstone/Thurstone_1927f.html.

- ↑ Luce, R. Duncan (1994). "Thurstone and sensory scaling: Then and now.". Psychological Review (American Psychological Association (APA)) 101 (2): 271–277. doi:10.1037/0033-295x.101.2.271. ISSN 0033-295X. https://archive.org/details/sim_psychological-review_1994-04_101_2/page/270.

- ↑ Andrich, D. (1978b). "Relationships between the Thurstone and Rasch approaches to item scaling". Applied Psychological Measurement 2 (3): 449–460. doi:10.1177/014662167800200319. https://conservancy.umn.edu/bitstreams/2c28cfc3-b987-4d25-8b33-863cdfa3a7e2/download.

- ↑ Kuhn, Thomas S. (1961). "The Function of Measurement in Modern Physical Science". Isis (University of Chicago Press) 52 (2): 161–193. doi:10.1086/349468. ISSN 0021-1753.

- ↑ Sources include

- Alagumalai, S.; Curtis, D.D.; Hungi, N. (2005). Applied Rasch Measurement: A book of exemplars. Springer-Kluwer. ISBN 1-4020-3076-2.

- (Bezruczko 2005)

- (Bond & Fox 2007)

- Burro, Roberto (5 October 2016). "To be objective in Experimental Phenomenology: a Psychophysics application". SpringerPlus (Springer Science and Business Media LLC) 5 (1): 1720. doi:10.1186/s40064-016-3418-4. ISSN 2193-1801. PMID 27777856.

- Fisher, W. P. Jr.; Wright, B. D., eds (1994). "Applications of probabilistic conjoint measurement". International Journal of Educational Research 21 (6): 557–664. doi:10.1016/0883-0355(94)90012-4.

- Masters, G. N.; Keeves, J. P., eds (1999). Advances in measurement in educational research and assessment. New York: Pergamon. ISBN 978-0080433486. https://archive.org/details/advancesinmeasur0000unse.

- (Journal of Applied Measurement)

- ↑ Bond, T.G.; Fox, C.M. (2007). Applying the Rasch Model: Fundamental measurement in the human sciences (2nd ed.). Lawrence Erlbaum. doi:10.4324/9781410614575. ISBN 9781410614575.

- ↑ Rasch, G. (1961). "On general laws and the meaning of measurement in psychology". Fourth Berkeley Symposium on Mathematical Statistics and Probability. Berkeley, California: University of California Press. pp. 321–334. http://projecteuclid.org/DPubS?verb=Display&version=1.0&service=UI&handle=euclid.bsmsp/1200512895&page=record.

- ↑ Andersen, E.B. (1977). "Sufficient statistics and latent trait models". Psychometrika 42: 69–81. doi:10.1007/BF02293746. https://archive.org/details/sim_psychometrika_1977-03_42_1/page/68.

- ↑ Andrich, D. (2010). "Sufficiency and conditional estimation of person parameters in the polytomous Rasch model". Psychometrika 75 (2): 292–308. doi:10.1007/s11336-010-9154-8. https://www.researchgate.net/publication/226239068.

- ↑ Birnbaum, A. (1968). "Some latent trait models and their use in inferring an examinee’s ability". in Lord, F.M.; Novick, M.R.. Statistical theories of mental test scores. Reading, MA: Addison–Wesley. ISBN 978-1-59311-934-8.

- ↑ Holster, Trevor A.; Lake, J. W. (2016). "Guessing and the Rasch model". Language Assessment Quarterly 13 (2): 124–141. doi:10.1080/15434303.2016.1160096. https://www.researchgate.net/publication/301794933.

- ↑ Verhelst, N.D.; Glas, C.A.W. (1995). "The one parameter logistic model". in Fischer, G.H.; Molenaar, I.W.. Rasch Models: Foundations, recent developments, and applications. New York: Springer Verlag. pp. 215–238. doi:10.1007/978-1-4612-4230-7_12. ISBN 978-1-4612-4230-7.

- ↑ Verhelst, N.D.; Glas, C.A.W.; Verstralen, H.H.F.M. (1995). One parameter logistic model (OPLM). Arnhem: CITO.

- ↑ Byrka, Katarzyna; Jȩdrzejewski, Arkadiusz; Sznajd-Weron, Katarzyna; Weron, Rafał (2016-09-01). "Difficulty is critical: The importance of social factors in modeling diffusion of green products and practices". Renewable and Sustainable Energy Reviews 62: 723–735. doi:10.1016/j.rser.2016.04.063. Bibcode: 2016RSERv..62..723B. https://zenodo.org/record/889778.

Further reading

- Andrich, D. (1978a). "A rating formulation for ordered response categories". Psychometrika 43 (4): 357–74. doi:10.1007/BF02293814.

- Andrich, D. (1988). Rasch models for measurement. Beverly Hills: Sage Publications. ISBN 978-1-5063-1937-7. https://archive.org/details/raschmodelsforme0000andr.

- Baker, F. (2001). The Basics of Item Response Theory. ERIC Clearinghouse on Assessment and Evaluation, University of Maryland, College Park, MD. ISBN 1-886047-03-0. https://eric.ed.gov/?id=ED458219. Available free with software included from "IRT". http://edres.org/irt/.

- Fischer, G.H.; Molenaar, I.W., eds (1995). Rasch models: foundations, recent developments and applications. New York: Springer-Verlag. ISBN 0-387-94499-0. https://archive.org/details/raschmodelsfound0000unse.

- Goldstein, H; Blinkhorn, S (1977). "Monitoring Educational Standards: an inappropriate model". Bull.Br.Psychol.Soc. 30: 309–311. https://www.bristol.ac.uk/media-library/sites/cmm/migrated/documents/educational-standards-an-inappropriate-model.pdf.

- Goldstein, H; Blinkhorn, S (1982). "The Rasch Model Still Does Not Fit". BERJ 82: 167–170. https://www.bristol.ac.uk/media-library/sites/cmm/migrated/documents/rasch-still-does-not-fit.pdf.

- Hambleton, RK; Jones, RW (1993). "Comparison of classical test theory and item response". Educational Measurement: Issues and Practice 12 (3): 38–47. doi:10.1111/j.1745-3992.1993.tb00543.x. http://www.ncme.org/pubs/items/24.pdf. available in the "ITEMS Series". http://www.ncme.org/pubs/items.cfm.

- Harris, D. (1989). "Comparison of 1-, 2-, and 3-parameter IRT models". Educational Measurement: Issues and Practice 8: 35–41. doi:10.1111/j.1745-3992.1989.tb00313.x. http://www.ncme.org/pubs/items/13.pdf. available in the "ITEMS Series". http://www.ncme.org/pubs/items.cfm.

- Linacre, J. M. (1999). "Understanding Rasch measurement: Estimation methods for Rasch measures". Journal of Outcome Measurement 3 (4): 382–405. PMID 10572388. https://www.researchgate.net/publication/12729624.

- von Davier, M.; Carstensen, C. H. (2007). Multivariate and Mixture Distribution Rasch Models: Extensions and Applications. New York: Springer. doi:10.1007/978-0-387-49839-3. ISBN 978-0-387-49839-3. https://www.springer.com/gp/book/9780387329161.

- von Davier, M. (2016). "Rasch Model". in van der Linden, Wim J.. Handbook of Item Response Theory. Boca Raton: CRC Press. doi:10.1201/9781315374512. ISBN 9781315374512. https://api.pageplace.de/preview/DT0400.9781482282474_A34089181/preview-9781482282474_A34089181.pdf.

- Wright, B.D.; Stone, M.H. (1979). Best Test Design. Chicago, IL: MESA Press. https://research.acer.edu.au/measurement/1/.

- Wu, M.; Adams, R. (2007). Applying the Rasch model to psycho-social measurement: A practical approach. Melbourne, Australia: Educational Measurement Solutions. https://www.edmeasurement.com.au/pdf/RaschMeasurement_Complete.pdf. Available free from Educational Measurement Solutions

External links

- Institute for Objective Measurement Online Rasch Resources

- Pearson Psychometrics Laboratory, with information about Rasch models

- "Journal of Applied Measurement". http://www.jampress.org.

- Journal of Outcome Measurement (all issues available for free downloading)

- Berkeley Evaluation & Assessment Research Center (ConstructMap software)

- Directory of Rasch Software – freeware and paid

- "IRT Modeling Lab". http://work.psych.uiuc.edu/irt/.

- National Council on Measurement in Education (NCME)

- Rasch Measurement Transactions

- The Standards for Educational and Psychological Testing

- The Trouble with Rasch
