Causal inference

Causal inference is the process of determining the independent, actual effect of a particular phenomenon that is a component of a larger system. The main difference between causal inference and inference of association is that causal inference analyzes the response of an effect variable when a cause of the effect variable is changed.[1][2]

The study of why things occur is called etiology, and can be described using the language of scientific causal notation. Causal inference is said to provide the evidence of causality theorized by causal reasoning. Causal inference is widely studied across all sciences. Several innovations in the development and implementation of methodology designed to determine causality have proliferated in recent decades. Causal inference remains especially difficult where experimentation is difficult or impossible, which is common throughout most sciences.

The approaches to causal inference are broadly applicable across all types of scientific disciplines, and many methods of causal inference that were designed for certain disciplines have found use in other disciplines. This article outlines the basic process behind causal inference and details some of the more conventional tests used across different disciplines; this should not be taken to suggest that these methods apply only to those disciplines, merely that they are the most commonly used there.

Causal inference is difficult to perform, and there is significant debate amongst scientists about the proper way to determine causality. Despite other innovations, concerns remain that scientists misattribute correlative results as causal, use incorrect methodologies, and deliberately manipulate analytical results in order to obtain statistically significant estimates. Particular concern is raised about the use of regression models, especially linear regression models.

Definition

Inferring the cause of something has been described as:

- "...reason[ing] to the conclusion that something is, or is likely to be, the cause of something else".[3]

- "Identification of the cause or causes of a phenomenon, by establishing covariation of cause and effect, a time-order relationship with the cause preceding the effect, and the elimination of plausible alternative causes."[4]

Methodology

General

Causal inference is conducted via the study of systems where the measure of one variable is suspected to affect the measure of another. Causal inference is conducted with regard to the scientific method. The first step of causal inference is to formulate a falsifiable null hypothesis, which is subsequently tested with statistical methods. Frequentist statistical inference uses statistical methods to determine the probability of observing data at least as extreme as the observed data if the null hypothesis were true; Bayesian inference is used to determine the effect of an independent variable.[5] Statistical inference is generally used to distinguish variation in the original data that is random from variation produced by a well-specified causal mechanism. Notably, correlation does not imply causation, so the study of causality is as concerned with the study of potential causal mechanisms as it is with variation amongst the data.[6] A frequently sought-after standard of causal inference is an experiment in which treatment is randomly assigned and all other confounding factors are held constant. Most efforts in causal inference attempt to replicate experimental conditions.
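As an illustration of testing a null hypothesis of no effect in a randomized experiment, the following Python sketch runs a permutation test on simulated data. The data, the constant additive treatment effect of 3.0, and the helper name `permutation_test` are assumptions made for the example. Because treatment is randomly assigned, under the null hypothesis the group labels are exchangeable, so reshuffling them shows how large a difference in means chance alone tends to produce.

```python
import random
from statistics import fmean


def permutation_test(treated, control, n_permutations=10_000, seed=0):
    """Two-sided permutation test of H0: the treatment has no effect.

    Returns the observed difference in means and an estimated p-value.
    """
    rng = random.Random(seed)
    observed = fmean(treated) - fmean(control)
    pooled = list(treated) + list(control)
    k = len(treated)
    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)  # re-draw group labels as if treatment did nothing
        diff = fmean(pooled[:k]) - fmean(pooled[k:])
        if abs(diff) >= abs(observed):
            extreme += 1
    # The add-one correction keeps the estimated p-value strictly positive.
    return observed, (extreme + 1) / (n_permutations + 1)


# Simulated experiment (hypothetical numbers).
rng = random.Random(1)
true_effect = 3.0
baseline = [rng.gauss(10, 2) for _ in range(100)]  # outcome each unit would have untreated
assignment = [1] * 50 + [0] * 50
rng.shuffle(assignment)                             # randomized treatment assignment
outcomes = [b + true_effect * a for b, a in zip(baseline, assignment)]
treated = [y for y, a in zip(outcomes, assignment) if a]
control = [y for y, a in zip(outcomes, assignment) if not a]

effect, p = permutation_test(treated, control)
print(f"estimated effect = {effect:.2f}, p = {p:.4f}")
```

With a true effect of 3.0 and 50 units per arm, essentially none of the reshuffled differences is as large as the observed one, so the estimated p-value sits near its minimum of 1/10,001.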

Epidemiological studies employ different epidemiological methods of collecting and measuring evidence of risk factors and effect, and different ways of measuring association between the two. A 2020 review of methods for causal inference found that using existing literature for clinical training programs can be challenging, because published articles often assume an advanced technical background, they may be written from multiple statistical, epidemiological, computer science, or philosophical perspectives, methodological approaches continue to expand rapidly, and many aspects of causal inference receive limited coverage.[7] Common frameworks for causal inference include the causal pie model (component-cause), Pearl's structural causal model (causal diagram + do-calculus), structural equation modeling, and the Rubin causal model (potential-outcome), which are often used in areas such as social sciences and epidemiology.[8]
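The difference between conditioning and intervening, central to Pearl's structural causal model, can be sketched with a toy model in which a binary confounder Z affects both X and Y. All probabilities below are arbitrary assumptions chosen for illustration. Conditioning on X = x uses Bayes' rule, so it also shifts the distribution of Z; intervening with do(X = x) cuts the arrow from Z to X and leaves P(Z) unchanged, which in this model reduces to the backdoor adjustment formula.

```python
# Hypothetical structural causal model with binary variables: Z -> X, Z -> Y, X -> Y.
P_Z1 = 0.5                       # P(Z = 1)
P_X1_GIVEN_Z = {0: 0.2, 1: 0.8}  # P(X = 1 | Z = z)


def p_y1(x, z):
    """Structural equation for Y, written as P(Y = 1 | X = x, Z = z)."""
    return 0.1 + 0.2 * x + 0.5 * z


def p_z(z):
    return P_Z1 if z == 1 else 1 - P_Z1


def p_x_given_z(x, z):
    p = P_X1_GIVEN_Z[z]
    return p if x == 1 else 1 - p


def observe(x):
    """P(Y = 1 | X = x): seeing X = x also changes our beliefs about Z."""
    joint = {z: p_z(z) * p_x_given_z(x, z) for z in (0, 1)}
    total = sum(joint.values())
    return sum(p_y1(x, z) * w / total for z, w in joint.items())


def intervene(x):
    """P(Y = 1 | do(X = x)): Z keeps its own distribution (backdoor adjustment on Z)."""
    return sum(p_y1(x, z) * p_z(z) for z in (0, 1))


print(f"observational difference:  {observe(1) - observe(0):.2f}")
print(f"interventional difference: {intervene(1) - intervene(0):.2f}")
```

Observation suggests a 0.50 increase in P(Y = 1), while the intervention yields 0.20, the coefficient on x in the structural equation; the remaining 0.30 is confounding through Z.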

Experimental

Experimental methods make it possible to verify causal mechanisms directly. The main motivation behind an experiment is to hold other experimental variables constant while purposefully manipulating the variable of interest. If the experiment produces statistically significant effects as a result of only the treatment variable being manipulated, there are grounds to believe that a causal effect can be assigned to the treatment variable, assuming that other standards for experimental design have been met.

Quasi-experimental

Quasi-experimental verification of causal mechanisms is conducted when traditional experimental methods are unavailable. This may be the result of prohibitive costs of conducting an experiment, or the inherent infeasibility of conducting an experiment, especially experiments that are concerned with large systems such as economies or electoral systems, or for treatments that are considered to present a danger to the well-being of test subjects. Quasi-experiments may also occur where information is withheld for legal reasons.

Approaches in epidemiology

Epidemiology studies patterns of health and disease in defined populations of living beings in order to infer causes and effects. An association between an exposure to a putative risk factor and a disease may be suggestive of, but is not equivalent to, causality, because correlation does not imply causation. Historically, Koch's postulates have been used since the 19th century to decide if a microorganism was the cause of a disease. In the 20th century, the Bradford Hill criteria, described in 1965,[9] have been used to assess causality of variables outside microbiology, although even these criteria are not the only ways to determine causality. In molecular epidemiology, the phenomena studied are at the level of molecular biology, including genetics, where biomarkers serve as evidence of causes or effects.

In the early 2010s, researchers began to identify evidence for the influence of exposures on molecular pathology within diseased tissue or cells, in the emerging interdisciplinary field of molecular pathological epidemiology (MPE). Linking the exposure to molecular pathologic signatures of the disease can help to assess causality. Given the inherent heterogeneity of any given disease (the unique disease principle), disease phenotyping and subtyping are trends in biomedical and public health sciences, exemplified by personalized medicine and precision medicine.

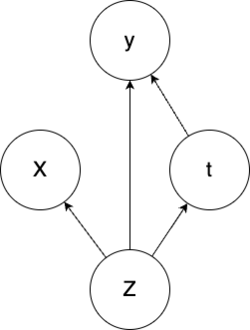

Causal inference has also been used for treatment effect estimation. Assume a set of observable patient symptoms (X) caused by a set of hidden causes (Z), and a treatment t that can be either given or withheld. The outcome of giving or not giving the treatment is y. If the treatment is not guaranteed to have a positive effect, the decision whether to apply it depends first on expert knowledge that encompasses the causal connections. For novel diseases, this expert knowledge may not be available, so decisions must rely solely on past treatment outcomes. A modified variational autoencoder can be used to model the causal graph described above.[10] While this scenario could be modelled without the hidden confounder (Z), doing so would lose the insight that a patient's symptoms, together with other factors, affect both the treatment assignment and the outcome.
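The following Python sketch does not implement the variational autoencoder; it only simulates the causal graph described above (a hidden cause Z driving the observed symptom X, the treatment t, and the outcome y) to show why the hidden confounder matters. The effect sizes and the 85% proxy accuracy are assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 200_000
true_effect = 1.0

z = rng.binomial(1, 0.5, n)                          # hidden cause (e.g. severity), never observed
x = np.where(rng.random(n) < 0.85, z, 1 - z)         # observed symptom: matches z 85% of the time
t = rng.binomial(1, np.where(z == 1, 0.8, 0.2))      # more severe cases are treated more often
y = true_effect * t - 2.0 * z + rng.normal(0, 1, n)  # severity also worsens the outcome


def stratified_effect(stratum, t, y):
    """Treated-minus-control difference within each stratum, weighted by stratum size."""
    total = 0.0
    for s in np.unique(stratum):
        m = stratum == s
        total += m.mean() * (y[m & (t == 1)].mean() - y[m & (t == 0)].mean())
    return total


naive = y[t == 1].mean() - y[t == 0].mean()
proxy_adjusted = stratified_effect(x, t, y)  # adjust for the observed proxy only
oracle = stratified_effect(z, t, y)          # possible only in simulation, where z is known
print(f"naive {naive:.2f}, proxy-adjusted {proxy_adjusted:.2f}, oracle {oracle:.2f}")
```

Here the naive comparison makes the treatment look harmful (about -0.2), stratifying on the noisy proxy X recovers only part of the true effect of 1.0 (roughly 0.26), and only the oracle adjustment on Z, which is impossible with real data, recovers it. Latent-variable models such as the one cited above aim to infer Z from proxies like X rather than treating the proxy as the confounder itself.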

Approaches in social sciences

Social science

The social sciences in general have moved increasingly toward including quantitative frameworks for assessing causality. Much of this has been described as a means of providing greater rigor to social science methodology. Political science was significantly influenced by the publication of Designing Social Inquiry, by Gary King, Robert Keohane, and Sidney Verba, in 1994. King, Keohane, and Verba recommend that researchers apply both quantitative and qualitative methods and adopt the language of statistical inference to be clearer about their subjects of interest and units of analysis.[11][12] Proponents of quantitative methods have also increasingly adopted the potential outcomes framework, developed by Donald Rubin, as a standard for inferring causality.[13]

While much of the emphasis remains on statistical inference in the potential outcomes framework, social science methodologists have developed new tools to conduct causal inference with both qualitative and quantitative methods, sometimes called a "mixed methods" approach.[14][15] Advocates of diverse methodological approaches argue that different methodologies are better suited to different subjects of study. Sociologist Herbert Smith and political scientists James Mahoney and Gary Goertz have cited the observation of Paul W. Holland, a statistician and author of the 1986 article "Statistics and Causal Inference", that statistical inference is most appropriate for assessing the "effects of causes" rather than the "causes of effects".[16][17]

Qualitative methodologists have argued that formalized models of causation, including process tracing and fuzzy set theory, provide opportunities to infer causation through the identification of critical factors within case studies or through a process of comparison among several case studies.[12] These methodologies are also valuable for subjects in which a limited number of potential observations or the presence of confounding variables would limit the applicability of statistical inference. On longer timescales, persistence studies use causal inference to link historical events to later political, economic and social outcomes.[18]

Economics and political science

In the economic sciences and political sciences causal inference is often difficult, owing to the real world complexity of economic and political realities and the inability to recreate many large-scale phenomena within controlled experiments. Causal inference in the economic and political sciences continues to see improvement in methodology and rigor, due to the increased level of technology available to social scientists, the increase in the number of social scientists and research, and improvements to causal inference methodologies throughout social sciences.[19] Despite the difficulties inherent in determining causality in economic systems, several widely employed methods exist throughout those fields.

Theoretical methods

Economists and political scientists can use theory (often studied in theory-driven econometrics) to estimate the magnitude of supposedly causal relationships in cases where they believe a causal relationship exists.[20] Theorists can presuppose a mechanism believed to be causal and describe the effects using data analysis to justify their proposed theory. For example, theorists can use logic to construct a model, such as theorizing that rain causes fluctuations in economic productivity but that the converse is not true.[21] However, purely theoretical claims that do not offer any predictive insights have been called "pre-scientific" because they offer no ability to predict the impact of the supposed causal properties.[5] It is worth reiterating that regression analysis in the social sciences does not inherently imply causality, as many phenomena may correlate in the short run or in particular datasets but demonstrate no correlation in other time periods or other datasets. Thus, the attribution of causality to correlative properties is premature absent a well-defined and reasoned causal mechanism.
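The point that phenomena may correlate in particular datasets without any causal link can be illustrated with independent random walks, a classic source of spurious correlation in trending time series. This Python sketch, with arbitrary sizes and seed, compares correlations between pairs of independent walks with correlations between their non-trending increments.

```python
import numpy as np

rng = np.random.default_rng(7)
n_pairs, length = 1000, 200

# Two independent random walks per pair: by construction neither causes the other.
a = rng.normal(size=(n_pairs, length)).cumsum(axis=1)
b = rng.normal(size=(n_pairs, length)).cumsum(axis=1)


def corr_rows(u, v):
    """Pearson correlation between corresponding rows of u and v."""
    u = u - u.mean(axis=1, keepdims=True)
    v = v - v.mean(axis=1, keepdims=True)
    return (u * v).sum(axis=1) / np.sqrt((u ** 2).sum(axis=1) * (v ** 2).sum(axis=1))


walk_corr = np.abs(corr_rows(a, b))
# The same comparison on the increments, which are independent white noise.
step_corr = np.abs(corr_rows(np.diff(a, axis=1), np.diff(b, axis=1)))

print(f"share of walk pairs with |r| > 0.5:      {(walk_corr > 0.5).mean():.2f}")
print(f"share of increment pairs with |r| > 0.5: {(step_corr > 0.5).mean():.2f}")
```

Large correlations between the walks are common even though each pair is independent by construction, whereas the increments almost never correlate strongly; this is one reason trending series are usually differenced or otherwise modelled before regressions between them are interpreted.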

Instrumental variables

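The instrumental variables (IV) technique is a method of determining causality that addresses a correlation between one of a model's explanatory variables and the model's error term (endogeneity). When the error term moves together with an explanatory variable, for example because an omitted factor influences both, ordinary regression attributes part of that factor's influence to the explanatory variable and so yields a biased estimate of its effect. An instrumental variable is a new variable that is correlated with the endogenous explanatory variable but affects the outcome only through that variable, and is therefore uncorrelated with the error term. Estimating the effect using only the variation in the explanatory variable that the instrument induces eliminates this correlation, and with it the bias, although usually at the cost of less precise estimates.[22]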
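A minimal two-stage least squares (2SLS) sketch in Python, on simulated data whose coefficients are assumptions for the example: an unobserved factor u enters both the explanatory variable x and the error term, so ordinary least squares is biased, while the instrument z shifts x but has no direct effect on y.

```python
import numpy as np

rng = np.random.default_rng(3)
n = 50_000
true_beta = 2.0

u = rng.normal(size=n)                             # unobserved factor that ends up in the error term
z = rng.normal(size=n)                             # instrument: moves x, no direct effect on y
x = 0.8 * z + u + rng.normal(size=n)               # x is endogenous because it depends on u
y = true_beta * x + 3.0 * u + rng.normal(size=n)   # error (3u + noise) is correlated with x


def ols_slope(regressor, outcome):
    """Slope from an OLS regression of outcome on regressor with an intercept."""
    design = np.column_stack([np.ones_like(regressor), regressor])
    coef, *_ = np.linalg.lstsq(design, outcome, rcond=None)
    return coef[1]


def two_stage_least_squares(outcome, endogenous, instrument):
    # Stage 1: keep only the part of x that the instrument predicts.
    design = np.column_stack([np.ones_like(instrument), instrument])
    gamma, *_ = np.linalg.lstsq(design, endogenous, rcond=None)
    x_hat = design @ gamma
    # Stage 2: regress y on the fitted values.
    return ols_slope(x_hat, outcome)


print(f"OLS estimate:  {ols_slope(x, y):.2f}")
print(f"2SLS estimate: {two_stage_least_squares(y, x, z):.2f}")
```

With these settings OLS converges to roughly 3.1 rather than 2, while 2SLS lands close to 2. A manually run second stage does not produce valid standard errors, because its residuals are computed with fitted rather than actual values of x.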

Model specification

Model specification is the act of selecting a model to be used in data analysis. Social scientists (and, indeed, all scientists) must determine the correct model to use because different models are good at estimating different relationships.[23] Model specification can be useful in determining causality that is slow to emerge, where the effects of an action in one period are only felt in a later period. It is worth remembering that correlations only measure whether two variables tend to vary together, not whether they affect one another in a particular direction; thus, one cannot determine the direction of a causal relation based on correlations alone. Because causes are believed to precede their effects, social scientists can use a model that looks specifically for the effect of one variable on another over a period of time. Variables representing earlier phenomena are then treated as candidate causes, and econometric tests look for later changes in the data that can be attributed to them; a meaningful difference in later outcomes following a meaningful difference in the earlier variable may indicate causality between the two (e.g., Granger-causality tests). Such studies are examples of time-series analysis.[24]
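A Granger-causality test compares a regression of y on its own lags with one that also includes lags of x; if the extra lags reduce the residual sum of squares by more than chance would, x is said to Granger-cause y. The Python sketch below computes the F statistic with NumPy on a simulated series in which past x drives y by construction; the lag order of 2 and all coefficients are assumptions for the example.

```python
import numpy as np


def lagged(series, lags):
    """Columns series[t-1], ..., series[t-lags], aligned with targets series[lags:]."""
    n = len(series)
    return np.column_stack([series[lags - k : n - k] for k in range(1, lags + 1)])


def granger_f(y, x, lags=2):
    """F statistic for H0: lags of x add no predictive power for y beyond y's own lags."""
    target = y[lags:]
    const = np.ones((len(target), 1))
    restricted = np.hstack([const, lagged(y, lags)])
    unrestricted = np.hstack([restricted, lagged(x, lags)])

    def rss(design):
        coef, *_ = np.linalg.lstsq(design, target, rcond=None)
        resid = target - design @ coef
        return resid @ resid

    rss_r, rss_u = rss(restricted), rss(unrestricted)
    df_num, df_den = lags, len(target) - unrestricted.shape[1]
    return ((rss_r - rss_u) / df_num) / (rss_u / df_den)


# Simulated data: x is white noise, and y responds to x with a one-period delay.
rng = np.random.default_rng(11)
n = 500
x = rng.normal(size=n)
y = np.zeros(n)
for t in range(1, n):
    y[t] = 0.5 * y[t - 1] + 0.8 * x[t - 1] + rng.normal()

print(f"F(x -> y) = {granger_f(y, x):.1f}")  # large: past x helps predict y
print(f"F(y -> x) = {granger_f(x, y):.1f}")  # small: past y does not help predict x
```

The statistic is compared against critical values of the F distribution with (lags, n - k) degrees of freedom, where n is the number of usable observations and k the number of coefficients in the unrestricted model. A significant result establishes predictive precedence, which is weaker than causation: a third variable driving both series with different delays can produce the same pattern.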

Sensitivity analysis

Other variables, or regressors in regression analysis, are either included or not included across various implementations of the same model so that different sources of variation can be studied separately from one another. This is a form of sensitivity analysis: the study of how sensitive an implementation of a model is to the addition of one or more new variables.[25] A chief motivating concern in the use of sensitivity analysis is the pursuit of discovering confounding variables. Confounding variables are variables that influence both the variable of interest and the outcome, and so can strongly affect the results of a statistical test even though they are not the variable that causal inference is trying to study. Confounding variables may cause a regressor to appear to be significant in one implementation, but not in another.
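The sensitivity check described above can be sketched in Python by estimating the same regression with and without a candidate confounder. The simulated data, in which the confounder raises both the treatment and the outcome, and the true treatment coefficient of 1.0 are assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(5)
n = 10_000
confounder = rng.normal(size=n)
treatment = 0.7 * confounder + rng.normal(size=n)
outcome = 1.0 * treatment + 2.0 * confounder + rng.normal(size=n)


def coefficients(outcome, *regressors):
    """OLS coefficients (intercept first) of outcome on the given regressors."""
    design = np.column_stack([np.ones(len(outcome)), *regressors])
    coef, *_ = np.linalg.lstsq(design, outcome, rcond=None)
    return coef


unadjusted = coefficients(outcome, treatment)[1]            # confounder omitted
adjusted = coefficients(outcome, treatment, confounder)[1]  # confounder included
print(f"treatment coefficient without confounder: {unadjusted:.2f}")
print(f"treatment coefficient with confounder:    {adjusted:.2f}")
```

Omitting the confounder nearly doubles the apparent effect (about 1.94 here), while including it recovers the true coefficient; a coefficient that moves this much between specifications is a signal to look for confounding.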

Multicollinearity

Another reason for the use of sensitivity analysis is to detect multicollinearity, the phenomenon where the correlation between two or more explanatory variables is very high. A high level of correlation between such variables can dramatically affect the outcome of a statistical analysis: small variations in highly correlated data can flip the estimated effect of a variable from positive to negative, or vice versa. This happens because nearly collinear regressors inflate the variance of the estimated coefficients, so the data cannot reliably separate the contribution of one variable from that of the other. Detecting multicollinearity is useful in sensitivity analysis because removing highly correlated variables in different model implementations can prevent the dramatic changes in results that arise from including them.[26]
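The following Python sketch illustrates both symptoms on simulated data (all settings are assumptions): the variance inflation factor (VIF), 1 / (1 - R²) from regressing each regressor on the others, flags nearly collinear columns, and refitting the same model on fresh noise draws shows how unstable the individual coefficients of the collinear pair are.

```python
import numpy as np


def vif(columns):
    """Variance inflation factor of each column: 1 / (1 - R^2) from regressing it on the others."""
    X = np.column_stack(columns)
    n, k = X.shape
    out = []
    for j in range(k):
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        coef, *_ = np.linalg.lstsq(others, X[:, j], rcond=None)
        resid = X[:, j] - others @ coef
        r2 = 1 - resid.var() / X[:, j].var()
        out.append(1 / (1 - r2))
    return np.array(out)


rng = np.random.default_rng(2)
n = 200
x1 = rng.normal(size=n)
x2 = x1 + 0.05 * rng.normal(size=n)  # nearly a copy of x1
x3 = rng.normal(size=n)              # unrelated regressor
print("VIF:", np.round(vif([x1, x2, x3]), 1))

# Refit y = x1 + x2 + x3 + noise on repeated noise draws: the x1 and x2 coefficients
# swing widely (sometimes changing sign) while their sum stays stable.
design = np.column_stack([np.ones(n), x1, x2, x3])
fits = []
for _ in range(200):
    y = x1 + x2 + x3 + rng.normal(size=n)
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    fits.append(coef[1:])
fits = np.array(fits)
print("std of x1, x2, x3 coefficients:", np.round(fits.std(axis=0), 2))
print("std of x1 + x2 coefficient sum:", round(float((fits[:, 0] + fits[:, 1]).std()), 2))
```

The VIFs of x1 and x2 come out in the hundreds while that of x3 stays near 1. Across refits the coefficients on x1 and x2 vary widely and sometimes change sign, yet their sum is estimated precisely, because the data identify the combined effect of the pair but not how to split it between them.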

There are limits to sensitivity analysis's ability to prevent the deleterious effects of multicollinearity, especially in the social sciences, where systems are complex. Because it is theoretically impossible to include or even measure all of the confounding factors in a sufficiently complex system, econometric models are susceptible to the common-cause fallacy, where causal effects are incorrectly attributed to the wrong variable because the correct variable was not captured in the original data. This is an example of the failure to account for a lurking variable.[27]

Design-based econometrics

Recently, improved methodology in design-based econometrics has popularized the use of both natural experiments and quasi-experimental research designs to study the causal mechanisms that such experiments are believed to identify.[28]

Experimental methods

In applied economics and political science, randomized field experiments are widely used to identify causal effects, since they help address confounding that complicates observational studies. This approach has also been adopted in marketing science, where firms conduct large-scale randomized advertising trials to estimate causal returns on investment (see § Applications in marketing below).[29]

Applications in marketing

Causal inference has become central to modern marketing science, where advertisers and platforms seek to estimate the true incremental effect of marketing interventions rather than relying on correlative metrics such as last-click attribution. Because consumers are simultaneously exposed to many channels and external demand drivers, isolating the causal contribution of a single campaign poses methodological challenges analogous to those found in epidemiology and the social sciences.[30]

Incrementality experiments

Randomized controlled trials, often called incrementality experiments or lift studies in industry usage, are the most direct method for estimating the causal effect of advertising. In a typical design, a population of users or geographic regions is randomly split into treatment and control groups; the treatment group is exposed to the advertising while the control group is withheld from exposure or shown a placebo advertisement. The difference in conversion or revenue outcomes between the two groups provides an unbiased estimate of the average treatment effect. Lewis and Rao (2015) demonstrated that because per-person advertising effects are small relative to the variance in consumer purchasing, very large sample sizes are required to obtain precise estimates, which they termed "the unfavorable economics of measuring the returns to advertising".[29] Gordon et al. (2019) compared experimental estimates from large-scale Facebook field experiments against several observational approaches, finding that observational methods frequently overstated advertising effects by substantial margins, underscoring the importance of randomized designs.[30] These findings are consistent with the broader potential outcomes framework developed by Donald Rubin, which underpins much of modern incrementality measurement.[13]
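The arithmetic behind a lift study, and behind the sample-size problem noted by Lewis and Rao, can be sketched with standard two-proportion formulas. This Python sketch uses a normal approximation, and the conversion counts, the 1% baseline rate and the 0.05-percentage-point lift are hypothetical numbers, not figures from the cited studies.

```python
from math import ceil, sqrt
from statistics import NormalDist


def lift_estimate(conv_t, n_t, conv_c, n_c, confidence=0.95):
    """Difference in conversion rates (treatment minus control) with a normal-approximation CI."""
    p_t, p_c = conv_t / n_t, conv_c / n_c
    diff = p_t - p_c
    se = sqrt(p_t * (1 - p_t) / n_t + p_c * (1 - p_c) / n_c)
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return diff, (diff - z * se, diff + z * se)


def users_per_arm(base_rate, absolute_lift, alpha=0.05, power=0.8):
    """Approximate users needed in each arm to detect an absolute lift in conversion
    rate with a two-sided test at level alpha and the stated power."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    treated_rate = base_rate + absolute_lift
    variance = base_rate * (1 - base_rate) + treated_rate * (1 - treated_rate)
    return ceil((z_alpha + z_power) ** 2 * variance / absolute_lift ** 2)


# Hypothetical campaign: one million users per arm.
diff, (low, high) = lift_estimate(10_300, 1_000_000, 10_000, 1_000_000)
print(f"lift = {diff:.5f}, 95% CI = ({low:.5f}, {high:.5f})")

# Users per arm to detect a 5% relative lift on a 1% baseline conversion rate.
print(users_per_arm(0.01, 0.0005))
```

With a million users per arm the hypothetical 0.03-percentage-point lift is only barely distinguishable from zero, and detecting a 5% relative lift on a 1% baseline at 80% power requires roughly 640,000 users in each arm.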

Synthetic control and geo-experiments

When user-level randomization is infeasible—for example, in television, radio, or outdoor advertising—researchers often turn to geographic or market-level quasi-experiments. The synthetic control method, introduced by Abadie and Gardeazabal (2003) and formalized by Abadie, Diamond, and Hainmueller (2010), constructs a weighted combination of untreated comparison units to approximate the counterfactual outcome of a treated unit.[31] Google's GeoX framework applies a related approach to advertising, randomly assigning geographic regions to treatment and control conditions and using Bayesian structural time-series models to estimate the causal impact of regional ad campaigns.[32][33] The Platform Incrementality Experiment (PIE) framework, proposed in 2023, extends geo-experimental methodology to cross-platform settings, enabling advertisers to measure the incremental contribution of an individual media platform while accounting for activity on competing platforms.[34]
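A minimal synthetic-control sketch in Python: donor weights are constrained to be non-negative and sum to one, and are chosen to reproduce the treated unit's pre-treatment outcomes. Solving this by projected gradient descent is a choice made here for a self-contained example, and the full method of Abadie, Diamond, and Hainmueller also matches on covariates, which this sketch omits. The simulated donors, the true weights and the effect of 5.0 are assumptions.

```python
import numpy as np


def project_to_simplex(v):
    """Euclidean projection of v onto {w : w >= 0, sum(w) = 1} (sort-based algorithm)."""
    u = np.sort(v)[::-1]
    css = np.cumsum(u)
    k = np.arange(1, len(v) + 1)
    rho = np.nonzero(u * k > css - 1)[0][-1]
    theta = (css[rho] - 1) / (rho + 1)
    return np.maximum(v - theta, 0.0)


def synthetic_control_weights(donors_pre, treated_pre, iterations=50_000):
    """Least-squares fit of the treated unit's pre-treatment path by a convex combination
    of donor paths. donors_pre: shape (T_pre, J); treated_pre: shape (T_pre,)."""
    step = 1.0 / np.linalg.norm(donors_pre, 2) ** 2  # 1 / Lipschitz constant of the gradient
    w = np.full(donors_pre.shape[1], 1.0 / donors_pre.shape[1])
    for _ in range(iterations):
        grad = donors_pre.T @ (donors_pre @ w - treated_pre)
        w_next = project_to_simplex(w - step * grad)
        if np.max(np.abs(w_next - w)) < 1e-12:  # converged
            return w_next
        w = w_next
    return w


rng = np.random.default_rng(4)
T, T0, J = 40, 30, 8
donors = rng.normal(size=(T, J)).cumsum(axis=0)  # simulated untreated outcome paths
true_w = np.zeros(J)
true_w[[0, 3]] = [0.6, 0.4]
effect = np.where(np.arange(T) >= T0, 5.0, 0.0)  # effect switches on at period T0
treated = donors @ true_w + effect

w = synthetic_control_weights(donors[:T0], treated[:T0])
synthetic = donors @ w                           # counterfactual path for the treated unit
gap = treated[T0:] - synthetic[T0:]
print("weights:", np.round(w, 3))
print(f"estimated effect: {gap.mean():.2f}")
```

After treatment begins, the gap between the treated unit and its synthetic counterpart estimates the effect. In real applications no exact combination of donors exists, so the quality of the pre-treatment fit is itself evidence about how credible the counterfactual is.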

Media mix modeling

Marketing mix modeling (MMM), also known as media mix modeling, takes a complementary approach by fitting aggregate-level regression models—typically using time-series data on advertising spend, pricing, promotions, and sales—to estimate the marginal contribution of each marketing channel. Unlike incrementality experiments, which provide causal estimates for a specific campaign over a defined window, MMM aims to decompose historical variation in a dependent variable across all measured drivers simultaneously. Modern implementations increasingly incorporate Bayesian estimation, informative priors derived from experimental lift studies, and Markov chain Monte Carlo methods to quantify uncertainty. Jin et al. (2017) proposed a widely adopted Bayesian MMM framework that models the carryover and saturation effects of advertising using flexible functional forms estimated via informative priors.[35][36] Practitioners have noted that MMM estimates are most credible when calibrated against experimental benchmarks, creating a feedback loop between observational modeling and randomized experimentation.[30][32]
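The carryover and saturation effects mentioned above are commonly represented by two transformations applied to each channel's spend before it enters the regression: a geometric adstock and a Hill-type response curve, forms of the kind described by Jin et al. The Python sketch below implements both; the function and parameter names, and the example values, are illustrative assumptions rather than any particular library's API.

```python
import numpy as np


def geometric_adstock(spend, decay, max_lag=8):
    """Carryover: each period's effective exposure is a normalized, geometrically
    decaying weighted sum of current and up to max_lag past periods of spend."""
    weights = float(decay) ** np.arange(max_lag + 1)  # weight decay**l for lag l
    weights /= weights.sum()
    padded = np.concatenate([np.zeros(max_lag), spend])
    # padded[t : t + max_lag + 1][::-1] is [spend_t, spend_(t-1), ..., spend_(t-max_lag)]
    return np.array([padded[t : t + max_lag + 1][::-1] @ weights for t in range(len(spend))])


def hill_saturation(x, half_saturation, shape):
    """Diminishing returns: rises from 0 toward 1 and equals 0.5 at x = half_saturation."""
    return x ** shape / (x ** shape + half_saturation ** shape)


spend = np.array([100.0, 0, 0, 0, 0])                     # a single burst of spend
carried = geometric_adstock(spend, decay=0.5, max_lag=3)  # spread over later periods
response = 2.0 * hill_saturation(carried, half_saturation=50.0, shape=2.0)  # hypothetical coefficient 2.0
print(np.round(carried, 2), np.round(response, 3))
```

In a Bayesian MMM, parameters such as `decay`, `half_saturation`, `shape` and each channel's coefficient would receive priors and be estimated jointly, for example by MCMC; here they are fixed by hand.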

Approaches in computer science

Causal inference is an important concept in the field of causal artificial intelligence. Determining cause and effect from joint observational data for two time-independent variables, say X and Y, has been tackled by exploiting asymmetries between the evidence for a model in the direction X → Y and in the direction Y → X. The primary approaches are based on algorithmic information theory models and noise models.[37]

Noise models

These approaches incorporate an independent noise term in the model and compare the evidence for the two directions.

Here are some of the noise models for the hypothesis X → Y with the noise E:

- Additive noise:[38] $Y = F(X) + E$

- Linear noise:[39] $Y = pX + qE$

- Post-nonlinear:[40] $Y = G(F(X) + E)$

- Heteroskedastic noise: $Y = F(X) + E \cdot G(X)$

- Functional noise:[41] $Y = F(X, E)$

The common assumptions in these models are:

- There are no other causes of Y.

- X and E have no common causes.

- The distribution of the cause is independent of the causal mechanism.

On an intuitive level, the idea is that the factorization of the joint distribution P(Cause, Effect) into P(Cause)*P(Effect | Cause) typically yields models of lower total complexity than the factorization into P(Effect)*P(Cause | Effect). Although the notion of "complexity" is intuitively appealing, it is not obvious how it should be precisely defined.[41] A different family of methods attempts to discover causal "footprints" from large amounts of labeled data, and allows the prediction of more flexible causal relations.[42]
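A minimal sketch of the additive-noise idea in Python: fit the effect as a function of the hypothesised cause in each direction and measure how dependent the residuals remain on the regressor, preferring the direction with more independent residuals. The polynomial regression, the HSIC dependence measure with a Gaussian kernel, and the simulated data (Y = X³ plus uniform noise) are choices made for this example.

```python
import numpy as np


def _gaussian_gram(v):
    """Gaussian-kernel Gram matrix using the median squared distance as bandwidth."""
    d2 = (v[:, None] - v[None, :]) ** 2
    return np.exp(-d2 / np.median(d2[d2 > 0]))


def hsic(a, b):
    """Biased HSIC estimate: larger values indicate stronger statistical dependence."""
    n = len(a)
    h = np.eye(n) - np.ones((n, n)) / n  # centering matrix
    k, l = _gaussian_gram(a), _gaussian_gram(b)
    return np.trace(k @ h @ l @ h) / (n - 1) ** 2


def anm_score(cause, effect, degree=5):
    """Fit effect = f(cause) + residual with a polynomial f, then measure how
    dependent the residual still is on the hypothesised cause."""
    coeffs = np.polyfit(cause, effect, degree)
    residual = effect - np.polyval(coeffs, cause)
    return hsic(cause, residual)


rng = np.random.default_rng(0)
x = rng.uniform(-1, 1, 300)
y = x ** 3 + rng.uniform(-0.2, 0.2, 300)  # ground truth: X -> Y with additive noise

forward = anm_score(x, y)   # hypothesis X -> Y
backward = anm_score(y, x)  # hypothesis Y -> X
print("inferred direction:", "X -> Y" if forward < backward else "Y -> X")
```

Only in the true direction can the residual be made independent of the input, so its HSIC score is smaller. In practice the scores would be compared with a permutation or asymptotic HSIC test rather than directly.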

Malpractice in causal inference

Despite advances in the development of methodologies used to determine causality, significant weaknesses in determining causality remain. These weaknesses can be attributed both to the inherent difficulty of determining causal relations in complex systems and to cases of scientific malpractice. Separately from the difficulties of causal inference, some large groups of social scientists perceive that many scholars in the social sciences engage in non-scientific methodology. Criticism of economists and social scientists for passing off descriptive studies as causal studies is rife within those fields.[5]

Scientific malpractice and flawed methodology

In the sciences, especially in the social sciences, there is concern among scholars that scientific malpractice is widespread. As scientific study is a broad topic, there are theoretically limitless ways to have a causal inference undermined through no fault of a researcher. Nonetheless, there remain concerns among scientists that large numbers of researchers do not perform basic duties or practice sufficiently diverse methods in causal inference.[43][19][44]

One prominent example of common non-causal methodology is the erroneous assumption that correlative properties are causal properties. There is no inherent causality in phenomena that correlate. Regression models are designed to measure variance within data relative to a theoretical model: nothing suggests that data with high levels of covariance have any meaningful relationship (absent a proposed causal mechanism with predictive properties or a random assignment of treatment). The use of flawed methodology has been claimed to be widespread, with common examples of such malpractice being the overuse of correlative models, especially regression models and particularly linear regression models.[5] Presupposing that two correlated phenomena are inherently related is a logical fallacy; a correlation that does not reflect a causal relationship is known as a spurious correlation. Some social scientists claim that the widespread use of methodology that attributes causality to spurious correlations has been detrimental to the integrity of the social sciences, although improvements stemming from better methodologies have been noted.[28]

A potential effect of scientific studies that erroneously conflate correlation with causality is an increase in the number of scientific findings whose results are not reproducible by third parties. Such non-reproducibility is a logical consequence of correlations that hold only temporarily being overgeneralized into mechanisms, when the variables have no inherent relationship and new data do not contain the idiosyncratic correlations of the original data. Debates over the effect of malpractice versus the effect of the inherent difficulties of searching for causality are ongoing.[45] Critics of widely practiced methodologies argue that researchers have engaged in statistical manipulation in order to publish articles that supposedly demonstrate evidence of causality but are actually examples of spurious correlation being touted as evidence of causality: such endeavors may be referred to as p-hacking.[46] To prevent this, some have advocated that researchers preregister their research designs prior to conducting their studies, so that they do not inadvertently overemphasize a nonreproducible finding that was not the initial subject of inquiry but was found to be statistically significant during data analysis.[47]
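One form of p-hacking, testing many outcomes and reporting whichever reaches significance, can be simulated in Python. In this sketch every outcome is pure noise, so every significant result is a false positive; the numbers of studies, outcomes and participants are arbitrary assumptions, and a normal approximation replaces the t-test.

```python
import numpy as np

rng = np.random.default_rng(0)
n_studies, n_outcomes, n_per_group = 2000, 20, 50
Z_CRIT = 1.96  # two-sided 5% threshold under the normal approximation

# Every outcome is pure noise: the "treatment" truly has no effect on anything.
treated = rng.normal(size=(n_studies, n_outcomes, n_per_group))
control = rng.normal(size=(n_studies, n_outcomes, n_per_group))

diff = treated.mean(axis=2) - control.mean(axis=2)
se = np.sqrt(treated.var(axis=2, ddof=1) / n_per_group + control.var(axis=2, ddof=1) / n_per_group)
significant = np.abs(diff / se) > Z_CRIT

preregistered_rate = significant[:, 0].mean()  # test only the outcome declared in advance
hacked_rate = significant.any(axis=1).mean()   # report whichever outcome "worked"
print(f"false-positive rate, preregistered outcome: {preregistered_rate:.2f}")
print(f"false-positive rate, best of {n_outcomes} outcomes: {hacked_rate:.2f}")
```

Testing a single preregistered outcome keeps the false-positive rate near the nominal 5%, whereas reporting the best of 20 outcomes raises it to roughly 1 - 0.95^20, about 64%.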

See also

- Causal analysis

- Causal model

- Granger causality

- Marketing mix modeling

- Multivariate statistics

- Partial least squares regression

- Pathogenesis

- Pathology

- Probabilistic causation

- Probabilistic argumentation

- Probabilistic logic

- Regression analysis

- Transfer entropy

References

- ↑ Pearl, Judea (1 January 2009). "Causal inference in statistics: An overview". Statistics Surveys 3: 96–146. doi:10.1214/09-SS057. http://ftp.cs.ucla.edu/pub/stat_ser/r350.pdf. Retrieved 24 September 2012.

- ↑ Morgan, Stephen; Winship, Chris (2007). Counterfactuals and Causal inference. Cambridge University Press. ISBN 978-0-521-67193-4.

- ↑ "causal inference". Encyclopædia Britannica, Inc.. http://www.britannica.com/EBchecked/topic/1442615/causal-inference.

- ↑ John Shaughnessy; Eugene Zechmeister; Jeanne Zechmeister (2000). Research Methods in Psychology. McGraw-Hill Humanities/Social Sciences/Languages. pp. Chapter 1 : Introduction. ISBN 978-0077825362. http://www.mhhe.com/socscience/psychology/shaugh/ch01_concepts.html. Retrieved 24 August 2014.

- ↑ 5.0 5.1 5.2 5.3 Schrodt, Philip A (2014-03-01). "Seven deadly sins of contemporary quantitative political analysis" (in en). Journal of Peace Research 51 (2): 287–300. doi:10.1177/0022343313499597. ISSN 0022-3433. https://doi.org/10.1177/0022343313499597. Retrieved 16 February 2021.

- ↑ Diaz Ochoa, Juan Guillermo (2025). "Complexity Measurements and Causation for Dynamic Complex Systems" (in en). Understanding Complex Systems. doi:10.1007/978-3-031-84709-7. ISSN 1860-0832. https://link.springer.com/book/10.1007/978-3-031-84709-7.

- ↑ Landsittel, Douglas; Srivastava, Avantika; Kropf, Kristin (2020). "A Narrative Review of Methods for Causal Inference and Associated Educational Resources" (in en). Quality Management in Health Care 29 (4): 260–269. doi:10.1097/QMH.0000000000000276. ISSN 1063-8628. PMID 32991545. https://dx.doi.org/10.1097%2FQMH.0000000000000276. Retrieved 26 February 2021.

- ↑ Greenland, Sander; Brumback, Babette (October 2002). "An overview of relations among causal modelling methods" (in en). International Journal of Epidemiology 31 (5): 1030–1037. doi:10.1093/ije/31.5.1030. ISSN 1464-3685. PMID 12435780.

- ↑ Hill, Austin Bradford (1965). "The Environment and Disease: Association or Causation?". Proceedings of the Royal Society of Medicine 58 (5): 295–300. doi:10.1177/003591576505800503. PMID 14283879. PMC 1898525. http://www.edwardtufte.com/tufte/hill. Retrieved 25 February 2014.

- ↑ Louizos, Christos; Shalit, Uri; Mooij, Joris; Sontag, David; Zemel, Richard; Welling, Max (2017). "Causal Effect Inference with Deep Latent-Variable Models". arXiv:1705.08821 [stat.ML].

- ↑ King, Gary (2012). Designing social inquiry : scientific inference in qualitative research. Princeton Univ. Press. ISBN 978-0691034713. OCLC 754613241.

- ↑ 12.0 12.1 Mahoney, James (January 2010). "After KKV". World Politics 62 (1): 120–147. doi:10.1017/S0043887109990220.

- ↑ 13.0 13.1 Rubin, Donald B. (1974). "Estimating causal effects of treatments in randomized and nonrandomized studies". Journal of Educational Psychology 66 (5): 688–701. doi:10.1037/h0037350.

- ↑ Creswell, John W.; Clark, Vicki L. Plano (2011) (in en). Designing and Conducting Mixed Methods Research. SAGE Publications. ISBN 9781412975179. https://books.google.com/books?id=YcdlPWPJRBcC. Retrieved 23 February 2021.

- ↑ Seawright, Jason (September 2016) (in en). Multi-Method Social Science by Jason Seawright. Cambridge Core. doi:10.1017/CBO9781316160831. ISBN 9781316160831. https://www.cambridge.org/core/books/multimethod-social-science/286C2742878FBCC6225E2F10D6095A0C. Retrieved 2019-04-18.

- ↑ Smith, Herbert L. (10 February 2014). "Effects of Causes and Causes of Effects: Some Remarks from the Sociological Side". Sociological Methods and Research 43 (3): 406–415. doi:10.1177/0049124114521149. PMID 25477697.

- ↑ Goertz, Gary; Mahoney, James (2006). "A Tale of Two Cultures: Contrasting Quantitative and Qualitative Research" (in en). Political Analysis 14 (3): 227–249. doi:10.1093/pan/mpj017. ISSN 1047-1987.

- ↑ Cirone, Alexandra; Pepinsky, Thomas B. (2022). "Historical Persistence" (in en). Annual Review of Political Science 25 (1): 241–259. doi:10.1146/annurev-polisci-051120-104325. ISSN 1094-2939.

- ↑ 19.0 19.1 Angrist, Joshua D.; Pischke, Jörn-Steffen (June 2010). "The Credibility Revolution in Empirical Economics: How Better Research Design Is Taking the Con out of Econometrics" (in en). Journal of Economic Perspectives 24 (2): 3–30. doi:10.1257/jep.24.2.3. ISSN 0895-3309.

- ↑ University, Carnegie Mellon. "Theory of Causation - Department of Philosophy - Dietrich College of Humanities and Social Sciences - Carnegie Mellon University" (in en). http://www.cmu.edu/dietrich/philosophy/research/areas/science-methodology/theory-of-causation.html.

- ↑ Simon, Herbert (1977). Models of Discovery. Dordrecht: Springer. p. 52.

- ↑ Angrist, Joshua D.; Krueger, Alan B. (2001). "Instrumental Variables and the Search for Identification: From Supply and Demand to Natural Experiments". Journal of Economic Perspectives 15 (4): 69–85. doi:10.1257/jep.15.4.69. https://economics.mit.edu/files/18. Retrieved 16 February 2021.

- ↑ Allen, Michael Patrick, ed (1997). "Model specification in regression analysis" (in en). Understanding Regression Analysis. Boston, MA: Springer US. pp. 166–170. doi:10.1007/978-0-585-25657-3_35. ISBN 978-0-585-25657-3. https://doi.org/10.1007/978-0-585-25657-3_35. Retrieved 2021-02-16.

- ↑ Maziarz, Mariusz (2020). The Philosophy of Causality in Economics: Causal Inferences and Policy Proposals. New York: Routledge.

- ↑ Salciccioli, Justin D.; Crutain, Yves; Komorowski, Matthieu; Marshall, Dominic C. (2016). "Sensitivity Analysis and Model Validation". in MIT Critical Data (in en). Secondary Analysis of Electronic Health Records. Cham: Springer International Publishing. pp. 263–271. doi:10.1007/978-3-319-43742-2_17. ISBN 978-3-319-43742-2.

- Illowsky, Barbara (2013). "Introductory Statistics". https://openstax.org/details/introductory-statistics.

- Henschen, Tobias (2018). "The in-principle inconclusiveness of causal evidence in macroeconomics". European Journal for Philosophy of Science 8 (3): 709–733. doi:10.1007/s13194-018-0207-7.

- Angrist, Joshua D.; Pischke, Jörn-Steffen (2008). Mostly Harmless Econometrics: An Empiricist's Companion. Princeton: Princeton University Press.

- Lewis, Randall A.; Rao, Justin M. (2015). "The unfavorable economics of measuring the returns to advertising". The Quarterly Journal of Economics 130 (4): 1941–1973. doi:10.1093/qje/qjv023.

- Gordon, Brett R.; Zettelmeyer, Florian; Bhargava, Neha; Chapsky, Dan (2019). "A comparison of approaches to advertising measurement: Evidence from big field experiments at Facebook". Marketing Science 38 (2): 193–225. doi:10.1287/mksc.2018.1135.

- Abadie, Alberto; Diamond, Alexis; Hainmueller, Jens (2010). "Synthetic control methods for comparative case studies: Estimating the effect of California's tobacco control program". Journal of the American Statistical Association 105 (490): 493–505. doi:10.1198/jasa.2009.ap08746.

- Brodersen, Kay H.; Gallusser, Fabian; Koehler, Jim; Remy, Nicolas; Scott, Steven L. (2015). "Inferring causal impact using Bayesian structural time-series models". Annals of Applied Statistics 9 (1): 247–274. doi:10.1214/14-AOAS788.

- Vaver, Jon; Koehler, Jim (2011). "Measuring Ad Effectiveness Using Geo Experiments". Google Research. https://research.google/pubs/pub38355/.

- "Platform Incrementality Experiment (PIE): An Industry Framework for Cross-Platform Incrementality Testing". Interactive Advertising Bureau. 2023. https://www.iab.com/insights/platform-incrementality-experiment/.

- Jin, Yuxue; Wang, Yueqing; Sun, Yunting; Chan, David; Koehler, Jim (2017). Bayesian Methods for Media Mix Modeling with Carryover and Shape Effects (Technical report). Google Research.

- "Marketing Mix Modeling (MMM) Fundamentals: A Modern Guide". Haus. https://www.haus.io/blog/marketing-mix-modeling-mmm-fundamentals-a-modern-guide.

- Peters, Jonas; Janzing, Dominik; Schölkopf, Bernhard (2017). Elements of Causal Inference: Foundations and Learning Algorithms. MIT Press. ISBN 978-0-262-03731-0.

- Hoyer, Patrik O., et al. (2008). "Nonlinear causal discovery with additive noise models". Advances in Neural Information Processing Systems (NIPS) 21.

- Shimizu, Shohei, et al. (2011). "DirectLiNGAM: A direct method for learning a linear non-Gaussian structural equation model". The Journal of Machine Learning Research 12: 1225–1248. http://www.jmlr.org/papers/volume12/shimizu11a/shimizu11a.pdf. Retrieved 27 July 2019.

- Zhang, Kun; Hyvärinen, Aapo (2009). "On the identifiability of the post-nonlinear causal model". Proceedings of the Twenty-Fifth Conference on Uncertainty in Artificial Intelligence. AUAI Press.

- Mooij, Joris M., et al. (2010). "Probabilistic latent variable models for distinguishing between cause and effect". Advances in Neural Information Processing Systems (NIPS).

- Lopez-Paz, David, et al. (2015). "Towards a learning theory of cause-effect inference". Proceedings of the International Conference on Machine Learning (ICML).

- Achen, Christopher H. (June 2002). "Toward a new political methodology: Microfoundations and ART". Annual Review of Political Science 5 (1): 423–450. doi:10.1146/annurev.polisci.5.112801.080943. ISSN 1094-2939.

- Vandenbroucke, Jan P.; Broadbent, Alex; Pearce, Neil (December 2016). "Causality and causal inference in epidemiology: the need for a pluralistic approach". International Journal of Epidemiology 45 (6): 1776–1786. doi:10.1093/ije/dyv341. ISSN 0300-5771. PMID 26800751.

- Greenland, Sander (January 2017). "For and Against Methodologies: Some Perspectives on Recent Causal and Statistical Inference Debates". European Journal of Epidemiology 32 (1): 3–20. doi:10.1007/s10654-017-0230-6. ISSN 1573-7284. PMID 28220361.

- Dominus, Susan (18 October 2017). "When the Revolution Came for Amy Cuddy". The New York Times. ISSN 0362-4331. https://www.nytimes.com/2017/10/18/magazine/when-the-revolution-came-for-amy-cuddy.html.

- "The Statistical Crisis in Science". American Scientist. 6 February 2017. https://www.americanscientist.org/article/the-statistical-crisis-in-science.

Bibliography

- Hernán, Miguel A.; Robins, James M. (21 January 2020). Causal Inference: What If. Boca Raton: Chapman & Hall/CRC. https://www.hsph.harvard.edu/miguel-hernan/causal-inference-book/.

External links

- NIPS 2013 Workshop on Causality

- Causal inference at the Max Planck Institute for Intelligent Systems Tübingen
