Global illumination

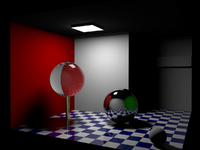

Global illumination (GI), or indirect illumination, refers to a group of algorithms used in 3D computer graphics to add more realistic lighting to 3D scenes. Such algorithms take into account not only the light that comes directly from a light source (direct illumination), but also subsequent "bounces" where light rays are reflected by other surfaces in the scene (indirect illumination).

The term "global illumination" was first used by Turner Whitted in his paper "An improved illumination model for shaded display",[1] to differentiate between illumination calculations at a local scale (using geometric information directly, such as in Phong shading), a microscopic scale (extending local geometry with microfacet detail), and a global scale, including not only the geometry itself but also the visibility of every other object in the scene.[2] Theoretically, reflections, refractions, transparency, and shadows are all examples of global illumination, because when simulating them, one object affects the rendering of another (as opposed to an object being affected only by a direct source of light). In practice, however, only the simulation of diffuse inter-reflection or caustics is called global illumination, especially in real-time settings.

Algorithms

Global illumination is a key aspect of the realism of a 3D scene. Naive 3D lighting takes into account only direct light, meaning light that travels from a light source, reflects off a single surface, and then reaches the virtual camera. Areas in shadow therefore appear completely dark, because no light that has bounced off other surfaces ever reaches them. As this is not what occurs in real life, the resulting image is perceived as incomplete. Applying full global illumination adds the missing effects that make an image look more natural. However, global illumination is computationally more expensive and consequently much slower to generate.

Most algorithms, especially those focusing on real-time solutions, model only diffuse inter-reflection, which is a very important part of global illumination; however, some also model indirect specular reflections, refraction, and indirect shadowing, which allows for a closer approximation of reality and produces more appealing images. The algorithms used to calculate the distribution of light energy between surfaces of a scene are closely related to heat transfer simulations performed using finite-element methods in engineering design.

Radiosity, ray tracing, beam tracing, cone tracing, path tracing, Metropolis light transport and photon mapping are all examples of algorithms used for global illumination in offline settings, some of which can be combined to trade accuracy against speed, depending on the implementation.

Real-time applications

Accurately computing global illumination in real time remains difficult.[3] At one extreme, the diffuse inter-reflection component of global illumination is sometimes approximated by an "ambient" term in the lighting equation, which is also called "ambient lighting" or "ambient color" in 3D software. Though this method is one of the cheapest ways to simulate indirect lighting, when used alone it does not provide an adequately realistic effect. Ambient lighting is known to "flatten" shadows in 3D scenes, making the overall visual effect more bland. Beyond ambient lighting, techniques that trace the path of light accurately have historically been either too slow for consumer hardware or limited to static, precomputed environments. This is problematic, as most applications allow user input that can change the surroundings, and precalculation steps may impose constraints on artists. Consequently, research has been dedicated to finding a balance between adequate performance, accurate visual results, and interactivity.
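The following Python sketch illustrates how a constant ambient term is commonly added to a Lambertian direct-lighting model, and why it flattens shadows. The function and parameter names are hypothetical, colors are reduced to a single channel, and the 1/π factor of the Lambertian BRDF is assumed to be folded into the light intensity.

```python
def shade(albedo, normal, light_dir, light_intensity, ambient, in_shadow):
    """Diffuse shading for one color channel with a constant ambient term.

    Direct light uses the Lambertian cosine term (the 1/pi BRDF factor is
    assumed to be folded into light_intensity); all indirect light is
    replaced by the single constant `ambient`."""
    n_dot_l = sum(n * l for n, l in zip(normal, light_dir))
    direct = 0.0 if in_shadow else max(0.0, n_dot_l) * light_intensity
    return albedo * (ambient + direct)


up = (0.0, 0.0, 1.0)
side = (1.0, 0.0, 0.0)
light = (0.0, 0.0, 1.0)  # unit vector pointing toward the light

# With ambient = 0 (direct light only), shadowed points are completely black.
print(shade(0.8, up, light, 1.0, ambient=0.0, in_shadow=True))
# With ambient > 0, every shadowed point gets the same value regardless of its
# normal or its surroundings, which is why shadows look "flat".
print(shade(0.8, up, light, 1.0, ambient=0.1, in_shadow=True))
print(shade(0.8, side, light, 1.0, ambient=0.1, in_shadow=True))
```

Because the ambient value does not depend on geometry or nearby surfaces, it cannot reproduce effects such as color bleeding or darkening in corners, which is what the techniques below try to recover.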

Starting with Nvidia's RTX 20 series, consumer graphics hardware has included dedicated hardware acceleration that allows ray tracing computations to be performed in real time. Applications can use this acceleration to provide both precise lighting results and lighting that responds dynamically to changes in the scene. Games that have taken advantage of this capability include Cyberpunk 2077, Indiana Jones and the Great Circle, and Alan Wake 2, among others.[4][5]

For an overview of the current state of real-time global illumination, see [6] or [7].

Procedure

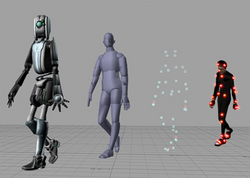

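Algorithms that simulate global illumination are numerical approximations of the rendering equation. Well-known algorithms for computing global illumination include path tracing, photon mapping and radiosity. Writing the rendering equation in operator form as $L = L^e + T L$, where $L$ is the radiance, $L^e$ the emitted radiance, $T$ the light transport operator and $I$ the identity operator, the following approaches can be distinguished:

- Inversion: $L = (I - T)^{-1} L^e$

  - Not applied in practice

- Expansion: $L = \sum_{i=0}^{\infty} T^i L^e$

  - Bi-directional approach: Photon mapping + Distributed ray tracing, Bi-directional path tracing, Metropolis light transport

- Iteration: $L^{(n)} = L^e + T L^{(n-1)}$

  - Radiosity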

A full overview can be found in [8].
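To make the inversion, expansion and iteration approaches concrete, the following Python sketch applies them to a tiny discretized scene. It assumes a radiosity-style setup in which radiance is a vector of three patch values and the transport operator is a 3×3 matrix with entries $T_{ij} = \rho_i F_{ij}$ (reflectance times form factor), so that the operator form $L = L^e + T L$ becomes a linear system. The scene values and function names are hypothetical.

```python
def mat_vec(m, v):
    """Multiply matrix m (list of rows) by vector v."""
    return [sum(mij * vj for mij, vj in zip(row, v)) for row in m]


def solve_inversion(t, le):
    """Inversion: solve (I - T) L = Le directly by Gaussian elimination."""
    n = len(le)
    # Augmented matrix [I - T | Le].
    a = [[(1.0 if i == j else 0.0) - t[i][j] for j in range(n)] + [le[i]]
         for i in range(n)]
    for col in range(n):
        # Partial pivoting for numerical stability.
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(col + 1, n):
            factor = a[r][col] / a[col][col]
            for c in range(col, n + 1):
                a[r][c] -= factor * a[col][c]
    # Back substitution.
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (a[i][n] - sum(a[i][j] * x[j] for j in range(i + 1, n))) / a[i][i]
    return x


def solve_expansion(t, le, bounces):
    """Expansion: truncated Neumann series sum_{i=0}^{bounces} T^i Le."""
    term = list(le)                  # T^0 Le: emitted light only
    total = list(le)
    for _ in range(bounces):
        term = mat_vec(t, term)      # one more bounce: T^i Le
        total = [s + x for s, x in zip(total, term)]
    return total


def solve_iteration(t, le, steps):
    """Iteration: L^(n) = Le + T L^(n-1), starting from L^(0) = Le."""
    radiance = list(le)
    for _ in range(steps):
        radiance = [e + x for e, x in zip(le, mat_vec(t, radiance))]
    return radiance


# Hypothetical 3-patch scene: T[i][j] = reflectance_i * form_factor_ij.
REFLECTANCE = [0.5, 0.7, 0.3]
FORM_FACTORS = [[0.0, 0.4, 0.3],
                [0.4, 0.0, 0.3],
                [0.3, 0.3, 0.0]]
T = [[REFLECTANCE[i] * FORM_FACTORS[i][j] for j in range(3)] for i in range(3)]
LE = [1.0, 0.0, 0.0]  # only patch 0 emits light

print(solve_inversion(T, LE))
print(solve_expansion(T, LE, bounces=50))
print(solve_iteration(T, LE, steps=50))
```

With the starting value $L^{(0)} = L^e$, the $n$-th iterate equals the expansion truncated after $n$ bounces, so each iteration step can be read as adding one more bounce of light; both converge here because every row of $T$ sums to less than one. Practical radiosity solvers often use Gauss-Seidel or progressive refinement rather than this plain update, and direct inversion is avoided because the matrix for a real scene is far too large.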

List of methods

| Method | Application | Description/Notes |

|---|---|---|

| Ray tracing | Offline/Real-time | The process of tracing a set of virtual paths (rays) from one point in space to another, testing for intersections with geometry. Ray tracing is useful because it can imitate the way light physically travels in the real world. Several variants, such as distributed ray tracing, cone tracing, and beam tracing, address problems related to sampling, aliasing, and soft shadows. |

| Path tracing | Offline | An extension of ray tracing that uses Monte Carlo sampling to approximate a solution to the rendering equation. The basic estimator is unbiased: its expected value is the correct solution, and its error appears as noise that decreases as more samples are taken. Variants include bi-directional path tracing and energy redistribution path tracing.[9] |

| Photon mapping | Offline | Photons are traced from each light source and stored in the scene, then rays traced from the camera are connected to them by estimating radiance from nearby stored photons. The method is biased but consistent, meaning it converges to the correct result as the number of photons increases. Variants include progressive photon mapping and stochastic progressive photon mapping.[10] |

| Radiosity | Offline | A finite element approach to the rendering equation that divides the scene into patches and computes the exchange of light energy between every pair of patches. It is well suited to precomputation, but assumes that all surfaces are perfectly diffuse. Improved versions include instant radiosity[11] and bidirectional instant radiosity,[12] both of which use virtual point lights (VPLs) instead of patches. |

| Lightcuts | Offline | A method of improving performance when computing the contribution of many light sources at a surface, by clustering lights into a light tree and choosing an appropriate "cut" through the tree for each point.[13] Enhanced variants include multidimensional lightcuts and bidirectional lightcuts. |

| Point based global illumination | Offline | Point based global illumination (PBGI) discretizes the scene into a point cloud in which each point contains radiance information for the surface it lies on.[14] It has been used extensively in animated films because it is relatively fast and noise-free compared with per-pixel methods such as path tracing.[15] |

| Metropolis light transport | Offline | Builds upon bi-directional path tracing using the Metropolis-Hastings algorithm, exploring paths adjacent to ones that have already been found. It remains unbiased, while usually converging faster than path tracing. An extension, multiplexed Metropolis light transport, was later introduced.[16] |

| Image-based lighting | Real-time | Image-based lighting (IBL) can refer to a number of different effects. Most commonly, it refers to approximating global illumination with high-dynamic-range images (HDRIs) used as environment maps, which surround the entire scene and light surfaces according to their normal direction. The term has also been used for image proxies, or image-based reflections, which represent surfaces as flat image planes to improve the appearance of reflections. They have been used in games such as Remember Me[17] and Thief (2014),[18] as well as the Unreal Engine 3 Samaritan demo.[19] |

| Ambient occlusion | Real-time | An approximate solution to global illumination that darkens the areas of a scene most likely to be occluded by other objects. It describes how "exposed" a point in the scene is to incoming light, and improves realism at a low cost compared with computing full indirect lighting. The effect can also be reproduced by adapting other methods, such as VXGI or SSGI. |

| Irradiance volumes | Real-time | Irradiance volumes store precomputed global illumination results at evenly spaced points in 3D space (probes) for rendering static scenes.[20] Dynamic objects can then be lit based on their position inside the volume and their normal direction. The technique initially used cubemaps to store the irradiance at each probe, and was later improved by compressing this data into spherical harmonics (SH).[21] |

| Light propagation volumes | Real-time | The light propagation volume (LPV) approximates global illumination in real time, using lattices and spherical harmonics to represent the spatial and angular distribution of light in the scene.[22] The technique was later extended to include approximate occlusion and specular indirect lighting, as well as greater coverage through nesting multiple lattices of decreasing resolution.[23] It was used in earlier versions of CryEngine and Unreal Engine.[24] |

| Voxel-based solutions | Real-time | Voxel-based techniques discretize the scene into a volume to simplify lighting calculations. Depending on the solution, the voxels may store only geometric occupancy or also information such as the material properties of the underlying surfaces. Examples include compressed radiance caching volumes,[25] voxel cone tracing,[26] sparse voxel octrees,[27] and VXGI.[28] Voxel cone tracing was used and improved in The Tomorrow Children, where the technique provided the entirety of the lighting in the game.[29] |

| Precomputed probe solutions | Real-time | Extensions of the irradiance volume that simulate global illumination of dynamic light sources by relighting the scene based on non-lighting information, such as geometry or visibility, precomputed in a "baking" stage beforehand. Examples of this technique include deferred radiance transfer volumes[30] and a successor used in Tom Clancy's The Division.[31] |

| Screen-space global illumination | Real-time | Screen-space global illumination (SSGI) methods use the information visible on screen, usually taken from an existing G-buffer, to approximate indirect lighting. Variants exist for ambient occlusion (SSAO), for which the technique was initially developed, and for specular reflections (SSR). The most common approach is screen-space ray marching.[32] Additional techniques include screen space directional occlusion,[33] "deep" buffers,[34][35] and horizon-based visibility bitmasks.[36] |

| Dynamic Diffuse Global Illumination | Real-time | Unlike precomputed probe solutions, Dynamic Diffuse Global Illumination (DDGI) uses probes to calculate both lighting and geometric information in real time, using hardware-accelerated ray tracing to approximate geometry.[37] An offshoot of this technique uses SDF primitives to represent a scene and reflective shadow maps to sample lights, improving on performance by removing the hardware requirement and better approximating occlusions, at the cost of manual setup.[38] |

| Global Illumination Based on Surfels | Real-time | A technique created by Electronic Arts' SEED group that discretizes the scene with surface elements, "surfels", in real time, and uses them to accumulate the result of light calculations done through hardware ray tracing.[39] It borrows from the ideas of point based global illumination. It is currently integrated into the Frostbite engine. |

| Lumen | Real-time | A full global illumination solution that relies on an advanced screen-space radiance caching method, alongside an SDF volume representation of objects, to provide accurate and stable indirect lighting, shadowing, and reflections within a fully dynamic scene.[40] Screen probes use importance sampling techniques to intelligently distribute rays, and distant lighting uses a world space probe fallback.[41] It is integrated into Unreal Engine 5. |
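The path tracing and Metropolis light transport entries in the table above rely on Monte Carlo estimation of the integrals in the rendering equation. The Python sketch below shows only that core estimator for a single bounce, not a full path tracer: it estimates the irradiance at a point under a hypothetical sky whose radiance depends only on elevation, using uniform and cosine-weighted hemisphere sampling. All function names are illustrative.

```python
import math
import random


def sample_uniform_hemisphere(rng):
    """Draw a direction uniformly over the hemisphere; return (cos_theta, pdf)."""
    # For uniform hemisphere sampling, cos(theta) is itself uniform on [0, 1).
    cos_theta = rng.random()
    return cos_theta, 1.0 / (2.0 * math.pi)


def sample_cosine_hemisphere(rng):
    """Draw a cosine-weighted direction (Malley's method); return (cos_theta, pdf)."""
    # Uniform point on the unit disk with r^2 = u, projected up: cos(theta) = sqrt(1 - u).
    u = rng.random()
    cos_theta = math.sqrt(1.0 - u)
    return cos_theta, cos_theta / math.pi


def estimate_irradiance(radiance, sampler, n, seed=0):
    """Monte Carlo estimate of E = integral over the hemisphere of L(w) cos(theta) dw.

    `radiance` maps cos(theta) to incoming radiance (a hypothetical sky that
    depends only on elevation, so the azimuth never needs to be sampled).
    Averaging f / pdf over the samples gives an unbiased estimator."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        cos_theta, pdf = sampler(rng)
        total += radiance(cos_theta) * cos_theta / pdf
    return total / n


def constant_sky(cos_theta):
    return 1.0  # exact irradiance: pi


def zenith_bright_sky(cos_theta):
    return cos_theta  # exact irradiance: 2 * pi / 3


for sky in (constant_sky, zenith_bright_sky):
    print(sky.__name__,
          estimate_irradiance(sky, sample_uniform_hemisphere, 100_000),
          estimate_irradiance(sky, sample_cosine_hemisphere, 100_000))
```

Both samplers give unbiased estimates; they differ only in variance. With cosine-weighted sampling and a constant sky, every sample contributes pi up to rounding, which illustrates importance sampling: choosing a pdf shaped like the integrand reduces noise without introducing bias.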
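The irradiance volume entry in the table above describes lighting dynamic objects from their position inside a grid of probes. The Python sketch below shows only the positional part of that lookup, as trilinear interpolation between the eight probes surrounding a point. It is a simplification under stated assumptions: each probe holds a single hypothetical scalar irradiance value, whereas real implementations store directional data (such as cubemaps or spherical harmonics) and also evaluate it with the surface normal.

```python
def sample_irradiance_volume(probes, origin, spacing, p):
    """Trilinearly interpolate a scalar irradiance value from a regular probe grid.

    probes[i][j][k] is the value stored at origin + spacing * (i, j, k).
    Each axis must have at least two probes; points outside the grid are
    clamped to its boundary."""
    dims = (len(probes), len(probes[0]), len(probes[0][0]))
    base, frac = [], []
    for axis in range(3):
        # Continuous grid coordinate along this axis, clamped to [0, n - 1].
        g = (p[axis] - origin[axis]) / spacing
        g = min(max(g, 0.0), dims[axis] - 1.0)
        i = min(int(g), dims[axis] - 2)  # index of the lower corner probe
        base.append(i)
        frac.append(g - i)
    result = 0.0
    # Blend the 8 surrounding probes with trilinear weights.
    for di in (0, 1):
        for dj in (0, 1):
            for dk in (0, 1):
                w = ((frac[0] if di else 1.0 - frac[0])
                     * (frac[1] if dj else 1.0 - frac[1])
                     * (frac[2] if dk else 1.0 - frac[2]))
                result += w * probes[base[0] + di][base[1] + dj][base[2] + dk]
    return result


# Hypothetical 3x3x3 grid with 1-unit spacing; the values grow linearly with
# position, so trilinear interpolation reproduces them exactly.
PROBES = [[[float(i + j + k) for k in range(3)] for j in range(3)] for i in range(3)]
print(sample_irradiance_volume(PROBES, (0.0, 0.0, 0.0), 1.0, (0.5, 1.25, 2.0)))  # 3.75
```

Clamping to the grid keeps objects that wander slightly outside the volume lit by the nearest boundary probes instead of reading out of bounds.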

See also

- Rendering equation

- Bias of an estimator

- Bidirectional scattering distribution function

- Consistent estimator

- Unbiased rendering

- Category:Global illumination software

References

- ↑ Whitted, Turner (1980-06-01). "An improved illumination model for shaded display". Commun. ACM 23 (6): 343–349. doi:10.1145/358876.358882. ISSN 0001-0782. https://dl.acm.org/doi/10.1145/358876.358882.

- ↑ Whitted, Turner (2020-01-01). "Origins of Global Illumination". IEEE Computer Graphics and Applications 40 (1): 20–27. doi:10.1109/MCG.2019.2957688. ISSN 0272-1716. PMID 31944940. Bibcode: 2020ICGA...40a..20W.

- ↑ Kurachi, Noriko (2011). The Magic of Computer Graphics. CRC Press. p. 339. ISBN 9781439873571. https://play.google.com/store/books/details?id=YjLOBQAAQBAJ. Retrieved 24 September 2017.

- ↑ "List of games that support ray tracing". https://www.pcgamingwiki.com/wiki/List_of_games_that_support_ray_tracing.

- ↑ Burnes, Andrew (2025-01-30). "NVIDIA DLSS & GeForce RTX: List Of All Games, Engines And Applications Featuring GeForce RTX-Powered Technology And Features" (in en-us). https://www.nvidia.com/en-us/geforce/news/nvidia-rtx-games-engines-apps/.

- ↑ Toth, Benoit (2022-03-21). "The state-of-art of Dynamic Global Illumination in video-games". Cnam-Enjmin. https://enjmin.cnam.fr/recherche/le-blog/l-etat-d-avancement-de-la-dynamique-d-illumination-globale-dans-les-jeux-video-par-benoit-toth-1322932.kjsp?RH=1570198561448.

- ↑ Tuo, Chen; Ziheng, Zhou; Zhenyu, Wu; Songhai, Zhang (2025-03-12). "Overview of Real-Time Global Illumination Rendering Methods without Pre-Processing" (in zh, en). Journal of Computer-Aided Design & Computer Graphics 37 (7): 1101–1115. doi:10.3724/SP.J.1089.2024-00683. ISSN 1003-9775. https://www.jcad.cn/en/article/doi/10.3724/SP.J.1089.2024-00683.

- ↑ Dutre, Philip; Bekaert, Philippe; Bala, Kavita (2006-09-25). Advanced Global Illumination (2nd ed.). AK Peters. ISBN 9781568813073.

- ↑ Cline, D.; Talbot, J.; Egbert, P. (2005). "Energy redistribution path tracing". ACM Transactions on Graphics 24 (3): 1186–95. doi:10.1145/1073204.1073330.

- ↑ "Toshiya Hachisuka at UTokyo". ci.i.u-tokyo.ac.jp. http://www.ci.i.u-tokyo.ac.jp/~hachisuka/.

- ↑ "Instant Radiosity: Keller (SIGGRAPH 1997)". https://www.cs.cornell.edu/courses/cs6630/2012sp/slides/Boyadzhiev-Matzen-InstantRadiosity.pdf.

- ↑ Segovia, B.; Iehl, J.C.; Mitanchey, R.; Péroche, B. (2006). "Bidirectional instant radiosity". Rendering Techniques. Eurographics Association. pp. 389–397. http://artis.imag.fr/Projets/Cyber-II/Publications/SIMP06a.pdf.

- ↑ Walter, Bruce; Fernandez, Sebastian; Arbree, Adam; Bala, Kavita; Donikian, Michael; Greenberg, Donald P. (1 July 2005). "Lightcuts". ACM Transactions on Graphics 24 (3): 1098–1107. doi:10.1145/1073204.1073318.

- ↑ "Point-based Global Illumination". https://perso.telecom-paristech.fr/boubek/PBGI/#topics.

- ↑ Daemen, Karsten (November 14, 2012). "Point Based Global Illumination: An introduction". KU Leuven. http://www.karstendaemen.com/thesis/files/intro_pbgi.pdf.

- ↑ Hachisuka, T.; Kaplanyan, A.S.; Dachsbacher, C. (2014). "Multiplexed metropolis light transport". ACM Transactions on Graphics 33 (4): 1–10. doi:10.1145/2601097.2601138. http://www.ci.i.u-tokyo.ac.jp/~hachisuka/mmlt.pdf.

- ↑ Lagarde, Sébastien; Zanuttini, Antoine (2013). "Practical Planar Reflections using Cubemaps and Image Proxies". In Engel, Wolfgang F. (ed.). GPU Pro 4: Advanced Rendering Techniques (1st ed.). Boca Raton: CRC Press, Taylor & Francis Group. pp. 51–68. ISBN 978-1-4665-6743-6.

- ↑ Sikachev, Peter; Delmont, Samuel; Doyon, Uriel; Bucci, Jean-Normand (2016). "Next-Generation Rendering in Thief". In Engel, Wolfgang F. (ed.). GPU Pro 6: Advanced Rendering Techniques. Boca Raton: CRC Press/Taylor Francis Group. pp. 65–90. ISBN 978-1-4822-6461-6.

- ↑ "Image Based Reflections". https://docs.unrealengine.com/udk/Three/ImageBasedReflections.html.

- ↑ Greger, Gene; Shirley, Peter; Hubbard, Philip M.; Greenberg, Donald P. (1998-03-01). "The Irradiance Volume". IEEE Comput. Graph. Appl. 18 (2): 32–43. doi:10.1109/38.656788. ISSN 0272-1716. Bibcode: 1998ICGA...18b..32G. https://doi.org/10.1109/38.656788.

- ↑ Oat, Christopher (2005). "Irradiance Volumes for Games". https://www.chrisoat.com/papers/Oat_GDC2005_IrradianceVolumesForGames.pdf.

- ↑ Kaplanyan, Anton (2009-08-03). "Light Propagation Volumes in CryEngine 3". https://advances.realtimerendering.com/s2009/Light_Propagation_Volumes.pdf.

- ↑ Kaplanyan, Anton; Dachsbacher, Carsten (2010-02-19). "Cascaded light propagation volumes for real-time indirect illumination". Proceedings of the 2010 ACM SIGGRAPH Symposium on Interactive 3D Graphics and Games. doi:10.1145/1730804.1730821. ISBN 9781605589398. http://www.vis.uni-stuttgart.de/~dachsbcn/download/lpv.pdf.

- ↑ "Light Propagation Volumes | Unreal Engine 4.27 Documentation | Epic Developer Community" (in en-us). https://dev.epicgames.com/documentation/en-us/unreal-engine/lighting-the-environment-in-unreal-engine.

- ↑ Vardis, Kostas; Papaioannou, Georgios; Gkaravelis, Anastasios (2014-12-16). Stamminger, Marc; McGuire, Morgan. eds. "Real-time Radiance Caching using Chrominance Compression". The Journal of Computer Graphics Techniques 3 (4): 111–131. https://jcgt.org/published/0003/04/06/.

- ↑ Crassin, Cyril; Neyret, Fabrice; Sainz, Miguel; Green, Simon; Eisemann, Elmar (2011-08-07). "Interactive indirect illumination using voxel-based cone tracing: An insight". ACM SIGGRAPH 2011 Talks. SIGGRAPH '11. New York, NY, USA: Association for Computing Machinery. pp. 1. doi:10.1145/2037826.2037853. ISBN 978-1-4503-0974-5. https://doi.org/10.1145/2037826.2037853.

- ↑ "Voxel-Based Global Illumination (SVOGI)". https://www.cryengine.com/docs/static/engines/cryengine-5/categories/23756816/pages/25535599.

- ↑ "VXGI | GeForce". geforce.com. 2015-04-08. http://www.geforce.com/hardware/technology/vxgi.

- ↑ McLaren, James (2014-09-03). "Cascaded Voxel Cone Tracing in The Tomorrow Children". https://fumufumu.q-games.com/archives/Cascaded_Voxel_Cone_Tracing_final.pdf.

- ↑ Gilabert, Mickael; Stefanov, Nikolay (2012-03-09). "Deferred Radiance Transfer Volumes: Global Illumination in Far Cry 3". https://gdcvault.com/play/1015326/Deferred-Radiance-Transfer-Volumes-Global.

- ↑ Stefanov, Nikolay (2016-03-16). "Global Illumination in 'Tom Clancy's The Division'". https://gdcvault.com/play/1023273/Global-Illumination-in-Tom-Clancy.

- ↑ Sachdeva, Shubham (2022-04-22). "Dynamic, Noise Free, Screen Space Diffuse Global Illumination". https://gamehacker1999.github.io/posts/SSGI/.

- ↑ Ritschel, Tobias; Grosch, Thorsten; Seidel, Hans-Peter (2009-02-27). "Approximating dynamic global illumination in image space". Proceedings of the 2009 symposium on Interactive 3D graphics and games. I3D '09. New York, NY, USA: Association for Computing Machinery. pp. 75–82. doi:10.1145/1507149.1507161. ISBN 978-1-60558-429-4. https://doi.org/10.1145/1507149.1507161.

- ↑ Mara, Michael; McGuire, Morgan; Nowrouzezahrai, Derek; Luebke, David (2016-06-24). "Deep G-Buffers for Stable Global Illumination Approximation". Proceedings of the High Performance Graphics 2016: 11. https://casual-effects.com/research/Mara2016DeepGBuffer/index.html#images.

- ↑ Nalbach, Oliver; Ritschel, Tobias; Seidel, Hans-Peter (2014-03-14). "Deep screen space". Proceedings of the 18th meeting of the ACM SIGGRAPH Symposium on Interactive 3D Graphics and Games. I3D '14. New York, NY, USA: Association for Computing Machinery. pp. 79–86. doi:10.1145/2556700.2556708. ISBN 978-1-4503-2717-6. https://doi.org/10.1145/2556700.2556708.

- ↑ Therrien, Oliver; Levesque, Yannick; Gilet, Guillaume (2023). "Screen Space Indirect Lighting with Visibility Bitmask". The Visual Computer 39 (11): 5925–5936. doi:10.1007/s00371-022-02703-y. https://link.springer.com/article/10.1007/s00371-022-02703-y.

- ↑ Majercik, Zander; Guertin, Jean-Philippe; Nowrouzezahrai, Derek; McGuire, Morgan (2019-06-05). Willmott, Andrer; Olano, Marc. eds. "Dynamic Diffuse Global Illumination with Ray-Traced Irradiance Fields". Journal of Computer Graphics Techniques 8 (2): 1–30. ISSN 2331-7418. https://jcgt.org/published/0008/02/01/.

- ↑ Hu, Jinkai; K. Yip, Milo; Elias Alonso, Guillermo; Shi-hao, Gu; Tang, Xiangjun; Xiaogang, Jin (2020). "Signed Distance Fields Dynamic Diffuse Global Illumination". arXiv:2007.14394 [cs.GR].

- ↑ Brinck, Andreas; Bei, Xiangshun; Halen, Henrik; Hayward, Kyle (2021-08-11). "Global Illumination Based on Surfels". SIGGRAPH. http://advances.realtimerendering.com/s2021/index.html.

- ↑ "Lumen Technical Details in Unreal Engine | Unreal Engine 5.7 Documentation | Epic Developer Community" (in en-us). https://dev.epicgames.com/documentation/en-us/unreal-engine/lumen-technical-details-in-unreal-engine.

- ↑ Wright, Daniel (2021-08-11). "Radiance Caching for Real-Time Global Illumination". SIGGRAPH. https://advances.realtimerendering.com/s2021/index.html.

External links

- Video demonstrating global illumination and the ambient color effect

- Collection of real-time GI techniques

- Real-time GI demos – survey of practical real-time GI techniques as a list of executable demos

- kuleuven - This page contains the Global Illumination Compendium, an effort to bring together most of the useful formulas and equations for global illumination algorithms in computer graphics.

- Theory and practical implementation of Global Illumination using Monte Carlo Path Tracing.
