Isolation forest

A major contributor to this article appears to have a close connection with its subject. (October 2023) (Learn how and when to remove this template message) |

Isolation Forest is an algorithm for data anomaly detection using binary trees. It was developed by Fei Tony Liu in 2008.[1] It has a linear time complexity and a low memory use, which works well for high-volume data.[2][3] It is based on the assumption that because anomalies are few and different from other data, they can be isolated using few partitions. Like decision tree algorithms, it does not perform density estimation. Unlike decision tree algorithms, it uses only path length to output an anomaly score, and does not use leaf node statistics of class distribution or target value.

Isolation Forest is fast because it splits the data space, randomly selecting an attribute and split point. The anomaly score is inversely associated with the path-length because anomalies need fewer splits to be isolated, because they are few and different.

History

The Isolation Forest (iForest) algorithm was initially proposed by Fei Tony Liu, Kai Ming Ting and Zhi-Hua Zhou in 2008.[2] In 2012 the same authors showed that iForest has linear time complexity, a small memory requirement, and is applicable to high-dimensional data.[3] In 2010, an extension of the algorithm, SCiforest, was published to address clustered and axis-paralleled anomalies.[4]

Isolation trees

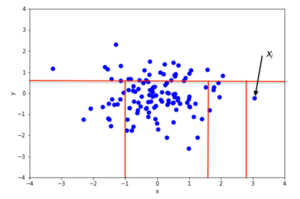

The premise of the Isolation Forest algorithm is that anomalous data points are easier to separate from the rest of the sample. In order to isolate a data point, the algorithm recursively generates partitions on the sample by randomly selecting an attribute and then randomly selecting a split value between the minimum and maximum values allowed for that attribute.

An example of random partitioning in a 2D dataset of normally distributed points is shown in the first figure for a non-anomalous point and in the second one for a point that is more likely to be an anomaly. It is apparent from the pictures how anomalies require fewer random partitions to be isolated, compared to normal points.

Recursive partitioning can be represented by a tree structure named Isolation Tree, while the number of partitions required to isolate a point can be interpreted as the length of the path, within the tree, to reach a terminating node starting from the root. For example, the path length of point in the first figure is greater than the path length of in the second figure.

Let be a set of d-dimensional points and . An Isolation Tree (iTree) is defined as a data structure with the following properties:

- for each node in the Tree, is either an external-node with no child, or an internal-node with one “test” and exactly two child nodes ( and )

- a test at node consists of an attribute and a split value such that the test determines the traversal of a data point to either or .

In order to build an iTree, the algorithm recursively divides by randomly selecting an attribute and a split value , until either

- the node has only one instance, or

- all data at the node have the same values.

When the iTree is fully grown, each point in is isolated at one of the external nodes. Intuitively, the anomalous points are those (easier to isolate, hence) with the smaller path length in the tree, where the path length of point is defined as the number of edges traverses from the root node to get to an external node.

A probabilistic explanation of iTree is provided in the original iForest paper.[2]

Anomaly detection

Anomaly detection with Isolation Forest is done as follows:[4]

- Use the training dataset to build some number of iTrees

- For each data point in the test set:

- Pass it through all the iTrees, counting the path length for each tree

- Assign an “anomaly score” to the instance

- Label the point as “anomaly” if its score is greater than a predefined threshold, which depends on the domain

Anomaly score

The algorithm for computing the anomaly score of a data point is based on the observation that the structure of iTrees is equivalent to that of Binary Search Trees (BST): a termination to an external node of the iTree corresponds to an unsuccessful search in the BST.[4] Therefore, the estimation of average for external node terminations is the same as that of the unsuccessful searches in BST, that is[5]

where is the test set size, is the sample set size and is the harmonic number, which can be estimated by , where is the Euler-Mascheroni constant.

Above, is the average given , so we can use it to normalize to get an estimate of the anomaly score for a given instance x:

where is the average value of from a collection of iTrees. For any data point :

- if is close to then is very likely an anomaly

- if is smaller than then is likely normal

- if all points in the sample score around , then likely they are all normal

Application of isolation forest for credit card fraud detection (anomaly)

The Isolation Forest algorithm has shown its effectiveness in spotting anomalies in data sets like uncovering credit card fraud instances among transactions, by European cardholders with an unbalanced dataset where it can distinguish fraudulent activities from legitimate ones by identifying rare patterns that show notable differences.[6]

Dataset and preprocessing

In this research projects dataset, there are 284807 transactions recorded in total out of which only 492 are identified as fraudulent (0.172%). Due to this imbalance between authentic and fraudulent transactions detection of fraud becomes quite demanding; hence specialized metrics such as the Area Under the Precision Recall Curve (AUPRC) are essential for accurate evaluation rather, than relying solely on traditional accuracy measures.[6]

The dataset consists of PCA transformed features (from V1, to V28) well as the Time (time elapsed since the initial transaction) and Amount (transaction value). We processed the dataset using the steps:

Scaling : The Time and Amount features by utilizing StandardScaler to standardize their input range.[7]

Imputation: Missing data in the dataset were filled in by using the average of the corresponding columns, with SimpleImputer.[7]

Feature Selection : To enhance the model's effectiveness and accuracy in predictions and analysis tasks was to choose features with the kurtosis value for further examination because these particular features tend to have the most significant outliers that could potentially signal irregularities or anomalies within the data set used for modeling purposes. For training purposes specifically, a selection of the 10 features were identified and prioritized as key components, in refining the model's capabilities and enhancing its overall performance.[8]

Model training and hyperparameter tuning

The Isolation Forest model was specifically trained on transactions (Class=0) focusing on recognizing common behavioral patterns in data analysis tasks. The algorithm separates out instances by measuring the distance needed to isolate them within a collection of randomly divided trees.[6]

Hyperparameter Tuning:

A grid search was performed over the following hyperparameters

Contamination: Expected percentage of anomalies in the dataset, tested at values 0.01, 0.02, and 0.05 [8]

Max Features: Number of features to sample for each tree, tested at values 5, 8, and 10.[8]

The best configuration was found with:

- Contamination: 0.01

- Max Features: 10

Results and evaluation

The model was evaluated on a separate test set using accuracy, precision, recall, and the Area Under the Precision-Recall Curve (AUPRC). Below are the key results:

- Accuracy: 0.99

- Precision: 0.06

- Recall: 0.38

- AUPRC: 0.22

While the accuracy seems impressive at glance it mainly showcases the prevalence of regular transactions within the dataset. Precision and recall emphasize the challenges in detecting fraud because of the significant imbalance present. In assessing both precision and recall the AUPRC offers an evaluation by considering the balance between precision and recall.[6]

Visualizing results

1. Scatter Plot of Detected Anomalies

Key details

- Red Points: Represent the fraudulent transactions flagged by the model as anomalies. These points deviate significantly from the dense clusters of normal transactions, showcasing the algorithm's capability to isolate outliers effectively.

- Blue Points: Represent the normal transactions, which form the dense cluster at the center of the plot. These are transactions the model identified as not being anomalies.

- Interpretability: The use of two features with high kurtosis helps in visually understanding the model's decision-making process. In high-dimensional data like this (28 PCA-transformed features), reducing to two dimensions with the most extreme outliers provides an interpretable representation of the results.

Observation: The plot shows that many fraudulent transactions (red points) are located on the edges or far from the central cluster of normal transactions (blue points). However, some red points overlap with the blue cluster, indicating potential false positives or challenging cases for the model.

2. Precision-Recall Curve

Key Details:

- X-axis (Recall): Represents the proportion of true fraudulent transactions (positives) that were correctly identified by the model. A higher recall indicates fewer missed frauds.

- Y-axis(Precision): Represents the proportion of transactions flagged as fraud that were fraudulent. A higher precision indicates fewer false positives.

- AUPRC Value: The Area Under the Precision-Recall Curve (AUPRC) quantifies the model's performance. For this dataset, the AUPRC is 0.22, reflecting the difficulty of detecting fraud in a highly imbalanced dataset.

Observation:

- At high recall values, precision decreases sharply, indicating that as the model becomes more aggressive in identifying anomalies, it also flags more normal transactions as fraud, leading to a higher false-positive rate.

- Conversely, at higher precision values, recall decreases, meaning the model becomes more conservative and misses many fraudulent transactions.

Strengths of isolation forest

- Scalability: With a linear time complexity of O(n*logn), Isolation Forest is efficient for large datasets.[6]

- Unsupervised Nature: The model does not rely on labeled data, making it suitable for anomaly detection in various domains.[8]

- Feature-agnostic: The algorithm adapts to different datasets without making assumptions about feature distributions.[7]

Challenges

- Imbalanced Data: Low precision indicates that many normal transactions are incorrectly flagged as fraud, leading to false positives.[7]

- Sensitivity to Hyperparameters: Contamination rate and feature sampling heavily influence the model's performance, requiring extensive tuning.[8]

- Interpretability: While effective, the algorithm's outputs can be challenging to interpret without domain-specific knowledge.[6]

Future directions

- Combining Models: A hybrid approach, integrating supervised learning with Isolation Forest, may enhance performance by leveraging labeled data for known fraud cases.[7]

- Active Learning: Incorporating feedback loops to iteratively refine the model using misclassified transactions could improve recall and precision.[8]

- Feature Engineering: Adding transaction metadata, such as merchant location and transaction type, could further aid anomaly detection.[6]

Conclusion

The Isolation Forest algorithm provides a robust solution for anomaly detection, particularly in domains like fraud detection where anomalies are rare and challenging to identify. However, its reliance on hyperparameters and sensitivity to imbalanced data necessitate careful tuning and complementary techniques for optimal results.[6][8]

Properties

- Sub-sampling: Because iForest does not need to isolate normal instances, it can often ignore most of the training set. Thus, it works very well when the sampling size is kept small, unlike most other methods, which benefit from a large sample size.[2][3]

- Swamping: When normal instances are too close to anomalies, the number of partitions required to separate anomalies increases, a phenomenon known as swamping, which makes it more difficult for iForest to discriminate between anomalies and normal points. A main reason for swamping is the presence of too much data; so a possible solution is sub-sampling. Because iForest performs well under sub-sampling, reducing the number of points in the sample is also a good way to reduce the effect of swamping.[2] In real-time settings, the combination of small sequential data windows and sub-sampling has been shown to improve anomaly detection performance without compromising accuracy.[9]

- Masking: When there are many anomalies, some of them can aggregate in a dense, large cluster, making it more difficult to separate the single anomalies and so to identify them. This phenomenon is called “masking”, and as with swamping, is more likely when the sample is big and can be alleviated through sub-sampling.[2]

- High-dimensional data: A main limitation of standard, distance-based methods is their inefficiency in dealing with high dimensional data.[10] The main reason is that in a high-dimensional space, every point is equally sparse, so using a distance-based measure of separation is ineffective. Unfortunately, high-dimensional data also affects the detection performance of iForest, but the performance can be vastly improved by using feature selection, like Kurtosis, to reduce the dimensionality of the sample.[2][4]

- Normal instances only: iForest performs well even if the training set contains no anomalous points.[4] This is because iForest describes data distributions such that long tree paths correspond to normal data points. Thus, the presence of anomalies is irrelevant to detection performance.

Parameter selection

The performance of the Isolation Forest algorithm is highly dependent on the selection of its parameters. Properly tuning these parameters can significantly enhance the algorithm's ability to accurately identify anomalies. Understanding the role and impact of each parameter is crucial for optimizing the model's performance.[11]

The Isolation Forest algorithm involves several key parameters that influence its behavior and effectiveness. These parameters control various aspects of the tree construction process, the size of the sub-samples, and the thresholds for identifying anomalies.[11] Selecting appropriate parameters is the key to the performance of the Isolation Forest algorithm. Each of the parameters influences anomaly detection differently. Key parameters include:

Number of Trees : This parameter determines the number of trees in the Isolation Forest. A higher number of trees improves anomaly detection accuracy but increases computational costs. The optimal number balances resource availability with performance needs. For example, a smaller dataset might require fewer trees to save on computation, while larger datasets benefit from additional trees to capture more complexity.[2]

Subsample Size : The subsample size dictates the number of data points used to construct each tree. Smaller subsample sizes reduce computational complexity but may capture less variability in the data. For instance, a subsample size of 256 is commonly used, but the optimal value depends on dataset characteristics.[2]

Contamination Factor : This parameter estimates the proportion of outliers in the dataset. Higher contamination values flag more data points as anomalies, which can lead to false positives. Tuning this parameter carefully based on domain knowledge or cross-validation is critical to avoid bias or misclassification.[3]

Maximum Features: This parameter specifies the number of random features to consider for each split in the tree. Limiting the number of features increases randomness, making the model more robust. However, in high-dimensional datasets, selecting only the most informative features prevents overfitting and improves generalization.[2][3]

Tree Depth : Tree depth determines the maximum number of splits for a tree. Deeper trees better capture data complexity but risk overfitting, especially in small datasets. Shallow trees, on the other hand, improve computational efficiency.[3]

The table below summarizes parameter selection strategies based on dataset characteristics.

| Parameter | Small Datasets | Large Datasets | High-Dimensional Data | Imbalanced Datasets |

|---|---|---|---|---|

Number of Trees n_estimators |

Use fewer trees to save on computation.[13] | More trees improve performance, but are costly.[14] | Requires more trees to capture complexity.[15] | Adjust based on dataset size.[15] |

Subsample Size max_samples |

Smaller subsample reduces cost.[13] | Larger subsample increases accuracy.[14] | Dimensionality reduction can optimize subsample size.[15] | Smaller subsample for efficiency.[15] |

Contamination Factor contamination |

Tune based on domain knowledge.[13] | Cross-validation for tuning.[14] | Careful tuning to avoid misclassification.[15] | Lower contamination helps avoid bias.[15] |

Maximum Features max_features |

Use all features unless limited by computation.[13] | Logarithmic or √n scaling for large datasets.[14] | Select most informative features.[15] | Balance feature selection to avoid overfitting.[15] |

Tree Depth max_depth |

Moderate depth to prevent overfitting.[13] | Shallower depth to save on computation.[14] | Deeper trees to capture data complexity.[15] | Adjust to balance overfitting.[15] |

Benefits of Proper Parameter Tuning:

Improved Accuracy: Fine-tuning parameters helps the algorithm better distinguish between normal data and anomalies, reducing false positives and negatives.[11]

Computational Efficiency: Selecting appropriate values for parameters like the number of trees and sub-sample size makes the algorithm more efficient without sacrificing accuracy.[11]

Generalization: Limiting tree depth and using bootstrap sampling helps the model generalize better to new data, reducing overfitting.[11]

SCiForest

SCiForest (Isolation Forest with Split-selection Criterion) is an extension of the original Isolation Forest algorithm, specifically designed to target clustered anomalies. It introduces a split-selection criterion and uses random hyper-planes that are non-axis-parallel to the original attributes. SCiForest does not require an optimal hyper-plane at every node; instead, it generates multiple random hyper-planes, and through sufficient trials, a good-enough hyper-plane is selected. This approach makes the resulting model highly effective due to the aggregate power of the ensemble learner.[4]

Steps in SCiForest implementation

The implementation of SciForest involves four primary steps, each tailored to improve anomaly detection by isolating clustered anomalies more effectively than standard Isolation Forest methods.

1. Subspace selection

Using techniques like KMeans or hierarchical clustering, SciForest organizes features into clusters to identify meaningful subsets. By sampling random subspaces, SciForest emphasizes meaningful feature groups, reducing noise and improving focus. This reduces the impact of irrelevant or noisy dimensions.[4]

2. Isolation tree construction

Within each selected subspace, isolation trees are constructed. These trees isolate points through random recursive splitting:

- A feature is selected randomly from the subspace.

- A random split value within the feature's range is chosen to partition the data.

Anomalous points, being sparse or distinct, are isolated more quickly (shorter path lengths) compared to normal points.[2]

3. Anomaly scoring

For each data point, the isolation depth () is calculated for all trees across all subspaces. The anomaly score for a data point is defined as:

Where:

- : Path length for data point in the -th tree.

- : Total number of isolation trees.

Points with lower average path lengths () are more likely to be anomalies. [3]

4. Thresholding

The final anomaly scores are compared against a predefined threshold to classify data points. If , the point is classified as anomalous; otherwise, it is normal. The anomaly score threshold, θ, can be tailored to specific applications to control the proportion of identified anomalies. [6]

These steps collectively enable SciForest to adapt to varied data distributions while maintaining efficiency in anomaly detection.

SCiForest implementation flowchart

This flowchart visually represents the step-by-step process of SCiForest implementation, from inputting high-dimensional datasets to detecting anomalies. Each step is highlighted with its key functionality, providing a clear overview of the methodology.

Extended isolation forest

Extended Isolation Forest (Extended IF or EIF) is another extension of the original Isolation Forest algorithm. Extended IF uses rotated trees in different planes, similarly to SCiForest and random values are selected to split the data, such as a random slope or intercept.

The standard Isolation Forest requires two pieces of information, those being 1) a random feature or coordinate, and 2) a random value for the feature from the range of available values in the data. The Extended IF also requires only two pieces of information, this time being 1) a random slope for the branch cut, and 2) a random intercept for the branch cut which is chosen from the range of available values of the training data. This makes the Extended IF simpler than using rotation trees.[16]

The figure depicts a score map of a regular Isolation Forest in comparison to an Extended Isolation Forest for a sinusoidal-shaped data-set. This image allows us to clearly observe the improvement made by the Extended Isolation Forest in evaluating the scores much more accurately when compared to the shape of the data. While the regular isolation forest fails in capturing the sinusoid shape of the data and properly evaluating the anomaly scores. The regular Isolation Forest shapes the anomaly scores into a rectangular shape and simply assumes that any region nearby the sinusoid data point is not to be anomalous. In comparison, the EIF is more accurate in evaluating anomaly scores with more detail and unlike its predecessor, the EIF is able to detect anomalies that are close to the sinusoid shape of the data but are still anomalous. The original EIF publication includes also this comparison with a single-blob-shaped data-set and a two-blob-shaped data-set, also comparing the EIF results to isolation forest using rotation trees.[16]

Improvements in extended isolation forest

The Extended Isolation Forest enhances the traditional Isolation Forest algorithm by addressing some of its limitations, particularly in handling high-dimensional data and improving anomaly detection accuracy. Key improvements in EIF include:

Enhanced Splitting Mechanism: Unlike traditional Isolation Forest, which uses random axis-aligned splits, EIF uses hyperplanes for splitting data. This approach allows for more flexible and accurate partitioning of the data space, which is especially useful in high-dimensional datasets.

Improved Anomaly Scoring: EIF refines the anomaly scoring process by considering the distance of data points from the hyperplane used in splitting. This provides a more granular and precise anomaly score, leading to better differentiation between normal and anomalous points.

Handling of High-Dimensional Data: The use of hyperplanes also improves EIF's performance in high-dimensional spaces. Traditional Isolation Forest can suffer from the curse of dimensionality in such scenarios, but EIF mitigates this issue by creating more meaningful and informative partitions in the data space.[17]

Open source implementations

Original implementation by Fei Tony Liu is Isolation Forest in R.

Other implementations (in alphabetical order):

- ELKI contains a Java implementation.

- Isolation Forest - A distributed Spark/Scala implementation with Open Neural Network Exchange (ONNX) export for easy cross-platform inference.[18]

- Isolation Forest by H2O-3 - A Python implementation.

- Package solitude implementation in R.

- Python implementation with examples in scikit-learn.

- Spark iForest - A distributed Apache Spark implementation in Scala/Python.

- PyOD IForest - Another Python implementation in the popular Python Outlier Detection (PyOD) library.

Other variations of Isolation Forest algorithm implementations:

- Extended Isolation Forest – An implementation of Extended Isolation Forest. [16]

- Extended Isolation Forest by H2O-3 - An implementation of Extended Isolation Forest.

- (Python, R, C/C++) Isolation Forest and variations - An implementation of Isolation Forest and its variations.[19]

Python implementation with Scikit-learn

The isolation forest algorithm is commonly used by data scientists through the version made available in the scikit-learn library. The snippet below depicts a brief implementation of an isolation forest, with direct explanations with comments.

import pandas as pd

from sklearn.ensemble import IsolationForest

# Consider 'data.csv' is a file containing samples as rows and features as column, and a column labeled 'Class' with a binary classification of your samples.

df = pd.read_csv("data.csv")

X = df.drop(columns=["Class"])

y = df["Class"]

# Determine how many samples will be outliers based on the classification

outlier_fraction = len(df[df["Class"] == 1]) / float(len(df[df["Class"] == 0]))

# Create and fit model, parameters can be optimized

model = IsolationForest(n_estimators=100, contamination=outlier_fraction, random_state=42)

model.fit(df)

In this snippet we can observe the simplicity of a standard implementation of the algorithm. The only requirement data that the user needs to adjust is the outlier fraction in which the user determines a percentage of the samples to be classifier as outliers. This can be commonly done by selection a group among the positive and negative samples according to a giving classification. Most of the other steps are pretty standard to any decision tree based technique made available through scikit-learn, in which the user simply needs to split the target variable from the features and fit the model after it is defined with a giving number of estimators (or trees).

This snippet is a shortened adapted version of an implementation explored by GeeksforGeeks, which can be accessed for further explorations.[20]

See also

References

- ↑ Liu, Fei Tony (7 July 2014). "First Isolation Forest implementation on Sourceforge". https://sourceforge.net/projects/iforest/.

- ↑ 2.00 2.01 2.02 2.03 2.04 2.05 2.06 2.07 2.08 2.09 2.10 Liu, Fei Tony; Ting, Kai Ming; Zhou, Zhi-Hua (December 2008). "Isolation Forest". 2008 Eighth IEEE International Conference on Data Mining. pp. 413–422. doi:10.1109/ICDM.2008.17. ISBN 978-0-7695-3502-9.

- ↑ 3.0 3.1 3.2 3.3 3.4 3.5 3.6 Liu, Fei Tony; Ting, Kai Ming; Zhou, Zhi-Hua (December 2008). "Isolation-Based Anomaly Detection". ACM Transactions on Knowledge Discovery from Data 6: 3:1–3:39. doi:10.1145/2133360.2133363. https://dl.acm.org/doi/10.1145/2133360.2133363.

- ↑ 4.0 4.1 4.2 4.3 4.4 4.5 4.6 Liu, Fei Tony; Ting, Kai Ming; Zhou, Zhi-Hua (September 2010). "On Detecting Clustered Anomalies Using SCiForest". Joint European Conference on Machine Learning and Knowledge Discovery in Databases - ECML PKDD 2010: Machine Learning and Knowledge Discovery in Databases. Lecture Notes in Computer Science. 6322. pp. 274–290. doi:10.1007/978-3-642-15883-4_18. ISBN 978-3-642-15882-7.

- ↑ Shaffer, Clifford A. (2011). Data structures & algorithm analysis in Java (3rd Dover ed.). Mineola, NY: Dover Publications. ISBN 9780486485812. OCLC 721884651.

- ↑ 6.0 6.1 6.2 6.3 6.4 6.5 6.6 6.7 6.8 Dal Pozzolo, Andrea; Caelen, Olivier; Johnson, Reid A; Bontempi, Gianluca (2015). "Calibrating Probability with Undersampling for Unbalanced Classification". 2015 IEEE Symposium Series on Computational Intelligence. pp. 159–166. doi:10.1109/SSCI.2015.33. ISBN 978-1-4799-7560-0. https://www.researchgate.net/publication/283349138.

- ↑ 7.0 7.1 7.2 7.3 7.4 7.5 7.6 "Credit Card Fraud Detection Dataset". https://www.kaggle.com/datasets/mlg-ulb/creditcardfraud.

- ↑ 8.0 8.1 8.2 8.3 8.4 8.5 8.6 Dal Pozzolo, Andrea; Caelen, Olivier; Le Borgne, Yann-Ael; Waterschoot, Serge; Bontempi, Gianluca. "Learned lessons in credit card fraud detection from a practitioner perspective". Expert Systems with Applications 41 (10): 4915–4928. doi:10.1016/j.eswa.2014.03.026. https://www.researchgate.net/publication/260837261.

- ↑ Gao, Jingqin; Ozbay, Kaan; Hu, Yu (2024-02-08). "Real-time anomaly detection of short-term traffic disruptions in urban areas through adaptive isolation forest" (in en). Journal of Intelligent Transportation Systems 29 (3): 269–286. doi:10.1080/15472450.2024.2312809. ISSN 1547-2450. https://www.tandfonline.com/doi/full/10.1080/15472450.2024.2312809.

- ↑ Dilini Talagala, Priyanga; Hyndman, Rob J.; Smith-Miles, Kate (12 Aug 2019). "Anomaly Detection in High Dimensional Data". arXiv:1908.04000 [stat.ML].

- ↑ 11.0 11.1 11.2 11.3 11.4 "Hyperparameter Tuning Isolation Forest | Restackio" (in en). https://www.restack.io/p/hyperparameter-tuning-answer-isolation-forest-cat-ai.

- ↑ "Andrea Dal Pozzolo". https://dalpozz.github.io/.

- ↑ 13.0 13.1 13.2 13.3 13.4 Michael Heigl; Ashutosh Anand Kumar; Andreas Urmann; Dalibor Fiala; Martin Schramm; Robert Hable (2021). "On the Improvement of the Isolation Forest Algorithm for Outlier Detection with Streaming Data". Electronics 10 (13): 1534. doi:10.3390/electronics10131534.

- ↑ 14.0 14.1 14.2 14.3 14.4 Yassine Chabchoub; M. U. Togbe; Aboubacar Boly; Rachid Chiky (2022). "An In-Depth Study and Improvement of Isolation Forest". IEEE Access 10: 10219–10237. doi:10.1109/ACCESS.2022.3144425. Bibcode: 2022IEEEA..1010219C.

- ↑ 15.00 15.01 15.02 15.03 15.04 15.05 15.06 15.07 15.08 15.09 "Tuning Isolation Forest for Anomaly Detection | Restackio" (in en). https://www.restack.io/p/ai-anomaly-detection-answer-tuning-isolation-forest-cat-ai.

- ↑ 16.0 16.1 16.2 16.3 Hariri, Sahand; Kind, Matias Carrasco; Brunner, Robert J. (April 2021). "Extended Isolation Forest". IEEE Transactions on Knowledge and Data Engineering 33 (4): 1479–1489. doi:10.1109/TKDE.2019.2947676. ISSN 1558-2191. Bibcode: 2021ITKDE..33.1479H.

- ↑ Hariri, Sahand; Kind, Matias Carrasco; Brunner, Robert J. (2021-04-01). "Extended Isolation Forest". IEEE Transactions on Knowledge and Data Engineering 33 (4): 1479–1489. doi:10.1109/TKDE.2019.2947676. ISSN 1041-4347. Bibcode: 2021ITKDE..33.1479H.

- ↑ Verbus, James (13 August 2019). "Detecting and preventing abuse on LinkedIn using isolation forests" (in en). https://engineering.linkedin.com/blog/2019/isolation-forest.

- ↑ Cortes, David (2019). "Distance approximation using Isolation Forests". arXiv:1910.12362 [stat.ML].

- ↑ GeeksforGeeks, What is Isolation Forest?, accessed November 19, 2024.

|