MM algorithm

The MM algorithm is an iterative optimization method which exploits the convexity of a function in order to find its maxima or minima. The MM stands for “Majorize-Minimization” or “Minorize-Maximization”, depending on whether the desired optimization is a minimization or a maximization. Despite the name, MM itself is not an algorithm, but a description of how to construct an optimization algorithm.

The expectation–maximization algorithm can be treated as a special case of the MM algorithm.[1][2] However, the EM algorithm usually involves conditional expectations, whereas the MM algorithm focuses on convexity and inequalities, and the MM formulation is easier to understand and apply in most cases.[3]

History

The historical basis for the MM algorithm can be dated back to at least 1970, when Ortega and Rheinboldt were performing studies related to line search methods.[4] The same concept continued to reappear in different areas in different forms. In 2000, Hunter and Lange put forth "MM" as a general framework.[5] Recent studies have applied the method in a wide range of subject areas, such as mathematics, statistics, machine learning and engineering.

Algorithm

The MM algorithm works by finding a surrogate function that minorizes or majorizes the objective function. Optimizing the surrogate function will either improve the value of the objective function or leave it unchanged.

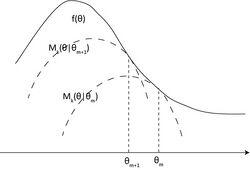

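Taking the minorize-maximization version, let $f(\theta)$ be the concave objective function to be maximized. At step $m$ of the algorithm, $m = 0, 1, \ldots$, the constructed function $g(\theta \mid \theta_m)$ is called the minorized version of the objective function (the surrogate function) at $\theta_m$ if

$$g(\theta \mid \theta_m) \le f(\theta) \quad \text{for all } \theta,$$

$$g(\theta_m \mid \theta_m) = f(\theta_m).$$

Then, maximize $g(\theta \mid \theta_m)$ instead of $f(\theta)$, and let

$$\theta_{m+1} = \arg\max_{\theta} g(\theta \mid \theta_m).$$

The above iterative method guarantees that $f(\theta_m)$ will converge to a local optimum or a saddle point as $m$ goes to infinity.[6] By the above construction,

$$f(\theta_{m+1}) \ge g(\theta_{m+1} \mid \theta_m) \ge g(\theta_m \mid \theta_m) = f(\theta_m).$$

The marching of $\theta_m$ and the surrogate functions relative to the objective function is shown in the figure.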

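Majorize-Minimization is the same procedure, but with a convex objective to be minimized: the surrogate function lies on or above the objective, touches it at the current iterate, and is minimized at each step.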
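As an illustration of the majorize-minimization variant, the following is a minimal sketch rather than a definitive implementation. It minimizes the sum of absolute deviations, whose minimizer is a sample median, by repeatedly minimizing a quadratic surrogate. The surrogate uses the bound |r| ≤ r²/(2|r_m|) + |r_m|/2, which follows from the inequality of arithmetic and geometric means and holds with equality at r = r_m. The function name `mm_median`, the sample data, the starting point, and the guard `eps` are assumptions made for this example.

```python
def mm_median(xs, theta0, iters=200, eps=1e-12):
    """Minimize f(theta) = sum_i |x_i - theta| by majorize-minimization."""
    def f(theta):
        return sum(abs(x - theta) for x in xs)

    theta = theta0
    history = [f(theta)]
    for _ in range(iters):
        # Each |x_i - theta| is majorized at the current iterate by
        # (x_i - theta)^2 / (2 |x_i - theta_m|) + |x_i - theta_m| / 2.
        # eps avoids division by zero when the iterate hits a data point
        # (a numerical safeguard assumed for this sketch).
        weights = [1.0 / max(abs(x - theta), eps) for x in xs]
        # The surrogate is a weighted sum of squares, so its minimizer
        # is the weighted mean of the data.
        theta = sum(w * x for w, x in zip(weights, xs)) / sum(weights)
        history.append(f(theta))
    return theta, history


xs = [1.0, 2.0, 4.0, 7.0, 20.0]
theta, history = mm_median(xs, theta0=10.0)
print(theta)          # close to the sample median of xs
print(history[:5])    # objective values, non-increasing up to rounding
```

Because every surrogate lies on or above the objective and touches it at the current iterate, the recorded objective values do not increase from one step to the next, apart from floating-point rounding and the effect of the `eps` guard.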

Constructing the surrogate function

One can use any inequality to construct the desired majorized/minorized version of the objective function. Typical choices include

- Jensen's inequality

- Convexity inequality

- Cauchy–Schwarz inequality

- Inequality of arithmetic and geometric means

- Quadratic majorization/minorization via second-order Taylor expansion of twice-differentiable functions with bounded curvature.
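The last construction in the list can be sketched in a few lines. If the second derivative of a convex objective is bounded above by a constant L, then the quadratic f(θ_m) + f′(θ_m)(θ − θ_m) + (L/2)(θ − θ_m)² majorizes the objective and touches it at θ_m, and its minimizer is θ_m − f′(θ_m)/L. The example below is a hedged sketch: the objective log(1 + e^θ) − pθ, the value p = 0.3, and the names `f`, `sigmoid` and `mm_quadratic` are assumptions chosen for illustration. For this objective the second derivative never exceeds 1/4.

```python
import math


def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))


def f(theta, p=0.3):
    # Convex objective with f''(theta) = sigmoid(theta) * (1 - sigmoid(theta)) <= 1/4.
    return math.log1p(math.exp(theta)) - p * theta


def mm_quadratic(theta0, p=0.3, curvature_bound=0.25, iters=100):
    """Majorize-minimization with a quadratic surrogate of fixed curvature."""
    theta = theta0
    values = [f(theta, p)]
    for _ in range(iters):
        grad = sigmoid(theta) - p  # f'(theta) at the current iterate
        # Minimizer of the quadratic surrogate built at the current iterate.
        theta = theta - grad / curvature_bound
        values.append(f(theta, p))
    return theta, values


theta_hat, values = mm_quadratic(theta0=3.0)
print(theta_hat, math.log(0.3 / 0.7))  # iterate vs. closed-form minimizer
```

With a fixed curvature bound, each MM step coincides with a gradient descent step of size 1/L, which is one way to see why such a step size never increases the objective.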

References

- Lange, Kenneth. "The MM Algorithm". http://www.stat.berkeley.edu/~aldous/Colloq/lange-talk.pdf.

- Lange, Kenneth (2016). MM Optimization Algorithms. SIAM. doi:10.1137/1.9781611974409. ISBN 978-1-61197-439-3.

- Lange, K.; Zhou, H. (2022). "A legacy of EM algorithms". International Statistical Review 90 (Suppl 1): S52–S66. doi:10.1111/insr.12526. PMID 37204987.

- Ortega, J.M.; Rheinboldt, W.C. (1970). Iterative Solutions of Nonlinear Equations in Several Variables. New York: Academic. pp. 253–255. ISBN 9780898719468. https://archive.org/details/iterativesolutio0000orte.

- Hunter, D.R.; Lange, K. (2000). "Quantile Regression via an MM Algorithm". Journal of Computational and Graphical Statistics 9 (1): 60–77. doi:10.2307/1390613.

- Wu, C. F. Jeff (1983). "On the Convergence Properties of the EM Algorithm". Annals of Statistics 11 (1): 95–103. doi:10.1214/aos/1176346060.
