Innovation Method

In statistics, the Innovation Method provides an estimator for the parameters of stochastic differential equations given a time series of (potentially noisy) observations of the state variables. In the framework of continuous-discrete state space models, the innovation estimator is obtained by maximizing the log-likelihood of the corresponding discrete-time innovation process with respect to the parameters. The innovation estimator can be classified as an M-estimator, a quasi-maximum likelihood estimator or a prediction error estimator, depending on which inferential considerations are emphasized. The innovation method is a system identification technique for developing mathematical models of dynamical systems from measured data and for the optimal design of experiments.

Background

Stochastic differential equations (SDEs) have become an important mathematical tool for describing the time evolution of many random phenomena in the natural, social and applied sciences. Statistical inference for SDEs is thus of great importance in applications for model building, model selection, model identification and forecasting. To carry out statistical inference for SDEs, measurements of the state variables of these random phenomena are indispensable. In practice, usually only a few state variables are measured, and the physical devices used introduce random measurement errors (observational errors).

Mathematical model for inference

The innovation estimator[1] for SDEs is defined in the framework of continuous-discrete state space models.[2] These models arise as a natural mathematical representation of the temporal evolution of continuous random phenomena and of their measurements at a succession of time instants. In the simplest formulation, these continuous-discrete models[2] are expressed in terms of an SDE of the form

describing the time evolution of the state variables of the phenomenon for all time instants , and an observation equation

describing the time series of measurements of at least one of the variables of the random phenomenon on time instants . In the model (1)-(2), and are differentiable functions, is an -dimensional standard Wiener process, is a vector of parameters, is a sequence of -dimensional i.i.d. Gaussian random vectors independent of , an positive definite matrix, and an matrix.
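For orientation, a continuous-discrete model with the ingredients listed above is commonly written as in the following sketch. All symbol names and dimension letters here (x, f, g_i, w, θ, z, C, e, Π, d, m, p, r, M) are notational assumptions of this sketch, chosen only to match the roles described in the text.

```latex
% State equation (1): d-dimensional diffusion driven by an m-dimensional Wiener process w
dx(t) = f(t, x(t); \theta)\,dt + \sum_{i=1}^{m} g_i(t, x(t); \theta)\,dw^{i}(t),
\qquad t \ge t_0, \quad \theta \in \mathbb{R}^{p}
\tag{1}

% Observation equation (2): r-dimensional noisy linear measurements at times t_k
z_{t_k} = C\,x(t_k) + e_{t_k}, \qquad e_{t_k} \sim \mathcal{N}(0, \Pi_{t_k}),
\qquad k = 0, 1, \ldots, M-1
\tag{2}
```

In this notation C would be an r × d matrix and Π an r × r positive definite matrix.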

Statistical problem to solve

Once the dynamics of a phenomenon are described by a state equation such as (1) and the way the state variables are measured is specified by an observation equation such as (2), the inference problem to solve is the following:[1][3] given partial and noisy observations of the stochastic process on the observation times , estimate the unobserved state variables of and the unknown parameters in (1) that best fit the given observations.

Discrete-time innovation process

Let be the sequence of observation times of the states of (1), and the time series of partial and noisy measurements of described by the observation equation (2).

Further, let and be the conditional mean and variance of with , where denotes the expected value of random vectors.

The random sequence with

defines the discrete-time innovation process,[4][1][5] where is proved to be an independent normally distributed random vector with zero mean and variance

for small enough , with . In practice,[6] this distribution for the discrete-time innovation is valid when, with a suitable selection of both the number of observations and the time distance between consecutive observations, the time series of observations of the SDE contains the main information about the continuous-time process . That is, when the sampling of the continuous-time process has low distortion (aliasing) and when there is a suitable signal-to-noise ratio.
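The quantities in this section can be sketched in formulas as follows. The notation is an assumption of this sketch (x for the state, z for the observations, Z_ρ for the observations up to time ρ, C and Π for the observation matrix and noise variance), not necessarily the notation of the cited works.

```latex
% Conditional mean and variance of the state given the observations up to time \rho \le t
x_{t/\rho} = E\big(x(t) \mid Z_{\rho}\big), \qquad
U_{t/\rho} = E\big(x(t)\,x^{\top}(t) \mid Z_{\rho}\big) - x_{t/\rho}\,x_{t/\rho}^{\top}

% Discrete-time innovation (3): one-step prediction error of the observation
\nu_{t_k} = z_{t_k} - C\,x_{t_k/t_{k-1}}
\tag{3}

% Innovation variance (4)
\Sigma_{t_k} = C\,U_{t_k/t_{k-1}}\,C^{\top} + \Pi_{t_k}
\tag{4}
```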

Innovation estimator

The innovation estimator for the parameters of the SDE (1) is the one that maximizes the likelihood function of the discrete-time innovation process with respect to the parameters.[1] More precisely, given measurements of the state space model (1)-(2) with on the innovation estimator for the parameters of (1) is defined by

where

being the discrete-time innovation (3) and the innovation variance (4) of the model (1)-(2) at , for all . In the above expression for , the conditional mean and variance are computed by the continuous-discrete filtering algorithm for the evolution of the moments (Section 6.4 in [2]), for all .
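As a minimal sketch of the idea, consider a scalar linear SDE dx = -a x dt + s dw observed with additive Gaussian noise, for which the conditional moments are available in closed form. The model, the function names, the stationary prior used for the first observation, and the grid search are all assumptions made for this illustration. The log-likelihood below is the usual Gaussian one, summing -0.5 (ln 2π + ln Σ + ν²/Σ) over the innovations.

```python
import math
import random


def simulate_ou(a, s, noise_var, dt, n_obs, seed=0):
    """Noisy observations z_k = x(t_k) + e_k of dx = -a x dt + s dw (exact sampling)."""
    rng = random.Random(seed)
    rho = math.exp(-a * dt)                      # exact one-step decay factor
    q = s * s * (1.0 - rho * rho) / (2.0 * a)    # exact one-step state variance
    x = rng.gauss(0.0, s / math.sqrt(2.0 * a))   # start from the stationary law
    obs = []
    for _ in range(n_obs):
        obs.append(x + rng.gauss(0.0, math.sqrt(noise_var)))
        x = rho * x + rng.gauss(0.0, math.sqrt(q))
    return obs


def innovation_loglik(a, s, noise_var, dt, obs):
    """Gaussian log-likelihood of the innovations (scalar model, C = 1)."""
    rho = math.exp(-a * dt)
    q = s * s * (1.0 - rho * rho) / (2.0 * a)
    mean, var = 0.0, s * s / (2.0 * a)           # assumed prior prediction
    loglik = 0.0
    for z in obs:
        nu = z - mean                            # innovation
        sigma = var + noise_var                  # innovation variance
        loglik -= 0.5 * (math.log(2.0 * math.pi) + math.log(sigma) + nu * nu / sigma)
        gain = var / sigma                       # filter gain
        mean_f = mean + gain * nu                # filter mean
        var_f = var - gain * var                 # filter variance
        mean = rho * mean_f                      # exact prediction to the next time
        var = rho * rho * var_f + q
    return loglik


TRUE_A, S, NOISE_VAR, DT = 1.0, 1.0, 0.05, 0.1
obs = simulate_ou(TRUE_A, S, NOISE_VAR, DT, n_obs=4000)
grid = [0.1 * i for i in range(1, 41)]           # candidate values of a
a_hat = max(grid, key=lambda a: innovation_loglik(a, S, NOISE_VAR, DT, obs))
print("innovation estimate of a:", a_hat)
```

A grid search keeps the sketch dependency-free; in practice a numerical optimizer would normally replace it.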

Differences with the maximum likelihood estimator

The maximum likelihood estimator of the parameters in the model (1)-(2) involves the evaluation of the (usually unknown) transition density function between the states and of the diffusion process for all the observation times and .[7] Instead, the innovation estimator (5) is obtained by maximizing the likelihood of the discrete-time innovation process, taking into account that are Gaussian and independent random vectors. Remarkably, whereas the transition density function changes when the SDE for does, the transition density function for the innovation process remains Gaussian regardless of the SDE for . Only when the diffusion is described by a linear SDE with additive noise is the density function Gaussian and equal to , so that the maximum likelihood and the innovation estimators coincide.[5] Otherwise,[5] the innovation estimator is an approximation to the maximum likelihood estimator and, in this sense, it is a quasi-maximum likelihood estimator. In addition, the innovation method is a particular instance of the prediction error method according to the definition given in [8]. Therefore, the asymptotic results obtained for that general class of estimators are valid for the innovation estimators.[1][9][10] Intuitively, following the typical control engineering viewpoint, the innovation process, viewed as a measure of the prediction errors of the fitted model, is expected to be approximately a white noise process when the model fits the data,[11][3] which can be used as a practical tool for model design and for optimal experimental design.[6]
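The whiteness of the fitted innovations can be checked informally with sample autocorrelations. The following is a rough sketch: the helper names, the ±1.96/√n band and the tolerance of two exceedances are illustrative assumptions, not a procedure taken from the cited references.

```python
import math
import random


def standardized(innovations, variances):
    """Scale each scalar innovation by the square root of its variance."""
    return [nu / math.sqrt(s) for nu, s in zip(innovations, variances)]


def autocorrelation(series, lag):
    """Sample autocorrelation of a scalar series at the given lag."""
    n = len(series)
    mean = sum(series) / n
    c0 = sum((v - mean) ** 2 for v in series)
    ck = sum((series[i] - mean) * (series[i + lag] - mean) for i in range(n - lag))
    return ck / c0


def looks_white(series, max_lag=10, allowed_exceedances=2):
    """Crude check: most autocorrelations lie inside +-1.96 / sqrt(n)."""
    bound = 1.96 / math.sqrt(len(series))
    exceed = sum(
        1 for k in range(1, max_lag + 1) if abs(autocorrelation(series, k)) > bound
    )
    return exceed <= allowed_exceedances


rng = random.Random(3)
white = [rng.gauss(0.0, 1.0) for _ in range(2000)]   # stands in for a good fit
ar1 = [0.0]                                          # stands in for a poor fit
for _ in range(1999):
    ar1.append(0.8 * ar1[-1] + rng.gauss(0.0, 1.0))

print("white noise:", autocorrelation(white, 1), looks_white(white))
print("correlated :", autocorrelation(ar1, 1), looks_white(ar1))
```

Strongly autocorrelated innovations, as in the second series, suggest that the fitted model leaves predictable structure in the data.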

Properties

The innovation estimator (5) has a number of important attributes:

- Under conventional regularity conditions, the innovation estimator (5) is consistent and asymptotically normally distributed.[1][10][12]

- For selecting models,[11] the maximum log-likelihood of the innovation estimator (5) can be used to compute the Akaike or Bayesian information criterion.

- The confidence limits for the innovation estimator are estimated with[6]

where is the Student's t distribution with significance level, and degrees of freedom . Here, denotes the variance of the innovation estimator , where

is the Fisher information matrix of the innovation estimator of and

is the entry of the matrix with and , for .

- The distribution of the fitting-innovation process measures the goodness of fit of the model to the data.[1][3][11][6]

- For smooth enough function , nonlinear observation equations of the form

can be transformed to the simpler one (2), and the innovation estimator (5) can be applied.[5]
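The model selection criteria and confidence limits listed above can be sketched for a scalar parameter as follows. This is an illustration under stated assumptions: the variance is taken from a finite-difference curvature of the log-likelihood (observed information) rather than from the Fisher information expression referred to in the text, and the default quantile 1.96 is the normal approximation to the Student's t quantile for many degrees of freedom. All function names are hypothetical.

```python
import math


def aic(max_loglik, n_params):
    """Akaike information criterion from the maximized log-likelihood."""
    return -2.0 * max_loglik + 2.0 * n_params


def bic(max_loglik, n_params, n_obs):
    """Bayesian information criterion from the maximized log-likelihood."""
    return -2.0 * max_loglik + n_params * math.log(n_obs)


def variance_from_curvature(loglik, theta_hat, step=1e-3):
    """Scalar variance estimate: inverse of minus the second derivative at theta_hat."""
    second = (
        loglik(theta_hat + step) - 2.0 * loglik(theta_hat) + loglik(theta_hat - step)
    ) / step ** 2
    return -1.0 / second


def confidence_limits(theta_hat, variance, quantile=1.96):
    """Symmetric limits theta_hat -+ quantile * sqrt(variance)."""
    half_width = quantile * math.sqrt(variance)
    return theta_hat - half_width, theta_hat + half_width


# Toy quadratic log-likelihood with maximum at 2.0 and curvature -4.
toy_loglik = lambda theta: -2.0 * (theta - 2.0) ** 2
toy_variance = variance_from_curvature(toy_loglik, 2.0)
print(confidence_limits(2.0, toy_variance))
print(aic(-100.0, 3), bic(-100.0, 3, 100))
```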

Approximate Innovation estimators

In practice, closed-form expressions for computing and in (5) are available only for a few models (1)-(2). Therefore, approximate filtering algorithms such as the following are used in applications.

Given measurements and the initial filter estimates , , the approximate Linear Minimum Variance (LMV) filter for the model (1)-(2) is iteratively defined at each observation time by the prediction estimates[2][13]

and

with initial conditions and , and the filter estimates

and

with filter gain

for all , where is an approximation to the solution of (1) on the observation times .
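In a commonly used form, the recursion described above can be sketched as follows. The symbols are assumptions of this sketch: y denotes the approximation to the solution of (1), V its conditional variance, K the filter gain, and C and Π the observation matrix and noise variance of (2).

```latex
% Prediction estimates: conditional moments of the approximation y given Z_{t_k}
y_{t_{k+1}/t_k} = E\big(y(t_{k+1}) \mid Z_{t_k}\big), \qquad
V_{t_{k+1}/t_k} = E\big(y(t_{k+1})\,y^{\top}(t_{k+1}) \mid Z_{t_k}\big)
                  - y_{t_{k+1}/t_k}\,y_{t_{k+1}/t_k}^{\top}

% Filter estimates: linear correction by the new observation z_{t_{k+1}}
y_{t_{k+1}/t_{k+1}} = y_{t_{k+1}/t_k} + K_{t_{k+1}}\big(z_{t_{k+1}} - C\,y_{t_{k+1}/t_k}\big), \qquad
V_{t_{k+1}/t_{k+1}} = V_{t_{k+1}/t_k} - K_{t_{k+1}}\,C\,V_{t_{k+1}/t_k}

% Filter gain
K_{t_{k+1}} = V_{t_{k+1}/t_k}\,C^{\top}\big(C\,V_{t_{k+1}/t_k}\,C^{\top} + \Pi_{t_{k+1}}\big)^{-1}
```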

Given measurements of the state space model (1)-(2) with on , the approximate innovation estimator for the parameters of (1) is defined by[1][12]

where

being

and

approximations to the discrete-time innovation (3) and innovation variance (4), respectively, resulting from the filtering algorithm (7)-(8).

For models with complete observations free of noise (i.e., with and in (2)), the approximate innovation estimator (9) reduces to the known Quasi-Maximum Likelihood estimators for SDEs.[12]

Main conventional-type estimators

Conventional-type innovation estimators are those (9) derived from conventional-type continuous-discrete or discrete-discrete approximate filtering algorithms. With approximate continuous-discrete filters there are the innovation estimators based on Local Linearization (LL) filters,[1][14][5] on the extended Kalman filter,[15][16] and on the second order filters.[3][16] Approximate innovation estimators based on discrete-discrete filters result from the discretization of the SDE (1) by means of a numerical scheme.[17][18] Typically, the effectiveness of these innovation estimators is directly related to the stability of the involved filtering algorithms.

A shared drawback of these conventional-type filters is that, once the observations are given, the error between the approximate and the exact innovation process is fixed and completely determined by the time distance between observations.[12] This can induce a large bias in the approximate innovation estimators in some applications, a bias that cannot be corrected by increasing the number of observations. However, the conventional-type innovation estimators are useful in many practical situations in which only medium or low accuracy for the parameter estimation is required.[12]
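The dependence of the bias on the sampling interval can be illustrated with a deliberately simple case. The sketch below assumes a noise-free scalar model dx = -a x dt + s dw and uses the Euler pseudo-likelihood, chosen for brevity as one discretization-based estimator; it is not claimed to be the estimator studied in the cited works. For this model the Euler estimate tends to (1 - exp(-aΔ))/Δ rather than a as the number of observations grows, so the bias is set by Δ.

```python
import math
import random


def simulate_ou_exact(a, s, dt, n, seed=1):
    """Exact samples of dx = -a x dt + s dw at spacing dt."""
    rng = random.Random(seed)
    rho = math.exp(-a * dt)
    sd = math.sqrt(s * s * (1.0 - rho * rho) / (2.0 * a))
    x = [rng.gauss(0.0, s / math.sqrt(2.0 * a))]
    for _ in range(n - 1):
        x.append(rho * x[-1] + rng.gauss(0.0, sd))
    return x


def euler_qml_drift(x, dt):
    """Maximizer in a of the Euler pseudo-likelihood (s assumed known)."""
    num = sum(x[k] * (x[k + 1] - x[k]) for k in range(len(x) - 1))
    den = sum(x[k] ** 2 for k in range(len(x) - 1))
    return -num / (dt * den)


TRUE_A = 1.0
a_fine = euler_qml_drift(simulate_ou_exact(TRUE_A, 1.0, 0.05, 20000), 0.05)
a_coarse = euler_qml_drift(simulate_ou_exact(TRUE_A, 1.0, 1.0, 20000), 1.0)
print("estimate with dt = 0.05:", a_fine)
print("estimate with dt = 1.00:", a_coarse)
print("large-sample limit for dt = 1:", 1.0 - math.exp(-1.0))
```

Both runs use the same number of observations; only the time distance between them differs.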

Order-β innovation estimators

Let us consider the finer time discretization of the time interval satisfying the condition . Further, let be the approximate value of obtained from a discretization of the equation (1) for all , and

for all

a continuous-time approximation to .

An order-β LMV filter[13] is an approximate LMV filter for which is an order-β weak approximation to satisfying (10) and the weak convergence condition

for all and any times continuously differentiable function for which and all its partial derivatives up to order have polynomial growth, where is a positive constant. This order-β LMV filter converges with rate to the exact LMV filter as goes to zero,[13] where is the maximum stepsize of the time discretization on which the approximation to is defined.

An order-β innovation estimator is an approximate innovation estimator (9) for which the approximations to the discrete-time innovation (3) and innovation variance (4) result from an order-β LMV filter.[12]

Approximations of any kind converging to in a weak sense (e.g., those in [19][13]) can be used to design an order-β LMV filter and, consequently, an order-β innovation estimator. These order-β innovation estimators are intended for the recurrent practical situation in which a diffusion process must be identified from a reduced number of observations distant in time, or in which high accuracy for the estimated parameters is required.
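A minimal sketch of a simulation-based prediction step follows, assuming the Euler-Maruyama scheme as the weak approximation (weak order 1 under standard regularity conditions). The function name, the scalar setting and the Gaussian draw of the starting value from the current filter mean and variance are assumptions of this sketch, not details taken from the cited algorithms.

```python
import math
import random


def euler_maruyama_predict(drift, diffusion, m0, v0, t0, t1, n_steps, n_paths, seed=2):
    """Monte Carlo prediction of the mean and variance of x(t1).

    The start x(t0) is drawn from N(m0, v0), and the interval [t0, t1] is
    divided into n_steps Euler-Maruyama steps between two observation times.
    """
    rng = random.Random(seed)
    h = (t1 - t0) / n_steps
    sqrt_h = math.sqrt(h)
    total, total_sq = 0.0, 0.0
    for _ in range(n_paths):
        x = m0 + math.sqrt(v0) * rng.gauss(0.0, 1.0)
        t = t0
        for _ in range(n_steps):
            x += drift(t, x) * h + diffusion(t, x) * sqrt_h * rng.gauss(0.0, 1.0)
            t += h
        total += x
        total_sq += x * x
    mean = total / n_paths
    var = total_sq / n_paths - mean * mean
    return mean, var


# Test model dx = -x dt + 0.5 dw started at x = 1, predicted one time unit ahead.
mean, var = euler_maruyama_predict(
    lambda t, x: -x, lambda t, x: 0.5,
    m0=1.0, v0=0.0, t0=0.0, t1=1.0, n_steps=50, n_paths=4000,
)
exact_mean = math.exp(-1.0)
exact_var = 0.25 * (1.0 - math.exp(-2.0)) / 2.0
print(mean, exact_mean)
print(var, exact_var)
```

Refining the inner time grid and increasing the number of paths reduce the discretization and sampling errors, respectively, without changing the observation times.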

Properties

An order-β innovation estimator has a number of important properties:[12][6]

- For each given data of observations, converges to the exact innovation estimator as the maximum stepsize of the time discretization goes to zero.

- For finite samples of observations, the expected value of converges to the expected value of the exact innovation estimator as goes to zero.

- For an increasing number of observations, is asymptotically normally distributed and its bias decreases when goes to zero.

- As with the convergence of the order-β LMV filter to the exact LMV filter, the convergence and asymptotic properties of impose no constraints on the time distance between two consecutive observations and , nor on the time discretization .

- Approximations for the Akaike or Bayesian information criterion and confidence limits are directly obtained by replacing the exact estimator by its approximation . These approximations converge to the corresponding exact ones when the maximum stepsize of the time discretization goes to zero.

- The distribution of the approximate fitting-innovation process measures the goodness of fit of the model to the data, which is also used as a practical tool for model design and for optimal experimental design.

- For smooth enough function , nonlinear observation equations of the form (6) can be transformed to the simpler one (2), and the order-β innovation estimator can be applied.

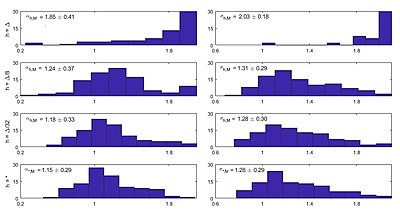

Figure 1 presents the histograms of the differences and between the exact innovation estimator and the conventional and order-β innovation estimators for the parameters and of the equation[12]

obtained from 100 time series of noisy observations

of on the observation times , , with and . The classical and the order-β Local Linearization filters of the innovation estimators and are defined as in [12], respectively, on the uniform time discretizations and , with . The number of stochastic simulations of the order-β Local Linearization filter is estimated via an adaptive sampling algorithm with moderate tolerance. Figure 1 illustrates the convergence of the order-β innovation estimator to the exact innovation estimator as decreases, which substantially improves on the estimation provided by the conventional innovation estimator .

Deterministic approximations

The order-β innovation estimators overcome the drawback of the conventional-type innovation estimators concerning the impossibility of reducing bias.[12] However, a viable bias reduction with an order-β innovation estimator might eventually require that the associated order-β LMV filter perform a large number of stochastic simulations.[13] In situations where only low or medium precision approximate estimators are needed, an alternative deterministic filter algorithm, called the deterministic order-β LMV filter,[13] can be obtained by tracking the first two conditional moments and of the order-β weak approximation at all the time instants in between two consecutive observation times and . That is, the values of the predictions and in the filtering algorithm are computed from the recursive formulas

and with

and with . The approximate innovation estimators defined with these deterministic order-β LMV filters no longer converge to the exact innovation estimator, but allow a significant bias reduction in the estimated parameters for a given finite sample at a lower computational cost.
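The idea of tracking two moments on a fine grid, with no random sampling, can be sketched with a simplified first-order recursion. The recursion below (an Euler step for the mean and a linearized Euler step for the variance of a scalar SDE) is a stand-in chosen for illustration; it is not the Local Linearization recursion of the cited works, and all names are hypothetical.

```python
def deterministic_predict(drift, drift_dx, diffusion, m0, v0, t0, t1, n_steps):
    """Propagate mean m and variance v from t0 to t1 on a fine uniform grid.

    Mean:     m <- m + f(t, m) h
    Variance: v <- (1 + f_x(t, m) h)^2 v + g(t, m)^2 h
    where f is the drift, f_x its state derivative and g the diffusion.
    """
    h = (t1 - t0) / n_steps
    m, v, t = m0, v0, t0
    for _ in range(n_steps):
        amplification = 1.0 + drift_dx(t, m) * h
        g = diffusion(t, m)
        m, v = m + drift(t, m) * h, amplification * amplification * v + g * g * h
        t += h
    return m, v


# Test model dx = -x dt + 0.5 dw started at x = 1 with zero initial variance.
m_pred, v_pred = deterministic_predict(
    drift=lambda t, x: -x,
    drift_dx=lambda t, x: -1.0,
    diffusion=lambda t, x: 0.5,
    m0=1.0, v0=0.0, t0=0.0, t1=1.0, n_steps=100,
)
print(m_pred, v_pred)
```

For this linear test model the exact values are exp(-1) for the mean and 0.25 (1 - exp(-2)) / 2 for the variance, so the remaining error comes only from the step size.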

Figure 2 presents the histograms and the confidence limits of the approximate innovation estimators and for the parameters and of the Van der Pol oscillator with random frequency[12]

obtained from 100 time series of partial and noisy observations

of on the observation times , , with and . The deterministic order-β Local Linearization filter of the innovation estimators and is defined,[12] for each estimator, on uniform time discretizations , with and on an adaptive time-stepping discretization with moderate relative and absolute tolerances, respectively. Observe the bias reduction of the estimated parameter as decreases.

Software

A MATLAB implementation of various approximate innovation estimators is provided by the SdeEstimation toolbox.[20] This toolbox has Local Linearization filters, including deterministic and stochastic options with fixed step sizes and sample numbers. It also offers adaptive time stepping and sampling algorithms, along with local and global optimization algorithms for innovation estimation. For models with complete observations free of noise, various approximations to the Quasi-Maximum Likelihood estimator are implemented in R.[21]

References

- [1] Ozaki, Tohru (1994), Bozdogan, H.; Sclove, S. L.; Gupta, A. K. et al., eds., "The Local Linearization Filter with Application to Nonlinear System Identifications", Proceedings of the First US/Japan Conference on the Frontiers of Statistical Modeling: An Informational Approach: Volume 3 Engineering and Scientific Applications (Dordrecht: Springer Netherlands): pp. 217–240, doi:10.1007/978-94-011-0854-6_10, ISBN 978-94-011-0854-6, https://doi.org/10.1007/978-94-011-0854-6_10, retrieved 2023-07-06

- [2] Jazwinski A.H., Stochastic Processes and Filtering Theory, Academic Press, New York, 1970.

- [3] Nielsen, Jan Nygaard; Vestergaard, Martin (2000). "Estimation in continuous-time stochastic volatility models using nonlinear filters". International Journal of Theoretical and Applied Finance 03 (2): 279–308. doi:10.1142/S0219024900000139. ISSN 0219-0249. https://www.worldscientific.com/doi/abs/10.1142/S0219024900000139.

- [4] Kailath T., Lectures on Wiener and Kalman Filtering. New York: Springer-Verlag, 1981.

- [5] Jimenez, J. C.; Ozaki, T. (2006). "An Approximate Innovation Method For The Estimation Of Diffusion Processes From Discrete Data". Journal of Time Series Analysis 27 (1): 77–97. doi:10.1111/j.1467-9892.2005.00454.x. ISSN 0143-9782. https://onlinelibrary.wiley.com/doi/10.1111/j.1467-9892.2005.00454.x.

- [6] Jimenez, J. C.; Yoshimoto, A.; Miwakeichi, F. (2021-08-24). "State and parameter estimation of stochastic physical systems from uncertain and indirect measurements". The European Physical Journal Plus 136 (8): 869. doi:10.1140/epjp/s13360-021-01859-1. ISSN 2190-5444. Bibcode: 2021EPJP..136..869J. https://doi.org/10.1140/epjp/s13360-021-01859-1.

- [7] Schweppe, F. (1965). "Evaluation of likelihood functions for Gaussian signals". IEEE Transactions on Information Theory 11 (1): 61–70. doi:10.1109/TIT.1965.1053737. ISSN 1557-9654. https://ieeexplore.ieee.org/document/1053737.

- [8] Ljung L., System Identification, Theory for the User (2nd edn). Englewood Cliffs: Prentice Hall, 1999.

- [9] Ljung, Lennart; Caines, Peter E. (1980). "Asymptotic normality of prediction error estimators for approximate system models". Stochastics 3 (1–4): 29–46. doi:10.1080/17442507908833135. ISSN 0090-9491. http://www.tandfonline.com/doi/abs/10.1080/17442507908833135.

- [10] Nolsoe K., Nielsen, J.N., Madsen H. (2000) "Prediction-based estimating function for diffusion processes with measurement noise", Technical Reports 2000, No. 10, Informatics and Mathematical Modelling, Technical University of Denmark.

- [11] Ozaki, T.; Jimenez, J. C.; Haggan-Ozaki, V. (2000). "The Role of the Likelihood Function in the Estimation of Chaos Models". Journal of Time Series Analysis 21 (4): 363–387. doi:10.1111/1467-9892.00189. ISSN 0143-9782. https://onlinelibrary.wiley.com/doi/10.1111/1467-9892.00189.

- [12] Jimenez, J.C. (2020). "Bias reduction in the estimation of diffusion processes from discrete observations". IMA Journal of Mathematical Control and Information 37 (4): 1468–1505. doi:10.1093/imamci/dnaa021. https://academic.oup.com/imamci/article/37/4/1468/5903959. Retrieved 2023-07-06.

- [13] Jimenez, J.C. (2019). "Approximate linear minimum variance filters for continuous-discrete state space models: convergence and practical adaptive algorithms". IMA Journal of Mathematical Control and Information 36 (2): 341–378. doi:10.1093/imamci/dnx047. https://academic.oup.com/imamci/article/36/2/341/4634018. Retrieved 2023-07-06.

- [14] Shoji, Isao (1998). "A comparative study of maximum likelihood estimators for nonlinear dynamical system models". International Journal of Control 71 (3): 391–404. doi:10.1080/002071798221731. ISSN 0020-7179. https://www.tandfonline.com/doi/full/10.1080/002071798221731.

- [15] Nielsen, Jan Nygaard; Madsen, Henrik (2001-01-01). "Applying the EKF to stochastic differential equations with level effects". Automatica 37 (1): 107–112. doi:10.1016/S0005-1098(00)00128-X. ISSN 0005-1098. https://www.sciencedirect.com/science/article/pii/S000510980000128X.

- [16] Singer, Hermann (2002). "Parameter Estimation of Nonlinear Stochastic Differential Equations: Simulated Maximum Likelihood versus Extended Kalman Filter and Itô-Taylor Expansion". Journal of Computational and Graphical Statistics 11 (4): 972–995. doi:10.1198/106186002808. ISSN 1061-8600. http://www.tandfonline.com/doi/abs/10.1198/106186002808.

- [17] Ozaki, Tohru; Iino, Mitsunori (2001). "An innovation approach to non-Gaussian time series analysis". Journal of Applied Probability 38 (A): 78–92. doi:10.1239/jap/1085496593. ISSN 0021-9002. https://www.cambridge.org/core/journals/journal-of-applied-probability/article/abs/an-innovation-approach-to-nongaussian-time-series-analysis/B22EEB7243BC878CEB6DA367B0EEE7F5.

- [18] Peng, H.; Ozaki, T.; Jimenez, J.C. (2002). "Modeling and control for foreign exchange based on a continuous time stochastic microstructure model". Proceedings of the 41st IEEE Conference on Decision and Control, 2002. 4. pp. 4440–4445 vol.4. doi:10.1109/CDC.2002.1185071. ISBN 0-7803-7516-5. https://ieeexplore.ieee.org/document/1185071.

- [19] Kloeden P.E., Platen E., Numerical Solution of Stochastic Differential Equations, 3rd edn. Berlin: Springer, 1999.

- [20] "GitHub - locallinearization/SdeEstimation". https://github.com/locallinearization/SdeEstimation.

- [21] Iacus S.M., Simulation and inference for stochastic differential equations: with R examples, New York: Springer, 2008.