Goodness of fit

| Part of a series on |

| Regression analysis |

|---|

|

| Models |

| Estimation |

| Background |

|

|

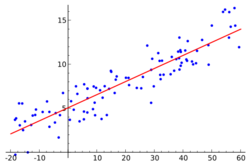

The goodness of fit of a statistical model describes how well it fits a set of observations. Measures of goodness of fit typically summarize the discrepancy between observed values and the values expected under the model in question. Such measures can be used in statistical hypothesis testing, e.g. to test for normality of residuals, to test whether two samples are drawn from identical distributions (see Kolmogorov–Smirnov test), or whether outcome frequencies follow a specified distribution (see Pearson's chi-square test). In the analysis of variance, one of the components into which the variance is partitioned may be a lack-of-fit sum of squares.

Fit of distributions

In assessing whether a given distribution is suited to a data set, the following tests and their underlying measures of fit can be used:

- Bayesian information criterion

- Kolmogorov–Smirnov test

- Cramér–von Mises criterion

- Anderson–Darling test

- Berk–Jones tests[1][2]

- Shapiro–Wilk test

- Chi-squared test

- Akaike information criterion

- Hosmer–Lemeshow test

- Kuiper's test

- Kernelized Stein discrepancy[3][4]

- Zhang's ZK, ZC and ZA tests[5]

- Moran test

- Density Based Empirical Likelihood Ratio tests[6]
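As an illustration of one measure from the list above, the following is a minimal sketch of the one-sample Kolmogorov–Smirnov statistic, the largest absolute gap between the empirical distribution function of a sample and a hypothesized cumulative distribution function. The function name `ks_statistic` and the sample values are made up for illustration; the sketch computes only the statistic, not a p-value.

```python
from statistics import NormalDist


def ks_statistic(sample, cdf):
    """Return D = sup_x |F_n(x) - F(x)| for a sample and a hypothesized CDF."""
    xs = sorted(sample)
    n = len(xs)
    d = 0.0
    for i, x in enumerate(xs):
        f = cdf(x)
        # The empirical CDF jumps from i/n to (i + 1)/n at x,
        # so the gap is checked on both sides of the jump.
        d = max(d, (i + 1) / n - f, f - i / n)
    return d


# Hypothetical data compared against a standard normal distribution.
sample = [-1.2, -0.4, 0.1, 0.3, 0.9, 1.5]
d = ks_statistic(sample, NormalDist(0.0, 1.0).cdf)
print(f"D = {d:.4f}")
```

A small D indicates that the empirical and hypothesized distribution functions stay close; turning D into a significance statement requires the Kolmogorov distribution, which is left out here.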

Regression analysis

In regression analysis, more specifically regression validation, the following topics relate to goodness of fit:

- Coefficient of determination (the R-squared measure of goodness of fit)

- Lack-of-fit sum of squares

- Mallows's Cp criterion

- Prediction error

- Reduced chi-square
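For the first item in the list above, a minimal sketch of the coefficient of determination may help. It uses the common definition of R-squared as one minus the ratio of the residual sum of squares to the total sum of squares; the function name `r_squared` and the data are assumptions made for illustration.

```python
def r_squared(y_observed, y_predicted):
    """Return R^2 = 1 - SS_res / SS_tot for paired observed and fitted values."""
    mean_y = sum(y_observed) / len(y_observed)
    # Residual sum of squares: discrepancy between observations and the model.
    ss_res = sum((y - f) ** 2 for y, f in zip(y_observed, y_predicted))
    # Total sum of squares: spread of the observations around their mean.
    ss_tot = sum((y - mean_y) ** 2 for y in y_observed)
    return 1.0 - ss_res / ss_tot


# Hypothetical observations and model predictions.
y = [1.0, 2.0, 3.0, 4.0]
y_hat = [1.1, 1.9, 3.2, 3.8]
r2 = r_squared(y, y_hat)
print(f"R^2 = {r2:.3f}")
```

A value near 1 means the model accounts for most of the variation in the observations; the sketch assumes the observations are not all identical, since the total sum of squares would then be zero.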

Categorical data

The following are examples that arise in the context of categorical data.

Pearson's chi-square test

Pearson's chi-square test uses a measure of goodness of fit which is the sum of differences between observed and expected outcome frequencies (that is, counts of observations), each squared and divided by the expectation:

$$\chi^2 = \sum_{i=1}^{k} \frac{(O_i - E_i)^2}{E_i}$$

where:

- $O_i$ = an observed count for bin i

- $E_i$ = an expected count for bin i, asserted by the null hypothesis.

The expected frequency is calculated by:

$$E_i = \bigl(F(Y_u) - F(Y_l)\bigr)\, N$$

where:

- $F$ = the cumulative distribution function for the probability distribution being tested,

- $Y_u$ = the upper limit for class i,

- $Y_l$ = the lower limit for class i, and

- $N$ = the sample size.

The resulting value can be compared with a chi-square distribution to determine the goodness of fit. The chi-square distribution has (k − c) degrees of freedom, where k is the number of non-empty cells and c is the number of estimated parameters (including location and scale parameters and shape parameters) for the distribution plus one. For example, for a 3-parameter Weibull distribution, c = 4.
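The calculation described above can be sketched as follows. Expected counts come from differences of the hypothesized cumulative distribution function at the class limits, multiplied by the sample size, and the degrees of freedom are k − c. The function name `pearson_chi_square`, the bin edges, and the counts are illustrative assumptions; the sketch also assumes every bin is non-empty, so k equals the number of bins.

```python
import math
from statistics import NormalDist


def pearson_chi_square(observed, edges, cdf, n_estimated_params=0):
    """Return (chi2, dof) for binned counts against a hypothesized CDF.

    observed: counts per bin (assumed non-empty here).
    edges: bin limits, one more entry than there are bins.
    n_estimated_params: distribution parameters estimated from the data.
    """
    n = sum(observed)
    chi2 = 0.0
    for i, o in enumerate(observed):
        # Expected count: N * (F(upper limit) - F(lower limit)).
        expected = n * (cdf(edges[i + 1]) - cdf(edges[i]))
        chi2 += (o - expected) ** 2 / expected
    k = len(observed)           # number of cells
    c = n_estimated_params + 1  # estimated parameters plus one
    return chi2, k - c


# Hypothetical counts in four bins, tested against a fully specified
# standard normal distribution (no parameters estimated, so c = 1).
observed = [18, 32, 34, 16]
edges = [-math.inf, -1.0, 0.0, 1.0, math.inf]
chi2, dof = pearson_chi_square(observed, edges, NormalDist(0.0, 1.0).cdf)
print(f"chi2 = {chi2:.4f}, dof = {dof}")
```

The statistic would then be compared with a chi-square distribution with `dof` degrees of freedom, using a table or a statistics library. Using infinite outer limits makes the bin probabilities sum to one, so the expected counts add up to the sample size.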

Binomial case

A binomial experiment is a sequence of independent trials in which each trial results in one of two outcomes, success or failure. There are n trials, each with probability of success p. Provided that $np_i \gg 1$ for every i (where i = 1, 2, ..., k), then

$$\chi^2 = \sum_{i=1}^{k} \frac{(N_i - np_i)^2}{np_i} = \sum_{\text{all cells}} \frac{(O - E)^2}{E}$$

where $N_i$ is the observed count in cell i and $np_i$ is the corresponding expected count. This has approximately a chi-square distribution with k − 1 degrees of freedom. The k − 1 degrees of freedom are a consequence of the restriction $\sum_{i=1}^{k} N_i = n$: there are k observed cell counts, but once any k − 1 of them are known, the remaining one is uniquely determined. Only k − 1 cell counts can vary freely, hence the k − 1 degrees of freedom.
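A short worked sketch of this case follows, with cell probabilities fully specified in advance so that the expected count in each cell is n times its probability. The function name `chi_square_known_probs` and the die-roll counts are made up for illustration.

```python
def chi_square_known_probs(counts, probs):
    """Return (chi2, dof) when the cell probabilities are given, not estimated."""
    n = sum(counts)  # the cell counts are constrained to add up to n
    chi2 = sum((c - n * p) ** 2 / (n * p) for c, p in zip(counts, probs))
    # One count is fixed by the others, leaving k - 1 free cell counts.
    return chi2, len(counts) - 1


# Hypothetical outcome of 60 rolls of a die assumed to be fair.
counts = [8, 12, 9, 11, 10, 10]
probs = [1 / 6] * 6
chi2, dof = chi_square_known_probs(counts, probs)
print(f"chi2 = {chi2:.4f}, dof = {dof}")
```

Each expected count here is 10, comfortably above the small values for which the chi-square approximation becomes unreliable.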

G-test

G-tests are likelihood-ratio tests of statistical significance that are increasingly being used in situations where Pearson's chi-square tests were previously recommended.[7]

The general formula for G is

$$G = 2 \sum_{i} O_i \ln\left(\frac{O_i}{E_i}\right)$$

where $O_i$ and $E_i$ are the same as for the chi-square test, $\ln$ denotes the natural logarithm, and the sum is taken over all non-empty cells. Furthermore, the total observed count should be equal to the total expected count:

$$\sum_{i} O_i = \sum_{i} E_i = N$$

where $N$ is the total number of observations.
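The G statistic can be sketched in a few lines: twice the sum, over non-empty cells, of the observed count times the natural logarithm of the ratio of observed to expected count. The function name `g_statistic` and the counts below are illustrative assumptions, and the check on totals mirrors the requirement that observed and expected counts add up to the same number.

```python
import math


def g_statistic(observed, expected):
    """Return G = 2 * sum(O * ln(O / E)) over cells with O > 0."""
    if not math.isclose(sum(observed), sum(expected)):
        raise ValueError("total observed and total expected counts must match")
    # Empty cells (O = 0) contribute nothing and are skipped.
    return 2.0 * sum(
        o * math.log(o / e) for o, e in zip(observed, expected) if o > 0
    )


# Hypothetical counts from 60 trials with six equally likely outcomes.
observed = [8, 12, 9, 11, 10, 10]
expected = [10.0] * 6
g = g_statistic(observed, expected)
print(f"G = {g:.4f}")
```

Like Pearson's statistic, G is compared with a chi-square distribution with the appropriate degrees of freedom; for counts close to their expectations the two statistics take similar values.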

G-tests have been recommended at least since the 1981 edition of the popular statistics textbook by Robert R. Sokal and F. James Rohlf.[8]

See also

- All models are wrong

- Deviance (statistics) (related to GLM)

- Overfitting

- Statistical model validation

- Theil–Sen estimator

References

1. Berk, Robert H.; Jones, Douglas H. (1979). "Goodness-of-fit test statistics that dominate the Kolmogorov statistics". Zeitschrift für Wahrscheinlichkeitstheorie und Verwandte Gebiete 47 (1): 47–59. doi:10.1007/BF00533250.

2. Moscovich, Amit; Nadler, Boaz; Spiegelman, Clifford (2016). "On the exact Berk-Jones statistics and their p-value calculation". Electronic Journal of Statistics 10 (2). doi:10.1214/16-EJS1172.

3. Liu, Qiang; Lee, Jason; Jordan, Michael (20 June 2016). "A Kernelized Stein Discrepancy for Goodness-of-fit Tests". The 33rd International Conference on Machine Learning. New York, New York, USA: Proceedings of Machine Learning Research. pp. 276–284. http://proceedings.mlr.press/v48/liub16.html.

4. Chwialkowski, Kacper; Strathmann, Heiko; Gretton, Arthur (20 June 2016). "A Kernel Test of Goodness of Fit". The 33rd International Conference on Machine Learning. New York, New York, USA: Proceedings of Machine Learning Research. pp. 2606–2615. http://proceedings.mlr.press/v48/chwialkowski16.html.

5. Zhang, Jin (2002). "Powerful goodness-of-fit tests based on the likelihood ratio". J. R. Stat. Soc. B 64 (2): 281–294. doi:10.1111/1467-9868.00337. http://anakena.dcc.uchile.cl/~mnmonsal/eso.pdf. Retrieved 5 November 2018.

6. Vexler, Albert; Gurevich, Gregory (2010). "Empirical Likelihood Ratios Applied to Goodness-of-Fit Tests Based on Sample Entropy". Computational Statistics and Data Analysis 54 (2): 531–545. doi:10.1016/j.csda.2009.09.025.

7. McDonald, J.H. (2014). "G–test of goodness-of-fit". Handbook of Biological Statistics (Third ed.). Baltimore, Maryland: Sparky House Publishing. pp. 53–58. http://www.biostathandbook.com/gtestgof.html.

8. Sokal, R. R.; Rohlf, F. J. (1981). Biometry: The Principles and Practice of Statistics in Biological Research (Second ed.). W. H. Freeman. ISBN 0-7167-2411-1. https://archive.org/details/biometryprincipl00soka_0.

Further reading

- Huber-Carol, C.; Balakrishnan, N.; Nikulin, M. S. et al., eds. (2002), Goodness-of-Fit Tests and Model Validity, Springer

- Ingster, Yu. I.; Suslina, I. A. (2003), Nonparametric Goodness-of-Fit Testing Under Gaussian Models, Springer

- Rayner, J. C. W.; Thas, O.; Best, D. J. (2009), Smooth Tests of Goodness of Fit (2nd ed.), Wiley

- Vexler, Albert; Gurevich, Gregory (2010), "Empirical likelihood ratios applied to goodness-of-fit tests based on sample entropy", Computational Statistics & Data Analysis 54 (2): 531–545, doi:10.1016/j.csda.2009.09.025
