Akaike information criterion

The Akaike information criterion (AIC) is an estimator of prediction error and thereby relative quality of statistical models for a given set of data.[1][2][3] Given a collection of models for the data, AIC estimates the quality of each model, relative to each of the other models. Thus, AIC provides a means for model selection.

AIC is founded on information theory. When a statistical model is used to represent the process that generated the data, the representation will almost never be exact; so some information will be lost by using the model to represent the process. AIC estimates the relative amount of information lost by a given model: the less information a model loses, the higher the quality of that model.

In estimating the amount of information lost by a model, AIC deals with the trade-off between the goodness of fit of the model and the simplicity of the model. In other words, AIC deals with both the risk of overfitting and the risk of underfitting.

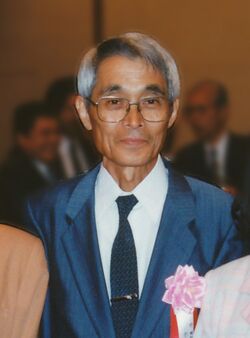

The Akaike information criterion is named after the Japanese statistician Hirotugu Akaike, who formulated it. It now forms the basis of a paradigm for the foundations of statistics and is also widely used for statistical inference.

Definition

Suppose that we have a statistical model of some data. Let k be the number of estimated parameters in the model. Let $\hat{L}$ be the maximized value of the likelihood function for the model. Then the AIC value of the model is the following.[4][5]

$$\mathrm{AIC} = 2k - 2\ln(\hat{L})$$

Given a set of candidate models for the data, the preferred model is the one with the minimum AIC value. Thus, AIC rewards goodness of fit (as assessed by the likelihood function), but it also includes a penalty that is an increasing function of the number of estimated parameters. The penalty discourages overfitting, which is desired because increasing the number of parameters in the model almost always improves the goodness of the fit.
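As a minimal sketch of this selection rule, the following Python snippet computes AIC from the standard definition, 2k − 2 ln(L̂), and picks the candidate with the smallest value. The model names, log-likelihoods, and parameter counts are hypothetical values chosen only for illustration.

```python
def aic(max_log_likelihood: float, k: int) -> float:
    """Return AIC = 2k - 2 ln(L_hat); max_log_likelihood is ln(L_hat)."""
    return 2 * k - 2 * max_log_likelihood


# Hypothetical candidates: (name, maximized log-likelihood, estimated parameters).
candidates = [
    ("small", -120.0, 2),
    ("medium", -115.5, 4),
    ("large", -115.0, 9),
]

scores = {name: aic(loglik, k) for name, loglik, k in candidates}
best = min(scores, key=scores.get)  # preferred model: minimum AIC
```

Here the "large" model has the highest likelihood, but its extra parameters are penalized enough that the "medium" model has the lowest AIC.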

Suppose that the data is generated by some unknown process f. We consider two candidate models to represent f: g1 and g2. If we knew f, then we could find the information lost from using g1 to represent f by calculating the Kullback–Leibler divergence, DKL(f ‖ g1); similarly, the information lost from using g2 to represent f could be found by calculating DKL(f ‖ g2). We would then, generally, choose the candidate model that minimized the information loss.
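The idea can be illustrated for discrete distributions, where the Kullback–Leibler divergence is a finite sum. The sketch below assumes, purely for illustration, that f is known; the three probability vectors are hypothetical.

```python
import math


def kl_divergence(f, g):
    """D_KL(f || g) in nats for two discrete distributions on the same support."""
    return sum(fi * math.log(fi / gi) for fi, gi in zip(f, g) if fi > 0)


f = [0.5, 0.3, 0.2]   # hypothetical generating process (unknown in practice)
g1 = [0.4, 0.4, 0.2]  # first candidate model
g2 = [0.8, 0.1, 0.1]  # second candidate model

loss_g1 = kl_divergence(f, g1)
loss_g2 = kl_divergence(f, g2)
preferred = "g1" if loss_g1 < loss_g2 else "g2"
```

In this toy case g1 loses less information than g2, so g1 would be chosen.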

We cannot choose with certainty, because we do not know f. (Akaike 1974) showed, however, that we can estimate, via AIC, how much more (or less) information is lost by g1 than by g2. The estimate, though, is only valid asymptotically; if the number of data points is small, then some correction is often necessary (see AICc, below).

Note that AIC tells nothing about the absolute quality of a model, only the quality relative to other models. Thus, if all the candidate models fit poorly, AIC will not give any warning of that. Hence, after selecting a model via AIC, it is usually good practice to validate the absolute quality of the model. Such validation commonly includes checks of the model's residuals (to determine whether the residuals seem random) and tests of the model's predictions. For more on this topic, see statistical model validation.

How to use AIC in practice

To apply AIC in practice, we start with a set of candidate models, and then find the models' corresponding AIC values. There will almost always be information lost due to using a candidate model to represent the "true model," i.e. the process that generated the data. We wish to select, from among the candidate models, the model that minimizes the information loss. We cannot choose with certainty, but we can minimize the estimated information loss.

Suppose that there are R candidate models. Denote the AIC values of those models by AIC1, AIC2, AIC3, ..., AICR. Let AICmin be the minimum of those values. Then the quantity exp((AICmin − AICi)/2) can be interpreted as being proportional to the probability that the ith model minimizes the (estimated) information loss.[6]

As an example, suppose that there are three candidate models, whose AIC values are 100, 102, and 110. Then the second model is exp((100 − 102)/2) = 0.368 times as probable as the first model to minimize the information loss. Similarly, the third model is exp((100 − 110)/2) = 0.007 times as probable as the first model to minimize the information loss.

In this example, we would omit the third model from further consideration. We then have three options: (1) gather more data, in the hope that this will allow clearly distinguishing between the first two models; (2) simply conclude that the data is insufficient to support selecting one model from among the first two; (3) take a weighted average of the first two models, with weights proportional to 1 and 0.368, respectively, and then do statistical inference based on the weighted multimodel.[7]
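The arithmetic of this example can be reproduced with a short Python sketch. The function names are illustrative; normalizing the relative likelihoods so that they sum to 1 gives the weights used for the weighted average in option (3).

```python
import math


def relative_likelihoods(aic_values):
    """exp((AIC_min - AIC_i) / 2) for each candidate model."""
    aic_min = min(aic_values)
    return [math.exp((aic_min - a) / 2) for a in aic_values]


def normalized_weights(aic_values):
    """Relative likelihoods rescaled to sum to 1."""
    rel = relative_likelihoods(aic_values)
    total = sum(rel)
    return [r / total for r in rel]


rel = relative_likelihoods([100, 102, 110])
weights = normalized_weights([100, 102])  # third model dropped
```

Rounded to three decimals, `rel` is 1, 0.368 and 0.007, matching the values in the text, and the weights for the first two models are about 0.731 and 0.269.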

The quantity exp((AICmin − AICi)/2) is known as the relative likelihood of model i. It is closely related to the likelihood ratio used in the likelihood-ratio test. Indeed, if all the models in the candidate set have the same number of parameters, then using AIC might at first appear to be very similar to using the likelihood-ratio test. There are, however, important distinctions. In particular, the likelihood-ratio test is valid only for nested models, whereas AIC (and AICc) has no such restriction.[8][9]

Hypothesis testing

Every statistical hypothesis test can be formulated as a comparison of statistical models. Hence, every statistical hypothesis test can be replicated via AIC. Two examples are briefly described in the subsections below. Details for those examples, and many more examples, are given by Sakamoto, Ishiguro & Kitagawa (1986) and Konishi & Kitagawa (2008).

Replicating Student's t-test

As an example of a hypothesis test, consider the t-test to compare the means of two normally-distributed populations. The input to the t-test comprises a random sample from each of the two populations.

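To formulate the test as a comparison of models, we construct two different models. The first model models the two populations as having potentially different means and standard deviations. The likelihood function for the first model is thus the product of the likelihoods for two distinct normal distributions; so it has four parameters: μ1, σ1, μ2, σ2. To be explicit, the likelihood function is as follows (denoting the sample sizes by n1 and n2).

$$\mathcal{L}(\mu_1, \sigma_1, \mu_2, \sigma_2) = \prod_{i=1}^{n_1} \frac{1}{\sqrt{2\pi}\,\sigma_1} \exp\left(-\frac{(x_i - \mu_1)^2}{2\sigma_1^2}\right) \cdot \prod_{i=n_1+1}^{n_1+n_2} \frac{1}{\sqrt{2\pi}\,\sigma_2} \exp\left(-\frac{(x_i - \mu_2)^2}{2\sigma_2^2}\right)$$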

The second model models the two populations as having the same means and the same standard deviations. The likelihood function for the second model thus sets μ1 = μ2 and σ1 = σ2 in the above equation; so it only has two parameters.

We then maximize the likelihood functions for the two models (in practice, we maximize the log-likelihood functions); after that, it is easy to calculate the AIC values of the models. We next calculate the relative likelihood. For instance, if the second model was only 0.01 times as likely as the first model, then we would omit the second model from further consideration: so we would conclude that the two populations have different means.

The t-test assumes that the two populations have identical standard deviations; the test tends to be unreliable if the assumption is false and the sizes of the two samples are very different (Welch's t-test would be better). Comparing the means of the populations via AIC, as in the example above, has a similar disadvantage: because the second model forces both the means and the standard deviations to be equal, a poor fit of the second model may reflect unequal standard deviations rather than unequal means. However, one could create a third model that sets μ1 = μ2 but allows different standard deviations (three parameters: μ, σ1, σ2). Comparing the first model with this third model avoids the equal-variance assumption, at the cost of one more parameter than the second model.
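The comparison of the first two models can be sketched in Python using the closed-form maximum of the normal log-likelihood, −(n/2)(ln(2πσ̂²) + 1), where σ̂² is the maximum likelihood variance estimate (dividing by n). The two samples are hypothetical, and the helper name is illustrative.

```python
import math


def max_loglik_normal(samples):
    """Maximized log-likelihood of a single normal distribution fitted by ML."""
    n = len(samples)
    mean = sum(samples) / n
    var = sum((x - mean) ** 2 for x in samples) / n  # ML estimate divides by n
    return -0.5 * n * (math.log(2 * math.pi * var) + 1)


# Hypothetical samples from the two populations.
sample_1 = [5.1, 4.9, 5.6, 5.8, 5.2, 5.4]
sample_2 = [6.3, 6.8, 6.1, 7.0, 6.6, 6.4]

# First model: separate mean and standard deviation per population (k = 4).
aic_separate = 2 * 4 - 2 * (max_loglik_normal(sample_1) + max_loglik_normal(sample_2))
# Second model: one shared mean and standard deviation (k = 2).
aic_shared = 2 * 2 - 2 * max_loglik_normal(sample_1 + sample_2)

# Relative likelihood of the second model with respect to the first.
rel_shared = math.exp((min(aic_separate, aic_shared) - aic_shared) / 2)
```

For these data the shared-parameter model has a relative likelihood well below 0.01, so it would be omitted from further consideration.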

Comparing categorical data sets

For another example of a hypothesis test, suppose that we have two populations, and each member of each population is in one of two categories—category #1 or category #2. Each population is binomially distributed. We want to know whether the distributions of the two populations are the same. We are given a random sample from each of the two populations.

Let m be the size of the sample from the first population. Let m1 be the number of observations (in the sample) in category #1; so the number of observations in category #2 is m − m1. Similarly, let n be the size of the sample from the second population. Let n1 be the number of observations (in the sample) in category #1.

Let p be the probability that a randomly-chosen member of the first population is in category #1. Hence, the probability that a randomly-chosen member of the first population is in category #2 is 1 − p. Note that the distribution of the first population has one parameter. Let q be the probability that a randomly-chosen member of the second population is in category #1. Note that the distribution of the second population also has one parameter.

To compare the distributions of the two populations, we construct two different models. The first model models the two populations as having potentially different distributions. The likelihood function for the first model is thus the product of the likelihoods for two distinct binomial distributions; so it has two parameters: p, q. To be explicit, the likelihood function is as follows.

$$\mathcal{L}(p, q) = \frac{m!}{m_1!\,(m - m_1)!}\, p^{m_1} (1 - p)^{m - m_1} \times \frac{n!}{n_1!\,(n - n_1)!}\, q^{n_1} (1 - q)^{n - n_1}$$

The second model models the two populations as having the same distribution. The likelihood function for the second model thus sets p = q in the above equation; so the second model has one parameter.

We then maximize the likelihood functions for the two models (in practice, we maximize the log-likelihood functions); after that, it is easy to calculate the AIC values of the models. We next calculate the relative likelihood. For instance, if the second model was only 0.01 times as likely as the first model, then we would omit the second model from further consideration: so we would conclude that the two populations have different distributions.
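A minimal Python sketch of this comparison follows. It uses the fact that the maximum likelihood estimate of a binomial probability is the observed proportion. The counts are hypothetical, and the sketch assumes every estimated proportion lies strictly between 0 and 1.

```python
import math


def binom_loglik(successes, trials, prob):
    """Binomial log-likelihood; assumes 0 < prob < 1."""
    return (math.log(math.comb(trials, successes))
            + successes * math.log(prob)
            + (trials - successes) * math.log(1 - prob))


m, m1 = 50, 30  # hypothetical first sample: size and count in category #1
n, n1 = 60, 20  # hypothetical second sample

# First model: distinct probabilities p and q (k = 2).
loglik_distinct = binom_loglik(m1, m, m1 / m) + binom_loglik(n1, n, n1 / n)
aic_distinct = 2 * 2 - 2 * loglik_distinct

# Second model: common probability p = q (k = 1), estimated from pooled counts.
pooled = (m1 + n1) / (m + n)
loglik_shared = binom_loglik(m1, m, pooled) + binom_loglik(n1, n, pooled)
aic_shared = 2 * 1 - 2 * loglik_shared

rel_shared = math.exp((min(aic_distinct, aic_shared) - aic_shared) / 2)
```

With these counts the model with distinct probabilities has the lower AIC, and the relative likelihood of the common-probability model is roughly 0.05.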

Foundations of statistics

Statistical inference is generally regarded as comprising hypothesis testing and estimation. Hypothesis testing can be done via AIC, as discussed above. Regarding estimation, there are two types: point estimation and interval estimation. Point estimation can be done within the AIC paradigm: it is provided by maximum likelihood estimation. Interval estimation can also be done within the AIC paradigm: it is provided by likelihood intervals. Hence, statistical inference generally can be done within the AIC paradigm.

The most commonly used paradigms for statistical inference are frequentist inference and Bayesian inference. AIC, though, can be used to do statistical inference without relying on either the frequentist paradigm or the Bayesian paradigm: because AIC can be interpreted without the aid of significance levels or Bayesian priors.[10] In other words, AIC can be used to form a foundation of statistics that is distinct from both frequentism and Bayesianism.[11][12]

Modification for small sample size

When the sample size is small, there is a substantial probability that AIC will select models that have too many parameters, i.e. that AIC will overfit.[13][14][15] To address such potential overfitting, AICc was developed: AICc is AIC with a correction for small sample sizes.

The formula for AICc depends upon the statistical model. Assuming that the model is univariate, is linear in its parameters, and has normally-distributed residuals (conditional upon regressors), then the formula for AICc is as follows.[16][17][18][19]

$$\mathrm{AICc} = \mathrm{AIC} + \frac{2k^2 + 2k}{n - k - 1}$$

Here n denotes the sample size and k denotes the number of parameters. Thus, AICc is essentially AIC with an extra penalty term for the number of parameters. Note that as n → ∞, the extra penalty term converges to 0, and thus AICc converges to AIC.[20]
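For the univariate linear case with normal residuals, the correction is AIC + (2k² + 2k)/(n − k − 1). The following Python sketch implements that formula only; the function name and the guard against n ≤ k + 1 are choices made for this illustration.

```python
def aicc(aic_value: float, n: int, k: int) -> float:
    """AICc for a univariate linear model with normally distributed residuals."""
    if n - k - 1 <= 0:
        raise ValueError("AICc is undefined unless n > k + 1")
    return aic_value + (2 * k * k + 2 * k) / (n - k - 1)


small_sample = aicc(100.0, n=20, k=3)      # extra penalty is 24 / 16 = 1.5
large_sample = aicc(100.0, n=10_000, k=3)  # extra penalty is nearly 0
```

With n = 20 and k = 3 the extra penalty is 1.5, whereas with n = 10,000 it is below 0.01, which illustrates the convergence of AICc to AIC.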

If the assumption that the model is univariate and linear with normal residuals does not hold, then the formula for AICc will generally be different from the formula above. For some models, the formula can be difficult to determine. For every model that has AICc available, though, the formula for AICc is given by AIC plus terms that include both k and k². In comparison, the formula for AIC includes k but not k². In other words, AIC is a first-order estimate (of the information loss), whereas AICc is a second-order estimate.[21]

Further discussion of the formula, with examples of other assumptions, is given by Burnham & Anderson (2002) and by Konishi & Kitagawa (2008). In particular, with other assumptions, bootstrap estimation of the formula is often feasible.

To summarize, AICc has the advantage of tending to be more accurate than AIC (especially for small samples), but AICc also has the disadvantage of sometimes being much more difficult to compute than AIC. Note that if all the candidate models have the same k and the same formula for AICc, then AICc and AIC will give identical (relative) valuations; hence, there will be no disadvantage in using AIC, instead of AICc. Furthermore, if n is many times larger than k², then the extra penalty term will be negligible; hence, the disadvantage in using AIC, instead of AICc, will be negligible.

History

The Akaike information criterion was formulated by the statistician Hirotugu Akaike. It was originally named "an information criterion".[22] It was first announced in English by Akaike at a 1971 symposium; the proceedings of the symposium were published in 1973.[22][23] The 1973 publication, though, was only an informal presentation of the concepts.[24] The first formal publication was a 1974 paper by Akaike.[5]

The initial derivation of AIC relied upon some strong assumptions. (Takeuchi 1976) showed that the assumptions could be made much weaker. Takeuchi's work, however, was in Japanese and was not widely known outside Japan for many years. (Translated in [25])

AICc was originally proposed for linear regression (only) by Sugiura (1978). That instigated the work of Hurvich & Tsai (1989), and several further papers by the same authors, which extended the situations in which AICc could be applied.

The first general exposition of the information-theoretic approach was the volume by Burnham & Anderson (2002). It includes an English presentation of the work of Takeuchi. The volume led to far greater use of AIC, and it now has more than 64,000 citations on Google Scholar.

Akaike called his approach an "entropy maximization principle", because the approach is founded on the concept of entropy in information theory. Indeed, minimizing AIC in a statistical model is effectively equivalent to maximizing entropy in a thermodynamic system; in other words, the information-theoretic approach in statistics is essentially applying the second law of thermodynamics. As such, AIC has roots in the work of Ludwig Boltzmann on entropy. For more on these issues, see Akaike (1985) and Burnham & Anderson (2002).

Usage tips

Counting parameters

A statistical model must account for random errors. A straight line model might be formally described as $y_i = b_0 + b_1 x_i + \varepsilon_i$. Here, the $\varepsilon_i$ are the residuals from the straight line fit. If the $\varepsilon_i$ are assumed to be i.i.d. Gaussian (with zero mean), then the model has three parameters: $b_0$, $b_1$, and the variance of the Gaussian distributions. Thus, when calculating the AIC value of this model, we should use k = 3. More generally, for any least squares model with i.i.d. Gaussian residuals, the variance of the residuals' distributions should be counted as one of the parameters.[26]

As another example, consider a first-order autoregressive model, defined by $x_i = c + \varphi x_{i-1} + \varepsilon_i$, with the $\varepsilon_i$ being i.i.d. Gaussian (with zero mean). For this model, there are three parameters: c, φ, and the variance of the $\varepsilon_i$. More generally, a pth-order autoregressive model has p + 2 parameters. (If, however, c is not estimated from the data, but instead given in advance, then there are only p + 1 parameters.)

Transforming data

The AIC values of the candidate models must all be computed with the same data set. Sometimes, though, we might want to compare a model of the response variable, y, with a model of the logarithm of the response variable, log(y). More generally, we might want to compare a model of the data with a model of transformed data. Following is an illustration of how to deal with data transforms (adapted from Burnham & Anderson (2002): "Investigators should be sure that all hypotheses are modeled using the same response variable").

Suppose that we want to compare two models: one with a normal distribution of y and one with a normal distribution of log(y). We should not directly compare the AIC values of the two models. Instead, we should transform the normal cumulative distribution function to first take the logarithm of y. To do that, we need to perform the relevant integration by substitution: thus, we need to multiply by the derivative of the (natural) logarithm function, which is 1/y. Hence, the transformed distribution has the following probability density function:

$$y \mapsto \frac{1}{y}\,\frac{1}{\sqrt{2\pi\sigma^2}} \exp\left(-\frac{(\ln y - \mu)^2}{2\sigma^2}\right),$$

which is the probability density function for the log-normal distribution. We then compare the AIC value of the normal model against the AIC value of the log-normal model.
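In terms of log-likelihoods, the factor 1/y contributes −ln(y) for each observation, so the log-normal log-likelihood equals the normal log-likelihood of log(y) minus the sum of the ln(y) values. The Python sketch below applies this to hypothetical positive data; the helper name is illustrative, and both models are taken to have k = 2 (a location and a variance).

```python
import math


def max_loglik_normal(values):
    """Maximized log-likelihood of a normal distribution fitted by ML."""
    n = len(values)
    mean = sum(values) / n
    var = sum((v - mean) ** 2 for v in values) / n
    return -0.5 * n * (math.log(2 * math.pi * var) + 1)


y = [1.2, 0.8, 2.5, 1.9, 7.4, 3.1, 0.6, 4.8, 1.1, 12.0]  # hypothetical data
log_y = [math.log(v) for v in y]

aic_normal = 2 * 2 - 2 * max_loglik_normal(y)

# Log-normal model of y: normal log-likelihood of log(y) plus the Jacobian
# term, which subtracts ln(y_i) for every observation.
aic_lognormal = 2 * 2 - 2 * (max_loglik_normal(log_y) - sum(log_y))

# Not comparable with aic_normal: this is the AIC of a model of log(y), not y.
aic_naive = 2 * 2 - 2 * max_loglik_normal(log_y)
```

The values `aic_normal` and `aic_lognormal` refer to the same response variable and can be compared; `aic_naive` differs from `aic_lognormal` by exactly twice the sum of the ln(y) values and should not be compared with `aic_normal`.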

For misspecified models, Takeuchi's Information Criterion (TIC) might be more appropriate. However, TIC often suffers from instability caused by estimation errors.[27]

Comparisons with other model selection methods

Several alternative model selection criteria have been proposed and studied in the statistical literature. These include the Bayesian information criterion (BIC), cross-validation methods, least squares fitting, Mallows's Cp, and other information-theoretic approaches such as the Widely Applicable Information Criterion (WAIC), the Deviance Information Criterion (DIC), and the Hannan–Quinn information criterion (HQC). These methods differ in their assumptions, asymptotic behavior, and suitability depending on the goals of the analysis, such as prediction, inference, or model interpretation. A comprehensive overview of AIC and other model selection methods is given by Ding et al. (2018).[28]

Comparison with BIC

A critical difference between AIC and BIC (and their variants) lies in their asymptotic behavior under well-specified and misspecified model classes.[29] Their fundamental differences have been well studied in regression variable selection and autoregression order selection[30] problems. In general, if the goal is prediction, AIC and leave-one-out cross-validation are preferred.

The formula for the Bayesian information criterion (BIC) is similar to the formula for AIC, but with a different penalty for the number of parameters. With AIC the penalty is 2k, whereas with BIC the penalty is ln(n)k.
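The two penalties can be compared directly with a small Python sketch. It assumes the usual forms AIC = 2k − 2 ln(L̂) and BIC = k ln(n) − 2 ln(L̂); the log-likelihood value and sample sizes are hypothetical.

```python
import math


def aic(max_log_likelihood: float, k: int) -> float:
    """AIC: penalty of 2 per estimated parameter."""
    return 2 * k - 2 * max_log_likelihood


def bic(max_log_likelihood: float, k: int, n: int) -> float:
    """BIC: penalty of ln(n) per estimated parameter."""
    return math.log(n) * k - 2 * max_log_likelihood


loglik, k = -50.0, 3  # hypothetical fitted model
gap_n100 = bic(loglik, k, n=100) - aic(loglik, k)  # k * (ln(100) - 2) > 0
gap_n7 = bic(loglik, k, n=7) - aic(loglik, k)      # k * (ln(7) - 2) < 0
```

Because ln(n) exceeds 2 once n is at least 8, BIC penalizes each additional parameter more heavily than AIC for all but very small samples.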

A comparison of AIC/AICc and BIC is given by Burnham & Anderson (2002), with follow-up remarks by Burnham & Anderson (2004). The authors show that AIC/AICc can be derived in the same Bayesian framework as BIC, just by using different prior probabilities. In the Bayesian derivation of BIC, though, each candidate model has a prior probability of 1/R (where R is the number of candidate models). Additionally, the authors present a few simulation studies that suggest AICc tends to have practical/performance advantages over BIC.

A point made by several researchers is that AIC and BIC are appropriate for different tasks. In particular, BIC is argued to be appropriate for selecting the "true model" (i.e. the process that generated the data) from the set of candidate models, whereas AIC is not appropriate. To be specific, if the "true model" is in the set of candidates, then BIC will select the "true model" with probability 1, as n → ∞; in contrast, when selection is done via AIC, the probability can be less than 1.[31][32][33] Proponents of AIC argue that this issue is negligible, because the "true model" is virtually never in the candidate set. Indeed, it is a common aphorism in statistics that "all models are wrong"; hence the "true model" (i.e. reality) cannot be in the candidate set.

Another comparison of AIC and BIC is given by Vrieze (2012). Vrieze presents a simulation study that allows the "true model" to be in the candidate set (unlike with virtually all real data). The simulation study demonstrates, in particular, that AIC sometimes selects a much better model than BIC even when the "true model" is in the candidate set. The reason is that, for finite n, BIC can have a substantial risk of selecting a very bad model from the candidate set. This risk can arise even when n is much larger than k². With AIC, the risk of selecting a very bad model is minimized.

If the "true model" is not in the candidate set, then the most that we can hope to do is select the model that best approximates the "true model". AIC is appropriate for finding the best approximating model, under certain assumptions.[31][32][33] (Those assumptions include, in particular, that the approximating is done with regard to information loss.)

A comparison of AIC and BIC in the context of regression is given by Yang (2005). In regression, AIC is asymptotically optimal for selecting the model with the least mean squared error, under the assumption that the "true model" is not in the candidate set. BIC is not asymptotically optimal under that assumption. Yang additionally shows that the rate at which AIC converges to the optimum is, in a certain sense, the best possible.

Comparison with least squares

Sometimes, each candidate model assumes that the residuals are distributed according to independent identical normal distributions (with zero mean). That gives rise to least squares model fitting.

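With least squares fitting, the maximum likelihood estimate for the variance of a model's residuals' distributions is

$$\hat{\sigma}^2 = \mathrm{RSS}/n,$$

where the residual sum of squares is

$$\mathrm{RSS} = \sum_{i=1}^{n} \bigl(y_i - f(x_i; \hat{\theta})\bigr)^2.$$

Then, the maximum value of a model's log-likelihood function is (see Normal distribution):

$$\ln(\hat{L}) = -\frac{n}{2}\ln(2\pi) - \frac{n}{2}\ln(\hat{\sigma}^2) - \frac{1}{2\hat{\sigma}^2}\,\mathrm{RSS} = -\frac{n}{2}\ln(\hat{\sigma}^2) + C,$$

where C is a constant independent of the model, and dependent only on the particular data points, i.e. it does not change if the data does not change.

That gives:[34]

$$\mathrm{AIC} = 2k - 2\ln(\hat{L}) = 2k + n\ln(\hat{\sigma}^2) - 2C.$$

Because only differences in AIC are meaningful, the constant C can be ignored, which allows us to conveniently take the following for model comparisons:

$$\Delta\mathrm{AIC} = 2k + n\ln(\hat{\sigma}^2).$$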

Note that if all the models have the same k, then selecting the model with minimum AIC is equivalent to selecting the model with minimum RSS—which is the usual objective of model selection based on least squares.
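The least squares form, 2k + n ln(RSS/n) with the model-independent constant dropped, can be sketched in Python as follows. The data are hypothetical; a constant-mean model (k = 2, counting the residual variance) is compared with a straight-line model (k = 3), both fitted by ordinary least squares.

```python
import math


def aic_least_squares(rss: float, n: int, k: int) -> float:
    """2k + n ln(RSS / n); valid only for comparing models on the same data."""
    return 2 * k + n * math.log(rss / n)


x = [0, 1, 2, 3, 4, 5, 6, 7]                       # hypothetical regressor
y = [1.1, 2.9, 5.2, 7.1, 8.8, 11.2, 12.9, 15.1]   # hypothetical response
n = len(y)
x_bar = sum(x) / n
y_bar = sum(y) / n

# Constant-mean model: the least squares fit is the sample mean.
rss_const = sum((yi - y_bar) ** 2 for yi in y)

# Straight-line model: closed-form ordinary least squares estimates.
slope = (sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
         / sum((xi - x_bar) ** 2 for xi in x))
intercept = y_bar - slope * x_bar
rss_line = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))

aic_const = aic_least_squares(rss_const, n, k=2)
aic_line = aic_least_squares(rss_line, n, k=3)
```

For these data the straight-line model reduces the RSS by far more than enough to offset its extra parameter, so it has the lower AIC.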

Comparison with cross-validation

Leave-one-out cross-validation is asymptotically equivalent to AIC, for ordinary linear regression models.[35] Asymptotic equivalence to AIC also holds for mixed-effects models.[36]

Comparison with Mallows's Cp

Akaike stated, 'It is interesting to note that the use of a statistic proposed by Mallows is essentially equivalent to our present approach'.[23] However, the precise relation between AIC and Cp requires some nuance.

Under a normal regression model with unknown error variance $\sigma^2$, the AIC statistic, as noted above, is

$$\mathrm{AIC} = 2k + n\ln(\mathrm{RSS}/n)$$

(the notation $\hat{\sigma}^2$ is deliberately not used here, to avoid confusion below). For large samples, if this model is correct, then $\mathrm{RSS}/n$ should be close to the true error variance $\sigma^2$, and using a one-term Taylor series for the logarithm,

$$\mathrm{AIC} \approx 2k + n\ln(\sigma^2) + n\,\frac{\mathrm{RSS}/n - \sigma^2}{\sigma^2} = \frac{\mathrm{RSS}}{\sigma^2} + 2k - n + n\ln(\sigma^2). \qquad (1)$$

This final expression (up to terms that depend only on n and $\sigma^2$, which are the same for every candidate model) is Mallows' Cp when $\sigma^2$ happens to be known. In the more usual situation where this is unknown, an estimate $\hat{\sigma}^2$, typically derived from a model using all possible predictors, must be substituted. This leads to an asymptotic equivalence between AIC and Cp. However, Akaike noted that 'unfortunately some subjective judgement is required for the choice of $\hat{\sigma}^2$ in the definition of Cp'.[5]

In the unusual case that $\sigma^2$ is known, AIC is exactly equal to (1). As a result, (1) is sometimes considered to be AIC, and AIC and Cp are claimed to be equivalent.[37] Such statements should be considered incorrect; when AIC is correctly implemented, the equivalence is only asymptotic.

Other information criteria

Other model selection criteria include the Widely Applicable Information Criterion (WAIC) and the Deviance Information Criterion (DIC), both of which are widely used in Bayesian model selection. WAIC, in particular, is asymptotically equivalent to leave-one-out cross-validation and applies even in complex or singular models. The Hannan–Quinn criterion (HQC) offers a middle ground between AIC and BIC by applying a lighter penalty than BIC but a heavier one than AIC. The Minimum Description Length (MDL) principle, closely related to BIC, approaches model selection from an information-theoretic perspective, treating it as a compression problem. Each of these methods has advantages depending on model complexity, sample size, and the goal of analysis.

See also

- Deviance information criterion

- Focused information criterion

- Hannan–Quinn information criterion

- Maximum likelihood estimation

- Principle of maximum entropy

- Wilks' theorem

Notes

- ↑ Stoica, P.; Selen, Y. (2004), "Model-order selection: a review of information criterion rules", IEEE Signal Processing Magazine 21 (July): 36–47, doi:10.1109/MSP.2004.1311138, Bibcode: 2004ISPM...21...36S

- ↑ McElreath, Richard (2016). Statistical Rethinking: A Bayesian Course with Examples in R and Stan. CRC Press. p. 189. ISBN 978-1-4822-5344-3. "AIC provides a surprisingly simple estimate of the average out-of-sample deviance."

- ↑ Taddy, Matt (2019). Business Data Science: Combining Machine Learning and Economics to Optimize, Automate, and Accelerate Business Decisions. New York: McGraw-Hill. p. 90. ISBN 978-1-260-45277-8. https://books.google.com/books?id=yPOUDwAAQBAJ&pg=PA90. "The AIC is an estimate for OOS deviance."

- ↑ Burnham & Anderson 2002, §2.2

- ↑ 5.0 5.1 5.2 Akaike 1974

- ↑ Burnham & Anderson 2002, §2.9.1, §6.4.5

- ↑ Burnham & Anderson 2002

- ↑ Burnham & Anderson 2002, §2.12.4

- ↑ Murtaugh 2014

- ↑ Burnham & Anderson 2002, p. 99

- ↑ Bandyopadhyay & Forster 2011

- ↑ Sakamoto, Ishiguro & Kitagawa 1986

- ↑ McQuarrie & Tsai 1998

- ↑ Claeskens & Hjort 2008, §8.3

- ↑ Giraud 2015, §2.9.1

- ↑ Sugiura 1978

- ↑ Hurvich & Tsai 1989

- ↑ Cavanaugh 1997

- ↑ Burnham & Anderson 2002, §2.4

- ↑ Burnham & Anderson 2004

- ↑ Burnham & Anderson 2002, §7.4

- ↑ 22.0 22.1 Findley & Parzen 1995

- ↑ 23.0 23.1 Akaike 1973

- ↑ deLeeuw 1992

- ↑ Takeuchi, Kei (2020), Takeuchi, Kei, ed., "On the Problem of Model Selection Based on the Data" (in en), Contributions on Theory of Mathematical Statistics (Tokyo: Springer Japan): pp. 329–356, doi:10.1007/978-4-431-55239-0_12, ISBN 978-4-431-55239-0

- ↑ Burnham & Anderson 2002, p. 63

- ↑ Matsuda, Takeru; Uehara, Masatoshi; Hyvarinen, Aapo (2021). "Information criteria for non-normalized models". Journal of Machine Learning Research 22 (158): 1–33. ISSN 1533-7928. http://jmlr.org/papers/v22/20-1366.html.

- ↑ Ding, Jie; Tarokh, Vahid; Yang, Yuhong (2018-11-14). "Model Selection Techniques: An Overview". IEEE Signal Processing Magazine 35 (6): 16–34. doi:10.1109/MSP.2018.2867638. Bibcode: 2018ISPM...35f..16D.

- ↑ Ding, Jie; Tarokh, Vahid; Yang, Yuhong (November 2018). "Model Selection Techniques: An Overview". IEEE Signal Processing Magazine 35 (6): 16–34. doi:10.1109/MSP.2018.2867638. ISSN 1053-5888. Bibcode: 2018ISPM...35f..16D. https://resolver.caltech.edu/CaltechAUTHORS:20181128-150927005.

- ↑ Ding, J.; Tarokh, V.; Yang, Y. (June 2018). "Bridging AIC and BIC: A New Criterion for Autoregression". IEEE Transactions on Information Theory 64 (6): 4024–4043. doi:10.1109/TIT.2017.2717599. ISSN 1557-9654. Bibcode: 2018ITIT...64.4024D.

- ↑ 31.0 31.1 Burnham & Anderson 2002, §6.3-6.4

- ↑ 32.0 32.1 Vrieze 2012

- ↑ 33.0 33.1 Aho, Derryberry & Peterson 2014

- ↑ Burnham & Anderson 2002, p. 63

- ↑ Stone 1977

- ↑ Fang 2011

- ↑ Boisbunon et al. 2014

References

- Aho, K.; Derryberry, D.; Peterson, T. (2014), "Model selection for ecologists: the worldviews of AIC and BIC", Ecology 95 (3): 631–636, doi:10.1890/13-1452.1, PMID 24804445, Bibcode: 2014Ecol...95..631A.

- Akaike, H. (1973), "Information theory and an extension of the maximum likelihood principle", in Petrov, B. N.; Csáki, F., 2nd International Symposium on Information Theory, Tsahkadsor, Armenia, USSR, September 2-8, 1971, Budapest: Akadémiai Kiadó, pp. 267–281. Republished in Kotz, S.; Johnson, N. L., eds. (1992), Breakthroughs in Statistics, I, Springer-Verlag, pp. 610–624.

- Akaike, H. (1974), "A new look at the statistical model identification", IEEE Transactions on Automatic Control 19 (6): 716–723, doi:10.1109/TAC.1974.1100705, Bibcode: 1974ITAC...19..716A.

- Akaike, H. (1985), "Prediction and entropy", in Atkinson, A. C.; Fienberg, S. E., A Celebration of Statistics, Springer, pp. 1–24.

- Bandyopadhyay, P. S.; Forster, M. R., eds. (2011), Philosophy of Statistics, North-Holland Publishing.

- Boisbunon, A.; Canu, S.; Fourdrinier, D.; Strawderman, W.; Wells, M. T. (2014), "Akaike's Information Criterion, Cp and estimators of loss for elliptically symmetric distributions", International Statistical Review 82 (3): 422–439, doi:10.1111/insr.12052.

- Burnham, K. P.; Anderson, D. R. (2002), Model Selection and Multimodel Inference: A practical information-theoretic approach (2nd ed.), Springer-Verlag.

- Burnham, K. P.; Anderson, D. R. (2004), "Multimodel inference: understanding AIC and BIC in Model Selection", Sociological Methods & Research 33: 261–304, doi:10.1177/0049124104268644, http://www.sortie-nd.org/lme/Statistical%20Papers/Burnham_and_Anderson_2004_Multimodel_Inference.pdf.

- Cavanaugh, J. E. (1997), "Unifying the derivations of the Akaike and corrected Akaike information criteria", Statistics & Probability Letters 31 (2): 201–208, doi:10.1016/s0167-7152(96)00128-9.

- Claeskens, G.; Hjort, N. L. (2008), Model Selection and Model Averaging, Cambridge University Press. [Note: the AIC defined by Claeskens & Hjort is the negative of the standard definition—as originally given by Akaike and followed by other authors.]

- deLeeuw, J. (1992), "Introduction to Akaike (1973) information theory and an extension of the maximum likelihood principle", in Kotz, S.; Johnson, N. L., Breakthroughs in Statistics I, Springer, pp. 599–609, http://gifi.stat.ucla.edu/janspubs/1990/chapters/deleeuw_C_90c.pdf, retrieved 2014-11-27.

- Fang, Yixin (2011), "Asymptotic equivalence between cross-validations and Akaike Information Criteria in mixed-effects models", Journal of Data Science 9: 15–21, http://www.jds-online.com/file_download/278/JDS-652a.pdf, retrieved 2011-04-16.

- Findley, D. F.; Parzen, E. (1995), "A conversation with Hirotugu Akaike", Statistical Science 10: 104–117, doi:10.1214/ss/1177010133.

- Giraud, C. (2015), Introduction to High-Dimensional Statistics, CRC Press.

- Hurvich, C. M.; Tsai, C.-L. (1989), "Regression and time series model selection in small samples", Biometrika 76 (2): 297–307, doi:10.1093/biomet/76.2.297.

- Konishi, S.; Kitagawa, G. (2008), Information Criteria and Statistical Modeling, Springer.

- McQuarrie, A. D. R.; Tsai, C.-L. (1998), Regression and Time Series Model Selection, World Scientific.

- Murtaugh, P. A. (2014), "In defense of P values", Ecology 95 (3): 611–617, doi:10.1890/13-0590.1, PMID 24804441, Bibcode: 2014Ecol...95..611M, https://zenodo.org/record/894459.

- Sakamoto, Y.; Ishiguro, M.; Kitagawa, G. (1986), Akaike Information Criterion Statistics, D. Reidel.

- Stone, M. (1977), "An asymptotic equivalence of choice of model by cross-validation and Akaike's criterion", Journal of the Royal Statistical Society, Series B 39 (1): 44–47, doi:10.1111/j.2517-6161.1977.tb01603.x.

- Sugiura, N. (1978), "Further analysis of the data by Akaike's information criterion and the finite corrections", Communications in Statistics - Theory and Methods 7: 13–26, doi:10.1080/03610927808827599.

- Takeuchi, K. (1976), " " (in ja), Suri Kagaku 153: 12–18, ISSN 0386-2240.

- Vrieze, S. I. (2012), "Model selection and psychological theory: a discussion of the differences between the Akaike Information Criterion (AIC) and the Bayesian Information Criterion (BIC)", Psychological Methods 17 (2): 228–243, doi:10.1037/a0027127, PMID 22309957.

- Yang, Y. (2005), "Can the strengths of AIC and BIC be shared?", Biometrika 92: 937–950, doi:10.1093/biomet/92.4.937.

Further reading

- Akaike, H. (21 December 1981), "This Week's Citation Classic", Current Contents Engineering, Technology, and Applied Sciences 12 (51): 42, http://www.garfield.library.upenn.edu/classics1981/A1981MS54100001.pdf, retrieved 16 December 2004 [Hirotugu Akaike comments on how he arrived at AIC]

- Anderson, D. R. (2008), Model Based Inference in the Life Sciences, Springer

- Arnold, T. W. (2010), "Uninformative parameters and model selection using Akaike's Information Criterion", Journal of Wildlife Management 74 (6): 1175–1178, doi:10.1111/j.1937-2817.2010.tb01236.x, Bibcode: 2010JWMan..74.1175A

- Burnham, K. P.; Anderson, D. R.; Huyvaert, K. P. (2011), "AIC model selection and multimodel inference in behavioral ecology", Behavioral Ecology and Sociobiology 65: 23–35, doi:10.1007/s00265-010-1029-6, https://wolfweb.unr.edu/~ldyer/classes/396/burnham2011.pdf, retrieved 2018-05-04

- Cavanaugh, J. E.; Neath, A. A. (2019), "The Akaike information criterion", WIREs Computational Statistics 11 (3), doi:10.1002/wics.1460

- Ing, C.-K.; Wei, C.-Z. (2005), "Order selection for same-realization predictions in autoregressive processes", Annals of Statistics 33 (5): 2423–2474, doi:10.1214/009053605000000525

- Ko, V.; Hjort, N. L. (2019), "Copula information criterion for model selection with two-stage maximum likelihood estimation", Econometrics and Statistics 12: 167–180, doi:10.1016/j.ecosta.2019.01.001, http://urn.nb.no/URN:NBN:no-77993

- Larski, S. (2012), The Problem of Model Selection and Scientific Realism, London School of Economics, http://etheses.lse.ac.uk/615/1/StanislavLarski_Problem_Model_Selection.pdf

- Pan, W. (2001), "Akaike's Information Criterion in generalized estimating equations", Biometrics 57 (1): 120–125, doi:10.1111/j.0006-341X.2001.00120.x, PMID 11252586

- Parzen, E.; Tanabe, K.; Kitagawa, G., eds. (1998), Selected Papers of Hirotugu Akaike, Springer Series in Statistics, Springer, doi:10.1007/978-1-4612-1694-0, ISBN 978-1-4612-7248-9

- Saefken, B.; Kneib, T.; van Waveren, C.-S.; Greven, S. (2014), "A unifying approach to the estimation of the conditional Akaike information in generalized linear mixed models", Electronic Journal of Statistics 8: 201–225, doi:10.1214/14-EJS881
