Emotion recognition


Emotion recognition is the process of identifying human emotion. People vary widely in their accuracy at recognizing the emotions of others. Use of technology to help people with emotion recognition is a relatively nascent research area. Generally, the technology works best if it uses multiple modalities in context. To date, the most work has been conducted on automating the recognition of facial expressions from video, spoken expressions from audio, written expressions from text, and physiology as measured by wearables.

Human

Humans show a great deal of variability in their abilities to recognize emotion. A key point to keep in mind when learning about automated emotion recognition is that there are several sources of "ground truth", or truth about what the real emotion is. Suppose we are trying to recognize the emotions of Alex. One source is "what would most people say that Alex is feeling?" In this case, the 'truth' may not correspond to what Alex feels, but may correspond to what most people would say it looks like Alex feels. For example, Alex may actually feel sad, but he puts on a big smile and then most people say he looks happy. If an automated method achieves the same results as a group of observers it may be considered accurate, even if it does not actually measure what Alex truly feels. Another source of 'truth' is to ask Alex what he truly feels. This works if Alex has a good sense of his internal state, and wants to tell you what it is, and is capable of putting it accurately into words or a number. However, some people are alexithymic and do not have a good sense of their internal feelings, or they are not able to communicate them accurately with words and numbers. In general, getting to the truth of what emotion is actually present can take some work, can vary depending on the criteria that are selected, and will usually involve maintaining some level of uncertainty.
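The two sources of ground truth described above can be contrasted in a minimal sketch. The observer labels and the self-report below are invented for illustration, and `observer_consensus` is a hypothetical helper, not part of any cited system.

```python
from collections import Counter

def observer_consensus(labels):
    """Return the most common observer label and the share of observers who chose it."""
    counts = Counter(labels)
    label, votes = counts.most_common(1)[0]
    return label, votes / len(labels)

# Hypothetical data: five observers rate Alex's expression,
# and Alex separately reports what he feels.
observer_labels = ["happy", "happy", "happy", "neutral", "happy"]
self_report = "sad"

consensus, agreement = observer_consensus(observer_labels)
print(consensus, agreement)       # perceived emotion and observer agreement
print(consensus == self_report)   # the two ground truths need not match
```

A system trained on the observer consensus would be scored as correct for predicting "happy" here, while one trained on self-reports would be scored as correct for predicting "sad", which is why the choice of criterion matters.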

Automatic


Decades of scientific research have gone into developing and evaluating methods for automated emotion recognition. There is now an extensive literature proposing and evaluating hundreds of different kinds of methods, leveraging techniques from multiple areas, such as signal processing, machine learning, computer vision, and speech processing. Different methodologies and techniques may be employed to interpret emotion, such as Bayesian networks,[1] Gaussian mixture models,[2] hidden Markov models,[3] and deep neural networks.[4]

Approaches

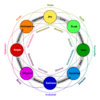

The accuracy of emotion recognition usually improves when it combines the analysis of human expressions from multiple modalities such as text, physiology, audio, or video.[5] Different emotion types are detected through the integration of information from facial expressions, body movement and gestures, and speech.[6] The technology is said to contribute to the emergence of the so-called emotional or emotive Internet.[7]
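One simple way to combine modalities is late fusion, in which each modality produces its own probability distribution over emotions and the distributions are then averaged. The sketch below assumes hypothetical per-modality outputs and equal weights; it is an illustration of the general idea, not the method of any cited work.

```python
def late_fusion(modality_probs, weights):
    """Weighted average of per-modality probability distributions over emotions."""
    total_weight = sum(weights[m] for m in modality_probs)
    emotions = set()
    for probs in modality_probs.values():
        emotions.update(probs)
    fused = {}
    for emotion in sorted(emotions):
        weighted = sum(
            weights[m] * probs.get(emotion, 0.0)
            for m, probs in modality_probs.items()
        )
        fused[emotion] = weighted / total_weight
    return fused

# Invented outputs of three unimodal classifiers for the same moment in time.
modality_probs = {
    "face":  {"happy": 0.7, "sad": 0.2, "angry": 0.1},
    "voice": {"happy": 0.3, "sad": 0.6, "angry": 0.1},
    "text":  {"happy": 0.2, "sad": 0.7, "angry": 0.1},
}
weights = {"face": 1.0, "voice": 1.0, "text": 1.0}  # assumed equal trust

fused = late_fusion(modality_probs, weights)
best = max(fused, key=fused.get)
print(fused, best)
```

In this toy case the face alone suggests "happy", but the voice and text evidence outweigh it after fusion, which mirrors the smiling-but-sad example discussed earlier.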

Existing approaches to classifying emotion types generally fall into three main categories: knowledge-based techniques, statistical methods, and hybrid approaches.[8]

Knowledge-based techniques

Knowledge-based techniques (sometimes referred to as lexicon-based techniques) utilize domain knowledge and the semantic and syntactic characteristics of text, and potentially spoken language, in order to detect certain emotion types.[9] In this approach, it is common to use knowledge-based resources during the emotion classification process, such as WordNet, SenticNet,[10] ConceptNet, and EmotiNet,[11] to name a few.[12] One of the advantages of this approach is the accessibility and economy brought about by the wide availability of such knowledge-based resources.[8] A limitation of this technique, on the other hand, is its inability to handle concept nuances and complex linguistic rules.[8]

Knowledge-based techniques can be mainly classified into two categories: dictionary-based and corpus-based approaches. Dictionary-based approaches find opinion or emotion seed words in a dictionary and search for their synonyms and antonyms to expand the initial list of opinions or emotions.[13] Corpus-based approaches, on the other hand, start with a seed list of opinion or emotion words and expand the database by finding other words with context-specific characteristics in a large corpus.[13] While corpus-based approaches take context into account, their performance still varies across domains, since a word in one domain can have a different orientation in another domain.[14]
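The dictionary-based expansion described above can be sketched as follows. The thesaurus here is a tiny invented stand-in for a real resource such as WordNet, antonym handling is omitted for brevity, and the function names are hypothetical.

```python
# Toy thesaurus standing in for a real lexical resource (entries are invented).
SYNONYMS = {
    "happy": ["glad", "joyful"],
    "glad": ["pleased"],
    "sad": ["unhappy", "sorrowful"],
    "unhappy": ["miserable"],
}

def expand_lexicon(seeds, synonyms, rounds=2):
    """Grow an emotion lexicon by repeatedly adding synonyms of known words."""
    lexicon = dict(seeds)  # word -> emotion label
    for _ in range(rounds):
        for word, emotion in list(lexicon.items()):
            for synonym in synonyms.get(word, []):
                lexicon.setdefault(synonym, emotion)
    return lexicon

def classify(text, lexicon):
    """Label a text by counting lexicon hits; return None if no word matches."""
    counts = {}
    for token in text.lower().split():
        token = token.strip(".,!?")
        if token in lexicon:
            emotion = lexicon[token]
            counts[emotion] = counts.get(emotion, 0) + 1
    return max(counts, key=counts.get) if counts else None

lexicon = expand_lexicon({"happy": "joy", "sad": "sadness"}, SYNONYMS)
print(sorted(lexicon))
print(classify("I feel miserable and sorrowful today.", lexicon))
```

Because the classifier only counts isolated word matches, a sentence such as "I am not happy" would still be labeled as joy, which illustrates the difficulty with nuance and linguistic rules noted above.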

Statistical methods

Statistical methods commonly involve the use of supervised machine learning algorithms, in which a large set of annotated data is fed into the algorithms so that the system learns to predict the appropriate emotion types.[8] Machine learning algorithms generally provide better classification accuracy than other approaches, but one of the challenges in achieving good results is the need for a sufficiently large training set.[8]

Some of the most commonly used machine learning algorithms include Support Vector Machines (SVM), Naive Bayes, and Maximum Entropy.[15] Deep learning, a family of machine learning methods based on multi-layer neural networks that can be trained in a supervised or unsupervised fashion, is also widely employed in emotion recognition.[16][17][18] Well-known deep learning algorithms include different architectures of Artificial Neural Network (ANN) such as Convolutional Neural Network (CNN), Long Short-term Memory (LSTM), and Extreme Learning Machine (ELM).[15] The popularity of deep learning approaches in the domain of emotion recognition may be mainly attributed to their success in related applications such as computer vision, speech recognition, and Natural Language Processing (NLP).[15]
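As an illustration of the supervised setting, the following is a minimal multinomial Naive Bayes text classifier with add-one smoothing, written from scratch. The four training sentences are invented, and a real system would need a far larger annotated set, as noted above.

```python
import math
from collections import Counter, defaultdict

def train_naive_bayes(examples):
    """Count class frequencies and per-class word frequencies from (text, label) pairs."""
    class_counts = Counter()
    word_counts = defaultdict(Counter)
    vocab = set()
    for text, label in examples:
        class_counts[label] += 1
        for token in text.lower().split():
            word_counts[label][token] += 1
            vocab.add(token)
    return class_counts, word_counts, vocab

def predict(text, model):
    """Return the label with the highest log-posterior, using add-one smoothing."""
    class_counts, word_counts, vocab = model
    total_docs = sum(class_counts.values())
    scores = {}
    for label in class_counts:
        log_prob = math.log(class_counts[label] / total_docs)
        total_words = sum(word_counts[label].values())
        for token in text.lower().split():
            if token not in vocab:
                continue  # ignore words never seen in training
            count = word_counts[label][token]
            log_prob += math.log((count + 1) / (total_words + len(vocab)))
        scores[label] = log_prob
    return max(scores, key=scores.get)

# Invented toy training set of (text, emotion label) pairs.
TRAINING_DATA = [
    ("i am so happy today", "joy"),
    ("what a wonderful surprise", "joy"),
    ("i am sad and lonely", "sadness"),
    ("this is a terrible loss", "sadness"),
]

model = train_naive_bayes(TRAINING_DATA)
print(predict("such a wonderful day", model))
print(predict("i feel lonely", model))
```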

Hybrid approaches

Hybrid approaches in emotion recognition are essentially a combination of knowledge-based techniques and statistical methods, which exploit complementary characteristics from both techniques.[8] Some of the works that have applied an ensemble of knowledge-driven linguistic elements and statistical methods include sentic computing and iFeel, both of which have adopted the concept-level knowledge-based resource SenticNet.[19][20] Such knowledge-based resources play a highly important role in the emotion classification process when hybrid approaches are implemented.[12] Since hybrid techniques gain from the benefits offered by both knowledge-based and statistical approaches, they tend to have better classification performance than knowledge-based or statistical methods used independently. A downside of hybrid techniques, however, is the computational complexity of the classification process.[12]
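One simple way to combine the two families is to interpolate a statistical classifier's probabilities with evidence from a lexicon. The sketch below is only an illustration under assumed inputs: the lexicon entries, the classifier probabilities, and the mixing weight `alpha` are all invented, and it does not reproduce how sentic computing or iFeel work.

```python
LEXICON = {"furious": "anger", "delighted": "joy", "grief": "sadness"}  # invented entries

def lexicon_distribution(text, lexicon, emotions):
    """Turn lexicon hits into a probability distribution; uniform if nothing matches."""
    hits = {emotion: 0 for emotion in emotions}
    for token in text.lower().split():
        label = lexicon.get(token.strip(".,!?"))
        if label in hits:
            hits[label] += 1
    total = sum(hits.values())
    if total == 0:
        return {emotion: 1 / len(emotions) for emotion in emotions}
    return {emotion: hits[emotion] / total for emotion in emotions}

def hybrid_predict(text, statistical_probs, lexicon, alpha=0.5):
    """Interpolate a classifier's probabilities with lexicon evidence."""
    emotions = list(statistical_probs)
    lex = lexicon_distribution(text, lexicon, emotions)
    combined = {
        emotion: alpha * statistical_probs[emotion] + (1 - alpha) * lex[emotion]
        for emotion in emotions
    }
    return max(combined, key=combined.get), combined

# Hypothetical output of a statistical classifier that slightly prefers "joy".
statistical_probs = {"joy": 0.45, "anger": 0.40, "sadness": 0.15}

label, combined = hybrid_predict("I am furious about this.", statistical_probs, LEXICON)
print(label, combined)
```

Here the lexicon hit on "furious" tips an uncertain statistical prediction toward anger; when no lexicon word matches, the uniform fallback leaves the statistical ranking unchanged.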

Datasets

Data is an integral part of the existing approaches in emotion recognition, and in most cases it is a challenge to obtain the annotated data needed to train machine learning algorithms.[13] For the task of classifying different emotion types from multimodal sources in the form of text, audio, video, or physiological signals, the following datasets are available:

- HUMAINE: provides natural clips with emotion words and context labels in multiple modalities[21]

- Belfast database: provides clips with a wide range of emotions from TV programs and interview recordings[22]

- SEMAINE: provides audiovisual recordings between a person and a virtual agent and contains emotion annotations such as anger, happiness, fear, disgust, sadness, contempt, and amusement[23]

- IEMOCAP: provides recordings of dyadic sessions between actors and contains emotion annotations such as happiness, anger, sadness, frustration, and neutral state[24]

- eNTERFACE: provides audiovisual recordings of subjects from seven nationalities and contains emotion annotations such as happiness, anger, sadness, surprise, disgust, and fear[25]

- DEAP: provides electroencephalography (EEG), electrocardiography (ECG), and face video recordings, as well as emotion annotations in terms of valence, arousal, and dominance of people watching film clips[26]

- DREAMER: provides electroencephalography (EEG) and electrocardiography (ECG) recordings, as well as emotion annotations in terms of valence, arousal, and dominance of people watching film clips[27]

- MELD: a multiparty conversational dataset in which each utterance is labeled with emotion and sentiment.[28] MELD provides conversations in video format and is hence suitable for multimodal sentiment analysis, emotion recognition in conversations, and dialogue systems.[29]

- MuSe: provides audiovisual recordings of natural interactions between a person and an object.[30] It has discrete and continuous emotion annotations in terms of valence, arousal and trustworthiness as well as speech topics useful for multimodal sentiment analysis and emotion recognition.

- UIT-VSMEC: a standard Vietnamese Social Media Emotion Corpus with 6,927 human-annotated sentences and six emotion labels, contributing to emotion recognition research in Vietnamese, which is a low-resource language in Natural Language Processing (NLP).[31]

- BED: provides valence and arousal of people watching images. It also includes electroencephalography (EEG) recordings of people exposed to various stimuli (SSVEP, resting with eyes closed, resting with eyes open, cognitive tasks) for the task of EEG-based biometrics.[32]
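Several of the datasets above annotate emotion along continuous dimensions such as valence and arousal rather than with discrete labels. A common simplification is to split each dimension at a midpoint to obtain coarse classes. The sketch below assumes ratings on a 1 to 9 scale with a midpoint of 5; both the scale and the example ratings are assumptions for illustration, so check each dataset's documentation for its actual rating scheme.

```python
def quadrant(valence, arousal, midpoint=5.0):
    """Map a (valence, arousal) rating pair to a coarse quadrant label."""
    v = "positive" if valence >= midpoint else "negative"
    a = "high" if arousal >= midpoint else "low"
    return f"{v} valence, {a} arousal"

# Invented ratings, assumed to lie on a 1-9 scale.
ratings = [(7.5, 8.0), (2.0, 7.0), (3.0, 2.5), (8.0, 3.0)]
labels = [quadrant(v, a) for v, a in ratings]
for rating, label in zip(ratings, labels):
    print(rating, "->", label)
```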

Applications

Emotion recognition is used in society for a variety of reasons. Affectiva, which spun out of MIT, provides artificial intelligence software that makes it more efficient to do tasks previously done manually by people, mainly to gather facial expression and vocal expression information related to specific contexts where viewers have consented to share this information. For example, instead of filling out a lengthy survey about how you feel at each point watching an educational video or advertisement, you can consent to have a camera watch your face and listen to what you say, and note during which parts of the experience you show expressions such as boredom, interest, confusion, or smiling. (Note that this does not imply it is reading your innermost feelings—it only reads what you express outwardly.) Other uses by Affectiva include helping children with autism, helping people who are blind to read facial expressions, helping robots interact more intelligently with people, and monitoring signs of attention while driving in an effort to enhance driver safety.[33]

Academic research increasingly uses emotion recognition as a method to study social science questions around elections, protests, and democracy. Several studies focus on the facial expressions of political candidates on social media and find that politicians tend to express happiness.[34][35][36] However, this research finds that computer vision tools such as Amazon Rekognition are only accurate for happiness and are mostly reliable as 'happy detectors'.[37] Researchers examining protests, where negative affect such as anger is expected, have therefore developed their own models to more accurately study expressions of negativity and violence in democratic processes.[38]

A patent filed by Snapchat in 2015 describes a method of extracting data about crowds at public events by performing algorithmic emotion recognition on users' geotagged selfies.[39]

Emotient was a startup company that applied emotion recognition to reading frowns, smiles, and other expressions on faces, using artificial intelligence to predict "attitudes and actions based on facial expressions".[40] Apple bought Emotient in 2016 and uses emotion recognition technology to enhance the emotional intelligence of its products.[40]

nViso provides real-time emotion recognition for web and mobile applications through a real-time API.[41] Visage Technologies AB offers emotion estimation as a part of their Visage SDK for marketing and scientific research and similar purposes.[42]

Eyeris is an emotion recognition company that works with embedded system manufacturers, including car makers and social robotic companies, on integrating its face analytics and emotion recognition software, as well as with video content creators to help them measure the perceived effectiveness of their short- and long-form video creative.[43][44]

Subfields

Emotion recognition is likely to achieve the best results when it combines multiple modalities, including text (conversation), audio, video, and physiology.

Emotion recognition in text

Text is an attractive data source for emotion recognition research because it is often freely available and pervasive in everyday life. Compared to other types of data, text is lightweight to store and compresses well because words and characters repeat frequently in natural language. Emotions can be extracted from two essential text forms: written texts and conversations (dialogues).[45] For written texts, many scholars work at the sentence level to extract words or phrases that represent emotions.[46][47]

Emotion recognition in audio

In contrast to emotion recognition in text, audio-based recognition extracts emotions from vocal signals.[48] Unlike images and videos, which are typically two-dimensional or three-dimensional data capturing spatial or spatio-temporal features, audio is inherently one-dimensional time-series data that represents variations in sound amplitude over time. This fundamental difference makes emotion recognition from audio unique. Instead of relying on visual cues or textual semantics, audio-based emotion detection focuses on prosodic and acoustic features such as pitch, intensity, speech rate, and voice quality.[49]
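To make the idea of acoustic features concrete, the sketch below computes three simple quantities from a one-dimensional signal: root-mean-square energy (a proxy for intensity), zero-crossing rate, and a pitch estimate taken from the autocorrelation peak. It runs on a synthetic 200 Hz tone rather than real speech, and the frame length, sample rate, and pitch search range are assumptions chosen for illustration.

```python
import math

def rms_energy(frame):
    """Root-mean-square amplitude, a simple proxy for intensity."""
    return math.sqrt(sum(x * x for x in frame) / len(frame))

def zero_crossing_rate(frame):
    """Fraction of adjacent sample pairs whose signs differ."""
    crossings = sum(1 for a, b in zip(frame, frame[1:]) if (a >= 0) != (b >= 0))
    return crossings / (len(frame) - 1)

def estimate_pitch(frame, sample_rate, fmin=50.0, fmax=500.0):
    """Estimate fundamental frequency from the autocorrelation peak in [fmin, fmax]."""
    min_lag = int(sample_rate / fmax)
    max_lag = int(sample_rate / fmin)
    best_lag, best_value = min_lag, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        value = sum(frame[i] * frame[i + lag] for i in range(len(frame) - lag))
        if value > best_value:
            best_lag, best_value = lag, value
    return sample_rate / best_lag

# Synthetic stand-in for a voiced speech frame: 0.1 s of a 200 Hz tone at 8 kHz.
sample_rate = 8000
frame = [0.5 * math.sin(2 * math.pi * 200 * n / sample_rate) for n in range(800)]

energy = rms_energy(frame)
zcr = zero_crossing_rate(frame)
pitch = estimate_pitch(frame, sample_rate)
print(energy, zcr, pitch)
```

In practice such features are computed over many short overlapping frames, and their trajectories over time (for example rising pitch or increasing energy) are what a classifier uses as input.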

Emotion recognition in video

Video data is a combination of audio data, image data, and sometimes text (in the case of subtitles).[50]

Emotion recognition in conversation

Emotion recognition in conversation (ERC) detects the emotions expressed by participants in conversational data, which is available in massive amounts on social platforms such as Facebook, Twitter, YouTube, and others.[29] ERC can take text, audio, video, or a combination of these as input to detect emotions such as fear, lust, pain, and pleasure.

See also

- Affective computing

- Face perception

- Facial recognition system

- Sentiment analysis

- Interpersonal accuracy

References

- ↑ Miyakoshi, Yoshihiro, and Shohei Kato. "Facial Emotion Detection Considering Partial Occlusion Of Face Using Bayesian Network". Computers and Informatics (2011): 96–101.

- ↑ Hari Krishna Vydana, P. Phani Kumar, K. Sri Rama Krishna and Anil Kumar Vuppala. "Improved emotion recognition using GMM-UBMs". 2015 International Conference on Signal Processing and Communication Engineering Systems

- ↑ B. Schuller, G. Rigoll, M. Lang. "Hidden Markov model-based speech emotion recognition". ICME '03. Proceedings. 2003 International Conference on Multimedia and Expo, 2003.

- ↑ Singh, Premjeet; Saha, Goutam; Sahidullah, Md (2021). "Non-linear frequency warping using constant-Q transformation for speech emotion recognition". 2021 International Conference on Computer Communication and Informatics (ICCCI). pp. 1–4. doi:10.1109/ICCCI50826.2021.9402569. ISBN 978-1-7281-5875-4.

- ↑ Poria, Soujanya; Cambria, Erik; Bajpai, Rajiv; Hussain, Amir (September 2017). "A review of affective computing: From unimodal analysis to multimodal fusion". Information Fusion 37: 98–125. doi:10.1016/j.inffus.2017.02.003. http://researchrepository.napier.ac.uk/Output/1792429. Retrieved 18 May 2021.

- ↑ Caridakis, George; Castellano, Ginevra; Kessous, Loic; Raouzaiou, Amaryllis; Malatesta, Lori; Asteriadis, Stelios; Karpouzis, Kostas (19 September 2007). "Multimodal emotion recognition from expressive faces, body gestures and speech" (in en). Artificial Intelligence and Innovations 2007: From Theory to Applications. IFIP the International Federation for Information Processing. 247. pp. 375–388. doi:10.1007/978-0-387-74161-1_41. ISBN 978-0-387-74160-4.

- ↑ Price (23 August 2015). "Tapping Into The Emotional Internet" (in en-US). https://techcrunch.com/2015/08/23/tapping-into-the-emotional-internet/.

- ↑ 8.0 8.1 8.2 8.3 8.4 8.5 Cambria, Erik (March 2016). "Affective Computing and Sentiment Analysis". IEEE Intelligent Systems 31 (2): 102–107. doi:10.1109/MIS.2016.31. Bibcode: 2016IISys..31b.102..

- ↑ Taboada, Maite; Brooke, Julian; Tofiloski, Milan; Voll, Kimberly; Stede, Manfred (June 2011). "Lexicon-Based Methods for Sentiment Analysis". Computational Linguistics 37 (2): 267–307. doi:10.1162/coli_a_00049. ISSN 0891-2017.

- ↑ Cambria, Erik; Liu, Qian; Decherchi, Sergio; Xing, Frank; Kwok, Kenneth (2022). "SenticNet 7: A Commonsense-based Neurosymbolic AI Framework for Explainable Sentiment Analysis". pp. 3829–3839. https://sentic.net/senticnet-7.pdf.

- ↑ Balahur, Alexandra; Hermida, Jesús M; Montoyo, Andrés (1 November 2012). "Detecting implicit expressions of emotion in text: A comparative analysis". Decision Support Systems 53 (4): 742–753. doi:10.1016/j.dss.2012.05.024. ISSN 0167-9236. https://dl.acm.org/citation.cfm?id=2364904.

- ↑ 12.0 12.1 12.2 Medhat, Walaa; Hassan, Ahmed; Korashy, Hoda (December 2014). "Sentiment analysis algorithms and applications: A survey". Ain Shams Engineering Journal 5 (4): 1093–1113. doi:10.1016/j.asej.2014.04.011.

- ↑ 13.0 13.1 13.2 Madhoushi, Zohreh; Hamdan, Abdul Razak; Zainudin, Suhaila (2015). "Sentiment analysis techniques in recent works". 2015 Science and Information Conference (SAI). pp. 288–291. doi:10.1109/SAI.2015.7237157. ISBN 978-1-4799-8547-0.

- ↑ Hemmatian, Fatemeh; Sohrabi, Mohammad Karim (18 December 2017). "A survey on classification techniques for opinion mining and sentiment analysis". Artificial Intelligence Review 52 (3): 1495–1545. doi:10.1007/s10462-017-9599-6.

- ↑ 15.0 15.1 15.2 Sun, Shiliang; Luo, Chen; Chen, Junyu (July 2017). "A review of natural language processing techniques for opinion mining systems". Information Fusion 36: 10–25. doi:10.1016/j.inffus.2016.10.004.

- ↑ Majumder, Navonil; Poria, Soujanya; Gelbukh, Alexander; Cambria, Erik (March 2017). "Deep Learning-Based Document Modeling for Personality Detection from Text". IEEE Intelligent Systems 32 (2): 74–79. doi:10.1109/MIS.2017.23. Bibcode: 2017IISys..32b..74M.

- ↑ Mahendhiran, P. D.; Kannimuthu, S. (May 2018). "Deep Learning Techniques for Polarity Classification in Multimodal Sentiment Analysis". International Journal of Information Technology & Decision Making 17 (3): 883–910. doi:10.1142/S0219622018500128.

- ↑ Yu, Hongliang; Gui, Liangke; Madaio, Michael; Ogan, Amy; Cassell, Justine; Morency, Louis-Philippe (23 October 2017). "Temporally Selective Attention Model for Social and Affective State Recognition in Multimedia Content". Proceedings of the 25th ACM international conference on Multimedia. MM '17. ACM. pp. 1743–1751. doi:10.1145/3123266.3123413. ISBN 9781450349062.

- ↑ Cambria, Erik; Hussain, Amir (2015). Sentic Computing: A Common-Sense-Based Framework for Concept-Level Sentiment Analysis. Springer Publishing Company, Incorporated. ISBN 978-3319236537. https://dl.acm.org/citation.cfm?id=2878632.

- ↑ Araújo, Matheus; Gonçalves, Pollyanna; Cha, Meeyoung; Benevenuto, Fabrício (7 April 2014). "IFeel: A system that compares and combines sentiment analysis methods". Proceedings of the 23rd International Conference on World Wide Web. WWW '14 Companion. ACM. pp. 75–78. doi:10.1145/2567948.2577013. ISBN 9781450327459.

- ↑ Paolo Petta, ed (2011). Emotion-oriented systems the humaine handbook. Berlin: Springer. ISBN 978-3-642-15184-2.

- ↑ Douglas-Cowie, Ellen; Campbell, Nick; Cowie, Roddy; Roach, Peter (1 April 2003). "Emotional speech: towards a new generation of databases". Speech Communication 40 (1–2): 33–60. doi:10.1016/S0167-6393(02)00070-5. ISSN 0167-6393. https://dl.acm.org/citation.cfm?id=772595.

- ↑ McKeown, G.; Valstar, M.; Cowie, R.; Pantic, M.; Schroder, M. (January 2012). "The SEMAINE Database: Annotated Multimodal Records of Emotionally Colored Conversations between a Person and a Limited Agent". IEEE Transactions on Affective Computing 3 (1): 5–17. doi:10.1109/T-AFFC.2011.20. Bibcode: 2012ITAfC...3....5M. https://pure.qub.ac.uk/portal/en/publications/the-semaine-database-annotated-multimodal-records-of-emotionally-colored-conversations-between-a-person-and-a-limited-agent(4f349228-ebb5-4964-be2c-18f3559be29f).html.

- ↑ Busso, Carlos; Bulut, Murtaza; Lee, Chi-Chun; Kazemzadeh, Abe; Mower, Emily; Kim, Samuel; Chang, Jeannette N.; Lee, Sungbok et al. (5 November 2008). "IEMOCAP: interactive emotional dyadic motion capture database" (in en). Language Resources and Evaluation 42 (4): 335–359. doi:10.1007/s10579-008-9076-6. ISSN 1574-020X.

- ↑ Martin, O.; Kotsia, I.; Macq, B.; Pitas, I. (3 April 2006). "The eNTERFACE'05 Audio-Visual Emotion Database". 22nd International Conference on Data Engineering Workshops (ICDEW'06). Icdew '06. IEEE Computer Society. pp. 8–. doi:10.1109/ICDEW.2006.145. ISBN 9780769525716. https://dl.acm.org/citation.cfm?id=1130193.

- ↑ Koelstra, Sander; Muhl, Christian; Soleymani, Mohammad; Lee, Jong-Seok; Yazdani, Ashkan; Ebrahimi, Touradj; Pun, Thierry; Nijholt, Anton et al. (January 2012). "DEAP: A Database for Emotion Analysis Using Physiological Signals". IEEE Transactions on Affective Computing 3 (1): 18–31. doi:10.1109/T-AFFC.2011.15. ISSN 1949-3045. Bibcode: 2012ITAfC...3...18K.

- ↑ Katsigiannis, Stamos; Ramzan, Naeem (January 2018). "DREAMER: A Database for Emotion Recognition Through EEG and ECG Signals From Wireless Low-cost Off-the-Shelf Devices". IEEE Journal of Biomedical and Health Informatics 22 (1): 98–107. doi:10.1109/JBHI.2017.2688239. ISSN 2168-2194. PMID 28368836. Bibcode: 2018IJBHI..22...98K. https://myresearchspace.uws.ac.uk/ws/files/1077176/Accepted_Author_Manuscript.pdf. Retrieved 1 October 2019.

- ↑ Poria, Soujanya; Hazarika, Devamanyu; Majumder, Navonil; Naik, Gautam; Cambria, Erik; Mihalcea, Rada (2019). "MELD: A Multimodal Multi-Party Dataset for Emotion Recognition in Conversations". Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics (Stroudsburg, PA, USA: Association for Computational Linguistics): 527–536. doi:10.18653/v1/p19-1050.

- ↑ 29.0 29.1 Poria, S., Majumder, N., Mihalcea, R., & Hovy, E. (2019). Emotion recognition in conversation: Research challenges, datasets, and recent advances. IEEE Access, 7, 100943-100953.

- ↑ Stappen, Lukas; Schuller, Björn; Lefter, Iulia; Cambria, Erik; Kompatsiaris, Ioannis (2020). "Summary of MuSe 2020: Multimodal Sentiment Analysis, Emotion-target Engagement and Trustworthiness Detection in Real-life Media". Proceedings of the 28th ACM International Conference on Multimedia. Seattle, WA, USA: Association for Computing Machinery. pp. 4769–4770. doi:10.1145/3394171.3421901. ISBN 9781450379885.

- ↑ Ho, Vong (2020). "Emotion Recognition for Vietnamese Social Media Text". Computational Linguistics. Communications in Computer and Information Science. 1215. pp. 319–333. doi:10.1007/978-981-15-6168-9_27. ISBN 978-981-15-6167-2. https://link.springer.com/chapter/10.1007/978-981-15-6168-9_27.

- ↑ Arnau-González, Pablo; Katsigiannis, Stamos; Arevalillo-Herráez, Miguel; Ramzan, Naeem (February 2021). "BED: A new dataset for EEG-based biometrics". IEEE Internet of Things Journal (Early Access) (15): 12219. doi:10.1109/JIOT.2021.3061727. ISSN 2327-4662. Bibcode: 2021IITJ....812219A.

- ↑ "Affectiva". http://www.affectiva.com.

- ↑ Bossetta, Michael; Schmøkel, Rasmus (2023-01-02). "Cross-Platform Emotions and Audience Engagement in Social Media Political Campaigning: Comparing Candidates' Facebook and Instagram Images in the 2020 US Election" (in en). Political Communication 40 (1): 48–68. doi:10.1080/10584609.2022.2128949. ISSN 1058-4609.

- ↑ Peng, Yilang (January 2021). "What Makes Politicians' Instagram Posts Popular? Analyzing Social Media Strategies of Candidates and Office Holders with Computer Vision" (in en). The International Journal of Press/Politics 26 (1): 143–166. doi:10.1177/1940161220964769. ISSN 1940-1612. http://journals.sagepub.com/doi/10.1177/1940161220964769.

- ↑ Haim, Mario; Jungblut, Marc (2021-03-15). "Politicians' Self-depiction and Their News Portrayal: Evidence from 28 Countries Using Visual Computational Analysis" (in en). Political Communication 38 (1–2): 55–74. doi:10.1080/10584609.2020.1753869. ISSN 1058-4609. https://www.tandfonline.com/doi/full/10.1080/10584609.2020.1753869.

- ↑ Bossetta, Michael; Schmøkel, Rasmus (2023-01-02). "Cross-Platform Emotions and Audience Engagement in Social Media Political Campaigning: Comparing Candidates' Facebook and Instagram Images in the 2020 US Election" (in en). Political Communication 40 (1): 48–68. doi:10.1080/10584609.2022.2128949. ISSN 1058-4609.

- ↑ Won, Donghyeon; Steinert-Threlkeld, Zachary C.; Joo, Jungseock (2017-10-19). "Protest Activity Detection and Perceived Violence Estimation from Social Media Images". Proceedings of the 25th ACM international conference on Multimedia. MM '17. New York, NY, USA: Association for Computing Machinery. pp. 786–794. doi:10.1145/3123266.3123282. ISBN 978-1-4503-4906-2. https://doi.org/10.1145/3123266.3123282.

- ↑ Bushwick, Sophie. "This Video Watches You Back" (in en). https://www.scientificamerican.com/article/this-video-watches-you-back/.

- ↑ 40.0 40.1 DeMuth Jr., Chris (8 January 2016). "Apple Reads Your Mind". M&A Daily (Seeking Alpha). http://seekingalpha.com/article/3798766-apple-reads-your-mind.

- ↑ "nViso". http://www.nviso.ch.

- ↑ "Visage Technologies". https://visagetechnologies.com/products-and-services/visagesdk/faceanalysis/.

- ↑ "Feeling sad, angry? Your future car will know". http://www.cnet.com/roadshow/news/eyeris-emovu-detects-driver-emotions/.

- ↑ Varagur, Krithika (2016-03-22). "Cars May Soon Warn Drivers Before They Nod Off". Huffington Post. http://www.huffingtonpost.com/entry/drowsy-driving-warning-system_us_56eadd1be4b09bf44a9c96aa.

- ↑ Shivhare, S. N., & Khethawat, S. (2012). Emotion detection from text. arXiv preprint arXiv:1205.4944

- ↑ Ezhilarasi, R., & Minu, R. I. (2012). Automatic emotion recognition and classification. Procedia Engineering, 38, 21-26.

- ↑ Krcadinac, U., Pasquier, P., Jovanovic, J., & Devedzic, V. (2013). Synesketch: An open source library for sentence-based emotion recognition. IEEE Transactions on Affective Computing, 4(3), 312-325.

- ↑ Schmitt, M., Ringeval, F., & Schuller, B. W. (2016, September). At the Border of Acoustics and Linguistics: Bag-of-Audio-Words for the Recognition of Emotions in Speech. In Interspeech (pp. 495-499).

- ↑ Jose, Jiby Mariya; Jeeva, Jose (June 2023). "Energy-Reduced Bio-Inspired 1D-CNN for Audio Emotion Recognition". International Journal of Scientific Research in Computer Science, Engineering and Information Technology 11 (3): 1034–1054. doi:10.32628/CSEIT25113386. https://www.researchgate.net/publication/392929222. Retrieved 24 July 2025.

- ↑ Dhall, A., Goecke, R., Lucey, S., & Gedeon, T. (2012). Collecting large, richly annotated facial-expression databases from movies. IEEE multimedia, (3), 34-41.
