Beta-binomial distribution

|

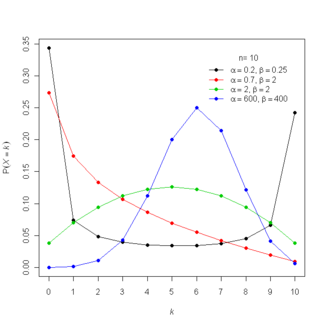

Probability mass function  | |||

|

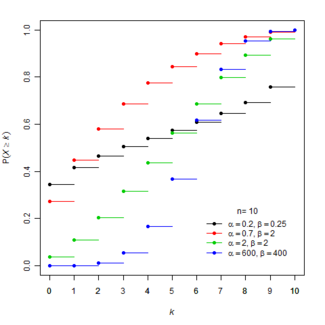

Cumulative distribution function  | |||

| Notation | |||

|---|---|---|---|

| Parameters |

n ∈ N0 — number of trials (real) (real) | ||

| Support | x ∈ { 0, …, n } | ||

| pmf |

where is the beta function | ||

| CDF |

where 3F2(a;b;x) is the generalized hypergeometric function | ||

| Mean | |||

| Variance | |||

| Skewness | |||

| Kurtosis | See text | ||

| MGF | where is the hypergeometric function | ||

| CF | |||

| PGF | |||

In probability theory and statistics, the beta-binomial distribution is a family of discrete probability distributions on a finite support of non-negative integers, arising when the probability of success in each of a fixed or known number of Bernoulli trials is either unknown or random. The beta-binomial distribution is the binomial distribution in which the probability of success is not fixed across the n trials but is randomly drawn from a beta distribution. It is frequently used in Bayesian statistics, empirical Bayes methods and classical statistics to capture overdispersion in binomially distributed data.

The beta-binomial is a one-dimensional version of the Dirichlet-multinomial distribution as the binomial and beta distributions are univariate versions of the multinomial and Dirichlet distributions respectively. The special case where α and β are integers is also known as the negative hypergeometric distribution.

Motivation and derivation

As a compound distribution

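The beta distribution is a conjugate distribution of the binomial distribution. This fact leads to an analytically tractable compound distribution where one can think of the parameter $p$ in the binomial distribution as being randomly drawn from a beta distribution. Suppose we were interested in predicting the number of heads, $x$, in $n$ future trials. This is given by

$$
\begin{aligned}
f(x \mid n, \alpha, \beta) &= \int_0^1 \mathrm{Bin}(x \mid n, p)\,\mathrm{Beta}(p \mid \alpha, \beta)\,dp \\
&= \binom{n}{x}\frac{1}{\mathrm{B}(\alpha,\beta)}\int_0^1 p^{x+\alpha-1}(1-p)^{n-x+\beta-1}\,dp \\
&= \binom{n}{x}\frac{\mathrm{B}(x+\alpha,\,n-x+\beta)}{\mathrm{B}(\alpha,\beta)}.
\end{aligned}
$$

Using the properties of the beta function, this can alternatively be written

$$
f(x \mid n, \alpha, \beta) = \frac{\Gamma(n+1)\,\Gamma(x+\alpha)\,\Gamma(n-x+\beta)}{\Gamma(n+\alpha+\beta)\,\Gamma(x+1)\,\Gamma(n-x+1)}\,\frac{\Gamma(\alpha+\beta)}{\Gamma(\alpha)\,\Gamma(\beta)}.
$$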
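As a minimal sketch, the compound pmf $\binom{n}{x}\mathrm{B}(x+\alpha, n-x+\beta)/\mathrm{B}(\alpha,\beta)$ can be evaluated on the log scale with the standard library's log-gamma function, which avoids overflow for large arguments. The function names `log_beta` and `betabinom_pmf` and the parameter values are illustrative choices, not part of any particular library.

```python
from math import lgamma, exp

def log_beta(a: float, b: float) -> float:
    """Natural log of the beta function B(a, b), via log-gamma."""
    return lgamma(a) + lgamma(b) - lgamma(a + b)

def betabinom_pmf(x: int, n: int, alpha: float, beta: float) -> float:
    """P(X = x) for X ~ BetaBin(n, alpha, beta); zero outside 0..n."""
    if x < 0 or x > n:
        return 0.0
    # log of the binomial coefficient C(n, x)
    log_choose = lgamma(n + 1) - lgamma(x + 1) - lgamma(n - x + 1)
    return exp(log_choose + log_beta(x + alpha, n - x + beta) - log_beta(alpha, beta))

# Illustrative parameters: n = 10 trials, alpha = 2, beta = 3
probs = [betabinom_pmf(x, 10, 2.0, 3.0) for x in range(11)]
total = sum(probs)  # should be 1 up to rounding error
```

With a single trial the pmf reduces to a Bernoulli distribution, so `betabinom_pmf(1, 1, 2.0, 3.0)` should equal $\alpha/(\alpha+\beta) = 0.4$ up to floating-point error.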

As an urn model

The beta-binomial distribution can also be motivated via an urn model for positive integer values of α and β, known as the Pólya urn model. Specifically, imagine an urn containing α red balls and β black balls, where random draws are made. If a red ball is observed, then two red balls are returned to the urn. Likewise, if a black ball is drawn, then two black balls are returned to the urn. If this is repeated n times, then the probability of observing x red balls follows a beta-binomial distribution with parameters n, α and β.

By contrast, if the random draws are with simple replacement (no balls over and above the observed ball are added to the urn), then the distribution follows a binomial distribution and if the random draws are made without replacement, the distribution follows a hypergeometric distribution.
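The urn description can be checked exactly for small cases. The sketch below propagates the distribution of the red-ball count one draw at a time using exact fractions, then compares it with the beta-binomial pmf written with rising factorials. The helper names (`polya_urn_distribution`, `rising`, `betabinom_exact`) and the urn composition are illustrative assumptions.

```python
from fractions import Fraction
from math import comb

def polya_urn_distribution(n, red, black):
    """Exact distribution of the number of red draws in n Polya-urn draws."""
    dist = {0: Fraction(1)}  # dist[x] = P(x red balls drawn so far)
    for t in range(n):
        nxt = {}
        total = red + black + t  # each draw adds one extra ball to the urn
        for x, pr in dist.items():
            p_red = Fraction(red + x, total)  # red balls now: red + x
            nxt[x + 1] = nxt.get(x + 1, 0) + pr * p_red
            nxt[x] = nxt.get(x, 0) + pr * (1 - p_red)
        dist = nxt
    return [dist.get(x, Fraction(0)) for x in range(n + 1)]

def rising(a, k):
    """Rising factorial a (a+1) ... (a+k-1) as an exact Fraction."""
    out = Fraction(1)
    for i in range(k):
        out *= a + i
    return out

def betabinom_exact(x, n, alpha, beta):
    """Beta-binomial pmf for integer alpha, beta, in exact arithmetic."""
    return comb(n, x) * rising(alpha, x) * rising(beta, n - x) / rising(alpha + beta, n)

urn = polya_urn_distribution(5, 2, 3)
bb = [betabinom_exact(x, 5, 2, 3) for x in range(6)]
```

For this urn (2 red, 3 black, 5 draws) the two lists agree term by term, which is what the urn interpretation predicts.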

Moments and properties

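The first three raw moments are

$$
\begin{aligned}
\mu_1 &= \frac{n\alpha}{\alpha+\beta} \\
\mu_2 &= \frac{n\alpha\,[n(1+\alpha)+\beta]}{(\alpha+\beta)(1+\alpha+\beta)} \\
\mu_3 &= \frac{n\alpha\,[n^2(1+\alpha)(2+\alpha) + 3n(1+\alpha)\beta + \beta(\beta-\alpha)]}{(\alpha+\beta)(1+\alpha+\beta)(2+\alpha+\beta)}
\end{aligned}
$$

and the kurtosis is

$$
\beta_2 = \frac{(\alpha+\beta)^2(1+\alpha+\beta)}{n\alpha\beta(\alpha+\beta+2)(\alpha+\beta+3)(\alpha+\beta+n)}\left[(\alpha+\beta)(\alpha+\beta-1+6n) + 3\alpha\beta(n-2) + 6n^2 - \frac{3\alpha\beta n(6-n)}{\alpha+\beta} - \frac{18\alpha\beta n^2}{(\alpha+\beta)^2}\right].
$$

Letting $p = \frac{\alpha}{\alpha+\beta}$ we note, suggestively, that the mean can be written as

$$
\mu = \frac{n\alpha}{\alpha+\beta} = np
$$

and the variance as

$$
\sigma^2 = \frac{n\alpha\beta(\alpha+\beta+n)}{(\alpha+\beta)^2(\alpha+\beta+1)} = np(1-p)\,\frac{\alpha+\beta+n}{\alpha+\beta+1} = np(1-p)\,[1+(n-1)\rho]
$$

where $\rho = \frac{1}{\alpha+\beta+1}$. The parameter $\rho$ is known as the "intra class" or "intra cluster" correlation. It is this positive correlation which gives rise to overdispersion. Note that when $n = 1$, no information is available to distinguish between the beta and binomial variation, and the two models have equal variances.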
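The mean and variance identities, $\mu = np$ and $\sigma^2 = np(1-p)[1+(n-1)\rho]$ with $p = \alpha/(\alpha+\beta)$ and $\rho = 1/(\alpha+\beta+1)$, can be verified numerically by summing over the pmf. The following is a sketch with arbitrarily chosen parameter values; `betabinom_pmf` is a local helper, not a library function.

```python
from math import lgamma, exp

def betabinom_pmf(x, n, alpha, beta):
    """P(X = x) for X ~ BetaBin(n, alpha, beta), computed on the log scale."""
    log_p = (lgamma(n + 1) - lgamma(x + 1) - lgamma(n - x + 1)
             + lgamma(x + alpha) + lgamma(n - x + beta) - lgamma(n + alpha + beta)
             + lgamma(alpha + beta) - lgamma(alpha) - lgamma(beta))
    return exp(log_p)

n, alpha, beta = 12, 2.5, 4.0  # illustrative values only
pmf = [betabinom_pmf(x, n, alpha, beta) for x in range(n + 1)]

# Moments obtained by direct summation over the support
mean = sum(x * q for x, q in enumerate(pmf))
var = sum((x - mean) ** 2 * q for x, q in enumerate(pmf))

# Closed forms in terms of p and the intra-class correlation rho
p = alpha / (alpha + beta)
rho = 1.0 / (alpha + beta + 1.0)
mean_formula = n * p
var_formula = n * p * (1 - p) * (1 + (n - 1) * rho)
binomial_var = n * p * (1 - p)  # variance of Bin(n, p), for comparison
```

Because $\rho > 0$ and $n > 1$ here, `var_formula` exceeds `binomial_var`, which is the overdispersion the text describes.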

Factorial moments

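The r-th factorial moment of a beta-binomial random variable X is

$$
\operatorname{E}\bigl[(X)_r\bigr] = \frac{n!}{(n-r)!}\,\frac{\mathrm{B}(\alpha+r,\,\beta)}{\mathrm{B}(\alpha,\beta)} = (n)_r\,\frac{\mathrm{B}(\alpha+r,\,\beta)}{\mathrm{B}(\alpha,\beta)},
$$

where $(X)_r = X(X-1)\cdots(X-r+1)$ denotes the falling factorial.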

Point estimates

Method of moments

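The method of moments estimates can be obtained by noting the first and second raw moments of the beta-binomial,

$$
\mu_1 = \frac{n\alpha}{\alpha+\beta}, \qquad \mu_2 = \frac{n\alpha\,[n(1+\alpha)+\beta]}{(\alpha+\beta)(1+\alpha+\beta)},
$$

and setting those equal to the sample moments $m_1$ and $m_2$. Solving for $\alpha$ and $\beta$, we find

$$
\hat\alpha = \frac{n m_1 - m_2}{n\left(\frac{m_2}{m_1} - m_1 - 1\right) + m_1}, \qquad
\hat\beta = \frac{(n - m_1)\left(n - \frac{m_2}{m_1}\right)}{n\left(\frac{m_2}{m_1} - m_1 - 1\right) + m_1}.
$$

These estimates can be nonsensically negative, which is evidence that the data are either undispersed or underdispersed relative to the binomial distribution. In this case, the binomial distribution and the hypergeometric distribution are alternative candidates, respectively.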
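The moment-matching step can be sketched in a few lines. The function below solves $\mu_1 = n\alpha/(\alpha+\beta)$ and $\mu_2 = n\alpha[n(1+\alpha)+\beta]/((\alpha+\beta)(1+\alpha+\beta))$ for $\alpha$ and $\beta$ given raw moments $m_1$, $m_2$; the name `betabinom_mom` is an illustrative choice. As a sanity check it is fed the exact population moments of a known beta-binomial, in which case it should return the true parameters.

```python
def betabinom_mom(m1: float, m2: float, n: int):
    """Method-of-moments estimates (alpha_hat, beta_hat) from raw moments m1, m2."""
    denom = n * (m2 / m1 - m1 - 1) + m1
    alpha_hat = (n * m1 - m2) / denom
    beta_hat = (n - m1) * (n - m2 / m1) / denom
    # Either estimate can be negative when the data are not overdispersed
    return alpha_hat, beta_hat

# Round trip: exact raw moments of BetaBin(10, 2, 3)
n, a, b = 10, 2.0, 3.0
m1 = n * a / (a + b)                                       # 4.0
m2 = n * a * (n * (1 + a) + b) / ((a + b) * (1 + a + b))   # 22.0
alpha_hat, beta_hat = betabinom_mom(m1, m2, n)
```

With real data, `m1` and `m2` would be the sample averages of the observations and of their squares.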

Maximum likelihood estimation

While closed-form maximum likelihood estimates are impractical, the pmf consists of common functions (gamma and/or beta functions), so the estimates can be found easily by direct numerical optimization. Maximum likelihood estimates from empirical data can also be computed using general methods for fitting multinomial Pólya distributions, which are described in (Minka 2003). The R package VGAM, through the function vglm, fits GLM-type models by maximum likelihood with responses distributed according to the beta-binomial distribution. There is no requirement that n be fixed throughout the observations.
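To illustrate what direct numerical optimization can look like, here is a minimal sketch that writes the log-likelihood with log-gamma functions and maximizes it with a simple compass (pattern) search over $(\log\alpha, \log\beta)$. The compass search is a stand-in chosen to keep the example dependency-free; it is not the method of Minka (2003) or of VGAM, and in practice a library optimizer would normally be used. The counts are hypothetical, and n is assumed fixed across observations for simplicity.

```python
from math import lgamma, log, exp

def log_lik(alpha, beta, n, counts):
    """Beta-binomial log-likelihood; counts[x] = number of observations equal to x."""
    const = lgamma(alpha + beta) - lgamma(alpha) - lgamma(beta) - lgamma(n + alpha + beta)
    total = 0.0
    for x, c in enumerate(counts):
        log_choose = lgamma(n + 1) - lgamma(x + 1) - lgamma(n - x + 1)
        total += c * (log_choose + lgamma(x + alpha) + lgamma(n - x + beta) + const)
    return total

def fit_mle(n, counts, start=(1.0, 1.0), step=1.0, tol=1e-6, max_iter=10000):
    """Compass search on (log alpha, log beta); returns (alpha, beta, log-likelihood)."""
    u, v = log(start[0]), log(start[1])
    best = log_lik(exp(u), exp(v), n, counts)
    for _ in range(max_iter):
        if step < tol:
            break
        improved = False
        for du, dv in ((step, 0), (-step, 0), (0, step), (0, -step)):
            cand = log_lik(exp(u + du), exp(v + dv), n, counts)
            if cand > best:  # accept the first improving move
                u, v, best, improved = u + du, v + dv, cand, True
                break
        if not improved:
            step /= 2  # no neighbour is better: refine the mesh
    return exp(u), exp(v), best

# Hypothetical overdispersed data with n = 4 trials per observation
counts = [10, 15, 20, 15, 10]
alpha_hat, beta_hat, ll_hat = fit_mle(4, counts)
```

Working on the log scale keeps both parameters positive without explicit constraints. If the data are not overdispersed the likelihood may have no interior maximum, and the search will simply drift toward large parameter values until `max_iter` or `tol` stops it.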

Example: Sex ratio heterogeneity

The following data gives the number of male children among the first 12 children of family size 13 in 6115 families taken from hospital records in 19th century Saxony (Sokal and Rohlf, p. 59 from Lindsey). The 13th child is ignored to blunt the effect of families non-randomly stopping when a desired gender is reached.

| Males | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Families | 3 | 24 | 104 | 286 | 670 | 1033 | 1343 | 1112 | 829 | 478 | 181 | 45 | 7 |

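The first two sample moments are

$$
m_1 = 6.23, \qquad m_2 = 42.31, \qquad n = 12
$$

and therefore the method of moments estimates are

$$
\hat\alpha = 34.1350, \qquad \hat\beta = 31.6085.
$$

The maximum likelihood estimates can be found numerically

$$
\hat\alpha_{\mathrm{mle}} = 34.09558, \qquad \hat\beta_{\mathrm{mle}} = 31.5715
$$

and the maximized log-likelihood is

$$
\log \mathcal{L} = -12492.9
$$

from which we find the AIC

$$
\mathrm{AIC} = 24989.74.
$$

The AIC for the competing binomial model is AIC = 25070.34, and thus we see that the beta-binomial model provides a superior fit to the data; that is, there is evidence for overdispersion. Trivers and Willard postulate a theoretical justification for heterogeneity in gender-proneness among mammalian offspring.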

The superior fit is especially evident in the tails:

| Males | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Observed Families | 3 | 24 | 104 | 286 | 670 | 1033 | 1343 | 1112 | 829 | 478 | 181 | 45 | 7 |
| Fitted Expected (Beta-Binomial) | 2.3 | 22.6 | 104.8 | 310.9 | 655.7 | 1036.2 | 1257.9 | 1182.1 | 853.6 | 461.9 | 177.9 | 43.8 | 5.2 |
| Fitted Expected (Binomial p = 0.519215) | 0.9 | 12.1 | 71.8 | 258.5 | 628.1 | 1085.2 | 1367.3 | 1265.6 | 854.2 | 410.0 | 132.8 | 26.1 | 2.3 |
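The arithmetic behind this example can be reproduced from the observed counts alone. The sketch below computes the sample moments, the method of moments estimates, and the expected family counts under both models. It uses the method of moments estimates rather than the maximum likelihood ones, so the beta-binomial expectations should be close to, but not necessarily identical with, the fitted row in the table. Variable and function names are illustrative.

```python
from math import lgamma, exp, comb

families = [3, 24, 104, 286, 670, 1033, 1343, 1112, 829, 478, 181, 45, 7]
n = 12                      # children considered per family
N = sum(families)           # number of families

# First two raw sample moments of the number of males
m1 = sum(x * f for x, f in enumerate(families)) / N
m2 = sum(x * x * f for x, f in enumerate(families)) / N

# Method of moments estimates for the beta-binomial
denom = n * (m2 / m1 - m1 - 1) + m1
alpha_hat = (n * m1 - m2) / denom
beta_hat = (n - m1) * (n - m2 / m1) / denom
p_hat = m1 / n              # binomial success probability estimate

def betabinom_pmf(x, n, alpha, beta):
    """P(X = x) for X ~ BetaBin(n, alpha, beta), computed on the log scale."""
    log_p = (lgamma(n + 1) - lgamma(x + 1) - lgamma(n - x + 1)
             + lgamma(x + alpha) + lgamma(n - x + beta) - lgamma(n + alpha + beta)
             + lgamma(alpha + beta) - lgamma(alpha) - lgamma(beta))
    return exp(log_p)

# Expected number of families with x males under each model
expected_bb = [N * betabinom_pmf(x, n, alpha_hat, beta_hat) for x in range(n + 1)]
expected_bin = [N * comb(n, x) * p_hat ** x * (1 - p_hat) ** (n - x) for x in range(n + 1)]
```

Comparing `expected_bb[0]` and `expected_bin[0]` with the 3 families observed with no males shows the binomial model underestimating the lower tail more severely than the beta-binomial model does.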

Role in Bayesian statistics

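The beta-binomial distribution plays a prominent role in the Bayesian estimation of a Bernoulli success probability $p$ which we wish to estimate based on data. Let $\mathbf{X} = \{X_1, X_2, \dots, X_{n_1}\}$ be a sample of independent and identically distributed Bernoulli random variables $X_i \sim \mathrm{Bernoulli}(p)$. Suppose our knowledge of $p$, in Bayesian fashion, is uncertain and is modeled by the prior distribution $p \sim \mathrm{Beta}(\alpha, \beta)$. If $Y_1 = \sum_{i=1}^{n_1} X_i$ then, through compounding, the prior predictive distribution of $Y_1$ is

$$
Y_1 \sim \mathrm{BetaBin}(n_1, \alpha, \beta).
$$

After observing $Y_1 = y_1$ we note that the posterior distribution for $p$ is

$$
\begin{aligned}
f(p \mid \mathbf{X}, \alpha, \beta) &\propto \left(\prod_{i=1}^{n_1} p^{x_i}(1-p)^{1-x_i}\right) p^{\alpha-1}(1-p)^{\beta-1} \\
&= C\,p^{\sum x_i + \alpha - 1}(1-p)^{n_1 - \sum x_i + \beta - 1} \\
&= C\,p^{y_1+\alpha-1}(1-p)^{n_1-y_1+\beta-1}
\end{aligned}
$$

where $C$ is a normalizing constant. We recognize the posterior distribution of $p$ as a $\mathrm{Beta}(y_1+\alpha,\, n_1-y_1+\beta)$.

Thus, again through compounding, we find that the posterior predictive distribution of the sum $Y_2$ of a future sample of size $n_2$ of $\mathrm{Bernoulli}(p)$ random variables is

$$
Y_2 \sim \mathrm{BetaBin}(n_2,\; y_1+\alpha,\; n_1-y_1+\beta).
$$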
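The conjugate update can be sketched directly: observing $y_1$ successes in $n_1$ trials turns a $\mathrm{Beta}(\alpha, \beta)$ prior into a $\mathrm{Beta}(y_1+\alpha, n_1-y_1+\beta)$ posterior, and the predictive distribution for $n_2$ future trials is the beta-binomial with those updated parameters. The function names and the numbers in the example are illustrative assumptions.

```python
from math import lgamma, exp

def betabinom_pmf(x, n, alpha, beta):
    """P(X = x) for X ~ BetaBin(n, alpha, beta), computed on the log scale."""
    log_p = (lgamma(n + 1) - lgamma(x + 1) - lgamma(n - x + 1)
             + lgamma(x + alpha) + lgamma(n - x + beta) - lgamma(n + alpha + beta)
             + lgamma(alpha + beta) - lgamma(alpha) - lgamma(beta))
    return exp(log_p)

def posterior_predictive(y2, n2, y1, n1, alpha, beta):
    """P(Y2 = y2 | y1 successes in n1 trials) under a Beta(alpha, beta) prior."""
    # Posterior is Beta(alpha + y1, beta + n1 - y1); compound it with Bin(n2, p)
    return betabinom_pmf(y2, n2, alpha + y1, beta + n1 - y1)

# Uniform prior Beta(1, 1), 7 successes observed in 10 trials,
# probability that the next single trial is a success
p_next = posterior_predictive(1, 1, 7, 10, 1.0, 1.0)
```

For a single future trial this reduces to the posterior mean $(y_1+\alpha)/(n_1+\alpha+\beta)$, which is $8/12$ in the example (Laplace's rule of succession under the uniform prior).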

Generating random variates

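To draw a beta-binomial random variate $X \sim \mathrm{BetaBin}(n, \alpha, \beta)$, simply draw $p \sim \mathrm{Beta}(\alpha, \beta)$ and then draw $X \sim \mathrm{B}(n, p)$.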
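A minimal sketch of this two-stage sampler using only the Python standard library follows. The binomial draw is implemented as a sum of Bernoulli trials for portability, which is fine for small n but slow for large n; the function name, seed, and parameter values are illustrative.

```python
import random

def betabinom_rvs(n: int, alpha: float, beta: float, rng: random.Random) -> int:
    """Draw one beta-binomial variate by compounding."""
    p = rng.betavariate(alpha, beta)                 # stage 1: p ~ Beta(alpha, beta)
    return sum(rng.random() < p for _ in range(n))   # stage 2: X ~ Bin(n, p)

rng = random.Random(0)  # fixed seed for reproducibility
draws = [betabinom_rvs(10, 2.0, 3.0, rng) for _ in range(20000)]
sample_mean = sum(draws) / len(draws)  # should be near n * alpha / (alpha + beta) = 4
```

Note that a fresh value of p is drawn for every variate; reusing one p across draws would produce ordinary binomial samples instead.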

Related distributions

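- $\mathrm{BetaBin}(1, \alpha, \beta) \sim \mathrm{Bernoulli}(p)$ where $p = \frac{\alpha}{\alpha+\beta}$.

- $\mathrm{BetaBin}(n, 1, 1) \sim U(0, n)$ where $U(a, b)$ is the discrete uniform distribution.

- If $X \sim \mathrm{BetaBin}(n, \alpha, \beta)$ then $(n - X) \sim \mathrm{BetaBin}(n, \beta, \alpha)$.

- $\lim_{s \to \infty} \mathrm{BetaBin}(n, ps, (1-p)s) \sim \mathrm{B}(n, p)$ where $p = \frac{\alpha}{\alpha+\beta}$ and $s = \alpha+\beta$ and $\mathrm{B}(n, p)$ is the binomial distribution.

- $\lim_{n \to \infty} \mathrm{BetaBin}(n, n\lambda, n^2) \sim \mathrm{Pois}(\lambda)$ where $\mathrm{Pois}(\lambda)$ is the Poisson distribution.

- $\lim_{n \to \infty} \mathrm{BetaBin}\left(n, 1, \frac{np}{1-p}\right) \sim \mathrm{Geom}(p)$ where $\mathrm{Geom}(p)$ is the geometric distribution.

- $\lim_{n \to \infty} \mathrm{BetaBin}\left(n, r, \frac{np}{1-p}\right) \sim \mathrm{NB}(r, p)$ where $\mathrm{NB}(r, p)$ is the negative binomial distribution.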
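Several of these relationships can be checked numerically for small cases. The sketch below tests the Bernoulli case, the discrete uniform case $\alpha = \beta = 1$, the reflection property $n - X \sim \mathrm{BetaBin}(n, \beta, \alpha)$, and the binomial limit for a large $s = \alpha + \beta$. The parameter values and the choice $s = 10^6$ are arbitrary illustrations, and the limit is only checked approximately.

```python
from math import lgamma, exp, comb

def betabinom_pmf(x, n, alpha, beta):
    """P(X = x) for X ~ BetaBin(n, alpha, beta), computed on the log scale."""
    log_p = (lgamma(n + 1) - lgamma(x + 1) - lgamma(n - x + 1)
             + lgamma(x + alpha) + lgamma(n - x + beta) - lgamma(n + alpha + beta)
             + lgamma(alpha + beta) - lgamma(alpha) - lgamma(beta))
    return exp(log_p)

n = 6

# BetaBin(1, alpha, beta) is Bernoulli with p = alpha / (alpha + beta)
bernoulli_p = betabinom_pmf(1, 1, 2.0, 3.0)

# BetaBin(n, 1, 1) is discrete uniform on 0..n
uniform = [betabinom_pmf(x, n, 1.0, 1.0) for x in range(n + 1)]

# Reflection: P(X = x | alpha, beta) equals P(X = n - x | beta, alpha)
reflection_gap = max(abs(betabinom_pmf(x, n, 2.0, 5.0) - betabinom_pmf(n - x, n, 5.0, 2.0))
                     for x in range(n + 1))

# Binomial limit: alpha = p*s, beta = (1-p)*s with s large
p, s = 0.3, 1e6
binomial_gap = max(abs(betabinom_pmf(x, n, p * s, (1 - p) * s)
                       - comb(n, x) * p ** x * (1 - p) ** (n - x))
                   for x in range(n + 1))
```

The first three quantities agree with their targets to floating-point accuracy, while `binomial_gap` shrinks as `s` grows.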

References

- Minka, Thomas P. (2003). Estimating a Dirichlet distribution. Microsoft Technical Report.

External links

- Using the Beta-binomial distribution to assess performance of a biometric identification device

- Fastfit contains Matlab code for fitting Beta-Binomial distributions (in the form of two-dimensional Pólya distributions) to data.

- Interactive graphic: Univariate Distribution Relationships

- Beta-binomial functions in VGAM R package

- Beta-binomial distribution in Sandia National Labs Cognitive Foundry Java library
