Conditional independence


In probability theory, conditional independence describes situations in which an observation is irrelevant or redundant when evaluating the certainty of a hypothesis. Conditional independence is usually formulated in terms of conditional probability, as a special case where the probability of the hypothesis given the uninformative observation is equal to the probability without it. If $A$ is the hypothesis, and $B$ and $C$ are observations, conditional independence can be stated as an equality:

$$P(A \mid B, C) = P(A \mid C)$$

where $P(A \mid B, C)$ is the probability of $A$ given both $B$ and $C$. Since the probability of $A$ given $C$ is the same as the probability of $A$ given both $B$ and $C$, this equality expresses that $B$ contributes nothing to the certainty of $A$. In this case, $A$ and $B$ are said to be conditionally independent given $C$, written symbolically as $(A \perp\!\!\!\perp B \mid C)$.

The concept of conditional independence is essential to graph-based theories of statistical inference, as it establishes a mathematical relation between a collection of conditional statements and a graphoid.

Conditional independence of events

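Let $A$, $B$, and $C$ be events. $A$ and $B$ are said to be conditionally independent given $C$ if and only if $P(C) > 0$ and $P(A \mid B, C) = P(A \mid C)$. This property is symmetric (more on this below) and is often written as $(A \perp\!\!\!\perp B \mid C)$, which should be read as $((A \perp\!\!\!\perp B) \mid C)$.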

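Equivalently, conditional independence may be stated as

$$P(A, B \mid C) = P(A \mid C)\,P(B \mid C)$$

where $P(A, B \mid C)$ is the joint probability of $A$ and $B$ given $C$. This alternate formulation states that $A$ and $B$ are independent events, given $C$. Because it is symmetric in $A$ and $B$, it also demonstrates that $(A \perp\!\!\!\perp B \mid C)$ is equivalent to $(B \perp\!\!\!\perp A \mid C)$.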

Proof of the equivalent definition
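The equivalence can be seen through the following chain. This is a sketch that assumes $P(B \cap C) > 0$, so every conditional probability in it is defined. Each step either applies $P(E \mid F) = P(E, F)/P(F)$ or multiplies or divides both sides by a positive quantity:

```latex
\begin{aligned}
P(A, B \mid C) = P(A \mid C)\,P(B \mid C)
&\iff \frac{P(A, B, C)}{P(C)} = \frac{P(A, C)}{P(C)} \cdot \frac{P(B, C)}{P(C)} \\
&\iff P(A, B, C) = \frac{P(A, C)\,P(B, C)}{P(C)} \\
&\iff \frac{P(A, B, C)}{P(B, C)} = \frac{P(A, C)}{P(C)} \\
&\iff P(A \mid B, C) = P(A \mid C)
\end{aligned}
```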

Examples

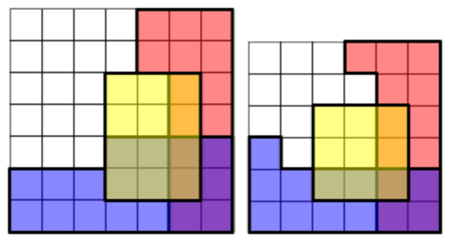

Coloured boxes

Each cell represents a possible outcome. The events $R$, $B$ and $Y$ are represented by the areas shaded red, blue and yellow respectively. The overlap between the events $R$ and $B$ is shaded purple.

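The probabilities of these events are shaded areas with respect to the total area. In both examples $R$ and $B$ are conditionally independent given $Y$ because:

$$\Pr(R \cap B \mid Y) = \Pr(R \mid Y)\,\Pr(B \mid Y)$$[1]

but not conditionally independent given $\overline{Y}$ (the complement of $Y$) because:

$$\Pr(R \cap B \mid \overline{Y}) \neq \Pr(R \mid \overline{Y})\,\Pr(B \mid \overline{Y})$$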
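The Python sketch below is a quick arithmetic check of the factorization for the left-hand picture. It uses the square counts given in footnote [1] and assumes every square has the same area, so probabilities are ratios of counts. The text does not state the counts outside the yellow area, so the $\overline{Y}$ case is not checked here.

```python
from fractions import Fraction

# Square counts inside the yellow area Y of the left-hand picture (footnote [1]).
# Assumption: every square has equal area, so probabilities are count ratios.
cells_in_y = 12
cells_r_and_b_in_y = 2  # purple squares (R ∩ B) inside Y
cells_r_in_y = 4
cells_b_in_y = 6

p_rb_given_y = Fraction(cells_r_and_b_in_y, cells_in_y)
p_r_given_y = Fraction(cells_r_in_y, cells_in_y)
p_b_given_y = Fraction(cells_b_in_y, cells_in_y)

# Conditional independence of R and B given Y: the conditional joint factorizes.
print(p_rb_given_y, p_r_given_y * p_b_given_y)  # 1/6 1/6
print(p_rb_given_y == p_r_given_y * p_b_given_y)  # True
```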

Proximity and delays

Let A and B be the events that person A and person B, respectively, will be home in time for dinner, where both people are randomly sampled from the entire world. Events A and B can be assumed to be independent, i.e. knowledge that A is late has minimal to no effect on the probability that B will be late. However, suppose a third event is introduced: person A and person B live in the same neighborhood. Given this event, the two events are no longer conditionally independent, because traffic conditions and weather-related events that might delay person A might delay person B as well. Given the third event and knowledge that person A was late, the probability that person B will be late does meaningfully change.[2]

Dice rolling

Conditional independence depends on the nature of the third event. If you roll two dice, one may assume that the two dice behave independently of each other. Looking at the result of one die will not tell you about the result of the second die. (That is, the two dice are independent.) Suppose, however, that the first die's result is a 3, and someone tells you about a third event: the sum of the two results is even. This extra unit of information restricts the options for the second result to an odd number. In other words, two events can be independent, but NOT conditionally independent.[2]
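The dice argument can be checked by brute-force enumeration. The Python sketch below uses one concrete reading of the example:

- A = "the first die shows 3"
- B = "the second die shows an odd number"
- C = "the sum is even"

It assumes fair dice, so all 36 outcomes are equally likely. It then compares $\Pr(A \cap B)$ with $\Pr(A)\Pr(B)$, first unconditionally and then given C. The helper name `prob` is hypothetical.

```python
from fractions import Fraction
from itertools import product

# All 36 equally likely outcomes (first die, second die) of two fair dice.
outcomes = list(product(range(1, 7), repeat=2))


def prob(event, given=lambda o: True):
    """Pr(event | given), computed by counting equally likely outcomes."""
    space = [o for o in outcomes if given(o)]
    return Fraction(sum(1 for o in space if event(o)), len(space))


first_is_3 = lambda o: o[0] == 3
second_is_odd = lambda o: o[1] % 2 == 1
both = lambda o: first_is_3(o) and second_is_odd(o)
sum_is_even = lambda o: (o[0] + o[1]) % 2 == 0

# Unconditionally the joint probability factorizes: the events are independent.
print(prob(both), prob(first_is_3) * prob(second_is_odd))  # 1/12 1/12

# Given an even sum it no longer factorizes: not conditionally independent.
print(prob(both, sum_is_even),
      prob(first_is_3, sum_is_even) * prob(second_is_odd, sum_is_even))  # 1/6 1/12
```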

Height and vocabulary

Height and vocabulary are dependent since very small people tend to be children, known for their more basic vocabularies. But knowing that two people are 19 years old (i.e., conditional on age) there is no reason to think that one person's vocabulary is larger if we are told that they are taller.

Conditional independence of random variables

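Two discrete random variables $X$ and $Y$ are conditionally independent given a third discrete random variable $Z$ if and only if they are independent in their conditional probability distribution given $Z$. That is, $X$ and $Y$ are conditionally independent given $Z$ if and only if, given any value of $Z$, the probability distribution of $X$ is the same for all values of $Y$ and the probability distribution of $Y$ is the same for all values of $X$. Formally:

$$(X \perp\!\!\!\perp Y) \mid Z \quad\iff\quad F_{X,Y \mid Z = z}(x, y) = F_{X \mid Z = z}(x) \cdot F_{Y \mid Z = z}(y) \quad \text{for all } x, y, z$$

where $F_{X,Y \mid Z = z}(x, y) = \Pr(X \le x, Y \le y \mid Z = z)$ is the conditional cumulative distribution function of $X$ and $Y$ given $Z$.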
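For variables with finitely many values, the CDF condition above is equivalent to factorizing the conditional probability mass function:

$$\Pr(X = x, Y = y \mid Z = z) = \Pr(X = x \mid Z = z)\,\Pr(Y = y \mid Z = z)$$

for every $z$ with $\Pr(Z = z) > 0$. The Python sketch below checks that factorization numerically for a joint pmf stored as a NumPy array indexed `p[x, y, z]`. The function name `is_cond_independent` and its tolerance are illustrative choices, not a standard API.

```python
import numpy as np


def is_cond_independent(p_xyz, atol=1e-12):
    """Check X ⊥ Y | Z for a joint pmf array indexed p_xyz[x, y, z]."""
    p_xyz = np.asarray(p_xyz, dtype=float)
    p_z = p_xyz.sum(axis=(0, 1))  # Pr(Z = z)
    mask = p_z > 0  # only condition on values Z actually takes
    p_xy_given_z = p_xyz[:, :, mask] / p_z[mask]  # Pr(X=x, Y=y | Z=z)
    p_x_given_z = p_xy_given_z.sum(axis=1, keepdims=True)  # Pr(X=x | Z=z)
    p_y_given_z = p_xy_given_z.sum(axis=0, keepdims=True)  # Pr(Y=y | Z=z)
    return np.allclose(p_xy_given_z, p_x_given_z * p_y_given_z, atol=atol)


# Given each value of Z, X and Y are drawn independently: conditionally independent.
pz = np.array([0.3, 0.7])
px_z = np.array([[0.9, 0.2], [0.1, 0.8]])  # column z holds Pr(X | Z=z)
py_z = np.array([[0.5, 0.6], [0.5, 0.4]])  # column z holds Pr(Y | Z=z)
p_ci = np.einsum("z,xz,yz->xyz", pz, px_z, py_z)
print(is_cond_independent(p_ci))  # True

# X = Y (a fair coin), with Z an unrelated fair coin: dependent given Z.
p_dep = np.zeros((2, 2, 2))
for v in (0, 1):
    p_dep[v, v, :] = 0.25
print(is_cond_independent(p_dep))  # False
```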

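Two events $R$ and $B$ are conditionally independent given a σ-algebra $\Sigma$ if

$$\Pr(R \cap B \mid \Sigma) = \Pr(R \mid \Sigma)\,\Pr(B \mid \Sigma) \quad \text{a.s.}$$

where $\Pr(A \mid \Sigma)$ denotes the conditional expectation of the indicator function of the event $A$, $\chi_A$, given the sigma algebra $\Sigma$. That is,

$$\Pr(A \mid \Sigma) := \operatorname{E}[\chi_A \mid \Sigma].$$

Two random variables $X$ and $Y$ are conditionally independent given a σ-algebra $\Sigma$ if the above equation holds for all $R$ in $\sigma(X)$ and $B$ in $\sigma(Y)$.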

Two random variables $X$ and $Y$ are conditionally independent given a random variable $W$ if they are independent given σ(W): the σ-algebra generated by $W$. This is commonly written:

- $X \perp\!\!\!\perp Y \mid W$ or

- $X \perp Y \mid W$

This is read "$X$ is independent of $Y$, given $W$"; the conditioning applies to the whole statement: "($X$ is independent of $Y$) given $W$".

$$(X \perp\!\!\!\perp Y) \mid W$$

This notation extends $X \perp\!\!\!\perp Y$ for "$X$ is independent of $Y$."

If $W$ assumes a countable set of values, this is equivalent to the conditional independence of $X$ and $Y$ for the events of the form $[W = w]$. Conditional independence of more than two events, or of more than two random variables, is defined analogously.

The following two examples show that $X \perp\!\!\!\perp Y$ neither implies nor is implied by $(X \perp\!\!\!\perp Y) \mid W$.

First, suppose $W$ is 0 with probability 0.5 and 1 otherwise. When $W = 0$, take $X$ and $Y$ to be independent, each having the value 0 with probability 0.99 and the value 1 otherwise. When $W = 1$, $X$ and $Y$ are again independent, but this time they take the value 1 with probability 0.99. Then $(X \perp\!\!\!\perp Y) \mid W$. But $X$ and $Y$ are dependent, because $\Pr(X = 0) < \Pr(X = 0 \mid Y = 0)$. This is because $\Pr(X = 0) = 0.5$, but if $Y = 0$ then it is very likely that $W = 0$ and thus that $X = 0$ as well, so $\Pr(X = 0 \mid Y = 0) > 0.5$.
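The numbers in the first example can be reproduced exactly with rational arithmetic. The Python sketch below does three things:

1. It encodes the joint distribution of $(X, Y, W)$ described above. The function name `joint` is hypothetical.
2. It confirms that $\Pr(X = 0) = 1/2$ while $\Pr(X = 0 \mid Y = 0) = 0.9802$.
3. It asserts that the conditional joint distribution factorizes for each value of $W$.

```python
from fractions import Fraction

VALS = (0, 1)
P_MATCH = Fraction(99, 100)  # Pr(X = W) and Pr(Y = W), given W


def joint(x, y, w):
    """Pr(X=x, Y=y, W=w): W is a fair coin; given W, X and Y are independent."""
    px = P_MATCH if x == w else 1 - P_MATCH
    py = P_MATCH if y == w else 1 - P_MATCH
    return Fraction(1, 2) * px * py


p_x0 = sum(joint(0, y, w) for y in VALS for w in VALS)
p_y0 = sum(joint(x, 0, w) for x in VALS for w in VALS)
p_x0_y0 = sum(joint(0, 0, w) for w in VALS)
print(p_x0, p_x0_y0 / p_y0)  # 1/2 4901/5000

# Given W = w, Pr(X=x, Y=y | W=w) factorizes for every x, y.
for w in VALS:
    p_w = sum(joint(x, y, w) for x in VALS for y in VALS)
    for x in VALS:
        for y in VALS:
            p_xy = joint(x, y, w) / p_w
            p_x = sum(joint(x, yy, w) for yy in VALS) / p_w
            p_y = sum(joint(xx, y, w) for xx in VALS) / p_w
            assert p_xy == p_x * p_y
```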

For the second example, suppose $X \perp\!\!\!\perp Y$, each taking the values 0 and 1 with probability 0.5. Let $W$ be the product $X \cdot Y$. Then when $W = 0$, $\Pr(X = 0) = 2/3$, but $\Pr(X = 0 \mid Y = 0) = 1/2$, so $(X \perp\!\!\!\perp Y) \mid W$ is false. This is also an example of explaining away. See Kevin Murphy's tutorial[3], where $X$ and $Y$ take the values "brainy" and "sporty".
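The second example can be enumerated the same way. In the Python sketch below, each of the four equally likely values of $(X, Y)$ determines $W = X \cdot Y$. Conditioning on $W = 0$ makes the observation $Y = 0$ change the probability of $X = 0$, even though $X$ and $Y$ are unconditionally independent. The helper name `prob` is hypothetical.

```python
from fractions import Fraction
from itertools import product

# The four equally likely values of (X, Y), stored as (x, y, w) with W = X * Y.
outcomes = [(x, y, x * y) for x, y in product((0, 1), repeat=2)]


def prob(event, given=lambda o: True):
    """Pr(event | given), computed by counting equally likely outcomes."""
    space = [o for o in outcomes if given(o)]
    return Fraction(sum(1 for o in space if event(o)), len(space))


x_is_0 = lambda o: o[0] == 0

# Unconditionally, learning Y = 0 says nothing about X.
print(prob(x_is_0), prob(x_is_0, lambda o: o[1] == 0))  # 1/2 1/2

# Given W = 0, learning Y = 0 does change the probability that X = 0.
p_x0_given_w0 = prob(x_is_0, lambda o: o[2] == 0)
p_x0_given_y0_w0 = prob(x_is_0, lambda o: o[1] == 0 and o[2] == 0)
print(p_x0_given_w0, p_x0_given_y0_w0)  # 2/3 1/2
```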

Conditional independence of random vectors

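Two random vectors $\mathbf{X} = (X_1, \ldots, X_l)^{\mathrm T}$ and $\mathbf{Y} = (Y_1, \ldots, Y_m)^{\mathrm T}$ are conditionally independent given a third random vector $\mathbf{Z} = (Z_1, \ldots, Z_n)^{\mathrm T}$ if and only if they are independent in their conditional cumulative distribution given $\mathbf{Z}$. Formally:

$$(\mathbf{X} \perp\!\!\!\perp \mathbf{Y}) \mid \mathbf{Z} \quad\iff\quad F_{\mathbf{X},\mathbf{Y} \mid \mathbf{Z} = \mathbf{z}}(\mathbf{x}, \mathbf{y}) = F_{\mathbf{X} \mid \mathbf{Z} = \mathbf{z}}(\mathbf{x}) \cdot F_{\mathbf{Y} \mid \mathbf{Z} = \mathbf{z}}(\mathbf{y}) \quad \text{for all } \mathbf{x}, \mathbf{y}, \mathbf{z}$$

where $\mathbf{x} = (x_1, \ldots, x_l)^{\mathrm T}$, $\mathbf{y} = (y_1, \ldots, y_m)^{\mathrm T}$ and $\mathbf{z} = (z_1, \ldots, z_n)^{\mathrm T}$, and the conditional cumulative distributions are defined as follows:

$$\begin{aligned}
F_{\mathbf{X},\mathbf{Y} \mid \mathbf{Z} = \mathbf{z}}(\mathbf{x}, \mathbf{y}) &= \Pr(X_1 \le x_1, \ldots, X_l \le x_l, Y_1 \le y_1, \ldots, Y_m \le y_m \mid Z_1 = z_1, \ldots, Z_n = z_n) \\
F_{\mathbf{X} \mid \mathbf{Z} = \mathbf{z}}(\mathbf{x}) &= \Pr(X_1 \le x_1, \ldots, X_l \le x_l \mid Z_1 = z_1, \ldots, Z_n = z_n) \\
F_{\mathbf{Y} \mid \mathbf{Z} = \mathbf{z}}(\mathbf{y}) &= \Pr(Y_1 \le y_1, \ldots, Y_m \le y_m \mid Z_1 = z_1, \ldots, Z_n = z_n)
\end{aligned}$$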

Uses in Bayesian inference

Let $p$ be the proportion of voters who will vote "yes" in an upcoming referendum. In taking an opinion poll, one chooses $n$ voters randomly from the population. For $i = 1, \ldots, n$, let $X_i = 1$ or $0$ according to whether or not the $i$th chosen voter will vote "yes".

In a frequentist approach to statistical inference, one would not attribute any probability distribution to $p$ (unless the probabilities could somehow be interpreted as relative frequencies of occurrence of some event or as proportions of some population), and one would say that $X_1, \ldots, X_n$ are independent random variables.

By contrast, in a Bayesian approach to statistical inference, one would assign a probability distribution to $p$ regardless of the non-existence of any such "frequency" interpretation, and one would construe the probabilities as degrees of belief that $p$ is in any interval to which a probability is assigned. In that model, the random variables $X_1, \ldots, X_n$ are not independent, but they are conditionally independent given the value of $p$. In particular, if a large number of the $X_i$ are observed to be equal to 1, that would imply a high conditional probability, given that observation, that $p$ is near 1. It would therefore also imply a high conditional probability, given that observation, that the next $X$ to be observed will be equal to 1.
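The Python sketch below makes the last point concrete. It assumes a uniform prior on $p$, i.e. a Beta(1, 1) distribution; the text does not fix a prior, and this choice is only for illustration. Given $p$, the $X_i$ are independent Bernoulli($p$) variables by construction. Integrating $p$ out gives the marginal probability of an observed 0/1 sequence as $B(1 + k, 1 + n - k)/B(1, 1)$, where $k$ is the number of ones and $B$ is the Beta function. The helper names `beta_fn`, `seq_prob` and `predictive_one` are hypothetical.

```python
from fractions import Fraction
from math import factorial


def beta_fn(a: int, b: int) -> Fraction:
    """Beta function for positive integers: B(a, b) = (a-1)! (b-1)! / (a+b-1)!."""
    return Fraction(factorial(a - 1) * factorial(b - 1), factorial(a + b - 1))


def seq_prob(xs, a: int = 1, b: int = 1) -> Fraction:
    """Marginal probability of one specific 0/1 sequence xs, with p integrated
    out against a Beta(a, b) prior (the uniform Beta(1, 1) is an assumption)."""
    k, n = sum(xs), len(xs)
    return beta_fn(a + k, b + n - k) / beta_fn(a, b)


def predictive_one(xs, a: int = 1, b: int = 1) -> Fraction:
    """Pr(next X = 1 | observed xs) = Pr(xs followed by 1) / Pr(xs)."""
    return seq_prob(list(xs) + [1], a, b) / seq_prob(xs, a, b)


# Marginally Pr(X1 = 1) = 1/2, but Pr(X2 = 1 | X1 = 1) = 2/3: not independent.
print(seq_prob([1]), predictive_one([1]))  # 1/2 2/3

# After nine "yes" answers out of ten, another "yes" is much more likely.
print(predictive_one([1] * 9 + [0]))  # 5/6
```

With the uniform prior, the predictive probability reduces to Laplace's rule of succession, $(k + 1)/(n + 2)$. This is why the marginal dependence between the $X_i$ grows with the number of "yes" answers observed.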

Rules of conditional independence

A set of rules governing statements of conditional independence has been derived from the basic definition.[4][5]

These rules were termed "Graphoid Axioms" by Pearl and Paz,[6] because they hold in graphs, where $X \perp\!\!\!\perp A \mid B$ is interpreted to mean: "All paths from $X$ to $A$ are intercepted by the set $B$".[7]

Symmetry

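$$X \perp\!\!\!\perp Y \quad\Rightarrow\quad Y \perp\!\!\!\perp X$$

Proof:

From the definition of conditional independence,

$$X \perp\!\!\!\perp Y \iff P(X, Y) = P(X)\,P(Y) \iff Y \perp\!\!\!\perp X$$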

Decomposition

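$$X \perp\!\!\!\perp Y \quad\Rightarrow\quad h(X) \perp\!\!\!\perp Y$$

for any (measurable) function $h$.

Proof: From the definition of conditional independence, we seek to show that:

$$X \perp\!\!\!\perp Y \quad\Rightarrow\quad P(h(X) = a, Y = y) = P(h(X) = a)\,P(Y = y).$$

The left side of this equality is:

$$P(h(X) = a, Y = y) = \sum_{x \,:\, h(x) = a} P(X = x, Y = y),$$

where the expression on the right side of this equality is the summation, over all $x$ such that $h(x) = a$, of the joint probability of $X = x$ and $Y = y$. Further decomposing,

$$\sum_{x \,:\, h(x) = a} P(X = x, Y = y) = \sum_{x \,:\, h(x) = a} P(X = x)\,P(Y = y) = P(Y = y) \sum_{x \,:\, h(x) = a} P(X = x) = P(h(X) = a)\,P(Y = y).$$

Special cases of this property include

- $(X, Y) \perp\!\!\!\perp Z \quad\Rightarrow\quad X \perp\!\!\!\perp Z$

- Proof: Let us define $A = (X, Y)$ and let $h(\cdot)$ be an 'extraction' function $h(X, Y) = X$. Then: $$(X, Y) \perp\!\!\!\perp Z \iff A \perp\!\!\!\perp Z \implies h(A) \perp\!\!\!\perp Z \iff X \perp\!\!\!\perp Z$$

- $(X, Y) \perp\!\!\!\perp Z \quad\Rightarrow\quad Y \perp\!\!\!\perp Z$

- Proof: Let us define $A = (X, Y)$ and let $h(\cdot)$ again be an 'extraction' function, now $h(X, Y) = Y$. Then: $$(X, Y) \perp\!\!\!\perp Z \iff A \perp\!\!\!\perp Z \implies h(A) \perp\!\!\!\perp Z \iff Y \perp\!\!\!\perp Z$$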
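The summation step of the decomposition proof can also be checked on a small example. The Python sketch below assumes a hypothetical joint distribution:

- $X$ is uniform on $\{0, \ldots, 5\}$.
- $X$ is independent of a binary $Y$ with $\Pr(Y = 1) = 1/3$.
- The function is $h(x) = x \bmod 2$.

It verifies $P(h(X) = a, Y = y) = P(h(X) = a)\,P(Y = y)$ by summing over the $x$ with $h(x) = a$, as in the proof.

```python
from fractions import Fraction
from itertools import product

# Hypothetical independent pair: X uniform on {0, ..., 5}, Y binary with Pr(Y=1) = 1/3.
p_x = {x: Fraction(1, 6) for x in range(6)}
p_y = {0: Fraction(2, 3), 1: Fraction(1, 3)}
joint = {(x, y): p_x[x] * p_y[y] for x, y in product(p_x, p_y)}


def h(x):
    """An arbitrary function of X; here, its parity."""
    return x % 2


for a, y in product((0, 1), p_y):
    # Left side: sum the joint probability over all x with h(x) = a.
    lhs = sum(joint[x, y] for x in p_x if h(x) == a)
    p_h = sum(p_x[x] for x in p_x if h(x) == a)  # Pr(h(X) = a)
    assert lhs == p_h * p_y[y]

print("h(X) and Y factorize for every a, y")
```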

Weak union

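$$X \perp\!\!\!\perp Y \quad\Rightarrow\quad X \perp\!\!\!\perp Y \mid h(X)$$

Proof:

Given $X \perp\!\!\!\perp Y$, we aim to show

$$P(Y \mid X, h(X)) = P(Y \mid h(X)).$$

We begin with the left side of the equation:

$$P(Y \mid X, h(X)) = P(Y \mid X),$$

since $h(X)$ is determined by $X$. From the given condition,

$$P(Y \mid X) = P(Y) = P(Y \mid h(X)),$$

where the last equality uses $h(X) \perp\!\!\!\perp Y$, which follows from decomposition. Thus $P(Y \mid X, h(X)) = P(Y \mid h(X))$. We have therefore shown that $Y \perp\!\!\!\perp X \mid h(X)$, and by symmetry $X \perp\!\!\!\perp Y \mid h(X)$.

Special cases:

Some textbooks present the property as

- $X \perp\!\!\!\perp (Y, W) \quad\Rightarrow\quad X \perp\!\!\!\perp Y \mid W$ [8].

- $(X, W) \perp\!\!\!\perp Y \quad\Rightarrow\quad X \perp\!\!\!\perp Y \mid W$.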

Both versions can be shown to follow from the weak union property given initially via the same method as in the decomposition section above.

Contraction

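$$\left.\begin{aligned} X &\perp\!\!\!\perp A \mid B \\ X &\perp\!\!\!\perp B \end{aligned}\right\} \quad\Rightarrow\quad X \perp\!\!\!\perp A, B$$

Proof

This property can be proved by noticing that $P(X \mid A, B) = P(X \mid B) = P(X)$. The two equalities are asserted by $X \perp\!\!\!\perp A \mid B$ and $X \perp\!\!\!\perp B$, respectively.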

Intersection

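For strictly positive probability distributions,[5] the following also holds:

$$\left.\begin{aligned} X &\perp\!\!\!\perp Y \mid W \\ X &\perp\!\!\!\perp W \mid Y \end{aligned}\right\} \quad\Rightarrow\quad X \perp\!\!\!\perp W, Y$$

Proof

By assumption:

$$P(X \mid Y, W) = P(X \mid W) \;\land\; P(X \mid Y, W) = P(X \mid Y) \implies P(X \mid Y) = P(X \mid W)$$

Strict positivity guarantees that every pair of values of $Y$ and $W$ has positive probability, so this equality holds for all such values. Using this equality, together with the law of total probability applied to $P(X)$:

$$P(X) = \sum_{w} P(X \mid W = w)\,P(W = w) = \sum_{w} P(X \mid Y)\,P(W = w) = P(X \mid Y) \sum_{w} P(W = w) = P(X \mid Y)$$

Since $P(X \mid Y, W) = P(X \mid Y)$ and $P(X \mid Y) = P(X)$, it follows that $P(X \mid Y, W) = P(X) \iff X \perp\!\!\!\perp Y, W$.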
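The strict-positivity requirement matters. The Python sketch below gives a simple counterexample: $X = Y = W$ all equal a single fair coin flip, so outcomes such as $Y = 0, W = 1$ have probability zero. Both premises hold for every conditioning event of positive probability. Yet $\Pr(X = 0) = 1/2$ while $\Pr(X = 0 \mid Y = 0, W = 0) = 1$, so $X \perp\!\!\!\perp W, Y$ fails. The helper names `joint` and `cond` are hypothetical.

```python
from fractions import Fraction

VALS = (0, 1)


def joint(x, y, w):
    """Pr(X=x, Y=y, W=w) when X = Y = W is one fair coin flip (not strictly positive)."""
    return Fraction(1, 2) if x == y == w else Fraction(0)


def cond(x, given):
    """Pr(X = x | event), where the event is a predicate on (y, w) with positive probability."""
    num = sum(joint(x, y, w) for y in VALS for w in VALS if given(y, w))
    den = sum(joint(xx, y, w) for xx in VALS for y in VALS for w in VALS if given(y, w))
    return num / den


# Both premises hold wherever the conditioning event has positive probability.
for y in VALS:
    for w in VALS:
        if sum(joint(x, y, w) for x in VALS) == 0:
            continue  # Pr(Y=y, W=w) = 0, so there is nothing to condition on
        for x in VALS:
            p_given_yw = cond(x, lambda yy, ww: yy == y and ww == w)
            assert p_given_yw == cond(x, lambda yy, ww: ww == w)  # X ⊥ Y | W
            assert p_given_yw == cond(x, lambda yy, ww: yy == y)  # X ⊥ W | Y

# ...but the conclusion X ⊥ (W, Y) fails.
p_x0 = cond(0, lambda yy, ww: True)
p_x0_given_yw = cond(0, lambda yy, ww: yy == 0 and ww == 0)
print(p_x0, p_x0_given_yw)  # 1/2 1
```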

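Technical note: since these implications hold for any probability space, they will still hold if one considers a sub-universe by conditioning everything on another variable, say $K$. For example, $X \perp\!\!\!\perp Y \Rightarrow Y \perp\!\!\!\perp X$ would also mean that $X \perp\!\!\!\perp Y \mid K \Rightarrow Y \perp\!\!\!\perp X \mid K$.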


References

- [1] To see that this is the case, one needs to realise that Pr(R ∩ B | Y) is the probability of an overlap of R and B (the purple shaded area) in the Y area. In the picture on the left, there are two squares where R and B overlap within the Y area, and the Y area has twelve squares, so Pr(R ∩ B | Y) = 2/12 = 1/6. Similarly, Pr(R | Y) = 4/12 = 1/3 and Pr(B | Y) = 6/12 = 1/2, so Pr(R | Y) · Pr(B | Y) = 1/3 · 1/2 = 1/6 = Pr(R ∩ B | Y).

- [2] Could someone explain conditional independence?

- [3] Murphy, Kevin. "Graphical Models". http://people.cs.ubc.ca/~murphyk/Bayes/bnintro.html.

- [4] Dawid, A. P. (1979). "Conditional Independence in Statistical Theory". Journal of the Royal Statistical Society, Series B 41 (1): 1–31.

- [5] Pearl, Judea (2000). Causality: Models, Reasoning, and Inference. Cambridge University Press.

- [6] du Boulay, Benedict; Hogg, David C.; Steels, Luc, eds. (1986). "Advances in Artificial Intelligence II, Seventh European Conference on Artificial Intelligence, ECAI 1986, Brighton, UK, July 20–25, 1986, Proceedings". North-Holland. pp. 357–363. https://ftp.cs.ucla.edu/pub/stat_ser/r53-L.pdf.

- [7] Pearl, Judea (1988). Probabilistic Reasoning in Intelligent Systems: Networks of Plausible Inference. Morgan Kaufmann. ISBN 9780934613736. https://archive.org/details/probabilisticrea00pear.

- [8] Koller, Daphne; Friedman, Nir (2009). Probabilistic Graphical Models. Cambridge, MA: The MIT Press. ISBN 9780262013192.
