Distributed computing

A distributed system is a system whose components are located on different networked computers, which communicate and coordinate their actions by passing messages to one another.[1][2] Distributed computing is a field of computer science that studies distributed systems.

The components of a distributed system interact with one another in order to achieve a common goal. Three significant challenges of distributed systems are: maintaining concurrency of components, overcoming the lack of a global clock, and managing the independent failure of components.[1] When a component of one system fails, the entire system does not fail.[3] Examples of distributed systems vary from SOA-based systems to massively multiplayer online games to peer-to-peer applications.

A computer program that runs within a distributed system is called a distributed program,[4] and distributed programming is the process of writing such programs.[5] There are many different types of implementations for the message passing mechanism, including pure HTTP, RPC-like connectors and message queues.[6]

Distributed computing also refers to the use of distributed systems to solve computational problems. In distributed computing, a problem is divided into many tasks, each of which is solved by one or more computers,[7] which communicate with each other via message passing.[8]

Introduction

The word distributed in terms such as "distributed system", "distributed programming", and "distributed algorithm" originally referred to computer networks where individual computers were physically distributed within some geographical area.[9] The terms are nowadays used in a much wider sense, even referring to autonomous processes that run on the same physical computer and interact with each other by message passing.[8]

While there is no single definition of a distributed system,[10] the following defining properties are commonly used as:

- There are several autonomous computational entities (computers or nodes), each of which has its own local memory.[11]

- The entities communicate with each other by message passing.[12]

A distributed system may have a common goal, such as solving a large computational problem;[13] the user then perceives the collection of autonomous processors as a unit. Alternatively, each computer may have its own user with individual needs, and the purpose of the distributed system is to coordinate the use of shared resources or provide communication services to the users.[14]

Other typical properties of distributed systems include the following:

- The system has to tolerate failures in individual computers.[15]

- The structure of the system (network topology, network latency, number of computers) is not known in advance, the system may consist of different kinds of computers and network links, and the system may change during the execution of a distributed program.[16]

- Each computer has only a limited, incomplete view of the system. Each computer may know only one part of the input.[17]

Parallel and distributed computing

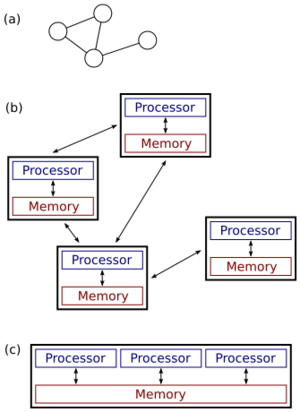

(c): a parallel system.

Distributed systems are groups of networked computers which share a common goal for their work. The terms "concurrent computing", "parallel computing", and "distributed computing" have much overlap, and no clear distinction exists between them.[18] The same system may be characterized both as "parallel" and "distributed"; the processors in a typical distributed system run concurrently in parallel.[19] Parallel computing may be seen as a particularly tightly coupled form of distributed computing,[20] and distributed computing may be seen as a loosely coupled form of parallel computing.[10] Nevertheless, it is possible to roughly classify concurrent systems as "parallel" or "distributed" using the following criteria:

- In parallel computing, all processors may have access to a shared memory to exchange information between processors.[21]

- In distributed computing, each processor has its own private memory (distributed memory). Information is exchanged by passing messages between the processors.[22]

The figure on the right illustrates the difference between distributed and parallel systems. Figure (a) is a schematic view of a typical distributed system; the system is represented as a network topology in which each node is a computer and each line connecting the nodes is a communication link. Figure (b) shows the same distributed system in more detail: each computer has its own local memory, and information can be exchanged only by passing messages from one node to another by using the available communication links. Figure (c) shows a parallel system in which each processor has a direct access to a shared memory.

The situation is further complicated by the traditional uses of the terms parallel and distributed algorithm that do not quite match the above definitions of parallel and distributed systems (see below for more detailed discussion). Nevertheless, as a rule of thumb, high-performance parallel computation in a shared-memory multiprocessor uses parallel algorithms while the coordination of a large-scale distributed system uses distributed algorithms.[23]

History

The use of concurrent processes which communicate through message-passing has its roots in operating system architectures studied in the 1960s.[24] The first widespread distributed systems were local-area networks such as Ethernet, which was invented in the 1970s.[25]

ARPANET, one of the predecessors of the Internet, was introduced in the late 1960s, and ARPANET e-mail was invented in the early 1970s. E-mail became the most successful application of ARPANET,[26] and it is probably the earliest example of a large-scale distributed application. In addition to ARPANET (and its successor, the global Internet), other early worldwide computer networks included Usenet and FidoNet from the 1980s, both of which were used to support distributed discussion systems.[27]

The study of distributed computing became its own branch of computer science in the late 1970s and early 1980s. The first conference in the field, Symposium on Principles of Distributed Computing (PODC), dates back to 1982, and its counterpart International Symposium on Distributed Computing (DISC) was first held in Ottawa in 1985 as the International Workshop on Distributed Algorithms on Graphs.[28]

Architectures

Various hardware and software architectures are used for distributed computing. At a lower level, it is necessary to interconnect multiple CPUs with some sort of network, regardless of whether that network is printed onto a circuit board or made up of loosely coupled devices and cables. At a higher level, it is necessary to interconnect processes running on those CPUs with some sort of communication system.[29]

Distributed programming typically falls into one of several basic architectures: client–server, three-tier, n-tier, or peer-to-peer; or categories: loose coupling, or tight coupling.[30]

- Client–server: architectures where smart clients contact the server for data then format and display it to the users. Input at the client is committed back to the server when it represents a permanent change.

- Three-tier: architectures that move the client intelligence to a middle tier so that stateless clients can be used. This simplifies application deployment. Most web applications are three-tier.

- n-tier: architectures that refer typically to web applications which further forward their requests to other enterprise services. This type of application is the one most responsible for the success of application servers.

- Peer-to-peer: architectures where there are no special machines that provide a service or manage the network resources.[31]: 227 Instead all responsibilities are uniformly divided among all machines, known as peers. Peers can serve both as clients and as servers.[32] Examples of this architecture include BitTorrent and the bitcoin network.

Another basic aspect of distributed computing architecture is the method of communicating and coordinating work among concurrent processes. Through various message passing protocols, processes may communicate directly with one another, typically in a main/sub relationship. Alternatively, a "database-centric" architecture can enable distributed computing to be done without any form of direct inter-process communication, by utilizing a shared database.[33] Database-centric architecture in particular provides relational processing analytics in a schematic architecture allowing for live environment relay. This enables distributed computing functions both within and beyond the parameters of a networked database.[34]

Applications

Reasons for using distributed systems and distributed computing may include:

- The very nature of an application may require the use of a communication network that connects several computers: for example, data produced in one physical location and required in another location.

- There are many cases in which the use of a single computer would be possible in principle, but the use of a distributed system is beneficial for practical reasons. For example:

- It can allow for much larger storage and memory, faster compute, and higher bandwidth than a single machine.

- It can provide more reliability than a non-distributed system, as there is no single point of failure. Moreover, a distributed system may be easier to expand and manage than a monolithic uniprocessor system.[35]

- It may be more cost-efficient to obtain the desired level of performance by using a cluster of several low-end computers, in comparison with a single high-end computer.

Examples

Examples of distributed systems and applications of distributed computing include the following:[36]

- telecommunication networks:

- telephone networks and cellular networks,

- computer networks such as the Internet,

- wireless sensor networks,

- routing algorithms;

- network applications:

- World Wide Web and peer-to-peer networks,

- massively multiplayer online games and virtual reality communities,

- distributed databases and distributed database management systems,

- network file systems,

- distributed cache such as burst buffers,

- distributed information processing systems such as banking systems and airline reservation systems;

- real-time process control:

- aircraft control systems,

- industrial control systems;

- parallel computation:

- scientific computing, including cluster computing, grid computing, cloud computing,[37] and various volunteer computing projects,

- distributed rendering in computer graphics.

- peer-to-peer

Theoretical foundations

Models

Many tasks that we would like to automate by using a computer are of question–answer type: we would like to ask a question and the computer should produce an answer. In theoretical computer science, such tasks are called computational problems. Formally, a computational problem consists of instances together with a solution for each instance. Instances are questions that we can ask, and solutions are desired answers to these questions.

Theoretical computer science seeks to understand which computational problems can be solved by using a computer (computability theory) and how efficiently (computational complexity theory). Traditionally, it is said that a problem can be solved by using a computer if we can design an algorithm that produces a correct solution for any given instance. Such an algorithm can be implemented as a computer program that runs on a general-purpose computer: the program reads a problem instance from input, performs some computation, and produces the solution as output. Formalisms such as random-access machines or universal Turing machines can be used as abstract models of a sequential general-purpose computer executing such an algorithm.[38][39]

The field of concurrent and distributed computing studies similar questions in the case of either multiple computers, or a computer that executes a network of interacting processes: which computational problems can be solved in such a network and how efficiently? However, it is not at all obvious what is meant by "solving a problem" in the case of a concurrent or distributed system: for example, what is the task of the algorithm designer, and what is the concurrent or distributed equivalent of a sequential general-purpose computer?[citation needed]

The discussion below focuses on the case of multiple computers, although many of the issues are the same for concurrent processes running on a single computer.

Three viewpoints are commonly used:

- Parallel algorithms in shared-memory model

- All processors have access to a shared memory. The algorithm designer chooses the program executed by each processor.

- One theoretical model is the parallel random-access machines (PRAM) that are used.[40] However, the classical PRAM model assumes synchronous access to the shared memory.

- Shared-memory programs can be extended to distributed systems if the underlying operating system encapsulates the communication between nodes and virtually unifies the memory across all individual systems.

- A model that is closer to the behavior of real-world multiprocessor machines and takes into account the use of machine instructions, such as Compare-and-swap (CAS), is that of asynchronous shared memory. There is a wide body of work on this model, a summary of which can be found in the literature.[41][42]

- Parallel algorithms in message-passing model

- The algorithm designer chooses the structure of the network, as well as the program executed by each computer.

- Models such as Boolean circuits and sorting networks are used.[43] A Boolean circuit can be seen as a computer network: each gate is a computer that runs an extremely simple computer program. Similarly, a sorting network can be seen as a computer network: each comparator is a computer.

- Distributed algorithms in message-passing model

- The algorithm designer only chooses the computer program. All computers run the same program. The system must work correctly regardless of the structure of the network.

- A commonly used model is a graph with one finite-state machine per node.

In the case of distributed algorithms, computational problems are typically related to graphs. Often the graph that describes the structure of the computer network is the problem instance. This is illustrated in the following example.[44]

An example

Consider the computational problem of finding a coloring of a given graph G. Different fields might take the following approaches:

- Centralized algorithms[44]

- The graph G is encoded as a string, and the string is given as input to a computer. The computer program finds a coloring of the graph, encodes the coloring as a string, and outputs the result.

- Parallel algorithms

- Again, the graph G is encoded as a string. However, multiple computers can access the same string in parallel. Each computer might focus on one part of the graph and produce a coloring for that part.

- The main focus is on high-performance computation that exploits the processing power of multiple computers in parallel.

- Distributed algorithms

- The graph G is the structure of the computer network. There is one computer for each node of G and one communication link for each edge of G. Initially, each computer only knows about its immediate neighbors in the graph G; the computers must exchange messages with each other to discover more about the structure of G. Each computer must produce its own color as output.

- The main focus is on coordinating the operation of an arbitrary distributed system.[44]

While the field of parallel algorithms has a different focus than the field of distributed algorithms, there is much interaction between the two fields. For example, the Cole–Vishkin algorithm for graph coloring[45] was originally presented as a parallel algorithm, but the same technique can also be used directly as a distributed algorithm.

Moreover, a parallel algorithm can be implemented either in a parallel system (using shared memory) or in a distributed system (using message passing).[46] The traditional boundary between parallel and distributed algorithms (choose a suitable network vs. run in any given network) does not lie in the same place as the boundary between parallel and distributed systems (shared memory vs. message passing).

Complexity measures

In parallel algorithms, yet another resource in addition to time and space is the number of computers. Indeed, often there is a trade-off between the running time and the number of computers: the problem can be solved faster if there are more computers running in parallel (see speedup). If a decision problem can be solved in polylogarithmic time by using a polynomial number of processors, then the problem is said to be in the class NC.[47] The class NC can be defined equally well by using the PRAM formalism or Boolean circuits—PRAM machines can simulate Boolean circuits efficiently and vice versa.[48]

In the analysis of distributed algorithms, more attention is usually paid on communication operations than computational steps. Perhaps the simplest model of distributed computing is a synchronous system where all nodes operate in a lockstep fashion. This model is commonly known as the LOCAL model. During each communication round, all nodes in parallel (1) receive the latest messages from their neighbours, (2) perform arbitrary local computation, and (3) send new messages to their neighbors. In such systems, a central complexity measure is the number of synchronous communication rounds required to complete the task.[49]

This complexity measure is closely related to the diameter of the network. Let D be the diameter of the network. On the one hand, any computable problem can be solved trivially in a synchronous distributed system in approximately 2D communication rounds: simply gather all information in one location (D rounds), solve the problem, and inform each node about the solution (D rounds).

On the other hand, if the running time of the algorithm is much smaller than D communication rounds, then the nodes in the network must produce their output without having the possibility to obtain information about distant parts of the network. In other words, the nodes must make globally consistent decisions based on information that is available in their local D-neighbourhood. Many distributed algorithms are known with the running time much smaller than D rounds, and understanding which problems can be solved by such algorithms is one of the central research questions of the field.[50] Typically an algorithm which solves a problem in polylogarithmic time in the network size is considered efficient in this model.

Another commonly used measure is the total number of bits transmitted in the network (cf. communication complexity).[51] The features of this concept are typically captured with the CONGEST(B) model, which is similarly defined as the LOCAL model, but where single messages can only contain B bits.

Other problems

Traditional computational problems take the perspective that the user asks a question, a computer (or a distributed system) processes the question, then produces an answer and stops. However, there are also problems where the system is required not to stop, including the dining philosophers problem and other similar mutual exclusion problems. In these problems, the distributed system is supposed to continuously coordinate the use of shared resources so that no conflicts or deadlocks occur.

There are also fundamental challenges that are unique to distributed computing, for example those related to fault-tolerance. Examples of related problems include consensus problems,[52] Byzantine fault tolerance,[53] and self-stabilisation.[54]

Much research is also focused on understanding the asynchronous nature of distributed systems:

- Synchronizers can be used to run synchronous algorithms in asynchronous systems.[55]

- Logical clocks provide a causal happened-before ordering of events.[56]

- Clock synchronization algorithms provide globally consistent physical time stamps.[57]

Coordinator election (or leader election) is the process of designating a single process as the organizer of some task distributed among several computers (nodes). Before the task is begun, all network nodes are either unaware which node will serve as the "coordinator" (or leader) of the task, or unable to communicate with the current coordinator. After a coordinator election algorithm has been run, however, each node throughout the network recognizes a particular, unique node as the task coordinator.[58]

The network nodes communicate among themselves in order to decide which of them will get into the "coordinator" state. For that, they need some method in order to break the symmetry among them. For example, if each node has unique and comparable identities, then the nodes can compare their identities, and decide that the node with the highest identity is the coordinator.[58]

The definition of this problem is often attributed to LeLann, who formalized it as a method to create a new token in a token ring network in which the token has been lost.[59]

Coordinator election algorithms are designed to be economical in terms of total bytes transmitted, and time. The algorithm suggested by Gallager, Humblet, and Spira [60] for general undirected graphs has had a strong impact on the design of distributed algorithms in general, and won the Dijkstra Prize for an influential paper in distributed computing.

Many other algorithms were suggested for different kinds of network graphs, such as undirected rings, unidirectional rings, complete graphs, grids, directed Euler graphs, and others. A general method that decouples the issue of the graph family from the design of the coordinator election algorithm was suggested by Korach, Kutten, and Moran.[61]

In order to perform coordination, distributed systems employ the concept of coordinators. The coordinator election problem is to choose a process from among a group of processes on different processors in a distributed system to act as the central coordinator. Several central coordinator election algorithms exist.[62]

Properties of distributed systems

So far the focus has been on designing a distributed system that solves a given problem. A complementary research problem is studying the properties of a given distributed system.[63][64]

The halting problem is an analogous example from the field of centralised computation: we are given a computer program and the task is to decide whether it halts or runs forever. The halting problem is undecidable in the general case, and naturally understanding the behaviour of a computer network is at least as hard as understanding the behaviour of one computer.[65]

However, there are many interesting special cases that are decidable. In particular, it is possible to reason about the behaviour of a network of finite-state machines. One example is telling whether a given network of interacting (asynchronous and non-deterministic) finite-state machines can reach a deadlock. This problem is PSPACE-complete,[66] i.e., it is decidable, but not likely that there is an efficient (centralised, parallel or distributed) algorithm that solves the problem in the case of large networks.

See also

- Actor model

- AppScale

- BOINC

- Code mobility

- Dataflow programming

- Decentralized computing

- Distributed algorithm

- Distributed algorithmic mechanism design

- Distributed cache

- Distributed GIS

- Distributed networking

- Distributed operating system

- Eventual consistency

- Edsger W. Dijkstra Prize in Distributed Computing

- Federation (information technology)

- Fog computing

- Folding@home

- Grid computing

- Inferno

- Internet GIS

- Jungle computing

- Layered queueing network

- Library Oriented Architecture (LOA)

- List of distributed computing conferences

- List of volunteer computing projects

- Model checking

- OpenHarmony

- HarmonyOS

- Parallel distributed processing

- Parallel programming model

- Plan 9 from Bell Labs

- Shared nothing architecture

- Web GIS

Notes

- ↑ 1.0 1.1 Tanenbaum, Andrew S.; Steen, Maarten van (2002). Distributed systems: principles and paradigms. Upper Saddle River, NJ: Pearson Prentice Hall. ISBN 0-13-088893-1. https://www.distributed-systems.net/index.php/books/ds3/. Retrieved 2020-08-28.

- ↑ "Distributed Programs". Texts in Computer Science. London: Springer London. 2010. pp. 373–406. doi:10.1007/978-1-84882-745-5_11. ISBN 978-1-84882-744-8. "Systems consist of a number of physically distributed components that work independently using their private storage, but also communicate from time to time by explicit message passing. Such systems are called distributed systems."

- ↑ Dusseau & Dusseau 2016, p. 1–2.

- ↑ "Distributed Programs". Texts in Computer Science. London: Springer London. 2010. pp. 373–406. doi:10.1007/978-1-84882-745-5_11. ISBN 978-1-84882-744-8. "Distributed programs are abstract descriptions of distributed systems. A distributed program consists of a collection of processes that work concurrently and communicate by explicit message passing. Each process can access a set of variables which are disjoint from the variables that can be changed by any other process."

- ↑ (Andrews 2000). (Dolev 2000). (Ghosh 2007), p. 10.

- ↑ Magnoni, L. (2015). "Modern Messaging for Distributed Sytems (sic)" (in en). Journal of Physics: Conference Series 608 (1): 012038. doi:10.1088/1742-6596/608/1/012038. ISSN 1742-6596.

- ↑ (Godfrey 2002).

- ↑ 8.0 8.1 (Andrews 2000), p. 291–292. (Dolev 2000), p. 5.

- ↑ (Lynch 1996), p. 1.

- ↑ 10.0 10.1 (Ghosh 2007), p. 10.

- ↑ (Andrews 2000), pp. 8–9, 291. (Dolev 2000), p. 5. (Ghosh 2007), p. 3. (Lynch 1996), p. xix, 1. (Peleg 2000), p. xv.

- ↑ (Andrews 2000), p. 291. (Ghosh 2007), p. 3. (Peleg 2000), p. 4.

- ↑ (Ghosh 2007), p. 3–4. (Peleg 2000), p. 1.

- ↑ (Ghosh 2007), p. 4. (Peleg 2000), p. 2.

- ↑ (Ghosh 2007), p. 4, 8. (Lynch 1996), p. 2–3. (Peleg 2000), p. 4.

- ↑ (Lynch 1996), p. 2. (Peleg 2000), p. 1.

- ↑ (Ghosh 2007), p. 7. (Lynch 1996), p. xix, 2. (Peleg 2000), p. 4.

- ↑ (Ghosh 2007), p. 10. (Keidar 2008).

- ↑ (Lynch 1996), p. xix, 1–2. (Peleg 2000), p. 1.

- ↑ (Peleg 2000), p. 1.

- ↑ (Papadimitriou 1994), Chapter 15. (Keidar 2008).

- ↑ See references in Introduction.

- ↑ Bentaleb, A.; Yifan, L. (2016). "Parallel and Distributed Algorithms". National University of Singapore. http://www.comp.nus.edu.sg/~rahul/allfiles/cs6234-16-pds.pdf.

- ↑ (Andrews 2000), p. 348.

- ↑ (Andrews 2000), p. 32.

- ↑ (Peter 2004), The history of email .

- ↑ Banks, M. (2012). On the Way to the Web: The Secret History of the Internet and its Founders. Apress. pp. 44–5. ISBN 9781430250746. https://books.google.com/books?id=1J78hiHKaPoC&pg=PT67. Retrieved 2018-07-20.

- ↑ Tel, G. (2000). Introduction to Distributed Algorithms. Cambridge University Press. pp. 35–36. ISBN 9780521794831. https://books.google.com/books?id=vlpnS25qAJQC&pg=PA35. Retrieved 2018-07-20.

- ↑ Ohlídal, M.Expression error: Unrecognized word "et". (2006). "Evolutionary Design of OAB and AAB Communication Schedules for Interconnection Networks". in Rothlauf, F.. Applications of Evolutionary Computing. Springer Science & Business Media. pp. 267–78. ISBN 9783540332374.

- ↑ Real Time And Distributed Computing Systems. ISSN 2278-0661. https://pdfs.semanticscholar.org/2950/37ee46ac281590f67435380cebc385ac9749.pdf. Retrieved 2017-01-09.

- ↑ Vigna P, Casey MJ. The Age of Cryptocurrency: How Bitcoin and the Blockchain Are Challenging the Global Economic Order St. Martin's Press January 27, 2015 ISBN 9781250065636

- ↑ Quang Hieu Vu; Mihai Lupu; Beng Chin Ooi (2010). Peer-to-peer computing : principles and applications. Heidelberg: Springer. pp. 16. ISBN 9783642035135. OCLC 663093862.

- ↑ "A database-centric virtual chemistry system", J Chem Inf Model 46 (3): 1034–9, 2006, doi:10.1021/ci050360b, PMID 16711722.

- ↑ Chiu, G (1990). "A model for optimal database allocation in distributed computing systems". Proceedings. IEEE INFOCOM'90: Ninth Annual Joint Conference of the IEEE Computer and Communications Societies.

- ↑ (Elmasri Navathe), Section 24.1.2.

- ↑ (Andrews 2000), p. 10–11. (Ghosh 2007), p. 4–6. (Lynch 1996), p. xix, 1. (Peleg 2000), p. xv. (Elmasri Navathe), Section 24.

- ↑ Haussmann, J. (2019). "Cost-efficient parallel processing of irregularly structured problems in cloud computing environments". Journal of Cluster Computing 22 (3): 887–909. doi:10.1007/s10586-018-2879-3.

- ↑ Toomarian, N.B.; Barhen, J.; Gulati, S. (1992). "Neural Networks for Real-Time Robotic Applications". in Fijany, A.. Parallel Computation Systems For Robotics: Algorithms And Architectures. World Scientific. p. 214. ISBN 9789814506175. https://books.google.com/books?id=CKTsCgAAQBAJ&pg=PA214. Retrieved 2018-07-20.

- ↑ Savage, J.E. (1998). Models of Computation: Exploring the Power of Computing. Addison Wesley. p. 209. ISBN 9780201895391.

- ↑ (Cormen Leiserson), Section 30.

- ↑ (Herlihy Shavit), Chapters 2–6.

- ↑ (Lynch 1996)

- ↑ (Cormen Leiserson), Sections 28 and 29.

- ↑ 44.0 44.1 44.2 TULSIRAMJI GAIKWAD-PATIL College of Engineering & Technology, Nagpur Department of Information Technology Introduction to Distributed Systems[1]

- ↑ (Cole Vishkin). (Cormen Leiserson), Section 30.5.

- ↑ (Andrews 2000), p. ix.

- ↑ (Arora Barak), Section 6.7. (Papadimitriou 1994), Section 15.3.

- ↑ (Papadimitriou 1994), Section 15.2.

- ↑ (Lynch 1996), p. 17–23.

- ↑ (Peleg 2000), Sections 2.3 and 7. (Linial 1992). (Naor Stockmeyer).

- ↑ Schneider, J.; Wattenhofer, R. (2011). "Trading Bit, Message, and Time Complexity of Distributed Algorithms". in Peleg, D.. Distributed Computing. Springer Science & Business Media. pp. 51–65. ISBN 9783642240997. https://books.google.com/books?id=dT6nwpXvES4C&pg=PA51. Retrieved 2018-07-20.

- ↑ (Lynch 1996), Sections 5–7. (Ghosh 2007), Chapter 13.

- ↑ (Lynch 1996), p. 99–102. (Ghosh 2007), p. 192–193.

- ↑ (Dolev 2000). (Ghosh 2007), Chapter 17.

- ↑ (Lynch 1996), Section 16. (Peleg 2000), Section 6.

- ↑ (Lynch 1996), Section 18. (Ghosh 2007), Sections 6.2–6.3.

- ↑ (Ghosh 2007), Section 6.4.

- ↑ 58.0 58.1 Haloi, S. (2015). Apache ZooKeeper Essentials. Packt Publishing Ltd. pp. 100–101. ISBN 9781784398323. https://books.google.com/books?id=Ym9uBgAAQBAJ&pg=PA100. Retrieved 2018-07-20.

- ↑ LeLann, G. (1977). "Distributed systems - toward a formal approach". Information Processing 77: 155·160.

- ↑ R. G. Gallager, P. A. Humblet, and P. M. Spira (January 1983). "A Distributed Algorithm for Minimum-Weight Spanning Trees". ACM Transactions on Programming Languages and Systems 5 (1): 66–77. doi:10.1145/357195.357200. http://www.apposite-tech.com/blog/wp-content/uploads/2017/09/p66-gallager.pdf.

- ↑ Korach, Ephraim; Kutten, Shay; Moran, Shlomo (1990). "A Modular Technique for the Design of Efficient Distributed Leader Finding Algorithms". ACM Transactions on Programming Languages and Systems 12 (1): 84–101. doi:10.1145/77606.77610. https://www.cs.technion.ac.il/~moran/r/PS/kkm.pdf.

- ↑ Hamilton, Howard. "Distributed Algorithms". http://www2.cs.uregina.ca/~hamilton/courses/330/notes/distributed/distributed.html.

- ↑ "Major unsolved problems in distributed systems?". https://cstheory.stackexchange.com/q/10045.

- ↑ "How big data and distributed systems solve traditional scalability problems". http://www.theserverside.com/feature/How-big-data-and-distributed-systems-solve-traditional-scalability-problems.

- ↑ Svozil, K. (2011). "Indeterminism and Randomness Through Physics". in Hector, Z.. Randomness Through Computation: Some Answers, More Questions. World Scientific. pp. 112–3. ISBN 9789814462631. https://books.google.com/books?id=ep_FCgAAQBAJ&pg=PA112. Retrieved 2018-07-20.

- ↑ (Papadimitriou 1994), Section 19.3.

References

- Books

- Andrews, Gregory R. (2000), Foundations of Multithreaded, Parallel, and Distributed Programming, Addison–Wesley, ISBN 978-0-201-35752-3, https://archive.org/details/foundationsofmul0000andr.

- Computational Complexity – A Modern Approach, Cambridge University Press, 2009, ISBN 978-0-521-42426-4.

- Introduction to Algorithms (1st ed.), MIT Press, 1990, ISBN 978-0-262-03141-7.

- Self-Stabilization, MIT Press, 2000, ISBN 978-0-262-04178-2.

- Elmasri, Ramez (2000), Fundamentals of Database Systems (3rd ed.), Addison–Wesley, ISBN 978-0-201-54263-9.

- Ghosh, Sukumar (2007), Distributed Systems – An Algorithmic Approach, Chapman & Hall/CRC, ISBN 978-1-58488-564-1.

- Lynch, Nancy A. (1996), Distributed Algorithms, Morgan Kaufmann, ISBN 978-1-55860-348-6, https://archive.org/details/distributedalgor0000lync.

- The Art of Multiprocessor Programming, Morgan Kaufmann, 2008, ISBN 978-0-12-370591-4.

- Computational Complexity, Addison–Wesley, 1994, ISBN 978-0-201-53082-7.

- Peleg, David (2000), Distributed Computing: A Locality-Sensitive Approach, SIAM, ISBN 978-0-89871-464-7, http://www.ec-securehost.com/SIAM/DT05.html, retrieved 2009-07-16.

- Articles

- Cole, Richard (1986), "Deterministic coin tossing with applications to optimal parallel list ranking", Information and Control 70 (1): 32–53, doi:10.1016/S0019-9958(86)80023-7.

- Keidar (2008), "Distributed computing column 32 – The year in review", ACM SIGACT News 39 (4): 53–54, doi:10.1145/1466390.1466402, http://webee.technion.ac.il/~idish/sigactNews/#column%2032, retrieved 2009-08-20.

- "Locality in distributed graph algorithms", SIAM Journal on Computing 21 (1): 193–201, 1992, doi:10.1137/0221015.

- Naor, Moni; Stockmeyer, Larry (1995), "What can be computed locally?", SIAM Journal on Computing 24 (6): 1259–1277, doi:10.1137/S0097539793254571, http://www.wisdom.weizmann.ac.il/~naor/PAPERS/lcl.pdf.

- Web sites

- Godfrey, Bill (2002). "A primer on distributed computing". https://billpg.com/bacchae-co-uk/docs/dist.html.

- Peter, Ian (2004). "Ian Peter's History of the Internet". http://www.nethistory.info/History%20of%20the%20Internet/.

Further reading

- Books

- Attiya, Hagit and Jennifer Welch (2004), Distributed Computing: Fundamentals, Simulations, and Advanced Topics, Wiley-Interscience ISBN 0-471-45324-2.

- Christian Cachin; Rachid Guerraoui; Luís Rodrigues (2011), Introduction to Reliable and Secure Distributed Programming (2. ed.), Springer, ISBN 978-3-642-15259-7, Bibcode: 2011itra.book.....C

- Coulouris, George (2011), Distributed Systems: Concepts and Design (5th Edition), Addison-Wesley ISBN 0-132-14301-1.

- Faber, Jim (1998), Java Distributed Computing, O'Reilly, http://docstore.mik.ua/orelly/java-ent/dist/index.htm, retrieved 2010-09-29: Java Distributed Computing by Jim Faber, 1998

- Garg, Vijay K. (2002), Elements of Distributed Computing, Wiley-IEEE Press ISBN 0-471-03600-5.

- Tel, Gerard (1994), Introduction to Distributed Algorithms, Cambridge University Press

- Chandy, Mani (1988), Parallel Program Design, Addison-Wesley ISBN 0201058669

- Dusseau, Remzi H.; Dusseau, Andrea (2016). Operating Systems: Three Easy Pieces, Chapter 48 Distributed Systems. https://pages.cs.wisc.edu/~remzi/OSTEP/dist-intro.pdf. Retrieved 8 October 2021.

- Articles

- Keidar, Idit; Rajsbaum, Sergio, eds. (2000–2009), "Distributed computing column", ACM SIGACT News, http://webee.technion.ac.il/~idish/sigactNews/, retrieved 2009-08-16.

- Birrell, A. D.; Levin, R.; Schroeder, M. D.; Needham, R. M. (April 1982). "Grapevine: An exercise in distributed computing". Communications of the ACM 25 (4): 260–274. doi:10.1145/358468.358487. http://web.cs.wpi.edu/~cs4513/d07/Papers/Birrell,%20Levin,%20et.%20al.,%20Grapevine.pdf.

- Conference Papers

- Rodriguez, Carlos; Villagra, Marcos; Baran, Benjamin (2007). "Asynchronous team algorithms for Boolean Satisfiability". 2007 2nd Bio-Inspired Models of Network, Information and Computing Systems. pp. 66–69. doi:10.1109/BIMNICS.2007.4610083.

External links

|