Gibbs phenomenon

In mathematics, the Gibbs phenomenon is the oscillatory behavior of the Fourier series of a piecewise continuously differentiable periodic function around a jump discontinuity. The $N$th partial Fourier series of the function (formed by summing the $N$ lowest constituent sinusoids of the Fourier series of the function) produces large peaks around the jump which overshoot and undershoot the function values. As more sinusoids are used, this approximation error approaches a limit of about 9% of the jump, though the infinite Fourier series sum does eventually converge almost everywhere.[1]

The Gibbs phenomenon was observed by experimental physicists and was believed to be due to imperfections in the measuring apparatus,[2] but it is in fact a mathematical result. It is one cause of ringing artifacts in signal processing. It is named after Josiah Willard Gibbs.

Description

The Gibbs phenomenon is a behavior of the Fourier series of a function with a jump discontinuity and can be described as follows:

As more Fourier series constituents or components are taken, the first overshoot in the oscillatory behavior of the Fourier series around the jump point approaches about 9% of the (full) jump. This oscillation does not disappear; instead it moves closer to the jump point, so that the integral of the oscillation approaches zero.

At the jump point itself, the Fourier series gives the average of the function's left and right limits at that point.

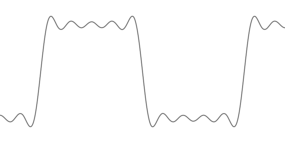

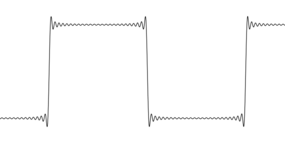

Square wave example

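The pictures above demonstrate the Gibbs phenomenon for a square wave (with peak-to-peak amplitude of $c$ from $-c/2$ to $c/2$ and the periodicity $L$) whose $N$th partial Fourier series is

$$\frac{2c}{\pi}\left(\sin(\omega x) + \frac{1}{3}\sin(3\omega x) + \cdots + \frac{1}{N-1}\sin((N-1)\omega x)\right)$$

where $\omega = 2\pi/L$. More precisely, this square wave is the function $f(x)$ which equals $\frac{c}{2}$ between $2n\frac{L}{2}$ and $(2n+1)\frac{L}{2}$ and $-\frac{c}{2}$ between $(2n+1)\frac{L}{2}$ and $(2n+2)\frac{L}{2}$ for every integer $n$; thus, this square wave has a jump discontinuity of peak-to-peak height $c$ at every integer multiple of $L/2$.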

As more sinusoidal terms are added (i.e., increasing $N$), the error of the partial Fourier series converges to a fixed height. But because the width of the error continues to narrow, the area of the error, and hence the energy of the error, converges to 0.[3] The square wave analysis reveals that the error exceeds the height (from zero) $\frac{c}{2}$ of the square wave by

$$\frac{c}{\pi}\int_0^\pi \frac{\sin t}{t}\,dt - \frac{c}{2} = c\cdot(0.089489872236\dots)$$

(OEIS: A243268), or about 9% of the full jump $c$. More generally, at any discontinuity of a piecewise continuously differentiable function with a jump of $a$, the $N$th partial Fourier series of the function will (for very large $N$) overshoot this jump by an error approaching $a\cdot(0.089489872236\dots)$ at one end and undershoot it by the same amount at the other end; thus the "full jump" in the partial Fourier series will be about 18% larger than the full jump in the original function. At the discontinuity, the partial Fourier series will converge to the midpoint of the jump (regardless of the actual value of the original function at the discontinuity) as a consequence of Dirichlet's theorem.[4] The quantity

$$\int_0^\pi \frac{\sin t}{t}\,dt = 1.851937051982\dots = \frac{\pi}{2} + \pi\cdot(0.089489872236\dots)$$

(OEIS: A036792) is sometimes known as the Wilbraham–Gibbs constant.[5]
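As a numerical sanity check, the Python sketch below evaluates the square-wave partial sum $\frac{2c}{\pi}\sum_{k\text{ odd},\,k<N}\frac{\sin(k\omega x)}{k}$ with $\omega = 2\pi/L$ and measures how far its peak just to the right of the jump at $x = 0$ rises above $c/2$. The function names, the sampling grid and the defaults $c = 1$, $L = 2$ are illustrative assumptions; for large even $N$ the measured overshoot should approach $c\cdot 0.0894898\ldots$

```python
import math


def square_wave_partial_sum(x, N, c=1.0, L=2.0):
    """N-th partial Fourier series (N even) of the square wave jumping from -c/2 to c/2 at x = 0."""
    omega = 2 * math.pi / L
    # Only the odd harmonics k = 1, 3, ..., N - 1 are non-zero.
    return (2 * c / math.pi) * sum(
        math.sin(k * omega * x) / k for k in range(1, N, 2)
    )


def overshoot(N, c=1.0, L=2.0, samples=2000):
    """Largest value of S_N on (0, L/N] minus the plateau height c/2.

    The first maximum sits at x = L/(2N), which lies on this sampling grid.
    """
    peak = max(
        square_wave_partial_sum(j * L / (N * samples), N, c, L)
        for j in range(1, samples + 1)
    )
    return peak - c / 2


for N in (10, 100, 400):
    print(N, overshoot(N))  # tends to about 0.0895 as N grows
```

The overshoot does not shrink as $N$ grows; only its location moves toward the jump.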

History

The Gibbs phenomenon was first noticed and analyzed by Henry Wilbraham in an 1848 paper.[6] The paper attracted little attention until 1914 when it was mentioned in Heinrich Burkhardt's review of mathematical analysis in Klein's encyclopedia.[7] In 1898, Albert A. Michelson developed a device that could compute and re-synthesize the Fourier series.[8] A widespread anecdote says that when the Fourier coefficients for a square wave were input to the machine, the graph would oscillate at the discontinuities, and that because it was a physical device subject to manufacturing flaws, Michelson was convinced that the overshoot was caused by errors in the machine. In fact, the graphs produced by the machine were not good enough to exhibit the Gibbs phenomenon clearly, and Michelson may not have noticed it, as he made no mention of this effect in his paper about his machine (Michelson & Stratton 1898) or in his later letters to Nature.[9]

Inspired by correspondence in Nature between Michelson and A. E. H. Love about the convergence of the Fourier series of the square wave function, J. Willard Gibbs published a note in 1898 pointing out the important distinction between the limit of the graphs of the partial sums of the Fourier series of a sawtooth wave and the graph of the limit of those partial sums. In his first letter Gibbs failed to notice the Gibbs phenomenon, and the limit that he described for the graphs of the partial sums was inaccurate. In 1899 he published a correction in which he described the overshoot at the point of discontinuity (Nature, April 27, 1899, p. 606). In 1906, Maxime Bôcher gave a detailed mathematical analysis of that overshoot, coining the term "Gibbs phenomenon"[10] and bringing it into widespread use.[9]

After the existence of Henry Wilbraham's paper became widely known, in 1925 Horatio Scott Carslaw remarked, "We may still call this property of Fourier's series (and certain other series) Gibbs's phenomenon; but we must no longer claim that the property was first discovered by Gibbs."[11]

Explanation

Informally, the Gibbs phenomenon reflects the difficulty inherent in approximating a discontinuous function by a finite series of continuous sinusoidal waves. It is important to put emphasis on the word finite: even though every partial sum of the Fourier series overshoots around each discontinuity it is approximating, the limit of summing an infinite number of sinusoidal waves does not. The overshoot peaks move closer and closer to the discontinuity as more terms are summed, so convergence at each fixed point is still possible.

There is no contradiction between the overshoot error converging to a non-zero height and the infinite sum having no overshoot, because the overshoot peaks move toward the discontinuity. The Fourier series therefore converges pointwise, but not uniformly, near a jump. For a piecewise continuously differentiable (class C1) function, the Fourier series converges to the function at every point except at jump discontinuities. At jump discontinuities, the infinite sum will converge to the jump discontinuity's midpoint (i.e. the average of the values of the function on either side of the jump), as a consequence of Dirichlet's theorem.[4]
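The distinction between pointwise and uniform convergence can be seen numerically. The sketch below uses the square wave of the example above (jumping from $-c/2$ to $c/2$ at $x = 0$, with the illustrative choice $c = 1$, $L = 2$) and compares the error at the first peak $x = L/(2N)$, which stays near $0.0895c$, with the error at the fixed point $x = L/4$, which shrinks as $N$ grows. The helper names are hypothetical.

```python
import math


def square_wave_partial_sum(x, N, c=1.0, L=2.0):
    """(2c/pi) * sum over odd k < N of sin(k*omega*x)/k, with omega = 2*pi/L (N even)."""
    omega = 2 * math.pi / L
    return (2 * c / math.pi) * sum(math.sin(k * omega * x) / k for k in range(1, N, 2))


def peak_error(N, c=1.0, L=2.0):
    """Error at the moving point x = L/(2N), the first maximum: does not tend to zero."""
    return square_wave_partial_sum(L / (2 * N), N, c, L) - c / 2


def fixed_point_error(N, c=1.0, L=2.0):
    """Error at the fixed point x = L/4, where the square wave equals c/2: tends to zero."""
    return abs(square_wave_partial_sum(L / 4, N, c, L) - c / 2)


for N in (10, 100, 1000):
    print(N, peak_error(N), fixed_point_error(N))
```

Since the supremum of the error over a neighborhood of the jump never drops below roughly $0.0895c$, the convergence cannot be uniform there, even though the error at every fixed point tends to zero.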

The Gibbs phenomenon is closely related to the principle that the smoothness of a function controls the decay rate of its Fourier coefficients. Fourier coefficients of smoother functions decay more rapidly (resulting in faster convergence), whereas Fourier coefficients of discontinuous functions decay slowly (resulting in slower convergence). For example, the discontinuous square wave has Fourier coefficients that decay only at the rate of $\frac{1}{n}$, while the continuous triangle wave has Fourier coefficients that decay at a much faster rate of $\frac{1}{n^2}$.
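The two decay rates can be checked numerically. The sketch below assumes two concrete $2\pi$-periodic examples not fixed by the text, the square wave $\operatorname{sgn}(x)$ and the triangle wave $|x|$ on $(-\pi, \pi)$, whose coefficients for odd $n$ are $b_n = \frac{4}{\pi n}$ and $a_n = -\frac{4}{\pi n^2}$ respectively. It approximates the coefficient integrals with a midpoint rule (an arbitrary quadrature choice), so $n\,b_n$ and $n^2 a_n$ should both stay near $\pm 4/\pi \approx \pm 1.273$.

```python
import math


def coefficient(f, n, trig, M=20000):
    """(1/pi) * integral of f(x) * trig(n x) over [-pi, pi], by the midpoint rule with M cells."""
    h = 2 * math.pi / M
    total = 0.0
    for j in range(M):
        x = -math.pi + (j + 0.5) * h
        total += f(x) * trig(n * x)
    return total * h / math.pi


def square(x):
    """Discontinuous: jumps at x = 0 and x = +-pi."""
    return 1.0 if x > 0 else -1.0


def triangle(x):
    """Continuous: only kinks at x = 0 and x = +-pi."""
    return abs(x)


for n in (1, 11, 101):
    b_n = coefficient(square, n, math.sin)    # decays like 1/n
    a_n = coefficient(triangle, n, math.cos)  # decays like 1/n^2
    print(n, n * b_n, n**2 * a_n)
```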

This only provides a partial explanation of the Gibbs phenomenon, since Fourier series with absolutely convergent Fourier coefficients would be uniformly convergent by the Weierstrass M-test and would thus be unable to exhibit the above oscillatory behavior. By the same token, it is impossible for a discontinuous function to have absolutely convergent Fourier coefficients, since the function would thus be the uniform limit of continuous functions and therefore be continuous, a contradiction. See Convergence of Fourier series § Absolute convergence.

Solutions

Since the Gibbs phenomenon comes from undershooting, it may be eliminated by using kernels that are never negative, such as the Fejér kernel.[12][13]

In practice, the difficulties associated with the Gibbs phenomenon can be ameliorated by using a smoother method of Fourier series summation, such as Fejér summation or Riesz summation, or by using sigma-approximation. Using a continuous wavelet transform, the wavelet Gibbs phenomenon never exceeds the Fourier Gibbs phenomenon.[14] Also, using the discrete wavelet transform with Haar basis functions, the Gibbs phenomenon does not occur at all in the case of continuous data at jump discontinuities,[15] and is minimal in the discrete case at large change points. In wavelet analysis, this is commonly referred to as the Longo phenomenon. In the polynomial interpolation setting, the Gibbs phenomenon can be mitigated using the S-Gibbs algorithm.[16]
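As one concrete instance of the smoother summation methods mentioned above, the sketch below applies Fejér summation to the square wave of the example (jumping from $-c/2$ to $c/2$ at $x = 0$; $c = 1$ and $L = 2$ are illustrative). Fejér summation averages the partial sums $S_0, \dots, S_{N-1}$, which amounts to weighting the $k$th harmonic by $1 - k/N$. Because the Fejér kernel is non-negative, the result should never leave $[-c/2, c/2]$, whereas the plain partial sum overshoots. The helper names are hypothetical.

```python
import math


def weighted_square_wave_sum(x, N, weight, c=1.0, L=2.0):
    """Sum over odd k < N of weight(k, N) * (2c/(pi k)) * sin(k omega x), omega = 2 pi / L."""
    omega = 2 * math.pi / L
    return (2 * c / math.pi) * sum(
        weight(k, N) * math.sin(k * omega * x) / k for k in range(1, N, 2)
    )


def plain(k, N):
    return 1.0  # ordinary partial sum S_N


def fejer(k, N):
    return 1.0 - k / N  # Cesaro mean of S_0, ..., S_{N-1}


def peak(weight, N=100, samples=1000, c=1.0, L=2.0):
    """Maximum over a grid on (0, L/2), where the square wave equals c/2."""
    return max(
        weighted_square_wave_sum(j * L / (2 * samples), N, weight, c, L)
        for j in range(1, samples)
    )


print(peak(plain), peak(fejer))  # about 0.589 versus a value not exceeding 0.5
```

The price of removing the overshoot is a less steep transition at the jump, since the high harmonics are damped.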

Formal mathematical description of the Gibbs phenomenon

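Let $f: \mathbb{R} \to \mathbb{R}$ be a piecewise continuously differentiable function which is periodic with some period $L > 0$. Suppose that at some point $x_0$, the left limit $f(x_0^-)$ and right limit $f(x_0^+)$ of the function $f$ differ by a non-zero jump of $a$:

$$f(x_0^+) - f(x_0^-) = a \neq 0.$$

For each positive integer $N$, let $S_N f$ be the $N$th partial Fourier series ($S_N$ can be treated as a mathematical operator on functions):

$$S_N f(x) := \sum_{-N \leq n \leq N} \hat f(n)\, e^{\frac{2i\pi nx}{L}} = \frac{1}{2}a_0 + \sum_{n=1}^N \left( a_n \cos\left(\frac{2\pi nx}{L}\right) + b_n \sin\left(\frac{2\pi nx}{L}\right) \right),$$

where the Fourier coefficients $\hat f(n), a_n, b_n$ for integers $n$ are given by the usual formulae

$$\hat f(n) := \frac{1}{L}\int_0^L f(x)\, e^{-\frac{2i\pi nx}{L}}\,dx,$$
$$a_0 := \frac{2}{L}\int_0^L f(x)\,dx,$$
$$a_n := \frac{2}{L}\int_0^L f(x)\cos\left(\frac{2\pi nx}{L}\right)dx,$$
$$b_n := \frac{2}{L}\int_0^L f(x)\sin\left(\frac{2\pi nx}{L}\right)dx.$$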

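Then we have

$$\lim_{N\to\infty} S_N f\left(x_0 + \frac{L}{2N}\right) = f(x_0^+) + a\cdot(0.089489872236\dots)$$

and

$$\lim_{N\to\infty} S_N f\left(x_0 - \frac{L}{2N}\right) = f(x_0^-) - a\cdot(0.089489872236\dots)$$

but

$$\lim_{N\to\infty} S_N f(x_0) = \frac{f(x_0^-) + f(x_0^+)}{2}.$$

More generally, if $x_N$ is any sequence of real numbers which converges to $x_0$ as $N \to \infty$, and if the jump $a$ is positive, then

$$\limsup_{N\to\infty} S_N f(x_N) \leq f(x_0^+) + a\cdot(0.089489872236\dots)$$

and

$$\liminf_{N\to\infty} S_N f(x_N) \geq f(x_0^-) - a\cdot(0.089489872236\dots).$$

If instead the jump $a$ is negative, one needs to interchange limit superior ($\limsup$) with limit inferior ($\liminf$), and also interchange the $\leq$ and $\geq$ signs, in the above two inequalities.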
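The three limits can be checked on a concrete case. The sketch below assumes the sawtooth $f(x) = x$ on $(-\pi, \pi)$, extended with period $L = 2\pi$, whose standard Fourier series is $\sum_{n\ge1} \frac{2(-1)^{n+1}}{n}\sin(nx)$; this example is not taken from the text. At $x_0 = \pi$ it jumps from $f(x_0^-) = \pi$ to $f(x_0^+) = -\pi$, so $a = -2\pi$ is negative. Agreement with the limits improves only like $O(1/N)$, so a large $N$ is used.

```python
import math

GIBBS = 0.089489872236  # overshoot fraction quoted in the text


def sawtooth_partial_sum(x, N):
    """S_N f(x) for f(x) = x on (-pi, pi), extended with period 2*pi."""
    return sum(2 * (-1) ** (n + 1) * math.sin(n * x) / n for n in range(1, N + 1))


L = 2 * math.pi
x0 = math.pi
left, right = math.pi, -math.pi  # f(x0^-) and f(x0^+)
a = right - left                 # jump a = -2*pi

N = 2000
print(sawtooth_partial_sum(x0 + L / (2 * N), N), right + a * GIBBS)
print(sawtooth_partial_sum(x0 - L / (2 * N), N), left - a * GIBBS)
print(sawtooth_partial_sum(x0, N), (left + right) / 2)
```

Each printed pair should agree to about three decimal places: the partial sum dips below $-\pi$ just right of the jump, rises above $\pi$ just left of it, and equals the midpoint $0$ at the jump.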

Proof of the Gibbs phenomenon in a general case

Stated again, let $f$ be a piecewise continuously differentiable function which is periodic with some period $L > 0$, and suppose this function has multiple jump discontinuity points denoted $x_i$ where $i = 1, 2, 3$, and so on. At each discontinuity $x_i$, the amount of the vertical full jump is $a_i$.

Then $f$ can be expressed as the sum of a continuous function $f_c$ and a multi-step function $f_s$ which is the sum of step functions such as[17]

$$f = f_c + f_s,$$
$$f_s = f_{s_1} + f_{s_2} + f_{s_3} + \cdots,$$
$$f_{s_i}(x) = \begin{cases} 0, & x \leq x_i, \\ a_i, & x > x_i. \end{cases}$$

Since $S_N f = S_N f_c + S_N f_s = S_N f_c + (S_N f_{s_1} + S_N f_{s_2} + S_N f_{s_3} + \cdots)$, the $N$th partial Fourier series of $f$ will converge well at all points except points near the discontinuities $x_i$. Around each discontinuity point $x_i$, only $f_{s_i}$ contributes a Gibbs phenomenon (the maximum oscillatory convergence error of about 9% of the jump $a_i$, as shown in the square wave analysis), because the other functions are continuous ($f_c$) or constant ($f_{s_j}$ where $j \neq i$) around that point. This proves how the Gibbs phenomenon occurs at every discontinuity.
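The decomposition argument can be illustrated numerically using nothing but the definition of $S_N f$ and the linearity of the coefficient integrals. In the sketch below, the period $L = 2$, the triangle wave used as the continuous part $f_c$, and the use of a periodic square wave (jump $+1$ at $x = 0$) as the step part are all illustrative assumptions, since a single step function is not periodic. The coefficients $\hat f(n)$ are approximated by a midpoint rule whose cell boundaries contain the kinks and jumps. At the first peak $x = L/(2N)$, the error of the continuous part is small, the step part carries an overshoot of about $0.0895$, and the total error is their sum.

```python
import cmath
import math

L = 2.0  # period (illustrative)


def fourier_coefficient(f, n, M=1024):
    """hat f(n) = (1/L) * integral_0^L f(x) exp(-2 i pi n x / L) dx, midpoint rule on M cells."""
    h = L / M
    total = 0j
    for j in range(M):
        x = (j + 0.5) * h
        total += f(x) * cmath.exp(-2j * math.pi * n * x / L)
    return total * h / L


def partial_fourier_sum(f, N, x):
    """S_N f(x) = sum over -N <= n <= N of hat f(n) exp(2 i pi n x / L), real part."""
    return sum(
        fourier_coefficient(f, n) * cmath.exp(2j * math.pi * n * x / L)
        for n in range(-N, N + 1)
    ).real


def f_c(x):
    """Continuous part: triangle wave |x - 1| on [0, 2), extended periodically."""
    return abs(x % L - 1.0)


def f_s(x):
    """Step part: jump of +1 at x = 0 (and -1 at x = 1) within each period."""
    return 0.5 if x % L < 1.0 else -0.5


def f(x):
    return f_c(x) + f_s(x)


N = 50
x = L / (2 * N)  # first overshoot peak to the right of the jump at x0 = 0
err_c = partial_fourier_sum(f_c, N, x) - f_c(x)  # small: f_c is continuous
err_s = partial_fourier_sum(f_s, N, x) - f_s(x)  # about 0.0895 for a jump of 1
err = partial_fourier_sum(f, N, x) - f(x)        # equals err_c + err_s by linearity
print(err_c, err_s, err)
```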

Signal processing explanation

From a signal processing point of view, the Gibbs phenomenon is the step response of a low-pass filter, and the oscillations are called ringing or ringing artifacts. Truncating the Fourier transform of a signal on the real line, or the Fourier series of a periodic signal (equivalently, a signal on the circle), corresponds to filtering out the higher frequencies with an ideal (brick-wall) low-pass filter. This can be represented as convolution of the original signal with the impulse response of the filter (also known as the kernel), which is the sinc function. Thus, the Gibbs phenomenon can be seen as the result of convolving a Heaviside step function (if periodicity is not required) or a square wave (if periodic) with a sinc function: the oscillations in the sinc function cause the ripples in the output.

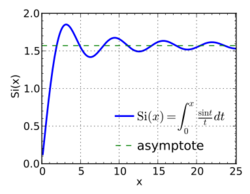

In the case of convolving with a Heaviside step function, the resulting function is exactly the integral of the sinc function, the sine integral; for a square wave the description is not as simply stated. For the step function, the magnitude of the undershoot is thus exactly the magnitude of the integral of the left tail up to the first negative zero: for the normalized sinc of unit sampling period, this is

$$\left|\int_{-\infty}^{-1} \frac{\sin(\pi x)}{\pi x}\,dx\right| = \frac{1}{\pi}\int_0^\pi \frac{\sin t}{t}\,dt - \frac{1}{2} = 0.089489872236\dots$$

The overshoot is accordingly of the same magnitude: it equals the magnitude of the integral of the right tail or, equivalently, the integral from negative infinity to the first positive zero, minus 1 (the non-overshooting value).
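These numbers can be reproduced with a short computation. The sketch below evaluates the sine integral $\operatorname{Si}(x) = \int_0^x \frac{\sin t}{t}\,dt$ by composite Simpson's rule (an arbitrary choice of quadrature) and uses the fact that the normalized sinc integrates to $\frac12$ over each half-line, so the left tail is $\frac12 - \frac{\operatorname{Si}(\pi)}{\pi}$ and the overshoot is $\frac12 + \frac{\operatorname{Si}(\pi)}{\pi} - 1$.

```python
import math


def sine_integral(x, M=1000):
    """Si(x) = integral_0^x sin(t)/t dt by composite Simpson's rule with M (even) cells."""
    def integrand(t):
        return 1.0 if t == 0 else math.sin(t) / t

    h = x / M
    s = integrand(0.0) + integrand(x)
    for j in range(1, M):
        s += (4 if j % 2 else 2) * integrand(j * h)
    return s * h / 3


si_pi = sine_integral(math.pi)             # Wilbraham-Gibbs constant, about 1.8519
undershoot = 0.5 - si_pi / math.pi         # integral of sinc over (-inf, -1], about -0.0895
overshoot = (0.5 + si_pi / math.pi) - 1.0  # integral over (-inf, 1] minus 1, about +0.0895
print(si_pi, undershoot, overshoot)
```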

The overshoot and undershoot can be understood thus: kernels are generally normalized to have integral 1, so they result in a mapping of constant functions to constant functions – otherwise they have gain. The value of a convolution at a point is a linear combination of the input signal, with coefficients (weights) the values of the kernel.

If a kernel is non-negative, such as for a Gaussian kernel, then the value of the filtered signal will be a convex combination of the input values (the coefficients (the kernel) integrate to 1, and are non-negative), and will thus fall between the minimum and maximum of the input signal – it will not undershoot or overshoot. If, on the other hand, the kernel assumes negative values, such as the sinc function, then the value of the filtered signal will instead be an affine combination of the input values and may fall outside of the minimum and maximum of the input signal, resulting in undershoot and overshoot, as in the Gibbs phenomenon.
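The convex-versus-affine argument can be seen with a discrete convolution. The sketch below filters a unit step with two kernels normalized to sum 1: a sampled Gaussian (non-negative) and a sampled sinc truncated to $|k| \le 40$ (which takes negative values). The Gaussian width, the sinc cutoff of 0.1 cycles per sample and the truncation length are arbitrary illustrative choices. The Gaussian output should stay within $[0, 1]$, while the sinc output undershoots below 0 and overshoots above 1.

```python
import math


def sinc(x):
    """Normalized sinc: sin(pi x) / (pi x), with sinc(0) = 1."""
    return 1.0 if x == 0 else math.sin(math.pi * x) / (math.pi * x)


def normalized(weights):
    """Scale a kernel {offset: weight} so its weights sum to 1 (unit gain on constants)."""
    total = sum(weights.values())
    return {k: w / total for k, w in weights.items()}


def filter_step(kernel):
    """Convolve the unit step u[n] (0 for n < 0, 1 for n >= 0) with the kernel.

    y[n] = sum_k h[k] * u[n - k] = sum of h[k] over k <= n.
    """
    K = max(kernel)
    return [sum(w for k, w in kernel.items() if k <= n) for n in range(-K - 1, K + 1)]


K = 40
gaussian = normalized({k: math.exp(-0.5 * (k / 3.0) ** 2) for k in range(-K, K + 1)})
sinc_kernel = normalized({k: 0.2 * sinc(0.2 * k) for k in range(-K, K + 1)})

smooth = filter_step(gaussian)
ringing = filter_step(sinc_kernel)
print(min(smooth), max(smooth))    # within [0, 1] up to rounding
print(min(ringing), max(ringing))  # below 0 and above 1
```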

Taking a longer expansion – cutting at a higher frequency – corresponds in the frequency domain to widening the brick-wall, which in the time domain corresponds to narrowing the sinc function and increasing its height by the same factor, leaving the integrals between corresponding points unchanged. This is a general feature of the Fourier transform: widening in one domain corresponds to narrowing and increasing height in the other. This results in the oscillations in sinc being narrower and taller, and (in the filtered function after convolution) yields oscillations that are narrower (and thus with smaller area) but which do not have reduced magnitude: cutting off at any finite frequency results in a sinc function, however narrow, with the same tail integrals. This explains the persistence of the overshoot and undershoot.
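The invariance of the tail integrals under narrowing can be checked directly. Substituting $u = Wx$ shows that $\int_{-\infty}^{-1/W} W\operatorname{sinc}(Wx)\,dx = \int_{-\infty}^{-1}\operatorname{sinc}(u)\,du$ for every bandwidth $W > 0$. The sketch below assumes the normalized sinc, uses Simpson's rule on $[-1/W, 0]$ together with $\int_{-\infty}^0 W\operatorname{sinc}(Wx)\,dx = \frac12$, and should return the same value (about $-0.0895$) for very different $W$.

```python
import math


def sinc(x):
    """Normalized sinc: sin(pi x) / (pi x), with sinc(0) = 1."""
    return 1.0 if x == 0 else math.sin(math.pi * x) / (math.pi * x)


def simpson(g, a, b, M=1000):
    """Composite Simpson's rule for g on [a, b] with M (even) cells."""
    h = (b - a) / M
    s = g(a) + g(b)
    for j in range(1, M):
        s += (4 if j % 2 else 2) * g(a + j * h)
    return s * h / 3


def left_tail(W):
    """Integral of the kernel W*sinc(W x) over (-inf, -1/W]."""
    central = simpson(lambda x: W * sinc(W * x), -1.0 / W, 0.0)
    return 0.5 - central  # the whole left half-line integrates to 1/2


for W in (1.0, 10.0, 1000.0):
    print(W, left_tail(W))  # narrower, taller kernel; same tail integral
```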

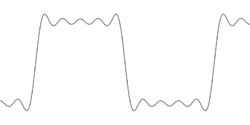

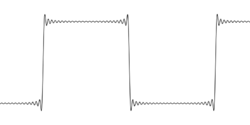

- Oscillations can be interpreted as convolution with a sinc.

- Higher cutoff makes the sinc narrower but taller, with the same magnitude tail integrals, yielding higher frequency oscillations, but whose magnitude does not vanish.

Thus, the features of the Gibbs phenomenon are interpreted as follows:

- the undershoot is due to the impulse response having a negative tail integral, which is possible because the function takes negative values;

- the overshoot offsets this, by symmetry (the overall integral does not change under filtering);

- the persistence of the oscillations is because increasing the cutoff narrows the impulse response but does not reduce its integral – the oscillations thus move towards the discontinuity, but do not decrease in magnitude.

Square wave analysis

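We examine the $N$th partial Fourier series $S_N f(x)$ of a square wave $f(x)$ with the periodicity $L$ and a discontinuity of a vertical "full" jump $c$ from $y = y_0$ at $x = x_0$. Because the case of odd $N$ is very similar, let us just deal with the case when $N$ is even:

$$S_N f(x) = \left(y_0 + \frac{c}{2}\right) + \frac{2c}{\pi}\left(\sin(\omega(x - x_0)) + \frac{1}{3}\sin(3\omega(x - x_0)) + \cdots + \frac{1}{N-1}\sin((N-1)\omega(x - x_0))\right)$$

with $\omega = \frac{2\pi}{L}$. (Here $N = 2N'$, where $N'$ is the number of non-zero sinusoidal Fourier series components, so some texts use $N'$ instead of $N$.) Substituting $x = x_0$ (a point of discontinuity), we obtain

$$S_N f(x_0) = y_0 + \frac{c}{2},$$

as claimed above. (Only the first term, the average value of the square wave over a period, survives.)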

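Next, we find the first maximum of the oscillation around the discontinuity $x = x_0$ by checking the first and second derivatives of $S_N f(x)$. The first condition for the maximum is that the first derivative equals zero:

$$\frac{d}{dx}S_N f(x) = \frac{2c\,\omega}{\pi}\left(\cos(\omega(x - x_0)) + \cos(3\omega(x - x_0)) + \cdots + \cos((N-1)\omega(x - x_0))\right) = \frac{c\,\omega}{\pi}\,\frac{\sin(N\omega(x - x_0))}{\sin(\omega(x - x_0))} = 0,$$

where the second equality is from one of Lagrange's trigonometric identities. Solving this condition gives $x = x_0 + \frac{k\pi}{N\omega}$ for integers $k$ excluding multiples of $N$ (to avoid the zero denominator), so $k = 1, 2, \ldots, N-1, N+1, \ldots$ and their negatives are allowed.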
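The Lagrange-type identity used in the second equality, $\sum_{m=1}^{N/2}\cos((2m-1)\theta) = \frac{\sin(N\theta)}{2\sin\theta}$ for even $N$ and $\sin\theta \neq 0$, and the resulting zeros of the derivative at $\theta = \omega(x - x_0) = k\pi/N$, can be spot-checked numerically. The sample values of $N$, $\theta$ and $k$ below are arbitrary.

```python
import math


def odd_cosine_sum(theta, N):
    """cos(theta) + cos(3 theta) + ... + cos((N - 1) theta), N even."""
    return sum(math.cos(k * theta) for k in range(1, N, 2))


def closed_form(theta, N):
    """sin(N theta) / (2 sin theta), valid when sin(theta) != 0."""
    return math.sin(N * theta) / (2 * math.sin(theta))


N = 10
for theta in (0.37, 1.2, 2.9):
    print(odd_cosine_sum(theta, N), closed_form(theta, N))

# Critical points of S_N f: theta = k*pi/N with k not a multiple of N.
for k in (1, 2, 3, -1):
    print(k, odd_cosine_sum(k * math.pi / N, N))  # zero up to rounding
```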

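The second derivative of $S_N f(x)$ at $x = x_0 + \frac{k\pi}{N\omega}$ is

$$\frac{d^2}{dx^2}S_N f(x) = \frac{c\,\omega^2}{\pi}\,\frac{N\cos(N\omega(x-x_0))\sin(\omega(x-x_0)) - \sin(N\omega(x-x_0))\cos(\omega(x-x_0))}{\sin^2(\omega(x-x_0))} = \frac{c\,\omega^2 N}{\pi}\,\frac{(-1)^k}{\sin\left(\frac{k\pi}{N}\right)}.$$

Thus, the first maximum occurs at $x = x_0 + \frac{L}{2N}$ ($k = 1$), and $S_N f(x)$ at this $x$ value is

$$S_N f\left(x_0 + \frac{L}{2N}\right) = y_0 + \frac{c}{2} + \frac{2c}{\pi}\left(\sin\left(\frac{\pi}{N}\right) + \frac{1}{3}\sin\left(\frac{3\pi}{N}\right) + \cdots + \frac{1}{N-1}\sin\left(\frac{(N-1)\pi}{N}\right)\right).$$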

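If we introduce the normalized sinc function $\operatorname{sinc}(x) = \frac{\sin(\pi x)}{\pi x}$ for $x \neq 0$, we can rewrite this as

$$S_N f\left(x_0 + \frac{L}{2N}\right) = y_0 + \frac{c}{2} + c\left[\frac{2}{N}\operatorname{sinc}\left(\frac{1}{N}\right) + \frac{2}{N}\operatorname{sinc}\left(\frac{3}{N}\right) + \cdots + \frac{2}{N}\operatorname{sinc}\left(\frac{N-1}{N}\right)\right].$$

For a sufficiently large $N$, the expression in the square brackets is a Riemann sum approximation to the integral $\int_0^1 \operatorname{sinc}(x)\,dx$ (more precisely, it is a midpoint rule approximation with spacing $\frac{2}{N}$). Since the sinc function is continuous, this approximation converges to the integral as $N \to \infty$. Thus, we have

$$\lim_{N\to\infty} S_N f\left(x_0 + \frac{L}{2N}\right) = y_0 + \frac{c}{2} + c\int_0^1 \operatorname{sinc}(x)\,dx = y_0 + \frac{c}{2} + \frac{c}{\pi}\int_0^\pi \frac{\sin t}{t}\,dt = (y_0 + c) + c\cdot(0.089489872236\dots)$$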
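The convergence of the bracketed midpoint sum can be observed directly. The sketch below evaluates $\frac{2}{N}\sum_{k\text{ odd},\,k<N}\operatorname{sinc}(k/N)$ for increasing even $N$ and compares it with $\int_0^1 \operatorname{sinc}(x)\,dx = \frac{1}{\pi}\int_0^\pi \frac{\sin t}{t}\,dt \approx 0.5894898722$; subtracting $\frac12$ gives the overshoot above $y_0 + c$ in units of $c$. The reference value is taken from the constants quoted earlier rather than recomputed.

```python
import math

INTEGRAL = 0.5894898722  # integral_0^1 sinc(x) dx = Si(pi)/pi


def sinc(x):
    """Normalized sinc: sin(pi x) / (pi x), with sinc(0) = 1."""
    return 1.0 if x == 0 else math.sin(math.pi * x) / (math.pi * x)


def bracket(N):
    """Midpoint rule for integral_0^1 sinc(x) dx with N/2 cells of width 2/N (N even)."""
    return sum((2 / N) * sinc(k / N) for k in range(1, N, 2))


for N in (10, 100, 1000):
    b = bracket(N)
    # Overshoot of S_N f above y0 + c, in units of c: 1/2 + bracket - 1.
    print(N, b, abs(b - INTEGRAL), b - 0.5)
```

Because this is a midpoint rule for a smooth integrand, the error shrinks roughly like $1/N^2$.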

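which matches the value claimed in the square wave example above. A similar computation shows

$$\lim_{N\to\infty} S_N f\left(x_0 - \frac{L}{2N}\right) = y_0 + \frac{c}{2} - c\int_0^1 \operatorname{sinc}(x)\,dx = y_0 - c\cdot(0.089489872236\dots).$$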

Consequences

The Gibbs phenomenon is undesirable because it causes artifacts, namely clipping from the overshoot and undershoot, and ringing artifacts from the oscillations. In the case of low-pass filtering, these can be reduced or eliminated by using different low-pass filters.

In MRI, the Gibbs phenomenon causes artifacts in the presence of adjacent regions of markedly differing signal intensity. This is most commonly encountered in spinal MRIs where the Gibbs phenomenon may simulate the appearance of syringomyelia.

The Gibbs phenomenon manifests as a cross pattern artifact in the discrete Fourier transform of an image,[18] where most images (e.g. micrographs or photographs) have a sharp discontinuity between the boundaries at the top and bottom and at the left and right of the image. When periodic boundary conditions are imposed in the Fourier transform, this jump discontinuity is represented by a continuum of frequencies along the axes in reciprocal space (i.e. a cross pattern of intensity in the Fourier transform).

Although this article mainly focuses on the difficulty of constructing discontinuities without artifacts in the time domain from only a partial Fourier series, the same difficulty arises in the frequency domain, because the inverse Fourier transform is extremely similar to the Fourier transform. For instance, because idealized brick-wall and rectangular filters have discontinuities in the frequency domain, their exact representation in the time domain necessarily requires an infinitely long sinc filter impulse response. A finite impulse response will result in Gibbs rippling in the frequency response near the cut-off frequencies, though this rippling can be reduced by windowing finite impulse response filters (at the expense of wider transition bands).[19]
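The windowing remark can be illustrated with a short FIR design sketch. It truncates the ideal low-pass impulse response $2f_c\operatorname{sinc}(2f_c n)$ to $|n| \le M$, optionally multiplies it by a Hamming window, and evaluates the real, zero-phase frequency response $H(f) = \sum_n h[n]\cos(2\pi f n)$ over the passband. The cutoff $f_c = 0.1$ cycles per sample, $M = 50$ and the choice of the Hamming window are illustrative assumptions. With plain truncation the passband peak should sit roughly 9% above 1 near the cutoff; the Hamming-windowed filter stays much closer to 1.

```python
import math


def sinc(x):
    """Normalized sinc: sin(pi x) / (pi x), with sinc(0) = 1."""
    return 1.0 if x == 0 else math.sin(math.pi * x) / (math.pi * x)


def rectangular(n, M):
    return 1.0  # plain truncation


def hamming(n, M):
    return 0.54 + 0.46 * math.cos(math.pi * n / M)  # centred Hamming window, |n| <= M


def lowpass_taps(fc, M, window):
    """Windowed ideal low-pass taps h[n], n = -M..M, cutoff fc in cycles/sample."""
    return [2 * fc * sinc(2 * fc * n) * window(n, M) for n in range(-M, M + 1)]


def response(taps, f):
    """Zero-phase frequency response of symmetric taps centred at n = 0."""
    M = len(taps) // 2
    return sum(h * math.cos(2 * math.pi * f * (i - M)) for i, h in enumerate(taps))


def passband_peak(taps, fc, points=500):
    """Largest |H| sampled on [0, fc]."""
    return max(response(taps, fc * j / points) for j in range(points + 1))


fc, M = 0.1, 50
print(passband_peak(lowpass_taps(fc, M, rectangular), fc))  # roughly 1.09
print(passband_peak(lowpass_taps(fc, M, hamming), fc))      # close to 1
```

The window tapers the sinc smoothly to zero instead of cutting it off abruptly, which suppresses the ripple but widens the transition band around $f_c$.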

See also

- Mach bands

- Pinsky phenomenon

- Runge's phenomenon (a similar phenomenon in polynomial approximations)

- σ-approximation, which adjusts a Fourier summation to eliminate the Gibbs phenomenon that would otherwise occur at discontinuities

- Sine integral

Notes

- H. S. Carslaw (1930). "Chapter IX". Introduction to the theory of Fourier's series and integrals (Third ed.). New York: Dover Publications Inc. https://books.google.com/books?id=JNVAAAAAIAAJ&q=intitle:Introduction+intitle:to+intitle:the+intitle:theory+intitle:of+intitle:Fourier%27s+intitle:series+intitle:and+intitle:integrals+inauthor:carslaw.

- Vretblad 2000 Section 4.7.

- "6.7: Gibbs Phenomena". 2020-05-24. https://eng.libretexts.org/Bookshelves/Electrical_Engineering/Signal_Processing_and_Modeling/Signals_and_Systems_(Baraniuk_et_al.)/06%3A_Continuous_Time_Fourier_Series_(CTFS)/6.07%3A_Gibbs_Phenomena.

- M. Pinsky (2002). Introduction to Fourier Analysis and Wavelets. United States of America: Brooks/Cole. p. 27. https://archive.org/details/introductiontofo00pins_232.

- Steven R. Finch, Mathematical Constants, Cambridge University Press, 2003, Section 4.1 Gibbs-Wilbraham constant, p. 249.

- Wilbraham, Henry (1848) "On a certain periodic function", The Cambridge and Dublin Mathematical Journal, 3 : 198–201.

- Encyklopädie der Mathematischen Wissenschaften mit Einschluss ihrer Anwendungen. II T. 1 H 1. Wiesbaden: Vieweg+Teubner Verlag. 1914. p. 1049. http://gdz.sub.uni-goettingen.de/pdfcache/PPN360506208/PPN360506208___LOG_0158.pdf. Retrieved 14 September 2016.

- Hammack, Bill; Kranz, Steve; Carpenter, Bruce (2014-10-29). Albert Michelson's Harmonic Analyzer: A Visual Tour of a Nineteenth Century Machine that Performs Fourier Analysis. Articulate Noise Books. ISBN 978-0-9839661-7-3. http://www.engineerguy.com/fourier/. Retrieved 14 September 2016.

- Hewitt, Edwin; Hewitt, Robert E. (1979). "The Gibbs-Wilbraham phenomenon: An episode in Fourier analysis". Archive for History of Exact Sciences 21 (2): 129–160. doi:10.1007/BF00330404. Available on-line at: National Chiao Tung University: Open Course Ware: Hewitt & Hewitt, 1979.

- Bôcher, Maxime (April 1906) "Introduction to the theory of Fourier's series", Annals of Mathematics, second series, 7 (3) : 81–152. The Gibbs phenomenon is discussed on pages 123–132; Gibbs's role is mentioned on page 129.

- Carslaw, H. S. (1 October 1925). "A historical note on Gibbs' phenomenon in Fourier's series and integrals". Bulletin of the American Mathematical Society 31 (8): 420–424. doi:10.1090/s0002-9904-1925-04081-1. ISSN 0002-9904. https://projecteuclid.org/euclid.bams/1183486614. Retrieved 14 September 2016.

- Gottlieb, David; Shu, Chi-Wang (January 1997). "On the Gibbs Phenomenon and Its Resolution". SIAM Review 39 (4): 644–668. doi:10.1137/S0036144596301390. ISSN 0036-1445. Bibcode: 1997SIAMR..39..644G. http://epubs.siam.org/doi/10.1137/S0036144596301390.

- Gottlieb, Sigal; Jung, Jae-Hun; Kim, Saeja (March 2011). "A Review of David Gottlieb's Work on the Resolution of the Gibbs Phenomenon". Communications in Computational Physics 9 (3): 497–519. doi:10.4208/cicp.301109.170510s. ISSN 1815-2406. Bibcode: 2011CCoPh...9..497G. https://www.cambridge.org/core/journals/communications-in-computational-physics/article/abs/review-of-david-gottliebs-work-on-the-resolution-of-the-gibbs-phenomenon/C77B063AC18D2AF7CF7A6A77028C8528.

- Rasmussen, Henrik O. "The Wavelet Gibbs Phenomenon". In Wavelets, Fractals and Fourier Transforms, Eds M. Farge et al., Clarendon Press, Oxford, 1993.

- Kelly, Susan E. (1995). "Gibbs Phenomenon for Wavelets". Applied and Computational Harmonic Analysis (3). http://www.uwlax.edu/faculty/kelly/Publications/GibbsJan.pdf. Retrieved 2012-03-31.

- De Marchi, Stefano; Marchetti, Francesco; Perracchione, Emma; Poggiali, Davide (2020). "Polynomial interpolation via mapped bases without resampling". J. Comput. Appl. Math. 364. doi:10.1016/j.cam.2019.112347. ISSN 0377-0427.

- Fay, Temple H.; Kloppers, P. Hendrik (2001). "The Gibbs' phenomenon". International Journal of Mathematical Education in Science and Technology 32 (1): 73–89. doi:10.1080/00207390117151. https://www.tandfonline.com/doi/abs/10.1080/00207390117151.

- R. Hovden, Y. Jiang, H.L. Xin, L.F. Kourkoutis (2015). "Periodic Artifact Reduction in Fourier Transforms of Full Field Atomic Resolution Images". Microscopy and Microanalysis 21 (2): 436–441. doi:10.1017/S1431927614014639. PMID 25597865. Bibcode: 2015MiMic..21..436H. https://www.cambridge.org/core/journals/microscopy-and-microanalysis/article/div-classtitleperiodic-artifact-reduction-in-fourier-transforms-of-full-field-atomic-resolution-imagesdiv/80D0E226F0B4B16627AA0B6B9BD24F24.

- "Gibbs phenomenon | RecordingBlogs". https://www.recordingblogs.com/wiki/gibbs-phenomenon.

References

- Gibbs, J. Willard (1898), "Fourier's Series", Nature 59 (1522): 200, doi:10.1038/059200b0, ISSN 0028-0836, Bibcode: 1898Natur..59..200G, https://zenodo.org/record/1429384

- Gibbs, J. Willard (1899), "Fourier's Series", Nature 59 (1539): 606, doi:10.1038/059606a0, ISSN 0028-0836, Bibcode: 1899Natur..59..606G

- Michelson, A. A.; Stratton, S. W. (1898), "A new harmonic analyser", Philosophical Magazine 5 (45): 85–91

- Zygmund, Antoni (1959). Trigonometric Series (2nd ed.). Cambridge University Press. Volume 1, Volume 2.

- Wilbraham, Henry (1848), "On a certain periodic function", The Cambridge and Dublin Mathematical Journal 3: 198–201, https://books.google.com/books?id=JrQ4AAAAMAAJ&pg=PA198

- Paul J. Nahin, Dr. Euler's Fabulous Formula, Princeton University Press, 2006. Ch. 4, Sect. 4.

- Vretblad, Anders (2000), Fourier Analysis and its Applications, Graduate Texts in Mathematics, 223, New York: Springer Publishing, p. 93, ISBN 978-0-387-00836-3

External links

- Hazewinkel, Michiel, ed. (2001), "Gibbs phenomenon", Encyclopedia of Mathematics, Springer Science+Business Media B.V. / Kluwer Academic Publishers, ISBN 978-1-55608-010-4, https://www.encyclopediaofmath.org/index.php?title=p/g044410

- Weisstein, Eric W., "Gibbs Phenomenon". From MathWorld—A Wolfram Web Resource.

- Prandoni, Paolo, "Gibbs Phenomenon".

- Radaelli-Sanchez, Ricardo, and Richard Baraniuk, "Gibbs Phenomenon". The Connexions Project. (Creative Commons Attribution License)

- Horatio S Carslaw : Introduction to the theory of Fourier's series and integrals.pdf (introductiontot00unkngoog.pdf ) at archive.org

- A Python implementation of the S-Gibbs algorithm mitigating the Gibbs Phenomenon https://github.com/pog87/FakeNodes.
