Spiking neural network


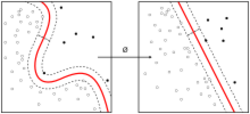

Video: a spiking neural network controlling a virtual insect as it navigates random terrain.

Spiking neural networks (SNNs) are artificial neural networks (ANNs) that more closely mimic natural neural networks.[1] These models use the timing of discrete spikes as their main information carrier.[2]

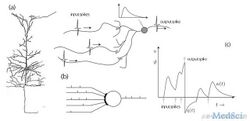

In addition to neuronal and synaptic state, SNNs incorporate the concept of time into their operating model. Neurons in an SNN do not transmit information at every propagation cycle, as happens in typical multilayer perceptron networks. Instead, a neuron transmits information only when its membrane potential, an intrinsic quantity related to the electrical charge across its membrane, reaches a specific value called the threshold. When the membrane potential reaches the threshold, the neuron fires and generates a signal that travels to other neurons, which in turn increase or decrease their potentials in response. A neuron model that fires at the moment of threshold crossing is also called a spiking neuron model.[3]

While spike rates can be considered the analogue of the variable output of a traditional ANN,[4] neurobiology research indicates that high-speed processing cannot be performed solely through a rate-based scheme. For example, humans can perform an image recognition task that leaves no more than about 10 ms of processing time per neuron along the successive layers (going from the retina to the temporal lobe). This time window is too short for rate-based encoding. The precise spike timings of a small set of spiking neurons also have a higher information coding capacity than a rate-based approach.[5]
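
A rough back-of-the-envelope calculation shows why such a short window is a problem for counting spikes. Assuming, purely for illustration, a neuron firing at 100 Hz, the expected number of spikes in a 10 ms window is one, and a single spike more or less shifts the rate estimate by 1/T = 100 Hz. The helper names below are hypothetical.

```python
def expected_spike_count(rate_hz, window_s):
    """Mean number of spikes a neuron firing at rate_hz emits in window_s."""
    return rate_hz * window_s

def rate_resolution(window_s):
    """Smallest change in estimated rate (Hz) a spike-counting decoder can see:
    one extra spike in the window changes the estimate by 1 / window_s."""
    return 1.0 / window_s

# Illustrative numbers only: a 100 Hz neuron observed for 10 ms.
print(expected_spike_count(100.0, 0.010))  # about one spike
print(rate_resolution(0.010))              # estimate moves in 100 Hz steps
```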

The most prominent spiking neuron model is the leaky integrate-and-fire model.[6] In that model, the momentary activation level (modeled as a differential equation) is normally considered to be the neuron's state. Incoming spikes push this value higher or lower until the state either decays or, if the firing threshold is reached, the neuron fires. After firing, the state variable is reset to a lower value.
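
A minimal sketch of a single leaky integrate-and-fire neuron, assuming the common textbook form tau_m * dV/dt = -(V - V_rest) + R * I(t), integrated with the forward Euler method. The parameter values (20 ms membrane time constant, threshold 1.0, reset to rest) are illustrative assumptions, not values taken from the cited sources.

```python
def simulate_lif(input_current, dt=1e-3, tau_m=20e-3, v_rest=0.0,
                 v_reset=0.0, v_threshold=1.0, resistance=1.0):
    """Forward-Euler integration of tau_m * dV/dt = -(V - v_rest) + R * I(t).

    input_current holds one input value per time step of length dt.
    Returns the membrane-potential trace and the indices of the steps
    at which the neuron fired."""
    v = v_rest
    trace, spikes = [], []
    for step, current in enumerate(input_current):
        # Leak toward the resting potential plus drive from the input.
        v += dt / tau_m * (-(v - v_rest) + resistance * current)
        if v >= v_threshold:
            spikes.append(step)  # threshold crossed: emit a spike
            v = v_reset          # reset the state after firing
        trace.append(v)
    return trace, spikes

trace, spikes = simulate_lif([2.0] * 100)  # 100 ms of constant input
print(spikes)                              # regular firing
print(simulate_lif([0.5] * 100)[1])        # too weak: no spikes
```

With a constant input of 2.0 the potential climbs toward R * I = 2.0 and repeatedly crosses the threshold; with 0.5 it settles near 0.5 and never fires, because the leak balances the weak input below threshold.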

Various decoding methods exist for interpreting the outgoing spike train as a real-valued number, relying on either the frequency of spikes (rate code), the time to first spike after stimulation, or the interval between spikes.
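
The three decoding schemes can be sketched on a list of spike times in seconds. The function names are hypothetical, and the sketch assumes the stimulus onset time is known.

```python
def firing_rate(spike_times, duration_s):
    """Rate code: number of spikes divided by the observation window."""
    return len(spike_times) / duration_s

def time_to_first_spike(spike_times, stimulus_onset_s=0.0):
    """Latency code: delay from stimulus onset to the first spike, or None."""
    later = [t for t in spike_times if t >= stimulus_onset_s]
    return min(later) - stimulus_onset_s if later else None

def inter_spike_intervals(spike_times):
    """Interval code: gaps between consecutive spikes."""
    ordered = sorted(spike_times)
    return [b - a for a, b in zip(ordered, ordered[1:])]

spikes = [0.005, 0.015, 0.030]        # example spike times in seconds
print(firing_rate(spikes, 0.1))       # spikes per second
print(time_to_first_spike(spikes))    # seconds until the first spike
print(inter_spike_intervals(spikes))  # gaps between spikes
```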

History

Many multi-layer artificial neural networks are fully connected, receiving input from every neuron in the previous layer and signalling every neuron in the subsequent layer. Although these networks have achieved breakthroughs, they do not match biological networks and do not mimic neurons.[citation needed]

The biology-inspired Hodgkin–Huxley model of a spiking neuron was proposed in 1952. This model described how action potentials are initiated and propagated. Communication between neurons, which requires the exchange of chemical neurotransmitters in the synaptic gap, is described in models such as the integrate-and-fire model, the FitzHugh–Nagumo model (1961–1962) and the Hindmarsh–Rose model (1984). The leaky integrate-and-fire model (or a derivative) is commonly used as it is easier to compute than the Hodgkin–Huxley model.[7]

Although artificial spiking neural networks became popular only in the twenty-first century,[8][9][10] the spiking neural network as a mathematical model was first worked on in the early 1970s,[14] and studies between 1980 and 1995 supported the concept. The first models of this type were built to simulate non-algorithmic intelligent information processing systems.[11][12][13]

As of 2019, SNNs lagged behind ANNs in accuracy, but the gap was decreasing and had vanished on some tasks.[15]

Underpinnings

Information in the brain is represented as action potentials (neuron spikes), which may group into spike trains or coordinated waves. A fundamental question of neuroscience is whether neurons communicate by a rate code or a temporal code.[16] Temporal coding implies that a single spiking neuron can replace hundreds of hidden units in a conventional neural network.[1]

SNNs define a neuron's current state as its potential (possibly modeled as a differential equation).[17] An input pulse causes the potential to rise and then gradually decline. Encoding schemes can interpret these pulse sequences as a number, considering pulse frequency and pulse interval.[18] Using the precise time of pulse occurrence, a neural network can consider more information and offer better computing properties.[19]
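
The rise-and-decline behavior can be sketched in the style of a spike response model, in which the potential is a weighted sum of postsynaptic potential kernels, one per incoming spike. The difference-of-exponentials kernel and its time constants are assumptions chosen for illustration, and the reset after firing is omitted.

```python
import math

def psp_kernel(s, tau_rise=2e-3, tau_decay=10e-3):
    """Postsynaptic potential s seconds after an input spike: zero before it,
    then a fast rise followed by a slower exponential decline."""
    if s < 0:
        return 0.0
    return math.exp(-s / tau_decay) - math.exp(-s / tau_rise)

def membrane_potential(t, input_spikes, weights):
    """Weighted sum of kernels over all presynaptic spikes.

    input_spikes[i] lists spike times (s) of presynaptic neuron i; a positive
    weights[i] is excitatory (raises the potential), a negative one inhibitory."""
    return sum(w * psp_kernel(t - t_f)
               for w, times in zip(weights, input_spikes)
               for t_f in times)

# One excitatory input spike at t = 0: the potential rises, then declines.
for t in (0.001, 0.004, 0.020):
    print(t, membrane_potential(t, [[0.0]], [1.0]))
```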

SNNs operate in continuous time. A spiking neuron does not produce output at every step; it fires only when its potential reaches a certain value. When a neuron fires, it produces a signal that is passed to connected neurons, raising or lowering their potentials accordingly.
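
Because a neuron's state only needs updating when a spike arrives, SNNs can be simulated event by event rather than on a fixed clock. The sketch below is a hypothetical minimal event-driven simulator, assuming instantaneous synapses with a fixed transmission delay and an exponential leak between events; it is not the algorithm of any particular simulator.

```python
import heapq
import math

def run_event_driven(connections, external_spikes, tau=10e-3,
                     threshold=1.0, delay=1e-3, t_max=0.1):
    """Process spikes in time order instead of updating every neuron each step.

    connections: dict mapping a neuron name to a list of (target, weight).
    external_spikes: list of (time, neuron, weight) input events.
    Returns the output spikes as a list of (time, neuron)."""
    potential, last_update = {}, {}
    queue = list(external_spikes)
    heapq.heapify(queue)  # earliest event first
    fired = []
    while queue:
        t, neuron, weight = heapq.heappop(queue)
        if t > t_max:
            break
        # Leak analytically since this neuron's previous event, then add input.
        elapsed = t - last_update.get(neuron, t)
        v = potential.get(neuron, 0.0) * math.exp(-elapsed / tau) + weight
        if v >= threshold:
            fired.append((t, neuron))
            v = 0.0  # reset after firing
            for target, w in connections.get(neuron, []):
                # Deliver the spike to each target after the synaptic delay.
                heapq.heappush(queue, (t + delay, target, w))
        potential[neuron] = v
        last_update[neuron] = t
    return fired

network = {"a": [("b", 1.0)]}
print(run_event_driven(network, [(0.0, "a", 1.2)]))
```

Here neuron "a" fires on its external input, and its spike, delivered 1 ms later, drives "b" over threshold; a subthreshold input to "a" alone produces no output spikes.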

The SNN approach produces output that unfolds in continuous time, as spike trains, rather than a single value per propagation cycle as in traditional ANNs. Pulse trains are not easily interpretable, hence the need for encoding schemes. However, a pulse train representation may be better suited to processing spatiotemporal data (or classifying real-world sensory data).[20] Some SNNs connect neurons only to nearby neurons so that they process input blocks separately (similar to a CNN using filters). They account for time by encoding information as pulse trains so as not to lose information, which avoids the complexity of a recurrent neural network (RNN). Spiking neurons are more powerful computational units than traditional artificial neurons.[21]

SNNs are theoretically more powerful than so-called "second-generation networks", defined as ANNs "based on computational units that apply activation function with a continuous set of possible output values to a weighted sum (or polynomial) of the inputs"; however, SNN training issues and hardware requirements limit their use. Although unsupervised, biologically inspired learning methods such as Hebbian learning and STDP are available, no effective supervised training method suited to SNNs provides better performance than second-generation networks.[21] Because spike-based activation is not differentiable, gradient descent-based backpropagation (BP) cannot be applied directly.
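
STDP adjusts a synapse according to the relative timing of pre- and postsynaptic spikes. Below is a minimal sketch of the common pair-based form with exponential timing windows; the amplitudes, time constants and weight bounds are illustrative assumptions.

```python
import math

def stdp_weight_change(t_pre, t_post, a_plus=0.01, a_minus=0.012,
                       tau_plus=20e-3, tau_minus=20e-3):
    """Pair-based STDP for one pre/post spike pair (times in seconds)."""
    dt = t_post - t_pre
    if dt > 0:   # pre before post: potentiation, strongest for short gaps
        return a_plus * math.exp(-dt / tau_plus)
    if dt < 0:   # post before pre: depression
        return -a_minus * math.exp(dt / tau_minus)
    return 0.0

def apply_stdp(weight, pre_spikes, post_spikes, w_min=0.0, w_max=1.0):
    """Sum the contributions of all spike pairs and keep the weight bounded."""
    delta = sum(stdp_weight_change(tp, tq)
                for tp in pre_spikes for tq in post_spikes)
    return min(w_max, max(w_min, weight + delta))

print(apply_stdp(0.5, [0.010], [0.015]))  # causal pair: weight grows
print(apply_stdp(0.5, [0.015], [0.010]))  # anti-causal pair: weight shrinks
```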

SNNs have much larger computational costs for simulating realistic neural models than traditional ANNs.[22]

Pulse-coupled neural networks (PCNN) are often confused with SNNs. A PCNN can be seen as a kind of SNN.

Researchers are actively working on several topics. The first concerns differentiability. The expressions for both the forward- and backward-learning methods contain the derivative of the neural activation function, which is unusable because a neuron's output is 1 when it spikes and 0 otherwise: the derivative of this step is zero everywhere except at the threshold, where it is undefined. This all-or-nothing behavior disrupts gradients and makes these neurons unsuitable for gradient-based optimization. Approaches to resolving it include:

- resorting to entirely biologically inspired local learning rules for the hidden units

- translating conventionally trained "rate-based" NNs to SNNs

- smoothing the network model to be continuously differentiable

- defining an SG (Surrogate Gradient) as a continuous relaxation of the real gradients
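
The surrogate gradient approach can be sketched for a single neuron: the forward pass keeps the all-or-nothing spike, while the backward pass substitutes a smooth function for the step's derivative. The fast-sigmoid-shaped surrogate used here is one common choice among several; the squared-error loss, learning rate and helper names are assumptions for illustration.

```python
def spike(v, threshold=1.0):
    """Forward pass: all-or-nothing output."""
    return 1.0 if v >= threshold else 0.0

def surrogate_derivative(v, threshold=1.0, slope=5.0):
    """Backward pass: a fast-sigmoid-shaped bump centred on the threshold,
    used in place of the step's true derivative (zero almost everywhere)."""
    return 1.0 / (1.0 + slope * abs(v - threshold)) ** 2

def train_single_neuron(x, target, w=0.1, lr=0.5, steps=50):
    """Gradient descent on the squared error (spike(w * x) - target) ** 2."""
    for _ in range(steps):
        v = w * x
        error = spike(v) - target
        # Chain rule, with the surrogate standing in for d spike / d v.
        grad_w = 2.0 * error * surrogate_derivative(v) * x
        w -= lr * grad_w
    return w

w = train_single_neuron(x=1.0, target=1.0)
print(w, spike(w * 1.0))  # the trained weight now makes the neuron fire
```

With the true derivative of the step (zero below threshold), the weight would never change; the surrogate gives a nonzero gradient that pulls the membrane potential up until the neuron spikes.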

The second concerns the optimization algorithm. Standard BP can be expensive in terms of computation, memory, and communication and may be poorly suited to the hardware that implements it (e.g., a computer, brain, or neuromorphic device).[23]

Incorporating additional neuron dynamics such as spike frequency adaptation (SFA) is a notable advance, enhancing efficiency and computational power.[6][24] Such neuron models strike a balance between biological complexity and computational complexity.[25] Originating from biological insights, SFA offers significant computational benefits by reducing power usage,[26] especially in cases of repetitive or intense stimuli. This adaptation improves the signal-to-noise ratio and introduces an elementary short-term memory at the neuron level, which in turn improves accuracy and efficiency.[27] This was mostly achieved using compartmental neuron models; simpler neuron models with adaptive thresholds are an indirect way of achieving SFA. SFA equips SNNs with improved learning capabilities, even with constrained synaptic plasticity, and increases computational efficiency.[28][29] It lessens the demand on network layers by reducing the number of spikes to be processed, thus lowering computational load and memory access time, both essential aspects of neural computation. Moreover, SNNs using neurons capable of SFA achieve accuracy that rivals that of conventional ANNs,[30][31] while also requiring fewer neurons for comparable tasks. This efficiency streamlines the computational workflow and conserves space and energy. High-performance deep spiking neural networks can operate with 0.3 spikes per neuron.[32]
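
An adaptive threshold, one of the simpler routes to SFA, can be sketched by extending a leaky integrate-and-fire neuron: each spike raises the threshold, which then relaxes back slowly. The time constants and the increment of 0.2 are assumptions chosen for illustration.

```python
def simulate_adaptive_lif(input_current, dt=1e-3, tau_m=20e-3, tau_a=200e-3,
                          base_threshold=1.0, adaptation_step=0.2):
    """Leaky integrate-and-fire neuron whose threshold rises after each spike.

    Returns the time-step indices at which the neuron fired."""
    v, a = 0.0, 0.0  # membrane potential and threshold adaptation
    spikes = []
    for step, current in enumerate(input_current):
        v += dt / tau_m * (-v + current)  # leaky integration (rest = 0, R = 1)
        a -= dt / tau_a * a               # adaptation relaxes slowly
        if v >= base_threshold + a:
            spikes.append(step)
            v = 0.0                       # reset the membrane potential
            a += adaptation_step          # raise the effective threshold
    return spikes

drive = [2.0] * 1000  # constant input for 1 s at dt = 1 ms
adaptive = simulate_adaptive_lif(drive)
plain = simulate_adaptive_lif(drive, adaptation_step=0.0)
print(len(adaptive), len(plain))
```

Under constant input, the intervals between spikes lengthen as the threshold builds up, so the adaptive neuron emits fewer spikes than the same neuron with adaptation_step=0.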

Applications

SNNs can in principle be applied to the same applications as traditional ANNs.[33] In addition, SNNs can model the central nervous system of biological organisms, such as an insect seeking food without prior knowledge of the environment.[34] Due to their relative realism, they can be used to study biological neural circuits. Starting with a hypothesis about the topology of a biological neuronal circuit and its function, recordings of this circuit can be compared to the output of a corresponding SNN, evaluating the plausibility of the hypothesis. SNNs lack effective training mechanisms, which can complicate some applications, including computer vision.

When using SNNs for image-based data, the images need to be converted into binary spike trains.[35] Types of encodings include:[36]

- Temporal coding: generating one spike per neuron, in which spike latency is inversely proportional to the pixel intensity.

- Rate coding: converting pixel intensity into a spike train, where the number of spikes is proportional to the pixel intensity.

- Direct coding: using a trainable layer to generate a floating-point value for each time step. The layer converts each pixel at a certain time step into a floating-point value, and a threshold is then applied to the generated values to pick either zero or one.

- Phase coding: encoding temporal information into spike patterns based on a global oscillator.

- Burst coding: transmitting spikes in bursts, increasing communication reliability.
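
Two of these encodings can be sketched for a single pixel intensity normalized to [0, 1]. Rate coding is implemented here as independent Bernoulli draws per time step, and temporal coding as one spike at a latency of min_latency / intensity time steps, dropped if it falls outside the window. Both are simplified illustrations, and the function names are hypothetical.

```python
import random

def rate_encode(intensity, num_steps, rng):
    """Rate coding: one Bernoulli draw per time step with spike probability
    equal to the intensity, so the expected spike count is proportional to it."""
    return [1 if rng.random() < intensity else 0 for _ in range(num_steps)]

def latency_encode(intensity, num_steps, min_latency=2):
    """Temporal coding: a single spike at step round(min_latency / intensity),
    so brighter pixels fire earlier; late or zero-intensity spikes are dropped."""
    train = [0] * num_steps
    if intensity > 0:
        step = round(min_latency / intensity)
        if step < num_steps:
            train[step] = 1
    return train

rng = random.Random(0)                  # seeded for reproducibility
print(sum(rate_encode(0.8, 100, rng)))  # roughly 80 spikes
print(latency_encode(1.0, 10))          # spike at step 2
print(latency_encode(0.5, 10))          # spike at step 4
```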

Software

A diverse range of application software can simulate SNNs. This software can be classified according to its uses:

SNN simulation

These simulate complex neural models. Large networks usually require lengthy processing. Candidates include:[37]

- Brian – developed by Romain Brette and Dan Goodman at the École Normale Supérieure;

- GENESIS (the GEneral NEural SImulation System[38]) – developed in James Bower's laboratory at Caltech;

- NEST – developed by the NEST Initiative;

- NEURON – mainly developed by Michael Hines, John W. Moore and Ted Carnevale at Yale University and Duke University;

- RAVSim (Runtime Tool)[39] – mainly developed by Sanaullah at Bielefeld University of Applied Sciences and Arts;

- snnTorch – an open-source Python library that simplifies building spiking neural networks and implementing gradient-based training using PyTorch.[40]

Hardware

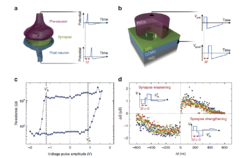

Efforts to implement hardware-based spiking neural networks (SNNs) began in the 1980s,[41] when researchers started exploring brain-inspired neuromorphic systems. In the following decades, advances in semiconductor technology enabled several notable projects.[42]

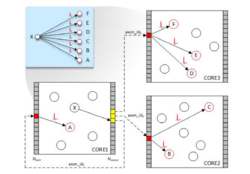

One such project is SpiNNaker, developed at the University of Manchester, which uses about a million processing cores for large-scale simulation of spiking neurons.

TrueNorth, developed by IBM, is one of the first commercial neuromorphic chips, designed for energy-efficient and parallel processing.

Loihi, an Intel research chip, focuses on online learning and adaptability in neuromorphic modeling.

In research contexts, platforms such as BrainScaleS, developed in Europe, integrate analog and digital circuitry to accelerate neural simulations. Neurogrid, from Stanford University, was designed to efficiently simulate biological neurons and synapses. The DYNAP-SE chips,[43] developed by iniLabs, are a family of low-power, event-based neuromorphic chips intended for use in robotics and Internet of Things (IoT) applications.

In addition, hardware based on memristors and other emerging memory technologies is being explored for the implementation of SNNs, with the goal of achieving lower power consumption and improved compatibility with biological neural models.[42]

Future neuromorphic architectures[44] are expected to comprise billions of nanosynapses, which requires a clear understanding of the accompanying physical mechanisms. Experimental systems based on ferroelectric tunnel junctions have been used to show that STDP can be harnessed from heterogeneous polarization switching. Through combined scanning probe imaging, electrical transport and atomic-scale molecular dynamics, conductance variations can be modelled by nucleation-dominated domain reversal. Simulations showed that arrays of ferroelectric nanosynapses can autonomously learn to recognize patterns in a predictable way, opening the path towards unsupervised learning.[45]

Benchmarks

Classification capabilities of spiking networks trained according to unsupervised learning methods[46] have been tested on benchmark datasets such as Iris, Wisconsin Breast Cancer and Statlog Landsat.[47][48] Various approaches to information encoding and network design have been used, such as a 2-layer feedforward network for data clustering and classification. Building on Hopfield (1995), researchers implemented models of local receptive fields that combine the properties of radial basis functions and spiking neurons to convert input signals with a floating-point representation into a spiking representation.[49][50]
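
The receptive-field idea can be sketched as population coding: a real value is presented to a set of overlapping Gaussian receptive fields, and each field's response is converted into a spike time, with strongly responding fields firing early. The evenly spaced centres, the width of one field spacing, and the cut-off below which a field stays silent are assumptions for illustration, not the exact parameters of the cited work.

```python
import math

def gaussian_rf_encode(x, x_min, x_max, num_fields=6, t_max=10.0,
                       min_response=0.1):
    """Map a real value to one spike time per Gaussian receptive field.

    Centres are evenly spaced over [x_min, x_max]; a response of 1 fires at
    time 0, weaker responses fire later, and fields below min_response stay
    silent (None)."""
    spacing = (x_max - x_min) / (num_fields - 1)
    sigma = spacing  # assumed width: one field spacing
    times = []
    for i in range(num_fields):
        centre = x_min + i * spacing
        response = math.exp(-((x - centre) ** 2) / (2 * sigma ** 2))
        times.append(t_max * (1.0 - response) if response >= min_response else None)
    return times

print(gaussian_rf_encode(0.3, 0.0, 1.0))
```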


References

- "Networks of spiking neurons: The third generation of neural network models". Neural Networks 10 (9): 1659–1671. 1997. doi:10.1016/S0893-6080(97)00011-7. ISSN 0893-6080.

- Auge, Daniel; Hille, Julian; Mueller, Etienne; Knoll, Alois (2021-12-01). "A Survey of Encoding Techniques for Signal Processing in Spiking Neural Networks". Neural Processing Letters 53 (6): 4693–4710. doi:10.1007/s11063-021-10562-2. ISSN 1573-773X.

- Spiking neuron models: single neurons, populations, plasticity. Cambridge, U.K.: Cambridge University Press. 2002. ISBN 0-511-07817-X. OCLC 57417395.

- Wang, Xiangwen; Lin, Xianghong; Dang, Xiaochao (2020-05-01). "Supervised learning in spiking neural networks: A review of algorithms and evaluations". Neural Networks 125: 258–280. doi:10.1016/j.neunet.2020.02.011. ISSN 0893-6080. PMID 32146356. https://www.sciencedirect.com/science/article/pii/S0893608020300563.

- Taherkhani, Aboozar; Belatreche, Ammar; Li, Yuhua; Cosma, Georgina; Maguire, Liam P.; McGinnity, T. M. (2020-02-01). "A review of learning in biologically plausible spiking neural networks". Neural Networks 122: 253–272. doi:10.1016/j.neunet.2019.09.036. ISSN 0893-6080. PMID 31726331. https://www.sciencedirect.com/science/article/pii/S0893608019303181.

- Ganguly, Chittotosh; Bezugam, Sai Sukruth; Abs, Elisabeth; Payvand, Melika; Dey, Sounak; Suri, Manan (2024-02-01). "Spike frequency adaptation: bridging neural models and neuromorphic applications". Communications Engineering 3 (1): 22. doi:10.1038/s44172-024-00165-9. ISSN 2731-3395.

- "Flexon: A Flexible Digital Neuron for Efficient Spiking Neural Network Simulations". 2018 ACM/IEEE 45th Annual International Symposium on Computer Architecture (ISCA). June 2018. pp. 275–288. doi:10.1109/isca.2018.00032. ISBN 978-1-5386-5984-7.

- Goodman, D. F., & Brette, R. (2008). Brian: a simulator for spiking neural networks in python. Frontiers in neuroinformatics, 2, 350.

- Vreeken, J. (2003). Spiking neural networks, an introduction.

- Yamazaki, K.; Vo-Ho, V. K.; Bulsara, D.; Le, N (30 June 2022). "Spiking neural networks and their applications: A review". Brain Sciences 12 (7): 863. doi:10.3390/brainsci12070863. PMID 35884670.

- Ballard, D. H. (1987, July). Modular learning in neural networks. In Proceedings of the sixth National conference on Artificial intelligence-Volume 1 (pp. 279-284).

- Peretto, P. (1984). Collective properties of neural networks: a statistical physics approach. Biological cybernetics, 50(1), 51-62.

- Kurogi, S. (1987). A model of neural network for spatiotemporal pattern recognition. Biological cybernetics, 57(1), 103-114.

- Anderson, J. A. (1972). A simple neural network generating an interactive memory. Mathematical biosciences, 14(3-4), 197-220.

- "Deep learning in spiking neural networks". Neural Networks 111: 47–63. March 2019. doi:10.1016/j.neunet.2018.12.002. PMID 30682710.

- "Spiking Neurons". Pulsed Neural Networks. MIT Press. 2001. ISBN 978-0-262-63221-8. https://books.google.com/books?id=jEug7sJXP2MC&q=%22Pulsed+Neural+Networks%22+rate-code+neuroscience&pg=PA3.

- Hodgkin, A. L.; Huxley, A. F. (1952-08-28). "A quantitative description of membrane current and its application to conduction and excitation in nerve". The Journal of Physiology 117 (4): 500–544. doi:10.1113/jphysiol.1952.sp004764. ISSN 0022-3751. PMID 12991237.

- Dan, Yang; Poo, Mu-Ming (July 2006). "Spike Timing-Dependent Plasticity: From Synapse to Perception". Physiological Reviews 86 (3): 1033–1048. doi:10.1152/physrev.00030.2005. ISSN 0031-9333. PMID 16816145. https://www.physiology.org/doi/10.1152/physrev.00030.2005.

- Nagornov, Nikolay N.; Lyakhov, Pavel A.; Bergerman, Maxim V.; Kalita, Diana I. (2024). "Modern Trends in Improving the Technical Characteristics of Devices and Systems for Digital Image Processing". IEEE Access 12: 44659–44681. doi:10.1109/ACCESS.2024.3381493. ISSN 2169-3536. Bibcode: 2024IEEEA..1244659N.

- Van Wezel M (2020). A robust modular spiking neural networks training methodology for time-series datasets: With a focus on gesture control (Master of Science thesis). Delft University of Technology.

- "Networks of spiking neurons: The third generation of neural network models". Neural Networks 10 (9): 1659–1671. 1997. doi:10.1016/S0893-6080(97)00011-7.

- Furber, Steve (August 2016). "Large-scale neuromorphic computing systems". Journal of Neural Engineering 13 (5). doi:10.1088/1741-2560/13/5/051001. ISSN 1741-2552. PMID 27529195. Bibcode: 2016JNEng..13e1001F.

- Neftci, Emre O.; Mostafa, Hesham; Zenke, Friedemann (2019). "Surrogate Gradient Learning in Spiking Neural Networks: Bringing the Power of Gradient-Based Optimization to Spiking Neural Networks". IEEE Signal Processing Magazine 36 (6): 51–63. doi:10.1109/msp.2019.2931595. Bibcode: 2019ISPM...36f..51N.

- Salaj, Darjan; Subramoney, Anand; Kraisnikovic, Ceca; Bellec, Guillaume; Legenstein, Robert; Maass, Wolfgang (2021-07-26). O'Leary, Timothy; Behrens, Timothy E; Gutierrez, Gabrielle. eds. "Spike frequency adaptation supports network computations on temporally dispersed information". eLife 10. doi:10.7554/eLife.65459. ISSN 2050-084X. PMID 34310281.

- Izhikevich, E.M. (2004). "Which model to use for cortical spiking neurons?". IEEE Transactions on Neural Networks 15 (5): 1063–1070. doi:10.1109/tnn.2004.832719. PMID 15484883. Bibcode: 2004ITNN...15.1063I.

- Adibi, M., McDonald, J. S., Clifford, C. W. & Arabzadeh, E. Adaptation improves neural coding efficiency despite increasing correlations in variability. J. Neurosci. 33, 2108–2120 (2013)

- Laughlin, S. (1981). "A simple coding procedure enhances a neuron's information capacity". Zeitschrift für Naturforschung C 36 (9–10): 910–912. ISSN 0341-0382. PMID 7303823.

- Querlioz, Damien; Bichler, Olivier; Dollfus, Philippe; Gamrat, Christian (2013). "Immunity to Device Variations in a Spiking Neural Network With Memristive Nanodevices". IEEE Transactions on Nanotechnology 12 (3): 288–295. doi:10.1109/TNANO.2013.2250995. Bibcode: 2013ITNan..12..288Q. https://hal.science/hal-01826840.

- Yamazaki, Kashu; Vo-Ho, Viet-Khoa; Bulsara, Darshan; Le, Ngan (July 2022). "Spiking Neural Networks and Their Applications: A Review". Brain Sciences 12 (7): 863. doi:10.3390/brainsci12070863. ISSN 2076-3425. PMID 35884670.

- Shaban, Ahmed; Bezugam, Sai Sukruth; Suri, Manan (2021-07-09). "An adaptive threshold neuron for recurrent spiking neural networks with nanodevice hardware implementation". Nature Communications 12 (1): 4234. doi:10.1038/s41467-021-24427-8. ISSN 2041-1723. PMID 34244491. PMC 8270926. Bibcode: 2021NatCo..12.4234S. https://rdcu.be/dyCf4.

- Bellec, Guillaume; Salaj, Darjan; Subramoney, Anand; Legenstein, Robert; Maass, Wolfgang (2018-12-25), Long short-term memory and learning-to-learn in networks of spiking neurons

- Stanojevic, Ana; Woźniak, Stanisław; Bellec, Guillaume; Cherubini, Giovanni; Pantazi, Angeliki; Gerstner, Wulfram (2024-08-09). "High-performance deep spiking neural networks with 0.3 spikes per neuron". Nature Communications 15 (1): 6793. doi:10.1038/s41467-024-51110-5. ISSN 2041-1723. PMID 39122775. Bibcode: 2024NatCo..15.6793S.

- "A simple Aplysia-like spiking neural network to generate adaptive behavior in autonomous robots". Adaptive Behavior 14 (5): 306–324. 2008. doi:10.1177/1059712308093869.

- "Spike-based indirect training of a spiking neural network-controlled virtual insect". 52nd IEEE Conference on Decision and Control. Dec 2013. pp. 6798–6805. doi:10.1109/CDC.2013.6760966. ISBN 978-1-4673-5717-3.

- "Spiking Neural Networks and Their Applications: A Review". Brain Sciences 12 (7): 863. June 2022. doi:10.3390/brainsci12070863. PMID 35884670.

- Kim Y, Park H, Moitra A, Bhattacharjee A, Venkatesha Y, Panda P (2022-01-31). "Rate Coding or Direct Coding: Which One is Better for Accurate, Robust, and Energy-efficient Spiking Neural Networks?". arXiv:2202.03133 [cs.NE].

- "Synaptic plasticity: taming the beast". Nature Neuroscience 3 (S11): 1178–1183. November 2000. doi:10.1038/81453. PMID 11127835.

- "New results on recurrent network training: unifying the algorithms and accelerating convergence". IEEE Transactions on Neural Networks 11 (3): 697–709. May 2000. doi:10.1109/72.846741. PMID 18249797. Bibcode: 2000ITNN...11..697A.

- "Evaluation of Spiking Neural Nets-Based Image Classification Using the Runtime Simulator RAVSim". International Journal of Neural Systems 33 (9). August 2023. doi:10.1142/S0129065723500442. PMID 37604777.

- Eshraghian, J. K., Ward, M., Neftci, E., Wang, X., Lenz, G., Dwivedi, G., Bennamoun, M., Jeong, D. S., & Lu, W. D. (2023). Training spiking neural networks using lessons from deep learning. Proceedings of the IEEE, 111(9), 1016–1054.

- Valle, Maurizio (2002). "Analog VLSI Implementation of Artificial Neural Networks with Supervised On-Chip Learning". Analog Integrated Circuits and Signal Processing 33 (3): 263–287. doi:10.1023/A:1020717929709. ISSN 0925-1030. Bibcode: 2002AICSP..33..263V.

- James, Conrad D.; Aimone, James B.; Miner, Nadine E.; Vineyard, Craig M.; Rothganger, Fredrick H.; Carlson, Kristofor D.; Mulder, Samuel A.; Draelos, Timothy J. et al. (2017-01-01). "A historical survey of algorithms and hardware architectures for neural-inspired and neuromorphic computing applications". Biologically Inspired Cognitive Architectures 19: 49–64. doi:10.1016/j.bica.2016.11.002. ISSN 2212-683X.

- Richter, Ole; Wu, Chenxi; Whatley, Adrian M; Köstinger, German; Nielsen, Carsten; Qiao, Ning; Indiveri, Giacomo (2024-03-01). "DYNAP-SE2: a scalable multi-core dynamic neuromorphic asynchronous spiking neural network processor". Neuromorphic Computing and Engineering 4 (1): 014003. doi:10.1088/2634-4386/ad1cd7. ISSN 2634-4386. https://iopscience.iop.org/article/10.1088/2634-4386/ad1cd7.

- Sutton RS, Barto AG (2002) Reinforcement Learning: An Introduction. Bradford Books, MIT Press, Cambridge, MA.

- "Learning through ferroelectric domain dynamics in solid-state synapses". Nature Communications 8. April 2017. doi:10.1038/ncomms14736. PMID 28368007. Bibcode: 2017NatCo...814736B.

- "Supervised learning in spiking neural networks with ReSuMe: sequence learning, classification, and spike shifting". Neural Computation 22 (2): 467–510. February 2010. doi:10.1162/neco.2009.11-08-901. PMID 19842989.

- "UCI repository of machine learning databases.". 1998. http://www.ics.uci.edu/~mlearn/MLRepository.html.

- "Error-backpropagation in temporally encoded networks of spiking neurons.". Neurocomputing 48 (1–4): 17–37. 2002. doi:10.1016/S0925-2312(01)00658-0. https://ir.cwi.nl/pub/4369.

- "Optimal spike-timing-dependent plasticity for precise action potential firing in supervised learning". Neural Computation 18 (6): 1318–1348. June 2006. doi:10.1162/neco.2006.18.6.1318. PMID 16764506. Bibcode: 2005q.bio.....2037P.

- "Unsupervised clustering with spiking neurons by sparse temporal coding and multilayer RBF networks.". IEEE Transactions on Neural Networks 13 (2): 426–435. March 2002. doi:10.1109/72.991428. PMID 18244443. Bibcode: 2002ITNN...13..426B. https://ir.cwi.nl/pub/4370.
