Mechanistic interpretability


Mechanistic interpretability (often abbreviated as mech interp, mechinterp, or MI) is a subfield of research within explainable artificial intelligence that aims to understand the internal workings of neural networks by analyzing the mechanisms present in their computations. The approach seeks to analyze neural networks in a manner similar to how binary computer programs can be reverse-engineered to understand their functions.

History

The term mechanistic interpretability was coined by Chris Olah to describe his work on circuit analysis and to distinguish it from the methods then common in interpretable AI. Circuit analysis attempted to completely characterize individual features and circuits within models, while the broader field tended towards gradient-based approaches such as saliency maps.[1][2]

Before circuit analysis, work in the subfield combined various techniques such as feature visualization, dimensionality reduction, and attribution with human-computer interaction methods to analyze models like the vision model Inception v1.[3][4]

Key concepts

Mechanistic interpretability aims to identify structures, circuits or algorithms encoded in the weights of machine learning models.[5][6] This contrasts with earlier interpretability methods that focused primarily on input-output explanations.[7]

Linear representation hypothesis

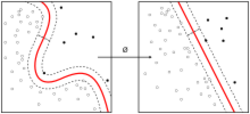

The linear representation hypothesis holds that high-level concepts are represented as linear directions in the activation space of neural networks. Empirical evidence from word embeddings and large language models supports this view, although it does not hold universally.[8][9]
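As a rough illustration of what a "linear direction" for a concept means, the sketch below estimates a direction as the normalized difference between the mean activations of examples that express a concept and examples that do not, then scores new activations by projecting onto it. The three-dimensional activation vectors, the difference-of-means estimator, and the helper names `concept_direction` and `concept_score` are all assumptions made for the example; they are not taken from the cited works.

```python
def dot(a, b):
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def mean(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]


def concept_direction(positive, negative):
    """Unit vector pointing from the negative-class mean to the positive-class mean."""
    diff = [p - q for p, q in zip(mean(positive), mean(negative))]
    norm = dot(diff, diff) ** 0.5
    return [d / norm for d in diff]


# Hypothetical activations: the concept is (by construction) carried by axis 0.
positive = [[2.0, 0.1, 1.0], [2.2, -0.1, 0.8], [1.8, 0.0, 1.2]]
negative = [[-2.0, 0.1, 1.0], [-1.8, 0.0, 0.9], [-2.2, -0.1, 1.1]]

direction = concept_direction(positive, negative)
midpoint = mean(positive + negative)


def concept_score(activation):
    """Signed projection onto the concept direction, centered at the midpoint."""
    centered = [a - m for a, m in zip(activation, midpoint)]
    return dot(centered, direction)


score_pos = concept_score([1.9, 0.05, 1.0])   # unseen example with the concept
score_neg = concept_score([-2.1, 0.0, 1.0])   # unseen example without it
print(direction, score_pos, score_neg)
```

If the hypothesis holds for a concept, a single projection like this separates the two groups. Where it fails, no single direction will do so reliably.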

Methods

Mechanistic interpretability employs causal methods to understand how internal model components influence outputs, often using formal tools from causality theory.[10]
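One way to make the causal framing concrete is an intervention on a hidden unit: run the model on two inputs, copy one hidden activation from the first run into the second, and measure how the output changes. The sketch below does this for a tiny hand-written two-layer network. The weights, the inputs, and the `forward` helper with its `patch` argument are hypothetical and chosen only so the arithmetic is easy to follow.

```python
def dot(a, b):
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


# Hypothetical toy network: hidden = relu(W1 @ x), output = w2 . hidden
W1 = [[1.0, -1.0],
      [0.5, 1.0]]
w2 = [2.0, 0.5]


def forward(x, patch=None):
    """Run the network; `patch` maps hidden-unit index -> value to force."""
    hidden = [max(0.0, dot(row, x)) for row in W1]
    if patch:
        for index, value in patch.items():
            hidden[index] = value  # the intervention: overwrite this unit
    return hidden, dot(w2, hidden)


clean_input = [3.0, 1.0]
corrupted_input = [1.0, 3.0]

clean_hidden, clean_output = forward(clean_input)
_, corrupted_output = forward(corrupted_input)

# Effect of each hidden unit: patch its clean value into the corrupted run
# and record how far the output moves from the unpatched corrupted output.
effects = []
for unit in range(len(W1)):
    _, patched_output = forward(corrupted_input, patch={unit: clean_hidden[unit]})
    effects.append(patched_output - corrupted_output)

print(clean_output, corrupted_output, effects)
```

With these numbers the clean output is 5.25, the corrupted output is 1.75, and the effects are 4.0 for unit 0 and -0.5 for unit 1. In this toy case, then, unit 0 carries most of the difference between the two inputs.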

In the field of AI safety, mechanistic interpretability is used to understand and verify the behavior of complex AI systems and to attempt to identify potential risks such as AI misalignment.[11][6]

Sparse autoencoders

A sparse autoencoder (SAE) is a model trained to disentangle neural network activations into sparse representations. The learned dimensions often represent simple, human-understandable concepts. The technique was applied to large language model interpretability by Anthropic.[12][13][14]
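The sketch below shows the forward pass and training objective of one common SAE formulation: a ReLU encoder, a linear decoder, and a loss that adds an L1 sparsity penalty to the reconstruction error. This formulation is an assumption for illustration and may differ from the variants used in the cited work. The hand-set dictionary, the coefficient `l1_coeff`, and the function names are hypothetical, and the training loop is omitted.

```python
def dot(a, b):
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def sae_forward(x, W_enc, b_enc, W_dec, b_dec):
    """Encode an activation into non-negative features, then reconstruct it."""
    features = [max(0.0, dot(row, x) + b) for row, b in zip(W_enc, b_enc)]
    reconstruction = [
        b_dec[i] + sum(f * W_dec[j][i] for j, f in enumerate(features))
        for i in range(len(x))
    ]
    return features, reconstruction


def sae_loss(x, features, reconstruction, l1_coeff):
    """Mean squared reconstruction error plus an L1 penalty on the features."""
    mse = sum((a - r) ** 2 for a, r in zip(x, reconstruction)) / len(x)
    sparsity = sum(abs(f) for f in features)
    return mse + l1_coeff * sparsity


# Hypothetical overcomplete dictionary: 4 features for a 2-d activation,
# one feature per signed axis direction (+x, -x, +y, -y).
W_enc = [[1.0, 0.0], [-1.0, 0.0], [0.0, 1.0], [0.0, -1.0]]
b_enc = [0.0, 0.0, 0.0, 0.0]
W_dec = [[1.0, 0.0], [-1.0, 0.0], [0.0, 1.0], [0.0, -1.0]]
b_dec = [0.0, 0.0]

activation = [2.0, -0.5]
features, reconstruction = sae_forward(activation, W_enc, b_enc, W_dec, b_dec)
loss = sae_loss(activation, features, reconstruction, l1_coeff=0.1)
active = sum(1 for f in features if f > 0)
print(features, reconstruction, active, loss)
```

In this example only two of the four features are active and the input is reconstructed exactly, so the loss consists entirely of the sparsity term. In practice the encoder and decoder weights are learned by minimizing this kind of loss over many activations.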

Features and circuits

A circuit in a neural network is composed of causal chains of feature activations. By mapping out which circuits lead to which downstream consequences, and by activating and inhibiting those circuits, one can analyze how a neural network (such as an LLM) reaches a given result from a given input.[15]
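The idea of activating and inhibiting parts of a circuit can be sketched with a toy feature graph. The node names, edge weights, and the `run` helper below are hypothetical; real circuits are extracted from trained models rather than written by hand. Each feature is computed as a ReLU of a weighted sum of its upstream features, and the `clamp` argument forces a feature to a chosen value so the downstream effect can be observed.

```python
# Hypothetical feature graph: target -> list of (source, weight) edges.
EDGES = {
    "feature_b": [("input_a", 1.0)],
    "feature_c": [("input_a", 0.5), ("feature_b", 1.0)],
    "output": [("feature_c", 2.0)],
}
# Evaluation order in which every source is computed before its targets.
ORDER = ["feature_b", "feature_c", "output"]


def run(inputs, clamp=None):
    """Propagate activations through the graph, forcing any clamped features."""
    clamp = clamp or {}
    acts = dict(inputs)
    for node in ORDER:
        if node in clamp:
            acts[node] = clamp[node]  # intervention: override this feature
        else:
            total = sum(acts[src] * weight for src, weight in EDGES[node])
            acts[node] = max(0.0, total)
    return acts


inputs = {"input_a": 1.0}
baseline = run(inputs)["output"]
inhibited = run(inputs, clamp={"feature_b": 0.0})["output"]   # switch feature_b off
amplified = run(inputs, clamp={"feature_b": 2.0})["output"]   # drive feature_b harder
print(baseline, inhibited, amplified)
```

Here the output is 3.0 at baseline, 1.0 with `feature_b` inhibited, and 5.0 with it amplified. This indicates that part of the path from `input_a` to the output runs through `feature_b`, while the rest reaches `feature_c` directly.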

References

- Saphra, Naomi; Wiegreffe, Sarah (2024). "Mechanistic?". BlackboxNLP workshop. https://aclanthology.org/2024.blackboxnlp-1.30.pdf.

- Olah, Chris; Cammarata, Nick; Schubert, Ludwig; Goh, Gabriel; Petrov, Michael; Carter, Shan (2020-03-10). "Zoom In: An Introduction to Circuits". Distill 5 (3). doi:10.23915/distill.00024.001. ISSN 2476-0757. https://distill.pub/2020/circuits/zoom-in.

- Olah, Chris; Satyanarayan, Arvind; Johnson, Ian; Carter, Shan; Schubert, Ludwig; Ye, Katherine; Mordvintsev, Alexander (2018-03-06). "The Building Blocks of Interpretability". Distill 3 (3). doi:10.23915/distill.00010. ISSN 2476-0757. https://distill.pub/2018/building-blocks.

- Olah, Chris; Cammarata, Nick; Schubert, Ludwig; Goh, Gabriel; Petrov, Michael; Carter, Shan (2020-04-01). "An Overview of Early Vision in InceptionV1". Distill 5 (4). doi:10.23915/distill.00024.002. ISSN 2476-0757. https://distill.pub/2020/circuits/early-vision.

- Conmy, Arthur; Mavor-Parker, Augustine N.; Lynch, Aengus; Heimersheim, Stefan; Garriga-Alonso, Adrià (2023-12-10). "Towards automated circuit discovery for mechanistic interpretability". Red Hook, New York: Curran Associates Inc. pp. 16318–16352. https://dl.acm.org/doi/10.5555/3666122.3666841.

- Levy, Steven (2025-10-27). "Why AI Breaks Bad". Wired. ISSN 1059-1028. https://www.wired.com/story/ai-black-box-interpretability-problem/.

- Kästner, Lena; Crook, Barnaby (2024-10-11). "Explaining AI through mechanistic interpretability". European Journal for Philosophy of Science 14 (4). doi:10.1007/s13194-024-00614-4. ISSN 1879-4920.

- Mikolov, Tomas; Yih, Wen-tau; Zweig, Geoffrey (June 2013). "Linguistic Regularities in Continuous Space Word Representations". In Vanderwende, Lucy; Daumé III, Hal; Kirchhoff, Katrin. Atlanta, Georgia: Association for Computational Linguistics. pp. 746–751. https://aclanthology.org/N13-1090/.

- Park, Kiho; Choe, Yo Joong; Veitch, Victor (2024-07-21). "The linear representation hypothesis and the geometry of large language models". 235. Vienna, Austria. pp. 39643–39666. https://dl.acm.org/doi/10.5555/3692070.3693675.

- Vig, Jesse; Gehrmann, Sebastian; Belinkov, Yonatan; Qian, Sharon; Nevo, Daniel; Singer, Yaron; Shieber, Stuart (2020-12-06). "Investigating gender bias in language models using causal mediation analysis". Red Hook, New York: Curran Associates Inc. pp. 12388–12401. ISBN 978-1-7138-2954-6. https://dl.acm.org/doi/10.5555/3495724.3496763.

- Sullivan, Mark (2025-04-22). "This startup wants to reprogram the mind of AI—and just got $50 million to do it". Fast Company. https://www.fastcompany.com/91320043/this-startup-wants-to-reprogram-the-mind-of-ai-and-just-got-50-million-to-do-it.

- "Researchers are figuring out how large language models work". The Economist. 2024-07-11. ISSN 0013-0613. https://www.economist.com/science-and-technology/2024/07/11/researchers-are-figuring-out-how-large-language-models-work.

- Geiger, Atticus; Ibeling, Duligur; Zur, Amir; Chaudhary, Maheep; Chauhan, Sonakshi; Huang, Jing; Arora, Aryaman; Wu, Zhengxuan et al. (2025). "Causal Abstraction: A Theoretical Foundation for Mechanistic Interpretability". Journal of Machine Learning Research 26 (83): 1–64. ISSN 1533-7928. http://jmlr.org/papers/v26/23-0058.html.

- Somvanshi, Shriyank; Islam, Md Monzurul; Rafe, Amir; Tusti, Anannya Ghosh; Chakraborty, Arka; Baitullah, Anika; Chowdhury, Tausif Islam; Alnawmasi, Nawaf et al. (June 2026). "Bridging the Black Box: A Survey on Mechanistic Interpretability in AI". ACM Computing Surveys 58 (8). doi:10.1145/3787104. ISSN 0360-0300.

- Lindsey, Jack; Gurnee, Wes; Ameisen, Emmanuel (2025-03-27). "On the Biology of a Large Language Model". Anthropic. https://transformer-circuits.pub/2025/attribution-graphs/biology.html.

Further reading

- Nanda, Neel (2023). "Emergent Linear Representations in World Models of Self-Supervised Sequence Models". BlackboxNLP Workshop: 16–30. doi:10.18653/v1/2023.blackboxnlp-1.2. https://aclanthology.org/2023.blackboxnlp-1.2/.
