Relevance vector machine

| Machine learning and data mining |

|---|

|

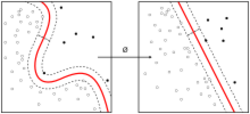

In mathematics, a relevance vector machine (RVM) is a machine learning technique that uses Bayesian inference to obtain parsimonious solutions for regression and probabilistic classification.[1] A greedy optimisation procedure, and with it a faster version, was subsequently developed.[2][3] The RVM has an identical functional form to the support vector machine, but provides probabilistic classification.

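It is equivalent to a Gaussian process model with covariance function:

$$k(\mathbf{x}, \mathbf{x}') = \sum_{j=1}^{N} \frac{1}{\alpha_j} \varphi(\mathbf{x}, \mathbf{x}_j) \varphi(\mathbf{x}', \mathbf{x}_j)$$

where $\varphi$ is the kernel function (usually Gaussian), $\alpha_j^{-1}$ are the variances of the prior on the weight vector $\mathbf{w} \sim N(0, \operatorname{diag}(\alpha_1^{-1}, \ldots, \alpha_N^{-1}))$, and $\mathbf{x}_1, \ldots, \mathbf{x}_N$ are the input vectors of the training set.[4]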
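The covariance function of the equivalent Gaussian process can be evaluated directly. The following is a minimal NumPy sketch, assuming the form $k(\mathbf{x}, \mathbf{x}') = \sum_j \varphi(\mathbf{x}, \mathbf{x}_j) \varphi(\mathbf{x}', \mathbf{x}_j) / \alpha_j$ with a Gaussian basis function and $\alpha_j$ taken as the prior precision of weight $j$. The function names, the `length_scale` parameter and the example values are illustrative assumptions, not part of any particular library.

```python
import numpy as np

def gaussian_kernel(a, b, length_scale=1.0):
    """Gaussian basis function phi(a_i, b_j) for every pair of rows in a and b."""
    a = np.atleast_2d(a)
    b = np.atleast_2d(b)
    sq_dist = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-sq_dist / (2.0 * length_scale ** 2))

def rvm_covariance(x, x_prime, x_train, alpha, length_scale=1.0):
    """k(x, x') = sum_j phi(x, x_j) * phi(x', x_j) / alpha_j."""
    phi_x = gaussian_kernel(x, x_train, length_scale)         # shape (n, N)
    phi_xp = gaussian_kernel(x_prime, x_train, length_scale)  # shape (m, N)
    # Dividing by alpha scales column j (basis function j) by 1 / alpha_j.
    return (phi_x / alpha) @ phi_xp.T

# Hypothetical data: five 2-D training inputs and their prior precisions.
rng = np.random.default_rng(0)
x_train = rng.normal(size=(5, 2))
alpha = np.array([1.0, 2.0, 0.5, 1e6, 4.0])  # a very large alpha_j nearly removes basis j
K = rvm_covariance(x_train, x_train, x_train, alpha)
```

Because each term is weighted by $1/\alpha_j$, a basis function whose $\alpha_j$ grows very large contributes almost nothing to the covariance, which is how the sparsity of the model shows up in this view.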

Compared to support vector machines (SVM), the Bayesian formulation of the RVM avoids the SVM's free parameters (which usually require cross-validation-based post-optimization). However, RVMs use an expectation maximization (EM)-like learning method and are therefore at risk of converging to local optima. This is unlike the standard sequential minimal optimization (SMO)-based algorithms employed by SVMs, which are guaranteed to find a global optimum (of the convex problem).
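To make the EM-like learning method concrete, the following is a minimal sketch for the regression case, assuming Gaussian noise and plain EM updates of the per-weight prior precisions `alpha` and the noise precision `beta`. It is not the faster greedy procedure mentioned earlier. The function name `fit_rvm_regression`, the cap `alpha_cap`, the initial values and the synthetic data are all illustrative assumptions.

```python
import numpy as np

def fit_rvm_regression(Phi, t, n_iter=200, alpha_cap=1e9):
    """Sketch of EM updates for sparse Bayesian regression.

    Phi: (N, M) design matrix of basis-function values, t: (N,) targets.
    """
    N, M = Phi.shape
    alpha = np.ones(M)       # one prior precision per weight (assumed start)
    beta = 1.0 / np.var(t)   # noise precision, crude initial guess
    for _ in range(n_iter):
        # E-step: Gaussian posterior over the weights for fixed alpha, beta.
        Sigma = np.linalg.inv(np.diag(alpha) + beta * Phi.T @ Phi)
        mu = beta * Sigma @ Phi.T @ t
        # M-step: re-estimate the hyperparameters from posterior moments.
        # The cap only keeps the matrix inverse numerically stable.
        alpha = np.minimum(1.0 / (mu ** 2 + np.diag(Sigma)), alpha_cap)
        resid = t - Phi @ mu
        beta = N / (resid @ resid + np.trace(Phi @ Sigma @ Phi.T))
    return mu, Sigma, alpha, beta

# Hypothetical sparse problem: only weights 0 and 3 are non-zero.
rng = np.random.default_rng(0)
Phi = rng.normal(size=(50, 5))
w_true = np.array([2.0, 0.0, 0.0, -3.0, 0.0])
t = Phi @ w_true + 0.1 * rng.normal(size=50)
mu, Sigma, alpha, beta = fit_rvm_regression(Phi, t)
```

Weights whose `alpha` becomes large are shrunk towards zero, which gives the parsimonious solution. The objective is not convex in `alpha`, so the result can depend on the starting values, unlike the convex problem solved for an SVM.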

The relevance vector machine was patented in the United States by Microsoft (patent expired September 4, 2019).[5]

See also

- Kernel trick

- Platt scaling: turns an SVM into a probability model

References

1. Tipping, Michael E. (2001). "Sparse Bayesian Learning and the Relevance Vector Machine". Journal of Machine Learning Research 1: 211–244. http://jmlr.csail.mit.edu/papers/v1/tipping01a.html.

2. Tipping, Michael; Faul, Anita (2003). "Fast Marginal Likelihood Maximisation for Sparse Bayesian Models". Proceedings of the Ninth International Workshop on Artificial Intelligence and Statistics: 276–283. https://proceedings.mlr.press/r4/tipping03a.html. Retrieved 21 November 2024.

3. Faul, Anita; Tipping, Michael (2001). "Analysis of Sparse Bayesian Learning". Advances in Neural Information Processing Systems. https://proceedings.neurips.cc/paper_files/paper/2001/file/02b1be0d48924c327124732726097157-Paper.pdf. Retrieved 21 November 2024.

4. Candela, Joaquin Quiñonero (2004). "Sparse Probabilistic Linear Models and the RVM". Learning with Uncertainty - Gaussian Processes and Relevance Vector Machines (Ph.D. thesis). Technical University of Denmark. Retrieved April 22, 2016.

5. Tipping, Michael E. "Relevance vector machine". US patent 6633857.
