3D user interaction

In computing, 3D interaction is a form of human-machine interaction in which users move and act in 3D space. Both the human and the machine process information in which the physical position of elements in 3D space is relevant.

The 3D space used for interaction can be the real physical space, a virtual space simulated on the computer, or a combination of both. When the real physical space is used for data input, the human interacts with the machine through an input device that detects, among other things, the 3D position of the human's actions. When it is used for data output, the simulated 3D virtual scene is projected onto the real environment through an output device.

The principles of 3D interaction are applied in a variety of domains such as tourism, art, gaming, simulation, education, information visualization, or scientific visualization.[1]

History

Research in 3D interaction and 3D display began in the 1960s, pioneered by researchers such as Ivan Sutherland, Fred Brooks, Bob Sproull, Andrew Ortony and Richard Feldman. An early milestone came in 1962, when Morton Heilig invented the Sensorama simulator.[2] It provided 3D video feedback, as well as motion, audio, and other sensory feedback, to produce a virtual environment. The next stage of development was Ivan Sutherland's completion in 1968 of his pioneering work, the Sword of Damocles.[3] He created a head-mounted display that produced a 3D virtual environment by presenting a left and a right still image of that environment.

Limited availability of technology and impractical costs held back the development and application of virtual environments until the 1980s, and applications were limited to military ventures in the United States. Since then, further research and technological advances have opened up applications in other areas such as education, entertainment, and manufacturing.

Background

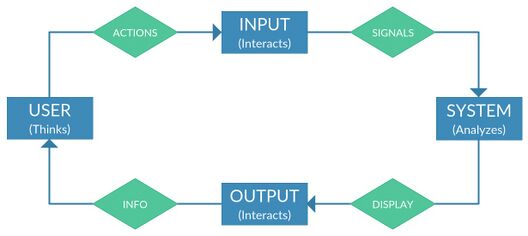

In 3D interaction, users carry out their tasks and perform functions by exchanging information with computer systems in 3D space. It is an intuitive type of interaction because humans interact in three dimensions in the real world. The tasks that users perform have been classified as selection and manipulation of objects in virtual space, navigation, and system control. Tasks are performed in virtual space through interaction techniques and by using interaction devices. 3D interaction techniques are classified according to the task group they support: techniques that support navigation tasks are navigation techniques, techniques that support object selection and manipulation are selection and manipulation techniques, and system control techniques support tasks that have to do with controlling the application itself. For the system to be usable and effective, the mapping between techniques and interaction devices must be consistent and efficient.

Interfaces associated with 3D interaction are called 3D interfaces. Like other types of user interfaces, they involve two-way communication between users and the system, but they also allow users to perform actions in 3D space. Input devices let users give directions and commands to the system, while output devices allow the machine to present information back to them.

3D interfaces have been used in applications that feature virtual environments, and augmented and mixed realities. In virtual environments, users may interact directly with the environment or use tools with specific functionalities to do so. 3D interaction occurs when physical tools are controlled in a 3D spatial context to control a corresponding virtual tool.

Users experience a sense of presence when engaged in an immersive virtual world. Enabling users to interact with this world in 3D allows them to make use of their natural and intrinsic knowledge of how information exchange takes place with physical objects in the real world. Texture, sound, and speech can all be used to augment 3D interaction. Currently, users still have difficulty interpreting 3D space visuals and understanding how interaction occurs. Although moving around in a three-dimensional world is natural for humans, the difficulty exists because many of the cues present in real environments are missing from virtual environments. Perspective and occlusion are the primary depth cues used by humans. Also, even though scenes in virtual space appear three-dimensional, they are still displayed on a 2D surface, so some inconsistencies in depth perception will still exist.

3D user interfaces

User interfaces are the means for communication between users and systems. 3D interfaces include media for 3D representation of system state, and media for 3D user input or manipulation. Using 3D representations is not enough to create 3D interaction. The users must have a way of performing actions in 3D as well. To that effect, special input and output devices have been developed to support this type of interaction. Some, such as the 3D mouse, were developed based on existing devices for 2D interaction.

3D user interfaces are user interfaces in which 3D interaction takes place, meaning that the user's tasks occur directly within a three-dimensional space. The user must communicate commands, requests, questions, intent, and goals to the system, and the system in turn has to provide feedback, requests for input, information about its status, and so on.

The user and the system do not share the same type of language, so to make communication possible, the interfaces must serve as intermediaries or translators between them.

The way the user transforms perceptions into actions is called the human transfer function, and the way the system transforms signals into display information is called the system transfer function. In practice, 3D user interfaces are physical devices that connect the user and the system with minimum delay. There are two types: 3D user interface output hardware and 3D user interface input hardware.

3D user interface output hardware

Output devices, also called display devices, allow the machine to provide information or feedback to one or more users through the human perceptual system. Most of them are focused on stimulating the visual, auditory, or haptic senses. However, in some unusual cases they also can stimulate the user's olfactory system.

3D visual displays

Devices of this type are the most popular. Their goal is to present the information produced by the system through the human visual system in a three-dimensional way. The main features that distinguish these devices are field of regard and field of view, spatial resolution, screen geometry, light transfer mechanism, refresh rate, and ergonomics.

Another way to characterize these devices is by the categories of depth perception cues they use to help the user understand the three-dimensional information. The main types of displays used in 3D user interfaces are monitors, surround-screen displays, workbenches, hemispherical displays, head-mounted displays, arm-mounted displays, and autostereoscopic displays. Virtual reality headsets and CAVEs (Cave Automatic Virtual Environment) are examples of fully immersive visual displays, where the user can see only the virtual world and not the real world. Semi-immersive displays allow users to see both. Monitors and workbenches are examples of semi-immersive displays.

3D audio displays

3D audio displays are devices that present information (in this case sound) through the human auditory system, which is especially useful for supplying location and spatial information to users. Their objective is to generate and display spatialized 3D sound so that users can apply their psychoacoustic skills to determine the location and direction of the sound. There are different localization cues: binaural cues, spectral and dynamic cues, head-related transfer functions, reverberation, sound intensity, and vision and environment familiarity. Adding a background audio component to a display also adds to the sense of realism.
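As an illustration of one binaural cue, the interaural time difference (ITD) is the delay between a sound reaching the nearer and the farther ear. The sketch below uses Woodworth's spherical-head approximation, which is not taken from this article; the head radius and speed of sound are assumed typical values, and the function name is hypothetical.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s in air at about 20 degrees C (assumed)
HEAD_RADIUS = 0.0875    # m, a commonly assumed average head radius


def interaural_time_difference(azimuth_deg, head_radius=HEAD_RADIUS,
                               speed_of_sound=SPEED_OF_SOUND):
    """Approximate ITD in seconds for a distant source.

    Uses Woodworth's spherical-head formula, ITD = (r / c) * (theta + sin(theta)),
    intended for azimuths between 0 (straight ahead) and 90 degrees (to the side).
    """
    theta = math.radians(azimuth_deg)
    return (head_radius / speed_of_sound) * (theta + math.sin(theta))


if __name__ == "__main__":
    for azimuth in (0, 30, 60, 90):
        itd_us = interaural_time_difference(azimuth) * 1e6
        print(f"azimuth {azimuth:2d} deg: ITD ~ {itd_us:.0f} microseconds")
```

A 3D audio display could apply such a delay between the left and right channels, typically together with level differences and head-related filtering, to suggest the direction of a source.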

3D haptic displays

These devices use the sense of touch to simulate the physical interaction between the user and a virtual object. There are three types of 3D haptic displays: those that provide the user with a sense of force, those that simulate the sense of touch, and those that do both. The main features that distinguish these devices are haptic presentation capability, resolution, and ergonomics. The human haptic system has two fundamental kinds of cues, tactile and kinesthetic. Tactile cues come from a wide variety of receptors located below the surface of the skin that provide information about texture, temperature, pressure, and damage. Kinesthetic cues come from receptors in the muscles, joints, and tendons that provide information about the angle of joints and the stress and length of muscles.

3D user interface input hardware

These hardware devices are called input devices, and their aim is to capture and interpret the actions performed by the user. The number of degrees of freedom (DOF) is one of the main features of these systems. Classical interface components (such as mice, keyboards, and arguably touchscreens) are often inappropriate for interaction needs that go beyond 2D.[1] These systems are also differentiated by how much physical interaction is needed to use the device: purely active devices need to be manipulated to produce information, while purely passive devices do not. The main categories of these devices are standard (desktop) input devices, tracking devices, control devices, navigation equipment, gesture interfaces, 3D mice, and brain–computer interfaces.

Desktop input devices

Devices of this type are designed for 3D interaction on a desktop. Many of them were originally designed for traditional two-dimensional interaction, but with an appropriate mapping between the system and the device, they can work well in three dimensions. They include keyboards, 2D mice and trackballs, pen-based tablets and styluses, and joysticks. Nonetheless, many studies have questioned the appropriateness of desktop interface components for 3D interaction,[1][4][5] though this is still debated.[6][7]

Tracking devices

3D user interaction systems are based primarily on motion tracking technologies, which obtain all the necessary information from users by analyzing their movements or gestures.

Trackers detect or monitor head, hand, or body movements and send that information to the computer. The computer then translates it and ensures that position and orientation are reflected accurately in the virtual world. Tracking is important for presenting the correct viewpoint and for coordinating the spatial and sound information presented to users, as well as the tasks or functions that they can perform. 3D trackers have been identified as mechanical, magnetic, ultrasonic, optical, and hybrid inertial. Examples of trackers include motion trackers, eye trackers, and data gloves.

A simple 2D mouse may be considered a navigation device if it allows the user to move to a different location in a virtual 3D space. Navigation devices such as the treadmill and bicycle make use of the natural ways that humans travel in the real world. Treadmills simulate walking or running, and bicycles or similar equipment simulate vehicular travel. In the case of navigation devices, the information passed on to the machine is the user's location and movements in virtual space. Wired gloves and bodysuits allow gestural interaction to occur. They use sensors to send hand or body position and movement information to the computer.

Developing a complete 3D user interaction system requires access to a few basic parameters that the system should know, at least partially: the user's relative position, absolute position, angular velocity, rotation data, orientation, and height. These data are collected by spatial tracking systems and sensors of many kinds, using a variety of techniques. The ideal system for this type of interaction tracks position with six degrees of freedom (6-DOF). Such systems can obtain the absolute 3D position of the user and therefore capture orientation about all three axes.

These systems can be implemented with various technologies, such as electromagnetic, optical, or ultrasonic tracking, but all share one main limitation: they need a fixed external reference, whether a base station, an array of cameras, or a set of visible markers, so they can only be used in prepared areas. Inertial tracking systems do not require an external reference. They collect data using accelerometers, gyroscopes, or video cameras, and in most cases no fixed reference is mandatory. Their main problem is that they cannot obtain an absolute position: because they do not start from any pre-set external reference point, they only ever measure the user's relative position, which causes errors to accumulate as the data are sampled. The goal for a 3D tracking system is a 6-DOF system that obtains absolute position as well as precise movement and orientation, with very high precision and over a very large uninterrupted space. A rough approximation is a mobile phone, which has all the motion capture sensors as well as GPS positioning, but such systems cannot currently capture data with centimeter precision and are therefore unsuitable.
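The cumulative error of inertial tracking can be illustrated with a small simulation. The sketch below is a one-dimensional toy model under stated assumptions: the device is perfectly still, the accelerometer reports a small constant bias (the value used is illustrative, not a measured figure), and position is obtained by integrating acceleration twice. The function names are hypothetical.

```python
def integrate_position(accelerations, dt):
    """Dead reckoning in one dimension: integrate acceleration twice."""
    velocity = 0.0
    position = 0.0
    for a in accelerations:
        velocity += a * dt          # first integration: acceleration -> velocity
        position += velocity * dt   # second integration: velocity -> position
    return position


def simulate_drift(bias, duration_s, rate_hz=100):
    """Position error (m) of a stationary sensor with a constant bias (m/s^2)."""
    samples = int(duration_s * rate_hz)
    dt = 1.0 / rate_hz
    # The true acceleration is zero, so every reading is pure bias.
    return integrate_position([bias] * samples, dt)


if __name__ == "__main__":
    for seconds in (1, 10, 60):
        error = simulate_drift(bias=0.01, duration_s=seconds)
        print(f"after {seconds:2d} s: position error ~ {error:.3f} m")
```

Because the error grows roughly with the square of elapsed time, purely inertial systems are usually corrected periodically against some absolute reference.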

However, several systems come close to these objectives. What distinguishes them is that they are self-contained, i.e., all-in-one, and do not require a fixed prior reference. These systems are as follows:

Nintendo Wii Remote ("Wiimote")

The Wii Remote does not offer 6-DOF tracking because, again, it cannot provide absolute position. However, it is equipped with a multitude of sensors that turn a 2D device into a capable tool for interaction in 3D environments.

The device has gyroscopes to detect rotation of the user, ADXL330 accelerometers to obtain the speed and movement of the hands, optical sensors to determine orientation, and electronic compasses and infrared devices to capture position.

This type of device can be affected by external infrared sources such as light bulbs or candles, which cause errors in the measured position.

Google Tango Devices

The Tango Platform is an augmented reality computing platform developed by Advanced Technology and Projects (ATAP), a skunkworks division of Google. It uses computer vision and internal sensors (such as gyroscopes) to enable mobile devices, such as smartphones and tablets, to detect their position relative to the world around them without using GPS or other external signals. It can therefore be used to provide 6-DOF input, which can also be combined with its multi-touch screen.[8] The Google Tango devices can be seen as more integrated solutions than the early prototypes that combined spatially tracked devices with touch-enabled screens for 3D environments.[9][10][11]

Microsoft Kinect

The Microsoft Kinect offers a different motion capture technology for tracking.

Instead of relying on worn or handheld sensors, it is based on a structured light scanner housed in a bar, which tracks the entire body by detecting about 20 spatial points. For each point, 3 degrees of freedom are measured to obtain its position, velocity, and rotation.

Its main advantages are ease of use and the fact that the user does not need to wear or hold an external device. Its main disadvantage is its inability to detect the orientation of the user, which limits certain spatial and guidance functions.

Leap Motion

The Leap Motion is a hand-tracking system designed for small spaces. It enables a new kind of interaction in 3D environments for desktop applications, offering great fluidity when browsing three-dimensional environments in a realistic way.

It is a small device that connects to a computer via USB and uses two cameras with infrared LEDs to analyze a roughly hemispherical area extending about 1 meter above its surface, recording at around 300 frames per second. The information is sent to the computer, where it is processed by the company's own software.

3D Interaction Techniques

3D interaction techniques are the different ways in which the user can interact with the 3D virtual environment to carry out different kinds of tasks. The quality of these techniques has a profound effect on the quality of the entire 3D user interface. They can be classified into three groups: navigation, selection and manipulation, and system control.

Navigation

The computer needs to provide the user with information regarding location and movement. Navigation is the task users perform most often in large 3D environments, and it presents several challenges, such as supporting spatial awareness, providing efficient movement between distant places, and making navigation bearable so the user can focus on more important tasks. Navigation tasks can be divided into two components: travel and wayfinding. Travel involves moving from the current location to the desired point. Wayfinding refers to finding and setting routes to get to a travel goal within the virtual environment.

Travel

Travel is the movement of the viewpoint (virtual eye, virtual camera) from one location to another. In immersive virtual environments, the orientation of the viewpoint is usually handled by head tracking. There are five types of travel interaction techniques:

- Physical movement: uses the user's body motion to move through the virtual environment. This technique is appropriate when an enhanced feeling of being present is required, or when the simulation requires physical effort from the user.

- Manual viewpoint manipulation: the user's hand movements determine the action in the virtual environment. One example is when the user moves their hands as if grabbing a virtual rope and then pulls themself up. This technique can be easy to learn and efficient, but can cause fatigue.

- Steering: the user has to constantly indicate in what direction to move. This is a common and efficient technique. One example is gaze-directed steering, where the head orientation determines the direction of travel.

- Target-based travel: the user specifies a destination point, and the viewpoint moves to the new location. The travel can be executed by teleportation, where the user is instantly moved to the destination point, or the system can execute a sequence of transition movements to the destination. These techniques are very simple from the user's point of view, because the user only has to indicate the destination.

- Route planning: the user specifies the path that should be taken through the environment, and the system executes the movement. The user may draw a path on a map. This technique allows users to control travel while remaining free to do other tasks during motion.
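As a rough illustration of the steering technique, gaze-directed steering can be sketched as a per-frame update that moves the viewpoint along the direction the head is facing. The coordinate convention (y up, yaw 0 looking along the negative z axis), the function names, and the parameter values are assumptions made for this example.

```python
import math


def gaze_direction(yaw_deg, pitch_deg):
    """Unit vector for a head orientation given as yaw and pitch in degrees.

    Assumed convention: y is up, and yaw 0 / pitch 0 looks along -z.
    """
    yaw = math.radians(yaw_deg)
    pitch = math.radians(pitch_deg)
    return (
        math.sin(yaw) * math.cos(pitch),
        math.sin(pitch),
        -math.cos(yaw) * math.cos(pitch),
    )


def steer(position, yaw_deg, pitch_deg, speed, dt):
    """Advance the viewpoint by one frame along the current gaze direction."""
    direction = gaze_direction(yaw_deg, pitch_deg)
    return tuple(p + speed * dt * d for p, d in zip(position, direction))


if __name__ == "__main__":
    viewpoint = (0.0, 1.7, 0.0)  # eye height of 1.7 m is an assumed value
    for _ in range(3):           # three frames looking 90 degrees to the right
        viewpoint = steer(viewpoint, yaw_deg=90, pitch_deg=0, speed=2.0, dt=0.5)
    print(viewpoint)
```

In a real system the yaw and pitch would come from the head tracker each frame, and a button or gesture would typically start and stop the motion.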

Wayfinding

Wayfinding is the cognitive process of defining a route through the surrounding environment, using and acquiring spatial knowledge to construct a cognitive map of the environment. In virtual space it is different from, and more difficult than, wayfinding in the real world, because synthetic environments are often missing perceptual cues and movement constraints. It can be supported using user-centered techniques, such as a larger field of view and motion cues, or environment-centered techniques, such as structural organization and wayfinding principles.

Because of these constraints of the virtual world, users should receive wayfinding support while traveling through the virtual environment.

This support can be user-centered, such as a large field of view or non-visual aids such as audio, or environment-centered, such as artificial cues and a structural organization that clearly defines the different parts of the environment. Some of the most used artificial cues are maps, compasses, and grids, as well as architectural cues such as lighting, color, and texture.

Selection and Manipulation

Selection and Manipulation techniques for 3D environments must accomplish at least one of three basic tasks: object selection, object positioning and object rotation.

Users need to be able to manipulate virtual objects. Manipulation tasks involve selecting and moving an object. Sometimes, the rotation of the object is involved as well. Direct-hand manipulation is the most natural technique because manipulating physical objects with the hand is intuitive for humans. However, this is not always possible. A virtual hand that can select and re-locate virtual objects will work as well.

3D widgets can be used to put controls on objects. These are usually called 3D gizmos or manipulators (those in Blender are a good example). Users can employ them to re-locate, re-scale, or re-orient an object (translate, scale, rotate).

Other techniques include the Go-Go technique and ray casting, where a virtual ray is used to point to and select an object.

Selection

Selecting objects or 3D volumes in a 3D environment requires first finding the desired target and then selecting it. Most 3D datasets and environments are affected by occlusion problems,[12] so the first step of finding the target relies on manipulating the viewpoint or the 3D data itself in order to properly identify the object or volume of interest. This initial step is therefore tightly coupled with manipulation in 3D. Once the target is visually identified, users have access to a variety of techniques to select it.

Usually, the system provides the user with a 3D cursor represented as a human hand whose movements correspond to the motion of the hand tracker. This virtual hand technique[13] is rather intuitive because it simulates real-world interaction with objects, but it is limited to objects within the user's reach.

To overcome this limit, many techniques have been suggested, such as the Go-Go technique.[14] This technique allows the user to extend the reach area using a non-linear mapping of the hand: when the user extends the hand beyond a fixed threshold distance, the mapping becomes non-linear and the virtual arm grows.
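The non-linear mapping can be sketched as a function from the real hand distance to the virtual hand distance, both measured from the user's body. The sketch follows the form commonly given for Go-Go (one-to-one below the threshold, with a quadratic term added beyond it); the function name, the threshold, and the coefficient `k` are illustrative assumptions rather than values from this article.

```python
def go_go_reach(real_distance, threshold, k):
    """Map the real hand distance to the virtual hand distance.

    Below `threshold` the mapping is one-to-one; beyond it, the virtual
    hand moves away faster than the real hand by a quadratic term.
    """
    if real_distance < threshold:
        return real_distance
    return real_distance + k * (real_distance - threshold) ** 2


if __name__ == "__main__":
    THRESHOLD = 0.4  # metres; an assumed fraction of the user's arm length
    K = 10.0         # assumed gain controlling how fast the virtual arm grows
    for real in (0.2, 0.4, 0.5, 0.6):
        virtual = go_go_reach(real, THRESHOLD, K)
        print(f"real {real:.1f} m -> virtual {virtual:.2f} m")
```

The mapping is continuous at the threshold, so the virtual hand does not jump when the user's hand crosses it.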

Another technique for selecting and manipulating objects in 3D virtual spaces consists of pointing at objects using a virtual ray emanating from the virtual hand.[15] When the ray intersects an object, that object can be manipulated. Several variations of this technique have been developed, such as the aperture technique, which uses a conic pointer originating at the user's eyes, whose position is estimated from the head location, to select distant objects. This technique also uses a hand sensor to adjust the size of the conic pointer.
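A minimal sketch of ray-based selection follows. It assumes, purely for illustration, that each selectable object is approximated by a bounding sphere and that the object hit nearest to the hand is the one selected; the function names and scene contents are hypothetical.

```python
import math


def ray_sphere_hit(origin, direction, center, radius):
    """Distance along a unit-length ray to a sphere, or None if it misses."""
    to_center = [c - o for o, c in zip(origin, center)]
    # Distance along the ray to the point closest to the sphere center.
    t_closest = sum(a * b for a, b in zip(to_center, direction))
    # Squared distance from the sphere center to the ray's supporting line.
    d2 = sum(a * a for a in to_center) - t_closest ** 2
    if d2 > radius ** 2:
        return None
    half_chord = math.sqrt(radius ** 2 - d2)
    t = t_closest - half_chord
    if t < 0:                       # ray origin is inside the sphere
        t = t_closest + half_chord
    return t if t >= 0 else None    # sphere entirely behind the ray


def pick(origin, direction, objects):
    """Return the name of the nearest object hit by the ray, or None."""
    length = math.sqrt(sum(d * d for d in direction))
    unit = [d / length for d in direction]
    best = None
    for name, center, radius in objects:
        t = ray_sphere_hit(origin, unit, center, radius)
        if t is not None and (best is None or t < best[1]):
            best = (name, t)
    return best[0] if best else None


if __name__ == "__main__":
    scene = [
        ("far", (0.0, 0.0, -10.0), 1.0),
        ("near", (0.0, 0.0, -5.0), 1.0),
        ("side", (5.0, 0.0, -5.0), 1.0),
    ]
    hand = (0.0, 0.0, 0.0)
    print(pick(hand, (0.0, 0.0, -1.0), scene))
```

Picking the nearest hit is one way to handle several objects along the same ray; the occluded ones remain unreachable until the viewpoint or the ray changes.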

Many other techniques, relying on different input strategies, have also been developed.[16][17]

Manipulation

3D manipulation occurs both before a selection task (in order to visually identify a 3D selection target) and after a selection has occurred, to manipulate the selected object. 3D manipulation requires 3 DOF for rotations (1 DOF per axis, namely x, y, z), 3 DOF for translations (1 DOF per axis), and at least 1 additional DOF for uniform zoom (or alternatively 3 additional DOF for non-uniform zoom operations).
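To make the DOF count concrete, the sketch below combines the seven parameters (three translations, three rotations, one uniform scale) into a single 4x4 homogeneous transform. The order of composition (scale, then rotation about x, y, and z, then translation) and all function names are choices made for this example; real applications differ in their conventions.

```python
import math


def identity():
    return [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def translation(tx, ty, tz):
    m = identity()
    m[0][3], m[1][3], m[2][3] = tx, ty, tz
    return m


def uniform_scale(s):
    m = identity()
    m[0][0] = m[1][1] = m[2][2] = s
    return m


def rotation(axis, angle):
    """Rotation by `angle` radians about the x (0), y (1), or z (2) axis."""
    c, s = math.cos(angle), math.sin(angle)
    i, j = (axis + 1) % 3, (axis + 2) % 3
    m = identity()
    m[i][i], m[i][j], m[j][i], m[j][j] = c, -s, s, c
    return m


def compose(tx, ty, tz, rx, ry, rz, scale):
    """Build one transform from 7 DOF: T * Rz * Ry * Rx * S."""
    r = matmul(rotation(2, rz), matmul(rotation(1, ry), rotation(0, rx)))
    return matmul(translation(tx, ty, tz), matmul(r, uniform_scale(scale)))


def transform_point(m, p):
    v = (p[0], p[1], p[2], 1.0)
    return tuple(sum(m[i][k] * v[k] for k in range(4)) for i in range(3))


if __name__ == "__main__":
    m = compose(1.0, 2.0, 3.0, 0.0, 0.0, math.pi / 2, 2.0)
    print(transform_point(m, (1.0, 0.0, 0.0)))
```

In the usage example the point (1, 0, 0) is doubled in size, turned a quarter turn about z, and then moved, which lands it at approximately (1, 4, 3).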

3D manipulation, like navigation, is one of the essential tasks with 3D data, objects, or environments. It is the basis of many widely used 3D software packages (such as Blender, Autodesk products, and VTK). This software, available mostly on desktop computers, is thus almost always combined with a mouse and keyboard. To provide enough DOFs (the mouse only offers 2), it relies on modifier keys to control separately all the DOFs involved in 3D manipulation. With the advent of multi-touch enabled smartphones and tablets, the interaction mappings of this software have been adapted to multi-touch (which offers more simultaneous DOF manipulations than a mouse and keyboard). A survey conducted in 2017 of 36 commercial and academic mobile applications on Android and iOS, however, suggested that most applications did not provide a way to control the minimum 6 DOFs required,[7] but that among those which did, most used a 3D version of the RST (Rotation Scale Translation) mapping: one finger is used for rotation around x and y, while two-finger interaction controls rotation around z and translation along x, y, and z.
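One possible reading of this 3D RST mapping is sketched below. Touch points are 2D screen coordinates; a one-finger drag becomes rotation around x and y, and a two-finger gesture yields rotation around z (from the change in angle between the fingers), translation along x and y (from the movement of their midpoint), and translation along z (from the pinch distance). The gain values and function names are hypothetical, and surveyed applications may differ in the details.

```python
import math


def one_finger_drag(prev, curr, gain=0.01):
    """Map a one-finger drag to rotations (radians) around the x and y axes."""
    dx, dy = curr[0] - prev[0], curr[1] - prev[1]
    return {"rot_x": dy * gain, "rot_y": dx * gain}


def two_finger_gesture(prev_a, prev_b, curr_a, curr_b, depth_gain=0.01):
    """Map a two-finger gesture to rotation around z and translation in x, y, z."""
    angle_prev = math.atan2(prev_b[1] - prev_a[1], prev_b[0] - prev_a[0])
    angle_curr = math.atan2(curr_b[1] - curr_a[1], curr_b[0] - curr_a[0])
    delta = angle_curr - angle_prev
    # Wrap the angle change into the range [-pi, pi].
    rot_z = math.atan2(math.sin(delta), math.cos(delta))

    mid_prev = ((prev_a[0] + prev_b[0]) / 2, (prev_a[1] + prev_b[1]) / 2)
    mid_curr = ((curr_a[0] + curr_b[0]) / 2, (curr_a[1] + curr_b[1]) / 2)

    dist_prev = math.dist(prev_a, prev_b)
    dist_curr = math.dist(curr_a, curr_b)

    return {
        "rot_z": rot_z,
        "trans_x": mid_curr[0] - mid_prev[0],
        "trans_y": mid_curr[1] - mid_prev[1],
        "trans_z": (dist_curr - dist_prev) * depth_gain,  # pinch moves in depth
    }


if __name__ == "__main__":
    print(one_finger_drag((100, 100), (110, 100)))
    print(two_finger_gesture((0, 0), (2, 0), (0, 0), (0, 2)))
```

Together the two gestures cover the six DOFs of rotation and translation; a uniform zoom would need a further gesture or mode.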

System Control

System control techniques allow the user to send commands to an application, activate some functionality, change the interaction (or system) mode, or modify a parameter. Issuing a command always involves selecting an element from a set. System control techniques for three-dimensional interfaces can be categorized into four groups:

- Graphical menus: visual representations of commands.

- Voice commands: menus accessed via voice.

- Gestural interaction: commands accessed via body gestures.

- Tools: virtual objects with an implicit function or mode.

There are also hybrid techniques that combine several of these types.

Symbolic input

This task allows the user to enter or edit symbolic content such as text, making it possible to annotate 3D scenes or 3D objects.

See also

- Finger tracking

- Interaction technique

- Interaction design

- Human–computer interaction

- Cave Automatic Virtual Environment (CAVE)

- Virtual reality

References

- [1] Bowman, Doug A. (2004). 3D User Interfaces: Theory and Practice. Redwood City, CA, USA: Addison Wesley Longman Publishing Co., Inc. ISBN 978-0201758672.

- [2] Heilig, Morton L. "Sensorama simulator". US patent 3050870A, published 1962-08-28.

- [3] Sutherland, I. E. (1968). "A head-mounted three dimensional display". Proceedings of AFIPS 68, pp. 757–764.

- [4] Chen, Michael; Mountford, S. Joy; Sellen, Abigail (1988). "Proceedings of the 15th annual conference on Computer graphics and interactive techniques - SIGGRAPH '88". New York, New York, USA: ACM Press. pp. 121–129. doi:10.1145/54852.378497. ISBN 0-89791-275-6.

- [5] Yu, Lingyun; Svetachov, Pjotr; Isenberg, Petra; Everts, Maarten H.; Isenberg, Tobias (2010-10-28). "FI3D: Direct-Touch Interaction for the Exploration of 3D Scientific Visualization Spaces". IEEE Transactions on Visualization and Computer Graphics 16 (6): 1613–1622. doi:10.1109/TVCG.2010.157. ISSN 1077-2626. PMID 20975204. https://hal.inria.fr/inria-00587377/PDF/Yu_2010_FDT.pdf.

- [6] Terrenghi, Lucia; Kirk, David; Sellen, Abigail; Izadi, Shahram (2007). "Proceedings of the SIGCHI Conference on Human Factors in Computing Systems". New York, New York, USA: ACM Press. pp. 1157–1166. doi:10.1145/1240624.1240799. ISBN 978-1-59593-593-9.

- [7] Besançon, Lonni; Issartel, Paul; Ammi, Mehdi; Isenberg, Tobias (2017). "Proceedings of the 2017 CHI Conference on Human Factors in Computing Systems". New York, New York, USA: ACM Press. pp. 4727–4740. doi:10.1145/3025453.3025863. ISBN 978-1-4503-4655-9.

- [8] Besançon, Lonni; Issartel, Paul; Ammi, Mehdi; Isenberg, Tobias (2017). "Hybrid Tactile/Tangible Interaction for 3D Data Exploration". IEEE Transactions on Visualization and Computer Graphics 23 (1): 881–890. doi:10.1109/tvcg.2016.2599217. ISSN 1077-2626. PMID 27875202. https://hal.inria.fr/hal-01372922/document.

- [9] Fitzmaurice, George W.; Buxton, William (1997). "Proceedings of the ACM SIGCHI Conference on Human factors in computing systems". New York, New York, USA: ACM Press. pp. 43–50. doi:10.1145/258549.258578. ISBN 0-89791-802-9.

- [10] Angus, Ian G.; Sowizral, Henry A. (1995-03-30). "Embedding the 2D interaction metaphor in a real 3D virtual environment". In Fisher, Scott S.; Merritt, John O.; Bolas, Mark T. (eds.). 2409. SPIE. pp. 282–293. doi:10.1117/12.205875.

- [11] Poupyrev, I.; Tomokazu, N.; Weghorst, S. (1998). "Proceedings. IEEE 1998 Virtual Reality Annual International Symposium (Cat. No.98CB36180)". IEEE Comput. Soc. pp. 126–132. doi:10.1109/vrais.1998.658467. ISBN 0-8186-8362-7.

- [12] Shneiderman, B. (1996). "Proceedings 1996 IEEE Symposium on Visual Languages". IEEE Comput. Soc. Press. pp. 336–343. doi:10.1109/vl.1996.545307. ISBN 0-8186-7508-X.

- [13] Poupyrev, I.; Ichikawa, T.; Weghorst, S.; Billinghurst, M. (1998). "Egocentric Object Manipulation in Virtual Environments: Empirical Evaluation of Interaction Techniques". Computer Graphics Forum 17 (3): 41–52. doi:10.1111/1467-8659.00252. ISSN 0167-7055.

- [14] Poupyrev, Ivan; Billinghurst, Mark; Weghorst, Suzanne; Ichikawa, Tadao (1996). "The go-go interaction technique: Non-linear mapping for direct manipulation in VR". Proceedings of the 9th annual ACM symposium on User interface software and technology - UIST '96. pp. 79–80. doi:10.1145/237091.237102. ISBN 978-0897917988. http://www.ivanpoupyrev.com/e-library/1998_1996/uist96.pdf. Retrieved 2018-05-18.

- [15] Mine, Mark R. (1995). Virtual Environment Interaction Techniques (PDF) (Technical report). Department of Computer Science, University of North Carolina.

- [16] Argelaguet, Ferran; Andujar, Carlos (2013). "A survey of 3D object selection techniques for virtual environments". Computers & Graphics 37 (3): 121–136. doi:10.1016/j.cag.2012.12.003. ISSN 0097-8493. https://hal.archives-ouvertes.fr/hal-00907787/file/Manuscript.pdf.

- [17] Besançon, Lonni; Sereno, Mickael; Yu, Lingyun; Ammi, Mehdi; Isenberg, Tobias (2019). "Hybrid Touch/Tangible Spatial 3D Data Selection". Computer Graphics Forum (Wiley) 38 (3): 553–567. doi:10.1111/cgf.13710. ISSN 0167-7055. https://hal.inria.fr/hal-02079308/file/Besancon_2019_HTT.pdf.

Reading list

- 3D Interaction With and From Handheld Computers. Visited March 28, 2008

- Bowman, D., Kruijff, E., LaViola, J., Poupyrev, I. (2001, February). An Introduction to 3-D User Interface Design. Presence, 10(1), 96–108.

- Bowman, D., Kruijff, E., LaViola, J., Poupyrev, I. (2005). 3D User Interfaces: Theory and Practice. Boston: Addison–Wesley.

- Bowman, Doug. 3D User Interfaces. Interaction Design Foundation. Retrieved October 15, 2015

- Burdea, G. C., Coiffet, P. (2003). Virtual Reality Technology (2nd ed.). New Jersey: John Wiley & Sons Inc.

- Carroll, J. M. (2002). Human–Computer Interaction in the New Millennium. New York: ACM Press

- Csisinko, M., Kaufmann, H. (2007, March). Towards a Universal Implementation of 3D User Interaction Techniques [Proceedings of Specification, Authoring, Adaptation of Mixed Reality User Interfaces Workshop, IEEE VR]. Charlotte, NC, USA.

- Fröhlich, B.; Plate, J. (2000). "The Cubic Mouse: A New Device for 3D Input". New York: ACM Press. pp. 526–531. doi:10.1145/332040.332491.

- Interaction Techniques. DLR - Simulations- und Softwaretechnik. Retrieved October 18, 2015

- Keijser, J.; Carpendale, S.; Hancock, M.; Isenberg, T. (2007). "Exploring 3D Interaction in Alternate Control-Display Space Mappings". Los Alamitos, CA: IEEE Computer Society. pp. 526–531. https://innovis.cpsc.ucalgary.ca/innovis/uploads/Publications/Publications/Keijser_2007_E3I.pdf.

- Larijani, L. C. (1993). The Virtual Reality Primer. United States of America: R. R. Donnelley and Sons Company.

- Rhijn, A. van (2006). Configurable Input Devices for 3D Interaction using Optical Tracking. Eindhoven: Technische Universiteit Eindhoven.

- Stuerzlinger, W., Dadgari, D., Oh, J-Y. (2006, April). Reality-Based Object Movement Techniques for 3D. CHI 2006 Workshop: "What is the Next Generation of Human–Computer Interaction?". Workshop presentation.

- The CAVE (CAVE Automatic Virtual Environment). Visited March 28, 2007

- The Java 3-D Enabled CAVE at the Sun Centre of Excellence for Visual Genomics. Visited March 28, 2007

- Vince, J. (1998). Essential Virtual Reality Fast. Great Britain: Springer-Verlag London Limited

- Virtual Reality. Visited March 28, 2007

- Yuan, C., (2005, December). Seamless 3D Interaction in AR – A Vision-Based Approach. In Proceedings of the First International Symposium, ISVC (pp. 321–328). Lake Tahoe, NV, USA: Springer Berlin/ Heidelberg.
