Gram–Schmidt process

In mathematics, particularly linear algebra and numerical analysis, the Gram–Schmidt process or Gram-Schmidt algorithm is a way of finding a set of two or more vectors that are perpendicular to each other.

By technical definition, it is a method of constructing an orthonormal basis from a set of vectors in an inner product space, most commonly the Euclidean space $\mathbb{R}^n$ equipped with the standard inner product. The Gram–Schmidt process takes a finite, linearly independent set of vectors $S = \{\mathbf{v}_1, \ldots, \mathbf{v}_k\}$ for $k \le n$ and generates an orthogonal set $S' = \{\mathbf{u}_1, \ldots, \mathbf{u}_k\}$ that spans the same $k$-dimensional subspace of $\mathbb{R}^n$ as $S$.

The method is named after Jørgen Pedersen Gram and Erhard Schmidt, but Pierre-Simon Laplace had been familiar with it before Gram and Schmidt.[1] In the theory of Lie group decompositions, it is generalized by the Iwasawa decomposition.

The application of the Gram–Schmidt process to the column vectors of a full column rank matrix yields the QR decomposition (the matrix is decomposed into an orthogonal and a triangular matrix).

The Gram–Schmidt process

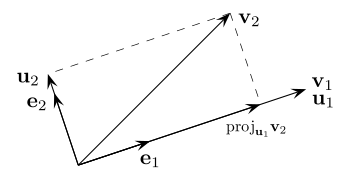

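The vector projection of a vector $\mathbf{v}$ on a nonzero vector $\mathbf{u}$ is defined as[note 1]

$$\operatorname{proj}_{\mathbf{u}}(\mathbf{v}) = \frac{\langle \mathbf{v}, \mathbf{u} \rangle}{\langle \mathbf{u}, \mathbf{u} \rangle}\,\mathbf{u},$$

where $\langle \mathbf{v}, \mathbf{u} \rangle$ denotes the inner product of the vectors $\mathbf{u}$ and $\mathbf{v}$ (the dot product, in Euclidean space). This means that $\operatorname{proj}_{\mathbf{u}}(\mathbf{v})$ is the orthogonal projection of $\mathbf{v}$ onto the line spanned by $\mathbf{u}$. If $\mathbf{u}$ is the zero vector, then $\operatorname{proj}_{\mathbf{u}}(\mathbf{v})$ is defined as the zero vector.

Given $k$ nonzero linearly independent vectors $\mathbf{v}_1, \ldots, \mathbf{v}_k$, the Gram–Schmidt process defines the vectors $\mathbf{u}_1, \ldots, \mathbf{u}_k$ as follows:

$$\begin{aligned}
\mathbf{u}_1 &= \mathbf{v}_1, & \mathbf{e}_1 &= \frac{\mathbf{u}_1}{\|\mathbf{u}_1\|} \\
\mathbf{u}_2 &= \mathbf{v}_2 - \operatorname{proj}_{\mathbf{u}_1}(\mathbf{v}_2), & \mathbf{e}_2 &= \frac{\mathbf{u}_2}{\|\mathbf{u}_2\|} \\
\mathbf{u}_3 &= \mathbf{v}_3 - \operatorname{proj}_{\mathbf{u}_1}(\mathbf{v}_3) - \operatorname{proj}_{\mathbf{u}_2}(\mathbf{v}_3), & \mathbf{e}_3 &= \frac{\mathbf{u}_3}{\|\mathbf{u}_3\|} \\
&\;\;\vdots & &\;\;\vdots \\
\mathbf{u}_k &= \mathbf{v}_k - \sum_{j=1}^{k-1} \operatorname{proj}_{\mathbf{u}_j}(\mathbf{v}_k), & \mathbf{e}_k &= \frac{\mathbf{u}_k}{\|\mathbf{u}_k\|}
\end{aligned}$$

The sequence $\mathbf{u}_1, \ldots, \mathbf{u}_k$ is the required system of orthogonal vectors, and the normalized vectors $\mathbf{e}_1, \ldots, \mathbf{e}_k$ form an orthonormal set. The calculation of the sequence $\mathbf{u}_1, \ldots, \mathbf{u}_k$ is known as Gram–Schmidt orthogonalization, and the calculation of the sequence $\mathbf{e}_1, \ldots, \mathbf{e}_k$ is known as Gram–Schmidt orthonormalization.

To check that these formulas yield an orthogonal sequence, first compute $\langle \mathbf{u}_1, \mathbf{u}_2 \rangle$ by substituting the above formula for $\mathbf{u}_2$: we get zero. Then use this to compute $\langle \mathbf{u}_1, \mathbf{u}_3 \rangle$, again by substituting the formula for $\mathbf{u}_3$: we get zero. For arbitrary $k$ the proof is accomplished by mathematical induction.

Geometrically, this method proceeds as follows: to compute $\mathbf{u}_i$, it projects $\mathbf{v}_i$ orthogonally onto the subspace $U$ generated by $\mathbf{u}_1, \ldots, \mathbf{u}_{i-1}$, which is the same as the subspace generated by $\mathbf{v}_1, \ldots, \mathbf{v}_{i-1}$. The vector $\mathbf{u}_i$ is then defined to be the difference between $\mathbf{v}_i$ and this projection, guaranteed to be orthogonal to all of the vectors in the subspace $U$.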
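The project-and-subtract recursion described in this section can be sketched in a few lines of Python. This is a minimal illustration for real vectors stored as plain lists, not a reference implementation; the helper names `dot`, `proj`, `gram_schmidt` and `normalize` and the sample input are assumptions made for the example.

```python
from math import sqrt

def dot(a, b):
    """Standard inner product of two real vectors given as lists."""
    return sum(x * y for x, y in zip(a, b))

def proj(u, v):
    """Projection of v onto the line spanned by u (zero vector if u is zero)."""
    uu = dot(u, u)
    if uu == 0:
        return [0.0] * len(v)
    c = dot(v, u) / uu
    return [c * x for x in u]

def gram_schmidt(vectors):
    """Return the orthogonal (not normalized) vectors u_1, ..., u_k."""
    us = []
    for v in vectors:
        u = [float(x) for x in v]
        for prev in us:
            # Classical form: every projection is taken of the original v.
            p = proj(prev, v)
            u = [a - b for a, b in zip(u, p)]
        us.append(u)
    return us

def normalize(u):
    """Scale a nonzero vector to unit length."""
    length = sqrt(dot(u, u))
    return [x / length for x in u]

vs = [[3.0, 1.0], [2.0, 2.0]]        # illustrative input, assumed independent
us = gram_schmidt(vs)                 # orthogonal set
es = [normalize(u) for u in us]       # orthonormal set
print(dot(us[0], us[1]))              # close to 0, up to rounding
```

Keeping orthogonalization and normalization as separate steps mirrors the distinction between the two sequences; normalizing inside the loop would remove the division in `proj`.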

The Gram–Schmidt process also applies to a linearly independent countably infinite sequence $\{\mathbf{v}_i\}_i$. The result is an orthogonal (or orthonormal) sequence $\{\mathbf{u}_i\}_i$ such that for every natural number $n$, the algebraic span of $\mathbf{v}_1, \ldots, \mathbf{v}_n$ is the same as that of $\mathbf{u}_1, \ldots, \mathbf{u}_n$.

If the Gram–Schmidt process is applied to a linearly dependent sequence, it outputs the $\mathbf{0}$ vector on the $i$th step, assuming that $\mathbf{v}_i$ is a linear combination of $\mathbf{v}_1, \ldots, \mathbf{v}_{i-1}$. If an orthonormal basis is to be produced, then the algorithm should test for zero vectors in the output and discard them because no multiple of a zero vector can have a length of 1. The number of vectors output by the algorithm will then be the dimension of the space spanned by the original inputs.
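The zero-vector test for linearly dependent input can be sketched as follows. In floating-point arithmetic an exact comparison with zero is rarely reliable, so this sketch uses a relative tolerance `tol`; that threshold, the function name `orthonormal_basis` and the sample input are assumptions for illustration only.

```python
from math import sqrt

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def orthonormal_basis(vectors, tol=1e-12):
    """Orthonormalize `vectors`, discarding those that depend on earlier ones."""
    basis = []
    for v in vectors:
        u = [float(x) for x in v]
        original_norm = sqrt(dot(u, u))
        for e in basis:
            c = dot(u, e)                      # e has unit length
            u = [a - c * b for a, b in zip(u, e)]
        norm = sqrt(dot(u, u))
        # Keep u only if a non-negligible part of v survived the projections.
        if norm > tol * original_norm:
            basis.append([a / norm for a in u])
    return basis

vectors = [[1, 0, 0], [2, 0, 0], [1, 1, 0]]   # second vector is dependent
basis = orthonormal_basis(vectors)
print(len(basis))                              # dimension of the span
```

The number of vectors returned equals the dimension of the span of the inputs, here 2, provided the tolerance suits the scale and conditioning of the data.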

A variant of the Gram–Schmidt process using transfinite recursion applied to a (possibly uncountably) infinite sequence of vectors $(\mathbf{v}_\alpha)_{\alpha < \lambda}$ yields a set of orthonormal vectors $(\mathbf{u}_\alpha)_{\alpha < \kappa}$ with $\kappa \le \lambda$ such that for any $\alpha \le \lambda$, the completion of the span of $\{\mathbf{u}_\beta : \beta < \min(\alpha, \kappa)\}$ is the same as that of $\{\mathbf{v}_\beta : \beta < \alpha\}$. In particular, when applied to an (algebraic) basis of a Hilbert space (or, more generally, a basis of any dense subspace), it yields a (functional-analytic) orthonormal basis. Note that in the general case the strict inequality $\kappa < \lambda$ often holds, even if the starting set was linearly independent, and the span of $(\mathbf{u}_\alpha)_{\alpha < \kappa}$ need not be a subspace of the span of $(\mathbf{v}_\alpha)_{\alpha < \lambda}$ (rather, it is a subspace of its completion).

Example

Euclidean space

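Consider the following set of vectors in $\mathbb{R}^2$ (with the conventional inner product)

$$S = \left\{ \mathbf{v}_1 = \begin{bmatrix} 3 \\ 1 \end{bmatrix},\ \mathbf{v}_2 = \begin{bmatrix} 2 \\ 2 \end{bmatrix} \right\}.$$

Now, perform Gram–Schmidt to obtain an orthogonal set of vectors:

$$\mathbf{u}_1 = \mathbf{v}_1 = \begin{bmatrix} 3 \\ 1 \end{bmatrix}$$

$$\mathbf{u}_2 = \mathbf{v}_2 - \operatorname{proj}_{\mathbf{u}_1}(\mathbf{v}_2) = \begin{bmatrix} 2 \\ 2 \end{bmatrix} - \frac{8}{10} \begin{bmatrix} 3 \\ 1 \end{bmatrix} = \begin{bmatrix} -2/5 \\ 6/5 \end{bmatrix}.$$

We check that the vectors $\mathbf{u}_1$ and $\mathbf{u}_2$ are indeed orthogonal:

$$\langle \mathbf{u}_1, \mathbf{u}_2 \rangle = \left\langle \begin{bmatrix} 3 \\ 1 \end{bmatrix}, \begin{bmatrix} -2/5 \\ 6/5 \end{bmatrix} \right\rangle = -\frac{6}{5} + \frac{6}{5} = 0,$$

noting that if the dot product of two vectors is 0 then they are orthogonal.

For non-zero vectors, we can then normalize the vectors by dividing out their sizes as shown above:

$$\mathbf{e}_1 = \frac{1}{\sqrt{10}} \begin{bmatrix} 3 \\ 1 \end{bmatrix}, \qquad \mathbf{e}_2 = \frac{1}{\sqrt{40/25}} \begin{bmatrix} -2/5 \\ 6/5 \end{bmatrix} = \frac{1}{\sqrt{10}} \begin{bmatrix} -1 \\ 3 \end{bmatrix}.$$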

Properties

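Denote by $\operatorname{GS}(\mathbf{v}_1, \ldots, \mathbf{v}_k)$ the result of applying the Gram–Schmidt process to a collection of vectors $\mathbf{v}_1, \ldots, \mathbf{v}_k$. This yields a map $\operatorname{GS} \colon (\mathbb{R}^n)^k \to (\mathbb{R}^n)^k$.

It has the following properties:

- It is continuous.

- It is orientation preserving in the sense that $\operatorname{or}(\mathbf{v}_1, \ldots, \mathbf{v}_k) = \operatorname{or}(\operatorname{GS}(\mathbf{v}_1, \ldots, \mathbf{v}_k))$.

- It commutes with orthogonal maps: let $g \colon \mathbb{R}^n \to \mathbb{R}^n$ be orthogonal (with respect to the given inner product). Then we have

$$\operatorname{GS}(g(\mathbf{v}_1), \ldots, g(\mathbf{v}_k)) = \left( g(\operatorname{GS}(\mathbf{v}_1, \ldots, \mathbf{v}_k)_1), \ldots, g(\operatorname{GS}(\mathbf{v}_1, \ldots, \mathbf{v}_k)_k) \right).$$

Further, a parametrized version of the Gram–Schmidt process yields a (strong) deformation retraction of the general linear group $\mathrm{GL}(\mathbb{R}^n)$ onto the orthogonal group $O(\mathbb{R}^n)$.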
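The statement that the process commutes with orthogonal maps can be checked on a small case. The sketch below uses exact rational arithmetic and the unnormalized (orthogonalization) form of the process, for which the same property holds; the orthogonal map chosen here, a 90-degree rotation of the plane, and the names `gs` and `rotate90` are assumptions for illustration.

```python
from fractions import Fraction

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def gs(vectors):
    """Unnormalized Gram-Schmidt in exact rational arithmetic.

    Assumes the input vectors are linearly independent.
    """
    us = []
    for v in vectors:
        u = [Fraction(x) for x in v]
        for p in us:
            c = Fraction(dot(v, p)) / dot(p, p)
            u = [a - c * b for a, b in zip(u, p)]
        us.append(u)
    return us

def rotate90(v):
    """Rotation of the plane by 90 degrees, an orthogonal map."""
    x, y = v
    return [-y, x]

vs = [[3, 1], [2, 2]]                       # illustrative input
lhs = gs([rotate90(v) for v in vs])         # rotate first, then orthogonalize
rhs = [rotate90(u) for u in gs(vs)]         # orthogonalize first, then rotate
print(lhs == rhs)
```

Because an orthogonal map preserves every inner product, each projection coefficient is unchanged by the rotation, which is why the two results agree exactly.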

Numerical stability

When this process is implemented on a computer, the vectors $\mathbf{u}_k$ are often not quite orthogonal, due to rounding errors. For the Gram–Schmidt process as described above (sometimes referred to as "classical Gram–Schmidt") this loss of orthogonality is particularly bad; therefore, it is said that the (classical) Gram–Schmidt process is numerically unstable.

The Gram–Schmidt process can be stabilized by a small modification; this version is sometimes referred to as modified Gram-Schmidt or MGS. This approach gives the same result as the original formula in exact arithmetic and introduces smaller errors in finite-precision arithmetic.

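Instead of computing the vector $\mathbf{u}_k$ as

$$\mathbf{u}_k = \mathbf{v}_k - \operatorname{proj}_{\mathbf{u}_1}(\mathbf{v}_k) - \operatorname{proj}_{\mathbf{u}_2}(\mathbf{v}_k) - \cdots - \operatorname{proj}_{\mathbf{u}_{k-1}}(\mathbf{v}_k),$$

it is computed as

$$\begin{aligned}
\mathbf{u}_k^{(1)} &= \mathbf{v}_k - \operatorname{proj}_{\mathbf{u}_1}(\mathbf{v}_k), \\
\mathbf{u}_k^{(2)} &= \mathbf{u}_k^{(1)} - \operatorname{proj}_{\mathbf{u}_2}\left(\mathbf{u}_k^{(1)}\right), \\
&\;\;\vdots \\
\mathbf{u}_k^{(k-2)} &= \mathbf{u}_k^{(k-3)} - \operatorname{proj}_{\mathbf{u}_{k-2}}\left(\mathbf{u}_k^{(k-3)}\right), \\
\mathbf{u}_k^{(k-1)} &= \mathbf{u}_k^{(k-2)} - \operatorname{proj}_{\mathbf{u}_{k-1}}\left(\mathbf{u}_k^{(k-2)}\right), \\
\mathbf{e}_k &= \frac{\mathbf{u}_k^{(k-1)}}{\left\|\mathbf{u}_k^{(k-1)}\right\|}.
\end{aligned}$$

This method is used in the previous animation, when the intermediate vector $\mathbf{v}'_3$ is used when orthogonalizing the blue vector $\mathbf{v}_3$.

Here is another description of the modified algorithm. Given the vectors $\mathbf{v}_1, \mathbf{v}_2, \ldots, \mathbf{v}_n$, in our first step we produce vectors $\mathbf{v}_1, \mathbf{v}_2^{(1)}, \ldots, \mathbf{v}_n^{(1)}$ by removing components along the direction of $\mathbf{v}_1$. In formulas,

$$\mathbf{v}_k^{(1)} := \mathbf{v}_k - \frac{\langle \mathbf{v}_k, \mathbf{v}_1 \rangle}{\langle \mathbf{v}_1, \mathbf{v}_1 \rangle}\,\mathbf{v}_1.$$

After this step we already have two of our desired orthogonal vectors $\mathbf{u}_1, \ldots, \mathbf{u}_n$, namely $\mathbf{u}_1 = \mathbf{v}_1$ and $\mathbf{u}_2 = \mathbf{v}_2^{(1)}$, but we have also made $\mathbf{v}_3^{(1)}, \ldots, \mathbf{v}_n^{(1)}$ orthogonal to $\mathbf{u}_1$. Next, we orthogonalize those remaining vectors against $\mathbf{u}_2 = \mathbf{v}_2^{(1)}$. This means we compute $\mathbf{v}_3^{(2)}, \mathbf{v}_4^{(2)}, \ldots, \mathbf{v}_n^{(2)}$ by the subtraction

$$\mathbf{v}_k^{(2)} := \mathbf{v}_k^{(1)} - \frac{\langle \mathbf{v}_k^{(1)}, \mathbf{u}_2 \rangle}{\langle \mathbf{u}_2, \mathbf{u}_2 \rangle}\,\mathbf{u}_2.$$

Now we have stored the vectors $\mathbf{v}_1, \mathbf{v}_2^{(1)}, \mathbf{v}_3^{(2)}, \mathbf{v}_4^{(2)}, \ldots, \mathbf{v}_n^{(2)}$, where the first three vectors are already $\mathbf{u}_1, \mathbf{u}_2, \mathbf{u}_3$ and the remaining vectors are already orthogonal to $\mathbf{u}_1, \mathbf{u}_2$. As should be clear now, the next step orthogonalizes $\mathbf{v}_4^{(2)}, \ldots, \mathbf{v}_n^{(2)}$ against $\mathbf{u}_3 = \mathbf{v}_3^{(2)}$. Proceeding in this manner we find the full set of orthogonal vectors $\mathbf{u}_1, \ldots, \mathbf{u}_n$. If orthonormal vectors are desired, then we normalize as we go, so that the denominators in the subtraction formulas turn into ones.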
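The difference between the two variants can be made concrete with a short Python sketch. It follows the description above (orthogonalize all remaining vectors against each new vector, normalizing as we go) and compares it with the classical form on a nearly dependent input. The function names and the test vectors, a standard ill-conditioned example with a small parameter `eps`, are assumptions chosen for illustration.

```python
from math import sqrt

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def normalize(u):
    length = sqrt(dot(u, u))
    return [x / length for x in u]

def classical_gs(vectors):
    """Classical variant: coefficients come from the original vector v."""
    qs = []
    for v in vectors:
        u = list(v)
        for q in qs:
            c = dot(v, q)
            u = [a - c * b for a, b in zip(u, q)]
        qs.append(normalize(u))
    return qs

def modified_gs(vectors):
    """Modified variant: coefficients come from the partially reduced vector."""
    vs = [list(v) for v in vectors]
    for k in range(len(vs)):
        vs[k] = normalize(vs[k])               # vs[k] is now e_k
        for j in range(k + 1, len(vs)):
            c = dot(vs[j], vs[k])
            vs[j] = [a - c * b for a, b in zip(vs[j], vs[k])]
    return vs

eps = 1e-8                                      # eps**2 is lost when added to 1
vectors = [[1.0, eps, 0.0, 0.0],
           [1.0, 0.0, eps, 0.0],
           [1.0, 0.0, 0.0, eps]]
cgs = classical_gs(vectors)
mgs = modified_gs(vectors)
print(abs(dot(cgs[1], cgs[2])))                 # loss of orthogonality, classical
print(abs(dot(mgs[1], mgs[2])))                 # loss of orthogonality, modified
```

On this input in double precision, the second and third vectors returned by the classical variant have an inner product close to 0.5, while the modified variant keeps it at rounding level. In exact arithmetic both functions would return the same vectors.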

Algorithm

The following MATLAB algorithm implements classical Gram–Schmidt orthonormalization. The vectors v1, ..., vk (columns of matrix V, so that V(:,j) is the jth vector) are replaced by orthonormal vectors (columns of U) which span the same subspace.

    function U = gramschmidt(V)
        % Classical Gram-Schmidt orthonormalization of the columns of V.
        [n, k] = size(V);
        U = zeros(n,k);
        U(:,1) = V(:,1) / norm(V(:,1));
        for i = 2:k
            U(:,i) = V(:,i);
            for j = 1:i-1
                % Classical form: the coefficient uses the original column V(:,i).
                % Using U(:,i) here instead would give the modified variant.
                U(:,i) = U(:,i) - (U(:,j)'*V(:,i)) * U(:,j);
            end
            U(:,i) = U(:,i) / norm(U(:,i));
        end
    end

The cost of this algorithm is asymptotically $O(nk^2)$ floating point operations, where $n$ is the dimensionality of the vectors.[2]

Via Gaussian elimination

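If the rows $\{\mathbf{v}_1, \ldots, \mathbf{v}_k\}$ are written as a matrix $A$, then applying Gaussian elimination to the augmented matrix $\left[ A A^\mathsf{T} \mid A \right]$ will produce the orthogonalized vectors in place of $A$. However, the matrix $A A^\mathsf{T}$ must be brought to row echelon form using only the row operation of adding a scalar multiple of one row to another.[3] For example, taking $\mathbf{v}_1 = \begin{bmatrix} 3 & 1 \end{bmatrix}$, $\mathbf{v}_2 = \begin{bmatrix} 2 & 2 \end{bmatrix}$ as above, we have

$$\left[ A A^\mathsf{T} \mid A \right] = \left[ \begin{array}{rr|rr} 10 & 8 & 3 & 1 \\ 8 & 8 & 2 & 2 \end{array} \right].$$

And reducing this to row echelon form (subtracting $\tfrac{4}{5}$ of the first row from the second) produces

$$\left[ \begin{array}{rr|rr} 10 & 8 & 3 & 1 \\ 0 & 8/5 & -2/5 & 6/5 \end{array} \right].$$

The normalized vectors are then

$$\mathbf{e}_1 = \frac{1}{\sqrt{10}} \begin{bmatrix} 3 & 1 \end{bmatrix}, \qquad \mathbf{e}_2 = \frac{1}{\sqrt{10}} \begin{bmatrix} -1 & 3 \end{bmatrix},$$

as in the example above.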
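The elimination procedure can be sketched in Python with exact rational arithmetic. This is an illustrative sketch under the assumption that the input rows are linearly independent (so every pivot is nonzero); the function name `gram_schmidt_by_elimination` is hypothetical.

```python
from fractions import Fraction

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def gram_schmidt_by_elimination(rows):
    """Orthogonalize `rows` by eliminating on the augmented matrix [A A^T | A].

    Only one row operation is used: adding a multiple of one row to another.
    """
    A = [[Fraction(x) for x in r] for r in rows]
    k = len(A)
    # Left block: Gram matrix A A^T. Right block: A itself.
    M = [[dot(A[i], A[j]) for j in range(k)] + A[i][:] for i in range(k)]
    for p in range(k):
        for r in range(p + 1, k):
            f = M[r][p] / M[p][p]              # pivot is nonzero by assumption
            M[r] = [a - f * b for a, b in zip(M[r], M[p])]
    return [row[k:] for row in M]              # right block holds the u_i

us = gram_schmidt_by_elimination([[3, 1], [2, 2]])
print(us[1])                                   # second orthogonalized vector
```

Rescaling a row during elimination would change the lengths of the resulting vectors, which is why only row additions are applied here.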

Determinant formula

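The result of the Gram–Schmidt process may be expressed in a non-recursive formula using determinants.

$$\mathbf{e}_j = \frac{1}{\sqrt{D_{j-1} D_j}} \begin{vmatrix}
\langle \mathbf{v}_1, \mathbf{v}_1 \rangle & \langle \mathbf{v}_2, \mathbf{v}_1 \rangle & \cdots & \langle \mathbf{v}_j, \mathbf{v}_1 \rangle \\
\langle \mathbf{v}_1, \mathbf{v}_2 \rangle & \langle \mathbf{v}_2, \mathbf{v}_2 \rangle & \cdots & \langle \mathbf{v}_j, \mathbf{v}_2 \rangle \\
\vdots & \vdots & \ddots & \vdots \\
\langle \mathbf{v}_1, \mathbf{v}_{j-1} \rangle & \langle \mathbf{v}_2, \mathbf{v}_{j-1} \rangle & \cdots & \langle \mathbf{v}_j, \mathbf{v}_{j-1} \rangle \\
\mathbf{v}_1 & \mathbf{v}_2 & \cdots & \mathbf{v}_j
\end{vmatrix}$$

$$\mathbf{u}_j = \frac{1}{D_{j-1}} \begin{vmatrix}
\langle \mathbf{v}_1, \mathbf{v}_1 \rangle & \langle \mathbf{v}_2, \mathbf{v}_1 \rangle & \cdots & \langle \mathbf{v}_j, \mathbf{v}_1 \rangle \\
\langle \mathbf{v}_1, \mathbf{v}_2 \rangle & \langle \mathbf{v}_2, \mathbf{v}_2 \rangle & \cdots & \langle \mathbf{v}_j, \mathbf{v}_2 \rangle \\
\vdots & \vdots & \ddots & \vdots \\
\langle \mathbf{v}_1, \mathbf{v}_{j-1} \rangle & \langle \mathbf{v}_2, \mathbf{v}_{j-1} \rangle & \cdots & \langle \mathbf{v}_j, \mathbf{v}_{j-1} \rangle \\
\mathbf{v}_1 & \mathbf{v}_2 & \cdots & \mathbf{v}_j
\end{vmatrix}$$

where $D_0 = 1$ and, for $j \ge 1$, $D_j$ is the Gram determinant

$$D_j = \begin{vmatrix}
\langle \mathbf{v}_1, \mathbf{v}_1 \rangle & \langle \mathbf{v}_2, \mathbf{v}_1 \rangle & \cdots & \langle \mathbf{v}_j, \mathbf{v}_1 \rangle \\
\langle \mathbf{v}_1, \mathbf{v}_2 \rangle & \langle \mathbf{v}_2, \mathbf{v}_2 \rangle & \cdots & \langle \mathbf{v}_j, \mathbf{v}_2 \rangle \\
\vdots & \vdots & \ddots & \vdots \\
\langle \mathbf{v}_1, \mathbf{v}_j \rangle & \langle \mathbf{v}_2, \mathbf{v}_j \rangle & \cdots & \langle \mathbf{v}_j, \mathbf{v}_j \rangle
\end{vmatrix}.$$

Note that the expression for $\mathbf{u}_k$ is a "formal" determinant, i.e. the matrix contains both scalars and vectors; the meaning of this expression is defined to be the result of a cofactor expansion along the row of vectors.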

The determinant formula for the Gram–Schmidt process is computationally slower (exponentially so) than the recursive algorithms described above; it is mainly of theoretical interest.
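As a small worked case of the cofactor expansion along the row of vectors, take $j = 2$, with the conventions $D_0 = 1$ and $D_1 = \langle \mathbf{v}_1, \mathbf{v}_1 \rangle$ (the $1 \times 1$ Gram determinant). The formal determinant then reduces to the recursive definition:

$$\mathbf{u}_2 = \frac{1}{D_1} \begin{vmatrix} \langle \mathbf{v}_1, \mathbf{v}_1 \rangle & \langle \mathbf{v}_2, \mathbf{v}_1 \rangle \\ \mathbf{v}_1 & \mathbf{v}_2 \end{vmatrix} = \frac{\langle \mathbf{v}_1, \mathbf{v}_1 \rangle\, \mathbf{v}_2 - \langle \mathbf{v}_2, \mathbf{v}_1 \rangle\, \mathbf{v}_1}{\langle \mathbf{v}_1, \mathbf{v}_1 \rangle} = \mathbf{v}_2 - \frac{\langle \mathbf{v}_2, \mathbf{v}_1 \rangle}{\langle \mathbf{v}_1, \mathbf{v}_1 \rangle}\, \mathbf{v}_1.$$

Since $\mathbf{u}_1 = \mathbf{v}_1$, the right-hand side is $\mathbf{v}_2$ minus its projection onto $\mathbf{u}_1$.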

Expressed using geometric algebra

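Expressed using notation used in geometric algebra, the unnormalized results of the Gram–Schmidt process can be expressed as

$$\mathbf{u}_k = \mathbf{v}_k - \sum_{j=1}^{k-1} (\mathbf{v}_k \cdot \mathbf{u}_j)\, \mathbf{u}_j^{-1},$$

which is equivalent to the expression using the $\operatorname{proj}$ operator defined above. The results can equivalently be expressed as[4]

$$\mathbf{u}_k = \mathbf{v}_k \wedge \mathbf{v}_{k-1} \wedge \cdots \wedge \mathbf{v}_1 \left( \mathbf{v}_{k-1} \wedge \cdots \wedge \mathbf{v}_1 \right)^{-1},$$

which is closely related to the expression using determinants above.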

Alternatives

Other orthogonalization algorithms use Householder transformations or Givens rotations. The algorithms using Householder transformations are more stable than the stabilized Gram–Schmidt process. On the other hand, the Gram–Schmidt process produces the $j$th orthogonalized vector after the $j$th iteration, while orthogonalization using Householder reflections produces all the vectors only at the end. This makes only the Gram–Schmidt process applicable for iterative methods like the Arnoldi iteration.

Yet another alternative is motivated by the use of Cholesky decomposition for inverting the matrix of the normal equations in linear least squares. Let $V$ be a full column rank matrix, whose columns need to be orthogonalized. The matrix $V^* V$ is Hermitian and positive definite, so it can be written as $V^* V = L L^*$, using the Cholesky decomposition. The lower triangular matrix $L$ with strictly positive diagonal entries is invertible. Then the columns of the matrix $U = V \left( L^{-1} \right)^*$ are orthonormal and span the same subspace as the columns of the original matrix $V$. The explicit use of the product $V^* V$ makes the algorithm unstable, especially if the product's condition number is large. Nevertheless, this algorithm is used in practice and implemented in some software packages because of its high efficiency and simplicity.
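The Cholesky-based approach can be sketched in pure Python for the real case, where the conjugate transpose is simply the transpose. The sketch forms the Gram matrix of the columns, factors it, and obtains the orthonormal columns by substitution rather than by inverting the triangular factor explicitly. The function names are hypothetical, and no safeguards against ill-conditioning are included.

```python
from math import sqrt

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cholesky(G):
    """Lower triangular L with G = L L^T, for symmetric positive definite G."""
    n = len(G)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][m] * L[j][m] for m in range(j))
            if i == j:
                L[i][j] = sqrt(G[i][i] - s)
            else:
                L[i][j] = (G[i][j] - s) / L[j][j]
    return L

def cholesky_orthonormalize(cols):
    """Given the columns of V, return the columns of U with U L^T = V."""
    k = len(cols)
    G = [[dot(cols[i], cols[j]) for j in range(k)] for i in range(k)]  # V^T V
    L = cholesky(G)
    U = []
    for j in range(k):
        # Column j of V equals sum over i <= j of L[j][i] * U_i; solve for U_j.
        u = [float(x) for x in cols[j]]
        for i in range(j):
            u = [a - L[j][i] * b for a, b in zip(u, U[i])]
        U.append([a / L[j][j] for a in u])
    return U

U = cholesky_orthonormalize([[3.0, 1.0], [2.0, 2.0]])   # illustrative input
print(dot(U[0], U[1]))                                   # close to 0
```

Forming the Gram matrix explicitly squares the condition number of the problem, which is the source of the instability mentioned above.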

In quantum mechanics there are several orthogonalization schemes with characteristics better suited for certain applications than original Gram–Schmidt. Nevertheless, it remains a popular and effective algorithm for even the largest electronic structure calculations.[5]

Run-time complexity

Gram-Schmidt orthogonalization can be done in strongly-polynomial time. The run-time analysis is similar to that of Gaussian elimination.[6]: 40

References

- Cheney, Ward; Kincaid, David (2009). Linear Algebra: Theory and Applications. Sudbury, MA: Jones and Bartlett. pp. 544, 558. ISBN 978-0-7637-5020-6. https://books.google.com/books?id=Gg3Uj1GkHK8C&pg=PA544.

- Golub & Van Loan 1996, §5.2.8.

- Pursell, Lyle; Trimble, S. Y. (1 January 1991). "Gram-Schmidt Orthogonalization by Gauss Elimination". The American Mathematical Monthly 98 (6): 544–549. doi:10.2307/2324877.

- Doran, Chris; Lasenby, Anthony (2007). Geometric Algebra for Physicists. Cambridge University Press. p. 124. ISBN 978-0-521-71595-9.

- Hasegawa, Yukihiro; et al. (2011). "First-principles calculations of electron states of a silicon nanowire with 100,000 atoms on the K computer". Proceedings of 2011 International Conference for High Performance Computing, Networking, Storage and Analysis. pp. 1:1–1:11. doi:10.1145/2063384.2063386. ISBN 9781450307710.

- Grötschel, Martin; Lovász, László; Schrijver, Alexander (1993). Geometric Algorithms and Combinatorial Optimization. Algorithms and Combinatorics. 2 (2nd ed.). Berlin: Springer-Verlag.

Notes

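- In the complex case, this assumes that the inner product is linear in the first argument and conjugate-linear in the second. In physics a more common convention is linearity in the second argument, in which case we define $\operatorname{proj}_{\mathbf{u}}(\mathbf{v}) = \frac{\langle \mathbf{u}, \mathbf{v} \rangle}{\langle \mathbf{u}, \mathbf{u} \rangle}\,\mathbf{u}$.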

Sources

- Bau III, David; Trefethen, Lloyd N. (1997), Numerical linear algebra, Philadelphia: Society for Industrial and Applied Mathematics, ISBN 978-0-89871-361-9.

- Golub, Gene H.; Van Loan, Charles F. (1996), Matrix Computations (3rd ed.), Johns Hopkins, ISBN 978-0-8018-5414-9.

- Greub, Werner (1975), Linear Algebra (4th ed.), Springer.

- Soliverez, C. E.; Gagliano, E. (1985), "Orthonormalization on the plane: a geometric approach", Mex. J. Phys. 31 (4): 743–758, http://rmf.smf.mx/pdf/rmf/31/4/31_4_743.pdf, retrieved 2013-06-22.

External links

- Hazewinkel, Michiel, ed. (2001), "Orthogonalization", Encyclopedia of Mathematics, Springer Science+Business Media B.V. / Kluwer Academic Publishers, ISBN 978-1-55608-010-4, https://www.encyclopediaofmath.org/index.php?title=p/o070420

- Harvey Mudd College Math Tutorial on the Gram-Schmidt algorithm

- Earliest known uses of some of the words of mathematics: G. The entry "Gram-Schmidt orthogonalization" has some information and references on the origins of the method.

- Demos: Gram Schmidt process in plane and Gram Schmidt process in space

- Gram-Schmidt orthogonalization applet

- NAG Gram–Schmidt orthogonalization of n vectors of order m routine

- Proof: Raymond Puzio, Keenan Kidwell. "proof of Gram-Schmidt orthogonalization algorithm" (version 8). PlanetMath.org.
