Comparison of vector algebra and geometric algebra

Geometric algebra is an extension of vector algebra, providing additional algebraic structures on vector spaces, with geometric interpretations.

Vector algebra, with its cross product, is specific to Euclidean 3-space, whereas geometric algebra applies in all dimensions and signatures, notably 3+1 spacetime as well as 2 dimensions.

Basic concepts and operations

Geometric algebra (GA) is an extension or completion of vector algebra (VA).[1] The reader is assumed to be familiar with the basic concepts and operations of VA. This article mainly concerns operations in the GA of 3D space and is not intended to be mathematically rigorous. In GA, vectors are not normally written in boldface, as the meaning is usually clear from the context.

The fundamental difference is that GA provides a new product of vectors called the "geometric product". Elements of GA are graded multivectors: scalars are grade 0, usual vectors are grade 1, bivectors are grade 2, and the highest grade (3 in the 3D case) is traditionally called the pseudoscalar and designated $i$.

The ungeneralized 3D vector form of the geometric product is:[2]

$$ab = a \cdot b + a \wedge b$$

that is, the sum of the usual dot (inner) product and the outer (exterior) product (the latter is closely related to the cross product and is explained below).
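The decomposition of the geometric product of two vectors into a scalar part and a bivector part can be sketched in a few lines of Python. This is only an illustration: the function names and the choice to store a bivector as a dictionary keyed by index pairs are assumptions made here, not part of any particular library.

```python
def dot(a, b):
    """Inner product a . b (the grade-0 part of ab)."""
    return sum(x * y for x, y in zip(a, b))

def wedge(a, b):
    """Outer product a ^ b as {(i, j): coefficient} with i < j (the grade-2 part of ab)."""
    n = len(a)
    return {(i, j): a[i] * b[j] - a[j] * b[i]
            for i in range(n) for j in range(i + 1, n)}

def geometric_product(a, b):
    """Return the pair (scalar part, bivector part) of the geometric product ab."""
    return dot(a, b), wedge(a, b)

a = (1.0, 2.0, 3.0)
b = (4.0, 5.0, 6.0)
scalar, bivector = geometric_product(a, b)
print(scalar)    # symmetric part: unchanged when a and b are swapped
print(bivector)  # antisymmetric part: changes sign when a and b are swapped
```

Swapping the arguments leaves the scalar part unchanged and negates every bivector coefficient, which is the symmetric/antisymmetric split described in the text.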

In VA, entities such as pseudovectors and pseudoscalars need to be bolted on, whereas in GA the equivalent bivector and trivector, respectively, exist naturally as subspaces of the algebra.

For example, applying vector calculus in 2 dimensions, such as to compute torque or curl, requires adding an artificial 3rd dimension and extending the vector field to be constant in that dimension, or alternately considering these to be scalars. The torque or curl is then a normal vector field in this 3rd dimension. By contrast, geometric algebra in 2 dimensions defines these as a pseudoscalar field (a bivector), without requiring a 3rd dimension. Similarly, the scalar triple product is ad hoc, and can instead be expressed uniformly using the exterior product and the geometric product.

Translations between formalisms

Here are some comparisons between standard vector relations and their corresponding exterior product and geometric product equivalents. All the exterior and geometric product equivalents here are good for more than three dimensions, and some also for two. In two dimensions the cross product is undefined even if what it describes (like torque) is perfectly well defined in a plane without introducing an arbitrary normal vector outside of the space.

Many of these relationships only require the introduction of the exterior product to generalize, but since that may not be familiar to somebody with only a background in vector algebra and calculus, some examples are given.

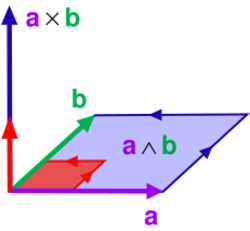

Cross and exterior products

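$u \times v$ is perpendicular to the plane containing $u$ and $v$.

$u \wedge v$ is an oriented representation of the same plane.

We have the pseudoscalar $i = e_1 e_2 e_3$ (right-handed orthonormal frame) and so

- $e_1 i = i e_1 = e_2 e_3$ returns a bivector and

- $(e_2 \wedge e_3) i = i (e_2 \wedge e_3) = e_2 e_3 e_1 e_2 e_3 = -e_1$ returns a vector perpendicular to the $e_2 \wedge e_3$ plane.

This yields a convenient definition for the cross product of traditional vector algebra:

$$u \times v = -i (u \wedge v)$$

(this is antisymmetric). The distinction between polar and axial vectors in vector algebra is relevant here; in geometric algebra it appears naturally as the distinction between vectors and bivectors (elements of grade two).

The $i$ here is a unit pseudoscalar of Euclidean 3-space, which establishes a duality between the vectors and the bivectors, and is named so because of the expected property

$$i^2 = (e_1 e_2 e_3)^2 = e_1 e_2 e_3 e_1 e_2 e_3 = -e_1 e_2 e_3 e_3 e_2 e_1 = -1$$

The equivalence of the cross product and the exterior product expression above can be confirmed by direct multiplication of $-i = -e_1 e_2 e_3$ with a determinant expansion of the exterior product

$$u \wedge v = \sum_{1 \le j < k \le 3} (u_j v_k - v_j u_k) \, e_j \wedge e_k = \sum_{1 \le j < k \le 3} (u_j v_k - v_j u_k) \, e_j e_k$$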

See also Cross product as an exterior product. Essentially, the geometric product of a bivector and the pseudoscalar of Euclidean 3-space provides a method of calculation of the Hodge dual.
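As an illustrative check, the following Python sketch computes the bivector $u \wedge v$ in 3D and maps it to a vector by multiplying with $-i$, where $i = e_1 e_2 e_3$. The function names and the component ordering $(e_1 e_2, e_1 e_3, e_2 e_3)$ are assumptions made for this example. The mapping of basis bivectors used in `dual_to_vector` follows from $-i\,e_2 e_3 = e_1$, $-i\,e_1 e_3 = -e_2$ and $-i\,e_1 e_2 = e_3$.

```python
def wedge3(u, v):
    """Components of u ^ v on the basis bivectors (e1e2, e1e3, e2e3)."""
    return (u[0] * v[1] - u[1] * v[0],
            u[0] * v[2] - u[2] * v[0],
            u[1] * v[2] - u[2] * v[1])

def dual_to_vector(bivector):
    """Multiply a 3D bivector by -i: e2e3 -> e1, e1e3 -> -e2, e1e2 -> e3."""
    b12, b13, b23 = bivector
    return (b23, -b13, b12)

def cross(u, v):
    """Conventional cross product of vector algebra, for comparison."""
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])

u = (1, 2, 3)
v = (4, 5, 6)
print(dual_to_vector(wedge3(u, v)) == cross(u, v))
```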

Cross and commutator products

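The pseudovectors/bivectors of the geometric algebra of Euclidean 3-dimensional space form a 3-dimensional vector space themselves. Let the standard unit pseudovectors/bivectors be $\mathbf{i} = e_2 e_3$, $\mathbf{j} = e_1 e_3$, and $\mathbf{k} = e_1 e_2$, and let the anti-commutative commutator product be defined as $A \times B = \tfrac{1}{2}(AB - BA)$, where $AB$ is the geometric product. The commutator product is distributive over addition and linear, as the geometric product is distributive over addition and linear.

From the definition of the commutator product, $\mathbf{i}$, $\mathbf{j}$ and $\mathbf{k}$ satisfy the following equalities:

$$\mathbf{i} \times \mathbf{j} = \tfrac{1}{2}(e_2 e_3 e_1 e_3 - e_1 e_3 e_2 e_3) = e_1 e_2 = \mathbf{k}$$

$$\mathbf{j} \times \mathbf{k} = \tfrac{1}{2}(e_1 e_3 e_1 e_2 - e_1 e_2 e_1 e_3) = e_2 e_3 = \mathbf{i}$$

$$\mathbf{k} \times \mathbf{i} = \tfrac{1}{2}(e_1 e_2 e_2 e_3 - e_2 e_3 e_1 e_2) = e_1 e_3 = \mathbf{j}$$

which imply, by the anti-commutativity of the commutator product, that

$$\mathbf{j} \times \mathbf{i} = -\mathbf{k}, \quad \mathbf{k} \times \mathbf{j} = -\mathbf{i}, \quad \mathbf{i} \times \mathbf{k} = -\mathbf{j}$$

The anti-commutativity of the commutator product also implies that

$$\mathbf{i} \times \mathbf{i} = \mathbf{j} \times \mathbf{j} = \mathbf{k} \times \mathbf{k} = 0$$

These equalities and properties are sufficient to determine the commutator product of any two pseudovectors/bivectors $A$ and $B$. As the pseudovectors/bivectors form a vector space, each pseudovector/bivector can be written as the sum of three orthogonal components parallel to the standard basis pseudovectors/bivectors:

$$A = A_1 \mathbf{i} + A_2 \mathbf{j} + A_3 \mathbf{k}, \quad B = B_1 \mathbf{i} + B_2 \mathbf{j} + B_3 \mathbf{k}$$

Their commutator product $A \times B$ can be expanded using its distributive property:

$$A \times B = (A_2 B_3 - A_3 B_2) \mathbf{i} + (A_3 B_1 - A_1 B_3) \mathbf{j} + (A_1 B_2 - A_2 B_1) \mathbf{k}$$

which is precisely the cross product in vector algebra for pseudovectors.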
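The commutator relations can be checked numerically with a very small geometric-product implementation. The sketch below is an assumption-laden illustration rather than a reference implementation: basis blades are encoded as bitmasks (bit 0 for $e_1$, bit 1 for $e_2$, bit 2 for $e_3$), a multivector is a dictionary from bitmask to coefficient, the metric is Euclidean, and the names `blade_product`, `gp` and `commutator` are invented for this example.

```python
def blade_product(a, b):
    """Product of two basis blades (bitmasks) in a Euclidean metric: (sign, bitmask)."""
    sign = 1
    t = a >> 1
    while t:
        # Each basis vector of b that must move past a vector of a flips the sign.
        if bin(t & b).count("1") % 2:
            sign = -sign
        t >>= 1
    return sign, a ^ b

def gp(A, B):
    """Geometric product of multivectors stored as {bitmask: coefficient}."""
    out = {}
    for a, x in A.items():
        for b, y in B.items():
            s, m = blade_product(a, b)
            out[m] = out.get(m, 0.0) + s * x * y
    return {m: c for m, c in out.items() if c != 0.0}

def commutator(A, B):
    """Commutator product (AB - BA) / 2."""
    AB, BA = gp(A, B), gp(B, A)
    out = {m: 0.5 * (AB.get(m, 0.0) - BA.get(m, 0.0)) for m in set(AB) | set(BA)}
    return {m: c for m, c in out.items() if c != 0.0}

# Unit bivectors: e2e3 (0b110), e1e3 (0b101), e1e2 (0b011).
I, J, K = {0b110: 1.0}, {0b101: 1.0}, {0b011: 1.0}
print(commutator(I, J) == K, commutator(J, K) == I, commutator(K, I) == J)
```

With components $(1, 2, 3)$ and $(4, 5, 6)$ on the three unit bivectors, the same function reproduces the component formula of the cross product.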

Norm of a vector

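Ordinarily,

$$\Vert u \Vert^2 = u \cdot u$$

Making use of the geometric product and the fact that the exterior product of a vector with itself is zero:

$$u u = \Vert u \Vert^2 = u^2 = u \cdot u + u \wedge u = u \cdot u$$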

Lagrange identity

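In three dimensions the product of two squared vector lengths can be expressed in terms of the dot and cross products

$$\Vert u \Vert^2 \Vert v \Vert^2 = (u \cdot v)^2 + \Vert u \times v \Vert^2$$

The corresponding generalization expressed using the geometric product is

$$\Vert u \Vert^2 \Vert v \Vert^2 = (u \cdot v)^2 - (u \wedge v)^2$$

This follows from expanding the geometric product of a pair of vectors with its reverse

$$(uv)(vu) = (u \cdot v + u \wedge v)(u \cdot v - u \wedge v)$$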
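Because the wedge-product form does not rely on a cross product, the identity can be checked in any dimension. The Python sketch below does so for two 4-dimensional vectors, using the fact that $-(u \wedge v)^2$ equals the sum of the squared coefficients of $u \wedge v$ on the basis bivectors $e_j \wedge e_k$ with $j < k$. The function names and sample vectors are chosen here purely for illustration.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def wedge_norm_sq(u, v):
    """Sum of squared bivector coefficients of u ^ v, i.e. -(u ^ v)^2."""
    n = len(u)
    return sum((u[j] * v[k] - u[k] * v[j]) ** 2
               for j in range(n) for k in range(j + 1, n))

u = (1, 2, 3, 4)
v = (2, 0, -1, 1)
lhs = dot(u, u) * dot(v, v)
rhs = dot(u, v) ** 2 + wedge_norm_sq(u, v)
print(lhs == rhs)
```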

Determinant expansion of cross and wedge products

Linear algebra texts will often use the determinant for the solution of linear systems by Cramer's rule or for matrix inversion.

An alternative treatment is to axiomatically introduce the wedge product, and then demonstrate that this can be used directly to solve linear systems. This is shown below, and does not require sophisticated math skills to understand.

It is then possible to define determinants as nothing more than the coefficients of the wedge product in expansions over "unit k-vectors" ($e_i \wedge e_j$ terms), as above.

- A one-by-one determinant is the coefficient of $e_1$ for an $\mathbb{R}^1$ 1-vector.

- A two-by-two determinant is the coefficient of $e_1 \wedge e_2$ for an $\mathbb{R}^2$ bivector.

- A three-by-three determinant is the coefficient of $e_1 \wedge e_2 \wedge e_3$ for an $\mathbb{R}^3$ trivector.

- ...

When linear system solution is introduced via the wedge product, Cramer's rule follows as a side-effect, and there is no need to lead up to the end results with definitions of minors, matrices, matrix invertibility, adjoints, cofactors, Laplace expansions, theorems on determinant multiplication and row column exchanges, and so forth.

Matrix related

Matrix inversion (Cramer's rule) and determinants can be naturally expressed in terms of the wedge product.

The use of the wedge product in the solution of linear equations can be quite useful for various geometric product calculations.

Traditionally, instead of using the wedge product, Cramer's rule is presented as a generic algorithm that can be used to solve linear equations of the form $Ax = b$ (or equivalently to invert a matrix). Namely

$$x = \frac{1}{|A|} \operatorname{adj}(A) \, b$$

This is a useful theoretical result. For numerical problems, row reduction with pivots and other methods are more stable and efficient.

When the wedge product is coupled with the Clifford product and put into a natural geometric context, the fact that the determinants are used in the expression of parallelogram area and parallelepiped volumes (and higher-dimensional generalizations thereof) also comes as a nice side-effect.

As is also shown below, results such as Cramer's rule also follow directly from the wedge product's selection of non-identical elements. The result is then simple enough that it could be derived easily if required instead of having to remember or look up a rule.

Two variables example

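Consider a system in two unknowns $x$ and $y$, written in terms of the column vectors $a$, $b$ and $c$:

$$a x + b y = c$$

Taking the wedge product with $b$ on the right and with $a$ on the left,

$$(a x + b y) \wedge b = (a \wedge b) x = c \wedge b$$

$$a \wedge (a x + b y) = (a \wedge b) y = a \wedge c$$

Provided $a \wedge b \neq 0$ the solution is

$$\begin{bmatrix} x \\ y \end{bmatrix} = \frac{1}{a \wedge b} \begin{bmatrix} c \wedge b \\ a \wedge c \end{bmatrix}$$

For $a, b \in \mathbb{R}^2$, this is Cramer's rule since the $e_1 \wedge e_2$ factors of the wedge products

$$u \wedge v = \begin{vmatrix} u_1 & u_2 \\ v_1 & v_2 \end{vmatrix} e_1 \wedge e_2$$

divide out.

Similarly, for three, or N variables, the same ideas hold

$$a x + b y + c z = d$$

$$\begin{bmatrix} x \\ y \\ z \end{bmatrix} = \frac{1}{a \wedge b \wedge c} \begin{bmatrix} d \wedge b \wedge c \\ a \wedge d \wedge c \\ a \wedge b \wedge d \end{bmatrix}$$

Again, for the three variable three equation case this is Cramer's rule since the $e_1 \wedge e_2 \wedge e_3$ factors of all the wedge products divide out, leaving the familiar determinants.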

A numeric example with three equations and two unknowns

In case there are more equations than variables and the equations have a solution, then each of the k-vector quotients will be scalars.

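To illustrate, here is the solution of a simple example with three equations and two unknowns.

$$\begin{bmatrix} 1 \\ 1 \\ 0 \end{bmatrix} x + \begin{bmatrix} 1 \\ 1 \\ 1 \end{bmatrix} y = \begin{bmatrix} 1 \\ 1 \\ 2 \end{bmatrix}$$

The right wedge product with $(1, 1, 1)$ solves for $x$

$$(1, 1, 0) \wedge (1, 1, 1) \, x = (1, 1, 2) \wedge (1, 1, 1)$$

and a left wedge product with $(1, 1, 0)$ solves for $y$

$$(1, 1, 0) \wedge (1, 1, 1) \, y = (1, 1, 0) \wedge (1, 1, 2)$$

Observe that both of these equations have the same factor, so it needs to be computed only once (if it were zero, the two column vectors would be linearly dependent and the system would have no unique solution).

Collection of results for $x$ and $y$ yields a Cramer's rule-like form:

$$\begin{bmatrix} x \\ y \end{bmatrix} = \frac{1}{(1, 1, 0) \wedge (1, 1, 1)} \begin{bmatrix} (1, 1, 2) \wedge (1, 1, 1) \\ (1, 1, 0) \wedge (1, 1, 2) \end{bmatrix}$$

Writing $e_i \wedge e_j = e_{ij}$, we have the result:

$$\begin{bmatrix} x \\ y \end{bmatrix} = \frac{1}{e_{13} + e_{23}} \begin{bmatrix} -e_{13} - e_{23} \\ 2 e_{13} + 2 e_{23} \end{bmatrix} = \begin{bmatrix} -1 \\ 2 \end{bmatrix}$$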
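The wedge-product solution of an overdetermined system $a x + b y = c$ can be sketched in Python as follows. The helper names (`wedge`, `bivector_ratio`, `solve_two_unknowns`) and the sample vectors are assumptions made for this illustration, and integer inputs are assumed so that exact rational arithmetic can be used. A quotient of two bivectors is a scalar only when the numerator is a multiple of the denominator, and the sketch returns `None` when that is not the case.

```python
from fractions import Fraction

def wedge(u, v):
    """Coefficients of u ^ v on the basis bivectors e_i ^ e_j, i < j."""
    n = len(u)
    return [u[i] * v[j] - u[j] * v[i] for i in range(n) for j in range(i + 1, n)]

def bivector_ratio(num, den):
    """Scalar s with num == s * den, or None if no such scalar exists."""
    pivot = next((k for k, d in enumerate(den) if d != 0), None)
    if pivot is None:
        return None  # den is the zero bivector: a and b are linearly dependent
    s = Fraction(num[pivot], den[pivot])
    return s if all(n == s * d for n, d in zip(num, den)) else None

def solve_two_unknowns(a, b, c):
    """Solve a*x + b*y = c: x = (c ^ b)/(a ^ b), y = (a ^ c)/(a ^ b)."""
    ab = wedge(a, b)  # shared factor, computed once
    return bivector_ratio(wedge(c, b), ab), bivector_ratio(wedge(a, c), ab)

# Illustrative data: three equations, two unknowns.
print(solve_two_unknowns((1, 1, 0), (1, 1, 1), (1, 1, 2)))
```

As noted in the text, for actual numerical work row reduction with pivoting is preferable; the sketch only mirrors the algebra.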

Equation of a plane

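For the plane of all points $r$ passing through three independent points $r_0$, $r_1$, and $r_2$, the normal form of the equation is

$$((r_2 - r_0) \times (r_1 - r_0)) \cdot (r - r_0) = 0$$

The equivalent wedge product equation is

$$(r_2 - r_0) \wedge (r_1 - r_0) \wedge (r - r_0) = 0$$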
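In three dimensions the triple wedge product of three vectors has a single coefficient, on $e_1 \wedge e_2 \wedge e_3$, equal to the 3×3 determinant of their components. The following Python sketch uses that to test whether a point lies in the plane through three given points. The function names and sample points are hypothetical, and exact integer arithmetic is assumed; with floating-point input a tolerance would be needed instead of an equality test.

```python
def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def det3(a, b, c):
    """Determinant of the 3x3 matrix with rows a, b, c."""
    return (a[0] * (b[1] * c[2] - b[2] * c[1])
            - a[1] * (b[0] * c[2] - b[2] * c[0])
            + a[2] * (b[0] * c[1] - b[1] * c[0]))

def triple_wedge_coefficient(r, r0, r1, r2):
    """Coefficient of e1 ^ e2 ^ e3 in (r2 - r0) ^ (r1 - r0) ^ (r - r0)."""
    return det3(sub(r2, r0), sub(r1, r0), sub(r, r0))

def on_plane(r, r0, r1, r2):
    return triple_wedge_coefficient(r, r0, r1, r2) == 0

# The plane x + y + z = 1 through the three unit points.
r0, r1, r2 = (1, 0, 0), (0, 1, 0), (0, 0, 1)
print(on_plane((2, -1, 0), r0, r1, r2), on_plane((0, 0, 0), r0, r1, r2))
```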

Projection and rejection

Using the Gram–Schmidt process a single vector can be decomposed into two components with respect to a reference vector, namely the projection onto a unit vector in a reference direction, and the difference between the vector and that projection.

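With $\hat{u} = u / \Vert u \Vert$, the projection of $v$ onto $\hat{u}$ is

$$\operatorname{Proj}_{\hat{u}} v = \hat{u} (\hat{u} \cdot v)$$

Orthogonal to that vector is the difference, designated the rejection,

$$v - \hat{u} (\hat{u} \cdot v) = \frac{1}{\Vert u \Vert^2} \left( \Vert u \Vert^2 v - u (u \cdot v) \right)$$

The rejection can be expressed as a single geometric algebraic product in a few different ways

$$\frac{u}{u^2} (u v - u \cdot v) = \frac{1}{u} (u \wedge v) = \hat{u} (\hat{u} \wedge v) = (v \wedge \hat{u}) \hat{u}$$

The similarity in form between the projection and the rejection is notable. The sum of these recovers the original vector

$$v = \hat{u} (\hat{u} \cdot v) + \hat{u} (\hat{u} \wedge v)$$

Here the projection is in its customary vector form. An alternate formulation is possible that puts the projection in a form that differs from the usual vector formulation

$$v = \frac{1}{u} (u \cdot v) + \frac{1}{u} (u \wedge v) = (v \cdot u) \frac{1}{u} + (v \wedge u) \frac{1}{u}$$

Working backwards from the result, it can be observed that this orthogonal decomposition result can in fact follow more directly from the definition of the geometric product itself.

$$v = \hat{u} \hat{u} v = \hat{u} (\hat{u} \cdot v + \hat{u} \wedge v)$$

With this approach, the original geometrical consideration is not necessarily obvious, but it is a much quicker way to get at the same algebraic result.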

However, the hint that one can work backwards, coupled with the knowledge that the wedge product can be used to solve sets of linear equations, suggests that the problem of orthogonal decomposition can be posed directly.

Let $v = a u + x$, where $u \cdot x = 0$. To discard the portions of $v$ that are collinear with $u$, take the exterior product

$$u \wedge v = u \wedge (a u + x) = u \wedge x$$

Here the geometric product can be employed

$$u \wedge v = u \wedge x = u x - u \cdot x = u x$$

Because a non-zero vector is invertible under the geometric product ($1/u = u / u^2$), this can be solved for $x$:

$$x = \frac{1}{u} (u \wedge v)$$

The same techniques can be applied to similar problems, such as calculation of the component of a vector in a plane and perpendicular to the plane.

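For three dimensions the projective and rejective components of a vector $v$ with respect to an arbitrary unit vector $\hat{u}$ can be expressed in terms of the dot and cross product

$$v = (v \cdot \hat{u}) \hat{u} + \hat{u} \times (v \times \hat{u})$$

For the general case the same result can be written in terms of the dot and wedge product and the geometric product of that and the unit vector

$$v = (v \cdot \hat{u}) \hat{u} + (v \wedge \hat{u}) \hat{u}$$

It is also worthwhile to point out that this result can be expressed using right or left vector division as defined by the geometric product:

$$v = (v \cdot u) \frac{1}{u} + (v \wedge u) \frac{1}{u} = \frac{1}{u} (u \cdot v) + \frac{1}{u} (u \wedge v)$$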
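The decomposition into projection and rejection can be sketched in Python for vectors of any dimension. The sketch assumes integer components (so that exact fractions can be used) and uses the expansion of the vector part of a vector-bivector product, $w \cdot (u \wedge v) = \sum_{i<j} (w_i e_j - w_j e_i)(u_i v_j - u_j v_i)$. The rejection $\frac{1}{u}(u \wedge v)$ is exactly this vector part with $w = u$, divided by $u \cdot u$, since the trivector part $u \wedge u \wedge v$ vanishes. All function names are invented for the example.

```python
from fractions import Fraction

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def wedge(u, v):
    """u ^ v as {(i, j): coefficient} with i < j."""
    n = len(u)
    return {(i, j): u[i] * v[j] - u[j] * v[i]
            for i in range(n) for j in range(i + 1, n)}

def vector_dot_bivector(w, B):
    """Vector part of the product w B for a bivector B."""
    out = [0] * len(w)
    for (i, j), c in B.items():
        out[j] += w[i] * c
        out[i] -= w[j] * c
    return out

def project(u, v):
    """(1/u)(u . v): component of v along u."""
    s = Fraction(dot(u, v), dot(u, u))
    return tuple(s * a for a in u)

def reject(u, v):
    """(1/u)(u ^ v): component of v perpendicular to u."""
    uu = dot(u, u)
    return tuple(Fraction(c, uu) for c in vector_dot_bivector(u, wedge(u, v)))

u = (1, 2, 2, 0)
v = (3, 0, 1, 4)
p, r = project(u, v), reject(u, v)
print(p, r)
```

Adding the two results recovers `v`, and the rejection has zero dot product with `u`.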

Like vector projection and rejection, higher-dimensional analogs of that calculation are also possible using the geometric product.

As an example, one can calculate the component of a vector perpendicular to a plane and the projection of that vector onto the plane.

Let $w = a u + b v + x$, where $u \cdot x = v \cdot x = 0$. As above, to discard the portions of $w$ that are collinear with $u$ or $v$, take the wedge product

$$w \wedge u \wedge v = (a u + b v + x) \wedge u \wedge v = x \wedge u \wedge v$$

Having done this calculation with a vector projection, one can guess that this quantity equals $x (u \wedge v)$. One can also guess that there is a vector and bivector dot-product-like quantity that allows the calculation of the component of a vector that is in the "direction of a plane". Both of these guesses are correct, and validating these facts is worthwhile. However, skipping ahead slightly, this to-be-proven fact allows for a nice closed form solution of the vector component outside of the plane:

$$x = (w \wedge u \wedge v) \frac{1}{u \wedge v}$$

Notice the similarities between this planar rejection result and the vector rejection result. To calculate the component of a vector outside of a plane we take the volume spanned by three vectors (trivector) and "divide out" the plane.

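Independent of any use of the geometric product it can be shown that this rejection in terms of the standard basis is

$$x = \frac{1}{(A_{u,v})^2} \sum_{i<j<k} \begin{vmatrix} w_i & w_j & w_k \\ u_i & u_j & u_k \\ v_i & v_j & v_k \end{vmatrix} \begin{vmatrix} u_i & u_j & u_k \\ v_i & v_j & v_k \\ e_i & e_j & e_k \end{vmatrix}$$

where

$$(A_{u,v})^2 = \sum_{i<j} \begin{vmatrix} u_i & u_j \\ v_i & v_j \end{vmatrix}^2 = -(u \wedge v)^2$$

is the squared area of the parallelogram formed by $u$ and $v$.

The (squared) magnitude of $x$ is

$$\Vert x \Vert^2 = x \cdot w = \frac{1}{(A_{u,v})^2} \sum_{i<j<k} \begin{vmatrix} w_i & w_j & w_k \\ u_i & u_j & u_k \\ v_i & v_j & v_k \end{vmatrix}^2$$

Thus, the (squared) volume of the parallelepiped (base area times perpendicular height) is

$$(A_{u,v})^2 \Vert x \Vert^2 = \sum_{i<j<k} \begin{vmatrix} w_i & w_j & w_k \\ u_i & u_j & u_k \\ v_i & v_j & v_k \end{vmatrix}^2$$

Note the similarity in form to the $w$, $u$, $v$ trivector itself

$$w \wedge u \wedge v = \sum_{i<j<k} \begin{vmatrix} w_i & w_j & w_k \\ u_i & u_j & u_k \\ v_i & v_j & v_k \end{vmatrix} e_i \wedge e_j \wedge e_k$$

which, if one takes the set of $e_i \wedge e_j \wedge e_k$ as a basis for the trivector space, suggests this is the natural way to define the measure of a trivector. Loosely speaking, the measure of a vector is a length, the measure of a bivector is an area, and the measure of a trivector is a volume.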
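For three dimensions the sums over $i < j < k$ reduce to a single term, which makes the planar rejection easy to check numerically. The Python sketch below is restricted to 3D and to integer input, and its function names are assumptions made for illustration. It computes the component of $w$ perpendicular to the plane of $u$ and $v$ as the trivector coefficient (a 3×3 determinant) times the dual of $u \wedge v$ (the cross product), divided by the squared parallelogram area.

```python
from fractions import Fraction

def det3(a, b, c):
    """Determinant of the 3x3 matrix with rows a, b, c."""
    return (a[0] * (b[1] * c[2] - b[2] * c[1])
            - a[1] * (b[0] * c[2] - b[2] * c[0])
            + a[2] * (b[0] * c[1] - b[1] * c[0]))

def cross(u, v):
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])

def reject_from_plane(w, u, v):
    """Return (x, area_sq, d): rejection of w from span(u, v), squared area, trivector coefficient."""
    n = cross(u, v)                  # dual of the bivector u ^ v
    area_sq = sum(c * c for c in n)  # squared parallelogram area, -(u ^ v)^2
    d = det3(w, u, v)                # coefficient of w ^ u ^ v on e1 ^ e2 ^ e3
    x = tuple(Fraction(d * c, area_sq) for c in n)
    return x, area_sq, d

w, u, v = (2, -1, 5), (1, 2, 0), (0, 1, 1)
x, area_sq, d = reject_from_plane(w, u, v)
# Squared volume equals base area squared times height squared.
print(x, area_sq * sum(c * c for c in x) == d * d)
```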

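If a vector is factored directly into projective and rejective terms using the geometric product $v = \frac{1}{u} (u \cdot v + u \wedge v)$, then it is not necessarily obvious that the rejection term, a product of a vector and a bivector, is even a vector. Expansion of the vector-bivector product in terms of the standard basis vectors has the following form.

Let

$$r = \frac{1}{u} (u \wedge v) = \frac{u}{u^2} (u \wedge v) = \frac{1}{\Vert u \Vert^2} u (u \wedge v)$$

It can be shown that

$$r = \frac{1}{\Vert u \Vert^2} \sum_{i<j} \begin{vmatrix} u_i & u_j \\ v_i & v_j \end{vmatrix} \begin{vmatrix} u_i & u_j \\ e_i & e_j \end{vmatrix}$$

(a result that can be shown more easily straight from $r = v - \hat{u} (\hat{u} \cdot v)$).

The rejective term is perpendicular to $u$, since $\begin{vmatrix} u_i & u_j \\ u_i & u_j \end{vmatrix} = 0$ implies $r \cdot u = 0$.

The squared magnitude of $r$ is

$$\Vert r \Vert^2 = r \cdot v = \frac{1}{\Vert u \Vert^2} \sum_{i<j} \begin{vmatrix} u_i & u_j \\ v_i & v_j \end{vmatrix}^2$$

So, the quantity

$$\Vert r \Vert^2 \Vert u \Vert^2 = \sum_{i<j} \begin{vmatrix} u_i & u_j \\ v_i & v_j \end{vmatrix}^2$$

is the squared area of the parallelogram formed by $u$ and $v$.

It is also noteworthy that the bivector can be expressed as

$$u \wedge v = \sum_{i<j} \begin{vmatrix} u_i & u_j \\ v_i & v_j \end{vmatrix} e_i \wedge e_j$$

Thus it is natural, if one considers each term $e_i \wedge e_j$ as a basis vector of the bivector space, to define the (squared) "length" of that bivector as the (squared) area.

Going back to the geometric product expression for the rejection, $\frac{1}{u} (u \wedge v)$, we see that the length of the quotient, a vector, is in this case the "length" of the bivector divided by the length of the divisor.

This may not be a general result for the length of the product of two k-vectors; however, it is a result that may help build some intuition about the significance of the algebraic operations. Namely,

- When a vector is divided out of the plane (parallelogram span) formed from it and another vector, what remains is the perpendicular component of the remaining vector, and its length is the planar area divided by the length of the vector that was divided out.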

Area of the parallelogram defined by u and v

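If $A$ is the area of the parallelogram defined by $u$ and $v$, then

$$A^2 = \Vert u \times v \Vert^2 = \sum_{i<j} \begin{vmatrix} u_i & u_j \\ v_i & v_j \end{vmatrix}^2$$

and

$$A^2 = -(u \wedge v)^2 = \sum_{i<j} \begin{vmatrix} u_i & u_j \\ v_i & v_j \end{vmatrix}^2$$

Note that this squared bivector is a geometric multiplication; this computation can alternatively be stated as the Gram determinant of the two vectors.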

Angle between two vectors
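As a sketch, and assuming $\theta$ denotes the angle between two non-zero vectors $u$ and $v$ (a symbol introduced here for illustration), the relations below follow from $u \cdot v = \Vert u \Vert \Vert v \Vert \cos\theta$ and from the parallelogram area $\Vert u \Vert \Vert v \Vert \sin\theta$. The first form of $\sin^2\theta$ uses the cross product and is specific to three dimensions; the second uses the squared bivector and holds in any dimension.

```latex
\begin{aligned}
\cos^2\theta &= \frac{(u \cdot v)^2}{\Vert u \Vert^2 \Vert v \Vert^2}, \\
\sin^2\theta &= \frac{\Vert u \times v \Vert^2}{\Vert u \Vert^2 \Vert v \Vert^2}
              = \frac{-(u \wedge v)^2}{u^2 v^2}.
\end{aligned}
```

Adding the two lines gives $\cos^2\theta + \sin^2\theta = 1$, which is the Lagrange identity divided by $\Vert u \Vert^2 \Vert v \Vert^2$.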

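Volume of the parallelepiped formed by three vectors

In vector algebra, the volume of a parallelepiped is given by the square root of the squared norm of the scalar triple product:

$$V^2 = \Vert (u \times v) \cdot w \Vert^2 = \begin{vmatrix} u_1 & u_2 & u_3 \\ v_1 & v_2 & v_3 \\ w_1 & w_2 & w_3 \end{vmatrix}^2$$

In geometric algebra the same quantity is the negated square of the trivector, $V^2 = -(u \wedge v \wedge w)^2$.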

Product of a vector and a bivector

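In order to justify the normal to a plane result above, a general examination of the product of a vector and bivector is required. Namely,

$$w (u \wedge v) = \sum_{i, \, j<k} w_i e_i \begin{vmatrix} u_j & u_k \\ v_j & v_k \end{vmatrix} e_j \wedge e_k$$

This has two parts, the vector part where $i = j$ or $i = k$, and the trivector parts where no indexes are equal. After some index summation trickery, and grouping terms and so forth, this is

$$w (u \wedge v) = \sum_{i<j} (w_i e_j - w_j e_i) \begin{vmatrix} u_i & u_j \\ v_i & v_j \end{vmatrix} + \sum_{i<j<k} \begin{vmatrix} w_i & w_j & w_k \\ u_i & u_j & u_k \\ v_i & v_j & v_k \end{vmatrix} e_i \wedge e_j \wedge e_k$$

The trivector term is $w \wedge u \wedge v$. Expansion of $(u \wedge v) w$ yields the same trivector term (it is the completely symmetric part), and the vector term is negated. Like the geometric product of two vectors, this geometric product can be grouped into symmetric and antisymmetric parts, one of which is a pure k-vector. In analogy, the antisymmetric part of this product can be called a generalized dot product, and is roughly speaking the dot product of a "plane" (bivector) and a vector.

The properties of this generalized dot product remain to be explored, but first here is a summary of the notation

$$w (u \wedge v) = w \cdot (u \wedge v) + w \wedge u \wedge v$$

$$(u \wedge v) w = -w \cdot (u \wedge v) + w \wedge u \wedge v$$

$$w \wedge u \wedge v = \tfrac{1}{2} \left( w (u \wedge v) + (u \wedge v) w \right)$$

$$w \cdot (u \wedge v) = \tfrac{1}{2} \left( w (u \wedge v) - (u \wedge v) w \right)$$

Let $w = x + y$, where $x = a u + b v$, and $y \cdot u = y \cdot v = 0$. Expressing the product $w (u \wedge v)$ in terms of these components gives

$$w (u \wedge v) = x (u \wedge v) + y (u \wedge v) = x \cdot (u \wedge v) + y \cdot (u \wedge v) + y \wedge u \wedge v$$

With the conditions and definitions above, and some manipulation, it can be shown that the term $y \cdot (u \wedge v) = 0$, which then justifies the previous solution of the normal to a plane problem. The vector term of the vector-bivector product is zero when the vector is perpendicular to the plane (bivector), and this vector-bivector "dot product" selects only the components that are in the plane. In analogy to the vector-vector dot product, the name dot product is therefore justified by more than the fact that this is the non-wedge product term of the geometric vector-bivector product.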
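A small worked example with orthonormal basis vectors may make the two parts concrete. Taking the bivector $e_1 \wedge e_2 = e_1 e_2$ (the choice of basis elements is made here only for illustration), a vector lying in the plane produces a pure vector whose sign flips when the order of the factors is reversed, while a vector perpendicular to the plane produces a pure trivector that is unchanged by the reversal:

```latex
\begin{aligned}
e_1 (e_1 \wedge e_2) &= e_1 e_1 e_2 = e_2, &
(e_1 \wedge e_2) e_1 &= e_1 e_2 e_1 = -e_2, \\
e_3 (e_1 \wedge e_2) &= e_3 e_1 e_2 = e_1 e_2 e_3, &
(e_1 \wedge e_2) e_3 &= e_1 e_2 e_3.
\end{aligned}
```

The first row is the antisymmetric "dot product" part and the second row is the symmetric wedge part of the vector-bivector product.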

Derivative of a unit vector

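It can be shown that a unit vector derivative can be expressed using the cross product

$$\frac{d}{dt} \left( \frac{r}{\Vert r \Vert} \right) = \frac{1}{\Vert r \Vert^3} \left( r \times \frac{dr}{dt} \right) \times r = \left( \hat{r} \times \frac{1}{\Vert r \Vert} \frac{dr}{dt} \right) \times \hat{r}$$

The equivalent geometric product generalization is

$$\frac{d}{dt} \left( \frac{r}{\Vert r \Vert} \right) = \frac{1}{\Vert r \Vert^3} r \left( r \wedge \frac{dr}{dt} \right) = \frac{1}{r} \left( \hat{r} \wedge \frac{dr}{dt} \right)$$

Thus this derivative is the component of $\frac{1}{\Vert r \Vert} \frac{dr}{dt}$ in the direction perpendicular to $r$. In other words, this is $\frac{1}{\Vert r \Vert} \frac{dr}{dt}$ minus the projection of that vector onto $\hat{r}$.

This makes sense intuitively, since a unit vector is constrained to circular motion, and any change to a unit vector due to a change in its generating vector has to be in the direction of the rejection of $\hat{r}$ from $\frac{dr}{dt}$. That rejection has to be scaled by $1 / \Vert r \Vert$ to get the final result.

When the objective is not a comparison with the cross product, it is also notable that this unit vector derivative can be written

$$r \frac{d\hat{r}}{dt} = \hat{r} \wedge \frac{dr}{dt}$$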
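The unit vector derivative can be checked numerically. The Python sketch below compares a central finite difference of $r / \Vert r \Vert$ with the closed form $(\Vert r \Vert^2 \, dr/dt - (r \cdot dr/dt) \, r) / \Vert r \Vert^3$, which is the vector obtained by expanding $\frac{1}{\Vert r \Vert^3} r (r \wedge dr/dt)$. The curve `r(t)`, the step size and the function names are arbitrary choices made for this illustration.

```python
import math

def r(t):
    """An arbitrary sample curve (hypothetical, for illustration only)."""
    return (1.0 + t, t * t, 2.0)

def dr(t):
    """Derivative of the sample curve."""
    return (1.0, 2.0 * t, 0.0)

def unit(v):
    n = math.sqrt(sum(x * x for x in v))
    return tuple(x / n for x in v)

def unit_derivative(rv, drv):
    """(|r|^2 dr - (r . dr) r) / |r|^3, the expansion of r (r ^ dr) / |r|^3."""
    rr = sum(x * x for x in rv)
    rd = sum(x * y for x, y in zip(rv, drv))
    n3 = rr ** 1.5
    return tuple((rr * d - rd * x) / n3 for x, d in zip(rv, drv))

def numeric_unit_derivative(t, h=1e-6):
    """Central finite difference of the unit vector r(t) / |r(t)|."""
    a, b = unit(r(t + h)), unit(r(t - h))
    return tuple((x - y) / (2.0 * h) for x, y in zip(a, b))

t = 0.5
exact = unit_derivative(r(t), dr(t))
approx = numeric_unit_derivative(t)
print(max(abs(p - q) for p, q in zip(exact, approx)))
```

The closed-form result is also perpendicular to `r(t)` up to rounding, as expected for the derivative of a vector of constant length.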

Citations

- [1] Vold 1993, p. 1.

- [2] Gull, Lasenby & Doran 1993, p. 6.

References and further reading

- Vold, Terje G. (1993), "An introduction to Geometric Algebra with an Application in Rigid Body mechanics", American Journal of Physics 61 (6): 491, doi:10.1119/1.17201, Bibcode: 1993AmJPh..61..491V, http://www.helsinki.fi/kemia/fysikaalinen/opetus/matnum/Lectures/2009/Vold_1.pdf

- Gull, S. F.; Lasenby, A. N.; Doran, C. J. L. (1993), Imaginary Numbers are not Real – the Geometric Algebra of Spacetime, http://geometry.mrao.cam.ac.uk/wp-content/uploads/2015/02/ImagNumbersArentReal.pdf
