Laplace distribution

|

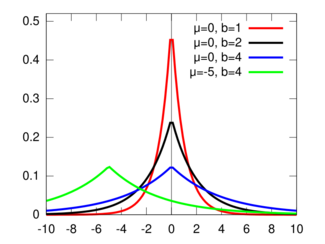

Probability density function  | |||

|

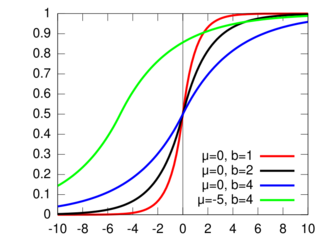

Cumulative distribution function  | |||

| Parameters |

location (real) scale (real) | ||

|---|---|---|---|

| Support | |||

| CDF | |||

| Quantile | |||

| Mean | |||

| Median | |||

| Mode | |||

| Variance | |||

| Skewness | |||

| Kurtosis | |||

| Entropy | |||

| MGF | |||

| CF | |||

In probability theory and statistics, the Laplace distribution is a continuous probability distribution named after Pierre-Simon Laplace. It is also sometimes called the double exponential distribution, because it can be thought of as two exponential distributions (with an additional location parameter) spliced together along the x-axis,[2] although that term is also sometimes used to refer to the Gumbel distribution. The difference between two independent identically distributed exponential random variables is governed by a Laplace distribution, as is a Brownian motion evaluated at an exponentially distributed random time. Increments of Laplace motion or a variance gamma process evaluated over the time scale also have a Laplace distribution.

Definitions

Probability density function

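A random variable has a $\operatorname{Laplace}(\mu, b)$ distribution if its probability density function is

$$f(x \mid \mu, b) = \frac{1}{2b} \exp\left(-\frac{|x - \mu|}{b}\right),$$

where $\mu$ is a location parameter, and $b > 0$, which is sometimes referred to as the "diversity", is a scale parameter. If $\mu = 0$ and $b = 1$, the density on the positive half-line is exactly that of an exponential distribution with rate 1, scaled by 1/2.[3]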

The probability density function of the Laplace distribution is also reminiscent of the normal distribution; however, whereas the normal distribution is expressed in terms of the squared difference from the mean $\mu$, the Laplace density is expressed in terms of the absolute difference from the mean. Consequently, the Laplace distribution has fatter tails than the normal distribution. It is a special case of the generalized normal distribution and the hyperbolic distribution. Continuous symmetric distributions that have exponential tails, like the Laplace distribution, but whose probability density functions are differentiable at the mode include the logistic distribution, the hyperbolic secant distribution, and the Champernowne distribution.
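The tail comparison above can be illustrated numerically. The following is a minimal sketch using only the Python standard library; the function names `laplace_pdf` and `normal_pdf` are hypothetical, and the comparison assumes both densities are matched to the same variance ($2b^2$ for the Laplace distribution).

```python
import math

def laplace_pdf(x, mu=0.0, b=1.0):
    """Density of Laplace(mu, b): exp(-|x - mu| / b) / (2b)."""
    if b <= 0:
        raise ValueError("scale b must be positive")
    return math.exp(-abs(x - mu) / b) / (2.0 * b)

def normal_pdf(x, mean=0.0, var=1.0):
    """Density of a normal distribution with the given mean and variance."""
    return math.exp(-(x - mean) ** 2 / (2.0 * var)) / math.sqrt(2.0 * math.pi * var)

if __name__ == "__main__":
    # Laplace(0, 1) has variance 2 * b**2 = 2, so compare with N(0, 2).
    for x in (0.0, 2.0, 6.0):
        print(x, laplace_pdf(x), normal_pdf(x, var=2.0))
```

Far from the mean, the Laplace density decays like $e^{-|x|/b}$ while the normal density decays like $e^{-x^2/(2\sigma^2)}$, so the Laplace value eventually dominates.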

Cumulative distribution function

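The Laplace density is easy to integrate (if one distinguishes the two symmetric cases $x < \mu$ and $x \ge \mu$) because of the absolute value function. Its cumulative distribution function is as follows:

$$F(x) = \begin{cases} \frac{1}{2} \exp\left(\frac{x - \mu}{b}\right) & \text{if } x < \mu \\ 1 - \frac{1}{2} \exp\left(-\frac{x - \mu}{b}\right) & \text{if } x \ge \mu \end{cases} \;=\; \frac{1}{2} + \frac{1}{2} \operatorname{sgn}(x - \mu) \left(1 - \exp\left(-\frac{|x - \mu|}{b}\right)\right).$$

The inverse cumulative distribution function is given by

$$F^{-1}(p) = \mu - b \operatorname{sgn}(p - 0.5) \ln(1 - 2|p - 0.5|).$$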
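As a minimal sketch, the cumulative distribution function and its inverse can be written directly from the piecewise form, with $F(x) = \tfrac12 e^{(x-\mu)/b}$ below $\mu$ and $1 - \tfrac12 e^{-(x-\mu)/b}$ above it. The function names `laplace_cdf` and `laplace_quantile` are hypothetical.

```python
import math

def laplace_cdf(x, mu=0.0, b=1.0):
    """CDF of Laplace(mu, b), split at the location parameter."""
    z = (x - mu) / b
    if z < 0:
        return 0.5 * math.exp(z)
    return 1.0 - 0.5 * math.exp(-z)

def laplace_quantile(p, mu=0.0, b=1.0):
    """Inverse CDF: mu - b * sgn(p - 1/2) * ln(1 - 2|p - 1/2|)."""
    if not 0.0 < p < 1.0:
        raise ValueError("p must lie strictly between 0 and 1")
    sign = math.copysign(1.0, p - 0.5)
    return mu - b * sign * math.log(1.0 - 2.0 * abs(p - 0.5))

if __name__ == "__main__":
    for p in (0.05, 0.5, 0.95):
        x = laplace_quantile(p, mu=2.0, b=3.0)
        print(p, x, laplace_cdf(x, mu=2.0, b=3.0))
```

Applying `laplace_cdf` to the output of `laplace_quantile` should return the original probability up to rounding error.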

Properties

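Moments

The $r$-th raw moment of $X \sim \operatorname{Laplace}(\mu, b)$ is

$$\mu_r' = \frac{1}{2} \sum_{k=0}^{r} \left[ \frac{r!}{(r-k)!} \, b^k \mu^{r-k} \left\{ 1 + (-1)^k \right\} \right].$$

For example, $r = 1$ gives the mean $\mu$ and $r = 2$ gives $\mu^2 + 2b^2$, so the variance is $2b^2$.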

Related distributions

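- If $X \sim \operatorname{Laplace}(\mu, b)$ then $kX + c \sim \operatorname{Laplace}(k\mu + c, |k| b)$.

- If $X \sim \operatorname{Laplace}(0, 1)$ then $bX \sim \operatorname{Laplace}(0, b)$.

- If $X \sim \operatorname{Laplace}(0, b)$ then $|X| \sim \operatorname{Exponential}(b^{-1})$ (exponential distribution).

- If $X, Y \sim \operatorname{Exponential}(\lambda)$ are independent then $X - Y \sim \operatorname{Laplace}(0, \lambda^{-1})$.

- If $X \sim \operatorname{Laplace}(\mu, b)$ then $|X - \mu| \sim \operatorname{Exponential}(b^{-1})$.

- If $X \sim \operatorname{Laplace}(\mu, b)$ then $X \sim \operatorname{EPD}(\mu, b, 1)$ (exponential power distribution).

- If $X_1, \ldots, X_4 \sim N(0, 1)$ are independent (normal distribution) then $X_1 X_2 - X_3 X_4 \sim \operatorname{Laplace}(0, 1)$ and $(X_1^2 - X_2^2 + X_3^2 - X_4^2)/2 \sim \operatorname{Laplace}(0, 1)$.

- If $X_1, \ldots, X_n \sim \operatorname{Laplace}(\mu, b)$ are independent then $\frac{2}{b} \sum_{i=1}^{n} |X_i - \mu| \sim \chi^2(2n)$ (chi-squared distribution).

- If $X, Y \sim \operatorname{Laplace}(\mu, b)$ are independent then $\frac{|X - \mu|}{|Y - \mu|} \sim \operatorname{F}(2, 2)$. (F-distribution)

- If $X, Y \sim U(0, 1)$ are independent (uniform distribution) then $\log(X/Y) \sim \operatorname{Laplace}(0, 1)$.

- If $X \sim \operatorname{Exponential}(\lambda)$ and $Y \sim \operatorname{Bernoulli}(0.5)$ (Bernoulli distribution) independent of $X$, then $X(2Y - 1) \sim \operatorname{Laplace}(0, \lambda^{-1})$.

- If $X \sim \operatorname{Exponential}(\lambda)$ and $Y \sim \operatorname{Exponential}(\nu)$ independent of $X$, then $\lambda X - \nu Y \sim \operatorname{Laplace}(0, 1)$.

- If $X$ has a Rademacher distribution and $Y \sim \operatorname{Exponential}(\lambda)$ is independent of $X$, then $XY \sim \operatorname{Laplace}(0, 1/\lambda)$.

- If $V \sim \operatorname{Exponential}(1)$ and $Z \sim N(0, 1)$ independent of $V$, then $X = \mu + b \sqrt{2V} Z \sim \operatorname{Laplace}(\mu, b)$.

- If $X \sim \operatorname{GeometricStable}(2, 0, \lambda, 0)$ (geometric stable distribution) then $X \sim \operatorname{Laplace}(0, \lambda)$.

- The Laplace distribution is a limiting case of the hyperbolic distribution.

- If $X \mid Y \sim N(\mu, Y^2)$ with $Y \sim \operatorname{Rayleigh}(b)$ (Rayleigh distribution) then $X \sim \operatorname{Laplace}(\mu, b)$. Note that if $Y \sim \operatorname{Rayleigh}(b)$, then $Y^2 \sim \operatorname{Gamma}(1, 2b^2)$ with $\operatorname{E}(Y^2) = 2b^2$, which in turn equals the exponential distribution $\operatorname{Exp}(1/(2b^2))$.

- Given an integer $n \ge 1$, if $X_i, Y_i \sim \Gamma\left(\frac{1}{n}, b\right)$ are independent (gamma distribution, using the $k, \theta$ characterization), then $\sum_{i=1}^{n} \left( \frac{\mu}{n} + X_i - Y_i \right) \sim \operatorname{Laplace}(\mu, b)$ (infinite divisibility)[4]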

- If $X$ has a Laplace distribution, then $Y = e^X$ has a log-Laplace distribution; conversely, if $X$ has a log-Laplace distribution, then its logarithm has a Laplace distribution.
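Several of the relations in this list can be checked by simulation. The sketch below is an illustration rather than a proof: it draws samples for three constructions (difference of two i.i.d. exponentials, log-ratio of two i.i.d. uniforms, and $X_1 X_2 - X_3 X_4$ for standard normals) and compares the sample mean of $|X|$ with the scale $b$, using the fact that $\operatorname{E}|X| = b$ for a $\operatorname{Laplace}(0, b)$ variable. The helper names, sample size and seed are arbitrary choices.

```python
import math
import random

def mean_abs(values):
    """Sample mean of |v|; estimates b for a Laplace(0, b) sample."""
    return sum(abs(v) for v in values) / len(values)

def simulate_relations(n=100_000, lam=2.0, seed=0):
    rng = random.Random(seed)
    # Difference of two i.i.d. Exponential(lam) draws: Laplace(0, 1/lam).
    diffs = [rng.expovariate(lam) - rng.expovariate(lam) for _ in range(n)]
    # Log-ratio of two i.i.d. uniforms: Laplace(0, 1).
    # 1 - random() lies in (0, 1], which avoids log(0) and division by zero.
    log_ratios = [
        math.log((1.0 - rng.random()) / (1.0 - rng.random())) for _ in range(n)
    ]
    # X1*X2 - X3*X4 with independent standard normals: Laplace(0, 1).
    products = [
        rng.gauss(0, 1) * rng.gauss(0, 1) - rng.gauss(0, 1) * rng.gauss(0, 1)
        for _ in range(n)
    ]
    return mean_abs(diffs), mean_abs(log_ratios), mean_abs(products)

if __name__ == "__main__":
    # Expect values close to 1/lam = 0.5, 1 and 1 respectively.
    print(simulate_relations())
```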

Probability of a Laplace being greater than another

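Let $X, Y$ be independent Laplace random variables, $X \sim \operatorname{Laplace}(\mu_X, b_X)$ and $Y \sim \operatorname{Laplace}(\mu_Y, b_Y)$, and suppose we want to compute $P(X > Y)$.

Using the scaling and shifting properties above, $P(X > Y)$ can be reduced to $P(\mu + b Z_1 > Z_2)$, where $Z_1, Z_2 \sim \operatorname{Laplace}(0, 1)$ are independent, $\mu = (\mu_X - \mu_Y)/b_Y$ and $b = b_X/b_Y$. This probability is equal to

$$P(\mu + b Z_1 > Z_2) = \begin{cases} \dfrac{b^2 e^{\mu/b} - e^{\mu}}{2(b^2 - 1)}, & \text{when } \mu < 0 \\[2ex] 1 - \dfrac{b^2 e^{-\mu/b} - e^{-\mu}}{2(b^2 - 1)}, & \text{when } \mu > 0 \end{cases}$$

When $b = 1$, both expressions are replaced by their limit as $b \to 1$:

$$P(\mu + Z_1 > Z_2) = \begin{cases} e^{\mu} \dfrac{2 - \mu}{4}, & \text{when } \mu < 0 \\[2ex] 1 - e^{-\mu} \dfrac{2 + \mu}{4}, & \text{when } \mu > 0 \end{cases}$$

To compute the case for $\mu > 0$, note that

$$P(\mu + b Z_1 > Z_2) = 1 - P(\mu + b Z_1 < Z_2) = 1 - P(-\mu - b Z_1 > -Z_2) = 1 - P(-\mu + b Z_1 > Z_2),$$

since $Z \sim -Z$ when $Z \sim \operatorname{Laplace}(0, 1)$.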
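The closed form can be sketched in code as follows. This is an illustrative implementation, not a reference one: the function name `prob_x_greater_y` is hypothetical, the reduction uses $\mu = (\mu_X - \mu_Y)/b_Y$ and $b = b_X/b_Y$ (obtained by standardizing both variables), the case $\mu < 0$ uses $\frac{b^2 e^{\mu/b} - e^{\mu}}{2(b^2 - 1)}$ with limit $e^{\mu}(2 - \mu)/4$ at $b = 1$, and the tolerance for switching to the limit is an arbitrary choice.

```python
import math

def _lower_branch(m, b):
    """P(m + b*Z1 > Z2) for m <= 0, with Z1, Z2 i.i.d. Laplace(0, 1)."""
    if math.isclose(b, 1.0, rel_tol=1e-8):
        # Limit of the general expression as b -> 1.
        return math.exp(m) * (2.0 - m) / 4.0
    return (b * b * math.exp(m / b) - math.exp(m)) / (2.0 * (b * b - 1.0))

def prob_x_greater_y(mu_x, b_x, mu_y, b_y):
    """P(X > Y) for independent X ~ Laplace(mu_x, b_x), Y ~ Laplace(mu_y, b_y)."""
    if b_x <= 0 or b_y <= 0:
        raise ValueError("scales must be positive")
    m = (mu_x - mu_y) / b_y
    b = b_x / b_y
    if m <= 0:
        return _lower_branch(m, b)
    # Symmetry Z ~ -Z maps the positive case onto the negative one.
    return 1.0 - _lower_branch(-m, b)

if __name__ == "__main__":
    print(prob_x_greater_y(0.0, 1.0, 0.0, 1.0))
    print(prob_x_greater_y(1.0, 2.0, 0.0, 3.0))
```

A useful sanity check is that swapping the roles of the two variables gives complementary probabilities, since $P(X > Y) + P(Y > X) = 1$ for continuous variables.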

Relation to the exponential distribution

A Laplace random variable can be represented as the difference of two independent and identically distributed (i.i.d.) exponential random variables.[4] One way to show this is by using the characteristic function approach. For independent random variables, the characteristic function of their sum (which uniquely determines the distribution of the sum) is the product of the individual characteristic functions.

Consider two i.i.d. random variables $X, Y \sim \operatorname{Exponential}(\lambda)$. The characteristic functions for $X$ and $-Y$ are

$$\frac{\lambda}{-it + \lambda}, \quad \frac{\lambda}{it + \lambda}$$

respectively. On multiplying these characteristic functions (equivalent to taking the characteristic function of the sum of the random variables $X + (-Y)$), the result is

$$\frac{\lambda^2}{(-it + \lambda)(it + \lambda)} = \frac{\lambda^2}{t^2 + \lambda^2}.$$

This is the same as the characteristic function for $Z \sim \operatorname{Laplace}(0, 1/\lambda)$, which is

$$\frac{1}{1 + \frac{t^2}{\lambda^2}}.$$
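The identity can be confirmed numerically with complex arithmetic. In this minimal sketch the function names are hypothetical; it uses the characteristic function $\lambda/(\lambda - it)$ of an $\operatorname{Exponential}(\lambda)$ variable and $e^{i\mu t}/(1 + b^2 t^2)$ for $\operatorname{Laplace}(\mu, b)$, and evaluates the characteristic function of $-Y$ as that of $Y$ at $-t$.

```python
import cmath

def cf_exponential(t, lam):
    """Characteristic function of Exponential(lam): lam / (lam - i t)."""
    return lam / (lam - 1j * t)

def cf_laplace(t, mu, b):
    """Characteristic function of Laplace(mu, b): exp(i mu t) / (1 + b^2 t^2)."""
    return cmath.exp(1j * mu * t) / (1.0 + b * b * t * t)

def cf_difference_of_exponentials(t, lam):
    """CF of X - Y for i.i.d. Exponential(lam): product of the CFs of X and -Y."""
    return cf_exponential(t, lam) * cf_exponential(-t, lam)

if __name__ == "__main__":
    lam = 2.0
    for t in (-3.0, 0.0, 0.5, 4.0):
        print(t, cf_difference_of_exponentials(t, lam), cf_laplace(t, 0.0, 1.0 / lam))
```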

Sargan distributions

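Sargan distributions are a system of distributions of which the Laplace distribution is a core member. A $p$-th order Sargan distribution has density[5][6]

$$f_p(x) = \frac{\alpha}{2} \exp(-\alpha |x|) \, \frac{1 + \sum_{j=1}^{p} \beta_j \alpha^j |x|^j}{1 + \sum_{j=1}^{p} j! \, \beta_j},$$

for parameters $\alpha > 0$, $\beta_j \ge 0$. The Laplace distribution results for $p = 0$.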
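As a rough numerical check, the sketch below evaluates a Sargan density and integrates it with the trapezoidal rule. It assumes the form $f_p(x) = \frac{\alpha}{2} e^{-\alpha|x|} \left(1 + \sum_j \beta_j \alpha^j |x|^j\right) / \left(1 + \sum_j j!\,\beta_j\right)$; the leading factor $\alpha/2$ is the choice that makes the density integrate to one, and should be checked against the cited references. The function names, parameter values and integration grid are arbitrary.

```python
import math

def sargan_pdf(x, alpha, betas):
    """Sargan density of order p = len(betas); betas[j-1] multiplies (alpha*|x|)**j."""
    ax = alpha * abs(x)
    numerator = 1.0 + sum(beta * ax ** j for j, beta in enumerate(betas, start=1))
    denominator = 1.0 + sum(
        math.factorial(j) * beta for j, beta in enumerate(betas, start=1)
    )
    return 0.5 * alpha * math.exp(-ax) * numerator / denominator

def integrate_trapezoid(f, lo, hi, steps):
    """Composite trapezoidal rule on [lo, hi] with the given number of steps."""
    h = (hi - lo) / steps
    total = 0.5 * (f(lo) + f(hi))
    for i in range(1, steps):
        total += f(lo + i * h)
    return total * h

if __name__ == "__main__":
    area = integrate_trapezoid(lambda x: sargan_pdf(x, 1.5, [0.5, 0.2]), -40.0, 40.0, 40_000)
    print(area)
```

With an empty `betas` list (order 0), the function reduces to the density of $\operatorname{Laplace}(0, 1/\alpha)$.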

Statistical inference

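Given $n$ independent and identically distributed samples $x_1, x_2, \ldots, x_n$, the maximum likelihood estimator (MLE) of $\mu$ is the sample median,[7]

$$\hat{\mu} = \operatorname{med}(x).$$

The MLE of $b$ is the mean absolute deviation from the median,

$$\hat{b} = \frac{1}{n} \sum_{i=1}^{n} |x_i - \hat{\mu}|,$$

revealing a link between the Laplace distribution and least absolute deviations. A correction for small samples can be applied as follows:

$$\hat{b}^* = \hat{b} \cdot \frac{n}{n - 2}$$

(see: exponential distribution#Parameter estimation).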
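The maximum likelihood fit can be sketched in a few lines. This example uses only the standard library; the function name `fit_laplace` is hypothetical, and it takes the location estimate to be the sample median and the scale estimate to be the mean absolute deviation from that median, without any small-sample correction.

```python
import statistics

def fit_laplace(samples):
    """Return (mu_hat, b_hat): sample median and mean absolute deviation from it."""
    data = list(samples)
    if not data:
        raise ValueError("at least one sample is required")
    mu_hat = statistics.median(data)
    b_hat = sum(abs(x - mu_hat) for x in data) / len(data)
    return mu_hat, b_hat

if __name__ == "__main__":
    print(fit_laplace([1.0, 2.0, 3.0, 4.0, 10.0]))
```

Note that the outlying value 10 shifts the scale estimate but leaves the location estimate at the middle observation, which reflects the robustness of the median.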

Occurrence and applications

The Laplace distribution has been used in speech recognition to model priors on DFT coefficients[8] and in JPEG image compression to model AC coefficients[9] generated by a DCT.

- The addition of noise drawn from a Laplacian distribution, with scaling parameter appropriate to a function's sensitivity, to the output of a statistical database query is the most common means to provide differential privacy in statistical databases.

- In regression analysis, the least absolute deviations estimate arises as the maximum likelihood estimate if the errors have a Laplace distribution.

- The Lasso can be thought of as a Bayesian regression with a Laplacian prior for the coefficients.[11]

- In hydrology the Laplace distribution is applied to extreme events such as annual maximum one-day rainfalls and river discharges. For example, CumFreq[10] can fit the Laplace distribution to ranked annual maximum one-day rainfalls and show a 90% confidence belt based on the binomial distribution, with the rainfall data represented by plotting positions as part of the cumulative frequency analysis.

- The Laplace distribution has applications in finance. For example, S.G. Kou developed a model for financial instrument prices incorporating a Laplace distribution (in some cases an asymmetric Laplace distribution) to address problems of skewness, kurtosis and the volatility smile that often occur when using a normal distribution for pricing these instruments.[12][13]

- The Laplace distribution, being a composite or double distribution, is applicable in situations where the lower values originate under different external conditions than the higher ones so that they follow a different pattern.[14]
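The differential privacy application listed above can be illustrated with a short sketch of the Laplace mechanism. It assumes the usual calibration in which the noise scale is the query's sensitivity divided by the privacy parameter $\varepsilon$; the function names are hypothetical, and plain floating-point sampling like this is meant for illustration only, not as a hardened privacy implementation.

```python
import random

def laplace_noise(scale, rng):
    """Laplace(0, scale) noise as the difference of two i.i.d. exponentials."""
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)

def laplace_mechanism(true_answer, sensitivity, epsilon, rng):
    """Return the true answer plus Laplace noise with scale sensitivity / epsilon."""
    if sensitivity <= 0 or epsilon <= 0:
        raise ValueError("sensitivity and epsilon must be positive")
    scale = sensitivity / epsilon  # assumed calibration: b = sensitivity / epsilon
    return true_answer + laplace_noise(scale, rng)

if __name__ == "__main__":
    rng = random.Random(1)
    # A counting query has sensitivity 1: one record changes the count by at most 1.
    print(laplace_mechanism(100.0, sensitivity=1.0, epsilon=0.5, rng=rng))
```

Smaller values of `epsilon` give a larger noise scale and therefore stronger privacy at the cost of accuracy.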

Random variate generation

Given a random variable $U$ drawn from the uniform distribution in the interval $(-1/2, 1/2)$, the random variable

$$X = \mu - b \operatorname{sgn}(U) \ln(1 - 2|U|)$$

has a Laplace distribution with parameters $\mu$ and $b$. This follows from the inverse cumulative distribution function given above.

A $\operatorname{Laplace}(0, b)$ variate can also be generated as the difference of two i.i.d. $\operatorname{Exponential}(1/b)$ random variables. Equivalently, a $\operatorname{Laplace}(0, 1)$ variate can also be generated as the logarithm of the ratio of two i.i.d. uniform random variables.
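Inverse transform sampling can be sketched as follows, using $X = \mu - b \operatorname{sgn}(U) \ln(1 - 2|U|)$ with $U$ uniform on $(-1/2, 1/2)$. The function name `sample_laplace` is hypothetical. Because `random.random()` returns values in $[0, 1)$, the shifted value can equal exactly $-1/2$, which would lead to $\ln 0$; the sketch redraws in that case.

```python
import math
import random

def sample_laplace(mu, b, rng):
    """Draw one Laplace(mu, b) variate by inverting the CDF."""
    if b <= 0:
        raise ValueError("scale b must be positive")
    u = rng.random() - 0.5  # uniform on [-0.5, 0.5)
    while u == -0.5:  # avoid log(0) at the closed endpoint
        u = rng.random() - 0.5
    return mu - b * math.copysign(1.0, u) * math.log(1.0 - 2.0 * abs(u))

if __name__ == "__main__":
    rng = random.Random(42)
    print([sample_laplace(1.0, 2.0, rng) for _ in range(5)])
```

For a large sample, the sample median should be close to `mu` and the mean absolute deviation from it close to `b`.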

History

This distribution is often referred to as "Laplace's first law of errors". Laplace published it in 1774, modeling the frequency of an error as an exponential function of its magnitude once its sign was disregarded. He later replaced this model with his "second law of errors", based on the normal distribution, after the discovery of the central limit theorem.[15][16]

Keynes published a paper in 1911 based on his earlier thesis wherein he showed that the Laplace distribution minimised the absolute deviation from the median.[17]

See also

- Generalized normal distribution

- Multivariate Laplace distribution

- Besov measure, a generalisation of the Laplace distribution to function spaces

- Cauchy distribution, also called the "Lorentzian distribution", i.e. the distribution whose density is, up to normalization, the Fourier transform of the Laplace density

- Characteristic function (probability theory)

References

- Norton, Matthew; Khokhlov, Valentyn; Uryasev, Stan (2019). "Calculating CVaR and bPOE for common probability distributions with application to portfolio optimization and density estimation". Annals of Operations Research (Springer) 299 (1–2): 1281–1315. doi:10.1007/s10479-019-03373-1. http://uryasev.ams.stonybrook.edu/wp-content/uploads/2019/10/Norton2019_CVaR_bPOE.pdf. Retrieved 2023-02-27.

- Chattamvelli, Rajan; Shanmugam, Ramalingam (2021), "Laplace Distribution" (in en), Continuous Distributions in Engineering and the Applied Sciences – Part II (Cham: Springer International Publishing): pp. 189–199, doi:10.1007/978-3-031-02435-1_4, ISBN 978-3-031-01307-2, https://link.springer.com/10.1007/978-3-031-02435-1_4, retrieved 2025-04-04

- Huang, Yunfei. (2022). "Sparse inference and active learning of stochastic differential equations from data". Scientific Reports 12 (1): 21691. doi:10.1038/s41598-022-25638-9. PMID 36522347. Bibcode: 2022NatSR..1221691H.

- Kotz, Samuel; Kozubowski, Tomasz J.; Podgórski, Krzysztof (2001). The Laplace distribution and generalizations: a revisit with applications to Communications, Economics, Engineering and Finance. Birkhauser. pp. 23 (Proposition 2.2.2, Equation 2.2.8). ISBN 9780817641665. https://books.google.com/books?id=cb8B07hwULUC&q=laplace+distribution+exponential+characteristic+function&pg=PA23.

- Everitt, B.S. (2002) The Cambridge Dictionary of Statistics, CUP. ISBN 0-521-81099-X

- Johnson, N.L., Kotz S., Balakrishnan, N. (1994) Continuous Univariate Distributions, Wiley. ISBN 0-471-58495-9. p. 60

- Robert M. Norton (May 1984). "The Double Exponential Distribution: Using Calculus to Find a Maximum Likelihood Estimator". The American Statistician (American Statistical Association) 38 (2): 135–136. doi:10.2307/2683252.

- Eltoft, T.; Taesu Kim; Te-Won Lee (2006). "On the multivariate Laplace distribution". IEEE Signal Processing Letters 13 (5): 300–303. doi:10.1109/LSP.2006.870353. Bibcode: 2006ISPL...13..300E. http://eo.uit.no/publications/TE-SPL-06.pdf. Retrieved 2012-07-04.

- Minguillon, J.; Pujol, J. (2001). "JPEG standard uniform quantization error modeling with applications to sequential and progressive operation modes". Journal of Electronic Imaging 10 (2): 475–485. doi:10.1117/1.1344592. Bibcode: 2001JEI....10..475M. http://openaccess.uoc.edu/webapps/o2/bitstream/10609/6263/6/jei-jpeg.pdf.

- CumFreq for probability distribution fitting

- Pardo, Scott (2020). Statistical Analysis of Empirical Data Methods for Applied Sciences. Springer. p. 58. ISBN 978-3-030-43327-7. https://books.google.com/books?id=k0nhDwAAQBAJ&pg=PA58.

- Kou, S.G. (August 8, 2002). "A Jump-Diffusion Model for Option Pricing". Management Science 48 (8): 1086–1101. doi:10.1287/mnsc.48.8.1086.166. https://www.jstor.org/stable/822677. Retrieved 2022-03-01.

- Chen, Jian (2018). General Equilibrium Option Pricing Method: Theoretical and Empirical Study. Springer. p. 70. ISBN 9789811074288.

- A collection of composite distributions

- Laplace, P-S. (1774). Mémoire sur la probabilité des causes par les évènements. Mémoires de l’Academie Royale des Sciences Presentés par Divers Savan, 6, 621–656

- Wilson, Edwin Bidwell (1923). "First and Second Laws of Error". Journal of the American Statistical Association (Informa UK Limited) 18 (143): 841–851. doi:10.1080/01621459.1923.10502116. ISSN 0162-1459.

This article incorporates text from this source, which is in the public domain.


- Keynes, J. M. (1911). "The Principal Averages and the Laws of Error which Lead to Them". Journal of the Royal Statistical Society (JSTOR) 74 (3): 322–331. doi:10.2307/2340444. ISSN 0952-8385. https://zenodo.org/record/2253549.

External links

- Hazewinkel, Michiel, ed. (2001), "Laplace distribution", Encyclopedia of Mathematics, Springer Science+Business Media B.V. / Kluwer Academic Publishers, ISBN 978-1-55608-010-4, https://www.encyclopediaofmath.org/index.php?title=p/l057460
