Cartesian tensor

In geometry and linear algebra, a Cartesian tensor uses an orthonormal basis to represent a tensor in a Euclidean space in the form of components. Converting a tensor's components from one such basis to another is done through an orthogonal transformation.

The most familiar coordinate systems are the two-dimensional and three-dimensional Cartesian coordinate systems. Cartesian tensors may be used with any Euclidean space, or more technically, any finite-dimensional vector space over the field of real numbers that has an inner product.

Use of Cartesian tensors occurs in physics and engineering, such as with the Cauchy stress tensor and the moment of inertia tensor in rigid body dynamics. Sometimes general curvilinear coordinates are convenient, as in high-deformation continuum mechanics, or even necessary, as in general relativity. While orthonormal bases may be found for some such coordinate systems (e.g. tangent to spherical coordinates), Cartesian tensors may provide considerable simplification for applications in which rotations of rectilinear coordinate axes suffice. The transformation is a passive transformation, since the coordinates are changed and not the physical system.

Cartesian basis and related terminology

Vectors in three dimensions

In 3D Euclidean space, $\mathbb{R}^3$, the standard basis is ex, ey, ez. The basis vectors point along the x-, y-, and z-axes respectively, and all are unit vectors (normalized), so the basis is orthonormal.

Throughout, when referring to Cartesian coordinates in three dimensions, a right-handed system is assumed; in practice this is much more common than a left-handed system (see orientation (vector space) for details).

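For Cartesian tensors of order 1, a Cartesian vector a can be written algebraically as a linear combination of the basis vectors ex, ey, ez:

$$\mathbf{a} = a_x \mathbf{e}_x + a_y \mathbf{e}_y + a_z \mathbf{e}_z$$

where the coordinates of the vector with respect to the Cartesian basis are denoted ax, ay, az. It is common and helpful to display the basis vectors as column vectors

$$\mathbf{e}_x = \begin{pmatrix} 1 \\ 0 \\ 0 \end{pmatrix}, \quad \mathbf{e}_y = \begin{pmatrix} 0 \\ 1 \\ 0 \end{pmatrix}, \quad \mathbf{e}_z = \begin{pmatrix} 0 \\ 0 \\ 1 \end{pmatrix}$$

when we have a coordinate vector in a column vector representation:

$$\mathbf{a} = \begin{pmatrix} a_x \\ a_y \\ a_z \end{pmatrix}$$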

A row vector representation is also legitimate, although in the context of general curvilinear coordinate systems the row and column vector representations are used separately for specific reasons – see Einstein notation and covariance and contravariance of vectors for why.

The term "component" of a vector is ambiguous. It could refer to:

- a specific coordinate of the vector such as az (a scalar), and similarly for x and y, or

- the coordinate scalar-multiplying the corresponding basis vector, in which case the "y-component" of a is ayey (a vector), and similarly for x and z.

A more general notation is tensor index notation, which has the flexibility of numerical values rather than fixed coordinate labels. The Cartesian labels are replaced by tensor indices in the basis vectors ex ↦ e1, ey ↦ e2, ez ↦ e3 and coordinates ax ↦ a1, ay ↦ a2, az ↦ a3. In general, the notation e1, e2, e3 refers to any basis, and a1, a2, a3 refers to the corresponding coordinate system; although here they are restricted to the Cartesian system. Then:

$$\mathbf{a} = a_1 \mathbf{e}_1 + a_2 \mathbf{e}_2 + a_3 \mathbf{e}_3 = \sum_{i=1}^{3} a_i \mathbf{e}_i$$

It is standard to use the Einstein notation: the summation sign for summation over an index that is present exactly twice within a term may be suppressed for notational conciseness:

$$\mathbf{a} = \sum_{i=1}^{3} a_i \mathbf{e}_i \equiv a_i \mathbf{e}_i$$

An advantage of the index notation over coordinate-specific notations is the independence of the dimension of the underlying vector space, i.e. the same expression on the right hand side takes the same form in higher dimensions (see below). Previously, the Cartesian labels x, y, z were just labels and not indices. (It is informal to say "i = x, y, z").
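The dimension independence of the index form can be illustrated with a minimal sketch in plain Python. The helper names `standard_basis` and `from_components` are hypothetical, chosen only for this illustration; the same summation over the repeated index i is used unchanged for three and for five dimensions.

```python
def standard_basis(n):
    """Return the n standard basis vectors e_1..e_n of R^n as lists."""
    return [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]

def from_components(components, basis):
    """Evaluate a = a_i e_i, summing over the repeated index i."""
    n = len(basis)
    return [sum(components[i] * basis[i][k] for i in range(n)) for k in range(n)]

# The same expression works in any dimension.
a3 = from_components([2.0, -1.0, 3.0], standard_basis(3))
a5 = from_components([1.0, 0.0, 4.0, 0.0, -2.0], standard_basis(5))
```

With the standard basis, the reconstructed vector simply reproduces its list of coordinates, whatever the dimension.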

Second-order tensors in three dimensions

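A dyadic tensor T is an order-2 tensor formed by the tensor product ⊗ of two Cartesian vectors a and b, written T = a ⊗ b. Analogous to vectors, it can be written as a linear combination of the tensor basis ex ⊗ ex ≡ exx, ex ⊗ ey ≡ exy, ..., ez ⊗ ez ≡ ezz (the right-hand side of each identity is only an abbreviation, nothing more):

$$\mathbf{T} = (a_x \mathbf{e}_x + a_y \mathbf{e}_y + a_z \mathbf{e}_z) \otimes (b_x \mathbf{e}_x + b_y \mathbf{e}_y + b_z \mathbf{e}_z) = a_x b_x \mathbf{e}_{xx} + a_x b_y \mathbf{e}_{xy} + a_x b_z \mathbf{e}_{xz} + a_y b_x \mathbf{e}_{yx} + a_y b_y \mathbf{e}_{yy} + a_y b_z \mathbf{e}_{yz} + a_z b_x \mathbf{e}_{zx} + a_z b_y \mathbf{e}_{zy} + a_z b_z \mathbf{e}_{zz}$$

Representing each basis tensor as a matrix:

$$\mathbf{e}_{xx} = \begin{pmatrix} 1 & 0 & 0 \\ 0 & 0 & 0 \\ 0 & 0 & 0 \end{pmatrix}, \quad \mathbf{e}_{xy} = \begin{pmatrix} 0 & 1 & 0 \\ 0 & 0 & 0 \\ 0 & 0 & 0 \end{pmatrix}, \quad \ldots, \quad \mathbf{e}_{zz} = \begin{pmatrix} 0 & 0 & 0 \\ 0 & 0 & 0 \\ 0 & 0 & 1 \end{pmatrix}$$

then T can be represented more systematically as a matrix:

$$\mathbf{T} = \begin{pmatrix} a_x b_x & a_x b_y & a_x b_z \\ a_y b_x & a_y b_y & a_y b_z \\ a_z b_x & a_z b_y & a_z b_z \end{pmatrix}$$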

See matrix multiplication for the notational correspondence between matrices and the dot and tensor products.

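More generally, whether or not T is a tensor product of two vectors, it is always a linear combination of the basis tensors with coordinates Txx, Txy, ..., Tzz:

$$\mathbf{T} = T_{xx} \mathbf{e}_{xx} + T_{xy} \mathbf{e}_{xy} + T_{xz} \mathbf{e}_{xz} + T_{yx} \mathbf{e}_{yx} + T_{yy} \mathbf{e}_{yy} + T_{yz} \mathbf{e}_{yz} + T_{zx} \mathbf{e}_{zx} + T_{zy} \mathbf{e}_{zy} + T_{zz} \mathbf{e}_{zz}$$

while in terms of tensor indices:

$$\mathbf{T} = T_{ij} \mathbf{e}_{ij} \equiv \sum_{i,j} T_{ij} \, \mathbf{e}_i \otimes \mathbf{e}_j$$

and in matrix form:

$$\mathbf{T} = \begin{pmatrix} T_{xx} & T_{xy} & T_{xz} \\ T_{yx} & T_{yy} & T_{yz} \\ T_{zx} & T_{zy} & T_{zz} \end{pmatrix}$$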

Second-order tensors occur naturally in physics and engineering when physical quantities have directional dependence in the system, often in a "stimulus-response" way. This can be mathematically seen through one aspect of tensors: they are multilinear functions. A second-order tensor T which takes in a vector u of some magnitude and direction will return a vector v, in general of a different magnitude and in a different direction to u. The notation used for functions in mathematical analysis leads us to write v = T(u),[1] while the same idea can be expressed in matrix and index notations[2] (including the summation convention), respectively:

$$\begin{pmatrix} v_x \\ v_y \\ v_z \end{pmatrix} = \begin{pmatrix} T_{xx} & T_{xy} & T_{xz} \\ T_{yx} & T_{yy} & T_{yz} \\ T_{zx} & T_{zy} & T_{zz} \end{pmatrix} \begin{pmatrix} u_x \\ u_y \\ u_z \end{pmatrix}, \qquad v_i = T_{ij} u_j$$

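By "linear", if u = ρr + σs for two scalars ρ and σ and vectors r and s, then in function and index notations:

$$\mathbf{T}(\rho \mathbf{r} + \sigma \mathbf{s}) = \rho \mathbf{T}(\mathbf{r}) + \sigma \mathbf{T}(\mathbf{s}), \qquad T_{ij}(\rho r_j + \sigma s_j) = \rho T_{ij} r_j + \sigma T_{ij} s_j$$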

and similarly for the matrix notation. The function, matrix, and index notations all mean the same thing. The matrix forms provide a clear display of the components, while the index form allows easier tensor-algebraic manipulation of the formulae in a compact manner. Both provide the physical interpretation of directions; vectors have one direction, while second-order tensors connect two directions together. One can associate a tensor index or coordinate label with a basis vector direction.
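As a small illustration of the index form v_i = T_ij u_j and of linearity, the following plain-Python sketch may help. The function name `apply` and the numerical values of T, r, s, ρ and σ are arbitrary choices made for this example, not taken from any source.

```python
def apply(T, u):
    """Index form v_i = T_ij u_j, with the sum over j written out."""
    return [sum(T[i][j] * u[j] for j in range(len(u))) for i in range(len(T))]

T = [[2.0, 0.0, 1.0],
     [0.0, 3.0, 0.0],
     [1.0, 0.0, 2.0]]
r = [1.0, 2.0, 0.0]
s = [0.0, 1.0, -1.0]
rho, sigma = 2.0, -3.0

# u = rho r + sigma s
u = [rho * r[i] + sigma * s[i] for i in range(3)]

lhs = apply(T, u)                                   # T(rho r + sigma s)
rhs = [rho * x + sigma * y                          # rho T(r) + sigma T(s)
       for x, y in zip(apply(T, r), apply(T, s))]
```

Here `lhs` and `rhs` agree component by component, which is the linearity property stated above for one particular choice of inputs.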

Second-order tensors are the minimum needed to describe changes in the magnitudes and directions of vectors: the dot product of two vectors is always a scalar, while the cross product of two vectors is always a pseudovector perpendicular to the plane defined by the vectors, so these products of vectors alone cannot produce a new vector of any magnitude in any direction. (See also below for more on the dot and cross products.) The tensor product of two vectors is a second-order tensor, although this has no obvious directional interpretation by itself.

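The previous idea can be continued: if T takes in two vectors p and q, it will return a scalar r. In function notation we write r = T(p, q), while in matrix and index notations (including the summation convention) respectively:

$$r = \begin{pmatrix} p_x & p_y & p_z \end{pmatrix} \begin{pmatrix} T_{xx} & T_{xy} & T_{xz} \\ T_{yx} & T_{yy} & T_{yz} \\ T_{zx} & T_{zy} & T_{zz} \end{pmatrix} \begin{pmatrix} q_x \\ q_y \\ q_z \end{pmatrix}, \qquad r = p_i T_{ij} q_j$$

The tensor T is linear in both input vectors. When vectors and tensors are written without reference to components, and indices are not used, sometimes a dot ⋅ is placed where summations over indices (known as tensor contractions) are taken. For the above cases:[1][2]

$$\mathbf{v} = \mathbf{T} \cdot \mathbf{u}, \qquad r = \mathbf{p} \cdot \mathbf{T} \cdot \mathbf{q}$$

motivated by the dot product notation:

$$\mathbf{a} \cdot \mathbf{b} \equiv a_i b_i$$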

More generally, a tensor of order m which takes in n vectors (where n is between 0 and m inclusive) will return a tensor of order m − n; see Tensor § As multilinear maps for further generalizations and details. The concepts above also apply to pseudovectors in the same way as for vectors. The vectors and tensors themselves can vary throughout space, in which case we have vector fields and tensor fields, and can also depend on time.

Following are some examples:

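| An applied or given... | ...to a material or object of... | ...results in... | ...in the material or object, given by: |
|---|---|---|---|
| unit vector n | Cauchy stress tensor σ | a traction force t | $\mathbf{t} = \boldsymbol{\sigma} \cdot \mathbf{n}$ |
| angular velocity ω | moment of inertia I | an angular momentum J | $\mathbf{J} = \mathbf{I} \cdot \boldsymbol{\omega}$ |
| angular velocity ω | moment of inertia I | a rotational kinetic energy T | $T = \tfrac{1}{2} \boldsymbol{\omega} \cdot \mathbf{I} \cdot \boldsymbol{\omega}$ |
| electric field E | electrical conductivity σ | a current density flow J | $\mathbf{J} = \boldsymbol{\sigma} \cdot \mathbf{E}$ |
| electric field E | polarizability α (related to the permittivity ε and electric susceptibility χE) | an induced polarization field P | $\mathbf{P} = \boldsymbol{\alpha} \cdot \mathbf{E}$ |
| magnetic H field | magnetic permeability μ | a magnetic B field | $\mathbf{B} = \boldsymbol{\mu} \cdot \mathbf{H}$ |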

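For the electrical conduction example, the index and matrix notations would be:

$$J_i = \sigma_{ij} E_j, \qquad \begin{pmatrix} J_x \\ J_y \\ J_z \end{pmatrix} = \begin{pmatrix} \sigma_{xx} & \sigma_{xy} & \sigma_{xz} \\ \sigma_{yx} & \sigma_{yy} & \sigma_{yz} \\ \sigma_{zx} & \sigma_{zy} & \sigma_{zz} \end{pmatrix} \begin{pmatrix} E_x \\ E_y \\ E_z \end{pmatrix}$$

while for the rotational kinetic energy T:

$$T = \tfrac{1}{2} \omega_i I_{ij} \omega_j, \qquad T = \tfrac{1}{2} \begin{pmatrix} \omega_x & \omega_y & \omega_z \end{pmatrix} \begin{pmatrix} I_{xx} & I_{xy} & I_{xz} \\ I_{yx} & I_{yy} & I_{yz} \\ I_{zx} & I_{zy} & I_{zz} \end{pmatrix} \begin{pmatrix} \omega_x \\ \omega_y \\ \omega_z \end{pmatrix}$$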

See also constitutive equation for more specialized examples.

Vectors and tensors in n dimensions

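In n-dimensional Euclidean space over the real numbers, $\mathbb{R}^n$, the standard basis is denoted e1, e2, e3, ... en. Each basis vector ei points along the positive xi axis, with the basis being orthonormal. Component j of ei is given by the Kronecker delta:

$$(\mathbf{e}_i)_j = \delta_{ij}$$

A vector in $\mathbb{R}^n$ takes the form:

$$\mathbf{a} = a_i \mathbf{e}_i \equiv \sum_i a_i \mathbf{e}_i$$

Similarly for the order-2 tensor above, for each vector a and b in $\mathbb{R}^n$:

$$\mathbf{T} = \mathbf{a} \otimes \mathbf{b} = a_i b_j \mathbf{e}_{ij} \equiv \sum_i \sum_j a_i b_j \, \mathbf{e}_i \otimes \mathbf{e}_j$$

or more generally:

$$\mathbf{T} = T_{ij} \mathbf{e}_{ij} \equiv \sum_i \sum_j T_{ij} \, \mathbf{e}_i \otimes \mathbf{e}_j$$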

Transformations of Cartesian vectors (any number of dimensions)

Meaning of "invariance" under coordinate transformations

The position vector x in $\mathbb{R}^n$ is a simple and common example of a vector, and can be represented in any coordinate system. Consider the case of rectangular coordinate systems with orthonormal bases only. It is possible to have a coordinate system with rectangular geometry if the basis vectors are all mutually perpendicular but not normalized, in which case the basis is orthogonal but not orthonormal. However, orthonormal bases are easier to manipulate and are often used in practice. The following results are true for orthonormal bases, not merely orthogonal ones.

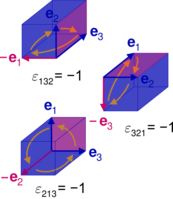

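In one rectangular coordinate system, x as a contravector has coordinates $x^i$ and basis vectors $\mathbf{e}_i$, while as a covector it has coordinates $x_i$ and basis covectors $\mathbf{e}^i$, and we have:

$$\mathbf{x} = x^i \mathbf{e}_i, \qquad \mathbf{x} = x_i \mathbf{e}^i$$

In another rectangular coordinate system, x as a contravector has coordinates $\bar{x}^i$ and basis $\bar{\mathbf{e}}_i$, while as a covector it has coordinates $\bar{x}_i$ and basis $\bar{\mathbf{e}}^i$, and we have:

$$\mathbf{x} = \bar{x}^i \bar{\mathbf{e}}_i, \qquad \mathbf{x} = \bar{x}_i \bar{\mathbf{e}}^i$$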

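Each new coordinate is a function of all the old ones, and vice versa for the inverse function:

$$\bar{x}^i = \bar{x}^i(x^1, x^2, \ldots) \;\rightleftharpoons\; x^i = x^i(\bar{x}^1, \bar{x}^2, \ldots), \qquad \bar{x}_i = \bar{x}_i(x_1, x_2, \ldots) \;\rightleftharpoons\; x_i = x_i(\bar{x}_1, \bar{x}_2, \ldots)$$

and similarly each new basis vector is a function of all the old ones, and vice versa for the inverse function:

$$\bar{\mathbf{e}}_j = \bar{\mathbf{e}}_j(\mathbf{e}_1, \mathbf{e}_2, \ldots) \;\rightleftharpoons\; \mathbf{e}_j = \mathbf{e}_j(\bar{\mathbf{e}}_1, \bar{\mathbf{e}}_2, \ldots), \qquad \bar{\mathbf{e}}^j = \bar{\mathbf{e}}^j(\mathbf{e}^1, \mathbf{e}^2, \ldots) \;\rightleftharpoons\; \mathbf{e}^j = \mathbf{e}^j(\bar{\mathbf{e}}^1, \bar{\mathbf{e}}^2, \ldots)$$

for all i, j.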

A vector is invariant under any change of basis, so if coordinates transform according to a transformation matrix L, the bases transform according to the matrix inverse L−1, and conversely if the coordinates transform according to inverse L−1, the bases transform according to the matrix L. The difference between each of these transformations is shown conventionally through the indices as superscripts for contravariance and subscripts for covariance, and the coordinates and bases are linearly transformed according to the following rules:

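| Vector elements | Contravariant transformation law | Covariant transformation law |
|---|---|---|
| Coordinates | $\bar{x}^j = x^i L_i{}^j$ | $\bar{x}_j = x_k (L^{-1})_j{}^k$ |
| Basis | $\bar{\mathbf{e}}_j = (L^{-1})_j{}^k \mathbf{e}_k$ | $\bar{\mathbf{e}}^j = L_i{}^j \mathbf{e}^i$ |
| Any vector | $\bar{x}^j \bar{\mathbf{e}}_j = x^i L_i{}^j (L^{-1})_j{}^k \mathbf{e}_k = x^i \delta_i{}^k \mathbf{e}_k = x^i \mathbf{e}_i$ | $\bar{x}_j \bar{\mathbf{e}}^j = x_i (L^{-1})_j{}^i L_k{}^j \mathbf{e}^k = x_i \delta^i{}_k \mathbf{e}^k = x_i \mathbf{e}^i$ |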

where $L_i{}^j$ represents the entries of the transformation matrix (row number is i and column number is j) and $(L^{-1})_i{}^k$ denotes the entries of the inverse matrix of the matrix $L_i{}^k$.

If L is an orthogonal transformation (orthogonal matrix), the objects transforming by it are defined as Cartesian tensors. This geometrically has the interpretation that a rectangular coordinate system is mapped to another rectangular coordinate system, in which the norm of the vector x is preserved (and distances are preserved).

The determinant of L is det(L) = ±1, which corresponds to two types of orthogonal transformation: (+1) for rotations and (−1) for improper rotations (including reflections).
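The properties just described can be checked numerically. The following is a minimal sketch in plain Python, assuming a rotation about the z-axis and a mirror in the xy-plane as example matrices; the helper names (`rotation_z`, `det3`, `is_orthogonal`, `transform`) are hypothetical, and `transform` assumes the convention that the new coordinate j is the sum over i of x_i L_ij.

```python
import math

def rotation_z(theta):
    """Proper rotation about the z-axis by angle theta (radians)."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def matmul(A, B):
    """Matrix product (AB)_ij = A_ik B_kj for 3x3 nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def transpose(A):
    return [[A[j][i] for j in range(3)] for i in range(3)]

def det3(M):
    """Determinant of a 3x3 matrix by cofactor expansion along the first row."""
    return (M[0][0] * (M[1][1] * M[2][2] - M[1][2] * M[2][1])
            - M[0][1] * (M[1][0] * M[2][2] - M[1][2] * M[2][0])
            + M[0][2] * (M[1][0] * M[2][1] - M[1][1] * M[2][0]))

def is_orthogonal(L, tol=1e-12):
    """True if the product of the transpose of L with L is the identity."""
    P = matmul(transpose(L), L)
    return all(abs(P[i][j] - (1.0 if i == j else 0.0)) < tol
               for i in range(3) for j in range(3))

def transform(L, x):
    """New coordinates xbar_j = x_i L_ij (sum over i); assumed convention."""
    return [sum(x[i] * L[i][j] for i in range(3)) for j in range(3)]

def norm(x):
    return math.sqrt(sum(c * c for c in x))

R = rotation_z(math.radians(40.0))                          # det = +1
M = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, -1.0]]    # mirror, det = -1
RM = matmul(R, M)                                           # improper rotation
x = [1.0, -2.0, 0.5]
```

Both `R` and `RM` pass `is_orthogonal`, their determinants are +1 and −1 up to rounding, and `norm(transform(RM, x))` agrees with `norm(x)`.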

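There are considerable algebraic simplifications: by the definition of an orthogonal transformation, the matrix transpose is the inverse:

$$\mathbf{L}^\mathsf{T} = \mathbf{L}^{-1} \;\Rightarrow\; (L^{-1})_i{}^j = (L^\mathsf{T})_i{}^j = L^j{}_i$$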

From the previous table, orthogonal transformations of covectors and contravectors are identical. There is no need to distinguish between raised and lowered indices, and in this context, and in applications to physics and engineering, the indices are usually all written as subscripts to avoid confusion with exponents. All indices will be lowered in the remainder of this article. One can determine the actual raised and lowered indices by considering which quantities are covectors or contravectors, and the relevant transformation rules.

Exactly the same transformation rules apply to any vector a, not only the position vector. If its components ai do not transform according to the rules, a is not a vector.

Despite the similarity between the expressions above, for the change of coordinates such as $\bar{x}_j = L_{ij} x_i$, and the action of a tensor on a vector like $b_i = T_{ij} a_j$, L is not a tensor, but T is. In the change of coordinates, L is a matrix, used to relate two rectangular coordinate systems with orthonormal bases together. For the tensor relating a vector to a vector, the vectors and tensors throughout the equation all belong to the same coordinate system and basis.

Derivatives and Jacobian matrix elements

The entries of L are partial derivatives of the new or old coordinates with respect to the old or new coordinates, respectively.

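Differentiating $\bar{x}_i$ with respect to $x_k$:

$$\frac{\partial \bar{x}_i}{\partial x_k} = \frac{\partial}{\partial x_k}(x_j L_{ji}) = L_{ji} \frac{\partial x_j}{\partial x_k} = \delta_{kj} L_{ji} = L_{ki}$$

so

$$L_i{}^j \equiv L_{ij} = \frac{\partial \bar{x}_j}{\partial x_i}$$

is an element of the Jacobian matrix. There is a (partially mnemonic) correspondence between the index positions attached to L and those in the partial derivative: in the mixed-index form $L_i{}^j$, j is at the top and i at the bottom in each case, although for Cartesian tensors the indices can be lowered.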

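Conversely, differentiating $x_j$ with respect to $\bar{x}_i$:

$$\frac{\partial x_j}{\partial \bar{x}_i} = \frac{\partial}{\partial \bar{x}_i}\left(\bar{x}_k (L^{-1})_{kj}\right) = \delta_{ki} (L^{-1})_{kj} = (L^{-1})_{ij}$$

so

$$(L^{-1})_{ij} = \frac{\partial x_j}{\partial \bar{x}_i}$$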

is an element of the inverse Jacobian matrix, with a similar index correspondence.

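Many sources state transformations in terms of the partial derivatives:

$$\bar{x}_j = x_i \frac{\partial \bar{x}_j}{\partial x_i}, \qquad x_j = \bar{x}_i \frac{\partial x_j}{\partial \bar{x}_i}$$

and the explicit matrix equations in 3d are:

$$\begin{pmatrix} \bar{x}_1 \\ \bar{x}_2 \\ \bar{x}_3 \end{pmatrix} = \begin{pmatrix} \partial \bar{x}_1 / \partial x_1 & \partial \bar{x}_1 / \partial x_2 & \partial \bar{x}_1 / \partial x_3 \\ \partial \bar{x}_2 / \partial x_1 & \partial \bar{x}_2 / \partial x_2 & \partial \bar{x}_2 / \partial x_3 \\ \partial \bar{x}_3 / \partial x_1 & \partial \bar{x}_3 / \partial x_2 & \partial \bar{x}_3 / \partial x_3 \end{pmatrix} \begin{pmatrix} x_1 \\ x_2 \\ x_3 \end{pmatrix}$$

similarly for

$$\begin{pmatrix} x_1 \\ x_2 \\ x_3 \end{pmatrix} = \begin{pmatrix} \partial x_1 / \partial \bar{x}_1 & \partial x_1 / \partial \bar{x}_2 & \partial x_1 / \partial \bar{x}_3 \\ \partial x_2 / \partial \bar{x}_1 & \partial x_2 / \partial \bar{x}_2 & \partial x_2 / \partial \bar{x}_3 \\ \partial x_3 / \partial \bar{x}_1 & \partial x_3 / \partial \bar{x}_2 & \partial x_3 / \partial \bar{x}_3 \end{pmatrix} \begin{pmatrix} \bar{x}_1 \\ \bar{x}_2 \\ \bar{x}_3 \end{pmatrix}$$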

Projections along coordinate axes

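As with all linear transformations, L depends on the basis chosen. For two orthonormal bases

$$\bar{\mathbf{e}}_i \cdot \bar{\mathbf{e}}_j = \mathbf{e}_i \cdot \mathbf{e}_j = \delta_{ij}, \qquad |\mathbf{e}_i| = |\bar{\mathbf{e}}_i| = 1$$

- projecting x to the $\bar{x}$ axes: $\bar{x}_j = \bar{\mathbf{e}}_j \cdot \mathbf{x} = \bar{\mathbf{e}}_j \cdot x_i \mathbf{e}_i = x_i L_{ij}$

- projecting x to the $x$ axes: $x_j = \mathbf{e}_j \cdot \mathbf{x} = \mathbf{e}_j \cdot \bar{x}_i \bar{\mathbf{e}}_i = \bar{x}_i (L^{-1})_{ij}$

Hence the components reduce to direction cosines between the $x_i$ and $\bar{x}_j$ axes:

$$L_{ij} = \mathbf{e}_i \cdot \bar{\mathbf{e}}_j = \cos\theta_{ij}, \qquad (L^{-1})_{ij} = \bar{\mathbf{e}}_i \cdot \mathbf{e}_j = \cos\theta_{ji}$$

where θij is the angle between the $x_i$ and $\bar{x}_j$ axes, and θji is the angle between the $x_j$ and $\bar{x}_i$ axes. In general, θij is not equal to θji, because for example θ12 and θ21 are two different angles.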

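The transformation of coordinates can be written:

$$\bar{x}_j = x_i \cos\theta_{ij}, \qquad x_j = \bar{x}_i \cos\theta_{ji}$$

and the explicit matrix equations in 3d are:

$$\begin{pmatrix} \bar{x}_1 \\ \bar{x}_2 \\ \bar{x}_3 \end{pmatrix} = \begin{pmatrix} \cos\theta_{11} & \cos\theta_{21} & \cos\theta_{31} \\ \cos\theta_{12} & \cos\theta_{22} & \cos\theta_{32} \\ \cos\theta_{13} & \cos\theta_{23} & \cos\theta_{33} \end{pmatrix} \begin{pmatrix} x_1 \\ x_2 \\ x_3 \end{pmatrix}$$

similarly for

$$\begin{pmatrix} x_1 \\ x_2 \\ x_3 \end{pmatrix} = \begin{pmatrix} \cos\theta_{11} & \cos\theta_{12} & \cos\theta_{13} \\ \cos\theta_{21} & \cos\theta_{22} & \cos\theta_{23} \\ \cos\theta_{31} & \cos\theta_{32} & \cos\theta_{33} \end{pmatrix} \begin{pmatrix} \bar{x}_1 \\ \bar{x}_2 \\ \bar{x}_3 \end{pmatrix}$$

The geometric interpretation is that each $\bar{x}_j$ component equals the sum of the projections of the $x_i$ components onto the $\bar{x}_j$ axis.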

The numbers $\mathbf{e}_i \cdot \mathbf{e}_j$ arranged into a matrix would form a symmetric matrix (a matrix equal to its own transpose) due to the symmetry of the dot product; in fact, it is the metric tensor g. By contrast, $\mathbf{e}_i \cdot \bar{\mathbf{e}}_j$ or $\bar{\mathbf{e}}_i \cdot \mathbf{e}_j$ do not form symmetric matrices in general, as displayed above. Therefore, while the L matrices are still orthogonal, they are not symmetric.

Apart from a rotation about any one axis, in which the $x_i$ and $\bar{x}_i$ axes coincide for some i, the angles are not the same as Euler angles, and so the L matrices are not the same as the rotation matrices.
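The direction-cosine construction can be sketched in plain Python. The example below assumes a new basis obtained by rotating the standard basis by 30° about the third axis, and assumes the convention that entry (i, j) of L is the dot product of old basis vector i with new basis vector j; the variable names are hypothetical.

```python
import math

def dot(a, b):
    return sum(p * q for p, q in zip(a, b))

theta = math.radians(30.0)
c, s = math.cos(theta), math.sin(theta)

e = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]   # old basis vectors
e_bar = [[c, s, 0.0], [-s, c, 0.0], [0.0, 0.0, 1.0]]      # new (barred) basis vectors

# Direction cosines: L[i][j] is (old basis vector i) . (new basis vector j)
L = [[dot(e[i], e_bar[j]) for j in range(3)] for i in range(3)]

theta_12 = math.degrees(math.acos(L[0][1]))   # angle between axis 1 and barred axis 2
theta_21 = math.degrees(math.acos(L[1][0]))   # angle between axis 2 and barred axis 1

x = [2.0, 1.0, -1.0]
x_bar = [sum(x[i] * L[i][j] for i in range(3)) for j in range(3)]   # sum over i of x_i L_ij
projections = [dot(e_bar[j], x) for j in range(3)]                  # direct projection onto new axes
```

In this example the two off-diagonal angles come out as roughly 120° and 60°, so L is not symmetric, while `x_bar` matches the direct projections of x onto the new axes.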

Transformation of the dot and cross products (three dimensions only)

The dot product and cross product occur very frequently in applications of vector analysis to physics and engineering. Examples include:

- power transferred P by an object exerting a force F with velocity v along a straight-line path: $P = \mathbf{v} \cdot \mathbf{F}$

- tangential velocity v at a point x of a rotating rigid body with angular velocity ω: $\mathbf{v} = \boldsymbol{\omega} \times \mathbf{x}$

- potential energy U of a magnetic dipole of magnetic moment m in a uniform external magnetic field B: $U = -\mathbf{m} \cdot \mathbf{B}$

- angular momentum J for a particle with position vector r and momentum p: $\mathbf{J} = \mathbf{r} \times \mathbf{p}$

- torque τ acting on an electric dipole of electric dipole moment p in a uniform external electric field E: $\boldsymbol{\tau} = \mathbf{p} \times \mathbf{E}$

- induced surface current density jS in a magnetic material of magnetization M on a surface with unit normal n: $\mathbf{j}_\mathrm{S} = \mathbf{M} \times \mathbf{n}$

How these products transform under orthogonal transformations is illustrated below.

Dot product, Kronecker delta, and metric tensor

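The dot product ⋅ of each possible pairing of the basis vectors follows from the basis being orthonormal. For perpendicular pairs we have

$$\mathbf{e}_x \cdot \mathbf{e}_y = \mathbf{e}_y \cdot \mathbf{e}_z = \mathbf{e}_z \cdot \mathbf{e}_x = \mathbf{e}_y \cdot \mathbf{e}_x = \mathbf{e}_z \cdot \mathbf{e}_y = \mathbf{e}_x \cdot \mathbf{e}_z = 0$$

while for parallel pairs we have

$$\mathbf{e}_x \cdot \mathbf{e}_x = \mathbf{e}_y \cdot \mathbf{e}_y = \mathbf{e}_z \cdot \mathbf{e}_z = 1$$

Replacing Cartesian labels by index notation as shown above, these results can be summarized by

$$\mathbf{e}_i \cdot \mathbf{e}_j = \delta_{ij}$$

where δij are the components of the Kronecker delta. The Cartesian basis can be used to represent δ in this way.

In addition, each metric tensor component gij with respect to any basis is the dot product of a pairing of basis vectors:

$$g_{ij} = \mathbf{e}_i \cdot \mathbf{e}_j$$

For the Cartesian basis the components arranged into a matrix are:

$$\mathbf{g} = \begin{pmatrix} \mathbf{e}_x \cdot \mathbf{e}_x & \mathbf{e}_x \cdot \mathbf{e}_y & \mathbf{e}_x \cdot \mathbf{e}_z \\ \mathbf{e}_y \cdot \mathbf{e}_x & \mathbf{e}_y \cdot \mathbf{e}_y & \mathbf{e}_y \cdot \mathbf{e}_z \\ \mathbf{e}_z \cdot \mathbf{e}_x & \mathbf{e}_z \cdot \mathbf{e}_y & \mathbf{e}_z \cdot \mathbf{e}_z \end{pmatrix} = \begin{pmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{pmatrix}$$

so are the simplest possible for the metric tensor, namely the δ:

$$g_{ij} = \delta_{ij}$$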

This is not true for general bases: orthogonal coordinates have diagonal metrics containing various scale factors (i.e. not necessarily 1), while general curvilinear coordinates could also have nonzero entries for off-diagonal components.

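The dot product of two vectors a and b transforms according to

$$\bar{\mathbf{a}} \cdot \bar{\mathbf{b}} = \bar{a}_j \bar{b}_j = a_i L_{ij} \, b_k L_{kj} = a_i \delta_{ik} b_k = a_i b_i = \mathbf{a} \cdot \mathbf{b}$$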

which is intuitive, since the dot product of two vectors is a single scalar independent of any coordinates. This also applies more generally to any coordinate systems, not just rectangular ones; the dot product in one coordinate system is the same in any other.
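A quick numerical check of this invariance, as a sketch in plain Python. It assumes a rotation about the x-axis as the orthogonal matrix and the convention that the new component j is the sum over i of a_i L_ij; the helper names and the numerical values are arbitrary.

```python
import math

def dot(a, b):
    return sum(p * q for p, q in zip(a, b))

def transform(L, a):
    """New components abar_j = a_i L_ij (sum over i); assumed convention."""
    return [sum(a[i] * L[i][j] for i in range(3)) for j in range(3)]

c, s = math.cos(0.7), math.sin(0.7)
L = [[1.0, 0.0, 0.0],      # rotation about the x-axis by 0.7 rad
     [0.0, c, -s],
     [0.0, s, c]]

a = [1.0, 2.0, 3.0]
b = [-1.0, 0.5, 2.0]

before = dot(a, b)
after = dot(transform(L, a), transform(L, b))
```

The values `before` and `after` agree to within floating-point rounding, even though the individual components of a and b change.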

Cross product, Levi-Civita symbol, and pseudovectors

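For the cross product (×) of two vectors, the results are (almost) the other way round. Again, assuming a right-handed 3d Cartesian coordinate system, cyclic permutations in perpendicular directions yield the next vector in the cyclic collection of vectors:

$$\mathbf{e}_x \times \mathbf{e}_y = \mathbf{e}_z, \quad \mathbf{e}_y \times \mathbf{e}_z = \mathbf{e}_x, \quad \mathbf{e}_z \times \mathbf{e}_x = \mathbf{e}_y, \qquad \mathbf{e}_y \times \mathbf{e}_x = -\mathbf{e}_z, \quad \mathbf{e}_z \times \mathbf{e}_y = -\mathbf{e}_x, \quad \mathbf{e}_x \times \mathbf{e}_z = -\mathbf{e}_y$$

while the cross product of parallel vectors clearly vanishes:

$$\mathbf{e}_x \times \mathbf{e}_x = \mathbf{e}_y \times \mathbf{e}_y = \mathbf{e}_z \times \mathbf{e}_z = \mathbf{0}$$

and replacing Cartesian labels by index notation as above, these can be summarized by:

$$\mathbf{e}_i \times \mathbf{e}_j = \begin{cases} +\mathbf{e}_k & \text{cyclic permutations: } (i,j,k) = (1,2,3), (2,3,1), (3,1,2) \\ -\mathbf{e}_k & \text{anticyclic permutations: } (i,j,k) = (2,1,3), (3,2,1), (1,3,2) \\ \mathbf{0} & i = j \end{cases}$$

where i, j, k are indices which take values 1, 2, 3. It follows that:

$$\mathbf{e}_k \cdot \mathbf{e}_i \times \mathbf{e}_j = \begin{cases} +1 & \text{cyclic permutations} \\ -1 & \text{anticyclic permutations} \\ 0 & \text{two or more indices equal} \end{cases}$$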

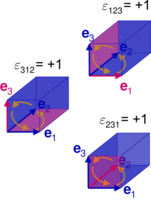

These permutation relations and their corresponding values are important, and there is an object with exactly this property: the Levi-Civita symbol, denoted by ε. The Levi-Civita symbol entries can be represented by the Cartesian basis:

$$\varepsilon_{ijk} = \mathbf{e}_i \cdot \mathbf{e}_j \times \mathbf{e}_k$$

which geometrically corresponds to the volume of a cube spanned by the orthonormal basis vectors, with sign indicating orientation (and not a "positive or negative volume"). Here, the orientation is fixed by ε123 = +1, for a right-handed system. A left-handed system would fix ε123 = −1 or equivalently ε321 = +1.

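The scalar triple product can now be written:

$$\mathbf{c} \cdot \mathbf{a} \times \mathbf{b} = c_i \mathbf{e}_i \cdot a_j \mathbf{e}_j \times b_k \mathbf{e}_k = \varepsilon_{ijk} c_i a_j b_k$$

with the geometric interpretation of volume (of the parallelepiped spanned by a, b, c) and algebraically is a determinant:[3]: 23

$$\mathbf{c} \cdot \mathbf{a} \times \mathbf{b} = \begin{vmatrix} c_x & a_x & b_x \\ c_y & a_y & b_y \\ c_z & a_z & b_z \end{vmatrix}$$

This in turn can be used to rewrite the cross product of two vectors as follows:

$$(\mathbf{a} \times \mathbf{b})_i = \mathbf{e}_i \cdot \mathbf{a} \times \mathbf{b} = \varepsilon_{\ell jk} (\mathbf{e}_i)_\ell a_j b_k = \varepsilon_{\ell jk} \delta_{i\ell} a_j b_k = \varepsilon_{ijk} a_j b_k \;\Rightarrow\; \mathbf{a} \times \mathbf{b} = \varepsilon_{ijk} a_j b_k \mathbf{e}_i$$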

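Contrary to its appearance, the Levi-Civita symbol is not a tensor but a pseudotensor; its components transform according to:

$$\bar{\varepsilon}_{pqr} = \det(\mathbf{L}) \, \varepsilon_{ijk} L_{ip} L_{jq} L_{kr}$$

Therefore, the transformation of the cross product of a and b is:

$$(\bar{\mathbf{a}} \times \bar{\mathbf{b}})_i = \bar{\varepsilon}_{ijk} \bar{a}_j \bar{b}_k = \det(\mathbf{L}) \, \varepsilon_{pqr} L_{pi} L_{qj} L_{rk} \, a_m L_{mj} \, b_n L_{nk} = \det(\mathbf{L}) \, \varepsilon_{pqr} L_{pi} \delta_{qm} \delta_{rn} a_m b_n = \det(\mathbf{L}) \, L_{pi} \varepsilon_{pqr} a_q b_r = \det(\mathbf{L}) \, (\mathbf{a} \times \mathbf{b})_p L_{pi}$$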

and so a × b transforms as a pseudovector, because of the determinant factor.
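The role of the determinant factor can be seen in a minimal plain-Python sketch using a reflection, for which det(L) = −1. The helper names and the sample vectors are hypothetical, and `transform` assumes the convention that the new component j is the sum over i of a_i L_ij.

```python
def cross(a, b):
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]

def transform(L, a):
    """New components abar_j = a_i L_ij (sum over i); assumed convention."""
    return [sum(a[i] * L[i][j] for i in range(3)) for j in range(3)]

def det3(M):
    """Determinant of a 3x3 matrix by cofactor expansion along the first row."""
    return (M[0][0] * (M[1][1] * M[2][2] - M[1][2] * M[2][1])
            - M[0][1] * (M[1][0] * M[2][2] - M[1][2] * M[2][0])
            + M[0][2] * (M[1][0] * M[2][1] - M[1][1] * M[2][0]))

L = [[1, 0, 0], [0, 1, 0], [0, 0, -1]]   # mirror in the xy-plane, det = -1
a = [1, 2, 3]
b = [-1, 0, 2]

# Cross product formed from the transformed vectors
lhs = cross(transform(L, a), transform(L, b))

# Transforming a x b as if it were an ordinary vector...
as_vector = transform(L, cross(a, b))
# ...and with the extra determinant factor of a pseudovector
as_pseudovector = [det3(L) * component for component in as_vector]
```

Only `as_pseudovector` reproduces `lhs`; the plain vector rule gives the opposite sign under this reflection.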

The tensor index notation applies to any object which has entities that form multidimensional arrays – not everything with indices is a tensor by default. Instead, tensors are defined by how their coordinates and basis elements change under a transformation from one coordinate system to another.

Note the cross product of two vectors is a pseudovector, while the cross product of a pseudovector with a vector is another vector.

Applications of the δ tensor and ε pseudotensor

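Other identities can be formed from the δ tensor and ε pseudotensor. A notable and very useful identity converts two Levi-Civita symbols contracted over a shared adjacent index into an antisymmetrized combination of Kronecker deltas:

$$\varepsilon_{ijk} \varepsilon_{pqk} = \delta_{ip} \delta_{jq} - \delta_{iq} \delta_{jp}$$

The index forms of the dot and cross products, together with this identity, greatly facilitate the manipulation and derivation of other identities in vector calculus and algebra, which in turn are used extensively in physics and engineering. For instance, it is clear the dot and cross products are distributive over vector addition:

$$\mathbf{a} \cdot (\mathbf{b} + \mathbf{c}) = a_i (b_i + c_i) = a_i b_i + a_i c_i = \mathbf{a} \cdot \mathbf{b} + \mathbf{a} \cdot \mathbf{c}$$

$$\mathbf{a} \times (\mathbf{b} + \mathbf{c}) = \mathbf{e}_i \varepsilon_{ijk} a_j (b_k + c_k) = \mathbf{e}_i \varepsilon_{ijk} a_j b_k + \mathbf{e}_i \varepsilon_{ijk} a_j c_k = \mathbf{a} \times \mathbf{b} + \mathbf{a} \times \mathbf{c}$$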

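without resort to any geometric constructions; the derivation in each case is a quick line of algebra. Although the procedure is less obvious, the vector triple product can also be derived. Rewriting in index notation:

$$[\mathbf{a} \times (\mathbf{b} \times \mathbf{c})]_i = \varepsilon_{ijk} a_j (\varepsilon_{k\ell m} b_\ell c_m) = (\varepsilon_{ijk} \varepsilon_{k\ell m}) a_j b_\ell c_m$$

and because cyclic permutations of indices in the ε symbol do not change its value, cyclically permuting indices in εkℓm to obtain εℓmk allows us to use the above δ-ε identity to convert the ε symbols into δ tensors:

$$[\mathbf{a} \times (\mathbf{b} \times \mathbf{c})]_i = (\delta_{i\ell} \delta_{jm} - \delta_{im} \delta_{j\ell}) a_j b_\ell c_m = a_j b_i c_j - a_j b_j c_i = [(\mathbf{a} \cdot \mathbf{c}) \mathbf{b} - (\mathbf{a} \cdot \mathbf{b}) \mathbf{c}]_i$$

thus:

$$\mathbf{a} \times (\mathbf{b} \times \mathbf{c}) = (\mathbf{a} \cdot \mathbf{c}) \mathbf{b} - (\mathbf{a} \cdot \mathbf{b}) \mathbf{c}$$

Note this is antisymmetric in b and c, as expected from the left hand side. Similarly, via index notation or even just cyclically relabelling a, b, and c in the previous result and taking the negative:

$$(\mathbf{a} \times \mathbf{b}) \times \mathbf{c} = (\mathbf{c} \cdot \mathbf{a}) \mathbf{b} - (\mathbf{c} \cdot \mathbf{b}) \mathbf{a}$$

and the difference in results shows that the cross product is not associative. More complex identities, like quadruple products,

$$(\mathbf{a} \times \mathbf{b}) \cdot (\mathbf{c} \times \mathbf{d}), \quad (\mathbf{a} \times \mathbf{b}) \times (\mathbf{c} \times \mathbf{d}), \quad \ldots$$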

and so on, can be derived in a similar manner.
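Identities of this kind can also be checked by brute force over all index values. The sketch below, in plain Python with zero-based indices, assumes the contracted-epsilon identity in the form ε_ijk ε_pqk = δ_ip δ_jq − δ_iq δ_jp and tests the vector triple product for one arbitrary choice of integer vectors; the function names are hypothetical.

```python
from itertools import product

def delta(i, j):
    """Kronecker delta."""
    return 1 if i == j else 0

def epsilon(i, j, k):
    """Levi-Civita symbol for indices in {0, 1, 2}."""
    return (i - j) * (j - k) * (k - i) // 2

def cross(a, b):
    """Index form (a x b)_i = eps_ijk a_j b_k."""
    return [sum(epsilon(i, j, k) * a[j] * b[k]
                for j in range(3) for k in range(3)) for i in range(3)]

def dot(a, b):
    return sum(a[i] * b[i] for i in range(3))

# Check eps_ijk eps_pqk = delta_ip delta_jq - delta_iq delta_jp for every i, j, p, q
identity_holds = all(
    sum(epsilon(i, j, k) * epsilon(p, q, k) for k in range(3))
    == delta(i, p) * delta(j, q) - delta(i, q) * delta(j, p)
    for i, j, p, q in product(range(3), repeat=4)
)

a, b, c = [1, 2, 3], [4, 5, 6], [-1, 0, 2]
triple = cross(a, cross(b, c))                                   # a x (b x c)
expanded = [dot(a, c) * b[i] - dot(a, b) * c[i] for i in range(3)]
other_grouping = cross(cross(a, b), c)                           # (a x b) x c
```

For these vectors `triple` equals `expanded`, while `other_grouping` differs from both, which is one concrete instance of the non-associativity noted above.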

Transformations of Cartesian tensors (any number of dimensions)

Tensors are defined as quantities which transform in a certain way under linear transformations of coordinates.

Second order

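Let a = aiei and b = biei be two vectors, so that they transform according to $\bar{a}_j = a_i L_{ij}$, $\bar{b}_j = b_i L_{ij}$.

Taking the tensor product gives:

$$\mathbf{a} \otimes \mathbf{b} = a_i \mathbf{e}_i \otimes b_j \mathbf{e}_j = a_i b_j \, \mathbf{e}_i \otimes \mathbf{e}_j$$

then applying the transformation to the components

$$\bar{a}_p \bar{b}_q = a_i L_{ip} \, b_j L_{jq} = L_{ip} L_{jq} a_i b_j$$

and to the bases

$$\bar{\mathbf{e}}_p \otimes \bar{\mathbf{e}}_q = (L^{-1})_{pi} \mathbf{e}_i \otimes (L^{-1})_{qj} \mathbf{e}_j = (L^{-1})_{pi} (L^{-1})_{qj} \, \mathbf{e}_i \otimes \mathbf{e}_j = L_{ip} L_{jq} \, \mathbf{e}_i \otimes \mathbf{e}_j$$

gives the transformation law of an order-2 tensor. The tensor a⊗b is invariant under this transformation:

$$\bar{a}_p \bar{b}_q \, \bar{\mathbf{e}}_p \otimes \bar{\mathbf{e}}_q = L_{kp} L_{\ell q} a_k b_\ell \, (L^{-1})_{pi} (L^{-1})_{qj} \, \mathbf{e}_i \otimes \mathbf{e}_j = \delta_{ki} \delta_{\ell j} a_k b_\ell \, \mathbf{e}_i \otimes \mathbf{e}_j = a_i b_j \, \mathbf{e}_i \otimes \mathbf{e}_j$$

More generally, for any order-2 tensor

$$\mathbf{R} = R_{ij} \, \mathbf{e}_i \otimes \mathbf{e}_j$$

the components transform according to:

$$\bar{R}_{pq} = L_{ip} L_{jq} R_{ij}$$

and the basis transforms by:

$$\bar{\mathbf{e}}_p \otimes \bar{\mathbf{e}}_q = (L^{-1})_{pi} \mathbf{e}_i \otimes (L^{-1})_{qj} \mathbf{e}_j$$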

If R does not transform according to this rule – whatever quantity R may be – it is not an order-2 tensor.
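One consequence of the order-2 rule can be checked numerically: a relation of the form v = R ⋅ u between vectors keeps the same form in the new coordinates. The plain-Python sketch below assumes the conventions that new vector components are the sum over j of a_j L_jq and new tensor components are the double sum over i and j of L_ip L_jq R_ij; the helper names and numerical values are arbitrary.

```python
import math

def rotation_z(theta):
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def transform_vector(L, a):
    """abar_q = a_j L_jq (sum over j); assumed convention."""
    return [sum(a[j] * L[j][q] for j in range(3)) for q in range(3)]

def transform_tensor(L, R):
    """Rbar_pq = L_ip L_jq R_ij (sum over i and j); assumed convention."""
    return [[sum(L[i][p] * L[j][q] * R[i][j]
                 for i in range(3) for j in range(3))
             for q in range(3)] for p in range(3)]

def apply(R, u):
    """v_i = R_ij u_j"""
    return [sum(R[i][j] * u[j] for j in range(3)) for i in range(3)]

L = rotation_z(math.radians(25.0))
R = [[1.0, 2.0, 0.0], [0.0, 3.0, -1.0], [4.0, 0.0, 2.0]]
u = [1.0, -1.0, 2.0]

# Route 1: act with R in the old coordinates, then transform the result
v_bar_direct = transform_vector(L, apply(R, u))
# Route 2: transform R and u separately, then act in the new coordinates
v_bar_components = apply(transform_tensor(L, R), transform_vector(L, u))

max_error = max(abs(p - q) for p, q in zip(v_bar_direct, v_bar_components))
```

Both routes give the same new components up to rounding, which relies on L being orthogonal.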

Any order

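More generally, for any order p tensor

$$\mathbf{T} = T_{j_1 j_2 \cdots j_p} \, \mathbf{e}_{j_1} \otimes \mathbf{e}_{j_2} \otimes \cdots \otimes \mathbf{e}_{j_p}$$

the components transform according to:

$$\bar{T}_{j_1 j_2 \cdots j_p} = L_{i_1 j_1} L_{i_2 j_2} \cdots L_{i_p j_p} \, T_{i_1 i_2 \cdots i_p}$$

and the basis transforms by:

$$\bar{\mathbf{e}}_{j_1} \otimes \bar{\mathbf{e}}_{j_2} \otimes \cdots \otimes \bar{\mathbf{e}}_{j_p} = (L^{-1})_{j_1 i_1} \mathbf{e}_{i_1} \otimes (L^{-1})_{j_2 i_2} \mathbf{e}_{i_2} \otimes \cdots \otimes (L^{-1})_{j_p i_p} \mathbf{e}_{i_p}$$

For a pseudotensor S of order p, the components transform according to:

$$\bar{S}_{j_1 j_2 \cdots j_p} = \det(\mathbf{L}) \, L_{i_1 j_1} L_{i_2 j_2} \cdots L_{i_p j_p} \, S_{i_1 i_2 \cdots i_p}$$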

Pseudovectors as antisymmetric second order tensors

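The antisymmetric nature of the cross product can be recast into a tensorial form as follows.[2] Let c be a vector, a be a pseudovector, b be another vector, and T be a second order tensor such that:

$$\mathbf{c} = \mathbf{a} \times \mathbf{b} = \mathbf{T} \cdot \mathbf{b}$$

As the cross product is linear in a and b, the components of T can be found by inspection, and they are:

$$\mathbf{T} = \begin{pmatrix} 0 & -a_z & a_y \\ a_z & 0 & -a_x \\ -a_y & a_x & 0 \end{pmatrix}$$

so the pseudovector a can be written as an antisymmetric tensor. This transforms as a tensor, not a pseudotensor. For the mechanical example above for the tangential velocity of a rigid body, given by v = ω × x, this can be rewritten as v = Ω ⋅ x where Ω is the tensor corresponding to the pseudovector ω:

$$\boldsymbol{\Omega} = \begin{pmatrix} 0 & -\omega_z & \omega_y \\ \omega_z & 0 & -\omega_x \\ -\omega_y & \omega_x & 0 \end{pmatrix}$$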
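The equivalence of ω × x and Ω ⋅ x can be checked with a short plain-Python sketch. It assumes the antisymmetric matrix has rows (0, −a_z, a_y), (a_z, 0, −a_x), (−a_y, a_x, 0); the function names and sample values are hypothetical.

```python
def cross(a, b):
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]

def antisymmetric_tensor(a):
    """Matrix T with T . b = a x b for every b (assumed component layout)."""
    return [[0, -a[2], a[1]],
            [a[2], 0, -a[0]],
            [-a[1], a[0], 0]]

def apply(T, u):
    """v_i = T_ij u_j"""
    return [sum(T[i][j] * u[j] for j in range(3)) for i in range(3)]

omega = [0, 0, 2]     # angular velocity about the z-axis
x = [1, 3, 0]         # a point in the rotating body

Omega = antisymmetric_tensor(omega)
v_cross = cross(omega, x)      # tangential velocity from the cross product
v_tensor = apply(Omega, x)     # the same velocity from the tensor form
```

The two velocities coincide, and `Omega` equals the negative of its own transpose.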

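For an example in electromagnetism, while the electric field E is a vector field, the magnetic field B is a pseudovector field. These fields are defined from the Lorentz force for a particle of electric charge q traveling at velocity v:

$$\mathbf{F} = q(\mathbf{E} + \mathbf{v} \times \mathbf{B}) = q(\mathbf{E} - \mathbf{B} \times \mathbf{v})$$

and considering the second term containing the cross product of a pseudovector B and velocity vector v, it can be written in matrix form, with F, E, and v as column vectors and B as an antisymmetric matrix:

$$\begin{pmatrix} F_x \\ F_y \\ F_z \end{pmatrix} = q \begin{pmatrix} E_x \\ E_y \\ E_z \end{pmatrix} - q \begin{pmatrix} 0 & -B_z & B_y \\ B_z & 0 & -B_x \\ -B_y & B_x & 0 \end{pmatrix} \begin{pmatrix} v_x \\ v_y \\ v_z \end{pmatrix}$$

If a pseudovector is explicitly given by a cross product of two vectors (as opposed to entering the cross product with another vector), then such pseudovectors can also be written as antisymmetric tensors of second order, with each entry a component of the cross product. The angular momentum of a classical pointlike particle orbiting about an axis, defined by J = x × p, is another example of a pseudovector, with corresponding antisymmetric tensor:

$$\mathbf{J} = \begin{pmatrix} 0 & -J_z & J_y \\ J_z & 0 & -J_x \\ -J_y & J_x & 0 \end{pmatrix} = \begin{pmatrix} 0 & -(x p_y - y p_x) & (z p_x - x p_z) \\ (x p_y - y p_x) & 0 & -(y p_z - z p_y) \\ -(z p_x - x p_z) & (y p_z - z p_y) & 0 \end{pmatrix}$$

Although Cartesian tensors do not occur in the theory of relativity, the tensor form of orbital angular momentum J enters the spacelike part of the relativistic angular momentum tensor, and the above tensor form of the magnetic field B enters the spacelike part of the electromagnetic tensor.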

Vector and tensor calculus

The following formulae are only so simple in Cartesian coordinates – in general curvilinear coordinates there are factors of the metric and its determinant – see tensors in curvilinear coordinates for more general analysis.

Vector calculus

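Following are the differential operators of vector calculus. Throughout, let Φ(r, t) be a scalar field, and

$$\mathbf{A}(\mathbf{r}, t) = A_x(\mathbf{r}, t) \mathbf{e}_x + A_y(\mathbf{r}, t) \mathbf{e}_y + A_z(\mathbf{r}, t) \mathbf{e}_z, \qquad \mathbf{B}(\mathbf{r}, t) = B_x(\mathbf{r}, t) \mathbf{e}_x + B_y(\mathbf{r}, t) \mathbf{e}_y + B_z(\mathbf{r}, t) \mathbf{e}_z$$

be vector fields, in which all scalar and vector fields are functions of the position vector r and time t.

The gradient operator in Cartesian coordinates is given by:

$$\nabla = \mathbf{e}_x \frac{\partial}{\partial x} + \mathbf{e}_y \frac{\partial}{\partial y} + \mathbf{e}_z \frac{\partial}{\partial z}$$

and in index notation, this is usually abbreviated in various ways:

$$\nabla_i \equiv \partial_i \equiv \frac{\partial}{\partial x_i}$$

This operator acts on a scalar field Φ to obtain the vector field directed along the maximum rate of increase of Φ:

$$(\nabla \Phi)_i = \nabla_i \Phi$$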

The index notation for the dot and cross products carries over to the differential operators of vector calculus.[3]: 197

The directional derivative of a scalar field Φ is the rate of change of Φ along some direction vector a (not necessarily a unit vector), formed out of the components of a and the gradient:

$$\mathbf{a} \cdot (\nabla \Phi) = a_j (\nabla \Phi)_j$$

The divergence of a vector field A is:

$$\nabla \cdot \mathbf{A} = \nabla_i A_i$$

Note the interchange of the components of the gradient and vector field yields a different differential operator

$$\mathbf{A} \cdot \nabla = A_i \nabla_i$$

which could act on scalar or vector fields. In fact, if A is replaced by the velocity field u(r, t) of a fluid, this is a term in the material derivative (with many other names) of continuum mechanics, with another term being the partial time derivative:

$$\frac{D}{Dt} = \frac{\partial}{\partial t} + \mathbf{u} \cdot \nabla = \frac{\partial}{\partial t} + u_i \nabla_i$$

which usually acts on the velocity field leading to the non-linearity in the Navier-Stokes equations.

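As for the curl of a vector field A, this can be defined as a pseudovector field by means of the ε symbol:

$$(\nabla \times \mathbf{A})_i = \varepsilon_{ijk} \nabla_j A_k$$

which is only valid in three dimensions, or an antisymmetric tensor field of second order via antisymmetrization of indices, indicated by delimiting the antisymmetrized indices by square brackets (see Ricci calculus):

$$(\nabla \times \mathbf{A})_{ij} = \nabla_i A_j - \nabla_j A_i = 2 \nabla_{[i} A_{j]}$$

which is valid in any number of dimensions. In each case, the order of the gradient and vector field components should not be interchanged as this would result in a different differential operator:

$$(\mathbf{A} \times \nabla)_i = \varepsilon_{ijk} A_j \nabla_k, \qquad (\mathbf{A} \times \nabla)_{ij} = A_i \nabla_j - A_j \nabla_i = 2 A_{[i} \nabla_{j]}$$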

which could act on scalar or vector fields.

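Finally, the Laplacian operator is defined in two ways, the divergence of the gradient of a scalar field Φ:

$$\nabla \cdot (\nabla \Phi) = \nabla_i (\nabla_i \Phi)$$

or the square of the gradient operator, which acts on a scalar field Φ or a vector field A:

$$(\nabla \cdot \nabla) \Phi = (\nabla_i \nabla_i) \Phi, \qquad (\nabla \cdot \nabla) \mathbf{A} = (\nabla_i \nabla_i) \mathbf{A}$$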

In physics and engineering, the gradient, divergence, curl, and Laplacian operator arise inevitably in fluid mechanics, Newtonian gravitation, electromagnetism, heat conduction, and even quantum mechanics.

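Vector calculus identities can be derived in a similar way to those of vector dot and cross products and combinations. For example, in three dimensions, the curl of a cross product of two vector fields A and B:

$$[\nabla \times (\mathbf{A} \times \mathbf{B})]_i = \varepsilon_{ijk} \nabla_j (\varepsilon_{k\ell m} A_\ell B_m) = (\varepsilon_{ijk} \varepsilon_{\ell mk}) \nabla_j (A_\ell B_m) = (\delta_{i\ell} \delta_{jm} - \delta_{im} \delta_{j\ell}) (B_m \nabla_j A_\ell + A_\ell \nabla_j B_m) = (B_j \nabla_j A_i + A_i \nabla_j B_j) - (B_i \nabla_j A_j + A_j \nabla_j B_i)$$

where the product rule was used, and throughout the differential operator was not interchanged with A or B. Thus:

$$\nabla \times (\mathbf{A} \times \mathbf{B}) = (\mathbf{B} \cdot \nabla) \mathbf{A} + \mathbf{A} (\nabla \cdot \mathbf{B}) - \mathbf{B} (\nabla \cdot \mathbf{A}) - (\mathbf{A} \cdot \nabla) \mathbf{B}$$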

Tensor calculus

One can continue the operations on tensors of higher order. Let T = T(r, t) denote a second order tensor field, again dependent on the position vector r and time t.

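For instance, the gradient of a vector field in two equivalent notations ("dyadic" and "tensor", respectively) is:

$$(\nabla \mathbf{A})_{ij} \equiv (\nabla \otimes \mathbf{A})_{ij} = \nabla_i A_j$$

which is a tensor field of second order.

The divergence of a tensor is:

$$(\nabla \cdot \mathbf{T})_j = \nabla_i T_{ij}$$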

which is a vector field. This arises in continuum mechanics in Cauchy's laws of motion – the divergence of the Cauchy stress tensor σ is a vector field, related to body forces acting on the fluid.

Difference from the standard tensor calculus

Cartesian tensors are as in tensor algebra, but the Euclidean structure and the restriction of the basis bring some simplifications compared to the general theory.

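The general tensor algebra consists of general mixed tensors of type (p, q):

$$\mathbf{T} = T^{i_1 i_2 \cdots i_p}_{j_1 j_2 \cdots j_q} \, \mathbf{e}_{i_1 i_2 \cdots i_p}^{j_1 j_2 \cdots j_q}$$

with basis elements:

$$\mathbf{e}_{i_1 i_2 \cdots i_p}^{j_1 j_2 \cdots j_q} = \mathbf{e}_{i_1} \otimes \mathbf{e}_{i_2} \otimes \cdots \otimes \mathbf{e}_{i_p} \otimes \mathbf{e}^{j_1} \otimes \mathbf{e}^{j_2} \otimes \cdots \otimes \mathbf{e}^{j_q}$$

the components transform according to:

$$\bar{T}^{k_1 \cdots k_p}_{\ell_1 \cdots \ell_q} = L_{i_1}{}^{k_1} \cdots L_{i_p}{}^{k_p} \, (L^{-1})_{\ell_1}{}^{j_1} \cdots (L^{-1})_{\ell_q}{}^{j_q} \, T^{i_1 \cdots i_p}_{j_1 \cdots j_q}$$

as for the bases:

$$\bar{\mathbf{e}}_{k_1 \cdots k_p}^{\ell_1 \cdots \ell_q} = (L^{-1})_{k_1}{}^{i_1} \cdots (L^{-1})_{k_p}{}^{i_p} \, L_{j_1}{}^{\ell_1} \cdots L_{j_q}{}^{\ell_q} \, \mathbf{e}_{i_1 \cdots i_p}^{j_1 \cdots j_q}$$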

For Cartesian tensors, only the order p + q of the tensor matters in a Euclidean space with an orthonormal basis, and all p + q indices can be lowered. A Cartesian basis does not exist unless the vector space has a positive-definite metric, and thus cannot be used in relativistic contexts.

History

Dyadic tensors were historically the first approach to formulating second-order tensors, and similarly triadic tensors for third-order tensors, and so on. Cartesian tensors use tensor index notation, in which the variance may be glossed over and is often ignored, since the components remain unchanged by raising and lowering indices.

See also

- Tensor algebra

- Tensor calculus

- Tensors in curvilinear coordinates

- Rotation group

References

- [1] C. W. Misner; K. S. Thorne; J. A. Wheeler (15 September 1973). Gravitation. Macmillan. ISBN 0-7167-0344-0. Used throughout.

- [2] T. W. B. Kibble (1973). Classical Mechanics. European physics series (2nd ed.). McGraw Hill. ISBN 978-0-07-084018-8. See Appendix C.

- [3] M. R. Spiegel; S. Lipschutz; D. Spellman (2009). Vector analysis. Schaum's Outlines (2nd ed.). McGraw Hill. ISBN 978-0-07-161545-7.

General references

- D. C. Kay (1988). Tensor Calculus. Schaum's Outlines. McGraw Hill. pp. 18–19, 31–32. ISBN 0-07-033484-6.

- M. R. Spiegel; S. Lipschutz; D. Spellman (2009). Vector analysis. Schaum's Outlines (2nd ed.). McGraw Hill. p. 227. ISBN 978-0-07-161545-7.

- J.R. Tyldesley (1975). An introduction to tensor analysis for engineers and applied scientists. Longman. pp. 5–13. ISBN 0-582-44355-5. https://books.google.com/books?id=PODXAAAAMAAJ.

Further reading and applications

- S. Lipschutz; M. Lipson (2009). Linear Algebra. Schaum's Outlines (4th ed.). McGraw Hill. ISBN 978-0-07-154352-1.

- Pei Chi Chou (1992). Elasticity: Tensor, Dyadic, and Engineering Approaches. Courier Dover Publications. ISBN 048-666-958-0. https://books.google.com/books?id=9-pJ7Kg5XmAC&q=cartesian+tensor.

- T. W. Körner (2012). Vectors, Pure and Applied: A General Introduction to Linear Algebra. Cambridge University Press. p. 216. ISBN 978-11070-3356-6. https://books.google.com/books?id=RO_TMc7ETPEC&q=cartesian+tensor.

- R. Torretti (1996). Relativity and Geometry. Courier Dover Publications. p. 103. ISBN 0-4866-90466. https://books.google.com/books?id=vpW_sBxwr88C&q=cartesian+tensor&pg=PA103.

- J. J. L. Synge; A. Schild (1978). Tensor Calculus. Courier Dover Publications. p. 128. ISBN 0-4861-4139-X. https://books.google.com/books?id=8vlGhlxqZjsC&q=cartesian+tensor&pg=PA127.

- C. A. Balafoutis; R. V. Patel (1991). Dynamic Analysis of Robot Manipulators: A Cartesian Tensor Approach. The Kluwer International Series in Engineering and Computer Science: Robotics: vision, manipulation and sensors. 131. Springer. ISBN 0792-391-454. https://books.google.com/books?id=7BcpyUjmLpUC&q=cartesian+tensor.

- S. G. Tzafestas (1992). Robotic systems: advanced techniques and applications. Springer. ISBN 0-792-317-491. https://books.google.com/books?id=iG5W9IQhjd4C&q=cartesian+tensor&pg=PA45.

- T. Dass; S. K. Sharma (1998). Mathematical Methods In Classical And Quantum Physics. Universities Press. p. 144. ISBN 817-371-0899. https://books.google.com/books?id=AQCsAxpZ7ToC&q=cartesian+tensor&pg=PA144.

- G. F. J. Temple (2004). Cartesian Tensors: An Introduction. Dover Books on Mathematics Series. Dover. ISBN 0-4864-3908-9. https://books.google.com/books?id=56WqzKbTMtMC&q=Cartesian+Tensors:+An+Introduction+temple.

- H. Jeffreys (1961). Cartesian Tensors. Cambridge University Press. ISBN 9780521054232. https://books.google.com/books?id=oOYIAQAAIAAJ&q=cartesian+tensors.

External links

- Cartesian Tensors

- V. N. Kaliakin, Brief Review of Tensors, University of Delaware

- R. E. Hunt, Cartesian Tensors, University of Cambridge
