Count sketch


Count sketch is a type of dimensionality reduction that is particularly efficient in statistics, machine learning and algorithms.[1][2] It was invented by Moses Charikar, Kevin Chen and Martin Farach-Colton[3] in an effort to speed up the AMS Sketch by Alon, Matias and Szegedy for approximating the frequency moments of streams[4] (these calculations require counting of the number of occurrences for the distinct elements of the stream).

The sketch is nearly identical[citation needed] to the Feature hashing algorithm by John Moody,[5] but differs in its use of hash functions with low dependence, which makes it more practical. In order to still have a high probability of success, the median trick is used to aggregate multiple count sketches, rather than the mean.

These properties make the sketch useful for explicit kernel methods and for bilinear pooling in neural networks, and it is a cornerstone of many numerical linear algebra algorithms.[6]

Intuitive explanation

The inventors of this data structure offer the following iterative explanation of its operation:[3]

- at the simplest level, the output of a single hash function $s$ mapping stream elements $q$ into $\{+1, -1\}$ feeds a single up/down counter $C$. After a single pass over the data, the frequency $n(q)$ of a stream element $q$ can be approximated, although extremely poorly, by the expected value $\mathbf{E}[C \cdot s(q)]$;

- a straightforward way to reduce the variance of the previous estimate is to use an array of different hash functions $s_i$, each connected to its own counter $C_i$. For each element $q$, $\mathbf{E}[C_i \cdot s_i(q)] = n(q)$ still holds, so averaging across the range of $i$ tightens the approximation;

- the previous construct still has a major deficiency: if a lower-frequency but still important element $a$ collides in a hash with a high-frequency element, the estimate of $n(a)$ can be significantly affected. Avoiding this requires reducing the frequency of colliding counter updates between any two distinct elements. This is achieved by replacing each $C_i$ in the previous construct with an array of $m$ counters (making the counter set a two-dimensional matrix $C_{i,j}$), with the index $j$ of the counter to be incremented or decremented selected via another set of hash functions $h_i$ that map element $q$ into the range $\{1, \dots, m\}$. Since $\mathbf{E}[C_{i,h_i(q)} \cdot s_i(q)] = n(q)$, averaging across all values of $i$ still works.
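The first two steps of this explanation can be checked with a small simulation. This is only an illustrative sketch: the function name `single_counter_estimates`, the toy stream, and the use of fully random sign tables (stronger than the low-dependence hashing the data structure actually needs) are all assumptions made for the example.

```python
import random

def single_counter_estimates(stream, query, trials, seed=0):
    """Return `trials` independent values of C * s(query).

    Each trial draws a fresh random sign function s and feeds the
    whole stream into one up/down counter C.
    """
    rng = random.Random(seed)
    items = sorted(set(stream))
    estimates = []
    for _ in range(trials):
        # Hypothetical stand-in for a hash s: element -> {+1, -1}.
        sign = {x: rng.choice((-1, 1)) for x in items}
        counter = sum(sign[x] for x in stream)
        estimates.append(counter * sign[query])
    return estimates

# Toy stream: n("a") = 50, n("b") = 30, n("c") = 20.
stream = ["a"] * 50 + ["b"] * 30 + ["c"] * 20
estimates = single_counter_estimates(stream, "a", trials=2000)
mean_estimate = sum(estimates) / len(estimates)

print(sorted(set(estimates)))  # single estimates only take a few coarse values
print(mean_estimate)           # the average over many sign functions approaches 50
```

A single value of `C * s(q)` is a poor estimate, since every other element contributes its full count with a random sign. Averaging over many independent sign functions is what tightens it.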

Mathematical definition

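1. For constants $w$ and $t$ (to be defined later), independently choose $d = 2t + 1$ random hash functions $h_1, \dots, h_d$ and $s_1, \dots, s_d$ such that $h_i : [n] \to [w]$ and $s_i : [n] \to \{\pm 1\}$. The hash families from which $h_i$ and $s_i$ are chosen must be pairwise independent.

2. For each item $q_k$ in the stream, add $s_i(q_k)$ to the $h_i(q_k)$th bucket of the $i$th hash, for every $i \in [d]$.

At the end of this process, one has $wd$ sums $(C_{i,j})$, where

$$C_{i,j} = \sum_{k \,:\, h_i(q_k) = j} s_i(q_k).$$

To estimate the count of $q$, one computes the following value:

$$r_q = \operatorname{median}_{i=1}^{d} \; s_i(q) \cdot C_{i,h_i(q)}.$$

The values $s_i(q) \cdot C_{i,h_i(q)}$ are unbiased estimates of how many times $q$ has appeared in the stream.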
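The unbiasedness claim follows from a short calculation. As notation assumed for this derivation, write $n(p)$ for the true count of element $p$, $h_i$ and $s_i$ for the bucket and sign hashes of row $i$, and $C_{i,j} = \sum_p n(p)\, s_i(p)\, \mathbf{1}[h_i(p) = j]$ for the counter in row $i$, bucket $j$. It is also assumed that $s_i$ is chosen independently of $h_i$ and is pairwise independent with uniform signs, so that $\mathbf{E}[s_i(p)\, s_i(q)] = 0$ for $p \neq q$:

$$
\begin{aligned}
\mathbf{E}\big[s_i(q)\, C_{i,h_i(q)}\big]
&= \sum_{p} n(p)\, \mathbf{E}\big[s_i(q)\, s_i(p)\, \mathbf{1}[h_i(p) = h_i(q)]\big] \\
&= n(q)\, \mathbf{E}\big[s_i(q)^2\big] + \sum_{p \neq q} n(p)\, \mathbf{E}\big[s_i(q)\, s_i(p)\big]\, \Pr\big[h_i(p) = h_i(q)\big] \\
&= n(q).
\end{aligned}
$$

The colliding elements $p \neq q$ therefore add noise to each row but no bias.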

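The estimate $r_q$ has variance $O(\min\{m_1^2/w^2,\, m_2^2/w\})$, where $m_1$ is the length of the stream and $m_2^2$ is $\sum_q \big(\sum_k [q_k = q]\big)^2$.[7]

Furthermore, $r_q$ is guaranteed to never be more than $2 m_2/\sqrt{w}$ off from the true value, with probability $1 - e^{-O(t)}$.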
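The definition above can be turned into a small data structure. The following is a minimal sketch, not a reference implementation: the class name `CountSketch`, the restriction to non-negative integer keys, and the choice of hashes of the form `((a * key + b) mod p) mod w`, which are only approximately pairwise independent, are assumptions made for illustration.

```python
import random
from statistics import median

# Mersenne prime used as the hash modulus. Keys are assumed to be
# non-negative integers smaller than this value.
PRIME = (1 << 61) - 1


class CountSketch:
    def __init__(self, width, t, seed=0):
        self.width = width      # w buckets per row
        self.depth = 2 * t + 1  # d = 2t + 1 rows; odd, so the median is a row estimate
        rng = random.Random(seed)
        # Independent (a, b) coefficients for each bucket hash h_i and sign hash s_i.
        self.h_params = [(rng.randrange(1, PRIME), rng.randrange(PRIME))
                         for _ in range(self.depth)]
        self.s_params = [(rng.randrange(1, PRIME), rng.randrange(PRIME))
                         for _ in range(self.depth)]
        self.table = [[0] * width for _ in range(self.depth)]

    def _bucket(self, i, key):
        a, b = self.h_params[i]
        return ((a * key + b) % PRIME) % self.width

    def _sign(self, i, key):
        a, b = self.s_params[i]
        return 1 if ((a * key + b) % PRIME) % 2 == 0 else -1

    def update(self, key, count=1):
        # Add s_i(key) * count to bucket h_i(key) of every row i.
        for i in range(self.depth):
            self.table[i][self._bucket(i, key)] += self._sign(i, key) * count

    def estimate(self, key):
        # Median over rows of s_i(key) * C[i][h_i(key)].
        return median(self._sign(i, key) * self.table[i][self._bucket(i, key)]
                      for i in range(self.depth))


sketch = CountSketch(width=64, t=2)
for _ in range(1000):
    sketch.update(7)          # one heavy item
for key in range(100, 300):
    sketch.update(key)        # 200 light items, one occurrence each
print(sketch.estimate(7))     # close to 1000, up to collision noise
```

Because every update is a signed addition, the table is linear in the stream: two sketches built with the same seed can be added entry by entry to obtain the sketch of the concatenated streams.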

Vector formulation

Alternatively Count-Sketch can be seen as a linear mapping with a non-linear reconstruction function. Let , be a collection of matrices, defined by

for and 0 everywhere else.

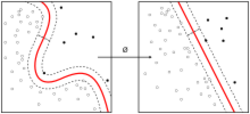

Then a vector is sketched by . To reconstruct we take . This gives the same guarantees as stated above, if we take and .
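The matrix view can be illustrated with plain Python lists. This is a sketch under stated assumptions: the helper names `make_sketch_matrices`, `matvec` and `reconstruct` are hypothetical, coordinates are 0-indexed, and the hashes are stored as fully random lookup tables, which is stronger than the pairwise independence required.

```python
import random
from statistics import median

def make_sketch_matrices(n, w, d, seed=0):
    """Build d sparse sign matrices of shape w x n, one nonzero per column."""
    rng = random.Random(seed)
    h = [[rng.randrange(w) for _ in range(n)] for _ in range(d)]       # bucket hashes
    s = [[rng.choice((-1, 1)) for _ in range(n)] for _ in range(d)]    # sign hashes
    mats = []
    for i in range(d):
        M = [[0] * n for _ in range(w)]
        for j in range(n):
            M[h[i][j]][j] = s[i][j]   # entry (h_i(j), j) holds s_i(j)
        mats.append(M)
    return mats, h, s

def matvec(M, v):
    """Dense matrix-vector product, returning a list of length w."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def reconstruct(sketches, h, s, n):
    """Coordinate-wise median of the per-row estimates."""
    d = len(sketches)
    return [median(sketches[i][h[i][j]] * s[i][j] for i in range(d))
            for j in range(n)]

n, w, d = 200, 32, 5
v = [0.0] * n
v[3], v[17], v[120] = 10.0, -7.0, 5.0   # a sparse example vector

mats, h, s = make_sketch_matrices(n, w, d)
sketches = [matvec(M, v) for M in mats]   # d vectors of length w
v_star = reconstruct(sketches, h, s, n)
print(v_star[3], v_star[17], v_star[120])
```

In practice the matrices would never be materialised, since each column has a single nonzero entry. The dense form is used here only to mirror the definition.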

Relation to Tensor sketch

The count sketch projection of the outer product of two vectors is equivalent to the convolution of two component count sketches.

The count sketch computes a vector convolution

$C^{(1)}x \ast C^{(2)}x^{T}$, where $C^{(1)}$ and $C^{(2)}$ are independent count sketch matrices.

Pham and Pagh[8] show that this equals $C(x \otimes x^{T})$, a count sketch $C$ of the outer product of vectors, where $\otimes$ denotes the Kronecker product.

The fast Fourier transform can be used to do fast convolution of count sketches. By using the face-splitting product[9][10][11] such structures can be computed much faster than normal matrices.
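The identity can be verified numerically on a small instance. The sketch below assumes the convolution is circular (indices taken modulo the sketch width) and that the combined sketch of the outer product sends the pair $(i, j)$ to bucket $(h_1(i) + h_2(j)) \bmod w$ with sign $s_1(i)\, s_2(j)$. The helper names and the random lookup-table hashes are hypothetical, and the quadratic-time convolution stands in for the FFT-based one to keep the example dependency-free.

```python
import random

def count_sketch(x, h, s, w):
    """Count sketch of vector x with bucket table h and sign table s."""
    out = [0] * w
    for j, xj in enumerate(x):
        out[h[j]] += s[j] * xj
    return out

def circular_convolution(a, b):
    """Direct O(w^2) circular convolution; an FFT would give O(w log w)."""
    w = len(a)
    out = [0] * w
    for i in range(w):
        for j in range(w):
            out[(i + j) % w] += a[i] * b[j]
    return out

rng = random.Random(0)
n, w = 6, 4
h1 = [rng.randrange(w) for _ in range(n)]
h2 = [rng.randrange(w) for _ in range(n)]
s1 = [rng.choice((-1, 1)) for _ in range(n)]
s2 = [rng.choice((-1, 1)) for _ in range(n)]
x = [rng.randint(-5, 5) for _ in range(n)]
y = [rng.randint(-5, 5) for _ in range(n)]   # set y = x for the case in the text

# Left-hand side: convolve the two component sketches.
lhs = circular_convolution(count_sketch(x, h1, s1, w), count_sketch(y, h2, s2, w))

# Right-hand side: sketch the n*n outer product directly with the combined hash.
rhs = [0] * w
for i in range(n):
    for j in range(n):
        rhs[(h1[i] + h2[j]) % w] += s1[i] * s2[j] * x[i] * y[j]

print(lhs == rhs)
```

Integer inputs are used so that the two sides can be compared exactly. With floating-point data and an FFT, the comparison would need a small tolerance.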

See also

- Count–min sketch is a version of the algorithm with smaller memory requirements (and weaker error guarantees as a tradeoff).

- Tensorsketch

References

1. Algashaam, Faisal M.; Nguyen, Kien; Alkanhal, Mohamed; Chandran, Vinod; Boles, Wageeh (2017). "Multispectral Periocular Classification With Multimodal Compact Multi-Linear Pooling". IEEE Access 5.

2. Ahle, Thomas; Knudsen, Jakob (2019-09-03). "Almost Optimal Tensor Sketch". https://www.researchgate.net/publication/335617805.

3. Charikar, Chen & Farach-Colton 2004.

4. Alon, Noga; Matias, Yossi; Szegedy, Mario (1999). "The space complexity of approximating the frequency moments". Journal of Computer and System Sciences 58 (1): 137–147.

5. Moody, John (1989). "Fast learning in multi-resolution hierarchies". Advances in Neural Information Processing Systems.

6. Woodruff, David P. (2014). "Sketching as a Tool for Numerical Linear Algebra". Foundations and Trends in Theoretical Computer Science 10 (1–2): 1–157.

7. Larsen, Kasper Green; Pagh, Rasmus; Tětek, Jakub (2021). "CountSketches, Feature Hashing and the Median of Three". International Conference on Machine Learning. PMLR.

8. Pham, Ninh; Pagh, Rasmus (2013). "Fast and scalable polynomial kernels via explicit feature maps". SIGKDD International Conference on Knowledge Discovery and Data Mining. Association for Computing Machinery. doi:10.1145/2487575.2487591.

9. Slyusar, V. I. (1998). "End products in matrices in radar applications". Radioelectronics and Communications Systems 41 (3): 50–53. http://slyusar.kiev.ua/en/IZV_1998_3.pdf.

10. Slyusar, V. I. (1997-05-20). "Analytical model of the digital antenna array on a basis of face-splitting matrix products". Proc. ICATT-97, Kyiv: 108–109. http://slyusar.kiev.ua/ICATT97.pdf.

11. Slyusar, V. I. (1998-03-13). "A Family of Face Products of Matrices and its Properties". Cybernetics and Systems Analysis (C/C of Kibernetika i Sistemnyi Analiz), 1999. 35 (3): 379–384. doi:10.1007/BF02733426. http://slyusar.kiev.ua/FACE.pdf.

Further reading

- Charikar, Moses; Chen, Kevin; Farach-Colton, Martin (2004). "Finding frequent items in data streams". Theoretical Computer Science (Elsevier BV) 312 (1): 3–15. doi:10.1016/s0304-3975(03)00400-6. ISSN 0304-3975. https://people.cs.rutgers.edu/~farach/pubs/FrequentStream.pdf.

- Faisal M. Algashaam; Kien Nguyen; Mohamed Alkanhal; Vinod Chandran; Wageeh Boles. "Multispectral Periocular Classification With Multimodal Compact Multi-Linear Pooling". IEEE Access, Vol. 5. 2017.

- Ahle, Thomas; Knudsen, Jakob (2019-09-03). "Almost Optimal Tensor Sketch". https://www.researchgate.net/publication/335617805.
