Khatri–Rao product

This article provides insufficient context for those unfamiliar with the subject. (January 2023) (Learn how and when to remove this template message) |

In mathematics, the Khatri–Rao product of matrices is defined as[1][2][3]

in which the ij-th block is the mipi × njqj sized Kronecker product of the corresponding blocks of A and B, assuming the number of row and column partitions of both matrices is equal. The size of the product is then (Σi mipi) × (Σj njqj).

For example, if A and B both are 2 × 2 partitioned matrices e.g.:

we obtain:

This is a submatrix of the Tracy–Singh product [4] of the two matrices (each partition in this example is a partition in a corner of the Tracy–Singh product) and also may be called the block Kronecker product.

Column-wise Kronecker product

A column-wise Kronecker product of two matrices may also be called the Khatri–Rao product. This product assumes the partitions of the matrices are their columns. In this case m1 = m, p1 = p, n = q and for each j: nj = pj = 1. The resulting product is a mp × n matrix of which each column is the Kronecker product of the corresponding columns of A and B. Using the matrices from the previous examples with the columns partitioned:

so that:

This column-wise version of the Khatri–Rao product is useful in linear algebra approaches to data analytical processing[5] and in optimizing the solution of inverse problems dealing with a diagonal matrix.[6][7]

In 1996 the Column-wise Khatri–Rao product was proposed to estimate the angles of arrival (AOAs) and delays of multipath signals[8] and four coordinates of signals sources[9] at a digital antenna array.

Face-splitting product

The alternative concept of the matrix product, which uses row-wise splitting of matrices with a given quantity of rows, was proposed by V. Slyusar[10] in 1996.[9][11][12][13][14]

This matrix operation was named the "face-splitting product" of matrices[11][13] or the "transposed Khatri–Rao product". This type of operation is based on row-by-row Kronecker products of two matrices. Using the matrices from the previous examples with the rows partitioned:

the result can be obtained:[9][11][13]

Main properties

- Transpose (V. Slyusar, 1996[9][11][12]):

- ,

- Bilinearity and associativity:[9][11][12]

where A, B and C are matrices, and k is a scalar,

- ,[12]

- The mixed-product property (V. Slyusar, 1997[12]): where denotes the Hadamard product,

- ,[12]

- ,[9]

- ,[16]

- , where is a permutation matrix.[7]

-

Similarly:

- ,

-

- ,[12]

- ,

where and are vectors, - ,[17] ,

-

- ,[18]

-

- ,

where is vector convolution and is the Fourier transform matrix (this result is an evolving of count sketch properties[19]), -

- ,[20]

- ,[21]

- ,

- , where is matrix, is matrix,.

-

- ,[12]

- [13]= ,

- ,[21]

where is the vector consisting of the diagonal elements of , means stack the columns of a matrix on top of each other to give a vector. -

Similarly:

- ,

Examples[18]

Theorem[18]

If , where are independent components a random matrix with independent identically distributed rows , such that

- and ,

then for any vector

with probability if the quantity of rows

In particular, if the entries of are can get

which matches the Johnson–Lindenstrauss lemma of when is small.

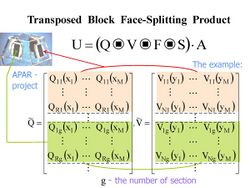

Block face-splitting product

According to the definition of V. Slyusar[9][13] the block face-splitting product of two partitioned matrices with a given quantity of rows in blocks

can be written as :

The transposed block face-splitting product (or Block column-wise version of the Khatri–Rao product) of two partitioned matrices with a given quantity of columns in blocks has a view:[9][13]

Main properties

Applications

The Face-splitting product and the Block Face-splitting product used in the tensor-matrix theory of digital antenna arrays. These operations used also in:

- Artificial Intelligence and Machine learning systems to minimization of convolution and tensor sketch operations,[18]

- A popular Natural Language Processing models, and hypergraph models of similarity,[22]

- Generalized linear array model in statistics[21]

- Two- and multidimensional P-spline approximation of data,[20]

- Studies of genotype x environment interactions.[23]

See also

Notes

- ↑ Khatri C. G., C. R. Rao (1968). "Solutions to some functional equations and their applications to characterization of probability distributions". Sankhya 30: 167–180. http://library.isical.ac.in:8080/xmlui/bitstream/handle/10263/614/68.E02.pdf. Retrieved 2008-08-21.

- ↑ Liu, Shuangzhe (1999). "Matrix Results on the Khatri–Rao and Tracy–Singh Products". Linear Algebra and Its Applications 289 (1–3): 267–277. doi:10.1016/S0024-3795(98)10209-4.

- ↑ Zhang X; Yang Z; Cao C. (2002), "Inequalities involving Khatri–Rao products of positive semi-definite matrices", Applied Mathematics E-notes 2: 117–124

- ↑ Liu, Shuangzhe; Trenkler, Götz (2008). "Hadamard, Khatri-Rao, Kronecker and other matrix products". International Journal of Information and Systems Sciences 4 (1): 160–177.

- ↑ See e.g. H. D. Macedo and J.N. Oliveira. A linear algebra approach to OLAP. Formal Aspects of Computing, 27(2):283–307, 2015.

- ↑ Lev-Ari, Hanoch (2005-01-01). "Efficient Solution of Linear Matrix Equations with Application to Multistatic Antenna Array Processing" (in EN). Communications in Information & Systems 05 (1): 123–130. doi:10.4310/CIS.2005.v5.n1.a5. ISSN 1526-7555. http://www.ims.cuhk.edu.hk/~cis/2005.1/05.pdf.

- ↑ 7.0 7.1 Masiero, B.; Nascimento, V. H. (2017-05-01). "Revisiting the Kronecker Array Transform". IEEE Signal Processing Letters 24 (5): 525–529. doi:10.1109/LSP.2017.2674969. ISSN 1070-9908. Bibcode: 2017ISPL...24..525M. https://zenodo.org/record/896497.

- ↑ Vanderveen, M. C., Ng, B. C., Papadias, C. B., & Paulraj, A. (n.d.). Joint angle and delay estimation (JADE) for signals in multipath environments. Conference Record of The Thirtieth Asilomar Conference on Signals, Systems and Computers. – DOI:10.1109/acssc.1996.599145

- ↑ 9.0 9.1 9.2 9.3 9.4 9.5 9.6 9.7 Slyusar, V. I. (December 27, 1996). "End products in matrices in radar applications.". Radioelectronics and Communications Systems 41 (3): 50–53. http://slyusar.kiev.ua/en/IZV_1998_3.pdf.

- ↑ Anna Esteve, Eva Boj & Josep Fortiana (2009): "Interaction Terms in Distance-Based Regression," Communications in Statistics – Theory and Methods, 38:19, p. 3501 [1]

- ↑ 11.0 11.1 11.2 11.3 11.4 Slyusar, V. I. (1997-05-20). "Analytical model of the digital antenna array on a basis of face-splitting matrix products.". Proc. ICATT-97, Kyiv: 108–109. http://slyusar.kiev.ua/ICATT97.pdf.

- ↑ 12.0 12.1 12.2 12.3 12.4 12.5 12.6 12.7 Slyusar, V. I. (1997-09-15). "New operations of matrices product for applications of radars". Proc. Direct and Inverse Problems of Electromagnetic and Acoustic Wave Theory (DIPED-97), Lviv.: 73–74. http://slyusar.kiev.ua/DIPED_1997.pdf.

- ↑ 13.00 13.01 13.02 13.03 13.04 13.05 13.06 13.07 13.08 13.09 Slyusar, V. I. (March 13, 1998). "A Family of Face Products of Matrices and its Properties". Cybernetics and Systems Analysis C/C of Kibernetika I Sistemnyi Analiz. 1999. 35 (3): 379–384. doi:10.1007/BF02733426. http://slyusar.kiev.ua/FACE.pdf.

- ↑ Slyusar, V. I. (2003). "Generalized face-products of matrices in models of digital antenna arrays with nonidentical channels". Radioelectronics and Communications Systems 46 (10): 9–17. http://slyusar.kiev.ua/en/IZV_2003_10.pdf.

- ↑ 15.0 15.1 15.2 15.3 15.4 Vadym Slyusar. New Matrix Operations for DSP (Lecture). April 1999. – DOI: 10.13140/RG.2.2.31620.76164/1

- ↑ 16.0 16.1 C. Radhakrishna Rao. Estimation of Heteroscedastic Variances in Linear Models.//Journal of the American Statistical Association, Vol. 65, No. 329 (Mar., 1970), pp. 161–172

- ↑ Kasiviswanathan, Shiva Prasad, et al. «The price of privately releasing contingency tables and the spectra of random matrices with correlated rows.» Proceedings of the forty-second ACM symposium on Theory of computing. 2010.

- ↑ 18.0 18.1 18.2 18.3 Thomas D. Ahle, Jakob Bæk Tejs Knudsen. Almost Optimal Tensor Sketch. Published 2019. Mathematics, Computer Science, ArXiv

- ↑ Ninh, Pham (2013). "Fast and scalable polynomial kernels via explicit feature maps". SIGKDD international conference on Knowledge discovery and data mining. Association for Computing Machinery. doi:10.1145/2487575.2487591.

- ↑ 20.0 20.1 Eilers, Paul H.C.; Marx, Brian D. (2003). "Multivariate calibration with temperature interaction using two-dimensional penalized signal regression.". Chemometrics and Intelligent Laboratory Systems 66 (2): 159–174. doi:10.1016/S0169-7439(03)00029-7.

- ↑ 21.0 21.1 21.2 Currie, I. D.; Durban, M.; Eilers, P. H. C. (2006). "Generalized linear array models with applications to multidimensional smoothing". Journal of the Royal Statistical Society 68 (2): 259–280. doi:10.1111/j.1467-9868.2006.00543.x.

- ↑ Bryan Bischof. Higher order co-occurrence tensors for hypergraphs via face-splitting. Published 15 February 2020, Mathematics, Computer Science, ArXiv

- ↑ Johannes W. R. Martini, Jose Crossa, Fernando H. Toledo, Jaime Cuevas. On Hadamard and Kronecker products in covariance structures for genotype x environment interaction.//Plant Genome. 2020;13:e20033. Page 5. [2]

References

- Khatri C. G., C. R. Rao (1968). "Solutions to some functional equations and their applications to characterization of probability distributions". Sankhya 30: 167–180. http://sankhya.isical.ac.in/search/30a2/30a2019.html. Retrieved 2008-08-21.

- Rao C.R.; Rao M. Bhaskara (1998), Matrix Algebra and Its Applications to Statistics and Econometrics, World Scientific, pp. 216

- Zhang X; Yang Z; Cao C. (2002), "Inequalities involving Khatri–Rao products of positive semi-definite matrices", Applied Mathematics E-notes 2: 117–124

- Liu Shuangzhe; Trenkler Götz (2008), "Hadamard, Khatri-Rao, Kronecker and other matrix products", International Journal of Information and Systems Sciences 4: 160–177

|