Gaussian function

In mathematics, a Gaussian function, often simply referred to as a Gaussian, is a function of the base form

$$f(x) = \exp(-x^2)$$

and with parametric extension

$$f(x) = a \exp\left(-\frac{(x-b)^2}{2c^2}\right)$$

for arbitrary real constants a, b and non-zero c. It is named after the mathematician Carl Friedrich Gauss. The graph of a Gaussian is a characteristic symmetric "bell curve" shape. The parameter a is the height of the curve's peak, b is the position of the center of the peak, and c (the standard deviation, sometimes called the Gaussian RMS width) controls the width of the "bell".

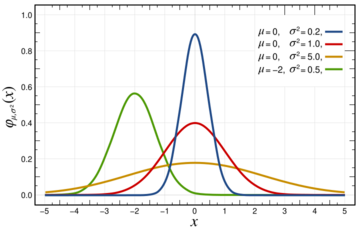

Gaussian functions are often used to represent the probability density function of a normally distributed random variable with expected value μ = b and variance σ² = c². In this case, the Gaussian is of the form[1]

$$g(x) = \frac{1}{\sigma\sqrt{2\pi}} \exp\left(-\frac{1}{2}\frac{(x-\mu)^2}{\sigma^2}\right).$$

Gaussian functions are widely used in statistics to describe normal distributions, in signal processing to define Gaussian filters, in image processing where two-dimensional Gaussians are used for Gaussian blurs, and in mathematics to solve heat equations and diffusion equations and to define the Weierstrass transform. They are also used extensively in quantum chemistry to form basis sets.

Properties

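Gaussian functions arise by composing the exponential function with a concave quadratic function:

$$f(x) = \exp(\alpha x^2 + \beta x + \gamma),$$

where

$$\alpha = -\frac{1}{2c^2}, \qquad \beta = \frac{b}{c^2}, \qquad \gamma = \ln a - \frac{b^2}{2c^2}.$$

(Note: for a normalized density, $a = 1/(\sigma\sqrt{2\pi})$ in $\ln a$; this $a$ is not to be confused with $\alpha = -1/(2c^2)$.)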

The Gaussian functions are thus those functions whose logarithm is a concave quadratic function.

The parameter c is related to the full width at half maximum (FWHM) of the peak according to

$$\text{FWHM} = 2\sqrt{2\ln 2}\,c \approx 2.35482\,c.$$

The function may then be expressed in terms of the FWHM, represented by w:

$$f(x) = a\,e^{-4(\ln 2)(x-b)^2/w^2}.$$

Alternatively, the parameter c can be interpreted by saying that the two inflection points of the function occur at x = b ± c.

The full width at tenth of maximum (FWTM) of a Gaussian is also sometimes of interest:

$$\text{FWTM} = 2\sqrt{2\ln 10}\,c \approx 4.29193\,c.$$
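The width relations can be checked numerically. The Python sketch below locates the half-maximum and tenth-maximum crossings by bisection and compares them with $2\sqrt{2\ln 2}\,c$ and $2\sqrt{2\ln 10}\,c$. The helper names `gaussian` and `full_width_at_fraction` are hypothetical, and the sketch assumes a positive peak height a.

```python
import math


def gaussian(x, a, b, c):
    """Gaussian a * exp(-(x - b)^2 / (2 c^2))."""
    return a * math.exp(-((x - b) ** 2) / (2 * c ** 2))


def full_width_at_fraction(a, b, c, fraction, tol=1e-12):
    """Full width of the peak at `fraction` of its maximum (0 < fraction < 1).

    Bisection on the right flank, where the curve falls monotonically from a
    at x = b to essentially 0 at x = b + 50|c|; the crossing is doubled
    because the curve is symmetric about b. Assumes a > 0.
    """
    target = fraction * a
    lo, hi = b, b + 50 * abs(c)
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if gaussian(mid, a, b, c) > target:
            lo = mid
        else:
            hi = mid
    return 2 * (0.5 * (lo + hi) - b)


c = 1.3
fwhm = full_width_at_fraction(2.0, 0.5, c, 0.5)
fwtm = full_width_at_fraction(2.0, 0.5, c, 0.1)
print(fwhm, 2 * math.sqrt(2 * math.log(2)) * c)
print(fwtm, 2 * math.sqrt(2 * math.log(10)) * c)
```

Neither the height a nor the center b affects the result, which is why both widths depend on c alone.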

Gaussian functions are analytic, and their limit as x → ±∞ is 0 for every value of b.

Gaussian functions are among those functions that are elementary but lack elementary antiderivatives; the integral of the Gaussian function is the error function:

$$\int e^{-x^2}\,dx = \frac{\sqrt{\pi}}{2}\operatorname{erf}(x) + C.$$

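Nonetheless, their improper integrals over the whole real line can be evaluated exactly using the Gaussian integral

$$\int_{-\infty}^{\infty} e^{-x^2}\,dx = \sqrt{\pi},$$

and one obtains

$$\int_{-\infty}^{\infty} a\,e^{-(x-b)^2/(2c^2)}\,dx = a\,|c|\,\sqrt{2\pi}.$$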

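This integral is 1 if and only if $a = \frac{1}{|c|\sqrt{2\pi}}$ (the normalizing constant), and in this case the Gaussian is the probability density function of a normally distributed random variable with expected value μ = b and variance σ² = c²:

$$g(x) = \frac{1}{\sigma\sqrt{2\pi}} \exp\left(\frac{-(x-\mu)^2}{2\sigma^2}\right).$$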
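Combining the error-function antiderivative with the normalization gives the familiar probability of falling within k standard deviations of the mean, $P(|X-\mu| \le k\sigma) = \operatorname{erf}(k/\sqrt{2})$. The sketch below (hypothetical helper names) compares that closed form with a trapezoidal-rule integral of the normalized Gaussian; Python's `math.erf` is used for the error function.

```python
import math


def normal_pdf(x, mu, sigma):
    """Normalized Gaussian with mean mu and standard deviation sigma."""
    return math.exp(-((x - mu) ** 2) / (2 * sigma ** 2)) / (sigma * math.sqrt(2 * math.pi))


def prob_within(k):
    """P(|X - mu| <= k * sigma) for a normal variable, via the error function."""
    return math.erf(k / math.sqrt(2))


def prob_within_numeric(k, mu=0.0, sigma=1.0, n=20000):
    """The same probability by the composite trapezoidal rule with n subintervals."""
    lo, hi = mu - k * sigma, mu + k * sigma
    h = (hi - lo) / n
    total = 0.5 * (normal_pdf(lo, mu, sigma) + normal_pdf(hi, mu, sigma))
    total += sum(normal_pdf(lo + i * h, mu, sigma) for i in range(1, n))
    return total * h


for k in (1, 2, 3):
    print(k, round(prob_within(k), 4), round(prob_within_numeric(k, 3.0, 2.0), 4))
# 1 0.6827 0.6827
# 2 0.9545 0.9545
# 3 0.9973 0.9973
```

The numeric column uses μ = 3 and σ = 2 to show that the result depends only on k.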

These Gaussians are plotted in the accompanying figure.

The product of two Gaussian functions is a Gaussian, and the convolution of two Gaussian functions is also a Gaussian, with variance being the sum of the original variances: $c^2 = c_1^2 + c_2^2$. The product of two Gaussian probability density functions (PDFs), though, is not in general a Gaussian PDF, because its integral is generally not 1.
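The convolution property can be seen numerically. This sketch, assuming NumPy and a uniform grid symmetric about 0, convolves two normalized Gaussians with `numpy.convolve` and measures the mass, mean and variance of the result; the grid size and test parameters are arbitrary choices.

```python
import numpy as np


def normal_pdf(x, mu, sigma):
    return np.exp(-((x - mu) ** 2) / (2 * sigma ** 2)) / (sigma * np.sqrt(2 * np.pi))


x = np.linspace(-20.0, 20.0, 4001)     # odd length, symmetric about 0, spacing 0.01
dx = x[1] - x[0]
f1 = normal_pdf(x, 1.0, 1.0)           # mean 1,    standard deviation 1
f2 = normal_pdf(x, -0.5, 2.0)          # mean -0.5, standard deviation 2

# Riemann-sum approximation of the convolution integral; with an odd-length,
# symmetric grid, mode="same" keeps the output aligned with x.
h = np.convolve(f1, f2, mode="same") * dx

mass = h.sum() * dx
mean = (x * h).sum() * dx / mass
var = ((x - mean) ** 2 * h).sum() * dx / mass
print(mass, mean, var)                 # approximately 1, 0.5 and 1 + 4 = 5
```

The means add as well as the variances, so the result is the normal density with mean 0.5 and variance 5.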

The Fourier uncertainty principle becomes an equality if and only if (modulated) Gaussian functions are considered.[2]

Taking the Fourier transform (unitary, angular-frequency convention) of a Gaussian function with parameters a = 1, b = 0 and c yields another Gaussian function, with parameters c, b = 0 and 1/c.[3] So in particular the Gaussian functions with b = 0 and c = 1 are kept fixed by the Fourier transform (they are eigenfunctions of the Fourier transform with eigenvalue 1). A physical realization is that of the diffraction pattern: for example, the diffraction pattern of a photographic slide whose transmittance has a Gaussian variation is also a Gaussian function.
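In the unitary, angular-frequency convention the transform is $F(\omega) = (2\pi)^{-1/2}\int f(x)\,e^{-i\omega x}\,dx$, and the claim is that $e^{-x^2/(2c^2)}$ maps to $c\,e^{-\omega^2/(2(1/c)^2)}$. The NumPy sketch below approximates the integral by a Riemann sum on a wide grid (an assumption that only holds because the Gaussian is negligible at the grid ends) and compares it with that prediction; `unitary_ft` is a hypothetical helper name.

```python
import numpy as np


def unitary_ft(f_values, x, omega):
    """F(omega) = (2*pi)^(-1/2) * integral f(x) exp(-i*omega*x) dx,
    approximated by a Riemann sum on the uniform grid x."""
    dx = x[1] - x[0]
    return np.sum(f_values * np.exp(-1j * omega * x)) * dx / np.sqrt(2 * np.pi)


x = np.linspace(-30.0, 30.0, 6001)
c = 0.7
f = np.exp(-x ** 2 / (2 * c ** 2))                             # a = 1, b = 0, width c
for omega in (0.0, 0.5, 1.3):
    predicted = c * np.exp(-omega ** 2 / (2 * (1 / c) ** 2))   # height c, width 1/c
    print(omega, unitary_ft(f, x, omega).real, predicted)
```

Setting c = 1 reproduces the input exactly, which is the fixed-point case.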

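The fact that the Gaussian function is an eigenfunction of the continuous Fourier transform allows us to derive the following identity from the Poisson summation formula:

$$\sum_{k\in\mathbb{Z}} \exp\left(-\pi\left(\frac{k}{c}\right)^2\right) = c \cdot \sum_{k\in\mathbb{Z}} \exp\left(-\pi (kc)^2\right).$$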
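The Poisson-summation identity $\sum_{k} e^{-\pi (k/c)^2} = c\sum_{k} e^{-\pi (kc)^2}$ is easy to test numerically. In this sketch the infinite sums are truncated at |k| ≤ 60, which is an assumption that is safe only for moderate c (roughly 0.2 to 5); `lhs` and `rhs` are hypothetical names.

```python
import math


def lhs(c, kmax=60):
    """Sum over integers k of exp(-pi (k/c)^2), truncated to |k| <= kmax."""
    return sum(math.exp(-math.pi * (k / c) ** 2) for k in range(-kmax, kmax + 1))


def rhs(c, kmax=60):
    """c times the sum over integers k of exp(-pi (k c)^2), truncated to |k| <= kmax."""
    return c * sum(math.exp(-math.pi * (k * c) ** 2) for k in range(-kmax, kmax + 1))


for c in (0.5, 1.0, 2.0, 3.5):
    print(c, lhs(c), rhs(c))
```

For small c the left side is dominated by the single term k = 0 while the right side needs many terms, so the identity trades a slowly converging sum for a rapidly converging one.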

Integral of a Gaussian function

The integral of an arbitrary Gaussian function is

$$\int_{-\infty}^{\infty} a\,e^{-(x-b)^2/(2c^2)}\,dx = a\,|c|\,\sqrt{2\pi}.$$

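An alternative form is

$$\int_{-\infty}^{\infty} k\,e^{-fx^2 + gx + h}\,dx = \int_{-\infty}^{\infty} k\,e^{-f\left(x - \frac{g}{2f}\right)^2 + \frac{g^2}{4f} + h}\,dx = k\sqrt{\frac{\pi}{f}}\,\exp\left(\frac{g^2}{4f} + h\right),$$

where f must be strictly positive for the integral to converge.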
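The completed-square form $k\sqrt{\pi/f}\,\exp(g^2/(4f) + h)$ can be compared against a brute-force Riemann sum centred on the peak $x = g/(2f)$. The window of ±12/√f and the helper names are assumptions of this sketch, not part of the formula.

```python
import math


def closed_form(k, f, g, h):
    """k * sqrt(pi/f) * exp(g^2/(4f) + h); f must be strictly positive."""
    if f <= 0:
        raise ValueError("f must be strictly positive for the integral to converge")
    return k * math.sqrt(math.pi / f) * math.exp(g * g / (4 * f) + h)


def numeric(k, f, g, h, n=4001):
    """Riemann sum of k*exp(-f x^2 + g x + h) over a window centred on the peak."""
    center = g / (2 * f)
    half = 12 / math.sqrt(f)          # the integrand is ~exp(-144) times its peak here
    step = 2 * half / (n - 1)
    total = 0.0
    for i in range(n):
        x = center - half + i * step
        total += math.exp(-f * x * x + g * x + h)
    return k * total * step


print(numeric(2.0, 0.8, 1.5, -0.3), closed_form(2.0, 0.8, 1.5, -0.3))
```

With k = a, f = 1/(2c²), g = b/c² and h = −b²/(2c²) the exponent is exactly −(x − b)²/(2c²), and `closed_form` reduces to a|c|√(2π).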

Relation to standard Gaussian integral

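The integral

$$\int_{-\infty}^{\infty} a\,e^{-(x-b)^2/(2c^2)}\,dx$$

for some real constants a, b, c > 0 can be calculated by putting it into the form of a Gaussian integral. First, the constant a can simply be factored out of the integral. Next, the variable of integration is changed from x to y = x − b:

$$a\int_{-\infty}^{\infty} e^{-y^2/(2c^2)}\,dy,$$

and then to $z = y/\sqrt{2c^2}$:

$$a\sqrt{2c^2}\int_{-\infty}^{\infty} e^{-z^2}\,dz.$$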

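Then, using the Gaussian integral identity

$$\int_{-\infty}^{\infty} e^{-z^2}\,dz = \sqrt{\pi},$$

we have

$$\int_{-\infty}^{\infty} a\,e^{-(x-b)^2/(2c^2)}\,dx = a\sqrt{2\pi c^2} = ac\sqrt{2\pi}.$$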

Two-dimensional Gaussian function

Base form:

$$f(x, y) = \exp(-x^2 - y^2).$$

In two dimensions, the argument of the exponential function in the Gaussian function is any negative-definite quadratic form. Consequently, the level sets of the Gaussian will always be ellipses.

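A particular example of a two-dimensional Gaussian function is

$$f(x, y) = A \exp\left(-\left(\frac{(x - x_0)^2}{2\sigma_X^2} + \frac{(y - y_0)^2}{2\sigma_Y^2}\right)\right).$$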

Here the coefficient A is the amplitude, (x0, y0) is the center, and σX, σY are the x and y spreads of the blob. The figure on the right was created using A = 1, x0 = 0, y0 = 0, σX = σY = 1.

The volume under the Gaussian function is given by

$$V = \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} f(x, y)\,dx\,dy = 2\pi A \sigma_X \sigma_Y.$$

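In general, a two-dimensional elliptical Gaussian function is expressed as

$$f(x, y) = A \exp\Big(-\big(a(x - x_0)^2 + 2b(x - x_0)(y - y_0) + c(y - y_0)^2\big)\Big),$$

where the matrix

$$\begin{bmatrix} a & b \\ b & c \end{bmatrix}$$

is positive-definite.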

Using this formulation, the figure on the right can be created using A = 1, (x0, y0) = (0, 0), a = c = 1/2, b = 0.

Meaning of parameters for the general equation

For the general form of the equation the coefficient A is the height of the peak and (x0, y0) is the center of the blob.

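If we set

$$a = \frac{\cos^2\theta}{2\sigma_X^2} + \frac{\sin^2\theta}{2\sigma_Y^2}, \qquad b = \frac{\sin 2\theta}{4\sigma_X^2} - \frac{\sin 2\theta}{4\sigma_Y^2}, \qquad c = \frac{\sin^2\theta}{2\sigma_X^2} + \frac{\cos^2\theta}{2\sigma_Y^2},$$

then we rotate the blob by a positive, counter-clockwise angle θ (for negative, clockwise rotation, invert the signs in the b coefficient).[4]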

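To get back the coefficients θ, σX and σY from a, b and c, use

$$\theta = \frac{1}{2}\arctan\left(\frac{2b}{a - c}\right), \qquad \theta \in [-45^\circ, 45^\circ],$$

$$\sigma_X^2 = \frac{1}{2\left(a\cos^2\theta + 2b\cos\theta\sin\theta + c\sin^2\theta\right)},$$

$$\sigma_Y^2 = \frac{1}{2\left(a\sin^2\theta - 2b\cos\theta\sin\theta + c\cos^2\theta\right)}.$$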

[Figure: example rotations of Gaussian blobs]

Using the following Octave code, one can easily see the effect of changing the parameters:

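```octave
% Animate a 2D elliptical Gaussian blob as it is rotated from 0 to pi.
A = 1;                  % amplitude (peak height)
x0 = 0; y0 = 0;         % center of the blob
sigma_X = 1;            % spread along the blob's own x axis
sigma_Y = 2;            % spread along the blob's own y axis

[X, Y] = meshgrid(-5:.1:5, -5:.1:5);

for theta = 0:pi/100:pi
  % Coefficients of the quadratic form for a blob rotated by theta
  a = cos(theta)^2 / (2 * sigma_X^2) + sin(theta)^2 / (2 * sigma_Y^2);
  b = sin(2 * theta) / (4 * sigma_X^2) - sin(2 * theta) / (4 * sigma_Y^2);
  c = sin(theta)^2 / (2 * sigma_X^2) + cos(theta)^2 / (2 * sigma_Y^2);

  Z = A * exp(-(a * (X - x0).^2 + 2 * b * (X - x0) .* (Y - y0) + c * (Y - y0).^2));

  surf(X, Y, Z);
  shading interp;
  view(-36, 36)
  waitforbuttonpress    % press a key or click to show the next angle
end
```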
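The inverse formulas for θ, σX and σY can be checked by a round trip. The sketch below uses the same a, b, c formulas as the Octave loop, recovers the parameters with θ restricted to (−45°, 45°), and computes the blob volume as πA/√(ac − b²), which is the two-dimensional case of the n-dimensional Gaussian integral. All function names are hypothetical.

```python
import math


def rotated_coefficients(theta, sigma_x, sigma_y):
    """(a, b, c) for a blob with spreads sigma_x, sigma_y rotated by theta
    (same formulas as the Octave loop)."""
    a = math.cos(theta) ** 2 / (2 * sigma_x ** 2) + math.sin(theta) ** 2 / (2 * sigma_y ** 2)
    b = math.sin(2 * theta) / (4 * sigma_x ** 2) - math.sin(2 * theta) / (4 * sigma_y ** 2)
    c = math.sin(theta) ** 2 / (2 * sigma_x ** 2) + math.cos(theta) ** 2 / (2 * sigma_y ** 2)
    return a, b, c


def recover_parameters(a, b, c):
    """Inverse map returning (theta, sigma_x, sigma_y) with theta in (-45°, 45°).

    Requires a != c. A blob rotated by an angle outside that range comes back
    as an equivalent description, with theta shifted by 90° and the roles of
    sigma_x and sigma_y exchanged.
    """
    theta = 0.5 * math.atan(2 * b / (a - c))
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    sigma_x = math.sqrt(1 / (2 * (a * cos_t ** 2 + 2 * b * cos_t * sin_t + c * sin_t ** 2)))
    sigma_y = math.sqrt(1 / (2 * (a * sin_t ** 2 - 2 * b * cos_t * sin_t + c * cos_t ** 2)))
    return theta, sigma_x, sigma_y


def blob_volume(A, a, b, c):
    """Volume under A*exp(-(a dx^2 + 2b dx dy + c dy^2)), i.e. pi*A/sqrt(ac - b^2)."""
    return math.pi * A / math.sqrt(a * c - b * b)


a, b, c = rotated_coefficients(math.pi / 6, 1.0, 2.0)
print(recover_parameters(a, b, c))                   # ≈ (pi/6, 1.0, 2.0)
print(blob_volume(1.0, a, b, c), 2 * math.pi * 1.0 * 1.0 * 2.0)
```

Because ac − b² = 1/(4σX²σY²) for any θ, the volume equals 2πAσXσY regardless of the rotation.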

Such functions are often used in image processing and in computational models of visual system function; see the articles on scale space and affine shape adaptation.

Also see multivariate normal distribution.

Higher-order Gaussian or super-Gaussian function or generalized Gaussian function

A more general formulation of a Gaussian function with a flat top and Gaussian fall-off can be obtained by raising the content of the exponent to a power P:

$$f(x) = A \exp\left(-\left(\frac{(x - x_0)^2}{2\sigma_X^2}\right)^P\right).$$

This function is known as a super-Gaussian function and is often used for Gaussian beam formulation.[5] This function may also be expressed in terms of the full width at half maximum (FWHM), represented by w:

$$f(x) = A \exp\left(-\ln 2\left(4\frac{(x - x_0)^2}{w^2}\right)^P\right).$$
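The FWHM form $A\exp\left(-\ln 2\,(4(x-x_0)^2/w^2)^P\right)$ is built so that the value at $x_0 \pm w/2$ is exactly A/2 for every order P, while larger P flattens the top. A short sketch (the helper name `super_gaussian` and the test values are hypothetical):

```python
import math


def super_gaussian(x, A, x0, w, P):
    """Super-Gaussian of order P written with its FWHM w:
    A * exp(-ln 2 * (4 (x - x0)^2 / w^2)^P)."""
    return A * math.exp(-math.log(2) * (4 * (x - x0) ** 2 / w ** 2) ** P)


A, x0, w = 1.0, 0.5, 3.0
for P in (1, 2, 5, 10):
    at_half_width = super_gaussian(x0 + w / 2, A, x0, w, P)      # 0.5 (up to rounding) for every P
    at_quarter_width = super_gaussian(x0 + w / 4, A, x0, w, P)   # approaches A as P grows
    print(P, at_half_width, at_quarter_width)
```

For P = 1 the expression is the ordinary Gaussian with c = w/(2√(2 ln 2)).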

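In a two-dimensional formulation, a Gaussian function along x and y can be combined[6] with potentially different $P_X$ and $P_Y$ to form a rectangular Gaussian distribution:

$$f(x, y) = A \exp\left(-\left(\frac{(x - x_0)^2}{2\sigma_X^2}\right)^{P_X} - \left(\frac{(y - y_0)^2}{2\sigma_Y^2}\right)^{P_Y}\right),$$

or an elliptical Gaussian distribution:

$$f(x, y) = A \exp\left(-\left(\frac{(x - x_0)^2}{2\sigma_X^2} + \frac{(y - y_0)^2}{2\sigma_Y^2}\right)^P\right).$$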

Multi-dimensional Gaussian function

In an n-dimensional space a Gaussian function can be defined as

$$f(x) = \exp(-x^\mathsf{T} C x),$$

where $x = [x_1, \dots, x_n]$ is a column of n coordinates, C is a positive-definite n × n matrix, and ${}^\mathsf{T}$ denotes matrix transposition.

The integral of this Gaussian function over the whole n-dimensional space is given as

$$\int_{\mathbb{R}^n} \exp(-x^\mathsf{T} C x)\,dx = \sqrt{\frac{\pi^n}{\det C}}.$$

It can be easily calculated by diagonalizing the matrix C and changing the integration variables to the eigenvectors of C.

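More generally, a shifted Gaussian function is defined as

$$f(x) = \exp(-x^\mathsf{T} C x + s^\mathsf{T} x),$$

where $s = [s_1, \dots, s_n]$ is the shift vector and the matrix C can be assumed to be symmetric, $C^\mathsf{T} = C$, and positive-definite. The following integrals with this function can be calculated with the same technique:

$$\int_{\mathbb{R}^n} e^{-x^\mathsf{T} C x + s^\mathsf{T} x}\,dx = \sqrt{\frac{\pi^n}{\det C}}\,\exp\left(\tfrac{1}{4} s^\mathsf{T} C^{-1} s\right) \equiv \mathcal{M},$$

$$\int_{\mathbb{R}^n} e^{-x^\mathsf{T} C x + s^\mathsf{T} x}\,(a^\mathsf{T} x)\,dx = (a^\mathsf{T} u)\,\mathcal{M},$$

$$\int_{\mathbb{R}^n} e^{-x^\mathsf{T} C x + s^\mathsf{T} x}\,(x^\mathsf{T} D x)\,dx = \left(u^\mathsf{T} D u + \tfrac{1}{2}\operatorname{tr}(D C^{-1})\right)\mathcal{M},$$

where $u = \frac{1}{2} C^{-1} s$, a is a constant vector and D is a constant n × n matrix.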
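The shifted-Gaussian integrals (normalization $\mathcal{M} = \sqrt{\pi^n/\det C}\,e^{s^\mathsf{T} C^{-1} s/4}$, the linear moment $(a^\mathsf{T}u)\mathcal{M}$ and the quadratic moment $(u^\mathsf{T}Du + \tfrac12\operatorname{tr}(DC^{-1}))\mathcal{M}$ with $u = \tfrac12 C^{-1}s$) can be checked by brute-force quadrature in two dimensions. This NumPy sketch uses arbitrary test values for C, s, a and D; setting s = 0 recovers the unshifted result $\sqrt{\pi^n/\det C}$.

```python
import numpy as np

# Hypothetical test values: symmetric positive-definite C, shift s,
# constant vector a_vec and constant matrix D.
C = np.array([[1.0, 0.3], [0.3, 0.5]])
s = np.array([0.4, -0.2])
a_vec = np.array([1.0, 2.0])
D = np.array([[2.0, 0.5], [0.5, 1.0]])
n = 2

C_inv = np.linalg.inv(C)
u = 0.5 * C_inv @ s
M = np.sqrt(np.pi ** n / np.linalg.det(C)) * np.exp(0.25 * s @ C_inv @ s)
linear_exact = (a_vec @ u) * M
quadratic_exact = (u @ D @ u + 0.5 * np.trace(D @ C_inv)) * M

# Brute-force quadrature on a uniform 2D grid wide enough for the tails to vanish
grid = np.linspace(-12.0, 12.0, 801)
h = grid[1] - grid[0]
X, Y = np.meshgrid(grid, grid, indexing="ij")
P = np.stack([X, Y], axis=-1)                          # grid points, shape (801, 801, 2)
weight = np.exp(-np.einsum("...i,ij,...j->...", P, C, P) + P @ s) * h * h

M_num = weight.sum()
linear_num = (weight * (P @ a_vec)).sum()
quadratic_num = (weight * np.einsum("...i,ij,...j->...", P, D, P)).sum()
print(M_num, M)
print(linear_num, linear_exact)
print(quadratic_num, quadratic_exact)
```

The closed forms follow from completing the square, $-x^\mathsf{T}Cx + s^\mathsf{T}x = -(x-u)^\mathsf{T}C(x-u) + u^\mathsf{T}Cu$, which is the same diagonalization technique in shifted coordinates.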

Estimation of parameters

A number of fields such as stellar photometry, Gaussian beam characterization, and emission/absorption line spectroscopy work with sampled Gaussian functions and need to accurately estimate the height, position, and width parameters of the function. There are three unknown parameters for a 1D Gaussian function (a, b, c) and five for a 2D Gaussian function $(A; x_0, y_0; \sigma_X, \sigma_Y)$.

The most common method for estimating the Gaussian parameters is to take the logarithm of the data and fit a parabola to the resulting data set.[7][8] While this provides a simple curve fitting procedure, the resulting algorithm may be biased by excessively weighting small data values, which can produce large errors in the profile estimate. One can partially compensate for this problem through weighted least squares estimation, reducing the weight of small data values, but this too can be biased by allowing the tail of the Gaussian to dominate the fit. In order to remove the bias, one can instead use an iteratively reweighted least squares procedure, in which the weights are updated at each iteration.[8] It is also possible to perform non-linear regression directly on the data, without involving the logarithmic data transformation; for more options, see probability distribution fitting.
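The log-parabola approach can be sketched in a few lines with `numpy.polyfit`. This is a minimal illustration, not the exact algorithm of the cited papers: the unweighted pass fits ln y directly; the weighted passes weight each point's log-residual by its measured value on the first pass and by the current model value afterwards, in the spirit of the iterative reweighting described above. The function names are hypothetical, the data must be strictly positive, and the clipping of noisy samples is an assumption made only to keep the logarithm finite.

```python
import numpy as np


def params_from_parabola(alpha, beta, gamma):
    """Map ln f = alpha x^2 + beta x + gamma back to (a, b, c); needs alpha < 0."""
    c = np.sqrt(-1.0 / (2.0 * alpha))
    b = -beta / (2.0 * alpha)
    a = np.exp(gamma - beta ** 2 / (4.0 * alpha))
    return a, b, c


def fit_gaussian_log(x, y, iterations=0):
    """Estimate (a, b, c) from strictly positive samples y via a parabola fit to ln y.

    iterations == 0: plain least squares on ln y.
    iterations >= 1: weighted least squares; the first pass weights each point by
    its measured value, later passes by the current model value.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    log_y = np.log(y)
    coeffs = np.polyfit(x, log_y, 2)                   # [alpha, beta, gamma]
    weights = y
    for _ in range(iterations):
        # polyfit minimises sum((w_i * residual_i)^2), so w = y weights by y^2
        coeffs = np.polyfit(x, log_y, 2, w=weights)
        weights = np.exp(np.polyval(coeffs, x))        # model values for the next pass
    return params_from_parabola(*coeffs)


rng = np.random.default_rng(0)
x = np.linspace(-5.0, 5.0, 201)
truth = 3.0 * np.exp(-(x - 0.7) ** 2 / (2 * 1.2 ** 2))
noisy = np.clip(truth + rng.normal(0.0, 0.02, x.size), 1e-6, None)   # keep the log finite
print("unweighted:", fit_gaussian_log(x, noisy))
print("reweighted:", fit_gaussian_log(x, noisy, iterations=5))
```

On noise-free data both variants recover (a, b, c) exactly; on noisy data the unweighted fit is pulled by the tail samples, whose logarithms are dominated by noise.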

Parameter precision

Once one has an algorithm for estimating the Gaussian function parameters, it is also important to know how precise those estimates are. Any least squares estimation algorithm can provide numerical estimates for the variance of each parameter (i.e., the variance of the estimated height, position, and width of the function). One can also use Cramér–Rao bound theory to obtain an analytical expression for the lower bound on the parameter variances, given the following assumptions about the data:[9][10]

- The noise in the measured profile is either i.i.d. Gaussian, or the noise is Poisson-distributed.

- The spacing between each sampling (i.e. the distance between pixels measuring the data) is uniform.

- The peak is "well-sampled", so that less than 10% of the area or volume under the peak (area if a 1D Gaussian, volume if a 2D Gaussian) lies outside the measurement region.

- The width of the peak is much larger than the distance between sample locations (i.e. the detector pixels must be at least 5 times smaller than the Gaussian FWHM).

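When these assumptions are satisfied, the following covariance matrix K applies for the 1D profile parameters a, b, and c under i.i.d. Gaussian noise and under Poisson noise:[9]

$$K_{\text{Gauss}} = \frac{\sigma^2}{\sqrt{\pi}\,\delta_X Q^2}\begin{pmatrix} \frac{3}{2c} & 0 & -\frac{1}{a} \\ 0 & \frac{2c}{a^2} & 0 \\ -\frac{1}{a} & 0 & \frac{2c}{a^2} \end{pmatrix}, \qquad K_{\text{Poiss}} = \frac{1}{\sqrt{2\pi}}\begin{pmatrix} \frac{3a}{2c} & 0 & -\frac{1}{2} \\ 0 & \frac{c}{a} & 0 \\ -\frac{1}{2} & 0 & \frac{c}{2a} \end{pmatrix},$$

where $\delta_X$ is the width of the pixels used to sample the function, Q is the quantum efficiency of the detector, and σ indicates the standard deviation of the measurement noise. Thus, the individual variances for the parameters are, in the Gaussian noise case,

$$\operatorname{var}(a) = \frac{3\sigma^2}{2\sqrt{\pi}\,\delta_X Q^2 c}, \qquad \operatorname{var}(b) = \frac{2\sigma^2 c}{\sqrt{\pi}\,\delta_X Q^2 a^2}, \qquad \operatorname{var}(c) = \frac{2\sigma^2 c}{\sqrt{\pi}\,\delta_X Q^2 a^2},$$

and in the Poisson noise case,

$$\operatorname{var}(a) = \frac{3a}{2\sqrt{2\pi}\,c}, \qquad \operatorname{var}(b) = \frac{c}{\sqrt{2\pi}\,a}, \qquad \operatorname{var}(c) = \frac{c}{2\sqrt{2\pi}\,a}.$$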
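The sketch below encodes closed-form 1D covariance matrices $K_{\text{Gauss}}$ and $K_{\text{Poiss}}$ for (a, b, c) and compares them with the inverse of a numerically computed Fisher information matrix. The signal models are assumptions chosen so that the units line up: under Gaussian noise each pixel reads $Q\,\delta_X f(x_i)$ plus $N(0, \sigma^2)$ noise, and under Poisson noise each pixel count has mean $\delta_X f(x_i)$ (so a is measured in detected counts per unit length). The pixel grid of ±20 around b assumes c is at most a few units; function names are hypothetical.

```python
import numpy as np


def k_gauss_1d(a, c, sigma, delta_x, Q):
    """Closed-form covariance of (a, b, c) under i.i.d. Gaussian noise."""
    pref = sigma ** 2 / (np.sqrt(np.pi) * delta_x * Q ** 2)
    return pref * np.array([[3 / (2 * c), 0.0, -1 / a],
                            [0.0, 2 * c / a ** 2, 0.0],
                            [-1 / a, 0.0, 2 * c / a ** 2]])


def k_poisson_1d(a, c):
    """Closed-form covariance of (a, b, c) under Poisson noise."""
    return np.array([[3 * a / (2 * c), 0.0, -0.5],
                     [0.0, c / a, 0.0],
                     [-0.5, 0.0, c / (2 * a)]]) / np.sqrt(2 * np.pi)


def k_gauss_from_fisher(a, c, sigma, delta_x, Q):
    """Inverse Fisher information for pixel values Q*delta_x*f(x_i) + N(0, sigma^2)."""
    u = np.arange(-20.0, 20.0, delta_x)               # pixel offsets x_i - b (b drops out)
    g = np.exp(-u ** 2 / (2 * c ** 2))
    grad = np.stack([g, a * g * u / c ** 2, a * g * u ** 2 / c ** 3], axis=1)  # df/d(a, b, c)
    J = Q * delta_x * grad                             # derivatives of the mean pixel value
    return np.linalg.inv(J.T @ J / sigma ** 2)


def k_poisson_from_fisher(a, c, delta_x):
    """Inverse Fisher information for Poisson pixel counts with mean delta_x*f(x_i)."""
    u = np.arange(-20.0, 20.0, delta_x)
    g = np.exp(-u ** 2 / (2 * c ** 2))
    # Rows are sqrt(delta_x) * grad(f) / sqrt(f), written so that no division by f
    # occurs (f underflows to 0 in the tails).
    J = np.sqrt(delta_x * g / a)[:, None] * np.stack(
        [np.ones_like(u), a * u / c ** 2, a * u ** 2 / c ** 3], axis=1)
    return np.linalg.inv(J.T @ J)


print(np.round(k_gauss_from_fisher(2.0, 1.5, 0.1, 0.01, 0.8), 6))
print(np.round(k_gauss_1d(2.0, 1.5, 0.1, 0.01, 0.8), 6))
```

The position b is uncorrelated with a and c in both cases because its derivative is odd about the peak while the others are even.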

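For the 2D profile parameters giving the amplitude $A$, position $(x_0, y_0)$, and width $(\sigma_X, \sigma_Y)$ of the profile, the covariance matrix for the parameter vector $(A, x_0, y_0, \sigma_X, \sigma_Y)$ under i.i.d. Gaussian noise is[10]

$$K_{\text{Gauss}} = \frac{\sigma^2}{\pi\,\delta_X \delta_Y Q^2}
\begin{pmatrix}
\frac{2}{\sigma_X\sigma_Y} & 0 & 0 & -\frac{1}{A\sigma_Y} & -\frac{1}{A\sigma_X} \\
0 & \frac{2\sigma_X}{A^2\sigma_Y} & 0 & 0 & 0 \\
0 & 0 & \frac{2\sigma_Y}{A^2\sigma_X} & 0 & 0 \\
-\frac{1}{A\sigma_Y} & 0 & 0 & \frac{2\sigma_X}{A^2\sigma_Y} & 0 \\
-\frac{1}{A\sigma_X} & 0 & 0 & 0 & \frac{2\sigma_Y}{A^2\sigma_X}
\end{pmatrix},$$

where $\delta_X$ and $\delta_Y$ are the pixel widths along x and y; the corresponding matrix for Poisson noise is given in the same reference.[10] In both cases, the individual parameter variances are given by the diagonal elements of the covariance matrix.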

Discrete Gaussian

One may ask for a discrete analog to the Gaussian; this is necessary in discrete applications, particularly digital signal processing. A simple answer is to sample the continuous Gaussian, yielding the sampled Gaussian kernel. However, this discrete function does not have the discrete analogs of the properties of the continuous function, and can lead to undesired effects, as described in the article scale space implementation.

An alternative approach is to use the discrete Gaussian kernel:[11]

$$T(n, t) = e^{-t} I_n(t),$$

where $I_n(t)$ denotes the modified Bessel functions of integer order.

This is the discrete analog of the continuous Gaussian in that it is the solution to the discrete diffusion equation (discrete space, continuous time), just as the continuous Gaussian is the solution to the continuous diffusion equation.[11][12]
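A minimal pure-Python sketch of the discrete Gaussian kernel $T(n, t) = e^{-t} I_n(t)$, assuming t > 0 is the scale parameter. The Bessel function is summed from its power series with the factor $e^{-t}$ folded into each term in log space; the 300-term cutoff is an assumption that is ample only for moderate scales (roughly t ≤ 50). The kernel sums to one and its variance is exactly t, mirroring the continuous Gaussian. The function name is hypothetical.

```python
import math


def discrete_gaussian_kernel(t, radius):
    """Values T(n, t) = exp(-t) * I_n(t) for n = -radius, ..., radius.

    I_n(t) is summed from its power series sum_k (t/2)^(2k+n) / (k! (k+n)!),
    with exp(-t) folded into every term in log space so nothing overflows.
    """
    if t <= 0:
        raise ValueError("scale t must be positive")
    log_half_t = math.log(t / 2)
    kernel = []
    for n in range(-radius, radius + 1):
        m = abs(n)                                     # I_{-n}(t) = I_n(t) for integer n
        kernel.append(sum(
            math.exp((2 * k + m) * log_half_t - math.lgamma(k + 1) - math.lgamma(k + m + 1) - t)
            for k in range(300)))
    return kernel


t, radius = 2.0, 30
T = discrete_gaussian_kernel(t, radius)
ns = range(-radius, radius + 1)
print(sum(T))                                          # ≈ 1
print(sum(n * n * T_n for n, T_n in zip(ns, T)))       # ≈ t, the kernel's variance
```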

Applications

Gaussian functions appear in many contexts in the natural sciences, the social sciences, mathematics, and engineering. Some examples include:

- In statistics and probability theory, Gaussian functions appear as the density function of the normal distribution, which is a limiting probability distribution of complicated sums, according to the central limit theorem.

- Gaussian functions are the Green's function for the (homogeneous and isotropic) diffusion equation (and for the heat equation, which has the same form), a partial differential equation that describes the time evolution of a mass-density under diffusion. Specifically, if the mass-density at time t=0 is given by a Dirac delta, which essentially means that the mass is initially concentrated in a single point, then the mass-distribution at time t will be given by a Gaussian function, with the parameter a being linearly related to 1/√t and c being linearly related to √t; this time-varying Gaussian is described by the heat kernel. More generally, if the initial mass-density is φ(x), then the mass-density at later times is obtained by taking the convolution of φ with a Gaussian function. The convolution of a function with a Gaussian is also known as a Weierstrass transform.

- A Gaussian function is the wave function of the ground state of the quantum harmonic oscillator.

- The molecular orbitals used in computational chemistry can be linear combinations of Gaussian functions called Gaussian orbitals (see also basis set).

- Mathematically, the derivatives of the Gaussian function can be represented using Hermite functions. For unit variance, the n-th derivative of the Gaussian is the Gaussian function itself multiplied by the n-th Hermite polynomial, up to scale.

- Because the ground state of the quantum harmonic oscillator is a Gaussian, Gaussian functions are also associated with the vacuum state in quantum field theory.

- Gaussian beams are used in optical systems, microwave systems and lasers.

- In scale space representation, Gaussian functions are used as smoothing kernels for generating multi-scale representations in computer vision and image processing. Specifically, derivatives of Gaussians (Hermite functions) are used as a basis for defining a large number of types of visual operations.

- Gaussian functions are used to define some types of artificial neural networks.

- In fluorescence microscopy a 2D Gaussian function is used to approximate the Airy disk, describing the intensity distribution produced by a point source.

- In signal processing they serve to define Gaussian filters, such as in image processing where 2D Gaussians are used for Gaussian blurs. In digital signal processing, one uses a discrete Gaussian kernel, which may be approximated by binomial coefficients[13] or by sampling a Gaussian.

- In geostatistics they have been used for understanding the variability between the patterns of a complex training image. They are used with kernel methods to cluster the patterns in the feature space.[14]
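The heat-kernel statement in the list above can be made concrete. Assuming unit diffusivity, so that the equation is $u_t = u_{xx}$, the solution started from a Dirac delta at the origin is $u(x,t) = (4\pi t)^{-1/2} e^{-x^2/(4t)}$: a Gaussian with height $a = 1/\sqrt{4\pi t} \propto 1/\sqrt{t}$ and width $c = \sqrt{2t} \propto \sqrt{t}$. The sketch checks the PDE with central finite differences; the function names and step size are illustrative choices.

```python
import math


def heat_kernel(x, t):
    """Solution of u_t = u_xx (unit diffusivity) started from a Dirac delta at x = 0:
    a Gaussian with height 1/sqrt(4*pi*t) and width parameter c = sqrt(2*t)."""
    return math.exp(-x * x / (4 * t)) / math.sqrt(4 * math.pi * t)


def pde_residual(x, t, h=1e-3):
    """Central finite-difference estimate of u_t - u_xx at (x, t); should be close to 0."""
    u_t = (heat_kernel(x, t + h) - heat_kernel(x, t - h)) / (2 * h)
    u_xx = (heat_kernel(x + h, t) - 2 * heat_kernel(x, t) + heat_kernel(x - h, t)) / h ** 2
    return u_t - u_xx


for x, t in ((0.3, 1.0), (1.5, 0.5), (-2.0, 2.0)):
    print(x, t, pde_residual(x, t))
# Quadrupling t halves the peak height (a ∝ 1/sqrt(t)) and doubles c (c ∝ sqrt(t)).
print(heat_kernel(0.0, 1.0), heat_kernel(0.0, 4.0))
```

The residuals are limited by the finite-difference truncation error, not by any mismatch in the formula.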

References

- [1] Squires, G. L. (2001-08-30). Practical Physics (4th ed.). Cambridge University Press. doi:10.1017/cbo9781139164498. ISBN 978-0-521-77940-1. https://www.cambridge.org/core/product/identifier/9781139164498/type/book.

- [2] Folland, Gerald B.; Sitaram, Alladi (1997). "The uncertainty principle: A mathematical survey". The Journal of Fourier Analysis and Applications 3 (3): 207–238. doi:10.1007/BF02649110. ISSN 1069-5869. Bibcode: 1997JFAA....3..207F.

- [3] Weisstein, Eric W. "Fourier Transform – Gaussian". MathWorld. https://mathworld.wolfram.com/FourierTransformGaussian.html.

- [4] Nawri, Nikolai. "Berechnung von Kovarianzellipsen" [Calculation of covariance ellipses]. http://imkbemu.physik.uni-karlsruhe.de/~eisatlas/covariance_ellipses.pdf.

- [5] Parent, A.; Morin, M.; Lavigne, P. (1992). "Propagation of super-Gaussian field distributions". Optical and Quantum Electronics 24 (9): S1071–S1079.

- [6] "GLAD optical software commands manual, Entry on GAUSSIAN command". 2016-12-15. http://www.aor.com/anonymous/pub/commands.pdf.

- [7] Caruana, Richard A.; Searle, Roger B.; Heller, Thomas; Shupack, Saul I. (1986). "Fast algorithm for the resolution of spectra". Analytical Chemistry (American Chemical Society) 58 (6): 1162–1167. doi:10.1021/ac00297a041. ISSN 0003-2700.

- [8] Guo, Hongwei (2011). "A simple algorithm for fitting a Gaussian function". IEEE Signal Processing Magazine 28 (9): 134–137.

- [9] Hagen, N.; Kupinski, M.; Dereniak, E. L. (2007). "Gaussian profile estimation in one dimension". Applied Optics 46: 5374–5383.

- [10] Hagen, N.; Dereniak, E. L. (2008). "Gaussian profile estimation in two dimensions". Applied Optics 47: 6842–6851.

- [11] Lindeberg, T. (March 1990). "Scale-space for discrete signals". IEEE Transactions on Pattern Analysis and Machine Intelligence 12 (3): 234–254.

- [12] Campbell, J. (December 2007). "The SMM model as a boundary value problem using the discrete diffusion equation". Theoretical Population Biology 72 (4): 539–546.

- [13] Haddad, R. A.; Akansu, A. N. (1991). "A Class of Fast Gaussian Binomial Filters for Speech and Image processing". IEEE Transactions on Signal Processing 39 (3): 723–727.

- [14] Honarkhah, M.; Caers, J. (2010). "Stochastic Simulation of Patterns Using Distance-Based Pattern Modeling". Mathematical Geosciences 42: 487–517.

Further reading

- Haberman, Richard (2013). "10.3.3 Inverse Fourier transform of a Gaussian". Applied Partial Differential Equations. Boston: PEARSON. ISBN 978-0-321-79705-6.

External links

- MathWorld, includes a proof for the relations between c and FWHM

- "Integrating The Bell Curve". MathPages.com. http://www.mathpages.com/home/kmath045/kmath045.htm.

- Haskell, Erlang and Perl implementation of Gaussian distribution

- Bensimhoun Michael, N-Dimensional Cumulative Function, And Other Useful Facts About Gaussians and Normal Densities (2009)

- Code for fitting Gaussians in ImageJ and Fiji.
