Antiderivative


In calculus, an antiderivative, inverse derivative, primitive function, primitive integral or indefinite integral[Note 1] of a function f is a differentiable function F whose derivative is equal to the original function f. This can be stated symbolically as F' = f.[1][2] The process of solving for antiderivatives is called antidifferentiation (or indefinite integration), and its opposite operation is called differentiation, which is the process of finding a derivative. Antiderivatives are often denoted by capital Roman letters such as F and G.

Antiderivatives are related to definite integrals through the second fundamental theorem of calculus: the definite integral of a function over a closed interval where the function is Riemann integrable is equal to the difference between the values of an antiderivative evaluated at the endpoints of the interval.

In physics, antiderivatives arise in the context of rectilinear motion (e.g., in explaining the relationship between position, velocity and acceleration).[3] The discrete equivalent of the notion of antiderivative is antidifference.

Examples

The function $F(x) = \tfrac{x^3}{3}$ is an antiderivative of $f(x) = x^2$, since the derivative of $\tfrac{x^3}{3}$ is $x^2$. Since the derivative of a constant is zero, $x^2$ has infinitely many antiderivatives, such as $\tfrac{x^3}{3}$, $\tfrac{x^3}{3} + 1$, $\tfrac{x^3}{3} - 2$, etc. Thus, all the antiderivatives of $x^2$ can be obtained by changing the value of c in $F(x) = \tfrac{x^3}{3} + c$, where c is an arbitrary constant known as the constant of integration. The graphs of the antiderivatives of a given function are vertical translations of each other, with each graph's vertical location depending on the value of c.

More generally, the power function $f(x) = x^n$ has antiderivative $F(x) = \tfrac{x^{n+1}}{n+1} + c$ if n ≠ −1, and $F(x) = \ln|x| + c$ if n = −1.
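The power-function rule can be spot-checked numerically. The following is a minimal sketch, assuming the standard rule that $x^n$ has antiderivative $x^{n+1}/(n+1) + c$ for n ≠ −1 and $\ln|x| + c$ for n = −1. The helper names `derivative` and `power_antiderivative` are illustrative and not part of any library.

```python
import math

def derivative(F, x, h=1e-6):
    """Central-difference approximation of F'(x)."""
    return (F(x + h) - F(x - h)) / (2 * h)

def power_antiderivative(n, c=0.0):
    """Return an antiderivative of x**n, shifted by the constant c."""
    if n == -1:
        return lambda x: math.log(abs(x)) + c
    return lambda x: x ** (n + 1) / (n + 1) + c

x = 1.7
for n in (2, 5, -1, -3):
    for c in (0.0, 1.0, -2.0):
        F = power_antiderivative(n, c)
        # The constant c does not affect the derivative.
        print(n, c, derivative(F, x), x ** n)
```

Each printed pair should agree to several decimal places regardless of c, which is the numerical counterpart of the statement that antiderivatives differ only by a constant.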

In physics, the integration of acceleration yields velocity plus a constant. The constant is the initial velocity term that would be lost upon taking the derivative of velocity, because the derivative of a constant term is zero. This same pattern applies to further integrations and derivatives of motion (position, velocity, acceleration, and so on).[3] Thus, integration produces the relations of acceleration, velocity and displacement:

$$\int a \, dt = v + C$$

$$\int v \, dt = s + C$$

Uses and properties

Antiderivatives can be used to compute definite integrals, using the fundamental theorem of calculus: if F is an antiderivative of the continuous function f over the interval $[a, b]$, then:

$$\int_a^b f(x)\,dx = F(b) - F(a).$$
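As a rough numerical illustration of this statement, the sketch below compares a composite Simpson approximation of $\int_a^b \cos x\,dx$ with $F(b) - F(a)$ for $F = \sin$. The choice of integrand, the interval, and the helper `simpson` are assumptions made for the example only.

```python
import math

def simpson(f, a, b, n=1000):
    """Composite Simpson's rule on [a, b] with n (even) subintervals."""
    if n % 2:
        n += 1
    h = (b - a) / n
    total = f(a) + f(b)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(a + i * h)
    return total * h / 3

a, b = 0.0, 2.0
numeric = simpson(math.cos, a, b)   # approximates the definite integral
exact = math.sin(b) - math.sin(a)   # F(b) - F(a) with F = sin
print(numeric, exact)
```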

Because of this, each of the infinitely many antiderivatives of a given function f may be called the "indefinite integral" of f and written using the integral symbol with no bounds:

$$\int f(x)\,dx.$$

If F is an antiderivative of f, and the function f is defined on some interval, then every other antiderivative G of f differs from F by a constant: there exists a number c such that $G(x) = F(x) + c$ for all x. The number c is called the constant of integration. If the domain of F is a disjoint union of two or more (open) intervals, then a different constant of integration may be chosen for each of the intervals. For instance,

$$F(x) = \begin{cases} -\dfrac{1}{x} + c_1, & x < 0 \\ -\dfrac{1}{x} + c_2, & x > 0 \end{cases}$$

is the most general antiderivative of $f(x) = 1/x^2$ on its natural domain $(-\infty, 0) \cup (0, \infty)$.
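The role of separate constants on a disconnected domain can be sketched in code. The example below assumes the function $f(x) = 1/x^2$ on $(-\infty, 0) \cup (0, \infty)$; the factory `make_F` and the particular constants are hypothetical names and values chosen for illustration.

```python
def derivative(F, x, h=1e-6):
    """Central-difference approximation of F'(x)."""
    return (F(x + h) - F(x - h)) / (2 * h)

def make_F(c1, c2):
    """Antiderivative of 1/x**2 with constant c1 for x < 0 and c2 for x > 0."""
    def F(x):
        if x == 0:
            raise ValueError("0 is outside the domain")
        return -1 / x + (c1 if x < 0 else c2)
    return F

F = make_F(c1=5.0, c2=-3.0)
G = make_F(c1=0.0, c2=0.0)

# Both F and G differentiate to 1/x**2 on each side of 0.
for x in (-2.0, -0.5, 0.5, 2.0):
    print(x, derivative(F, x), derivative(G, x), 1 / x ** 2)

# F - G is constant on each interval, but the two constants differ.
left_gap = F(-1.0) - G(-1.0)
right_gap = F(1.0) - G(1.0)
print(left_gap, right_gap)
```

Here F and G are both antiderivatives of the same function, yet no single constant c satisfies F = G + c on the whole domain.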

Every continuous function f has an antiderivative, and one antiderivative F is given by the definite integral of f with variable upper boundary: $F(x) = \int_a^x f(t)\,dt$ for any a in the domain of f. Varying the lower boundary produces other antiderivatives, but not necessarily all possible antiderivatives. This is another formulation of the fundamental theorem of calculus.
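To see why varying the lower boundary need not reach every antiderivative, consider $f = \cos$ as an illustrative choice. Then $\int_a^x \cos t\,dt = \sin x - \sin a$, so the constant $-\sin a$ always lies in $[-1, 1]$, and an antiderivative such as $\sin x + 2$ is never obtained this way. The sketch below checks this numerically; `simpson` and `integral_from` are hypothetical helper names.

```python
import math

def simpson(f, a, b, n=200):
    """Composite Simpson's rule; also valid when b < a (the sign flips)."""
    if n % 2:
        n += 1
    h = (b - a) / n
    total = f(a) + f(b)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(a + i * h)
    return total * h / 3

def integral_from(f, a):
    """Return the function x -> integral of f from a to x."""
    return lambda x: simpson(f, a, x)

for a in (0.0, 1.0, math.pi / 2, -2.0):
    G = integral_from(math.cos, a)
    # G(x) - sin(x) should be the same constant, -sin(a), at every x.
    offsets = [G(x) - math.sin(x) for x in (-1.0, 0.3, 2.5)]
    print(a, offsets, -math.sin(a))
```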

There are many functions whose antiderivatives, even though they exist, cannot be expressed in terms of elementary functions (like polynomials, exponential functions, logarithms, trigonometric functions, inverse trigonometric functions and their combinations). Examples of these are

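- the error function, $\int e^{-x^2}\,dx$,

- the Fresnel function, $\int \sin x^2\,dx$,

- the sine integral, $\int \frac{\sin x}{x}\,dx$,

- the logarithmic integral function, $\int \frac{1}{\log x}\,dx$, and

- sophomore's dream, $\int x^x\,dx$.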

For a more detailed discussion, see also Differential Galois theory.

Techniques of integration

Finding antiderivatives of elementary functions is often considerably harder than finding their derivatives (indeed, there is no pre-defined method for computing indefinite integrals).[4] For some elementary functions, it is impossible to find an antiderivative in terms of other elementary functions. To learn more, see elementary functions and nonelementary integral.

There exist many properties and techniques for finding antiderivatives. These include, among others:

- The linearity of integration (which breaks complicated integrals into simpler ones)

- Integration by substitution, often combined with trigonometric identities or the natural logarithm

- The inverse chain rule method (a special case of integration by substitution)

- Integration by parts (to integrate products of functions)

- Inverse function integration (a formula that expresses the antiderivative of the inverse $f^{-1}$ of an invertible and continuous function f, in terms of the antiderivative of f and of $f^{-1}$)

- The method of partial fractions in integration (which allows us to integrate all rational functions—fractions of two polynomials)

- The Risch algorithm

- Additional techniques for multiple integrations (see for instance double integrals, polar coordinates, the Jacobian and Stokes' theorem)

- Numerical integration (a technique for approximating a definite integral when no elementary antiderivative exists, as in the case of $\exp(-x^2)$)

- Algebraic manipulation of integrand (so that other integration techniques, such as integration by substitution, may be used)

- Cauchy formula for repeated integration (to calculate the n-times antiderivative of a function)
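As a small illustration of the numerical integration item in the list above, the sketch below approximates $\int_0^x e^{-t^2}\,dt$ with composite Simpson's rule and compares the result with the standard library's `math.erf`, using the identity $\operatorname{erf}(x) = \frac{2}{\sqrt{\pi}} \int_0^x e^{-t^2}\,dt$. The helper names `simpson`, `gaussian` and `erf_via_quadrature` are made up for this example, and Simpson's rule is only one of many possible quadrature choices.

```python
import math

def simpson(f, a, b, n=1000):
    """Composite Simpson's rule on [a, b] with n (even) subintervals."""
    if n % 2:
        n += 1
    h = (b - a) / n
    total = f(a) + f(b)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(a + i * h)
    return total * h / 3

def gaussian(t):
    return math.exp(-t * t)

def erf_via_quadrature(x):
    """Approximate erf(x) by integrating exp(-t**2) from 0 to x."""
    return 2 / math.sqrt(math.pi) * simpson(gaussian, 0.0, x)

for x in (0.5, 1.0, 2.0):
    print(x, erf_via_quadrature(x), math.erf(x))
```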

Computer algebra systems can be used to automate some or all of the work involved in the symbolic techniques above, which is particularly useful when the algebraic manipulations involved are very complex or lengthy. Integrals which have already been derived can be looked up in a table of integrals.

Of non-continuous functions

Non-continuous functions can have antiderivatives. While there are still open questions in this area, it is known that:

- Some highly pathological functions with large sets of discontinuities may nevertheless have antiderivatives.

- In some cases, the antiderivatives of such pathological functions may be found by Riemann integration, while in other cases these functions are not Riemann integrable.

Assuming that the domains of the functions are open intervals:

- A necessary, but not sufficient, condition for a function f to have an antiderivative is that f have the intermediate value property. That is, if [a, b] is a subinterval of the domain of f and y is any real number between f(a) and f(b), then there exists a c between a and b such that f(c) = y. This is a consequence of Darboux's theorem.

- The set of discontinuities of f must be a meagre set. This set must also be an F-sigma set (since the set of discontinuities of any function must be of this type). Moreover, for any meagre F-sigma set, one can construct some function f having an antiderivative, which has the given set as its set of discontinuities.

- If f has an antiderivative, is bounded on closed finite subintervals of the domain and has a set of discontinuities of Lebesgue measure 0, then an antiderivative may be found by integration in the sense of Lebesgue. In fact, using more powerful integrals like the Henstock–Kurzweil integral, every function for which an antiderivative exists is integrable, and its general integral coincides with its antiderivative.

- If f has an antiderivative F on a closed interval $[a, b]$, then for any choice of partition $a = x_0 < x_1 < x_2 < \dots < x_n = b$, if one chooses sample points $x_i^* \in [x_{i-1}, x_i]$ as specified by the mean value theorem, then the corresponding Riemann sum telescopes to the value $F(b) - F(a)$: $\sum_{i=1}^n f(x_i^*)(x_i - x_{i-1}) = \sum_{i=1}^n [F(x_i) - F(x_{i-1})] = F(x_n) - F(x_0) = F(b) - F(a)$. However, if f is unbounded, or if f is bounded but the set of discontinuities of f has positive Lebesgue measure, a different choice of sample points may give a significantly different value for the Riemann sum, no matter how fine the partition. See Example 4 below.
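The telescoping behaviour described in the last item can be sketched for a simple case. The example assumes $f(x) = x^2$ with antiderivative $F(x) = x^3/3$ on $[0, 2]$, where the mean value theorem point on each subinterval can be computed in closed form; the partition and the helper names are arbitrary illustrative choices.

```python
import math

def F(x):
    return x ** 3 / 3

def f(x):
    return x ** 2

def mvt_point(x0, x1):
    """Point c in [x0, x1] with f(c) = (F(x1) - F(x0)) / (x1 - x0).

    The closed form below is valid for 0 <= x0 < x1.
    """
    slope = (F(x1) - F(x0)) / (x1 - x0)
    return math.sqrt(slope)

def riemann_sum(partition, choose):
    """Riemann sum of f using the sample point choose(x0, x1) on each piece."""
    return sum(f(choose(x0, x1)) * (x1 - x0)
               for x0, x1 in zip(partition, partition[1:]))

# A deliberately coarse and irregular partition of [0, 2].
partition = [0.0, 0.3, 0.35, 1.2, 1.9, 2.0]

mvt_sum = riemann_sum(partition, mvt_point)
left_sum = riemann_sum(partition, lambda x0, x1: x0)
print(mvt_sum, F(2.0) - F(0.0), left_sum)
```

With the mean value theorem sample points the sum matches $F(b) - F(a)$ up to rounding even on this coarse partition, whereas the left-endpoint sum is only an approximation.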

Some examples

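- The function $f(x) = 2x \sin\left(\frac{1}{x}\right) - \cos\left(\frac{1}{x}\right)$ with $f(0) = 0$ is not continuous at $x = 0$ but has the antiderivative $F(x) = x^2 \sin\left(\frac{1}{x}\right)$ with $F(0) = 0$. Since f is bounded on closed finite intervals and is only discontinuous at 0, the antiderivative F may be obtained by integration: $F(x) = \int_0^x f(t)\,dt$.

- The function $f(x) = 2x \sin\left(\frac{1}{x^2}\right) - \frac{2}{x} \cos\left(\frac{1}{x^2}\right)$ with $f(0) = 0$ is not continuous at $x = 0$ but has the antiderivative $F(x) = x^2 \sin\left(\frac{1}{x^2}\right)$ with $F(0) = 0$. Unlike Example 1, f(x) is unbounded in any interval containing 0, so the Riemann integral is undefined.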

- If f(x) is the function in Example 1 and F is its antiderivative, and $\{x_n\}_{n \geq 1}$ is a dense countable subset of the open interval $(-1, 1)$, then the function $g(x) = \sum_{n=1}^{\infty} \frac{f(x - x_n)}{2^n}$ has an antiderivative $G(x) = \sum_{n=1}^{\infty} \frac{F(x - x_n)}{2^n}$. The set of discontinuities of g is precisely the set $\{x_n\}_{n \geq 1}$. Since g is bounded on closed finite intervals and the set of discontinuities has measure 0, the antiderivative G may be found by integration.

- Let be a dense countable subset of the open interval Consider the everywhere continuous strictly increasing function

It can be shown that

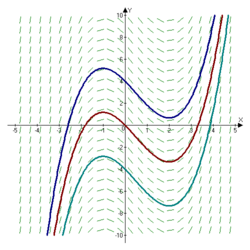

Figure 1.

Figure 2. for all values x where the series converges, and that the graph of F(x) has vertical tangent lines at all other values of x. In particular the graph has vertical tangent lines at all points in the set .

Moreover for all x where the derivative is defined. It follows that the inverse function is differentiable everywhere and that

for all x in the set which is dense in the interval Thus g has an antiderivative G. On the other hand, it can not be true that

since for any partition of , one can choose sample points for the Riemann sum from the set , giving a value of 0 for the sum. It follows that g has a set of discontinuities of positive Lebesgue measure. Figure 1 on the right shows an approximation to the graph of g(x) where and the series is truncated to 8 terms. Figure 2 shows the graph of an approximation to the antiderivative G(x), also truncated to 8 terms. On the other hand if the Riemann integral is replaced by the Lebesgue integral, then Fatou's lemma or the dominated convergence theorem shows that g does satisfy the fundamental theorem of calculus in that context. - In Examples 3 and 4, the sets of discontinuities of the functions g are dense only in a finite open interval However, these examples can be easily modified so as to have sets of discontinuities which are dense on the entire real line . Let Then has a dense set of discontinuities on and has antiderivative

- Using a similar method as in Example 5, one can modify g in Example 4 so as to vanish at all rational numbers. If one uses a naive version of the Riemann integral defined as the limit of left-hand or right-hand Riemann sums over regular partitions, one will obtain that the integral of such a function g over an interval $[a, b]$ is 0 whenever a and b are both rational, instead of $G(b) - G(a)$. Thus the fundamental theorem of calculus will fail spectacularly.

- A function which has an antiderivative may still fail to be Riemann integrable. The derivative of Volterra's function is an example.
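The behaviour in Example 1 can be probed numerically. The sketch below assumes the classical pair $F(x) = x^2 \sin(1/x)$, $f(x) = 2x \sin(1/x) - \cos(1/x)$ with $F(0) = f(0) = 0$. It checks that the difference quotients of F at 0 shrink to 0 (so $F'(0) = 0 = f(0)$), while f takes values near −1 along the sequence $x_k = 1/(2\pi k)$, which tends to 0, so f is not continuous there. The sample step sizes and indices are arbitrary.

```python
import math

def F(x):
    return x * x * math.sin(1 / x) if x != 0 else 0.0

def f(x):
    return 2 * x * math.sin(1 / x) - math.cos(1 / x) if x != 0 else 0.0

# Difference quotients (F(h) - F(0)) / h = h * sin(1/h) are bounded by |h|.
steps = (1e-2, 1e-4, 1e-6)
quotients = [(F(h) - F(0.0)) / h for h in steps]
print(quotients)

# Along x_k = 1 / (2*pi*k) the values of f stay near -1, not near f(0) = 0.
values = [f(1 / (2 * math.pi * k)) for k in (1, 10, 100, 1000)]
print(values)
```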

Basic formulae

- If $\frac{d}{dx} f(x) = g(x)$, then $\int g(x)\,dx = f(x) + C$.

See also

- Antiderivative (complex analysis)

- Formal antiderivative

- Jackson integral

- Lists of integrals

- Symbolic integration

- Area

Notes

- [Note 1] Antiderivatives are also called general integrals, and sometimes integrals. The latter term is generic, and refers not only to indefinite integrals (antiderivatives), but also to definite integrals. When the word integral is used without additional specification, the reader is supposed to deduce from the context whether it refers to a definite or indefinite integral. Some authors define the indefinite integral of a function as the set of its infinitely many possible antiderivatives. Others define it as an arbitrarily selected element of that set. This article adopts the latter approach. In English A-Level Mathematics textbooks one can find the term complete primitive: L. Bostock and S. Chandler (1978), Pure Mathematics 1, state that "the solution of a differential equation including the arbitrary constant is called the general solution (or sometimes the complete primitive)".

References

- [1] Stewart, James (2008). Calculus: Early Transcendentals (6th ed.). Brooks/Cole. ISBN 978-0-495-01166-8. https://archive.org/details/calculusearlytra00stew_1.

- [2] Larson, Ron; Edwards, Bruce H. (2009). Calculus (9th ed.). Brooks/Cole. ISBN 978-0-547-16702-2.

- [3] "4.9: Antiderivatives". 2017-04-27. https://math.libretexts.org/Bookshelves/Calculus/Map%3A_Calculus__Early_Transcendentals_(Stewart)/04%3A_Applications_of_Differentiation/4.09%3A_Antiderivatives.

- [4] "Antiderivative and Indefinite Integration | Brilliant Math & Science Wiki". https://brilliant.org/wiki/antiderivative-and-indefinite-integration/.

Further reading

- Introduction to Classical Real Analysis, by Karl R. Stromberg; Wadsworth, 1981

- Historical Essay On Continuity Of Derivatives by Dave L. Renfro

External links

- Wolfram Integrator — Free online symbolic integration with Mathematica

- Function Calculator from WIMS

- Integral at HyperPhysics

- Antiderivatives and indefinite integrals at the Khan Academy

- Integral calculator at Symbolab

- The Antiderivative at MIT

- Introduction to Integrals at SparkNotes

- Antiderivatives at Harvey Mudd College
