Reproducibility

Reproducibility, closely related to replicability and repeatability, is a major principle underpinning the scientific method. For the findings of a study to be reproducible, the results obtained by an experiment, an observational study, or a statistical analysis of a data set should be achieved again with a high degree of reliability when the study is replicated. There are different kinds of replication,[1] but replication studies typically involve different researchers using the same methodology. Only after one or several such successful replications should a result be recognized as scientific knowledge.

History

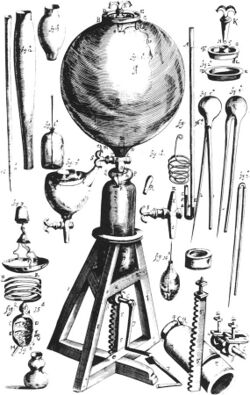

The first to stress the importance of reproducibility in science was the Anglo-Irish chemist Robert Boyle, in England in the 17th century. Boyle's air pump was designed to generate and study a vacuum, which at the time was a very controversial concept. Indeed, distinguished philosophers such as René Descartes and Thomas Hobbes denied the very possibility that a vacuum could exist. Historians of science Steven Shapin and Simon Schaffer, in their 1985 book Leviathan and the Air-Pump, describe the debate between Boyle and Hobbes, ostensibly over the nature of vacuum, as fundamentally an argument about how useful knowledge should be gained. Boyle, a pioneer of the experimental method, maintained that the foundations of knowledge should be constituted by experimentally produced facts, which can be made believable to a scientific community by their reproducibility. By repeating the same experiment over and over again, Boyle argued, the certainty of fact will emerge.

The air pump, which in the 17th century was a complicated and expensive apparatus to build, also led to one of the first documented disputes over the reproducibility of a particular scientific phenomenon. In the 1660s, the Dutch scientist Christiaan Huygens built his own air pump in Amsterdam, the first one outside the direct management of Boyle and his assistant at the time Robert Hooke. Huygens reported an effect he termed "anomalous suspension", in which water appeared to levitate in a glass jar inside his air pump (in fact suspended over an air bubble), but Boyle and Hooke could not replicate this phenomenon in their own pumps. As Shapin and Schaffer describe, "it became clear that unless the phenomenon could be produced in England with one of the two pumps available, then no one in England would accept the claims Huygens had made, or his competence in working the pump". Huygens was finally invited to England in 1663, and under his personal guidance Hooke was able to replicate anomalous suspension of water. Following this Huygens was elected a Foreign Member of the Royal Society. However, Shapin and Schaffer also note that "the accomplishment of replication was dependent on contingent acts of judgment. One cannot write down a formula saying when replication was or was not achieved".[2]

The philosopher of science Karl Popper noted briefly in his famous 1934 book The Logic of Scientific Discovery that "non-reproducible single occurrences are of no significance to science".[3] The statistician Ronald Fisher wrote in his 1935 book The Design of Experiments, which set the foundations for the modern scientific practice of hypothesis testing and statistical significance, that "we may say that a phenomenon is experimentally demonstrable when we know how to conduct an experiment which will rarely fail to give us statistically significant results".[4] Such assertions express a common dogma in modern science that reproducibility is a necessary condition (although not necessarily sufficient) for establishing a scientific fact, and in practice for establishing scientific authority in any field of knowledge. However, as Shapin and Schaffer note above, this dogma is not well formulated quantitatively, in the way that statistical significance is, for instance, and it is therefore not explicitly established how many times a fact must be replicated to be considered reproducible.

Terminology

Replicability and repeatability are related terms broadly or loosely synonymous with reproducibility (for example, among the general public), but they are often usefully differentiated in more precise senses, as follows.

Two major steps are naturally distinguished in connection with the reproducibility of experimental or observational studies. When new data are obtained in the attempt to achieve it, the term replicability is often used, and the new study is a replication or replicate of the original one. When the same results are obtained by analyzing the data set of the original study again with the same procedures, many authors use the term reproducibility in a narrow, technical sense that comes from its use in computational research. Repeatability refers to the repetition of the experiment within the same study by the same researchers. Reproducibility in the original, wide sense is only acknowledged if a replication performed by an independent team of researchers is successful.

The terms reproducibility and replicability sometimes appear even in the scientific literature with reversed meaning,[5][6] as different research fields settled on their own definitions for the same terms.[7]

Measures of reproducibility and repeatability

In chemistry, the terms reproducibility and repeatability are used with a specific quantitative meaning.[8] In inter-laboratory experiments, a concentration or other quantity of a chemical substance is measured repeatedly in different laboratories to assess the variability of the measurements. The standard deviation of the difference between two values obtained within the same laboratory is then called repeatability. The standard deviation of the difference between two measurements from different laboratories is called reproducibility.[9] These measures are related to the more general concept of variance components in metrology.
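The two quantities can be illustrated with a minimal sketch. It assumes a balanced design (every laboratory reports the same number of repeated results) and a simple variance-components model in which each result is a true value plus a laboratory effect plus independent measurement noise. The function name `precision_components`, the laboratory names, and the concentrations are made up for illustration and do not come from any standard or data set.

```python
import math
from statistics import mean, variance


def precision_components(lab_results):
    """Estimate within-lab and between-lab spread for a balanced design.

    lab_results maps a laboratory name to a list of repeated measurements;
    every laboratory must contribute the same number of results (at least 2).
    """
    groups = list(lab_results.values())
    if len(groups) < 2:
        raise ValueError("need at least 2 laboratories")
    n = len(groups[0])
    if n < 2 or any(len(g) != n for g in groups):
        raise ValueError("need a balanced design with at least 2 results per lab")

    # Within-laboratory variance: pooled sample variance of the repeats.
    var_within = mean([variance(g) for g in groups])

    # The variance of the lab means contains the between-lab component
    # plus var_within / n, so subtract that share (floored at zero).
    var_of_means = variance([mean(g) for g in groups])
    var_between = max(0.0, var_of_means - var_within / n)

    s_r = math.sqrt(var_within)                # one result, same laboratory
    s_R = math.sqrt(var_within + var_between)  # one result, any laboratory
    return {
        "s_r": s_r,
        "s_R": s_R,
        # Standard deviation of the difference between two independent results.
        "sd_diff_same_lab": math.sqrt(2) * s_r,
        "sd_diff_between_labs": math.sqrt(2) * s_R,
    }


# Hypothetical concentrations (mg/L) from three hypothetical laboratories.
example = {
    "lab_A": [10.1, 10.3],
    "lab_B": [10.6, 10.4],
    "lab_C": [9.9, 10.1],
}
result = precision_components(example)
```

Under these assumptions the between-laboratory figure can never be smaller than the within-laboratory one, because it adds the laboratory effect to the same measurement noise. Published standards define the estimators and reporting conventions in more detail than this sketch does.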

Reproducible research

Reproducible research method

The term reproducible research refers to the idea that scientific results should be documented in such a way that their deduction is fully transparent. This requires a detailed description of the methods used to obtain the data[10][11] and making the full dataset and the code to calculate the results easily accessible.[12][13][14][15][16][17] This is an essential part of open science.

To make any research project computationally reproducible, general practice involves all data and files being clearly separated, labelled, and documented. All operations should be fully documented and automated as much as practicable, avoiding manual intervention where feasible. The workflow should be designed as a sequence of smaller steps that are combined so that the intermediate outputs from one step directly feed as inputs into the next step. Version control should be used as it lets the history of the project be easily reviewed and allows for the documenting and tracking of changes in a transparent manner.

A basic workflow for reproducible research involves data acquisition, data processing and data analysis. Data acquisition consists primarily of obtaining primary data from a primary source, such as surveys, field observations or experimental research, or of obtaining data from an existing source. Data processing involves the processing and review of the raw data collected in the first stage; it includes data entry, data manipulation and filtering, and may be done using software. The data should be digitized and prepared for data analysis. In the analysis stage, software may be used to interpret or visualise the data and to produce the desired results of the research, such as quantitative results including figures and tables. The use of software and automation enhances the reproducibility of research methods.[18]
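The workflow described above can be sketched as a chain of small automated steps in which each step's output is the next step's input. This is only an illustration under stated assumptions: the function names `acquire`, `process`, `analyse`, `checksum` and `run_pipeline` are hypothetical, the "acquired" data are simulated from a fixed random seed rather than collected, and the plausible range used for filtering is arbitrary.

```python
import hashlib
import json
import random


def acquire(seed, n=20):
    """Stand-in for data acquisition: simulated measurements from a fixed seed."""
    rng = random.Random(seed)
    return [round(rng.gauss(10.0, 1.0), 3) for _ in range(n)]


def process(raw):
    """Data processing: keep only values inside a documented plausible range."""
    return [x for x in raw if 7.0 <= x <= 13.0]


def analyse(clean):
    """Data analysis: the summary statistics that would be reported."""
    return {"n": len(clean), "mean": round(sum(clean) / len(clean), 3)}


def checksum(obj):
    """SHA-256 of a canonical JSON encoding, used to compare runs."""
    payload = json.dumps(obj, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def run_pipeline(seed):
    """Run every step in order and log a checksum of each intermediate output."""
    log = {"seed": seed}
    data = seed
    for step in (acquire, process, analyse):
        data = step(data)  # the output of one step feeds the next
        log[step.__name__] = checksum(data)
    return data, log


result, log = run_pipeline(seed=42)
rerun_result, rerun_log = run_pipeline(seed=42)
```

Running the pipeline twice with the same seed should give identical results and identical checksums at every stage, so a mismatch points to the step where two runs diverged. Keeping such a script and its log under version control gives a record of how each reported number was produced.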

There are systems that facilitate such documentation, like the R Markdown language[19] or the Jupyter notebook.[20][21][22] The Open Science Framework provides a platform and useful tools to support reproducible research.

Reproducible research in practice

Psychology has seen a renewal of internal concerns about irreproducible results (see the entry on the replication crisis for empirical results on success rates of replications). A 2006 study showed that, of 141 authors of empirical articles published by the American Psychological Association (APA), 103 (73%) did not respond with their data over a six-month period.[23] A follow-up study published in 2015 found that 246 out of 394 contacted authors of papers in APA journals (62%) did not share their data upon request.[24] A 2012 paper suggested that researchers should publish data along with their works, and a dataset was released alongside it as a demonstration.[25] In 2017, an article published in Scientific Data suggested that this may not be sufficient and that the whole analysis context should be disclosed.[26]

In economics, concerns have been raised about the credibility and reliability of published research. In other sciences, reproducibility is regarded as fundamental and is often a prerequisite for publication; in the economic sciences, however, it is not treated as a high priority. Most peer-reviewed economics journals do not take any substantive measures to ensure that published results are reproducible, although the top economics journals have been moving to adopt mandatory data and code archives.[27] Researchers have little or no incentive to share their data, and authors would have to bear the costs of compiling data into reusable forms. Economic research is often not reproducible because only a portion of journals have adequate disclosure policies for datasets and program code, and even where such policies exist, authors frequently do not comply with them or publishers do not enforce them. A study of 599 articles published in 37 peer-reviewed journals revealed that while some journals have achieved significant compliance rates, a significant portion have only partially complied or not complied at all. At the article level, the average compliance rate was 47.5%; at the journal level, it was 38%, ranging from 13% to 99%.[28]

A 2018 study published in the journal PLOS ONE found that 14.4% of a sample of public health statistics researchers had shared their data or code or both.[29]

There have been initiatives to improve reporting and hence reproducibility in the medical literature for many years, beginning with the CONSORT initiative, which is now part of a wider initiative, the EQUATOR Network. This group has recently turned its attention to how better reporting might reduce waste in research,[30] especially biomedical research.

Reproducible research is key to new discoveries in pharmacology. A Phase I discovery will be followed by Phase II reproductions as a drug develops towards commercial production. In recent decades Phase II success has fallen from 28% to 18%. A 2011 study found that 65% of medical studies were inconsistent when re-tested, and only 6% were completely reproducible.[31]

Some efforts have been made to increase replicability beyond the social and biomedical sciences. Studies in the humanities tend to rely more on expertise and hermeneutics, which may make replicability more difficult. Nonetheless, there have been calls for more transparency and documentation in the humanities.[32]

Noteworthy irreproducible results

Hideyo Noguchi became famous for correctly identifying the bacterial agent of syphilis, but also claimed that he could culture this agent in his laboratory. Nobody else has been able to produce this latter result.[33]

In March 1989, University of Utah chemists Stanley Pons and Martin Fleischmann reported the production of excess heat that could only be explained by a nuclear process ("cold fusion"). The report was astounding given the simplicity of the equipment: it was essentially an electrolysis cell containing heavy water and a palladium cathode which rapidly absorbed the deuterium produced during electrolysis. The news media reported on the experiments widely, and it was a front-page item on many newspapers around the world (see science by press conference). Over the next several months others tried to replicate the experiment, but were unsuccessful.[34]

Nikola Tesla claimed as early as 1899 to have used a high frequency current to light gas-filled lamps from over 25 miles (40 km) away without using wires. In 1904 he built Wardenclyffe Tower on Long Island to demonstrate means to send and receive power without connecting wires. The facility was never fully operational and was not completed due to economic problems, so no attempt to reproduce his first result was ever carried out.[35]

Other examples where contrary evidence has refuted the original claim:

- N-rays, a hypothesized form of radiation subsequently found to be illusory

- Polywater, a hypothesized polymerized form of water found to be just water with common contaminations

- Stimulus-triggered acquisition of pluripotency, revealed to be the result of fraud

- GFAJ-1, a bacterium that could purportedly incorporate arsenic into its DNA in place of phosphorus

- MMR vaccine controversy — a study in The Lancet claiming the MMR vaccine caused autism was revealed to be fraudulent

- Schön scandal — semiconductor "breakthroughs" revealed to be fraudulent

- Power posing — a social psychology phenomenon that went viral after being the subject of a very popular TED talk, but could not be replicated in dozens of studies[36]

See also

- Metascience

- Accuracy

- ANOVA gauge R&R

- Contingency

- Corroboration

- Reproducible builds

- Falsifiability

- Hypothesis

- Measurement uncertainty

- Pathological science

- Pseudoscience

- Replication (statistics)

- Replication crisis

- Research transparency

- ReScience C (journal)

- Retraction in academic publishing

- Tautology

- Testability

- Verification and validation

References

- ↑ Tsang, Eric W. K.; Kwan, Kai-man (1999). "Replication and Theory Development in Organizational Science: A Critical Realist Perspective". Academy of Management Review 24 (4): 759–780. doi:10.5465/amr.1999.2553252. ISSN 0363-7425.

- ↑ Steven Shapin and Simon Schaffer, Leviathan and the Air-Pump, Princeton University Press, Princeton, New Jersey (1985).

- ↑ This citation is from the 1959 translation to English, Karl Popper, The Logic of Scientific Discovery, Routledge, London, 1992, p. 66.

- ↑ Ronald Fisher, The Design of Experiments (1971) [1935] (9th ed.), Macmillan, p. 14.

- ↑ Barba, Lorena A. (2018). "Terminologies for Reproducible Research". arXiv:1802.03311 [cs.DL].

- ↑ Liberman, Mark. "Replicability vs. reproducibility — or is it the other way round?". https://languagelog.ldc.upenn.edu/nll/?p=21956.

- ↑ Van Eyghen, Hans; Van den Brink, Gijsbert; Peels, Rik (2024). "Brooke on the Merton Thesis: A Direct Replication of John Hedley Brooke's Chapter on Scientific and Religious Reform". Zygon 59 (2). https://www.zygonjournal.org/article/id/11497/.

- ↑ IUPAC - reproducibility (R05305). doi:10.1351/goldbook.R05305. https://goldbook.iupac.org/terms/view/R05305. Retrieved 2022-03-04.

- ↑ Subcommittee E11.20 on Test Method Evaluation and Quality Control (2014). "Standard Practice for Use of the Terms Precision and Bias in ASTM Test Methods". ASTM International. https://www.astm.org/Standards/E177.htm.

- ↑ King, Gary (1995). "Replication, Replication". PS: Political Science and Politics 28 (3): 444–452. doi:10.2307/420301. ISSN 1049-0965. http://nrs.harvard.edu/urn-3:HUL.InstRepos:4266312.

- ↑ Kühne, Martin; Liehr, Andreas W. (2009). "Improving the Traditional Information Management in Natural Sciences". Data Science Journal 8 (1): 18–27. doi:10.2481/dsj.8.18. https://datascience.codata.org/jms/article/download/dsj.8.18/198. Retrieved 2019-09-05.

- ↑ Fomel, Sergey; Claerbout, Jon (2009). "Guest Editors' Introduction: Reproducible Research". Computing in Science and Engineering 11 (1): 5–7. doi:10.1109/MCSE.2009.14. Bibcode: 2009CSE....11a...5F.

- ↑ Buckheit, Jonathan B.; Donoho, David L. (May 1995). WaveLab and Reproducible Research (Report). California, United States: Stanford University, Department of Statistics. Technical Report No. 474. https://statistics.stanford.edu/sites/default/files/EFS%20NSF%20474.pdf. Retrieved 5 January 2015.

- ↑ "The Yale Law School Round Table on Data and Core Sharing: "Reproducible Research"". Computing in Science and Engineering 12 (5): 8–12. 2010. doi:10.1109/MCSE.2010.113.

- ↑ Marwick, Ben (2016). "Computational reproducibility in archaeological research: Basic principles and a case study of their implementation". Journal of Archaeological Method and Theory 24 (2): 424–450. doi:10.1007/s10816-015-9272-9. https://ro.uow.edu.au/smhpapers/4034.

- ↑ Goodman, Steven N.; Fanelli, Daniele; Ioannidis, John P. A. (1 June 2016). "What does research reproducibility mean?". Science Translational Medicine 8 (341): 341ps12. doi:10.1126/scitranslmed.aaf5027. PMID 27252173.

- ↑ Harris, J. K.; Johnson, K. J.; Combs, T. B.; Carothers, B. J.; Luke, D. A.; Wang, X. (2019). "Three Changes Public Health Scientists Can Make to Help Build a Culture of Reproducible Research". Public Health Reports 134 (2): 109–111. doi:10.1177/0033354918821076. ISSN 0033-3549. OCLC 7991854250. PMID 30657732.

- ↑ Kitzes, Justin; Turek, Daniel; Deniz, Fatma (2018). The practice of reproducible research case studies and lessons from the data-intensive sciences. Oakland, California: University of California Press. pp. 19–30. ISBN 978-0-520-29474-5.

- ↑ Marwick, Ben; Boettiger, Carl; Mullen, Lincoln (29 September 2017). "Packaging data analytical work reproducibly using R (and friends)". The American Statistician 72: 80–88. doi:10.1080/00031305.2017.1375986. http://ro.uow.edu.au/cgi/viewcontent.cgi?article=6445&context=smhpapers.

- ↑ Kluyver, Thomas; Ragan-Kelley, Benjamin; Perez, Fernando; Granger, Brian; Bussonnier, Matthias; Frederic, Jonathan; Kelley, Kyle; Hamrick, Jessica et al. (2016). "Jupyter Notebooks–a publishing format for reproducible computational workflows". in Loizides, F; Schmidt, B. 20th International Conference on Electronic Publishing. IOS Press. pp. 87–90. doi:10.3233/978-1-61499-649-1-87. https://eprints.soton.ac.uk/403913/1/STAL9781614996491-0087.pdf.

- ↑ Beg, Marijan; Taka, Juliette; Kluyver, Thomas; Konovalov, Alexander; Ragan-Kelley, Min; Thiery, Nicolas M.; Fangohr, Hans (1 March 2021). "Using Jupyter for Reproducible Scientific Workflows". Computing in Science & Engineering 23 (2): 36–46. doi:10.1109/MCSE.2021.3052101. Bibcode: 2021CSE....23b..36B.

- ↑ Granger, Brian E.; Perez, Fernando (1 March 2021). "Jupyter: Thinking and Storytelling With Code and Data". Computing in Science & Engineering 23 (2): 7–14. doi:10.1109/MCSE.2021.3059263. Bibcode: 2021CSE....23b...7G.

- ↑ Wicherts, J. M.; Borsboom, D.; Kats, J.; Molenaar, D. (2006). "The poor availability of psychological research data for reanalysis". American Psychologist 61 (7): 726–728. doi:10.1037/0003-066X.61.7.726. PMID 17032082.

- ↑ Vanpaemel, W.; Vermorgen, M.; Deriemaecker, L.; Storms, G. (2015). "Are we wasting a good crisis? The availability of psychological research data after the storm". Collabra 1 (1): 1–5. doi:10.1525/collabra.13.

- ↑ Wicherts, J. M.; Bakker, M. (2012). "Publish (your data) or (let the data) perish! Why not publish your data too?". Intelligence 40 (2): 73–76. doi:10.1016/j.intell.2012.01.004.

- ↑ Pasquier, Thomas; Lau, Matthew K.; Trisovic, Ana; Boose, Emery R.; Couturier, Ben; Crosas, Mercè; Ellison, Aaron M.; Gibson, Valerie et al. (5 September 2017). "If these data could talk". Scientific Data 4 (1): 170114. doi:10.1038/sdata.2017.114. PMID 28872630. Bibcode: 2017NatSD...470114P.

- ↑ McCullough, Bruce (March 2009). "Open Access Economics Journals and the Market for Reproducible Economic Research". Economic Analysis and Policy 39 (1): 117–126. doi:10.1016/S0313-5926(09)50047-1.

- ↑ Vlaeminck, Sven; Podkrajac, Felix (2017-12-10). "Journals in Economic Sciences: Paying Lip Service to Reproducible Research?". IASSIST Quarterly 41 (1–4): 16. doi:10.29173/iq6. https://iassistquarterly.com/index.php/iassist/article/view/6/905.

- ↑ Harris, Jenine K.; Johnson, Kimberly J.; Carothers, Bobbi J.; Combs, Todd B.; Luke, Douglas A.; Wang, Xiaoyan (2018). "Use of reproducible research practices in public health: A survey of public health analysts". PLOS ONE 13 (9). doi:10.1371/journal.pone.0202447. ISSN 1932-6203. OCLC 7891624396. PMID 30208041. Bibcode: 2018PLoSO..1302447H.

- ↑ "Research Waste/EQUATOR Conference | Research Waste". http://researchwaste.net/research-wasteequator-conference/.

- ↑ Prinz, F.; Schlange, T.; Asadullah, K. (2011). "Believe it or not: How much can we rely on published data on potential drug targets?". Nature Reviews Drug Discovery 10 (9): 712. doi:10.1038/nrd3439-c1. PMID 21892149.

- ↑ Van Eyghen, Hans; Van den Brink, Gijsbert; Peels, Rik (2024). "Brooke on the Merton Thesis: A Direct Replication of John Hedley Brooke's Chapter on Scientific and Religious Reform". Zygon: Journal of Religion and Science 59 (2). doi:10.16995/zygon.11497. https://www.zygonjournal.org/article/id/11497/.

- ↑ Tan, SY; Furubayashi, J (2014). "Hideyo Noguchi (1876-1928): Distinguished bacteriologist". Singapore Medical Journal 55 (10): 550–551. doi:10.11622/smedj.2014140. ISSN 0037-5675. PMID 25631898.

- ↑ Browne, Malcolm (3 May 1989). Physicists Debunk Claim Of a New Kind of Fusion. http://partners.nytimes.com/library/national/science/050399sci-cold-fusion.html. Retrieved 3 February 2017.

- ↑ Cheney, Margaret (1999), Tesla, Master of Lightning, New York: Barnes & Noble Books, ISBN 0-7607-1005-8, p. 107; "Unable to overcome his financial burdens, he was forced to close the laboratory in 1905."

- ↑ Dominus, Susan (October 18, 2017). "When the Revolution Came for Amy Cuddy". New York Times Magazine. https://www.nytimes.com/2017/10/18/magazine/when-the-revolution-came-for-amy-cuddy.html.

Further reading

- Timmer, John (October 2006). "Scientists on Science: Reproducibility". Ars Technica. https://arstechnica.com/science/2006/10/5744/.

- Saey, Tina Hesman (January 2015). "Is redoing scientific research the best way to find truth? During replication attempts, too many studies fail to pass muster". Science News. https://www.sciencenews.org/article/redoing-scientific-research-best-way-find-truth. "Science is not irrevocably broken, [epidemiologist John Ioannidis] asserts. It just needs some improvements. "Despite the fact that I've published papers with pretty depressive titles, I'm actually an optimist," Ioannidis says. "I find no other investment of a society that is better placed than science.""

External links

- Transparency and Openness Promotion Guidelines from the Center for Open Science

- Guidelines for Evaluating and Expressing the Uncertainty of NIST Measurement Results of the National Institute of Standards and Technology

- Reproducible papers with artifacts by the CTuning foundation

- ReproducibleResearch.net
