Studentized residual

This article has multiple issues. Please help improve it or discuss these issues on the talk page. (Learn how and when to remove these template messages)

(Learn how and when to remove this template message)

|

| Part of a series on |

| Regression analysis |

|---|

|

| Models |

| Estimation |

| Background |

|

|

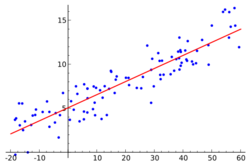

In statistics, a studentized residual is the dimensionless ratio resulting from the division of a residual by an estimate of its standard deviation, both expressed in the same units. It is a form of a Student's t-statistic, with the estimate of error varying between points.

This is an important technique in the detection of outliers. It is among several named in honor of William Sealy Gosset, who wrote under the pseudonym "Student" (e.g., Student's distribution). Dividing a statistic by a sample standard deviation is called studentizing, in analogy with standardizing and normalizing.

Motivation

The key reason for studentizing is that, in regression analysis of a multivariate distribution, the variances of the residuals at different input variable values may differ, even if the variances of the errors at these different input variable values are equal. The issue is the difference between errors and residuals in statistics, particularly the behavior of residuals in regressions.

Consider the simple linear regression model

$$Y = \alpha_0 + \alpha_1 X + \varepsilon.$$

Given a random sample $(X_i, Y_i)$, $i = 1, \dots, n$, each pair $(X_i, Y_i)$ satisfies

$$Y_i = \alpha_0 + \alpha_1 X_i + \varepsilon_i,$$

where the errors $\varepsilon_i$, $i = 1, \dots, n$, are independent and all have the same variance $\sigma^2$. The residuals are not the true errors but estimates of them, based on the observable data. When the method of least squares is used to estimate $\alpha_0$ and $\alpha_1$, the residuals $\hat\varepsilon_i$, unlike the errors $\varepsilon_i$, cannot be independent, since they satisfy the two constraints

$$\sum_{i=1}^n \hat\varepsilon_i = 0$$

and

$$\sum_{i=1}^n \hat\varepsilon_i x_i = 0.$$

(Here $\varepsilon_i$ is the $i$th error, and $\hat\varepsilon_i$ is the $i$th residual.)
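The two constraints are the least-squares normal equations for the intercept and the slope. The following is a minimal sketch, assuming NumPy (the article names no library) and hypothetical data. It fits a line and checks that the residuals satisfy both constraints up to rounding error.

```python
import numpy as np

# Hypothetical data, chosen only for illustration.
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
y = np.array([2.1, 3.9, 6.2, 7.8, 10.1])

# Design matrix: a column of ones (intercept) and the x column.
X = np.column_stack([np.ones_like(x), x])

# Least-squares estimates of alpha_0 and alpha_1.
alpha_hat, *_ = np.linalg.lstsq(X, y, rcond=None)

residuals = y - X @ alpha_hat

# The two constraints: both sums are zero up to floating-point error.
sum_resid = residuals.sum()
sum_resid_x = (residuals * x).sum()
print(sum_resid, sum_resid_x)
```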

The residuals, unlike the errors, do not all have the same variance: the variance decreases as the corresponding x-value gets farther from the average x-value. This is not a feature of the data itself, but of the regression better fitting values at the ends of the domain. It is also reflected in the influence functions of various data points on the regression coefficients: endpoints have more influence. This can also be seen because the residuals at endpoints depend greatly on the slope of a fitted line, while the residuals at the middle are relatively insensitive to the slope. The fact that the variances of the residuals differ, even though the variances of the true errors are all equal to each other, is the principal reason for the need for studentization.

It is not simply a matter of the population parameters (mean and standard deviation) being unknown – it is that regressions yield different residual distributions at different data points, unlike point estimators of univariate distributions, which share a common distribution for residuals.

Background

For this simple model, the design matrix is

$$X = \begin{pmatrix} 1 & x_1 \\ \vdots & \vdots \\ 1 & x_n \end{pmatrix},$$

and the hat matrix $H$ is the matrix of the orthogonal projection onto the column space of the design matrix:

$$H = X (X^\top X)^{-1} X^\top.$$
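Because $H$ is an orthogonal projection, it should be symmetric and idempotent, and its trace equals the number of columns of $X$. The following is a minimal NumPy sketch, with hypothetical x-values, that builds $H$ and checks these three properties. It uses `np.linalg.solve` rather than forming $(X^\top X)^{-1}$ explicitly, which is a common numerical choice but not something the article prescribes.

```python
import numpy as np

x = np.array([1.0, 2.0, 4.0, 7.0, 11.0])   # hypothetical x-values
X = np.column_stack([np.ones_like(x), x])  # design matrix with m = 2 columns

# H = X (X^T X)^{-1} X^T, computed by solving a linear system.
H = X @ np.linalg.solve(X.T @ X, X.T)

# Properties of an orthogonal projection onto a 2-dimensional column space.
is_symmetric = np.allclose(H, H.T)
is_idempotent = np.allclose(H @ H, H)
trace_H = np.trace(H)
print(is_symmetric, is_idempotent, trace_H)
```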

The leverage $h_{ii}$ is the $i$th diagonal entry in the hat matrix. The variance of the $i$th residual is

$$\operatorname{var}(\hat\varepsilon_i) = \sigma^2 (1 - h_{ii}).$$

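When the design matrix $X$ has only two columns (as in the example above), this equals

$$\operatorname{var}(\hat\varepsilon_i) = \sigma^2 \left( 1 - \frac{1}{n} - \frac{(x_i - \bar{x})^2}{\sum_{j=1}^n (x_j - \bar{x})^2} \right).$$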
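The two-column formula says $h_{ii} = 1/n + (x_i - \bar{x})^2 / \sum_j (x_j - \bar{x})^2$. The following is a minimal NumPy sketch, with hypothetical x-values, that compares this closed form against the diagonal of $H$. It also confirms that the residual variance, $1 - h_{ii}$ in units of $\sigma^2$, is smallest at the point farthest from $\bar{x}$.

```python
import numpy as np

x = np.array([1.0, 2.0, 4.0, 7.0, 11.0])   # hypothetical x-values
n = len(x)
X = np.column_stack([np.ones(n), x])

# Leverages taken from the diagonal of the hat matrix.
H = X @ np.linalg.solve(X.T @ X, X.T)
h_matrix = np.diag(H)

# Closed form for a two-column design.
xbar = x.mean()
h_formula = 1.0 / n + (x - xbar) ** 2 / ((x - xbar) ** 2).sum()

# Residual variance divided by sigma^2; shrinks as |x_i - xbar| grows.
var_ratio = 1.0 - h_formula
print(h_matrix, h_formula, var_ratio)
```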

In the case of an arithmetic mean, the design matrix $X$ has only one column (a vector of ones), and this is simply

$$\operatorname{var}(\hat\varepsilon_i) = \sigma^2 \left( 1 - \frac{1}{n} \right).$$
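The one-column result follows directly from the hat-matrix definition. The short worked derivation below writes $\mathbf{1}_n$ for the vector of $n$ ones.

```latex
X = \mathbf{1}_n, \qquad X^\top X = n,
\qquad H = X (X^\top X)^{-1} X^\top = \tfrac{1}{n}\, \mathbf{1}_n \mathbf{1}_n^\top,
\qquad h_{ii} = \tfrac{1}{n},
\qquad \operatorname{var}(\hat\varepsilon_i) = \sigma^2 (1 - h_{ii}) = \sigma^2 \left( 1 - \tfrac{1}{n} \right).
```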

Calculation

Given the definitions above, the studentized residual is then

$$t_i = \frac{\hat\varepsilon_i}{\hat\sigma \sqrt{1 - h_{ii}}},$$

where $h_{ii}$ is the leverage, and $\hat\sigma$ is an appropriate estimate of $\sigma$ (see below).

In the case of a mean, this is equal to

$$t_i = \frac{\hat\varepsilon_i}{\hat\sigma \sqrt{(n - 1)/n}}.$$
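For a mean, the usual estimate of $\sigma$ (defined in the next section, with m = 1) is the ordinary sample standard deviation. The following is a minimal NumPy sketch with a hypothetical sample. It checks that the mean-specific form agrees with the general hat-matrix form built from a one-column design of ones.

```python
import numpy as np

y = np.array([4.0, 7.0, 5.0, 9.0, 5.0])  # hypothetical sample
n = len(y)

# Mean-specific form: sigma_hat is the sample standard deviation (ddof=1 gives n - m with m = 1).
s = y.std(ddof=1)
t_mean = (y - y.mean()) / (s * np.sqrt((n - 1) / n))

# General form via the hat matrix of the one-column design X = (1, ..., 1)^T.
X = np.ones((n, 1))
H = X @ np.linalg.solve(X.T @ X, X.T)
h = np.diag(H)
residuals = y - H @ y
t_general = residuals / (s * np.sqrt(1.0 - h))
print(t_mean, t_general)
```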

Internal and external studentization

The usual estimate of $\sigma^2$, which yields the internally studentized residual, is

$$\hat\sigma^2 = \frac{1}{n - m} \sum_{j=1}^n \hat\varepsilon_j^{\,2},$$

where m is the number of parameters in the model (2 in our example).
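The following is a minimal NumPy sketch of the internally studentized residual, built from $\hat\sigma^2$ above and the leverages. The function name `internally_studentized` and the data are hypothetical. The last point is made deliberately large so that it stands out.

```python
import numpy as np

def internally_studentized(X, y):
    """t_i = e_i / (sigma_hat * sqrt(1 - h_ii)), with sigma_hat^2 = RSS / (n - m)."""
    n, m = X.shape
    H = X @ np.linalg.solve(X.T @ X, X.T)
    h = np.diag(H)
    e = y - H @ y                       # ordinary least-squares residuals
    sigma2_hat = (e @ e) / (n - m)      # uses all n residuals, including case i
    return e / np.sqrt(sigma2_hat * (1.0 - h))

x = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
y = np.array([1.2, 1.9, 3.2, 3.8, 5.3, 9.0])    # hypothetical data
X = np.column_stack([np.ones_like(x), x])
t_int = internally_studentized(X, y)
print(t_int)
```

One consequence of the definition is that $\sum_i t_i^2 (1 - h_{ii}) = n - m$ for any data set. This makes a handy sanity check on an implementation.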

But if the $i$th case is suspected of being improbably large, then its error would also not be normally distributed. Hence it is prudent to exclude the $i$th observation from the process of estimating the variance when one is considering whether the $i$th case may be an outlier, and instead use

$$\hat\sigma_{(i)}^2 = \frac{1}{n - m - 1} \sum_{\substack{j = 1 \\ j \ne i}}^n \hat\varepsilon_{j(i)}^{\,2},$$

which is based on all the residuals except the suspect $i$th one. The subscript $(i)$ emphasizes that the residuals $\hat\varepsilon_{j(i)}$ ($j \ne i$) are computed from a fit with the $i$th case excluded. Using this estimate gives the externally studentized residual.

If the estimate of $\sigma^2$ includes the $i$th case, the result is called the internally studentized residual, $t_i$ (also known as the standardized residual[1]). If the estimate $\hat\sigma_{(i)}^2$, which excludes the $i$th case, is used instead, the result is called the externally studentized residual, $t_{i(i)}$.
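The following is a minimal NumPy sketch that computes both versions. For the external version, it refits the model without case $i$ to obtain $\hat\sigma_{(i)}$ and keeps the full-fit residual $\hat\varepsilon_i$ in the numerator. The function name `studentized_residuals` and the data are hypothetical. The sketch then checks the result against the standard identity $t_{i(i)} = t_i \sqrt{(\nu - 1)/(\nu - t_i^2)}$ with $\nu = n - m$. That identity is not stated in the article, but it follows from the deletion formula $(\nu - 1)\hat\sigma_{(i)}^2 = \nu\hat\sigma^2 - \hat\varepsilon_i^2/(1 - h_{ii})$.

```python
import numpy as np

def studentized_residuals(X, y):
    """Return (internal, external) studentized residuals for an OLS fit.

    The external version refits the model without case i to get
    sigma_hat_(i); the numerator is still the full-fit residual e_i.
    """
    n, m = X.shape
    H = X @ np.linalg.solve(X.T @ X, X.T)
    h = np.diag(H)
    e = y - H @ y
    t_int = e / np.sqrt((e @ e) / (n - m) * (1.0 - h))

    t_ext = np.empty(n)
    for i in range(n):
        keep = np.arange(n) != i                  # drop case i
        beta_i, *_ = np.linalg.lstsq(X[keep], y[keep], rcond=None)
        e_i = y[keep] - X[keep] @ beta_i          # residuals of the reduced fit
        sigma2_i = (e_i @ e_i) / (n - m - 1)      # sigma_hat_(i)^2
        t_ext[i] = e[i] / np.sqrt(sigma2_i * (1.0 - h[i]))
    return t_int, t_ext

x = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
y = np.array([1.2, 1.9, 3.2, 3.8, 5.3, 9.0])     # hypothetical data
X = np.column_stack([np.ones_like(x), x])
t_int, t_ext = studentized_residuals(X, y)

# Closed-form link between the two versions.
nu = X.shape[0] - X.shape[1]
t_ext_formula = t_int * np.sqrt((nu - 1) / (nu - t_int ** 2))
print(t_int, t_ext)
```

The brute-force loop is written for clarity. In practice the identity above gives the same values without refitting $n$ times. For the same `lm` fit, the values should match R's `rstudent()`.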

Distribution

If the errors are independent and normally distributed with expected value 0 and variance $\sigma^2$, then the probability distribution of the $i$th externally studentized residual $t_{i(i)}$ is a Student's t-distribution with n − m − 1 degrees of freedom, and it can range from $-\infty$ to $+\infty$.

On the other hand, the internally studentized residuals are in the range $0 \pm \sqrt{\nu}$, where ν = n − m is the number of residual degrees of freedom. If $t_i$ represents the internally studentized residual, and again assuming that the errors are independent identically distributed Gaussian variables, then:[2]

$$t_i \sim \sqrt{\nu}\, \frac{t}{\sqrt{t^2 + \nu - 1}},$$

where $t$ is a random variable distributed as Student's t-distribution with ν − 1 degrees of freedom. This implies that $t_i^2/\nu$ follows the beta distribution $B\!\left(\tfrac{1}{2}, \tfrac{\nu - 1}{2}\right)$. The distribution above is sometimes referred to as the tau distribution;[2] it was first derived by Thompson in 1935.[3]
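The beta result can be checked by simulation. The following is a minimal Monte Carlo sketch, assuming NumPy, a hypothetical fixed design with n = 8 and m = 2 (so ν = 6), and standard normal errors. The true coefficients are set to zero, which loses no generality because the residuals $(I - H)\varepsilon$ do not depend on them. The sketch compares the sample mean and variance of $t_1^2/\nu$ with those of $B(1/2, (\nu - 1)/2)$.

```python
import numpy as np

rng = np.random.default_rng(0)

x = np.array([0.0, 1.0, 2.0, 3.0, 5.0, 8.0, 13.0, 21.0])  # hypothetical fixed design
n = len(x)
X = np.column_stack([np.ones(n), x])
m = X.shape[1]
nu = n - m

H = X @ np.linalg.solve(X.T @ X, X.T)
M = np.eye(n) - H                     # residual-maker matrix (symmetric)
h = np.diag(H)

n_sims = 20000
eps = rng.standard_normal((n_sims, n))   # i.i.d. N(0, 1) errors; coefficients taken as 0
E = eps @ M                              # each row holds the residuals of one simulated fit
sigma2_hat = (E ** 2).sum(axis=1) / nu
t = E / np.sqrt(sigma2_hat[:, None] * (1.0 - h))   # internally studentized residuals

u = t[:, 0] ** 2 / nu                    # should follow Beta(1/2, (nu - 1)/2)
a, b = 0.5, (nu - 1) / 2
beta_mean = a / (a + b)
beta_var = a * b / ((a + b) ** 2 * (a + b + 1))
print(u.mean(), beta_mean)
print(u.var(), beta_var)
```

Since $E[t_i^2/\nu] = 1/\nu$, the simulation also illustrates that $E[t_i^2] = 1$.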

When ν = 3, the internally studentized residuals are uniformly distributed between $-\sqrt{3}$ and $+\sqrt{3}$. If there is only one residual degree of freedom, the formula above for the distribution of internally studentized residuals does not apply. In this case, the $t_i$ are all either +1 or −1, with a 50% chance of each.
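The one-degree-of-freedom case can be seen directly. With ν = 1 the residual vector is confined to a single direction, so each $t_i$ reduces to a sign. The following is a minimal NumPy sketch that fits a straight line to three hypothetical points (n = 3, m = 2).

```python
import numpy as np

def internally_studentized(X, y):
    """t_i = e_i / (sigma_hat * sqrt(1 - h_ii)), with sigma_hat^2 = RSS / (n - m)."""
    n, m = X.shape
    H = X @ np.linalg.solve(X.T @ X, X.T)
    h = np.diag(H)
    e = y - H @ y
    sigma2_hat = (e @ e) / (n - m)
    return e / np.sqrt(sigma2_hat * (1.0 - h))

# Straight line through three points: n = 3, m = 2, so nu = 1.
x = np.array([0.0, 1.0, 3.0])
y = np.array([0.5, 2.0, 2.5])   # hypothetical, non-collinear responses
X = np.column_stack([np.ones_like(x), x])
t = internally_studentized(X, y)
print(t)
```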

The standard deviation of the distribution of internally studentized residuals is always 1, but this does not imply that the standard deviation of all the $t_i$ of a particular experiment is 1. For instance, the internally studentized residuals when fitting a straight line going through (0, 0) to the points (1, 4), (2, −1), (2, −1) are $\sqrt{2}$, $-\sqrt{5}/5$, $-\sqrt{5}/5$, and the standard deviation of these is not 1.
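The example can be reproduced in a few lines. The following is a minimal NumPy sketch in which the line through the origin is a one-column design with no intercept (m = 1, n = 3, so ν = 2). Here the slope estimate is exactly 0, so the residuals equal the responses.

```python
import numpy as np

x = np.array([1.0, 2.0, 2.0])
y = np.array([4.0, -1.0, -1.0])
X = x[:, None]                  # line through (0, 0): single column, no intercept

n, m = X.shape
H = X @ np.linalg.solve(X.T @ X, X.T)
h = np.diag(H)                  # 1/9, 4/9, 4/9
e = y - H @ y                   # slope estimate is 0, so e equals y
sigma2_hat = (e @ e) / (n - m)  # 18 / 2 = 9
t = e / np.sqrt(sigma2_hat * (1.0 - h))

expected = np.array([np.sqrt(2), -np.sqrt(5) / 5, -np.sqrt(5) / 5])
print(t, t.std(ddof=1))
```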

Note that any two studentized residuals $t_i$ and $t_j$ (where $i \ne j$) are not i.i.d. They have the same distribution, but they are not independent, because the residuals are constrained to be orthogonal to the columns of the design matrix (which, for a model with an intercept, forces them to sum to 0).
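The dependence can be made concrete. With i.i.d. errors of variance $\sigma^2$, the residual covariance matrix is $\sigma^2(I - H)$. Its diagonal is the variance formula given earlier, and its off-diagonal entries $-\sigma^2 h_{ij}$ are generally nonzero. The following is a minimal NumPy sketch, with hypothetical design points, that computes the implied correlation matrix of the residuals and checks the orthogonality constraint $X^\top (I - H) = 0$.

```python
import numpy as np

x = np.array([1.0, 2.0, 4.0, 7.0, 11.0])   # hypothetical design points
n = len(x)
X = np.column_stack([np.ones(n), x])
H = X @ np.linalg.solve(X.T @ X, X.T)

# Cov(residuals) = sigma^2 (I - H); sigma^2 cancels in the correlation.
M = np.eye(n) - H
d = np.sqrt(np.diag(M))
corr = M / np.outer(d, d)                   # implied correlation matrix of the residuals

off_diag = corr[~np.eye(n, dtype=bool)]
print(np.round(corr, 3))
```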

Software implementations

Many programs and statistics packages, such as R and Python libraries, include implementations of studentized residuals.

| Language/Program | Function | Notes |
|---|---|---|
| R | rstandard(model, ...) | Internally studentized. See [1]. |
| R | rstudent(model, ...) | Externally studentized. See [1]. |

See also

- Cook's distance – a measure of changes in regression coefficients when an observation is deleted

- Grubbs's test

- Normalization (statistics)

- Samuelson's inequality

- Standard score

- William Sealy Gosset

References

- [1] "Regression Deletion Diagnostics". R documentation.

- [2] Pope, Allen J. (1976). "The statistics of residuals and the detection of outliers". U.S. Dept. of Commerce, National Oceanic and Atmospheric Administration, National Ocean Survey, Geodetic Research and Development Laboratory. 136 pages, eq. (6).

- [3] Thompson, William R. (1935). "On a Criterion for the Rejection of Observations and the Distribution of the Ratio of Deviation to Sample Standard Deviation". The Annals of Mathematical Statistics 6 (4): 214–219. doi:10.1214/aoms/1177732567.

Further reading

- Cook, R. Dennis; Weisberg, Sanford (1982). Residuals and Influence in Regression (Repr. ed.). New York: Chapman and Hall. ISBN 041224280X. http://www.stat.umn.edu/rir/. Retrieved 23 February 2013.
