k-means clustering


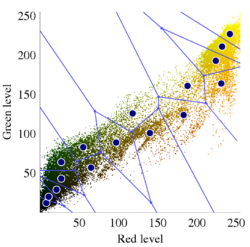

k-means clustering is a method of vector quantization, originally from signal processing, that aims to partition n observations into k clusters in which each observation belongs to the cluster with the nearest mean (cluster centers or cluster centroid), serving as a prototype of the cluster. This results in a partitioning of the data space into Voronoi cells. k-means clustering minimizes within-cluster variances (squared Euclidean distances), but not regular Euclidean distances, which would be the more difficult Weber problem: the mean optimizes squared errors, whereas only the geometric median minimizes Euclidean distances. For instance, better Euclidean solutions can be found using k-medians and k-medoids.
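The difference between the two criteria can be seen in one dimension, where the geometric median coincides with the ordinary median. The following is a minimal sketch on a made-up data set with one outlier; the data values and helper names are illustrative assumptions, not part of the source.

```python
import statistics

# Illustrative 1-D data with one outlier (values are an assumption for the demo).
data = [1.0, 2.0, 3.0, 4.0, 100.0]

def sum_squared(center):
    """Sum of squared deviations of the data from a candidate center."""
    return sum((x - center) ** 2 for x in data)

def sum_absolute(center):
    """Sum of absolute (1-D Euclidean) deviations from a candidate center."""
    return sum(abs(x - center) for x in data)

mean = statistics.mean(data)      # minimizes the squared error
median = statistics.median(data)  # minimizes the absolute error in 1-D

print("squared error :", sum_squared(mean), "at mean vs", sum_squared(median), "at median")
print("absolute error:", sum_absolute(mean), "at mean vs", sum_absolute(median), "at median")
```

The mean wins on squared error and the median wins on absolute error, which is why k-means (built on means) targets the former.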

The problem is computationally difficult (NP-hard); however, efficient heuristic algorithms converge quickly to a local optimum. These are usually similar to the expectation-maximization algorithm for mixtures of Gaussian distributions via an iterative refinement approach employed by both k-means and Gaussian mixture modeling. They both use cluster centers to model the data; however, k-means clustering tends to find clusters of comparable spatial extent, while the Gaussian mixture model allows clusters to have different shapes.

The unsupervised k-means algorithm has a loose relationship to the k-nearest neighbor classifier, a popular supervised machine learning technique for classification that is often confused with k-means due to the name. Applying the 1-nearest neighbor classifier to the cluster centers obtained by k-means classifies new data into the existing clusters. This is known as the nearest centroid classifier or Rocchio algorithm.

Description

Given a set of observations (x1, x2, ..., xn), where each observation is a d-dimensional real vector, k-means clustering aims to partition the n observations into k (≤ n) sets S = {S1, S2, ..., Sk} so as to minimize the within-cluster sum of squares (WCSS) (i.e. variance). Formally, the objective is to find: [math]\displaystyle{ \mathop\operatorname{arg\,min}_\mathbf{S} \sum_{i=1}^{k} \sum_{\mathbf x \in S_i} \left\| \mathbf x - \boldsymbol\mu_i \right\|^2 = \mathop\operatorname{arg\,min}_\mathbf{S} \sum_{i=1}^k |S_i| \operatorname{Var} S_i }[/math] where μi is the mean (also called centroid) of points in [math]\displaystyle{ S_i }[/math], i.e. [math]\displaystyle{ \boldsymbol{\mu_i} = \frac{1}{|S_i|}\sum_{\mathbf x \in S_i} \mathbf x, }[/math] [math]\displaystyle{ |S_i| }[/math] is the size of [math]\displaystyle{ S_i }[/math], and [math]\displaystyle{ \|\cdot\| }[/math] is the usual L2 norm. This is equivalent to minimizing the pairwise squared deviations of points in the same cluster: [math]\displaystyle{ \mathop\operatorname{arg\,min}_\mathbf{S} \sum_{i=1}^{k} \, \frac{1}{ |S_i|} \, \sum_{\mathbf{x}, \mathbf{y} \in S_i} \left\| \mathbf{x} - \mathbf{y} \right\|^2 }[/math] The equivalence can be deduced from the identity [math]\displaystyle{ |S_i|\sum_{\mathbf x \in S_i} \left\| \mathbf x - \boldsymbol\mu_i \right\|^2 = \frac{1}{2} \sum_{\mathbf{x},\mathbf{y} \in S_i}\left\|\mathbf x - \mathbf y\right\|^2 }[/math]. Since the total variance is constant, this is equivalent to maximizing the sum of squared deviations between points in different clusters (between-cluster sum of squares, BCSS).[1] This deterministic relationship is also related to the law of total variance in probability theory.
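As a numerical check of the equivalence, the sketch below computes both objectives for a small hand-made partition. The point coordinates and function names are assumptions chosen for illustration. By the identity, the pairwise objective should equal exactly twice the WCSS, so both have the same minimizer.

```python
def centroid(points):
    """Component-wise mean of a non-empty list of equal-length tuples."""
    n = len(points)
    return tuple(sum(coords) / n for coords in zip(*points))

def sq_dist(a, b):
    """Squared Euclidean distance between two points."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

def wcss(clusters):
    """Within-cluster sum of squares: squared distances to each cluster mean."""
    total = 0.0
    for cluster in clusters:
        mu = centroid(cluster)
        total += sum(sq_dist(x, mu) for x in cluster)
    return total

def pairwise_objective(clusters):
    """Sum over clusters of (1/|S_i|) times the squared distances over all ordered pairs."""
    total = 0.0
    for cluster in clusters:
        pair_sum = sum(sq_dist(x, y) for x in cluster for y in cluster)
        total += pair_sum / len(cluster)
    return total

# Illustrative partition of five 2-D points into two clusters.
clusters = [
    [(0.0, 0.0), (2.0, 0.0), (1.0, 3.0)],
    [(10.0, 10.0), (12.0, 14.0)],
]
print(wcss(clusters), pairwise_objective(clusters))
```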

History

The term "k-means" was first used by James MacQueen in 1967,[2] though the idea goes back to Hugo Steinhaus in 1956.[3] The standard algorithm was first proposed by Stuart Lloyd of Bell Labs in 1957 as a technique for pulse-code modulation, although it was not published as a journal article until 1982.[4] In 1965, Edward W. Forgy published essentially the same method, which is why it is sometimes referred to as the Lloyd–Forgy algorithm.[5]

Algorithms

Standard algorithm (naive k-means)

The most common algorithm uses an iterative refinement technique. Due to its ubiquity, it is often called "the k-means algorithm"; it is also referred to as Lloyd's algorithm, particularly in the computer science community. It is sometimes also referred to as "naïve k-means", because there exist much faster alternatives.[6]

Given an initial set of k means [math]\displaystyle{ m_1^{(1)}, \dots, m_k^{(1)} }[/math] (see below), the algorithm proceeds by alternating between two steps:[7]

- Assignment step: Assign each observation to the cluster with the nearest mean: that with the least squared Euclidean distance.[8] (Mathematically, this means partitioning the observations according to the Voronoi diagram generated by the means.) [math]\displaystyle{ S_i^{(t)} = \left \{ x_p : \left \| x_p - m^{(t)}_i \right \|^2 \le \left \| x_p - m^{(t)}_j \right \|^2 \ \forall j, 1 \le j \le k \right\}, }[/math] where each [math]\displaystyle{ x_p }[/math] is assigned to exactly one [math]\displaystyle{ S^{(t)} }[/math], even if it could be assigned to two or more of them.

- Update step: Recalculate means (centroids) for observations assigned to each cluster. [math]\displaystyle{ m^{(t+1)}_i = \frac{1}{\left|S^{(t)}_i\right|} \sum_{x_j \in S^{(t)}_i} x_j }[/math]

The algorithm has converged when the assignments no longer change. The algorithm is not guaranteed to find the optimum.[9]
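The two steps can be sketched in plain Python as follows. This is a minimal illustration rather than a reference implementation: the function names, the `max_iter` cap, the tie-breaking rule (lowest index wins) and the handling of empty clusters (the old mean is kept) are all choices made here, not prescribed by the sources.

```python
def sq_dist(a, b):
    """Squared Euclidean distance between two points."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

def centroid(points):
    """Component-wise mean of a non-empty list of points."""
    n = len(points)
    return tuple(sum(c) / n for c in zip(*points))

def lloyd(points, initial_means, max_iter=100):
    """Alternate assignment and update steps until assignments stop changing."""
    means = list(initial_means)
    assignment = None
    for _ in range(max_iter):
        # Assignment step: nearest mean by squared Euclidean distance.
        # min() returns the first minimum, so ties go to the lowest index.
        new_assignment = [
            min(range(len(means)), key=lambda i: sq_dist(x, means[i]))
            for x in points
        ]
        if new_assignment == assignment:
            break  # converged: no observation changed cluster
        assignment = new_assignment
        # Update step: each mean becomes the centroid of its members.
        for i in range(len(means)):
            members = [x for x, a in zip(points, assignment) if a == i]
            if members:  # an empty cluster keeps its old mean (a choice made here)
                means[i] = centroid(members)
    return means, assignment

# Illustrative data: two visually separated groups of three points each.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 0.5), (8.0, 8.0), (9.0, 9.5), (8.5, 9.0)]
means, assignment = lloyd(points, initial_means=[points[0], points[3]])
print(means)
print(assignment)
```

On this toy input the assignments stabilize after the first update, so the loop exits on its second pass.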

The algorithm is often presented as assigning objects to the nearest cluster by distance. Using a distance function other than (squared) Euclidean distance may prevent the algorithm from converging. Various modifications of k-means such as spherical k-means and k-medoids have been proposed to allow using other distance measures.

Initialization methods

Commonly used initialization methods are Forgy and Random Partition.[10] The Forgy method randomly chooses k observations from the dataset and uses these as the initial means. The Random Partition method first randomly assigns a cluster to each observation and then proceeds to the update step, thus computing the initial mean to be the centroid of the cluster's randomly assigned points. The Forgy method tends to spread the initial means out, while Random Partition places all of them close to the center of the data set. According to Hamerly et al.,[10] the Random Partition method is generally preferable for algorithms such as the k-harmonic means and fuzzy k-means. For expectation maximization and standard k-means algorithms, the Forgy method of initialization is preferable. A comprehensive study by Celebi et al.,[11] however, found that popular initialization methods such as Forgy, Random Partition, and Maximin often perform poorly, whereas Bradley and Fayyad's approach[12] performs "consistently" in "the best group" and k-means++ performs "generally well".
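The Forgy and Random Partition methods can be sketched as below. The helper names, the uniform test data, and the rule of redrawing labels until every cluster is non-empty are assumptions of this sketch. The final lines compare how far the initial means lie from the overall centroid of the data, to illustrate the tendency described above.

```python
import math
import random

def centroid(points):
    """Component-wise mean of a non-empty list of points."""
    n = len(points)
    return tuple(sum(c) / n for c in zip(*points))

def forgy_init(points, k, rng):
    """Forgy: choose k distinct observations as the initial means."""
    return rng.sample(points, k)

def random_partition_init(points, k, rng):
    """Random Partition: label every point at random, then take cluster centroids."""
    while True:
        labels = [rng.randrange(k) for _ in points]
        if len(set(labels)) == k:  # redraw if some cluster received no points
            break
    return [
        centroid([p for p, label in zip(points, labels) if label == i])
        for i in range(k)
    ]

def mean_distance_to(center, means):
    """Average Euclidean distance from the given center to a list of means."""
    return sum(math.dist(center, m) for m in means) / len(means)

rng = random.Random(0)  # fixed seed so the demo is repeatable
points = [(rng.random(), rng.random()) for _ in range(200)]
data_center = centroid(points)

forgy = forgy_init(points, 3, rng)
partition = random_partition_init(points, 3, rng)
spread_forgy = mean_distance_to(data_center, forgy)
spread_partition = mean_distance_to(data_center, partition)
print("Forgy spread:           ", spread_forgy)
print("Random Partition spread:", spread_partition)
```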

- Demonstration of the standard algorithm

2. k clusters are created by associating every observation with the nearest mean. The partitions here represent the Voronoi diagram generated by the means.

3. The centroid of each of the k clusters becomes the new mean.

The algorithm does not guarantee convergence to the global optimum. The result may depend on the initial clusters. As the algorithm is usually fast, it is common to run it multiple times with different starting conditions. However, worst-case performance can be slow: in particular certain point sets, even in two dimensions, converge in exponential time, that is [math]\displaystyle{ 2^{\Omega(n)} }[/math].[13] These point sets do not seem to arise in practice: this is corroborated by the fact that the smoothed running time of k-means is polynomial.[14]

The "assignment" step is referred to as the "expectation step", while the "update step" is a maximization step, making this algorithm a variant of the generalized expectation-maximization algorithm.

Complexity

Finding the optimal solution to the k-means clustering problem for observations in d dimensions is:

- NP-hard in general Euclidean space (of d dimensions) even for two clusters,[15][16]

- NP-hard for a general number of clusters k even in the plane,[17]

- if k and d (the dimension) are fixed, the problem can be exactly solved in time [math]\displaystyle{ O(n^{dk+1}) }[/math], where n is the number of entities to be clustered.[18]

Thus, a variety of heuristic algorithms such as Lloyd's algorithm given above are generally used.

The running time of Lloyd's algorithm (and most variants) is [math]\displaystyle{ O(n k d i) }[/math],[9][19] where:

- n is the number of d-dimensional vectors (to be clustered)

- k the number of clusters

- i the number of iterations needed until convergence.

On data that does have a clustering structure, the number of iterations until convergence is often small, and results only improve slightly after the first dozen iterations. Lloyd's algorithm is therefore often considered to be of "linear" complexity in practice, although it is in the worst case superpolynomial when performed until convergence.[20]

- In the worst-case, Lloyd's algorithm needs [math]\displaystyle{ i = 2^{\Omega(\sqrt{n})} }[/math] iterations, so that the worst-case complexity of Lloyd's algorithm is superpolynomial.[20]

- Lloyd's k-means algorithm has polynomial smoothed running time. It has been shown[14] that for an arbitrary set of n points in [math]\displaystyle{ [0,1]^d }[/math], if each point is independently perturbed by a normal distribution with mean 0 and variance [math]\displaystyle{ \sigma^2 }[/math], then the expected running time of the k-means algorithm is bounded by [math]\displaystyle{ O( n^{34} k^{34} d^8 \log^4(n) / \sigma^6 ) }[/math], which is a polynomial in n, k, d and [math]\displaystyle{ 1/\sigma }[/math].

- Better bounds are proven for simple cases. For example, it is shown that the running time of the k-means algorithm is bounded by [math]\displaystyle{ O(dn^4M^2) }[/math] for n points in an integer lattice [math]\displaystyle{ \{1, \dots, M\}^d }[/math].[21]

Lloyd's algorithm is the standard approach for this problem. However, it spends a lot of processing time computing the distances between each of the k cluster centers and the n data points. Since points usually stay in the same clusters after a few iterations, much of this work is unnecessary, making the naïve implementation very inefficient. Some implementations use caching and the triangle inequality in order to create bounds and accelerate Lloyd's algorithm.[9][22][23][24][25]
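The core bound behind such accelerations can be illustrated with a simplified assignment step. If a point x is currently assigned to center c, and another center c' satisfies d(c, c') ≥ 2 d(x, c), then by the triangle inequality d(x, c') ≥ d(x, c), so the distance to c' need not be computed. The sketch below applies only this single test; it is not any of the published algorithms, which additionally cache upper and lower bounds between iterations. All names and data are illustrative assumptions.

```python
import math

def assign_with_pruning(points, centers, prev_assignment):
    """One assignment step that skips centers ruled out by the triangle inequality.

    Returns the new assignment and the number of point-center distance
    computations that were avoided.
    """
    k = len(centers)
    # Center-to-center distances: k*k values, computed once per step.
    center_dist = [[math.dist(centers[i], centers[j]) for j in range(k)]
                   for i in range(k)]
    assignment, skipped = [], 0
    for x, best in zip(points, prev_assignment):
        best_d = math.dist(x, centers[best])
        for j in range(k):
            if j == best:
                continue
            # d(c_best, c_j) >= 2 d(x, c_best) implies d(x, c_j) >= d(x, c_best).
            if center_dist[best][j] >= 2 * best_d:
                skipped += 1
                continue
            d = math.dist(x, centers[j])
            if d < best_d:
                best, best_d = j, d
        assignment.append(best)
    return assignment, skipped

centers = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
points = [(1.0, 1.0), (9.0, 1.0), (1.0, 9.0), (0.5, 0.2)]
assignment, skipped = assign_with_pruning(points, centers, prev_assignment=[0, 0, 0, 0])
print(assignment, skipped)
```

Points that already sit close to their assigned center benefit most, which matches the observation that most points stop changing clusters after a few iterations.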

Variations

- Jenks natural breaks optimization: k-means applied to univariate data

- k-medians clustering uses the median in each dimension instead of the mean, and this way minimizes [math]\displaystyle{ L_1 }[/math] norm (Taxicab geometry).

- k-medoids (also: Partitioning Around Medoids, PAM) uses the medoid instead of the mean, and this way minimizes the sum of distances for arbitrary distance functions.

- Fuzzy C-Means Clustering is a soft version of k-means, where each data point has a fuzzy degree of belonging to each cluster.

- Gaussian mixture models trained with the expectation-maximization algorithm (EM algorithm) maintain probabilistic assignments to clusters, instead of deterministic assignments, and multivariate Gaussian distributions instead of means.

- k-means++ chooses initial centers in a way that gives a provable upper bound on the WCSS objective.

- The filtering algorithm uses kd-trees to speed up each k-means step.[26]

- Some methods attempt to speed up each k-means step using the triangle inequality.[22][23][24][27][25]

- Escape local optima by swapping points between clusters.[9]

- The Spherical k-means clustering algorithm is suitable for textual data.[28]

- Hierarchical variants such as Bisecting k-means,[29] X-means clustering[30] and G-means clustering[31] repeatedly split clusters to build a hierarchy, and can also try to automatically determine the optimal number of clusters in a dataset.

- Internal cluster evaluation measures such as cluster silhouette can be helpful at determining the number of clusters.

- Minkowski weighted k-means automatically calculates cluster-specific feature weights, supporting the intuitive idea that a feature may have different degrees of relevance in different clusters.[32] These weights can also be used to re-scale a given data set, increasing the likelihood of a cluster validity index to be optimized at the expected number of clusters.[33]

- Mini-batch k-means: k-means variation using "mini batch" samples for data sets that do not fit into memory.[34]

- Otsu's method

Hartigan–Wong method

Hartigan and Wong's method[9] provides a variation of the k-means algorithm which progresses towards a local minimum of the minimum sum-of-squares problem with different solution updates. The method is a local search that iteratively attempts to relocate a sample into a different cluster as long as this process improves the objective function. When no sample can be relocated into a different cluster with an improvement of the objective, the method stops (in a local minimum). As with classical k-means, the approach remains a heuristic since it does not guarantee that the final solution is globally optimal.

Let [math]\displaystyle{ \varphi(S_j) }[/math] be the individual cost of [math]\displaystyle{ S_j }[/math] defined by [math]\displaystyle{ \sum_{x \in S_j} (x - \mu_j)^2 }[/math], with [math]\displaystyle{ \mu_j }[/math] the center of the cluster.

- Assignment step

- Hartigan and Wong's method starts by partitioning the points into random clusters [math]\displaystyle{ \{ S_j \}_{j \in \{1, \cdots k\}} }[/math].

- Update step

- Next it determines the [math]\displaystyle{ n,m \in \{1, \ldots, k \} }[/math] and [math]\displaystyle{ x \in S_n }[/math] for which the following function reaches a maximum [math]\displaystyle{ \Delta(m,n,x) = \varphi(S_n) + \varphi(S_m) - \varphi(S_n \setminus \{ x \} ) - \varphi(S_m \cup \{ x \} ). }[/math] For the [math]\displaystyle{ x,n,m }[/math] that reach this maximum, [math]\displaystyle{ x }[/math] moves from the cluster [math]\displaystyle{ S_n }[/math] to the cluster [math]\displaystyle{ S_m }[/math].

- Termination

- The algorithm terminates once [math]\displaystyle{ \Delta(m,n,x) }[/math] is less than zero for all [math]\displaystyle{ x,n,m }[/math].

Different move acceptance strategies can be used. In a first-improvement strategy, any improving relocation can be applied, whereas in a best-improvement strategy, all possible relocations are iteratively tested and only the best is applied at each iteration. The former approach favors speed, whereas the latter generally favors solution quality at the expense of additional computational time. The function [math]\displaystyle{ \Delta }[/math] used to calculate the result of a relocation can also be efficiently evaluated using the equality[35]

[math]\displaystyle{ \Delta(m,n,x) = \frac{ |S_n| }{ |S_n| - 1} \cdot \lVert \mu_n - x \rVert^2 - \frac{ |S_m| }{ |S_m| + 1} \cdot \lVert \mu_m - x \rVert^2. }[/math]
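The closed form can be checked numerically against the definition of Δ as a difference of cluster costs. In the sketch below, the clusters, the relocated point and the function names are illustrative assumptions; the source cluster is assumed to contain at least two points so that the denominator is non-zero.

```python
def sq_dist(a, b):
    """Squared Euclidean distance between two points."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

def centroid(points):
    """Component-wise mean of a non-empty list of points."""
    n = len(points)
    return tuple(sum(c) / n for c in zip(*points))

def cost(cluster):
    """Individual cluster cost: sum of squared distances to the cluster center."""
    mu = centroid(cluster)
    return sum(sq_dist(x, mu) for x in cluster)

def delta_direct(s_n, s_m, x):
    """Gain from moving x out of s_n into s_m, computed from the four costs."""
    s_n_without = list(s_n)
    s_n_without.remove(x)
    return cost(s_n) + cost(s_m) - cost(s_n_without) - cost(s_m + [x])

def delta_fast(s_n, s_m, x):
    """The same gain from the closed form, using only the two current centers."""
    mu_n, mu_m = centroid(s_n), centroid(s_m)
    leave = len(s_n) / (len(s_n) - 1) * sq_dist(mu_n, x)
    join = len(s_m) / (len(s_m) + 1) * sq_dist(mu_m, x)
    return leave - join

s_n = [(0.0, 0.0), (1.0, 0.0), (5.0, 5.0)]  # (5, 5) looks misplaced here
s_m = [(6.0, 5.0), (6.0, 6.0)]
x = (5.0, 5.0)
print(delta_direct(s_n, s_m, x), delta_fast(s_n, s_m, x))
```

Both routes give the same positive value for this example, so relocating the point lowers the total cost; the closed form avoids recomputing any cluster cost from scratch.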

Global optimization and meta-heuristics

The classical k-means algorithm and its variations are known to only converge to local minima of the minimum-sum-of-squares clustering problem defined as [math]\displaystyle{ \mathop\operatorname{arg\,min}_\mathbf{S} \sum_{i=1}^{k} \sum_{\mathbf x \in S_i} \left\| \mathbf x - \boldsymbol\mu_i \right\|^2 . }[/math] Many studies have attempted to improve the convergence behavior of the algorithm and maximize the chances of attaining the global optimum (or at least, local minima of better quality). Initialization and restart techniques discussed in the previous sections are one way to find better solutions. More recently, global optimization algorithms based on branch-and-bound and semidefinite programming have produced provably optimal solutions for datasets with up to 4,177 entities and 20,531 features.[36] As expected, due to the NP-hardness of the underlying optimization problem, the computational time of optimal algorithms for k-means quickly increases beyond this size. Optimal solutions for small- and medium-scale instances still remain valuable as a benchmark tool to evaluate the quality of other heuristics. To find high-quality local minima within a controlled computational time but without optimality guarantees, other works have explored metaheuristics and other global optimization techniques, e.g., based on incremental approaches and convex optimization,[37] random swaps[38] (i.e., iterated local search), variable neighborhood search[39] and genetic algorithms.[40][41] It is indeed known that finding better local minima of the minimum sum-of-squares clustering problem can make the difference between failure and success to recover cluster structures in feature spaces of high dimension.[41]

Discussion

Three key features of k-means that make it efficient are often regarded as its biggest drawbacks:

- Euclidean distance is used as a metric and variance is used as a measure of cluster scatter.

- The number of clusters k is an input parameter: an inappropriate choice of k may yield poor results. That is why, when performing k-means, it is important to run diagnostic checks for determining the number of clusters in the data set.

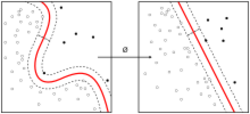

- Convergence to a local minimum may produce counterintuitive ("wrong") results (see the example in the figure).

A key limitation of k-means is its cluster model. The concept is based on spherical clusters that are separable so that the mean converges towards the cluster center. The clusters are expected to be of similar size, so that the assignment to the nearest cluster center is the correct assignment. When for example applying k-means with a value of [math]\displaystyle{ k=3 }[/math] onto the well-known Iris flower data set, the result often fails to separate the three Iris species contained in the data set. With [math]\displaystyle{ k=2 }[/math], the two visible clusters (one containing two species) will be discovered, whereas with [math]\displaystyle{ k=3 }[/math] one of the two clusters will be split into two even parts. In fact, [math]\displaystyle{ k = 2 }[/math] is more appropriate for this data set, despite the data set's containing 3 classes. As with any other clustering algorithm, the k-means result makes assumptions that the data satisfy certain criteria. It works well on some data sets, and fails on others.

The result of k-means can be seen as the Voronoi cells of the cluster means. Since data is split halfway between cluster means, this can lead to suboptimal splits as can be seen in the "mouse" example. The Gaussian models used by the expectation-maximization algorithm (arguably a generalization of k-means) are more flexible by having both variances and covariances. The EM result is thus able to accommodate clusters of variable size much better than k-means as well as correlated clusters (not in this example). In exchange, EM requires the optimization of a larger number of free parameters and poses some methodological issues due to vanishing clusters or badly-conditioned covariance matrices. K-means is closely related to nonparametric Bayesian modeling.[43]

Applications

k-means clustering is rather easy to apply to even large data sets, particularly when using heuristics such as Lloyd's algorithm. It has been successfully used in market segmentation, computer vision, and astronomy among many other domains. It often is used as a preprocessing step for other algorithms, for example to find a starting configuration.

Vector quantization

k-means originates from signal processing, and still finds use in this domain. For example, in computer graphics, color quantization is the task of reducing the color palette of an image to a fixed number of colors k. The k-means algorithm can easily be used for this task and produces competitive results. A use case for this approach is image segmentation. Other uses of vector quantization include non-random sampling, as k-means can easily be used to choose k different but prototypical objects from a large data set for further analysis.

Cluster analysis

In cluster analysis, the k-means algorithm can be used to partition the input data set into k partitions (clusters).

However, the pure k-means algorithm is not very flexible, and as such is of limited use (except for when vector quantization as above is actually the desired use case). In particular, the parameter k is known to be hard to choose (as discussed above) when not given by external constraints. Another limitation is that it cannot be used with arbitrary distance functions or on non-numerical data. For these use cases, many other algorithms are superior.

Feature learning

k-means clustering has been used as a feature learning (or dictionary learning) step, in either (semi-)supervised learning or unsupervised learning.[44] The basic approach is first to train a k-means clustering representation, using the input training data (which need not be labelled). Then, to project any input datum into the new feature space, an "encoding" function, such as the thresholded matrix-product of the datum with the centroid locations, computes the distance from the datum to each centroid, or simply an indicator function for the nearest centroid,[44][45] or some smooth transformation of the distance.[46] Alternatively, transforming the sample-cluster distance through a Gaussian RBF, obtains the hidden layer of a radial basis function network.[47]
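Three of the encodings mentioned above can be sketched as follows, assuming the centroids have already been learned. The centroid values, the function names and the `gamma` width parameter of the Gaussian RBF are illustrative assumptions rather than settings taken from the cited works.

```python
import math

def encode_distances(x, centroids):
    """Feature vector of Euclidean distances from x to every centroid."""
    return [math.dist(x, c) for c in centroids]

def encode_indicator(x, centroids):
    """One-hot indicator of the nearest centroid (hard assignment)."""
    distances = encode_distances(x, centroids)
    nearest = distances.index(min(distances))
    return [1 if i == nearest else 0 for i in range(len(centroids))]

def encode_rbf(x, centroids, gamma=0.5):
    """Gaussian RBF of each distance; gamma is a hypothetical width parameter."""
    return [math.exp(-gamma * d ** 2) for d in encode_distances(x, centroids)]

# Hypothetical centroids, as if produced by an earlier k-means run.
centroids = [(0.0, 0.0), (4.0, 0.0), (0.0, 3.0)]
x = (4.0, 3.0)

print(encode_distances(x, centroids))
print(encode_indicator(x, centroids))
print(encode_rbf(x, centroids))
```

Each datum is mapped to a vector with one entry per centroid, so the number of learned features equals k regardless of the input dimension.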

This use of k-means has been successfully combined with simple, linear classifiers for semi-supervised learning in NLP (specifically for named entity recognition)[48] and in computer vision. On an object recognition task, it was found to exhibit comparable performance with more sophisticated feature learning approaches such as autoencoders and restricted Boltzmann machines.[46] However, it generally requires more data, for equivalent performance, because each data point only contributes to one "feature".[44]

Relation to other algorithms

Gaussian mixture model

The slow "standard algorithm" for k-means clustering, and its associated expectation-maximization algorithm, is a special case of a Gaussian mixture model, specifically, the limiting case in which all covariances are fixed to be diagonal and equal, with infinitesimally small variance.[49]:850 Instead of small variances, a hard cluster assignment can also be used to show another equivalence of k-means clustering to a special case of "hard" Gaussian mixture modelling.[50](11.4.2.5) This does not mean that it is efficient to use Gaussian mixture modelling to compute k-means, but just that there is a theoretical relationship, and that Gaussian mixture modelling can be interpreted as a generalization of k-means; conversely, it has been suggested to use k-means clustering to find starting points for Gaussian mixture modelling on difficult data.[49]:849
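The limiting behavior can be illustrated by computing the soft assignments (responsibilities) of a mixture with equal weights and a shared spherical covariance σ²I, then shrinking σ. This is a sketch under those stated assumptions; the function name, the example point and the example means are made up for the illustration.

```python
import math

def responsibilities(x, means, sigma):
    """Posterior cluster probabilities for x under equal-weight spherical Gaussians.

    Each component has covariance sigma^2 * I, so the normalizing constants
    cancel and only the squared distances matter.
    """
    logits = [
        -sum((a - b) ** 2 for a, b in zip(x, m)) / (2 * sigma ** 2)
        for m in means
    ]
    top = max(logits)  # subtract the maximum for numerical stability
    weights = [math.exp(v - top) for v in logits]
    total = sum(weights)
    return [w / total for w in weights]

means = [(0.0, 0.0), (3.0, 0.0)]
x = (1.0, 0.0)  # closer to the first mean

soft = responsibilities(x, means, sigma=10.0)  # broad components: nearly a coin flip
hard = responsibilities(x, means, sigma=0.1)   # narrow components: nearly one-hot
print(soft)
print(hard)
```

As σ decreases, the responsibility of the nearest mean tends to 1, which reproduces the hard assignment step of k-means.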

k-SVD

Another generalization of the k-means algorithm is the k-SVD algorithm, which estimates data points as a sparse linear combination of "codebook vectors". k-means corresponds to the special case of using a single codebook vector, with a weight of 1.[51]

Principal component analysis

The relaxed solution of k-means clustering, specified by the cluster indicators, is given by principal component analysis (PCA).[52][53] The intuition is that k-means describes spherically shaped (ball-like) clusters. If the data has 2 clusters, the line connecting the two centroids is the best 1-dimensional projection direction, which is also the first PCA direction. Cutting the line at the center of mass separates the clusters (this is the continuous relaxation of the discrete cluster indicator). If the data have three clusters, the 2-dimensional plane spanned by three cluster centroids is the best 2-D projection. This plane is also defined by the first two PCA dimensions. Well-separated clusters are effectively modelled by ball-shaped clusters and thus discovered by k-means. Non-ball-shaped clusters are hard to separate when they are close. For example, two half-moon shaped clusters intertwined in space do not separate well when projected onto PCA subspace. k-means should not be expected to do well on this data.[54] It is straightforward to produce counterexamples to the statement that the cluster centroid subspace is spanned by the principal directions.[55]
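The two-cluster intuition can be checked on synthetic data. The sketch below generates two well-separated, ball-shaped groups and compares the direction between their centroids with the first principal direction, found by power iteration on the 2×2 covariance matrix. The data, the seed and the use of the known group centroids as a stand-in for a k-means result are assumptions of this illustration; it shows the favorable case only and does not contradict the counterexamples mentioned above.

```python
import math
import random

rng = random.Random(0)  # fixed seed so the demo is repeatable
cluster_a = [(rng.gauss(-5, 1), rng.gauss(0, 1)) for _ in range(200)]
cluster_b = [(rng.gauss(5, 1), rng.gauss(0, 1)) for _ in range(200)]
data = cluster_a + cluster_b

def centroid(points):
    """Component-wise mean of a non-empty list of 2-D points."""
    n = len(points)
    return tuple(sum(c) / n for c in zip(*points))

def first_principal_direction(points, iterations=200):
    """Leading eigenvector of the 2x2 covariance matrix, by power iteration."""
    mx, my = centroid(points)
    n = len(points)
    sxx = sum((x - mx) ** 2 for x, _ in points) / n
    syy = sum((y - my) ** 2 for _, y in points) / n
    sxy = sum((x - mx) * (y - my) for x, y in points) / n
    vx, vy = 1.0, 1.0
    for _ in range(iterations):
        vx, vy = sxx * vx + sxy * vy, sxy * vx + syy * vy
        norm = math.hypot(vx, vy)
        vx, vy = vx / norm, vy / norm
    return vx, vy

# Unit vector along the line connecting the two group centroids.
ca, cb = centroid(cluster_a), centroid(cluster_b)
dx, dy = cb[0] - ca[0], cb[1] - ca[1]
length = math.hypot(dx, dy)
centroid_direction = (dx / length, dy / length)

pc = first_principal_direction(data)
# Absolute cosine of the angle between the two directions (sign is arbitrary).
alignment = abs(pc[0] * centroid_direction[0] + pc[1] * centroid_direction[1])
print("alignment:", alignment)
```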

Mean shift clustering

Basic mean shift clustering algorithms maintain a set of data points the same size as the input data set. Initially, this set is copied from the input set. Then this set is iteratively replaced by the mean of those points in the set that are within a given distance of that point. By contrast, k-means restricts this updated set to k points usually much less than the number of points in the input data set, and replaces each point in this set by the mean of all points in the input set that are closer to that point than any other (e.g. within the Voronoi partition of each updating point). A mean shift algorithm that is similar then to k-means, called likelihood mean shift, replaces the set of points undergoing replacement by the mean of all points in the input set that are within a given distance of the changing set.[56] One of the advantages of mean shift over k-means is that the number of clusters is not pre-specified, because mean shift is likely to find only a few clusters if only a small number exist. However, mean shift can be much slower than k-means, and still requires selection of a bandwidth parameter. Mean shift has soft variants.

Independent component analysis

Under sparsity assumptions and when input data is pre-processed with the whitening transformation, k-means produces the solution to the linear independent component analysis (ICA) task. This aids in explaining the successful application of k-means to feature learning.[57]

Bilateral filtering

k-means implicitly assumes that the ordering of the input data set does not matter. The bilateral filter is similar to k-means and mean shift in that it maintains a set of data points that are iteratively replaced by means. However, the bilateral filter restricts the calculation of the (kernel weighted) mean to include only points that are close in the ordering of the input data.[56] This makes it applicable to problems such as image denoising, where the spatial arrangement of pixels in an image is of critical importance.

Similar problems

The set of squared error minimizing cluster functions also includes the k-medoids algorithm, an approach which forces the center point of each cluster to be one of the actual points, i.e., it uses medoids in place of centroids.

Software implementations

Different implementations of the algorithm exhibit performance differences, with the fastest on a test data set finishing in 10 seconds, the slowest taking 25,988 seconds (~7 hours).[1] The differences can be attributed to implementation quality, language and compiler differences, different termination criteria and precision levels, and the use of indexes for acceleration.

Free Software/Open Source

The following implementations are available under Free/Open Source Software licenses, with publicly available source code.

- Accord.NET contains C# implementations for k-means, k-means++ and k-modes.

- ALGLIB contains parallelized C++ and C# implementations for k-means and k-means++.

- AOSP contains a Java implementation for k-means.

- CrimeStat implements two spatial k-means algorithms, one of which allows the user to define the starting locations.

- ELKI contains k-means (with Lloyd and MacQueen iteration, along with different initializations such as k-means++ initialization) and various more advanced clustering algorithms.

- Smile contains k-means, various other algorithms, and results visualization (for Java, Kotlin and Scala).

- Julia contains a k-means implementation in the JuliaStats Clustering package.

- KNIME contains nodes for k-means and k-medoids.

- Mahout contains a MapReduce based k-means.

- mlpack contains a C++ implementation of k-means.

- Octave contains k-means.

- OpenCV contains a k-means implementation.

- Orange includes a component for k-means clustering with automatic selection of k and cluster silhouette scoring.

- PSPP contains k-means; the QUICK CLUSTER command performs k-means clustering on the dataset.

- R contains three k-means variations.

- SciPy and scikit-learn contain multiple k-means implementations.

- Spark MLlib implements a distributed k-means algorithm.

- Torch contains an unsup package that provides k-means clustering.

- Weka contains k-means and x-means.

Proprietary

The following implementations are available under proprietary license terms, and may not have publicly available source code.

- Ayasdi

- Mathematica

- MATLAB

- OriginPro

- RapidMiner

- SAP HANA

- SAS

- SPSS

- Stata

See also

- BFR algorithm

- Centroidal Voronoi tessellation

- Head/tail Breaks

- k q-flats

- k-means++

- Linde–Buzo–Gray algorithm

- Self-organizing map

References

- ↑ 1.0 1.1 Kriegel, Hans-Peter; Schubert, Erich; Zimek, Arthur (2016). "The (black) art of runtime evaluation: Are we comparing algorithms or implementations?". Knowledge and Information Systems 52 (2): 341–378. doi:10.1007/s10115-016-1004-2. ISSN 0219-1377.

- ↑ MacQueen, J. B. (1967). "Some Methods for classification and Analysis of Multivariate Observations". Proceedings of 5th Berkeley Symposium on Mathematical Statistics and Probability. 1. University of California Press. pp. 281–297. http://projecteuclid.org/euclid.bsmsp/1200512992. Retrieved 2009-04-07.

- ↑ Steinhaus, Hugo (1957). "Sur la division des corps matériels en parties" (in fr). Bull. Acad. Polon. Sci. 4 (12): 801–804.

- ↑ Lloyd, Stuart P. (1957). "Least square quantization in PCM". Bell Telephone Laboratories Paper. Published in journal much later: Lloyd, Stuart P. (1982). "Least squares quantization in PCM". IEEE Transactions on Information Theory 28 (2): 129–137. doi:10.1109/TIT.1982.1056489. http://www.cs.toronto.edu/~roweis/csc2515-2006/readings/lloyd57.pdf. Retrieved 2009-04-15.

- ↑ Forgy, Edward W. (1965). "Cluster analysis of multivariate data: efficiency versus interpretability of classifications". Biometrics 21 (3): 768–769.

- ↑ Pelleg, Dan; Moore, Andrew (1999). "Accelerating exact k -means algorithms with geometric reasoning" (in en). Proceedings of the fifth ACM SIGKDD international conference on Knowledge discovery and data mining. San Diego, California, United States: ACM Press. pp. 277–281. doi:10.1145/312129.312248. ISBN 9781581131437. http://portal.acm.org/citation.cfm?doid=312129.312248.

- ↑ MacKay, David (2003). "Chapter 20. An Example Inference Task: Clustering". Information Theory, Inference and Learning Algorithms. Cambridge University Press. pp. 284–292. ISBN 978-0-521-64298-9. http://www.inference.phy.cam.ac.uk/mackay/itprnn/ps/284.292.pdf.

- ↑ Since the square root is a monotone function, this also is the minimum Euclidean distance assignment.

- ↑ 9.0 9.1 9.2 9.3 9.4 Hartigan, J. A.; Wong, M. A. (1979). "Algorithm AS 136: A k-Means Clustering Algorithm". Journal of the Royal Statistical Society, Series C 28 (1): 100–108.

- ↑ 10.0 10.1 Hamerly, Greg; Elkan, Charles (2002). "Alternatives to the k-means algorithm that find better clusterings". http://people.csail.mit.edu/tieu/notebook/kmeans/15_p600-hamerly.pdf.

- ↑ Celebi, M. E.; Kingravi, H. A.; Vela, P. A. (2013). "A comparative study of efficient initialization methods for the k-means clustering algorithm". Expert Systems with Applications 40 (1): 200–210. doi:10.1016/j.eswa.2012.07.021.

- ↑ Bradley, Paul S.; Fayyad, Usama M. (1998). "Refining Initial Points for k-Means Clustering".

- ↑ Vattani, A. (2011). "k-means requires exponentially many iterations even in the plane". Discrete and Computational Geometry 45 (4): 596–616. doi:10.1007/s00454-011-9340-1. http://cseweb.ucsd.edu/users/avattani/papers/kmeans-journal.pdf.

- ↑ 14.0 14.1 Arthur, David; Manthey, B.; Roeglin, H. (2009). "k-means has polynomial smoothed complexity".

- ↑ Aloise, D.; Deshpande, A.; Hansen, P.; Popat, P. (2009). "NP-hardness of Euclidean sum-of-squares clustering". Machine Learning 75 (2): 245–249. doi:10.1007/s10994-009-5103-0.

- ↑ Dasgupta, S.; Freund, Y. (July 2009). "Random Projection Trees for Vector Quantization". IEEE Transactions on Information Theory 55 (7): 3229–42. doi:10.1109/TIT.2009.2021326.

- ↑ Mahajan, Meena; Nimbhorkar, Prajakta; Varadarajan, Kasturi (2009). "The Planar k-Means Problem is NP-Hard". WALCOM: Algorithms and Computation. Lecture Notes in Computer Science. 5431. pp. 274–285. doi:10.1007/978-3-642-00202-1_24. ISBN 978-3-642-00201-4.

- ↑ Inaba, M.; Katoh, N.; Imai, H. (1994). "Applications of weighted Voronoi diagrams and randomization to variance-based k-clustering". Proceedings of 10th ACM Symposium on Computational Geometry. pp. 332–9. doi:10.1145/177424.178042.

- ↑ Manning, Christopher D.; Raghavan, Prabhakar; Schütze, Hinrich (2008). Introduction to information retrieval. Cambridge University Press. ISBN 978-0521865715. OCLC 190786122.

- ↑ 20.0 20.1 Arthur, David; Vassilvitskii, Sergei (2006-01-01). "How slow is the k-means method?". Proceedings of the twenty-second annual symposium on Computational geometry. SCG '06. ACM. pp. 144–153. doi:10.1145/1137856.1137880. ISBN 978-1595933409.

- ↑ Bhowmick, Abhishek (2009). "A theoretical analysis of Lloyd's algorithm for k-means clustering". https://gautam5.cse.iitk.ac.in/opencs/sites/default/files/final.pdf.

- ↑ 22.0 22.1 Phillips, Steven J. (2002). "Acceleration of K-Means and Related Clustering Algorithms". in Mount, David M. Algorithm Engineering and Experiments. Lecture Notes in Computer Science. 2409. Springer. pp. 166–177. doi:10.1007/3-540-45643-0_13. ISBN 978-3-540-43977-6.

- ↑ 23.0 23.1 Elkan, Charles (2003). "Using the triangle inequality to accelerate k-means". http://www-cse.ucsd.edu/~elkan/kmeansicml03.pdf.

- ↑ 24.0 24.1 Hamerly, Greg (2010). "Making k-means even faster". Proceedings of the 2010 SIAM International Conference on Data Mining. pp. 130–140. doi:10.1137/1.9781611972801.12. ISBN 978-0-89871-703-7.

- ↑ 25.0 25.1 Hamerly, Greg; Drake, Jonathan (2015). "Accelerating Lloyd's Algorithm for k-Means Clustering". Partitional Clustering Algorithms. pp. 41–78. doi:10.1007/978-3-319-09259-1_2. ISBN 978-3-319-09258-4.

- ↑ Kanungo, Tapas; Mount, David M.; Netanyahu, Nathan S.; Piatko, Christine D.; Silverman, Ruth; Wu, Angela Y. (2002). "An efficient k-means clustering algorithm: Analysis and implementation". IEEE Transactions on Pattern Analysis and Machine Intelligence 24 (7): 881–892. doi:10.1109/TPAMI.2002.1017616. http://www.cs.umd.edu/~mount/Papers/pami02.pdf. Retrieved 2009-04-24.

- ↑ Drake, Jonathan (2012). "Accelerated k-means with adaptive distance bounds". The 5th NIPS Workshop on Optimization for Machine Learning, OPT2012. http://opt.kyb.tuebingen.mpg.de/papers/opt2012_paper_13.pdf.

- ↑ Dhillon, I. S.; Modha, D. M. (2001). "Concept decompositions for large sparse text data using clustering". Machine Learning 42 (1): 143–175. doi:10.1023/a:1007612920971.

- ↑ Steinbach, M.; Karypis, G.; Kumar, V. (2000). "A comparison of document clustering techniques". KDD Workshop on Text Mining 400 (1): 525–526.

- ↑ Pelleg, D.; Moore, A. W. (June 2000). "X-means: Extending k-means with Efficient Estimation of the Number of Clusters". ICML, Vol. 1.

- ↑ Hamerly, Greg; Elkan, Charles (2004). "Learning the k in k-means". Advances in Neural Information Processing Systems 16: 281. http://papers.nips.cc/paper/2526-learning-the-k-in-k-means.pdf.

- ↑ Amorim, R. C.; Mirkin, B. (2012). "Minkowski Metric, Feature Weighting and Anomalous Cluster Initialisation in k-Means Clustering". Pattern Recognition 45 (3): 1061–1075. doi:10.1016/j.patcog.2011.08.012.

- ↑ Amorim, R. C.; Hennig, C. (2015). "Recovering the number of clusters in data sets with noise features using feature rescaling factors". Information Sciences 324: 126–145. doi:10.1016/j.ins.2015.06.039.

- ↑ Sculley, David (2010). "Web-scale k-means clustering". ACM. pp. 1177–1178. http://dl.acm.org/citation.cfm?id=1772862. Retrieved 2016-12-21.

- ↑ Telgarsky, Matus. "Hartigan's Method: k-means Clustering without Voronoi". http://proceedings.mlr.press/v9/telgarsky10a/telgarsky10a.pdf.

- ↑ Piccialli, Veronica; Sudoso, Antonio M.; Wiegele, Angelika (2022-03-28). "SOS-SDP: An Exact Solver for Minimum Sum-of-Squares Clustering". INFORMS Journal on Computing 34 (4): 2144–2162. doi:10.1287/ijoc.2022.1166. ISSN 1091-9856. http://pubsonline.informs.org/doi/10.1287/ijoc.2022.1166.

- ↑ Bagirov, A. M.; Taheri, S.; Ugon, J. (2016). "Nonsmooth DC programming approach to the minimum sum-of-squares clustering problems". Pattern Recognition 53: 12–24. doi:10.1016/j.patcog.2015.11.011. Bibcode: 2016PatRe..53...12B.

- ↑ Fränti, Pasi (2018). "Efficiency of random swap clustering". Journal of Big Data 5 (1): 1–21. doi:10.1186/s40537-018-0122-y.

- ↑ Hansen, P.; Mladenovic, N. (2001). "J-Means: A new local search heuristic for minimum sum of squares clustering". Pattern Recognition 34 (2): 405–413. doi:10.1016/S0031-3203(99)00216-2. Bibcode: 2001PatRe..34..405H.

- ↑ Krishna, K.; Murty, M. N. (1999). "Genetic k-means algorithm". IEEE Transactions on Systems, Man, and Cybernetics - Part B: Cybernetics 29 (3): 433–439. doi:10.1109/3477.764879. PMID 18252317. https://www.researchgate.net/publication/5600582.

- ↑ 41.0 41.1 Gribel, Daniel; Vidal, Thibaut (2019). "HG-means: A scalable hybrid metaheuristic for minimum sum-of-squares clustering". Pattern Recognition 88: 569–583. doi:10.1016/j.patcog.2018.12.022.

- ↑ Mirkes, E. M. "K-means and k-medoids applet". http://www.math.le.ac.uk/people/ag153/homepage/KmeansKmedoids/Kmeans_Kmedoids.html.

- ↑ Kulis, Brian; Jordan, Michael I. (2012-06-26). "Revisiting k-means: new algorithms via Bayesian nonparametrics". pp. 1131–1138. ISBN 9781450312851. https://icml.cc/2012/papers/291.pdf.

- ↑ 44.0 44.1 44.2 Coates, Adam; Ng, Andrew Y. (2012). "Learning feature representations with k-means". in Montavon, G. Neural Networks: Tricks of the Trade. Springer. https://cs.stanford.edu/~acoates/papers/coatesng_nntot2012.pdf.

- ↑ Csurka, Gabriella; Dance, Christopher C.; Fan, Lixin; Willamowski, Jutta; Bray, Cédric (2004). "Visual categorization with bags of keypoints". ECCV Workshop on Statistical Learning in Computer Vision. https://www.cs.cmu.edu/~efros/courses/LBMV07/Papers/csurka-eccv-04.pdf.

- ↑ 46.0 46.1 Coates, Adam; Lee, Honglak; Ng, Andrew Y. (2011). "An analysis of single-layer networks in unsupervised feature learning". International Conference on Artificial Intelligence and Statistics (AISTATS). http://www.stanford.edu/~acoates/papers/coatesleeng_aistats_2011.pdf.

- ↑ Schwenker, Friedhelm; Kestler, Hans A.; Palm, Günther (2001). "Three learning phases for radial-basis-function networks". Neural Networks 14 (4–5): 439–458. doi:10.1016/s0893-6080(01)00027-2. PMID 11411631.

- ↑ Lin, Dekang; Wu, Xiaoyun (2009). "Phrase clustering for discriminative learning". Annual Meeting of the ACL and IJCNLP. pp. 1030–1038. http://www.aclweb.org/anthology/P/P09/P09-1116.pdf.

- ↑ 49.0 49.1 Press, W. H.; Teukolsky, S. A.; Vetterling, W. T.; Flannery, B. P. (2007). "Section 16.1. Gaussian Mixture Models and k-Means Clustering". Numerical Recipes: The Art of Scientific Computing (3rd ed.). New York (NY): Cambridge University Press. ISBN 978-0-521-88068-8. http://apps.nrbook.com/empanel/index.html#pg=842.

- ↑ Murphy, Kevin P. (2012). Machine Learning: A Probabilistic Perspective. Cambridge, Mass.: MIT Press. ISBN 978-0-262-30524-2. OCLC 810414751.

- ↑ Aharon, Michal; Elad, Michael; Bruckstein, Alfred (2006). "K-SVD: An Algorithm for Designing Overcomplete Dictionaries for Sparse Representation". IEEE Transactions on Signal Processing 54 (11): 4311. doi:10.1109/TSP.2006.881199. Bibcode: 2006ITSP...54.4311A. http://www.cs.technion.ac.il/FREDDY/papers/120.pdf.

- ↑ Zha, Hongyuan; Ding, Chris; Gu, Ming; He, Xiaofeng; Simon, Horst D. (December 2001). "Spectral Relaxation for k-means Clustering". Neural Information Processing Systems Vol. 14 (NIPS 2001): 1057–1064. http://ranger.uta.edu/~chqding/papers/Zha-Kmeans.pdf.

- ↑ Ding, Chris; He, Xiaofeng (July 2004). "K-means Clustering via Principal Component Analysis". Proceedings of International Conference on Machine Learning (ICML 2004): 225–232. http://ranger.uta.edu/~chqding/papers/KmeansPCA1.pdf.

- ↑ Drineas, Petros; Frieze, Alan M.; Kannan, Ravi; Vempala, Santosh; Vinay, Vishwanathan (2004). "Clustering large graphs via the singular value decomposition". Machine Learning 56 (1–3): 9–33. doi:10.1023/b:mach.0000033113.59016.96. http://www.cc.gatech.edu/~vempala/papers/dfkvv.pdf. Retrieved 2012-08-02.

- ↑ Cohen, Michael B.; Elder, Sam; Musco, Cameron; Musco, Christopher; Persu, Madalina (2014). "Dimensionality reduction for k-means clustering and low rank approximation (Appendix B)". arXiv:1410.6801 [cs.DS].

- ↑ 56.0 56.1 Little, Max A.; Jones, Nick S. (2011). "Generalized Methods and Solvers for Piecewise Constant Signals: Part I". Proceedings of the Royal Society A 467 (2135): 3088–3114. doi:10.1098/rspa.2010.0671. PMID 22003312. PMC 3191861. Bibcode: 2011RSPSA.467.3088L. http://www.maxlittle.net/publications/pwc_filtering_arxiv.pdf.

- ↑ Vinnikov, Alon; Shalev-Shwartz, Shai (2014). "K-means Recovers ICA Filters when Independent Components are Sparse". Proceedings of the International Conference on Machine Learning (ICML 2014). http://www.cs.huji.ac.il/~shais/papers/KmeansICA_ICML2014.pdf.

|