Dirichlet process

In probability theory, Dirichlet processes (after the distribution associated with Peter Gustav Lejeune Dirichlet) are a family of stochastic processes whose realizations are probability distributions. In other words, a Dirichlet process is a probability distribution whose range is itself a set of probability distributions. It is often used in Bayesian inference to describe the prior knowledge about the distribution of random variables—how likely it is that the random variables are distributed according to one or another particular distribution.

As an example, a bag of 100 real-world dice is a random probability mass function (random pmf)—to sample this random pmf you put your hand in the bag and draw out a die, that is, you draw a pmf. A bag of dice manufactured using a crude process 100 years ago will likely have probabilities that deviate wildly from the uniform pmf, whereas a bag of state-of-the-art dice used by Las Vegas casinos may have barely perceptible imperfections. We can model the randomness of pmfs with the Dirichlet distribution.[1]

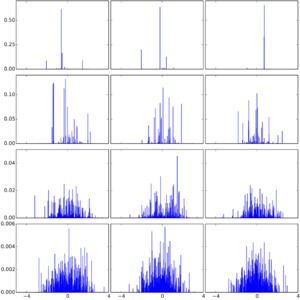

The Dirichlet process is specified by a base distribution [math]\displaystyle{ H }[/math] and a positive real number [math]\displaystyle{ \alpha }[/math] called the concentration parameter (also known as the scaling parameter). The base distribution is the expected value of the process, i.e., the Dirichlet process draws distributions "around" the base distribution the way a normal distribution draws real numbers around its mean. However, even if the base distribution is continuous, the distributions drawn from the Dirichlet process are almost surely discrete. The scaling parameter specifies how strong this discretization is: in the limit of [math]\displaystyle{ \alpha\rightarrow 0 }[/math], the realizations are all concentrated at a single value, while in the limit of [math]\displaystyle{ \alpha\rightarrow\infty }[/math] the realizations become continuous. Between the two extremes the realizations are discrete distributions with less and less concentration as [math]\displaystyle{ \alpha }[/math] increases.

The Dirichlet process can also be seen as the infinite-dimensional generalization of the Dirichlet distribution. In the same way as the Dirichlet distribution is the conjugate prior for the categorical distribution, the Dirichlet process is the conjugate prior for infinite, nonparametric discrete distributions. A particularly important application of Dirichlet processes is as a prior probability distribution in infinite mixture models.

The Dirichlet process was formally introduced by Thomas S. Ferguson in 1973.[2] It has since been applied in data mining and machine learning, in areas including natural language processing, computer vision and bioinformatics.

Introduction

Dirichlet processes are usually used when modelling data that tends to repeat previous values in a so-called "rich get richer" fashion. Specifically, suppose that the generation of values [math]\displaystyle{ X_1,X_2,\dots }[/math] can be simulated by the following algorithm.

- Input: [math]\displaystyle{ H }[/math] (a probability distribution called the base distribution), [math]\displaystyle{ \alpha }[/math] (a positive real number called the scaling parameter)

- For [math]\displaystyle{ n\ge 1 }[/math]:

a) With probability [math]\displaystyle{ \frac{\alpha}{\alpha+n-1} }[/math] draw [math]\displaystyle{ X_n }[/math] from [math]\displaystyle{ H }[/math].

b) With probability [math]\displaystyle{ \frac{n_x}{\alpha+n-1} }[/math] set [math]\displaystyle{ X_n=x }[/math], where [math]\displaystyle{ n_x }[/math] is the number of previous observations of [math]\displaystyle{ x }[/math].

(Formally, [math]\displaystyle{ n_x := | \{ j\colon X_j=x \text{ and } j\lt n \} | }[/math] where [math]\displaystyle{ | \cdot | }[/math] denotes the number of elements in the set.)
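The algorithm above can be sketched in a few lines of Python. This is only an illustrative sketch: the function name `sample_sequence` and the callback `draw_from_base` (standing in for a draw from [math]\displaystyle{ H }[/math]) are names chosen here, not part of any library. It relies on the observation that choosing one of the previous observations uniformly at random selects the value [math]\displaystyle{ x }[/math] with probability proportional to [math]\displaystyle{ n_x }[/math].

```python
import random

def sample_sequence(n, alpha, draw_from_base, rng=None):
    """Generate X_1, ..., X_n with the 'rich get richer' scheme (illustrative sketch)."""
    rng = rng or random.Random()
    xs = []
    for i in range(n):  # i observations already exist, so i plays the role of n - 1
        # Step a): with probability alpha / (alpha + i), draw a fresh value from H.
        if rng.random() < alpha / (alpha + i):
            xs.append(draw_from_base(rng))
        else:
            # Step b): a uniformly chosen previous observation equals x with
            # probability n_x / i, so overall x is reused with probability
            # n_x / (alpha + i).
            xs.append(rng.choice(xs))
    return xs

# Example with a standard normal base distribution (an assumption for illustration).
values = sample_sequence(200, 1.0, lambda r: r.gauss(0.0, 1.0), random.Random(0))
```

Although the base distribution in this example is continuous, the generated sequence typically contains many repeated values, which is the behaviour the Dirichlet process is meant to capture.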

At the same time, another common model for data is that the observations [math]\displaystyle{ X_1,X_2,\dots }[/math] are assumed to be independent and identically distributed (i.i.d.) according to some (random) distribution [math]\displaystyle{ P }[/math]. The goal of introducing Dirichlet processes is to be able to describe the procedure outlined above in this i.i.d. model.

The [math]\displaystyle{ X_1,X_2,\dots }[/math] observations in the algorithm are not independent, since we have to consider the previous results when generating the next value. They are, however, exchangeable. This fact can be shown by calculating the joint probability distribution of the observations and noticing that the resulting formula only depends on which [math]\displaystyle{ x }[/math] values occur among the observations and how many repetitions they each have. Because of this exchangeability, de Finetti's representation theorem applies and it implies that the observations [math]\displaystyle{ X_1,X_2,\dots }[/math] are conditionally independent given a (latent) distribution [math]\displaystyle{ P }[/math]. This [math]\displaystyle{ P }[/math] is a random variable itself and has a distribution. This distribution (over distributions) is called a Dirichlet process ([math]\displaystyle{ \operatorname{DP} }[/math]). In summary, this means that we get an equivalent procedure to the above algorithm:

- Draw a distribution [math]\displaystyle{ P }[/math] from [math]\displaystyle{ \operatorname{DP}\left(H,\alpha\right) }[/math]

- Draw observations [math]\displaystyle{ X_1,X_2,\dots }[/math] independently from [math]\displaystyle{ P }[/math].

In practice, however, drawing a concrete distribution [math]\displaystyle{ P }[/math] is impossible, since its specification requires an infinite amount of information. This is a common phenomenon in the context of Bayesian non-parametric statistics where a typical task is to learn distributions on function spaces, which involve effectively infinitely many parameters. The key insight is that in many applications the infinite-dimensional distributions appear only as an intermediary computational device and are not required for either the initial specification of prior beliefs or for the statement of the final inference.

Formal definition

Given a measurable set S, a base probability distribution H and a positive real number [math]\displaystyle{ \alpha }[/math], the Dirichlet process [math]\displaystyle{ \operatorname{DP}(H, \alpha) }[/math] is a stochastic process whose sample path (or realization, i.e. an infinite sequence of random variates drawn from the process) is a probability distribution over S, such that the following holds. For any measurable finite partition of S, denoted [math]\displaystyle{ \{B_i\}_{i=1}^n }[/math],

- [math]\displaystyle{ \text{if } X \sim \operatorname{DP}(H,\alpha) }[/math]

- [math]\displaystyle{ \text{then }(X(B_1),\dots,X(B_n)) \sim \operatorname{Dir}(\alpha H(B_1),\dots, \alpha H(B_n)), }[/math]

where [math]\displaystyle{ \operatorname{Dir} }[/math] denotes the Dirichlet distribution and the notation [math]\displaystyle{ X \sim D }[/math] means that the random variable [math]\displaystyle{ X }[/math] has the distribution [math]\displaystyle{ D }[/math].
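As a worked special case (an illustration, not part of the definition), take a measurable set [math]\displaystyle{ B }[/math] with [math]\displaystyle{ 0 \lt H(B) \lt 1 }[/math] and the two-set partition [math]\displaystyle{ \{B, S\setminus B\} }[/math]. A two-dimensional Dirichlet distribution is a beta distribution, so the definition gives

- [math]\displaystyle{ X(B) \sim \operatorname{Beta}\left(\alpha H(B),\; \alpha\,(1-H(B))\right), }[/math]

and the standard formulas for the mean and variance of a beta distribution yield

- [math]\displaystyle{ \operatorname{E}[X(B)] = H(B), \qquad \operatorname{Var}[X(B)] = \frac{H(B)\,(1-H(B))}{\alpha+1}. }[/math]

This is the sense in which the base distribution is the expected value of the process, and it shows that a larger [math]\displaystyle{ \alpha }[/math] makes the random probability [math]\displaystyle{ X(B) }[/math] deviate less from [math]\displaystyle{ H(B) }[/math].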

Alternative views

There are several equivalent views of the Dirichlet process. Besides the formal definition above, the Dirichlet process can be defined implicitly through de Finetti's theorem as described in the first section; this is often called the Chinese restaurant process. A third alternative is the stick-breaking process, which defines the Dirichlet process constructively by writing a distribution sampled from the process as [math]\displaystyle{ f(x)=\sum_{k=1}^\infty\beta_k\delta_{x_k}(x) }[/math], where [math]\displaystyle{ \{x_k\}_{k=1}^\infty }[/math] are samples from the base distribution [math]\displaystyle{ H }[/math], [math]\displaystyle{ \delta_{x_k} }[/math] is an indicator function centered on [math]\displaystyle{ x_k }[/math] (zero everywhere except for [math]\displaystyle{ \delta_{x_k}(x_k)=1 }[/math]) and the [math]\displaystyle{ \beta_k }[/math] are defined by a recursive scheme that repeatedly samples from the beta distribution [math]\displaystyle{ \operatorname{Beta}(1,\alpha) }[/math].

The Chinese restaurant process

A widely employed metaphor for the Dirichlet process is based on the so-called Chinese restaurant process. The metaphor is as follows:

Imagine a Chinese restaurant in which customers enter. A new customer sits down at a table with a probability proportional to the number of customers already sitting there. Additionally, a customer opens a new table with a probability proportional to the scaling parameter [math]\displaystyle{ \alpha }[/math]. After infinitely many customers have entered, one obtains a probability distribution over infinitely many tables to be chosen. This probability distribution over the tables is a random sample of the probabilities of observations drawn from a Dirichlet process with scaling parameter [math]\displaystyle{ \alpha }[/math].

If one associates draws from the base measure [math]\displaystyle{ H }[/math] with every table, the resulting distribution over the sample space [math]\displaystyle{ S }[/math] is a random sample of a Dirichlet process. The Chinese restaurant process is related to the Pólya urn sampling scheme which yields samples from finite Dirichlet distributions.

Because customers sit at a table with a probability proportional to the number of customers already sitting at the table, two properties of the DP can be deduced:

- The Dirichlet process exhibits a self-reinforcing property: The more often a given value has been sampled in the past, the more likely it is to be sampled again.

- Even if [math]\displaystyle{ H }[/math] is a distribution over an uncountable set, there is a nonzero probability that two samples will have exactly the same value because the probability mass will concentrate on a small number of tables.
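The seating rule of the metaphor can be simulated directly. The following is a minimal sketch with illustrative function names (`chinese_restaurant`, `expected_tables`) that are not taken from any library. The second function uses the fact that the [math]\displaystyle{ i }[/math]-th customer opens a new table with probability [math]\displaystyle{ \alpha/(\alpha+i-1) }[/math], so the expected number of occupied tables after [math]\displaystyle{ n }[/math] customers is the sum of these probabilities.

```python
import random

def chinese_restaurant(n, alpha, rng=None):
    """Seat n customers; return each customer's table index and the table sizes."""
    rng = rng or random.Random()
    tables = []   # tables[k] = number of customers currently at table k
    seating = []  # seating[i] = table index chosen by customer i
    for _ in range(n):
        # Existing tables are weighted by their size, a new table by alpha.
        weights = tables + [alpha]
        k = rng.choices(range(len(weights)), weights=weights)[0]
        if k == len(tables):
            tables.append(1)   # open a new table
        else:
            tables[k] += 1     # join an existing table
        seating.append(k)
    return seating, tables

def expected_tables(n, alpha):
    """Expected number of occupied tables after n customers."""
    return sum(alpha / (alpha + i) for i in range(n))

seating, tables = chinese_restaurant(500, 0.5, random.Random(1))
```

Sorting `tables` in decreasing order usually shows a few large tables and many small ones, which is the self-reinforcing behaviour described above.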

The stick-breaking process

A third approach to the Dirichlet process is the so-called stick-breaking process view. Conceptually, this involves repeatedly breaking off and discarding a random fraction (sampled from a Beta distribution) of a "stick" that is initially of length 1. Remember that draws from a Dirichlet process are distributions over a set [math]\displaystyle{ S }[/math]. As noted previously, the distribution drawn is discrete with probability 1. In the stick-breaking process view, we explicitly use the discreteness and give the probability mass function of this (random) discrete distribution as:

- [math]\displaystyle{ f(\theta) = \sum_{k=1}^\infty \beta_k \cdot \delta_{\theta_k}(\theta) }[/math]

where [math]\displaystyle{ \delta_{\theta_k} }[/math] is the indicator function which evaluates to zero everywhere, except for [math]\displaystyle{ \delta_{\theta_k}(\theta_k)=1 }[/math]. Since this distribution is random itself, its mass function is parameterized by two sets of random variables: the locations [math]\displaystyle{ \left\{\theta_k\right\}_{k=1}^\infty }[/math] and the corresponding probabilities [math]\displaystyle{ \left\{\beta_k\right\}_{k=1}^\infty }[/math]. In the following, we present without proof what these random variables are.

The locations [math]\displaystyle{ \theta_k }[/math] are independent and identically distributed according to [math]\displaystyle{ H }[/math], the base distribution of the Dirichlet process. The probabilities [math]\displaystyle{ \beta_k }[/math] are given by a procedure resembling the breaking of a unit-length stick (hence the name):

- [math]\displaystyle{ \beta_k = \beta'_k\cdot\prod_{i=1}^{k-1}\left(1-\beta'_i\right) }[/math]

where [math]\displaystyle{ \beta'_k }[/math] are independent random variables with the beta distribution [math]\displaystyle{ \operatorname{Beta}(1,\alpha) }[/math]. The resemblance to 'stick-breaking' can be seen by considering [math]\displaystyle{ \beta_k }[/math] as the length of a piece of a stick. We start with a unit-length stick and in each step we break off a portion of the remaining stick according to [math]\displaystyle{ \beta'_k }[/math] and assign this broken-off piece to [math]\displaystyle{ \beta_k }[/math]. The formula can be understood by noting that after the first k − 1 values have their portions assigned, the length of the remainder of the stick is [math]\displaystyle{ \prod_{i=1}^{k-1}\left(1-\beta'_i\right) }[/math] and this piece is broken according to [math]\displaystyle{ \beta'_k }[/math] and gets assigned to [math]\displaystyle{ \beta_k }[/math].

The smaller [math]\displaystyle{ \alpha }[/math] is, the less of the stick will be left for subsequent values (on average), yielding more concentrated distributions.

The stick-breaking process is similar to the construction where one samples sequentially from marginal beta distributions in order to generate a sample from a Dirichlet distribution.[4]
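Because the construction involves infinitely many pieces, any simulation has to stop somewhere. The sketch below truncates the process once the remaining stick is shorter than a tolerance `tol`. The truncation rule, the function name `stick_breaking` and the callback `draw_from_base` are assumptions made for illustration, not part of the construction itself.

```python
import random

def stick_breaking(alpha, draw_from_base, tol=1e-8, rng=None):
    """Truncated stick-breaking draw: returns weights, atoms and the leftover stick."""
    rng = rng or random.Random()
    remaining = 1.0          # length of the stick that has not been assigned yet
    weights, atoms = [], []
    while remaining > tol:
        frac = rng.betavariate(1.0, alpha)   # beta'_k ~ Beta(1, alpha)
        weights.append(frac * remaining)     # beta_k = beta'_k * prod_{i<k} (1 - beta'_i)
        atoms.append(draw_from_base(rng))    # theta_k ~ H
        remaining *= 1.0 - frac
    return weights, atoms, remaining

# Example with a standard normal base distribution (an assumption for illustration).
weights, atoms, leftover = stick_breaking(2.0, lambda r: r.gauss(0.0, 1.0),
                                          rng=random.Random(0))
```

The returned weights and the leftover length sum to one up to floating-point rounding. Smaller values of `alpha` tend to need fewer pieces before the tolerance is reached, in line with the remark that less of the stick is then left for subsequent values.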

The Pólya urn scheme

Yet another way to visualize the Dirichlet process and Chinese restaurant process is as a modified Pólya urn scheme sometimes called the Blackwell–MacQueen sampling scheme. Imagine that we start with an urn filled with [math]\displaystyle{ \alpha }[/math] black balls. Then we proceed as follows:

- Each time we need an observation, we draw a ball from the urn.

- If the ball is black, we generate a new (non-black) colour uniformly, label a new ball this colour, drop the new ball into the urn along with the ball we drew, and return the colour we generated.

- Otherwise, label a new ball with the colour of the ball we drew, drop the new ball into the urn along with the ball we drew, and return the colour we observed.

The resulting distribution over colours is the same as the distribution over tables in the Chinese restaurant process. Furthermore, when we draw a black ball, if rather than generating a new colour, we instead pick a random value from a base distribution [math]\displaystyle{ H }[/math] and use that value to label the new ball, the resulting distribution over labels will be the same as the distribution over the values in a Dirichlet process.

Use as a prior distribution

The Dirichlet process can be used as a prior distribution to estimate the probability distribution that generates the data. In this section, we consider the model

- [math]\displaystyle{ \begin{align} P & \sim \textrm{DP}(H, \alpha )\\ X_1, \ldots, X_n \mid P & \, \overset{\textrm{i.i.d.}}{\sim} \, P. \end{align} }[/math]

The Dirichlet process distribution satisfies prior conjugacy, posterior consistency, and the Bernstein–von Mises theorem.[5]

Prior conjugacy

In this model, the posterior distribution is again a Dirichlet process. This means that the Dirichlet process is a conjugate prior for this model. The posterior distribution is given by

- [math]\displaystyle{ \begin{align} P \mid X_1, \ldots, X_n &\sim \textrm{DP}\left(\frac{\alpha}{\alpha + n} H + \frac{1}{\alpha + n} \sum_{i = 1}^n \delta_{X_i}, \; \alpha + n \right) \\ &= \textrm{DP}\left(\frac{\alpha}{\alpha + n} H + \frac{n}{\alpha + n} \mathbb{P}_n, \; \alpha + n \right) \end{align} }[/math]

where [math]\displaystyle{ \mathbb{P}_n = \frac{1}{n} \sum_{i = 1}^n \delta_{X_i} }[/math] is the empirical distribution of the observations.
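Since the base distribution of a Dirichlet process is its expected value, the posterior mean of [math]\displaystyle{ P }[/math] is the updated base distribution in the formula above: a weighted average of [math]\displaystyle{ H }[/math] and the empirical distribution of the data. The sketch below evaluates this posterior mean as a cumulative distribution function for real-valued data. The choice of a standard normal base distribution and the function names are assumptions made for illustration.

```python
import math

def normal_cdf(t, mean=0.0, sd=1.0):
    """CDF of a normal distribution, used here as an example base distribution H."""
    return 0.5 * (1.0 + math.erf((t - mean) / (sd * math.sqrt(2.0))))

def posterior_mean_cdf(t, data, alpha, base_cdf=normal_cdf):
    """E[P((-inf, t]) | X_1..X_n] = (alpha * H(t) + #{X_i <= t}) / (alpha + n)."""
    n = len(data)
    count = sum(1 for x in data if x <= t)
    return (alpha * base_cdf(t) + count) / (alpha + n)

# With alpha = 1 and three observations, the prior carries weight 1/4.
print(posterior_mean_cdf(0.0, [-1.0, 0.5, 2.0], alpha=1.0))  # (0.5 + 1) / 4 = 0.375
```

As [math]\displaystyle{ n }[/math] grows with [math]\displaystyle{ \alpha }[/math] fixed, the weight [math]\displaystyle{ \alpha/(\alpha+n) }[/math] on the base distribution shrinks and the posterior mean approaches the empirical distribution.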

Posterior consistency

If we take the frequentist view of probability, we believe there is a true probability distribution [math]\displaystyle{ P_0 }[/math] that generated the data. Then it turns out that the Dirichlet process is consistent in the weak topology, which means that for every weak neighbourhood [math]\displaystyle{ U }[/math] of [math]\displaystyle{ P_0 }[/math], the posterior probability of [math]\displaystyle{ U }[/math] converges to [math]\displaystyle{ 1 }[/math].

Bernstein–von Mises theorem

In order to interpret the credible sets as confidence sets, a Bernstein–von Mises theorem is needed. In the case of the Dirichlet process we compare the posterior distribution with the empirical process [math]\displaystyle{ \mathbb{P}_n = \frac{1}{n} \sum_{i = 1}^n \delta_{X_i} }[/math]. Suppose [math]\displaystyle{ \mathcal{F} }[/math] is a [math]\displaystyle{ P_0 }[/math]-Donsker class, i.e.

- [math]\displaystyle{ \sqrt n \left( \mathbb{P}_n - P_0 \right) \rightsquigarrow G_{P_0} }[/math]

for some Brownian bridge [math]\displaystyle{ G_{P_0} }[/math]. Suppose also that there exists a function [math]\displaystyle{ F }[/math] such that [math]\displaystyle{ F(x) \geq \sup_{f \in \mathcal{F}} f(x) }[/math] and [math]\displaystyle{ \int F^2 \, \mathrm{d} H \lt \infty }[/math]. Then, [math]\displaystyle{ P_0 }[/math]-almost surely,

- [math]\displaystyle{ \sqrt{n} \left( P - \mathbb{P}_n \right) \mid X_1, \cdots, X_n \rightsquigarrow G_{P_0}. }[/math]

This implies that credible sets you construct are asymptotic confidence sets, and the Bayesian inference based on the Dirichlet process is asymptotically also valid frequentist inference.

Use in Dirichlet mixture models

To understand what Dirichlet processes are and the problem they solve we consider the example of data clustering. It is a common situation that data points are assumed to be distributed in a hierarchical fashion where each data point belongs to a (randomly chosen) cluster and the members of a cluster are further distributed randomly within that cluster.

Example 1

For example, we might be interested in how people will vote on a number of questions in an upcoming election. A reasonable model for this situation might be to classify each voter as a liberal, a conservative or a moderate and then model the event that a voter says "Yes" to any particular question as a Bernoulli random variable with the probability dependent on which political cluster they belong to. By looking at how votes were cast in previous years on similar pieces of legislation one could fit a predictive model using a simple clustering algorithm such as k-means. That algorithm, however, requires knowing in advance the number of clusters that generated the data. In many situations, it is not possible to determine this ahead of time, and even when we can reasonably assume a number of clusters we would still like to be able to check this assumption. For example, in the voting example above the division into liberal, conservative and moderate might not be finely tuned enough; attributes such as religion, class or race could also be critical for modelling voter behaviour, resulting in more clusters in the model.

Example 2

As another example, we might be interested in modelling the velocities of galaxies using a simple model assuming that the velocities are clustered, for instance by assuming each velocity is distributed according to the normal distribution [math]\displaystyle{ v_i\sim N(\mu_k,\sigma^2) }[/math], where the [math]\displaystyle{ i }[/math]th observation belongs to the [math]\displaystyle{ k }[/math]th cluster of galaxies with common expected velocity. In this case it is far from obvious how to determine a priori how many clusters (of common velocities) there should be and any model for this would be highly suspect and should be checked against the data. By using a Dirichlet process prior for the distribution of cluster means we circumvent the need to explicitly specify ahead of time how many clusters there are, although the concentration parameter still controls it implicitly.

We consider this example in more detail. A first naive model is to presuppose that there are [math]\displaystyle{ K }[/math] clusters of normally distributed velocities with common known fixed variance [math]\displaystyle{ \sigma^2 }[/math]. Denoting the event that the [math]\displaystyle{ i }[/math]th observation is in the [math]\displaystyle{ k }[/math]th cluster as [math]\displaystyle{ z_i=k }[/math] we can write this model as:

- [math]\displaystyle{ \begin{align} (v_i \mid z_i=k, \mu_k) & \sim N(\mu_k,\sigma^2) \\ \operatorname{P} (z_i=k) & = \pi_k \\ (\boldsymbol{\pi}\mid \alpha) & \sim \operatorname{Dir}\left(\frac{\alpha}{K} \cdot \mathbf{1}_K\right) \\ \mu_k & \sim H(\lambda) \end{align} }[/math]

That is, we assume that the data belongs to [math]\displaystyle{ K }[/math] distinct clusters with means [math]\displaystyle{ \mu_k }[/math] and that [math]\displaystyle{ \pi_k }[/math] is the (unknown) prior probability of a data point belonging to the [math]\displaystyle{ k }[/math]th cluster. We assume that we have no initial information distinguishing the clusters, which is captured by the symmetric prior [math]\displaystyle{ \operatorname{Dir}\left(\alpha/K\cdot\mathbf{1}_K\right) }[/math]. Here [math]\displaystyle{ \operatorname{Dir} }[/math] denotes the Dirichlet distribution and [math]\displaystyle{ \mathbf{1}_K }[/math] denotes a vector of length [math]\displaystyle{ K }[/math] where each element is 1. We further assign independent and identical prior distributions [math]\displaystyle{ H(\lambda) }[/math] to each of the cluster means, where [math]\displaystyle{ H }[/math] may be any parametric distribution with parameters denoted as [math]\displaystyle{ \lambda }[/math]. The hyper-parameters [math]\displaystyle{ \alpha }[/math] and [math]\displaystyle{ \lambda }[/math] are taken to be known fixed constants, chosen to reflect our prior beliefs about the system. To understand the connection to Dirichlet process priors we rewrite this model in an equivalent but more suggestive form:

- [math]\displaystyle{ \begin{align} (v_i \mid \tilde{\mu}_i) & \sim N(\tilde{\mu}_i,\sigma^2) \\ \tilde{\mu}_i & \sim G=\sum_{k=1}^K \pi_k \delta_{\mu_k} (\tilde{\mu}_i) \\ (\boldsymbol{\pi}\mid \alpha) & \sim \operatorname{Dir}\left(\frac{\alpha}{K} \cdot \mathbf{1}_K \right) \\ \mu_k & \sim H(\lambda) \end{align} }[/math]

Instead of imagining that each data point is first assigned a cluster and then drawn from the distribution associated to that cluster we now think of each observation being associated with parameter [math]\displaystyle{ \tilde{\mu}_i }[/math] drawn from some discrete distribution [math]\displaystyle{ G }[/math] with support on the [math]\displaystyle{ K }[/math] means. That is, we are now treating the [math]\displaystyle{ \tilde{\mu}_i }[/math] as being drawn from the random distribution [math]\displaystyle{ G }[/math] and our prior information is incorporated into the model by the distribution over distributions [math]\displaystyle{ G }[/math].


We would now like to extend this model to work without pre-specifying a fixed number of clusters [math]\displaystyle{ K }[/math]. Mathematically, this means we would like to select a random prior distribution [math]\displaystyle{ G(\tilde{\mu}_i)=\sum_{k=1}^\infty\pi_k \delta_{\mu_k}(\tilde{\mu}_i) }[/math] where the values of the cluster means [math]\displaystyle{ \mu_k }[/math] are again independently distributed according to [math]\displaystyle{ H\left(\lambda\right) }[/math] and the distribution over [math]\displaystyle{ \pi_k }[/math] is symmetric over the infinite set of clusters. This is exactly what is accomplished by the model:

- [math]\displaystyle{ \begin{align} (v_i \mid \tilde{\mu}_i) & \sim N(\tilde{\mu}_i,\sigma^2)\\ \tilde{\mu}_i & \sim G \\ G & \sim \operatorname{DP}(H(\lambda),\alpha) \end{align} }[/math]

With this in hand we can better understand the computational merits of the Dirichlet process. Suppose that we wanted to draw [math]\displaystyle{ n }[/math] observations from the naive model with exactly [math]\displaystyle{ K }[/math] clusters. A simple algorithm for doing this would be to draw [math]\displaystyle{ K }[/math] values of [math]\displaystyle{ \mu_k }[/math] from [math]\displaystyle{ H(\lambda) }[/math], a distribution [math]\displaystyle{ \pi }[/math] from [math]\displaystyle{ \operatorname{Dir}\left(\alpha/K\cdot\mathbf{1}_K\right) }[/math] and then for each observation independently sample the cluster [math]\displaystyle{ k }[/math] with probability [math]\displaystyle{ \pi_k }[/math] and the value of the observation according to [math]\displaystyle{ N\left(\mu_k,\sigma^2\right) }[/math]. It is easy to see that this algorithm does not work in the case where we allow infinitely many clusters because this would require sampling an infinite-dimensional parameter [math]\displaystyle{ \boldsymbol{\pi} }[/math]. However, it is still possible to sample observations [math]\displaystyle{ v_i }[/math]. One can, for example, use the Chinese restaurant representation described above and calculate the probability of joining each cluster already in use and of creating a new cluster. This avoids having to explicitly specify [math]\displaystyle{ \boldsymbol{\pi} }[/math]. Other solutions are based on a truncation of clusters: a (high) upper bound on the true number of clusters is introduced and cluster numbers higher than this bound are treated as one cluster.
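The two sampling strategies can be compared side by side. In the sketch below the base distribution [math]\displaystyle{ H(\lambda) }[/math] is taken to be a normal distribution with mean `m0` and standard deviation `s0`; this choice and all function names are assumptions made for illustration. The finite model draws [math]\displaystyle{ \boldsymbol{\pi} }[/math] explicitly by normalizing gamma variates, whereas the Dirichlet process version never represents [math]\displaystyle{ \boldsymbol{\pi} }[/math] and assigns clusters with the Chinese restaurant rule instead.

```python
import random

def sample_finite_mixture(n, K, alpha, sigma, m0, s0, rng=None):
    """Draw n observations from the naive model with exactly K clusters."""
    rng = rng or random.Random()
    mus = [rng.gauss(m0, s0) for _ in range(K)]            # mu_k ~ H(lambda)
    # Normalized Gamma(alpha / K, 1) variates give a Dir(alpha / K * 1_K) draw.
    gammas = [rng.gammavariate(alpha / K, 1.0) for _ in range(K)]
    total = sum(gammas)
    pis = [g / total for g in gammas]
    obs = []
    for _ in range(n):
        k = rng.choices(range(K), weights=pis)[0]          # z_i = k with probability pi_k
        obs.append(rng.gauss(mus[k], sigma))               # v_i ~ N(mu_k, sigma^2)
    return obs

def sample_dp_mixture(n, alpha, sigma, m0, s0, rng=None):
    """Draw n observations from the Dirichlet process mixture without sampling pi."""
    rng = rng or random.Random()
    counts, mus, obs = [], [], []
    for _ in range(n):
        # Existing clusters are weighted by size, a new cluster by alpha.
        k = rng.choices(range(len(counts) + 1), weights=counts + [alpha])[0]
        if k == len(counts):                               # create a new cluster
            counts.append(0)
            mus.append(rng.gauss(m0, s0))
        counts[k] += 1
        obs.append(rng.gauss(mus[k], sigma))
    return obs, counts

finite_obs = sample_finite_mixture(100, K=5, alpha=1.0, sigma=1.0, m0=0.0, s0=10.0)
dp_obs, cluster_sizes = sample_dp_mixture(100, alpha=1.0, sigma=1.0, m0=0.0, s0=10.0)
```

In the second function the number of clusters is not fixed in advance; it is simply the length of `cluster_sizes` once all observations have been drawn.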

Fitting the model described above based on observed data [math]\displaystyle{ D }[/math] means finding the posterior distribution [math]\displaystyle{ p\left(\boldsymbol{\pi},\boldsymbol{\mu}\mid D\right) }[/math] over cluster probabilities and their associated means. In the infinite dimensional case it is obviously impossible to write down the posterior explicitly. It is, however, possible to draw samples from this posterior using a modified Gibbs sampler.[6] This is the critical fact that makes the Dirichlet process prior useful for inference.
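To make the idea of sampling from the posterior concrete, the sketch below implements a collapsed Gibbs sampler for the galaxy-velocity model, in which the cluster means are integrated out analytically. This is a standard textbook technique substituted here for illustration and is not necessarily the sampler of the cited reference. It assumes a normal base distribution with mean `m0` and variance `s02` and a known observation variance `sigma2`, and all names are hypothetical.

```python
import math
import random

def normal_pdf(x, mean, var):
    return math.exp(-0.5 * (x - mean) ** 2 / var) / math.sqrt(2.0 * math.pi * var)

def predictive(x, n_k, sum_k, sigma2, m0, s02):
    """Density of x for a cluster holding n_k points with sum sum_k (mean integrated out)."""
    precision = 1.0 / s02 + n_k / sigma2
    post_mean = (m0 / s02 + sum_k / sigma2) / precision
    return normal_pdf(x, post_mean, 1.0 / precision + sigma2)

def gibbs_dp_mixture(data, alpha, sigma2, m0, s02, sweeps=20, rng=None):
    """Return cluster labels z_i after a number of collapsed Gibbs sweeps."""
    rng = rng or random.Random()
    z = [0] * len(data)                      # start with everything in one cluster
    counts, sums = {0: len(data)}, {0: sum(data)}
    next_label = 1
    for _ in range(sweeps):
        for i, x in enumerate(data):
            # Remove observation i from its current cluster.
            k = z[i]
            counts[k] -= 1
            sums[k] -= x
            if counts[k] == 0:
                del counts[k], sums[k]
            # Existing cluster: size times predictive density; new cluster: alpha times prior predictive.
            labels = list(counts)
            weights = [counts[c] * predictive(x, counts[c], sums[c], sigma2, m0, s02)
                       for c in labels]
            labels.append(next_label)
            weights.append(alpha * predictive(x, 0, 0.0, sigma2, m0, s02))
            k = rng.choices(labels, weights=weights)[0]
            if k == next_label:
                counts[k], sums[k] = 0, 0.0
                next_label += 1
            counts[k] += 1
            sums[k] += x
            z[i] = k
    return z

# Two well-separated groups of synthetic velocities (made-up numbers for illustration).
data = [-10.0 + 0.1 * j for j in range(10)] + [10.0 + 0.1 * j for j in range(10)]
labels = gibbs_dp_mixture(data, alpha=1.0, sigma2=1.0, m0=0.0, s02=100.0,
                          sweeps=10, rng=random.Random(0))
```

The number of distinct values in `labels` is a posterior sample of the number of clusters, so it is inferred from the data rather than fixed beforehand.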

Applications of the Dirichlet process

Dirichlet processes are frequently used in Bayesian nonparametric statistics. "Nonparametric" here does not mean a parameter-less model, but rather a model in which representations grow as more data are observed. Bayesian nonparametric models have gained considerable popularity in the field of machine learning because of the above-mentioned flexibility, especially in unsupervised learning. In a Bayesian nonparametric model, the prior and posterior distributions are not parametric distributions, but stochastic processes.[7] The fact that the Dirichlet distribution is a probability distribution on the simplex of sets of non-negative numbers that sum to one makes it a good candidate to model distributions over distributions or distributions over functions. Additionally, the nonparametric nature of this model makes it an ideal candidate for clustering problems where the distinct number of clusters is unknown beforehand. The Dirichlet process has also been used for developing mixture-of-experts models in the context of supervised learning algorithms (regression or classification settings), for instance mixtures of Gaussian process experts, where the number of required experts must be inferred from the data.[8][9]

As draws from a Dirichlet process are discrete, an important use is as a prior probability in infinite mixture models. In this case, [math]\displaystyle{ S }[/math] is the parametric set of component distributions. The generative process is therefore that a sample is drawn from a Dirichlet process, and for each data point, in turn, a value is drawn from this sample distribution and used as the component distribution for that data point. The fact that there is no limit to the number of distinct components which may be generated makes this kind of model appropriate for the case when the number of mixture components is not well-defined in advance. Examples include the infinite mixture of Gaussians model[10] and associated mixture regression models.[11]

The infinite nature of these models also lends them to natural language processing applications, where it is often desirable to treat the vocabulary as an infinite, discrete set.

The Dirichlet process can also be used for nonparametric hypothesis testing, i.e. to develop Bayesian nonparametric versions of the classical nonparametric hypothesis tests, e.g. sign test, Wilcoxon rank-sum test, Wilcoxon signed-rank test, etc. For instance, Bayesian nonparametric versions of the Wilcoxon rank-sum test and the Wilcoxon signed-rank test have been developed by using the imprecise Dirichlet process, a prior ignorance Dirichlet process.[citation needed]

Related distributions

- The Pitman–Yor process is a generalization of the Dirichlet process to accommodate power-law tails.

- The hierarchical Dirichlet process extends the ordinary Dirichlet process for modelling grouped data.

References

- Frigyik, Bela A.; Kapila, Amol; Gupta, Maya R. "Introduction to the Dirichlet Distribution and Related Processes". http://mayagupta.org/publications/FrigyikKapilaGuptaIntroToDirichlet.pdf.

- Ferguson, Thomas (1973). "Bayesian analysis of some nonparametric problems". Annals of Statistics 1 (2): 209–230. doi:10.1214/aos/1176342360.

- "Dirichlet Process and Dirichlet Distribution – Polya Restaurant Scheme and Chinese Restaurant Process". http://topicmodels.west.uni-koblenz.de/ckling/tmt/crp.html?parameters=0.5&dp=1#.

- For the proof, see Paisley, John (August 2010). "A simple proof of the stick-breaking construction of the Dirichlet Process". http://www.columbia.edu/~jwp2128/Teaching/E6892/papers/SimpleProof.pdf.

- Aad van der Vaart, Subhashis Ghosal (2017). Fundamentals of Bayesian Nonparametric Inference. Cambridge University Press. ISBN 978-0-521-87826-5.

- Sudderth, Erik (2006). Graphical Models for Visual Object Recognition and Tracking (PDF) (Ph.D.). MIT Press.

- Nils Lid Hjort; Chris Holmes, Peter Müller; Stephen G. Walker (2010). Bayesian Nonparametrics. Cambridge University Press. ISBN 978-0-521-51346-3.

- Sotirios P. Chatzis, "A Latent Variable Gaussian Process Model with Pitman-Yor Process Priors for Multiclass Classification," Neurocomputing, vol. 120, pp. 482–489, Nov. 2013. doi:10.1016/j.neucom.2013.04.029

- Sotirios P. Chatzis, Yiannis Demiris, "Nonparametric mixtures of Gaussian processes with power-law behaviour," IEEE Transactions on Neural Networks and Learning Systems, vol. 23, no. 12, pp. 1862–1871, Dec. 2012. doi:10.1109/TNNLS.2012.2217986

- Rasmussen, Carl (2000). "The Infinite Gaussian Mixture Model". Advances in Neural Information Processing Systems 12: 554–560. http://www.gatsby.ucl.ac.uk/~edward/pub/inf.mix.nips.99.pdf.

- Sotirios P. Chatzis, Dimitrios Korkinof, and Yiannis Demiris, "A nonparametric Bayesian approach toward robot learning by demonstration," Robotics and Autonomous Systems, vol. 60, no. 6, pp. 789–802, June 2012. doi:10.1016/j.robot.2012.02.005

External links

- Introduction to the Dirichlet Distribution and Related Processes by Frigyik, Kapila and Gupta

- Yee Whye Teh's overview of Dirichlet processes

- Webpage for the NIPS 2003 workshop on non-parametric Bayesian methods

- Michael Jordan's NIPS 2005 tutorial: Nonparametric Bayesian Methods: Dirichlet Processes, Chinese Restaurant Processes and All That

- Peter Green's summary of construction of Dirichlet Processes

- Peter Green's paper on probabilistic models of Dirichlet Processes with implications for statistical modelling and analysis

- Zoubin Ghahramani's UAI 2005 tutorial on Nonparametric Bayesian methods

- GIMM software for performing cluster analysis using Infinite Mixture Models

- A Toy Example of Clustering using Dirichlet Process. by Zhiyuan Weng
