Autoregressive model

In statistics, econometrics, and signal processing, an autoregressive (AR) model is a representation of a type of random process; as such, it can be used to describe certain time-varying processes in nature, economics, behavior, etc. The autoregressive model specifies that the output variable depends linearly on its own previous values and on a stochastic term (an imperfectly predictable term); thus the model takes the form of a stochastic difference equation (or recurrence relation), which should not be confused with a differential equation.

Together with the moving-average (MA) model, it is a special case and key component of the more general autoregressive–moving-average (ARMA) and autoregressive integrated moving average (ARIMA) models of time series, which have a more complicated stochastic structure. It is also a special case of the vector autoregressive model (VAR), which consists of a system of more than one interlocking stochastic difference equation in more than one evolving random variable. Another important extension is the time-varying autoregressive (TVAR) model, in which the autoregressive coefficients are allowed to change over time to model evolving or non-stationary processes. TVAR models are widely applied where the underlying dynamics of the system are not constant, such as in sensor time series modelling[1][2], finance[3], climate science[4], economics[5], signal processing[6], telecommunications[7], radar systems[8], and biological signals[9].

Unlike the moving-average (MA) model, the autoregressive model is not always stationary; non-stationarity can arise either due to the presence of a unit root or due to time-varying model parameters, as in time-varying autoregressive (TVAR) models.

Large language models are called autoregressive, but they are not classical autoregressive models in this sense because they are not linear.

Definition

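The notation AR(p) indicates an autoregressive model of order p. The AR(p) model is defined as

$$X_t = \sum_{i=1}^{p} \varphi_i X_{t-i} + \varepsilon_t$$

where $\varphi_1, \ldots, \varphi_p$ are the parameters of the model, and $\varepsilon_t$ is white noise.[10][11] This can be equivalently written using the backshift operator B as

$$X_t = \sum_{i=1}^{p} \varphi_i B^i X_t + \varepsilon_t$$

so that, moving the summation term to the left side and using polynomial notation, we have

$$\phi(B) X_t = \varepsilon_t, \qquad \phi(B) = 1 - \sum_{i=1}^{p} \varphi_i B^i.$$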

An autoregressive model can thus be viewed as the output of an all-pole infinite impulse response filter whose input is white noise.

Some parameter constraints are necessary for the model to remain weak-sense stationary. For example, processes in the AR(1) model with $|\varphi_1| \geq 1$ are not stationary. More generally, for an AR(p) model to be weak-sense stationary, the roots of the polynomial $\Phi(z) := 1 - \sum_{i=1}^{p} \varphi_i z^{i}$ must lie outside the unit circle, i.e., each (complex) root $z_i$ must satisfy $|z_i| > 1$ (see pages 89, 92 [12]).
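The root condition can be checked numerically. The following is a minimal sketch, assuming NumPy is available and that the model is written as $X_t = \sum_i \varphi_i X_{t-i} + \varepsilon_t$; the function name `is_stationary` is hypothetical and chosen only for illustration.

```python
import numpy as np

def is_stationary(phi):
    """Check weak-sense stationarity of an AR(p) model (illustrative helper).

    phi holds the coefficients [phi_1, ..., phi_p] of
    X_t = phi_1 X_{t-1} + ... + phi_p X_{t-p} + eps_t.
    """
    phi = np.asarray(phi, dtype=float)
    if phi.size == 0:
        return True  # AR(0) is white noise, which is stationary
    # Phi(z) = 1 - phi_1 z - ... - phi_p z^p.
    # np.roots expects coefficients ordered from the highest degree down.
    coeffs = np.concatenate((-phi[::-1], [1.0]))
    roots = np.roots(coeffs)
    # Stationary when every root lies strictly outside the unit circle.
    return bool(np.all(np.abs(roots) > 1.0))

print(is_stationary([0.5]))       # root at z = 2
print(is_stationary([1.0]))       # unit root at z = 1
print(is_stationary([0.5, 0.3]))
```

Roots that lie numerically very close to the unit circle are sensitive to rounding, so in practice a small tolerance may be preferable to the strict comparison used here.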

Intertemporal effect of shocks

In an AR process, a one-time shock affects values of the evolving variable infinitely far into the future. For example, consider the AR(1) model $X_t = \varphi_1 X_{t-1} + \varepsilon_t$. A non-zero value for $\varepsilon_t$ at, say, time t=1 affects $X_1$ by the amount $\varepsilon_1$. Then by the AR equation for $X_2$ in terms of $X_1$, this affects $X_2$ by the amount $\varphi_1 \varepsilon_1$. Then by the AR equation for $X_3$ in terms of $X_2$, this affects $X_3$ by the amount $\varphi_1^2 \varepsilon_1$. Continuing this process shows that the effect of $\varepsilon_1$ never ends, although if the process is stationary then the effect diminishes toward zero in the limit.

Because each shock affects X values infinitely far into the future from when they occur, any given value $X_t$ is affected by shocks occurring infinitely far into the past. This can also be seen by rewriting the autoregression

$$\phi(B) X_t = \varepsilon_t$$

(where the constant term has been suppressed by assuming that the variable has been measured as deviations from its mean) as

$$X_t = \frac{1}{\phi(B)} \varepsilon_t.$$

When the polynomial division on the right side is carried out, the polynomial in the backshift operator applied to $\varepsilon_t$ has infinite order; that is, an infinite number of lagged values of $\varepsilon_t$ appear on the right side of the equation.
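The infinite-order expansion can be made concrete by computing its leading weights. The sketch below assumes the expansion is written $X_t = \sum_{j \ge 0} \psi_j \varepsilon_{t-j}$ with $\psi_0 = 1$ and $\psi_j = \sum_{i=1}^{\min(j,p)} \varphi_i \psi_{j-i}$; the symbol $\psi_j$ and the function name are introduced here for illustration only. The weight $\psi_j$ is also the effect of a unit shock on the value j periods later.

```python
def ma_infinity_weights(phi, n_terms):
    """Return the first n_terms weights psi_0, psi_1, ... of 1 / phi(B).

    phi is the list [phi_1, ..., phi_p]; psi_j is the response of X_{t+j}
    to a unit shock in eps_t (illustrative helper, not a library function).
    """
    psi = [1.0]  # psi_0 = 1: a shock enters the current value one-for-one
    for j in range(1, n_terms):
        # psi_j = sum_{i=1}^{min(j, p)} phi_i * psi_{j-i}
        psi.append(sum(phi[i] * psi[j - 1 - i] for i in range(min(j, len(phi)))))
    return psi

# For AR(1) with phi_1 = 0.5 the weights are 1, 0.5, 0.25, 0.125, ...
print(ma_infinity_weights([0.5], 4))
```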

Characteristic polynomial

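The autocorrelation function of an AR(p) process can be expressed as

$$\rho(\tau) = \sum_{k=1}^{p} a_k y_k^{-|\tau|},$$

where $y_k$ are the roots of the polynomial

$$\phi(B) = 1 - \sum_{k=1}^{p} \varphi_k B^k,$$

with B the backshift operator and $\varphi_k$ the coefficients of the autoregression.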

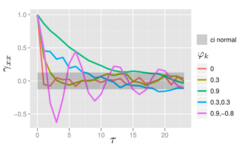

The autocorrelation function of an AR(p) process is a sum of decaying exponentials.

- Each real root contributes a component to the autocorrelation function that decays exponentially.

- Similarly, each pair of complex conjugate roots contributes an exponentially damped oscillation.

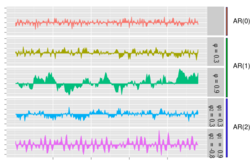

Graphs of AR(p) processes

The simplest AR process is AR(0), which has no dependence between the terms. Only the error/innovation/noise term contributes to the output of the process, so in the figure, AR(0) corresponds to white noise.

For an AR(1) process with a positive $\varphi$, only the previous term in the process and the noise term contribute to the output. If $\varphi$ is close to 0, then the process still looks like white noise, but as $\varphi$ approaches 1, the output gets a larger contribution from the previous term relative to the noise. This results in a "smoothing" or integration of the output, similar to a low-pass filter.

For an AR(2) process, the previous two terms and the noise term contribute to the output. If both $\varphi_1$ and $\varphi_2$ are positive, the output will resemble a low-pass filter, with the high-frequency part of the noise decreased. If $\varphi_1$ is positive while $\varphi_2$ is negative, then the process favors changes in sign between terms of the process, and the output oscillates. This can be likened to edge detection or detection of change in direction.
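Sample paths like those described above can be generated directly from the recursion $X_t = \sum_i \varphi_i X_{t-i} + \varepsilon_t$. The following is a sketch under stated assumptions: Gaussian white noise, a zero starting state, and a burn-in period that is discarded so the start-up transient does not show. The function name and its defaults are hypothetical.

```python
import numpy as np

def simulate_ar(phi, n, sigma=1.0, burn_in=500, seed=0):
    """Simulate n values of an AR(p) process driven by Gaussian white noise.

    phi = [phi_1, ..., phi_p]; an empty phi gives AR(0), i.e. white noise.
    The first burn_in values are dropped (an illustrative choice).
    """
    phi = np.asarray(phi, dtype=float)
    p = phi.size
    rng = np.random.default_rng(seed)
    total = n + burn_in
    eps = rng.normal(0.0, sigma, size=total)
    x = np.zeros(total)
    for t in range(total):
        # Add the contribution of each available lagged value.
        for i in range(min(p, t)):
            x[t] += phi[i] * x[t - 1 - i]
        x[t] += eps[t]
    return x[burn_in:]

white = simulate_ar([], 1000)           # AR(0): no dependence between terms
smooth = simulate_ar([0.9], 1000)       # AR(1), phi near 1: smoothed output
wiggly = simulate_ar([0.9, -0.8], 1000) # AR(2), phi_2 < 0: oscillating output
```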

Example: An AR(1) process

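An AR(1) process is given by

$$X_t = \varphi X_{t-1} + \varepsilon_t,$$

where $\varepsilon_t$ is a white noise process with zero mean and constant variance $\sigma_\varepsilon^2$. (Note: The subscript on $\varphi_1$ has been dropped.) The process is weak-sense stationary if $|\varphi| < 1$, since it is obtained as the output of a stable filter whose input is white noise. (If $\varphi = 1$, then the variance of $X_t$ depends on the time t and diverges to infinity as t goes to infinity, so the process is not weak-sense stationary.) Assuming $|\varphi| < 1$, the mean $\operatorname{E}(X_t)$ is identical for all values of t by definition of weak-sense stationarity. If the mean is denoted by $\mu$, it follows from

$$\operatorname{E}(X_t) = \varphi \operatorname{E}(X_{t-1}) + \operatorname{E}(\varepsilon_t)$$

that

$$\mu = \varphi \mu + 0,$$

and hence

$$\mu = 0.$$

The variance is

$$\operatorname{var}(X_t) = \operatorname{E}(X_t^2) - \mu^2 = \frac{\sigma_\varepsilon^2}{1 - \varphi^2},$$

where $\sigma_\varepsilon$ is the standard deviation of $\varepsilon_t$. This can be shown by noting that

$$\operatorname{var}(X_t) = \varphi^2 \operatorname{var}(X_{t-1}) + \sigma_\varepsilon^2,$$

and then by noticing that the quantity above is a stable fixed point of this relation.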
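The fixed-point argument can be checked by iterating the variance recursion. This is a minimal sketch assuming the relation $\operatorname{var}(X_t) = \varphi^2 \operatorname{var}(X_{t-1}) + \sigma_\varepsilon^2$ with $|\varphi| < 1$; both function names are hypothetical.

```python
def ar1_variance_iteration(phi, sigma_eps, n_steps, v0=0.0):
    """Iterate var_t = phi^2 * var_{t-1} + sigma_eps^2 starting from v0."""
    v = v0
    for _ in range(n_steps):
        v = phi**2 * v + sigma_eps**2
    return v

def ar1_stationary_variance(phi, sigma_eps):
    """Closed-form fixed point sigma_eps^2 / (1 - phi^2), valid for |phi| < 1."""
    if abs(phi) >= 1:
        raise ValueError("the stationary variance exists only for |phi| < 1")
    return sigma_eps**2 / (1 - phi**2)

# Whatever the starting variance, the iteration settles at the fixed point.
print(ar1_variance_iteration(0.8, 1.0, 200, v0=0.0))
print(ar1_variance_iteration(0.8, 1.0, 200, v0=10.0))
print(ar1_stationary_variance(0.8, 1.0))
```

Because each step multiplies the distance to the fixed point by $\varphi^2 < 1$, convergence is geometric, which is what makes the fixed point stable.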

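The autocovariance is given by

$$B_n = \operatorname{E}(X_{t+n} X_t) - \mu^2 = \frac{\sigma_\varepsilon^2}{1 - \varphi^2} \varphi^{|n|}.$$

It can be seen that the autocovariance function decays with a decay time (also called time constant) of $\tau = -1/\ln(\varphi)$.[13]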

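The spectral density function is the Fourier transform of the autocovariance function. In discrete terms this will be the discrete-time Fourier transform:

$$\Phi(\omega) = \frac{1}{\sqrt{2\pi}} \sum_{n=-\infty}^{\infty} B_n e^{-i\omega n} = \frac{1}{\sqrt{2\pi}} \left( \frac{\sigma_\varepsilon^2}{1 + \varphi^2 - 2\varphi\cos(\omega)} \right).$$

This expression is periodic due to the discrete nature of the $X_j$, which is manifested as the cosine term in the denominator. If we assume that the sampling time ($\Delta t = 1$) is much smaller than the decay time ($\tau$), then we can use a continuum approximation to $B_n$:

$$B(t) \approx \frac{\sigma_\varepsilon^2}{1 - \varphi^2} \varphi^{|t|},$$

which yields a Lorentzian profile for the spectral density:

$$\Phi(\omega) \approx \frac{1}{\sqrt{2\pi}} \, \frac{\sigma_\varepsilon^2}{1 - \varphi^2} \, \frac{2\gamma}{\gamma^2 + \omega^2},$$

where $\gamma = 1/\tau$ is the angular frequency associated with the decay time $\tau$.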

An alternative expression for $X_t$ can be derived by first substituting $\varphi X_{t-2} + \varepsilon_{t-1}$ for $X_{t-1}$ in the defining equation. Continuing this process N times yields

$$X_t = \varphi^N X_{t-N} + \sum_{k=0}^{N-1} \varphi^k \varepsilon_{t-k}.$$

For N approaching infinity, $\varphi^N$ will approach zero and:

$$X_t = \sum_{k=0}^{\infty} \varphi^k \varepsilon_{t-k}.$$

It is seen that $X_t$ is white noise convolved with the $\varphi^k$ kernel plus the constant mean. If the white noise $\varepsilon_t$ is a Gaussian process then $X_t$ is also a Gaussian process. In other cases, the central limit theorem indicates that $X_t$ will be approximately normally distributed when $\varphi$ is close to one.

For $\varepsilon_t = 0$, the process $X_t = \varphi X_{t-1}$ will be a geometric progression (exponential growth or decay). In this case, the solution can be found analytically: $X_t = a \varphi^t$, where $a$ is an unknown constant (initial condition).

Explicit mean/difference form of AR(1) process

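The AR(1) model is the discrete-time analogue of the continuous Ornstein–Uhlenbeck process. It is therefore sometimes useful to understand the properties of the AR(1) model cast in an equivalent form. In this form, the AR(1) model, with process parameter $\theta \in \mathbb{R}$, is given by

- $X_{t+1} = X_t + (1 - \theta)(\mu - X_t) + \varepsilon_{t+1}$, where $|\theta| < 1$, $\mu := \operatorname{E}(X)$ is the model mean, and $\{\varepsilon_t\}$ is a white-noise process with zero mean and constant variance $\sigma^2$.

By rewriting this as $X_{t+1} = \theta X_t + (1 - \theta)\mu + \varepsilon_{t+1}$ and then deriving (by induction) $X_{t+n} = \theta^n X_t + (1 - \theta^n)\mu + \sum_{i=1}^{n} \theta^{n-i} \varepsilon_{t+i}$, one can show that

- $\operatorname{E}(X_{t+n} \mid X_t) = \mu\left[1 - \theta^n\right] + X_t \theta^n$ and

- $\operatorname{Var}(X_{t+n} \mid X_t) = \sigma^2 \dfrac{1 - \theta^{2n}}{1 - \theta^2}$.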
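The conditional moments can be evaluated directly. The sketch below assumes the mean-reverting form $X_{t+1} = \theta X_t + (1-\theta)\mu + \varepsilon_{t+1}$ with noise variance $\sigma^2$, so that $\operatorname{E}(X_{t+n} \mid X_t) = \mu(1-\theta^n) + X_t\theta^n$ and $\operatorname{Var}(X_{t+n} \mid X_t) = \sigma^2 (1-\theta^{2n})/(1-\theta^2)$; the function name is hypothetical.

```python
def ar1_conditional_moments(x_t, n, theta, mu, sigma):
    """Mean and variance of X_{t+n} given X_t for a mean-reverting AR(1).

    Assumes |theta| < 1 and white noise with standard deviation sigma.
    """
    if abs(theta) >= 1:
        raise ValueError("this form assumes |theta| < 1")
    mean = mu * (1 - theta**n) + x_t * theta**n
    var = sigma**2 * (1 - theta**(2 * n)) / (1 - theta**2)
    return mean, var

# One step ahead the variance is just the noise variance; far ahead the
# moments approach the unconditional mean mu and variance sigma^2/(1-theta^2).
print(ar1_conditional_moments(2.0, 1, 0.5, 0.0, 1.0))
print(ar1_conditional_moments(2.0, 50, 0.5, 0.0, 1.0))
```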

Choosing the maximum lag

The partial autocorrelation of an AR(p) process equals zero at lags larger than p, so the appropriate maximum lag p is the one after which the partial autocorrelations are all zero.
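The cutoff property can be illustrated by computing partial autocorrelations from a given autocorrelation sequence. The sketch below uses the Durbin–Levinson recursion, a standard technique that is not described in the text above and is named here as an assumption; the function name is hypothetical, and NumPy is assumed to be available.

```python
import numpy as np

def pacf_from_acf(rho):
    """Partial autocorrelations at lags 1..K from rho = [1, rho_1, ..., rho_K].

    Uses the Durbin-Levinson recursion (illustrative implementation).
    """
    rho = np.asarray(rho, dtype=float)
    max_lag = rho.size - 1
    pacf = np.zeros(max_lag)
    phi_prev = np.zeros(0)  # coefficients phi_{k-1,1..k-1} of the previous order
    for k in range(1, max_lag + 1):
        if k == 1:
            phi_kk = rho[1]
        else:
            # Numerator: rho_k - sum_j phi_{k-1,j} rho_{k-j}
            num = rho[k] - np.dot(phi_prev, rho[k - 1:0:-1])
            # Denominator: 1 - sum_j phi_{k-1,j} rho_j
            den = 1.0 - np.dot(phi_prev, rho[1:k])
            phi_kk = num / den
        phi_new = np.empty(k)
        # phi_{k,j} = phi_{k-1,j} - phi_kk * phi_{k-1,k-j}
        phi_new[:k - 1] = phi_prev - phi_kk * phi_prev[::-1]
        phi_new[k - 1] = phi_kk
        phi_prev = phi_new
        pacf[k - 1] = phi_kk
    return pacf

# AR(1) with coefficient 0.6 has rho_k = 0.6**k; its PACF vanishes after lag 1.
print(pacf_from_acf([0.6**k for k in range(5)]))
```

With sample autocorrelations the partial autocorrelations beyond lag p are only approximately zero, so in practice they are compared against a sampling-error band rather than tested for exact equality.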

Calculation of the AR parameters

There are many ways to estimate the coefficients, such as the ordinary least squares procedure or method of moments (through Yule–Walker equations).

The AR(p) model is given by the equation

$$X_t = \sum_{i=1}^{p} \varphi_i X_{t-i} + \varepsilon_t.$$

It is based on parameters $\varphi_i$ where i = 1, ..., p. There is a direct correspondence between these parameters and the covariance function of the process, and this correspondence can be inverted to determine the parameters from the autocorrelation function (which is itself obtained from the covariances). This is done using the Yule–Walker equations.

Yule–Walker equations

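The Yule–Walker equations, named for Udny Yule and Gilbert Walker,[14][15] are the following set of equations.[16]

$$\gamma_m = \sum_{k=1}^{p} \varphi_k \gamma_{m-k} + \sigma_\varepsilon^2 \delta_{m,0},$$

where m = 0, …, p, yielding p + 1 equations. Here $\gamma_m$ is the autocovariance function of $X_t$, $\sigma_\varepsilon$ is the standard deviation of the input noise process, and $\delta_{m,0}$ is the Kronecker delta function.

Because the last part of an individual equation is non-zero only if m = 0, the set of equations can be solved by representing the equations for m > 0 in matrix form, thus getting the equation

$$\begin{bmatrix} \gamma_1 \\ \gamma_2 \\ \gamma_3 \\ \vdots \\ \gamma_p \end{bmatrix} = \begin{bmatrix} \gamma_0 & \gamma_{-1} & \gamma_{-2} & \cdots \\ \gamma_1 & \gamma_0 & \gamma_{-1} & \cdots \\ \gamma_2 & \gamma_1 & \gamma_0 & \cdots \\ \vdots & \vdots & \vdots & \ddots \\ \gamma_{p-1} & \gamma_{p-2} & \gamma_{p-3} & \cdots \end{bmatrix} \begin{bmatrix} \varphi_1 \\ \varphi_2 \\ \varphi_3 \\ \vdots \\ \varphi_p \end{bmatrix}$$

which can be solved for all $\{\varphi_m;\ m = 1, 2, \dots, p\}$. The remaining equation for m = 0 is

$$\gamma_0 = \sum_{k=1}^{p} \varphi_k \gamma_{-k} + \sigma_\varepsilon^2,$$

which, once $\{\varphi_m;\ m = 1, 2, \dots, p\}$ are known, can be solved for $\sigma_\varepsilon^2$.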
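The two-stage solution described above (coefficients first, then the noise variance) can be sketched as follows. The code assumes the autocovariances $\gamma_0, \ldots, \gamma_p$ are given and uses the symmetry $\gamma_{-k} = \gamma_k$; the function name is hypothetical and NumPy is assumed to be available.

```python
import numpy as np

def yule_walker_solve(gamma):
    """Solve the Yule-Walker equations for an AR(p) model, p >= 1.

    gamma = [gamma_0, ..., gamma_p] are autocovariances.
    Returns (phi, sigma2) with phi = [phi_1, ..., phi_p] and sigma2 the
    noise variance (illustrative helper).
    """
    gamma = np.asarray(gamma, dtype=float)
    p = gamma.size - 1
    # Toeplitz matrix with entry (i, j) equal to gamma_{|i-j|}.
    lags = np.abs(np.subtract.outer(np.arange(p), np.arange(p)))
    R = gamma[lags]
    # Equations for m = 1..p give the coefficients.
    phi = np.linalg.solve(R, gamma[1:])
    # The m = 0 equation gives the noise variance.
    sigma2 = gamma[0] - np.dot(phi, gamma[1:])
    return phi, sigma2

# Autocovariances of an AR(2) process with phi = (0.5, 0.3) and unit noise
# variance, built from rho_1 = phi_1 / (1 - phi_2) and rho_2 = phi_1 rho_1 + phi_2.
rho1 = 0.5 / (1 - 0.3)
rho2 = 0.5 * rho1 + 0.3
gamma0 = 1.0 / (1 - 0.5 * rho1 - 0.3 * rho2)
print(yule_walker_solve([gamma0, gamma0 * rho1, gamma0 * rho2]))
```

For large p the Toeplitz structure of the matrix allows faster solution by the Levinson recursion; a general linear solver is used here only to keep the sketch short.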

An alternative formulation is in terms of the autocorrelation function. The AR parameters are determined by the first p+1 elements $\rho(\tau)$ of the autocorrelation function. The full autocorrelation function can then be derived by recursively calculating[17]

$$\rho(\tau) = \sum_{k=1}^{p} \varphi_k \rho(k - \tau).$$
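The recursion for the autocorrelation function can be sketched in a few lines. The code assumes the first p autocorrelations $\rho(0), \ldots, \rho(p-1)$ are already known and applies $\rho(\tau) = \sum_{k=1}^{p} \varphi_k \rho(\tau - k)$ for $\tau \ge p$, which is the same recursion written using the symmetry $\rho(-\tau) = \rho(\tau)$; the function name is hypothetical.

```python
def extend_acf(phi, rho_init, max_lag):
    """Extend an autocorrelation sequence up to max_lag by recursion.

    phi = [phi_1, ..., phi_p]; rho_init = [rho(0), ..., rho(p-1)] at least.
    Returns [rho(0), ..., rho(max_lag)] (illustrative helper).
    """
    p = len(phi)
    if len(rho_init) < p:
        raise ValueError("need at least p starting autocorrelations")
    rho = list(rho_init)
    for tau in range(len(rho), max_lag + 1):
        # rho(tau) = sum_{k=1}^{p} phi_k * rho(tau - k)
        rho.append(sum(phi[k] * rho[tau - 1 - k] for k in range(p)))
    return rho

# AR(1): rho(tau) = phi^tau
print(extend_acf([0.5], [1.0], 3))
# AR(2): start from rho(0) = 1 and rho(1) = phi_1 / (1 - phi_2)
print(extend_acf([0.5, 0.3], [1.0, 0.5 / 0.7], 5))
```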

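Examples for some low-order AR(p) processes

- p=1

  - $\gamma_1 = \varphi_1 \gamma_0$

  - Hence $\rho_1 = \gamma_1 / \gamma_0 = \varphi_1$

- p=2

  - The Yule–Walker equations for an AR(2) process are
    $$\gamma_1 = \varphi_1 \gamma_0 + \varphi_2 \gamma_{-1}$$
    $$\gamma_2 = \varphi_1 \gamma_1 + \varphi_2 \gamma_0$$

  - Remember that $\gamma_{-k} = \gamma_k$

  - Using the first equation yields $\rho_1 = \gamma_1 / \gamma_0 = \dfrac{\varphi_1}{1 - \varphi_2}$

  - Using the recursion formula yields $\rho_2 = \gamma_2 / \gamma_0 = \dfrac{\varphi_1^2 - \varphi_2^2 + \varphi_2}{1 - \varphi_2}$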

Estimation of AR parameters

The above equations (the Yule–Walker equations) provide several routes to estimating the parameters of an AR(p) model, by replacing the theoretical covariances with estimated values.[18] Some of these variants can be described as follows:

- Estimation of autocovariances or autocorrelations. Here each of these terms is estimated separately, using conventional estimates. There are different ways of doing this and the choice between these affects the properties of the estimation scheme. For example, negative estimates of the variance can be produced by some choices.

- Formulation as a least squares regression problem in which an ordinary least squares prediction problem is constructed, basing prediction of values of Xt on the p previous values of the same series. This can be thought of as a forward-prediction scheme. The normal equations for this problem can be seen to correspond to an approximation of the matrix form of the Yule–Walker equations in which each appearance of an autocovariance of the same lag is replaced by a slightly different estimate.

- Formulation as an extended form of ordinary least squares prediction problem. Here two sets of prediction equations are combined into a single estimation scheme and a single set of normal equations. One set is the set of forward-prediction equations and the other is a corresponding set of backward prediction equations, relating to the backward representation of the AR model:

  $$X_t = \sum_{i=1}^{p} \varphi_i X_{t+i} + \varepsilon^*_t.$$

  Here predicted values of $X_t$ would be based on the p future values of the same series. This way of estimating the AR parameters is due to John Parker Burg,[19] and is called the Burg method.[20] Burg and later authors called these particular estimates "maximum entropy estimates",[21] but the reasoning behind this applies to the use of any set of estimated AR parameters. Compared to the estimation scheme using only the forward prediction equations, different estimates of the autocovariances are produced, and the estimates have different stability properties. Burg estimates are particularly associated with maximum entropy spectral estimation.[22]

Other possible approaches to estimation include maximum likelihood estimation. Two distinct variants of maximum likelihood are available: in one (broadly equivalent to the forward prediction least squares scheme) the likelihood function considered is that corresponding to the conditional distribution of later values in the series given the initial p values in the series; in the second, the likelihood function considered is that corresponding to the unconditional joint distribution of all the values in the observed series. Substantial differences in the results of these approaches can occur if the observed series is short, or if the process is close to non-stationarity.
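The forward-prediction least squares scheme mentioned above can be sketched as an ordinary regression of each value on its p predecessors. This is an illustration under stated assumptions (zero-mean series, no intercept, NumPy available), not the Burg method or a maximum likelihood estimator; the function name is hypothetical.

```python
import numpy as np

def fit_ar_ols(x, p):
    """Estimate AR(p) coefficients by forward-prediction least squares.

    Regresses x[t] on (x[t-1], ..., x[t-p]) for t = p..n-1, with no
    intercept (the series is assumed to have zero mean).
    Returns (phi_hat, sigma2_hat).
    """
    x = np.asarray(x, dtype=float)
    n = x.size
    # Column i-1 holds the series lagged by i, aligned with y = x[p:].
    X = np.column_stack([x[p - i:n - i] for i in range(1, p + 1)])
    y = x[p:]
    phi_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ phi_hat
    sigma2_hat = float(np.mean(resid**2))  # mean squared residual
    return phi_hat, sigma2_hat

# Simulated AR(2) data with phi = (0.5, 0.3) and unit noise variance.
rng = np.random.default_rng(0)
eps = rng.normal(size=20000)
x = np.zeros(20000)
for t in range(2, 20000):
    x[t] = 0.5 * x[t - 1] + 0.3 * x[t - 2] + eps[t]
print(fit_ar_ols(x, 2))
```

This corresponds to conditioning on the first p observations, which is why it is broadly comparable to the conditional likelihood variant; on short series the estimates can differ noticeably from Yule–Walker or Burg estimates.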

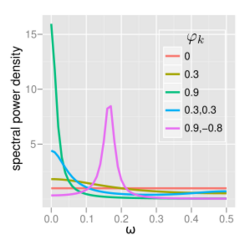

Spectrum

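The power spectral density (PSD) of an AR(p) process with noise variance $\operatorname{Var}(Z_t) = \sigma_Z^2$ is[17]

$$S(f) = \frac{\sigma_Z^2}{\left| 1 - \sum_{k=1}^{p} \varphi_k e^{-i 2\pi f k} \right|^2}.$$

AR(0)

For white noise (AR(0))

$$S(f) = \sigma_Z^2.$$

AR(1)

For AR(1)

$$S(f) = \frac{\sigma_Z^2}{\left| 1 - \varphi_1 e^{-2\pi i f} \right|^2} = \frac{\sigma_Z^2}{1 + \varphi_1^2 - 2\varphi_1 \cos 2\pi f}.$$

- If $\varphi_1 > 0$ there is a single spectral peak at $f = 0$, often referred to as red noise. As $\varphi_1$ becomes nearer 1, there is stronger power at low frequencies, i.e. larger time lags. The process then acts as a low-pass filter; by analogy with full-spectrum light, everything except the red (low-frequency) end is filtered out.

- If $\varphi_1 < 0$ there is a minimum at $f = 0$, often referred to as blue noise. The process similarly acts as a high-pass filter; in the same analogy, everything except the blue (high-frequency) end is filtered out.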
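The spectrum of an AR(p) process can be evaluated numerically from its coefficients. The sketch below assumes the form $S(f) = \sigma_Z^2 / |1 - \sum_k \varphi_k e^{-i 2\pi f k}|^2$ with frequency f in cycles per sample; the function name is hypothetical and NumPy is assumed to be available.

```python
import numpy as np

def ar_psd(phi, sigma2, freqs):
    """Power spectral density of an AR(p) process at the given frequencies.

    phi = [phi_1, ..., phi_p], sigma2 = noise variance,
    freqs = frequencies in cycles per sample (illustrative helper).
    """
    phi = np.asarray(phi, dtype=float)
    freqs = np.asarray(freqs, dtype=float)
    k = np.arange(1, phi.size + 1)
    # Denominator polynomial 1 - sum_k phi_k exp(-i 2 pi f k), one value per f.
    denom = 1.0 - np.exp(-2j * np.pi * np.outer(freqs, k)) @ phi
    return sigma2 / np.abs(denom) ** 2

f = np.linspace(0.0, 0.5, 6)
print(ar_psd([], 1.0, f))      # AR(0): flat spectrum
print(ar_psd([0.5], 1.0, f))   # AR(1), phi_1 > 0: power concentrated at f = 0
print(ar_psd([-0.5], 1.0, f))  # AR(1), phi_1 < 0: minimum at f = 0
```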

AR(2)

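The behavior of an AR(2) process is determined entirely by the roots of its characteristic equation, which is expressed in terms of the lag operator as:

$$1 - \varphi_1 B - \varphi_2 B^2 = 0,$$

or equivalently by the poles of its transfer function, which is defined in the Z domain by:

$$H_z = \left(1 - \varphi_1 z^{-1} - \varphi_2 z^{-2}\right)^{-1}.$$

It follows that the poles are values of z satisfying:

- $1 - \varphi_1 z^{-1} - \varphi_2 z^{-2} = 0$,

which yields:

- $z_1, z_2 = \dfrac{1}{2}\left(\varphi_1 \pm \sqrt{\varphi_1^2 + 4\varphi_2}\right)$.

$z_1$ and $z_2$ are the reciprocals of the characteristic roots, as well as the eigenvalues of the temporal update matrix:

$$\begin{bmatrix} \varphi_1 & \varphi_2 \\ 1 & 0 \end{bmatrix}$$

AR(2) processes can be split into three groups depending on the characteristics of their roots/poles:

- When $\varphi_1^2 + 4\varphi_2 < 0$, the process has a pair of complex-conjugate poles, creating a mid-frequency peak at approximately:

$$f^* = \frac{1}{2\pi} \cos^{-1}\left( \frac{\varphi_1}{2\sqrt{-\varphi_2}} \right),$$

with bandwidth about the peak inversely proportional to the moduli of the poles:

$$|z_1| = |z_2| = \sqrt{-\varphi_2}.$$

The terms involving square roots are all real in the case of complex poles since they exist only when $\varphi_2 < 0$.

Otherwise the process has real roots, and:

- When $\varphi_1 > 0$ it acts as a low-pass filter on the white noise with a spectral peak at $f = 0$

- When $\varphi_1 < 0$ it acts as a high-pass filter on the white noise with a spectral peak at $f = 1/2$.

The process is non-stationary when the poles are on or outside the unit circle, or equivalently when the characteristic roots are on or inside the unit circle. The process is stable when the poles are strictly within the unit circle (roots strictly outside the unit circle), or equivalently when the coefficients are in the triangle $-1 < \varphi_2 < 1 - |\varphi_1|$.

The full PSD function can be expressed in real form as:

$$S(f) = \frac{\sigma_Z^2}{1 + \varphi_1^2 + \varphi_2^2 - 2\varphi_1(1 - \varphi_2)\cos(2\pi f) - 2\varphi_2 \cos(4\pi f)}.$$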
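The pole, stability, and spectrum statements for AR(2) can be tied together in a short sketch. It assumes the poles solve $z^2 - \varphi_1 z - \varphi_2 = 0$, the stability triangle is $-1 < \varphi_2 < 1 - |\varphi_1|$, and the real-form PSD is $\sigma_Z^2 / (1 + \varphi_1^2 + \varphi_2^2 - 2\varphi_1(1-\varphi_2)\cos 2\pi f - 2\varphi_2\cos 4\pi f)$; all function names are hypothetical.

```python
import cmath
import math

def ar2_poles(phi1, phi2):
    """Poles of the AR(2) transfer function: roots of z^2 - phi1*z - phi2."""
    root = cmath.sqrt(phi1**2 + 4 * phi2)  # complex when the discriminant < 0
    return (phi1 + root) / 2, (phi1 - root) / 2

def ar2_is_stable(phi1, phi2):
    """True when (phi1, phi2) lies strictly inside the stability triangle."""
    return -1 < phi2 < 1 - abs(phi1)

def ar2_psd(phi1, phi2, sigma2, f):
    """Real-form PSD of an AR(2) process at frequency f (cycles per sample)."""
    w = 2 * math.pi * f
    denom = (1 + phi1**2 + phi2**2
             - 2 * phi1 * (1 - phi2) * math.cos(w)
             - 2 * phi2 * math.cos(2 * w))
    return sigma2 / denom

# Complex-conjugate poles: phi1^2 + 4*phi2 = 1 - 2 < 0, modulus sqrt(-phi2).
z1, z2 = ar2_poles(1.0, -0.5)
print(z1, z2, abs(z1))
print(ar2_is_stable(1.0, -0.5), ar2_is_stable(0.5, 0.6))
print(ar2_psd(1.0, -0.5, 1.0, 0.125))
```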

Implementations in statistics packages

- R – the stats package includes an ar function;[23] the astsa package includes a sarima function to fit various models including AR.[24]

- MATLAB – the Econometrics Toolbox[25] and System Identification Toolbox[26] include AR models.[27]

- MATLAB and Octave – the TSA toolbox contains several estimation functions for uni-variate, multivariate, and adaptive AR models.[28]

- PyMC3 – the Bayesian statistics and probabilistic programming framework supports AR models with p lags.

- bayesloop – supports parameter inference and model selection for the AR-1 process with time-varying parameters.[29]

- Python – the statsmodels package includes AutoReg for fitting AR models.[30]

Impulse response

The impulse response of a system is the change in an evolving variable in response to a change in the value of a shock term k periods earlier, as a function of k. Since the AR model is a special case of the vector autoregressive model, the computation of the impulse response described for vector autoregression applies here.

n-step-ahead forecasting

Once the parameters of the autoregression

$$X_t = \sum_{i=1}^{p} \varphi_i X_{t-i} + \varepsilon_t$$

have been estimated, the autoregression can be used to forecast an arbitrary number of periods into the future. First use t to refer to the first period for which data is not yet available; substitute the known preceding values $X_{t-i}$ for i=1, ..., p into the autoregressive equation while setting the error term $\varepsilon_t$ equal to zero (because we forecast $X_t$ to equal its expected value, and the expected value of the unobserved error term is zero). The output of the autoregressive equation is the forecast for the first unobserved period. Next, use t to refer to the next period for which data is not yet available; again the autoregressive equation is used to make the forecast, with one difference: the value of X one period prior to the one now being forecast is not known, so its expected value (the predicted value arising from the previous forecasting step) is used instead. Then for future periods the same procedure is used, each time using one more forecast value on the right side of the predictive equation until, after p predictions, all p right-side values are predicted values from preceding steps.
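The iterative procedure can be sketched as follows, assuming the coefficients have already been estimated and the series is measured as deviations from its mean; the function name is hypothetical. Each forecast is appended to the working history so that later steps use it as a lagged value.

```python
def forecast_ar(phi, history, n_steps):
    """Iterated n-step-ahead point forecasts for an AR(p) model.

    phi = [phi_1, ..., phi_p]; history holds observed values, most recent
    last, and must contain at least p values. The error term is set to its
    expected value of zero at every step (illustrative helper).
    """
    p = len(phi)
    if len(history) < p:
        raise ValueError("need at least p observed values")
    values = list(history)
    for _ in range(n_steps):
        # Lag i+1 is the (i+1)-th most recent value, observed or forecast.
        nxt = sum(phi[i] * values[-1 - i] for i in range(p))
        values.append(nxt)
    return values[len(history):]

# AR(1) with phi_1 = 0.5 and last observation 8: forecasts halve each step.
print(forecast_ar([0.5], [8.0], 3))
# AR(2): the second forecast already uses the first forecast as a lag.
print(forecast_ar([0.5, 0.25], [4.0, 8.0], 2))
```

This returns point forecasts only; interval forecasts additionally require the noise variance and the uncertainty of the estimated coefficients.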

There are four sources of uncertainty regarding predictions obtained in this manner: (1) uncertainty as to whether the autoregressive model is the correct model; (2) uncertainty about the accuracy of the forecasted values that are used as lagged values in the right side of the autoregressive equation; (3) uncertainty about the true values of the autoregressive coefficients; and (4) uncertainty about the value of the error term for the period being predicted. Each of the last three can be quantified and combined to give a confidence interval for the n-step-ahead predictions; the confidence interval will become wider as n increases because of the use of an increasing number of estimated values for the right-side variables.

See also

- Moving average model

- Linear difference equation

- Predictive analytics

- Linear predictive coding

- Resonance

- Levinson recursion

- Ornstein–Uhlenbeck process

- Infinite impulse response

Notes

- ↑ Souza, Douglas Baptista de; Leao, Bruno Paes (26 October 2023). "Data Augmentation of Sensor Time Series using Time-varying Autoregressive Processes". Annual Conference of the PHM Society 15 (1). doi:10.36001/phmconf.2023.v15i1.3565.

- ↑ Souza, Douglas Baptista de; Leao, Bruno Paes (5 November 2024). "Data Augmentation of Multivariate Sensor Time Series using Autoregressive Models and Application to Failure Prognostics". Annual Conference of the PHM Society 16 (1). doi:10.36001/phmconf.2024.v16i1.4145.

- ↑ Jia, Zhixuan; Li, Wang; Jiang, Yunlong; Liu, Xingshen (9 July 2025). "The Use of Minimization Solvers for Optimizing Time-Varying Autoregressive Models and Their Applications in Finance". Mathematics 13 (14): 2230. doi:10.3390/math13142230.

- ↑ Diodato, Nazzareno; Di Salvo, Cristina; Bellocchi, Gianni (18 March 2025). "Climate driven generative time-varying model for improved decadal storm power predictions in the Mediterranean". Communications Earth & Environment 6 (1): 212. doi:10.1038/s43247-025-02196-2. Bibcode: 2025ComEE...6..212D.

- ↑ Inayati, Syarifah; Iriawan, Nur (31 December 2024). "Time-Varying Autoregressive Models for Economic Forecasting". Matematika: 131–142. doi:10.11113/matematika.v40.n3.1654.

- ↑ Baptista de Souza, Douglas; Kuhn, Eduardo Vinicius; Seara, Rui (January 2019). "A Time-Varying Autoregressive Model for Characterizing Nonstationary Processes". IEEE Signal Processing Letters 26 (1): 134–138. doi:10.1109/LSP.2018.2880086. Bibcode: 2019ISPL...26..134B.

- ↑ Wang, Shihan; Chen, Tao; Wang, Hongjian (17 March 2023). "IDBD-Based Beamforming Algorithm for Improving the Performance of Phased Array Radar in Nonstationary Environments". Sensors 23 (6): 3211. doi:10.3390/s23063211. PMID 36991922. Bibcode: 2023Senso..23.3211W.

- ↑ Abramovich, Yuri I.; Spencer, Nicholas K.; Turley, Michael D. E. (April 2007). "Time-Varying Autoregressive (TVAR) Models for Multiple Radar Observations". IEEE Transactions on Signal Processing 55 (4): 1298–1311. doi:10.1109/TSP.2006.888064. Bibcode: 2007ITSP...55.1298A.

- ↑ Gutierrez, D.; Salazar-Varas, R. (August 2011). "EEG signal classification using time-varying autoregressive models and common spatial patterns". 2011 Annual International Conference of the IEEE Engineering in Medicine and Biology Society. pp. 6585–6588. doi:10.1109/IEMBS.2011.6091624. ISBN 978-1-4577-1589-1.

- ↑ Box, George E. P. (1994) (in en). Time series analysis : forecasting and control. Gwilym M. Jenkins, Gregory C. Reinsel (3rd ed.). Englewood Cliffs, N.J.: Prentice Hall. pp. 54. ISBN 0-13-060774-6. OCLC 28888762.

- ↑ Shumway, Robert H. (2000) (in en). Time series analysis and its applications. David S. Stoffer. New York: Springer. pp. 90–91. ISBN 0-387-98950-1. OCLC 42392178.

- ↑ Shumway, Robert H.; Stoffer, David (2010). Time series analysis and its applications : with R examples (3rd ed.). Springer. ISBN 978-1441978646.

- ↑ Lai, Dihui; Lu, Bingfeng; "Understanding Autoregressive Model for Time Series as a Deterministic Dynamic System", in Predictive Analytics and Futurism, number 15, June 2017, pages 7–9.

- ↑ Yule, G. Udny (1927) "On a Method of Investigating Periodicities in Disturbed Series, with Special Reference to Wolfer's Sunspot Numbers", Philosophical Transactions of the Royal Society of London, Ser. A, Vol. 226, 267–298.

- ↑ Walker, Gilbert (1931) "On Periodicity in Series of Related Terms" , Proceedings of the Royal Society of London, Ser. A, Vol. 131, 518–532.

- ↑ Theodoridis, Sergios (2015-04-10). "Chapter 1. Probability and Stochastic Processes". Machine Learning: A Bayesian and Optimization Perspective. Academic Press, 2015. pp. 9–51. ISBN 978-0-12-801522-3.

- ↑ 17.0 17.1 Von Storch, Hans; Zwiers, Francis W. (2001). Statistical analysis in climate research. Cambridge University Press. doi:10.1017/CBO9780511612336. ISBN 0-521-01230-9.

- ↑ Eshel, Gidon. "The Yule Walker Equations for the AR Coefficients". http://www-stat.wharton.upenn.edu/~steele/Courses/956/Resource/YWSourceFiles/YW-Eshel.pdf.

- ↑ Burg, John Parker (1968); "A new analysis technique for time series data", in Modern Spectrum Analysis (Edited by D. G. Childers), NATO Advanced Study Institute of Signal Processing with emphasis on Underwater Acoustics. IEEE Press, New York.

- ↑ Brockwell, Peter J.; Dahlhaus, Rainer; Trindade, A. Alexandre (2005). "Modified Burg Algorithms for Multivariate Subset Autoregression". Statistica Sinica 15: 197–213. http://www3.stat.sinica.edu.tw/statistica/oldpdf/A15n112.pdf.

- ↑ Burg, John Parker (1967) "Maximum Entropy Spectral Analysis", Proceedings of the 37th Meeting of the Society of Exploration Geophysicists, Oklahoma City, Oklahoma.

- ↑ Bos, Robert; De Waele, Stijn; Broersen, Piet M. T. (2002). "Autoregressive spectral estimation by application of the Burg algorithm to irregularly sampled data". IEEE Transactions on Instrumentation and Measurement 51 (6): 1289. doi:10.1109/TIM.2002.808031. Bibcode: 2002ITIM...51.1289B. http://resolver.tudelft.nl/uuid:870559f7-f1e9-4968-83da-edd19485eaaf. Retrieved 2019-12-11.

- ↑ "Fit Autoregressive Models to Time Series" (in R)

- ↑ Stoffer, David; Poison, Nicky (2023-01-09). "astsa: Applied Statistical Time Series Analysis". https://cran.r-project.org/web/packages/astsa/.

- ↑ "Econometrics Toolbox". https://www.mathworks.com/products/econometrics.html.

- ↑ "System Identification Toolbox". https://www.mathworks.com/products/sysid.html.

- ↑ "Autoregressive Model - MATLAB & Simulink". https://www.mathworks.com/help/econ/autoregressive-model.html.

- ↑ "The Time Series Analysis (TSA) toolbox for Octave and MATLAB". http://pub.ist.ac.at/~schloegl/matlab/tsa/.

- ↑ "christophmark/bayesloop". December 7, 2021. https://github.com/christophmark/bayesloop.

- ↑ "statsmodels.tsa.ar_model.AutoReg — statsmodels 0.12.2 documentation". https://www.statsmodels.org/stable/generated/statsmodels.tsa.ar_model.AutoReg.html.

References

- Mills, Terence C. (1990). Time Series Techniques for Economists. Cambridge University Press. ISBN 9780521343398. https://archive.org/details/timeseriestechni0000mill.

- Percival, Donald B.; Walden, Andrew T. (1993). Spectral Analysis for Physical Applications. Cambridge University Press. Bibcode: 1993sapa.book.....P.

- Pandit, Sudhakar M.; Wu, Shien-Ming (1983). Time Series and System Analysis with Applications. John Wiley & Sons.

External links

- AutoRegression Analysis (AR) by Paul Bourke

- Econometrics lecture (topic: Autoregressive models) on YouTube by Mark Thoma
