Pattern recognition

Pattern recognition is the task of assigning a class to an observation based on patterns extracted from data. Although the two are related, pattern recognition (PR) should not be confused with pattern machines (PM), which may possess PR capabilities but whose primary function is to distinguish and create emergent patterns. PR has applications in statistical data analysis, signal processing, image analysis, information retrieval, bioinformatics, data compression, computer graphics and machine learning. Pattern recognition has its origins in statistics and engineering; some modern approaches to pattern recognition include the use of machine learning, due to the increased availability of big data and a new abundance of processing power.

Pattern recognition systems are commonly trained from labeled "training" data. When no labeled data are available, other algorithms can be used to discover previously unknown patterns. Knowledge discovery in databases (KDD) and data mining place a larger focus on unsupervised methods and have a stronger connection to business use. Pattern recognition focuses more on the signal and also takes acquisition and signal processing into consideration. It originated in engineering, and the term is popular in the context of computer vision: a leading computer vision conference is named Conference on Computer Vision and Pattern Recognition.

In machine learning, pattern recognition is the assignment of a label to a given input value. In statistics, discriminant analysis was introduced for this same purpose in 1936. An example of pattern recognition is classification, which attempts to assign each input value to one of a given set of classes (for example, determine whether a given email is "spam"). Pattern recognition is a more general problem that encompasses other types of output as well. Other examples are regression, which assigns a real-valued output to each input;[1] sequence labeling, which assigns a class to each member of a sequence of values[2] (for example, part of speech tagging, which assigns a part of speech to each word in an input sentence); and parsing, which assigns a parse tree to an input sentence, describing the syntactic structure of the sentence.[3]

Pattern recognition algorithms generally aim to provide a reasonable answer for all possible inputs and to perform "most likely" matching of the inputs, taking into account their statistical variation. This is opposed to pattern matching algorithms, which look for exact matches in the input with pre-existing patterns. A common example of a pattern-matching algorithm is regular expression matching, which looks for patterns of a given sort in textual data and is included in the search capabilities of many text editors and word processors.
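
The contrast between exact pattern matching and "most likely" matching can be illustrated with a minimal Python sketch. The vocabulary `TEMPLATES`, the function names, and the use of edit distance as a stand-in for a statistical model are assumptions made for illustration only; a real recognizer would typically use a learned probabilistic model.

```python
import re

# Pattern matching: the input either matches the fixed pattern exactly or not.
def exact_match(text: str) -> bool:
    return re.fullmatch(r"colou?r", text) is not None

# Hypothetical vocabulary of known templates (an assumption for this sketch).
TEMPLATES = ["color", "collar", "cooler"]

def edit_distance(a: str, b: str) -> int:
    # Levenshtein distance computed row by row with dynamic programming.
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # delete ca
                            curr[j - 1] + 1,      # insert cb
                            prev[j - 1] + cost))  # substitute or keep
        prev = curr
    return prev[-1]

# Toy recognition: every input receives an answer, namely the closest template.
def most_likely(text: str) -> str:
    return min(TEMPLATES, key=lambda t: edit_distance(text, t))
```

Here `exact_match("colr")` rejects the misspelled input outright, whereas `most_likely("colr")` still returns the nearest template.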

Overview

A modern definition of pattern recognition is:

The field of pattern recognition is concerned with the automatic discovery of regularities in data through the use of computer algorithms and with the use of these regularities to take actions such as classifying the data into different categories.[4]

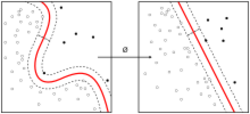

Pattern recognition is generally categorized according to the type of learning procedure used to generate the output value. Supervised learning assumes that a set of training data (the training set) has been provided, consisting of a set of instances that have been properly labeled by hand with the correct output. A learning procedure then generates a model that attempts to meet two sometimes conflicting objectives: to perform as well as possible on the training data, and to generalize as well as possible to new data (usually, this means being as simple as possible, for some technical definition of "simple", in accordance with Occam's razor). Unsupervised learning, on the other hand, assumes training data that has not been hand-labeled, and attempts to find inherent patterns in the data that can then be used to determine the correct output value for new data instances.[5] A combination of the two that has been explored is semi-supervised learning, which uses a combination of labeled and unlabeled data (typically a small set of labeled data combined with a large amount of unlabeled data). In cases of unsupervised learning, there may be no training data at all.

Sometimes different terms are used to describe the corresponding supervised and unsupervised learning procedures for the same type of output. The unsupervised equivalent of classification is normally known as clustering, based on the common perception of the task as involving no training data to speak of, and of grouping the input data into clusters based on some inherent similarity measure (e.g. the distance between instances, considered as vectors in a multi-dimensional vector space), rather than assigning each input instance into one of a set of pre-defined classes. In some fields, the terminology is different. In community ecology, the term classification is used to refer to what is commonly known as "clustering".
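
As an illustration of grouping instances by distance, the following is a minimal sketch of k-means clustering (Lloyd's algorithm) in plain Python. The function name `k_means`, the fixed number of iterations, and the requirement that the caller supply initial centroids are assumptions of this sketch, not part of any particular library.

```python
import math

def k_means(points, centroids, iterations=10):
    """Alternate between assigning points to the nearest centroid
    and moving each centroid to the mean of its assigned points."""
    centroids = [tuple(c) for c in centroids]
    clusters = [[] for _ in centroids]
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        for p in points:
            # Similarity measure: Euclidean distance between vectors.
            nearest = min(range(len(centroids)),
                          key=lambda k: math.dist(p, centroids[k]))
            clusters[nearest].append(p)
        centroids = [
            tuple(sum(coord) / len(cluster) for coord in zip(*cluster))
            if cluster else centroids[k]  # an empty cluster keeps its centroid
            for k, cluster in enumerate(clusters)
        ]
    return centroids, clusters

points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
centroids, clusters = k_means(points, centroids=[(0, 0), (10, 10)])
```

No labels are supplied; the two groups emerge only from the distances between the points.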

The piece of input data for which an output value is generated is formally termed an instance. The instance is formally described by a vector of features, which together constitute a description of all known characteristics of the instance. These feature vectors can be seen as defining points in an appropriate multidimensional space, and methods for manipulating vectors in vector spaces can be correspondingly applied to them, such as computing the dot product or the angle between two vectors. Features typically are either categorical (also known as nominal, i.e., consisting of one of a set of unordered items, such as a gender of "male" or "female", or a blood type of "A", "B", "AB" or "O"), ordinal (consisting of one of a set of ordered items, e.g., "large", "medium" or "small"), integer-valued (e.g., a count of the number of occurrences of a particular word in an email) or real-valued (e.g., a measurement of blood pressure). Often, categorical and ordinal data are grouped together, and this is also the case for integer-valued and real-valued data. Many algorithms work only in terms of categorical data and require that real-valued or integer-valued data be discretized into groups (e.g., less than 5, between 5 and 10, or greater than 10).
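
The vector operations and the discretization mentioned above can be sketched as follows. The function names are illustrative assumptions, and the bin boundaries are simply those of the example in the text; how values exactly equal to 5 or 10 are binned is a choice made here.

```python
import math

def dot(u, v):
    # Dot product of two feature vectors of equal length.
    return sum(a * b for a, b in zip(u, v))

def angle(u, v):
    # Angle in radians between two non-zero feature vectors.
    cos = dot(u, v) / (math.sqrt(dot(u, u)) * math.sqrt(dot(v, v)))
    return math.acos(max(-1.0, min(1.0, cos)))  # clamp against rounding error

def discretize(value):
    # Turn a real-valued or integer-valued feature into a categorical one.
    if value < 5:
        return "less than 5"
    if value <= 10:
        return "between 5 and 10"
    return "greater than 10"
```

For instance, `dot([1, 2, 3], [4, 5, 6])` evaluates to 32, and two perpendicular vectors such as `[1, 0]` and `[0, 1]` have an angle of π/2.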

Probabilistic classifiers

Many common pattern recognition algorithms are probabilistic in nature, in that they use statistical inference to find the best label for a given instance. Unlike other algorithms, which simply output a "best" label, probabilistic algorithms often also output the probability that the instance is described by the given label. In addition, many probabilistic algorithms output a list of the N-best labels with associated probabilities, for some value of N, instead of simply a single best label. When the number of possible labels is fairly small (e.g., in the case of classification), N may be set so that the probability of all possible labels is output. Probabilistic algorithms have many advantages over non-probabilistic algorithms:

- They output a confidence value associated with their choice. (Note that some other algorithms may also output confidence values, but in general, only for probabilistic algorithms is this value mathematically grounded in probability theory. Non-probabilistic confidence values cannot in general be given any specific meaning, and can only be used to compare against other confidence values output by the same algorithm.)

- Correspondingly, they can abstain when the confidence of choosing any particular output is too low.

- Because of the probabilities output, probabilistic pattern-recognition algorithms can be more effectively incorporated into larger machine-learning tasks, in a way that partially or completely avoids the problem of error propagation.
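
A minimal sketch of how these properties might be used in practice is given below. The function names and the threshold value of 0.8 are assumptions chosen for illustration; the input is taken to be a dictionary mapping each label to its estimated probability.

```python
def classify_or_abstain(probabilities, threshold=0.8):
    """Return the most probable label, or None (abstain) when the
    classifier's confidence in that label is below the threshold."""
    label = max(probabilities, key=probabilities.get)
    return label if probabilities[label] >= threshold else None

def n_best(probabilities, n):
    # The n most probable labels with their probabilities, best first.
    ranked = sorted(probabilities.items(), key=lambda kv: kv[1], reverse=True)
    return ranked[:n]
```

With `{"spam": 0.55, "non-spam": 0.45}` the first function abstains, whereas with `{"spam": 0.95, "non-spam": 0.05}` it returns `"spam"`.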

Number of important feature variables

Feature selection algorithms attempt to directly prune out redundant or irrelevant features. A general introduction to feature selection that summarizes approaches and challenges has been given.[6] Because feature selection is non-monotonic, it is in general a combinatorial optimization problem: given a total of [math]\displaystyle{ n }[/math] features, the powerset consisting of all [math]\displaystyle{ 2^n-1 }[/math] non-empty subsets of features needs to be explored. The branch-and-bound algorithm[7] does reduce this complexity, but it remains intractable for medium to large values of the number of available features [math]\displaystyle{ n }[/math].
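
The size of the search space can be made concrete with a brute-force sketch. The function names and the scoring function `example_score` are hypothetical; in practice the score would be something like validation accuracy of a classifier trained on the chosen subset.

```python
from itertools import combinations

def all_feature_subsets(features):
    # Yields every non-empty subset: 2**n - 1 subsets for n features.
    for k in range(1, len(features) + 1):
        yield from combinations(features, k)

def best_subset(features, score):
    # Exhaustive search; feasible only for small n.
    return max(all_feature_subsets(features), key=score)

# Hypothetical score: features "a" and "c" help, every extra feature costs 0.1.
def example_score(subset):
    return ("a" in subset) + ("c" in subset) - 0.1 * len(subset)

features = ["a", "b", "c"]
subsets = list(all_feature_subsets(features))
chosen = best_subset(features, example_score)
```

Because the score is not monotonic in the subset (adding the irrelevant feature "b" lowers it), the best subset cannot be found simply by adding features greedily until the score stops improving, which is why exhaustive or branch-and-bound search is considered.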

Techniques to transform the raw feature vectors (feature extraction) are sometimes used prior to application of the pattern recognition algorithm. Feature extraction algorithms attempt to reduce a large-dimensionality feature vector into a smaller-dimensionality vector that is easier to work with and encodes less redundancy, using mathematical techniques such as principal components analysis (PCA). The distinction between feature selection and feature extraction is that the features resulting from feature extraction are of a different sort than the original features and may not be easily interpretable, while the features left after feature selection are simply a subset of the original features.
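
As a sketch of feature extraction, the following plain-Python code finds the first principal component by power iteration on the sample covariance matrix and projects an instance onto it. The function names and the iteration count are assumptions of this sketch; it also assumes that the data are not all identical and that the starting vector is not orthogonal to the leading component. Numerical libraries normally compute PCA with an eigendecomposition or singular value decomposition instead.

```python
import math

def first_principal_component(rows, iterations=200):
    """Return (mean, direction) for the leading principal component."""
    n, d = len(rows), len(rows[0])
    mean = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - mean[j] for j in range(d)] for r in rows]
    # Sample covariance matrix (d x d).
    cov = [[sum(c[a] * c[b] for c in centered) / (n - 1) for b in range(d)]
           for a in range(d)]
    # Power iteration converges to the eigenvector with the largest eigenvalue.
    v = [1.0] * d
    for _ in range(iterations):
        w = [sum(cov[a][b] * v[b] for b in range(d)) for a in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    return mean, v

def project(row, mean, direction):
    # The extracted feature: coordinate of the instance along the component.
    return sum((x - m) * u for x, m, u in zip(row, mean, direction))

rows = [[1.0, 2.0], [2.0, 4.0], [3.0, 6.0], [4.0, 8.0]]
mean, direction = first_principal_component(rows)
extracted = [project(r, mean, direction) for r in rows]
```

The extracted feature is a weighted combination of both original features rather than one of them, which is the sense in which extracted features differ in kind from selected ones.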

Problem statement

The problem of pattern recognition can be stated as follows: Given an unknown function [math]\displaystyle{ g:\mathcal{X}\rightarrow\mathcal{Y} }[/math] (the ground truth) that maps input instances [math]\displaystyle{ \boldsymbol{x} \in \mathcal{X} }[/math] to output labels [math]\displaystyle{ y \in \mathcal{Y} }[/math], along with training data [math]\displaystyle{ \mathbf{D} = \{(\boldsymbol{x}_1,y_1),\dots,(\boldsymbol{x}_n, y_n)\} }[/math] assumed to represent accurate examples of the mapping, produce a function [math]\displaystyle{ h:\mathcal{X}\rightarrow\mathcal{Y} }[/math] that approximates as closely as possible the correct mapping [math]\displaystyle{ g }[/math]. (For example, if the problem is filtering spam, then [math]\displaystyle{ \boldsymbol{x}_i }[/math] is some representation of an email and [math]\displaystyle{ y_i }[/math] is either "spam" or "non-spam".) In order for this to be a well-defined problem, "approximates as closely as possible" needs to be defined rigorously. In decision theory, this is done by specifying a loss function or cost function that assigns a specific value to the "loss" resulting from producing an incorrect label. The goal then is to minimize the expected loss, with the expectation taken over the probability distribution of [math]\displaystyle{ \mathcal{X} }[/math]. In practice, neither the distribution of [math]\displaystyle{ \mathcal{X} }[/math] nor the ground truth function [math]\displaystyle{ g:\mathcal{X}\rightarrow\mathcal{Y} }[/math] is known exactly; both can only be estimated empirically by collecting a large number of samples of [math]\displaystyle{ \mathcal{X} }[/math] and hand-labeling them using the correct value of [math]\displaystyle{ \mathcal{Y} }[/math] (a time-consuming process, which is typically the limiting factor in the amount of data of this sort that can be collected). The particular loss function depends on the type of label being predicted. For example, in the case of classification, the simple zero-one loss function is often sufficient. This corresponds to assigning a loss of 1 to any incorrect labeling and a loss of 0 to any correct one, and implies that the optimal classifier minimizes the error rate on independent test data (i.e., the fraction of instances that the learned function [math]\displaystyle{ h:\mathcal{X}\rightarrow\mathcal{Y} }[/math] labels wrongly, which is equivalent to maximizing the number of correctly classified instances). The goal of the learning procedure is then to minimize the error rate (maximize the correctness) on a "typical" test set.
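
The zero-one loss and the resulting empirical error rate can be sketched as follows. The keyword-based classifier `h` and the four-example test set are hypothetical and serve only to make the computation concrete.

```python
def zero_one_loss(predicted, actual):
    # Loss of 1 for an incorrect label, 0 for a correct one.
    return 0 if predicted == actual else 1

def error_rate(h, test_set):
    # Average zero-one loss of the classifier h over labeled test examples.
    losses = [zero_one_loss(h(x), y) for x, y in test_set]
    return sum(losses) / len(losses)

# Hypothetical learned function h: a single hand-written keyword rule.
def h(email_text):
    return "spam" if "free money" in email_text.lower() else "non-spam"

test_set = [
    ("Free money inside", "spam"),
    ("Meeting at noon", "non-spam"),
    ("You won a prize", "spam"),
    ("Lunch tomorrow?", "non-spam"),
]
rate = error_rate(h, test_set)
```

On this toy test set the rule mislabels one of the four emails, giving an error rate of 0.25.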

For a probabilistic pattern recognizer, the problem is instead to estimate the probability of each possible output label given a particular input instance, i.e., to estimate a function of the form

- [math]\displaystyle{ p({\rm label}|\boldsymbol{x},\boldsymbol\theta) = f\left(\boldsymbol{x};\boldsymbol{\theta}\right) }[/math]

where the feature vector input is [math]\displaystyle{ \boldsymbol{x} }[/math], and the function f is typically parameterized by some parameters [math]\displaystyle{ \boldsymbol{\theta} }[/math].[8] In a discriminative approach to the problem, f is estimated directly. In a generative approach, however, the inverse probability [math]\displaystyle{ p(\boldsymbol{x}|{\rm label},\boldsymbol\theta) }[/math] is instead estimated and combined with the prior probability [math]\displaystyle{ p({\rm label}|\boldsymbol\theta) }[/math] using Bayes' rule, as follows:

- [math]\displaystyle{ p({\rm label}|\boldsymbol{x},\boldsymbol\theta) = \frac{p(\boldsymbol{x}|{\rm label},\boldsymbol\theta)\, p({\rm label}|\boldsymbol\theta)}{\sum_{L \in \text{all labels}} p(\boldsymbol{x}|L,\boldsymbol\theta)\, p(L|\boldsymbol\theta)}. }[/math]

When the labels are continuously distributed (e.g., in regression analysis), the denominator involves integration rather than summation:

- [math]\displaystyle{ p({\rm label}|\boldsymbol{x},\boldsymbol\theta) = \frac{p(\boldsymbol{x}|{\rm label},\boldsymbol\theta)\, p({\rm label}|\boldsymbol\theta)}{\int_{L \in \text{all labels}} p(\boldsymbol{x}|L,\boldsymbol\theta)\, p(L|\boldsymbol\theta) \operatorname{d}L}. }[/math]
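
For a discrete set of labels, the generative computation can be sketched in a few lines of Python. The function name `posterior` and the numeric likelihoods and priors are assumptions chosen for illustration; in a real generative classifier they would come from fitted class-conditional models.

```python
def posterior(likelihoods, priors):
    """Bayes' rule for discrete labels.

    likelihoods[label] is p(x | label) for the observed x,
    priors[label] is p(label).
    """
    joint = {label: likelihoods[label] * priors[label] for label in priors}
    evidence = sum(joint.values())  # the denominator: sum over all labels
    return {label: value / evidence for label, value in joint.items()}

# Hypothetical values for one observed email x.
likelihoods = {"spam": 0.8, "non-spam": 0.1}
priors = {"spam": 0.2, "non-spam": 0.8}
post = posterior(likelihoods, priors)
```

In this example the posterior probability of "spam" is 0.16 / 0.24, or about 0.67: although spam is rare a priori, the observation is much more likely under the spam model.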

The value of [math]\displaystyle{ \boldsymbol\theta }[/math] is typically learned using maximum a posteriori (MAP) estimation. This finds the best value that simultaneously meets two conflicting objectives: to perform as well as possible on the training data (smallest error rate) and to find the simplest possible model. Essentially, this combines maximum likelihood estimation with a regularization procedure that favors simpler models over more complex models. In a Bayesian context, the regularization procedure can be viewed as placing a prior probability [math]\displaystyle{ p(\boldsymbol\theta) }[/math] on different values of [math]\displaystyle{ \boldsymbol\theta }[/math]. Mathematically:

- [math]\displaystyle{ \boldsymbol\theta^* = \arg \max_{\boldsymbol\theta} p(\boldsymbol\theta|\mathbf{D}) }[/math]

where [math]\displaystyle{ \boldsymbol\theta^* }[/math] is the value used for [math]\displaystyle{ \boldsymbol\theta }[/math] in the subsequent evaluation procedure, and [math]\displaystyle{ p(\boldsymbol\theta|\mathbf{D}) }[/math], the posterior probability of [math]\displaystyle{ \boldsymbol\theta }[/math], is given, up to a normalizing constant that does not depend on [math]\displaystyle{ \boldsymbol\theta }[/math], by

- [math]\displaystyle{ p(\boldsymbol\theta|\mathbf{D}) \propto \left[\prod_{i=1}^n p(y_i|\boldsymbol{x}_i,\boldsymbol\theta) \right] p(\boldsymbol\theta). }[/math]
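
MAP estimation can be illustrated with a deliberately small model. The sketch below assumes a one-parameter logistic model for p(y | x, θ), a zero-mean Gaussian prior on θ, and a plain grid search in place of a proper optimizer; all of these, along with the function names and data, are assumptions made for illustration.

```python
import math

def log_posterior(theta, data, prior_std=1.0):
    """Unnormalized log posterior: log-likelihood plus log-prior."""
    log_lik = 0.0
    for x, y in data:
        p = 1.0 / (1.0 + math.exp(-theta * x))  # p(y = 1 | x, theta)
        log_lik += math.log(p if y == 1 else 1.0 - p)
    log_prior = -0.5 * (theta / prior_std) ** 2  # Gaussian prior, up to a constant
    return log_lik + log_prior

def map_estimate(data, grid, prior_std=1.0):
    # argmax over a grid of candidate parameter values.
    return max(grid, key=lambda t: log_posterior(t, data, prior_std))

# Linearly separable toy data: negative x has label 0, positive x has label 1.
data = [(-2.0, 0), (-1.0, 0), (1.0, 1), (2.0, 1)]
grid = [i / 10 for i in range(-50, 51)]

theta_map = map_estimate(data, grid)                   # regularized by the prior
theta_flat = map_estimate(data, grid, prior_std=1e6)   # nearly flat prior
```

On separable data the likelihood alone keeps increasing with θ, so the nearly flat prior drives the estimate to the edge of the grid, while the informative prior yields a moderate value. This is the regularizing effect of the prior described above.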

In the Bayesian approach to this problem, instead of choosing a single parameter vector [math]\displaystyle{ \boldsymbol{\theta}^* }[/math], the probability of a given label for a new instance [math]\displaystyle{ \boldsymbol{x} }[/math] is computed by integrating over all possible values of [math]\displaystyle{ \boldsymbol\theta }[/math], weighted according to the posterior probability:

- [math]\displaystyle{ p({\rm label}|\boldsymbol{x}) = \int p({\rm label}|\boldsymbol{x},\boldsymbol\theta)p(\boldsymbol{\theta}|\mathbf{D}) \operatorname{d}\boldsymbol{\theta}. }[/math]
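
The integral over parameter values can be approximated numerically when the parameter is low-dimensional. The sketch below assumes a one-parameter logistic model, a standard Gaussian prior, and a uniform grid over θ that replaces the integral with a weighted sum; these choices and all names are illustrative assumptions.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def unnormalized_posterior(theta, data, prior_std=1.0):
    # Likelihood of the training labels times the Gaussian prior density
    # (normalizing constants are dropped because they cancel below).
    lik = 1.0
    for x, y in data:
        p = sigmoid(theta * x)
        lik *= p if y == 1 else 1.0 - p
    return lik * math.exp(-0.5 * (theta / prior_std) ** 2)

def bayesian_predictive(x_new, data, grid):
    """Approximate p(y = 1 | x_new) by averaging the model's prediction
    over the grid, weighted by the posterior of each parameter value."""
    weights = [unnormalized_posterior(t, data) for t in grid]
    total = sum(weights)
    return sum(w * sigmoid(t * x_new) for w, t in zip(weights, grid)) / total

data = [(-2.0, 0), (-1.0, 0), (1.0, 1), (2.0, 1)]
grid = [i / 10 for i in range(-50, 51)]
p_positive = bayesian_predictive(1.0, data, grid)
p_boundary = bayesian_predictive(0.0, data, grid)
```

Unlike a plug-in prediction with a single estimated parameter, this average takes the remaining uncertainty about θ into account.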

Frequentist or Bayesian approach to pattern recognition

The first pattern classifier, the linear discriminant presented by Fisher, was developed in the frequentist tradition. The frequentist approach entails that the model parameters are considered unknown, but objective. The parameters are then computed (estimated) from the collected data. For the linear discriminant, these parameters are precisely the mean vectors and the covariance matrix. The probability of each class [math]\displaystyle{ p({\rm label}|\boldsymbol\theta) }[/math] is also estimated from the collected dataset. Note that the use of Bayes' rule in a pattern classifier does not make the classification approach Bayesian.

Bayesian statistics has its origin in Greek philosophy, where a distinction was already made between a priori and a posteriori knowledge. Later, Kant defined his distinction between what is known a priori, before observation, and the empirical knowledge gained from observations. In a Bayesian pattern classifier, the class probabilities [math]\displaystyle{ p({\rm label}|\boldsymbol\theta) }[/math] can be chosen by the user and are then a priori. Moreover, experience quantified as a priori parameter values can be weighted with empirical observations, using for example the Beta distribution (a conjugate prior) and the Dirichlet distribution. The Bayesian approach facilitates a seamless intermixing between expert knowledge in the form of subjective probabilities, and objective observations.
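
The weighting of prior experience against observations can be sketched with a Dirichlet prior over class probabilities (a Beta prior in the two-class case). In this sketch, which rests on the assumption that the expert's belief is expressed as pseudo-counts, the posterior mean of each class probability is the prior pseudo-count plus the observed count, divided by the total. The function name and the numbers are hypothetical.

```python
def posterior_class_probabilities(prior_counts, observed_counts):
    """Posterior mean of the class probabilities under a Dirichlet prior.

    prior_counts[c] is the pseudo-count encoding the a priori belief,
    observed_counts[c] is the number of training instances of class c.
    """
    combined = {c: prior_counts[c] + observed_counts.get(c, 0)
                for c in prior_counts}
    total = sum(combined.values())
    return {c: count / total for c, count in combined.items()}

# Expert belief: 20% spam, held with the weight of 10 observations.
prior_counts = {"spam": 2, "non-spam": 8}
# Empirical observations: 30 spam and 60 non-spam emails.
observed_counts = {"spam": 30, "non-spam": 60}
probs = posterior_class_probabilities(prior_counts, observed_counts)
```

Here the estimate for "spam" is 32/100 = 0.32, between the prior belief of 0.20 and the observed frequency of about 0.33; as more data arrive, the observations increasingly dominate the prior.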

Probabilistic pattern classifiers can be used according to a frequentist or a Bayesian approach.

Uses

Within medical science, pattern recognition is the basis for computer-aided diagnosis (CAD) systems. CAD describes a procedure that supports the doctor's interpretations and findings. Other typical applications of pattern recognition techniques are automatic speech recognition, speaker identification, classification of text into several categories (e.g., spam or non-spam email messages), the automatic recognition of handwriting on postal envelopes, automatic recognition of images of human faces, or handwriting image extraction from medical forms.[9][10] The last two examples form the subtopic image analysis of pattern recognition that deals with digital images as input to pattern recognition systems.[11][12]

Optical character recognition is an example of the application of a pattern classifier. The method of signing one's name was captured with stylus and overlay starting in 1990.[citation needed] The strokes, speed, relative min, relative max, acceleration and pressure are used to uniquely identify and confirm identity. Banks were first offered this technology, but were content to collect from the FDIC for any bank fraud and did not want to inconvenience customers.[citation needed]

Pattern recognition has many real-world applications in image processing. Some examples include:

- identification and authentication: e.g., license plate recognition,[13] fingerprint analysis, face detection/verification,[14] and voice-based authentication;[15]

- medical diagnosis: e.g., screening for cervical cancer (Papnet),[16] breast tumors or heart sounds;

- defense: various navigation and guidance systems, target recognition systems, shape recognition technology, etc.;

- mobility: advanced driver assistance systems, autonomous vehicle technology, etc.[17][18][19][20][21]

In psychology, pattern recognition is used to make sense of and identify objects, and is closely related to perception. This explains how the sensory inputs humans receive are made meaningful. Pattern recognition can be thought of in two different ways. The first concerns template matching and the second concerns feature detection. A template is a pattern used to produce items of the same proportions. The template-matching hypothesis suggests that incoming stimuli are compared with templates in long-term memory. If there is a match, the stimulus is identified. Feature detection models, such as the Pandemonium system for classifying letters (Selfridge, 1959), suggest that the stimuli are broken down into their component parts for identification. For example, a capital E is made up of three horizontal lines and one vertical line.[22]

Algorithms

Algorithms for pattern recognition depend on the type of label output, on whether learning is supervised or unsupervised, and on whether the algorithm is statistical or non-statistical in nature. Statistical algorithms can further be categorized as generative or discriminative.

Classification methods (methods predicting categorical labels)

Parametric:[23]

- Linear discriminant analysis

- Quadratic discriminant analysis

- Maximum entropy classifier (aka logistic regression, multinomial logistic regression): Note that logistic regression is an algorithm for classification, despite its name. (The name comes from the fact that logistic regression uses an extension of a linear regression model to model the probability of an input being in a particular class.)

Nonparametric:[24]

- Decision trees, decision lists

- Kernel estimation and K-nearest-neighbor algorithms

- Naive Bayes classifier

- Neural networks (multi-layer perceptrons)

- Perceptrons

- Support vector machines

- Gene expression programming

Clustering methods (methods for classifying and predicting categorical labels)

- Categorical mixture models

- Hierarchical clustering (agglomerative or divisive)

- K-means clustering

- Correlation clustering

- Kernel principal component analysis (Kernel PCA)

Ensemble learning algorithms (supervised meta-algorithms for combining multiple learning algorithms together)

- Boosting (meta-algorithm)

- Bootstrap aggregating ("bagging")

- Ensemble averaging

- Mixture of experts, hierarchical mixture of experts

General methods for predicting arbitrarily-structured (sets of) labels

Multilinear subspace learning algorithms (predicting labels of multidimensional data using tensor representations)

Unsupervised:

Real-valued sequence labeling methods (predicting sequences of real-valued labels)

Regression methods (predicting real-valued labels)

- Gaussian process regression (kriging)

- Linear regression and extensions

- Independent component analysis (ICA)

- Principal components analysis (PCA)

Sequence labeling methods (predicting sequences of categorical labels)

- Conditional random fields (CRFs)

- Hidden Markov models (HMMs)

- Maximum entropy Markov models (MEMMs)

- Recurrent neural networks (RNNs)

- Dynamic time warping (DTW)

See also

- Adaptive resonance theory

- Black box

- Cache language model

- Compound-term processing

- Computer-aided diagnosis

- Data mining

- Deep learning

- Information theory

- List of numerical-analysis software

- List of numerical libraries

- Neocognitron

- Perception

- Perceptual learning

- Predictive analytics

- Prior knowledge for pattern recognition

- Sequence mining

- Template matching

- Contextual image classification

- List of datasets for machine learning research

References

- Howard, W.R. (2007-02-20). "Pattern Recognition and Machine Learning". Kybernetes 36 (2): 275. doi:10.1108/03684920710743466. ISSN 0368-492X.

- "Sequence Labeling". https://pubweb.eng.utah.edu/~cs6961/slides/seq-labeling1.4ps.pdf.

- Chiswell, Ian (2007). Mathematical logic, p. 34. Oxford University Press. ISBN 9780199215621. OCLC 799802313.

- Bishop, Christopher M. (2006). Pattern Recognition and Machine Learning. Springer.

- Carvalko, J.R., Preston K. (1972). "On Determining Optimum Simple Golay Marking Transforms for Binary Image Processing". IEEE Transactions on Computers 21 (12): 1430–33. doi:10.1109/T-C.1972.223519.

- Guyon, Isabelle; Elisseeff, André (2003). "An Introduction to Variable and Feature Selection". The Journal of Machine Learning Research 3: 1157–1182.

- Foroutan, Iman; Sklansky, Jack (1987). "Feature Selection for Automatic Classification of Non-Gaussian Data". IEEE Transactions on Systems, Man, and Cybernetics 17 (2): 187–198. doi:10.1109/TSMC.1987.4309029.

- For linear discriminant analysis the parameter vector [math]\displaystyle{ \boldsymbol\theta }[/math] consists of the two mean vectors [math]\displaystyle{ \boldsymbol\mu_1 }[/math] and [math]\displaystyle{ \boldsymbol\mu_2 }[/math] and the common covariance matrix [math]\displaystyle{ \boldsymbol\Sigma }[/math].

- Milewski, Robert; Govindaraju, Venu (31 March 2008). "Binarization and cleanup of handwritten text from carbon copy medical form images". Pattern Recognition 41 (4): 1308–1315. doi:10.1016/j.patcog.2007.08.018. Bibcode: 2008PatRe..41.1308M. http://dl.acm.org/citation.cfm?id=1324656. Retrieved 26 October 2011.

- Sarangi, Susanta; Sahidullah, Md; Saha, Goutam (September 2020). "Optimization of data-driven filterbank for automatic speaker verification". Digital Signal Processing 104: 102795. doi:10.1016/j.dsp.2020.102795.

- Duda, Richard O.; Hart, Peter E.; Stork, David G. (2001). Pattern Classification (2nd ed.). New York: Wiley. ISBN 978-0-471-05669-0. https://books.google.com/books?id=Br33IRC3PkQC. Retrieved 2019-11-26.

- Brunelli, R. (2009). Template Matching Techniques in Computer Vision: Theory and Practice. Wiley. ISBN 978-0-470-51706-2.

- "The Automatic Number Plate Recognition Tutorial". http://anpr-tutorial.com/.

- Neural Networks for Face Recognition. Companion to Chapter 4 of the textbook Machine Learning.

- Poddar, Arnab; Sahidullah, Md; Saha, Goutam (March 2018). "Speaker Verification with Short Utterances: A Review of Challenges, Trends and Opportunities". IET Biometrics 7 (2): 91–101. doi:10.1049/iet-bmt.2017.0065. https://ieeexplore.ieee.org/document/8302747. Retrieved 2019-08-27.

- PAPNET For Cervical Screening.

- "Development of an Autonomous Vehicle Control Strategy Using a Single Camera and Deep Neural Networks (2018-01-0035 Technical Paper)- SAE Mobilus". https://saemobilus.sae.org/content/2018-01-0035.

- Gerdes, J. Christian; Kegelman, John C.; Kapania, Nitin R.; Brown, Matthew; Spielberg, Nathan A. (2019-03-27). "Neural network vehicle models for high-performance automated driving". Science Robotics 4 (28): eaaw1975. doi:10.1126/scirobotics.aaw1975. ISSN 2470-9476. PMID 33137751.

- Pickering, Chris (2017-08-15). "How AI is paving the way for fully autonomous cars". https://www.theengineer.co.uk/ai-autonomous-cars/.

- Ray, Baishakhi; Jana, Suman; Pei, Kexin; Tian, Yuchi (2017-08-28). DeepTest: Automated Testing of Deep-Neural-Network-driven Autonomous Cars. Bibcode: 2017arXiv170808559T.

- Sinha, P. K.; Hadjiiski, L. M.; Mutib, K. (1993-04-01). "Neural Networks in Autonomous Vehicle Control". IFAC Proceedings Volumes. 1st IFAC International Workshop on Intelligent Autonomous Vehicles, Hampshire, UK, 18–21 April 26 (1): 335–340. doi:10.1016/S1474-6670(17)49322-0. ISSN 1474-6670.

- "A-level Psychology Attention Revision - Pattern recognition | S-cool, the revision website". S-cool.co.uk. http://www.s-cool.co.uk/a-level/psychology/attention/revise-it/pattern-recognition.

- Assuming known distributional shape of feature distributions per class, such as the Gaussian shape.

- No distributional assumption regarding shape of feature distributions per class.

Further reading

- Fukunaga, Keinosuke (1990). Introduction to Statistical Pattern Recognition (2nd ed.). Boston: Academic Press. ISBN 978-0-12-269851-4. https://archive.org/details/introductiontost1990fuku.

- Hornegger, Joachim; Paulus, Dietrich W. R. (1999). Applied Pattern Recognition: A Practical Introduction to Image and Speech Processing in C++ (2nd ed.). San Francisco: Morgan Kaufmann Publishers. ISBN 978-3-528-15558-2.

- Schuermann, Juergen (1996). Pattern Classification: A Unified View of Statistical and Neural Approaches. New York: Wiley. ISBN 978-0-471-13534-0.

- Toussaint, Godfried T., ed. (1988). Computational Morphology. Amsterdam: North-Holland Publishing Company. ISBN 9781483296722. https://books.google.com/books?id=ObOjBQAAQBAJ.

- Kulikowski, Casimir A.; Weiss, Sholom M. (1991). Computer Systems That Learn: Classification and Prediction Methods from Statistics, Neural Nets, Machine Learning, and Expert Systems. San Francisco: Morgan Kaufmann Publishers. ISBN 978-1-55860-065-2.

- Duda, Richard O.; Hart, Peter E.; Stork, David G. (2000). Pattern Classification (2nd ed.). Wiley-Interscience. ISBN 978-0471056690. https://books.google.com/books?id=Br33IRC3PkQC.

- Jain, Anil K.; Duin, Robert P. W.; Mao, Jianchang (2000). "Statistical pattern recognition: a review". IEEE Transactions on Pattern Analysis and Machine Intelligence 22 (1): 4–37. doi:10.1109/34.824819.

- An introductory tutorial to classifiers (introducing the basic terms, with numeric example)

- Kovalevsky, V. A. (1980). Image Pattern Recognition. New York, NY: Springer New York. ISBN 978-1-4612-6033-2. OCLC 852790446.

External links

- The International Association for Pattern Recognition

- List of Pattern Recognition web sites

- Journal of Pattern Recognition Research

- Pattern Recognition Info

- Pattern Recognition (Journal of the Pattern Recognition Society)

- International Journal of Pattern Recognition and Artificial Intelligence

- International Journal of Applied Pattern Recognition

- Open Pattern Recognition Project, intended to be an open source platform for sharing algorithms of pattern recognition

- Improved Fast Pattern Matching