Barrier function

In constrained optimization, a field of mathematics, a barrier function is a continuous function whose value at a point increases to infinity as the point approaches the boundary of the feasible region of an optimization problem.[1][2] Such functions are used to replace inequality constraints with a penalizing term in the objective function that is easier to handle. A barrier function is also called an interior penalty function, as it is a penalty function that forces the solution to remain within the interior of the feasible region.

The two most common types of barrier functions are inverse barrier functions and logarithmic barrier functions. Renewed interest in logarithmic barrier functions was motivated by their connection with primal-dual interior-point methods.

Motivation

Consider the following constrained optimization problem:

- minimize f(x)

- subject to x ≤ b

where b is some constant. If one wishes to remove the inequality constraint, the problem can be re-formulated as

- minimize f(x) + c(x),

- where c(x) = ∞ if x > b, and zero otherwise.

This problem is equivalent to the first. It gets rid of the inequality, but introduces the issue that the penalty function c, and therefore the objective function f(x) + c(x), is discontinuous, preventing the use of calculus to solve it.

A barrier function is a continuous approximation g to c that tends to infinity as x approaches b from below, that is, from inside the feasible region. Using such a function, a new optimization problem is formulated:

- minimize f(x) + μ g(x)

where μ > 0 is a free parameter. This problem is not equivalent to the original, but as μ approaches zero, it becomes an ever-better approximation.[3]
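The effect of shrinking μ can be checked numerically. The following is a minimal sketch, not a production solver: the objective f(x) = (x − 2)², the bound b = 1, the choice of the logarithmic barrier g(x) = −log(b − x), and all function names are assumptions made for illustration. The constrained minimizer of this toy problem is x = 1, because the unconstrained minimizer x = 2 is infeasible.

```python
import math

B = 1.0  # assumed constraint bound: x <= B


def f(x):
    """Illustrative objective (an assumption); its unconstrained minimizer x = 2 is infeasible."""
    return (x - 2.0) ** 2


def barrier_objective(x, mu):
    """f(x) + mu * g(x) with the logarithmic barrier g(x) = -log(B - x)."""
    if x >= B:
        return math.inf  # outside the interior of the feasible region
    return f(x) - mu * math.log(B - x)


def ternary_search_min(fun, lo, hi, iters=200):
    """Locate the minimizer of a unimodal function on the interval (lo, hi)."""
    for _ in range(iters):
        m1 = lo + (hi - lo) / 3.0
        m2 = hi - (hi - lo) / 3.0
        if fun(m1) < fun(m2):
            hi = m2
        else:
            lo = m1
    return 0.5 * (lo + hi)


def barrier_minimizer(mu):
    """Minimizer of the barrier problem for a given mu > 0."""
    return ternary_search_min(lambda x: barrier_objective(x, mu), B - 10.0, B)


if __name__ == "__main__":
    for mu in (1.0, 0.1, 0.01, 0.001):
        print(f"mu = {mu:<6} x*(mu) = {barrier_minimizer(mu):.6f}")
```

For this particular choice of f and g, setting the derivative to zero gives the closed form x*(μ) = (3 − √(1 + 2μ))/2, which stays strictly below 1 and tends to 1 as μ approaches zero.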

Logarithmic barrier function

For logarithmic barrier functions, [math]\displaystyle{ g(x,b) }[/math] is defined as [math]\displaystyle{ -\log(b-x) }[/math] when [math]\displaystyle{ x \lt b }[/math] and [math]\displaystyle{ \infty }[/math] otherwise. This is the one-dimensional case; see below for a definition in higher dimensions. The definition relies on the fact that [math]\displaystyle{ \log(t) }[/math] tends to negative infinity as [math]\displaystyle{ t }[/math] tends to 0 from above.

Adding this term contributes a gradient to the objective that pushes [math]\displaystyle{ x }[/math] away from the boundary (here, toward values lower than [math]\displaystyle{ b }[/math]), while having relatively little effect on the objective far from the boundary.

Logarithmic barrier functions may be favored over less computationally expensive inverse barrier functions depending on the function being optimized.
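As a rough illustration of how the two barrier types behave, the sketch below evaluates both at points that approach the boundary. The inverse barrier is taken here to be 1/(b − x), which is a common form but an assumption as far as this article is concerned; the function names are likewise illustrative.

```python
import math


def log_barrier(x, b):
    """Logarithmic barrier: -log(b - x) for x < b, +inf otherwise."""
    return -math.log(b - x) if x < b else math.inf


def log_barrier_grad(x, b):
    """Derivative of -log(b - x) with respect to x, valid for x < b."""
    return 1.0 / (b - x)


def inverse_barrier(x, b):
    """Inverse barrier (assumed form): 1 / (b - x) for x < b, +inf otherwise."""
    return 1.0 / (b - x) if x < b else math.inf


if __name__ == "__main__":
    b = 1.0
    for x in (-9.0, 0.0, 0.9, 0.99, 0.999):
        print(f"x = {x:>6}: log = {log_barrier(x, b):8.4f}, "
              f"d/dx log = {log_barrier_grad(x, b):9.3f}, "
              f"inverse = {inverse_barrier(x, b):9.3f}")
```

Far from the boundary the derivative of the logarithmic barrier is small, and it grows without bound as x approaches b. Both barriers diverge at the boundary, with the inverse barrier growing faster.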

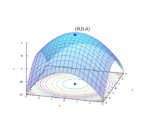

Higher dimensions

Extending to higher dimensions is simple, provided each variable is constrained independently of the others. For each variable [math]\displaystyle{ x_i }[/math] that must remain strictly lower than [math]\displaystyle{ b_i }[/math], add the term [math]\displaystyle{ -\log(b_i-x_i) }[/math] to the barrier function.
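A minimal sketch of this per-variable construction, assuming the point and the bounds are given as equal-length sequences of floats (the function name is hypothetical):

```python
import math


def separable_log_barrier(x, b):
    """Sum of -log(b_i - x_i); +inf unless every x_i is strictly below b_i."""
    if len(x) != len(b):
        raise ValueError("x and b must have the same length")
    if any(xi >= bi for xi, bi in zip(x, b)):
        return math.inf
    return sum(-math.log(bi - xi) for xi, bi in zip(x, b))


if __name__ == "__main__":
    print(separable_log_barrier([0.0, 1.0], [1.0, 2.0]))  # both slacks equal 1
    print(separable_log_barrier([1.0, 0.0], [1.0, 2.0]))  # first bound is violated
```

A single violated or active bound makes the whole sum infinite, so the barrier keeps every coordinate strictly inside its bound.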

Formal definition

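Minimize [math]\displaystyle{ \mathbf c^T \mathbf x }[/math] subject to [math]\displaystyle{ \mathbf a_i^T \mathbf x \le b_i, i = 1,\ldots,m }[/math], where [math]\displaystyle{ \mathbf a_i^T }[/math] is the i-th row of the matrix [math]\displaystyle{ A }[/math].

Assume the problem is strictly feasible: [math]\displaystyle{ \{\mathbf x|A \mathbf x \lt \mathbf b\}\ne\emptyset }[/math]

Define the logarithmic barrier [math]\displaystyle{ g(\mathbf x) = \begin{cases} \sum_{i=1}^m -\log(b_i - \mathbf a_i^T \mathbf x) & \text{for } A\mathbf x\lt \mathbf b \\ +\infty & \text{otherwise} \end{cases} }[/math]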
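The definition can be evaluated directly. The sketch below is illustrative only: it represents A as a list of rows and x, b as lists of floats, and the helper names are hypothetical. It also returns the gradient, which for strictly feasible x is the sum over i of a_i / (b_i − a_iᵀx).

```python
import math


def dot(u, v):
    """Inner product of two equal-length sequences."""
    return sum(ui * vi for ui, vi in zip(u, v))


def log_barrier(A, b, x):
    """g(x) = sum_i -log(b_i - a_i^T x) if A x < b holds strictly, else +inf."""
    slacks = [bi - dot(ai, x) for ai, bi in zip(A, b)]
    if any(s <= 0.0 for s in slacks):
        return math.inf
    return -sum(math.log(s) for s in slacks)


def log_barrier_grad(A, b, x):
    """Gradient of g at a strictly feasible x: sum_i a_i / (b_i - a_i^T x)."""
    slacks = [bi - dot(ai, x) for ai, bi in zip(A, b)]
    if any(s <= 0.0 for s in slacks):
        raise ValueError("x is not strictly feasible")
    return [sum(ai[j] / s for ai, s in zip(A, slacks)) for j in range(len(x))]


if __name__ == "__main__":
    # Assumed example: the open unit box 0 < x_j < 1 written as A x < b.
    A = [[1.0, 0.0], [-1.0, 0.0], [0.0, 1.0], [0.0, -1.0]]
    b = [1.0, 0.0, 1.0, 0.0]
    print(log_barrier(A, b, [0.5, 0.5]))
    print(log_barrier_grad(A, b, [0.5, 0.5]))
    print(log_barrier(A, b, [1.0, 0.5]))
```

In the box example the gradient vanishes at the center, where the pushes from opposite faces cancel. A point on the boundary yields an infinite barrier value.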

References

- [1] Nesterov, Yurii (2018). Lectures on Convex Optimization (2 ed.). Cham, Switzerland: Springer. p. 56. ISBN 978-3-319-91577-7.

- [2] Nocedal, Jorge; Wright, Stephen (2006). Numerical Optimization (2 ed.). New York, NY: Springer. p. 566. ISBN 0-387-30303-0.

- [3] Vanderbei, Robert J. (2001). Linear Programming: Foundations and Extensions. Kluwer. pp. 277–279.

External links

- Lecture 14: Barrier method from Professor Lieven Vandenberghe of UCLA
