Combinatorial optimization

| Network science | ||||

|---|---|---|---|---|

| Network types | ||||

| Graphs | ||||

|

||||

| Models | ||||

|

||||

| ||||

Combinatorial optimization is a subfield of mathematical optimization that consists of finding an optimal object from a finite set of objects,[1] where the set of feasible solutions is discrete or can be reduced to a discrete set. Typical combinatorial optimization problems are the travelling salesman problem ("TSP"), the minimum spanning tree problem ("MST"), and the knapsack problem. In many such problems, such as the ones previously mentioned, exhaustive search is not tractable, and so specialized algorithms that quickly rule out large parts of the search space or approximation algorithms must be resorted to instead.

Combinatorial optimization is related to operations research, algorithm theory, and computational complexity theory. It has important applications in several fields, including artificial intelligence, machine learning, auction theory, software engineering, VLSI, applied mathematics and theoretical computer science.

Some research literature[2] considers discrete optimization to consist of integer programming together with combinatorial optimization (which in a turn is composed of optimization problems dealing with graph structures), although all of these topics have closely intertwined research literature. [clarification needed]

Applications

Applications of combinatorial optimization include, but are not limited to:

- Logistics[3]

- Supply chain optimization[4]

- Developing the best airline network of spokes and destinations

- Deciding which taxis in a fleet to route to pick up fares

- Determining the optimal way to deliver packages

- Allocating jobs to people optimally

- Designing water distribution networks

- Earth science problems (e.g. reservoir flow-rates)[5]

Methods

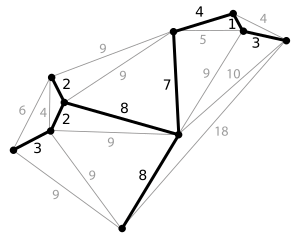

There is a large amount of literature on polynomial-time algorithms for certain special classes of discrete optimization. A considerable amount of it is unified by the theory of linear programming. Some examples of combinatorial optimization problems that are covered by this framework are shortest paths and shortest-path trees, flows and circulations, spanning trees, matching, and matroid problems.

For NP-complete discrete optimization problems, current research literature includes the following topics:

- polynomial-time exactly solvable special cases of the problem at hand (e.g. fixed-parameter tractable problems)

- algorithms that perform well on "random" instances (e.g. for the traveling salesman problem)

- approximation algorithms that run in polynomial time and find a solution that is close to optimal

- parameterized approximation algorithms that run in FPT time and find a solution close to the optimum

- solving real-world instances that arise in practice and do not necessarily exhibit the worst-case behavior of in NP-complete problems (e.g. real-world TSP instances with tens of thousands of nodes[6]).

Combinatorial optimization problems can be viewed as searching for the best element of some set of discrete items; therefore, in principle, any sort of search algorithm or metaheuristic can be used to solve them. Perhaps the most universally applicable[weasel words] approaches are branch-and-bound (an exact algorithm which can be stopped at any point in time to serve as heuristic), branch-and-cut (uses linear optimisation to generate bounds), dynamic programming (a recursive solution construction with limited search window) and tabu search (a greedy-type swapping algorithm). However, generic search algorithms are not guaranteed to find an optimal solution first, nor are they guaranteed to run quickly (in polynomial time). Since some discrete optimization problems are NP-complete, such as the traveling salesman (decision) problem,[7] this is expected unless P=NP.

For each combinatorial optimization problem, there is a corresponding decision problem that asks whether there is a feasible solution for some particular measure . For example, if there is a graph which contains vertices and , an optimization problem might be "find a path from to that uses the fewest edges". This problem might have an answer of, say, 4. A corresponding decision problem would be "is there a path from to that uses 10 or fewer edges?" This problem can be answered with a simple 'yes' or 'no'.

The field of approximation algorithms deals with algorithms to find near-optimal solutions to hard problems. The usual decision version is then an inadequate definition of the problem since it only specifies acceptable solutions. Even though we could introduce suitable decision problems, the problem is then more naturally characterized as an optimization problem.[8]

NP optimization problem

An NP-optimization problem (NPO) is a combinatorial optimization problem with the following additional conditions.[9] Note that the below referred polynomials are functions of the size of the respective functions' inputs, not the size of some implicit set of input instances.

- the size of every feasible solution is polynomially bounded in the size of the given instance ,

- the languages and can be recognized in polynomial time, and

- is polynomial-time computable.

This implies that the corresponding decision problem is in NP. In computer science, interesting optimization problems usually have the above properties and are therefore NPO problems. A problem is additionally called a P-optimization (PO) problem, if there exists an algorithm which finds optimal solutions in polynomial time. Often, when dealing with the class NPO, one is interested in optimization problems for which the decision versions are NP-complete. Note that hardness relations are always with respect to some reduction. Due to the connection between approximation algorithms and computational optimization problems, reductions which preserve approximation in some respect are for this subject preferred than the usual Turing and Karp reductions. An example of such a reduction would be L-reduction. For this reason, optimization problems with NP-complete decision versions are not necessarily called NPO-complete.[10]

NPO is divided into the following subclasses according to their approximability:[9]

- NPO(I): Equals FPTAS. Contains the Knapsack problem.

- NPO(II): Equals PTAS. Contains the Makespan scheduling problem.

- NPO(III): :The class of NPO problems that have polynomial-time algorithms which computes solutions with a cost at most c times the optimal cost (for minimization problems) or a cost at least of the optimal cost (for maximization problems). In Hromkovič's book[which?], excluded from this class are all NPO(II)-problems save if P=NP. Without the exclusion, equals APX. Contains MAX-SAT and metric TSP.

- NPO(IV): :The class of NPO problems with polynomial-time algorithms approximating the optimal solution by a ratio that is polynomial in a logarithm of the size of the input. In Hromkovič's book, all NPO(III)-problems are excluded from this class unless P=NP. Contains the set cover problem.

- NPO(V): :The class of NPO problems with polynomial-time algorithms approximating the optimal solution by a ratio bounded by some function on n. In Hromkovic's book, all NPO(IV)-problems are excluded from this class unless P=NP. Contains the TSP and clique problem.

An NPO problem is called polynomially bounded (PB) if, for every instance and for every solution , the measure is bounded by a polynomial function of the size of . The class NPOPB is the class of NPO problems that are polynomially-bounded.

Specific problems

- Assignment problem

- Bin packing problem

- Closure problem

- Constraint satisfaction problem

- Cutting stock problem

- Dominating set problem

- Integer programming

- Job shop scheduling

- Knapsack problem

- Minimum relevant variables in linear system

- Minimum spanning tree

- Nurse scheduling problem

- Set cover problem

- Talent Scheduling

- Traveling salesman problem

- Vehicle rescheduling problem

- Vehicle routing problem

- Weapon target assignment problem

See also

Notes

- ↑ Schrijver 2003, p. 1.

- ↑ Discrete Optimization. Elsevier. http://www.elsevier.com/locate/disopt. Retrieved 2009-06-08.

- ↑ Sbihi, Abdelkader; Eglese, Richard W. (2007). "Combinatorial optimization and Green Logistics". 4OR 5 (2): 99–116. doi:10.1007/s10288-007-0047-3. https://hal.archives-ouvertes.fr/hal-00644076/file/COGL_4or.pdf. Retrieved 2019-12-26.

- ↑ Eskandarpour, Majid; Dejax, Pierre; Miemczyk, Joe; Péton, Olivier (2015). "Sustainable supply chain network design: An optimization-oriented review". Omega 54: 11–32. doi:10.1016/j.omega.2015.01.006. https://hal.archives-ouvertes.fr/hal-01154605/file/eskandarpour-et-al%20review%20R2.pdf. Retrieved 2019-12-26.

- ↑ Hobé, Alex; Vogler, Daniel; Seybold, Martin P.; Ebigbo, Anozie; Settgast, Randolph R.; Saar, Martin O. (2018). "Estimating fluid flow rates through fracture networks using combinatorial optimization". Advances in Water Resources 122: 85–97. doi:10.1016/j.advwatres.2018.10.002. Bibcode: 2018AdWR..122...85H. https://www.sciencedirect.com/science/article/abs/pii/S0309170818300666. Retrieved 2020-09-16.

- ↑ Cook 2016.

- ↑ "Approximation-TSP". https://www.csd.uoc.gr/~hy583/papers/ch11.pdf.

- ↑ Ausiello, Giorgio (2003), Complexity and Approximation (Corrected ed.), Springer, ISBN 978-3-540-65431-5

- ↑ 9.0 9.1 Hromkovic, Juraj (2002), Algorithmics for Hard Problems, Texts in Theoretical Computer Science (2nd ed.), Springer, ISBN 978-3-540-44134-2

- ↑ Kann, Viggo (1992), On the Approximability of NP-complete Optimization Problems, Royal Institute of Technology, Sweden, ISBN 91-7170-082-X

- ↑ Take one city, and take all possible orders of the other 14 cities. Then divide by two because it does not matter in which direction in time they come after each other: 14!/2 = 43,589,145,600.

References

- Beasley, J. E.. "Integer programming". http://people.brunel.ac.uk/~mastjjb/jeb/or/ip.html.

- Cook, William J.; Cunningham, William H.; Pulleyblank, William R.; Schrijver, Alexander (1997). Combinatorial Optimization. Wiley. ISBN 0-471-55894-X.

- Cook, William (2016). "Optimal TSP Tours". University of Waterloo. http://www.tsp.gatech.edu/optimal/index.html. (Information on the largest TSP instances solved to date.)

- "A Compendium of NP Optimization Problems". http://www.nada.kth.se/%7Eviggo/wwwcompendium/. (This is a continuously updated catalog of approximability results for NP optimization problems.)

- Quantum Annealing and Related Optimization Methods. Lecture Notes in Physics. 679. Springer. 2005. ISBN 978-3-540-27987-7. Bibcode: 2005qnro.book.....D.

- Das, Arnab; Chakrabarti, Bikas K (2008). "Colloquium: Quantum annealing and analog quantum computation". Rev. Mod. Phys. 80 (3): 1061. doi:10.1103/RevModPhys.80.1061. Bibcode: 2008RvMP...80.1061D.

- Lawler, Eugene (2001). Combinatorial Optimization: Networks and Matroids. Dover. ISBN 0-486-41453-1.

- Lee, Jon (2004). A First Course in Combinatorial Optimization. Cambridge University Press. ISBN 0-521-01012-8. https://books.google.com/books?id=3pL1B7WVYnAC.

- Papadimitriou, Christos H.; Steiglitz, Kenneth (July 1998). Combinatorial Optimization : Algorithms and Complexity. Dover. ISBN 0-486-40258-4.

- Schrijver, Alexander (2003). Combinatorial Optimization: Polyhedra and Efficiency. Algorithms and Combinatorics. 24. Springer. ISBN 9783540443896. https://books.google.com/books?id=mqGeSQ6dJycC.

- Schrijver, Alexander (2005). "On the history of combinatorial optimization (till 1960)". Handbook of Discrete Optimization. Elsevier. pp. 1–68. http://homepages.cwi.nl/~lex/files/histco.pdf.

- Schrijver, Alexander (February 1, 2006). A Course in Combinatorial Optimization. http://homepages.cwi.nl/~lex/files/dict.pdf.

- Sierksma, Gerard; Ghosh, Diptesh (2010). Networks in Action; Text and Computer Exercises in Network Optimization. Springer. ISBN 978-1-4419-5512-8.

- Gerard Sierksma; Yori Zwols (2015). Linear and Integer Optimization: Theory and Practice. CRC Press. ISBN 978-1-498-71016-9.

- Pintea, C-M. (2014). Advances in Bio-inspired Computing for Combinatorial Optimization Problem. Intelligent Systems Reference Library. Springer. ISBN 978-3-642-40178-7. https://www.springer.com/la/book/9783642401787.

External links

- Journal of Combinatorial Optimization

- The Aussois Combinatorial Optimization Workshop

- Java Combinatorial Optimization Platform (open source code)

- Why is scheduling people hard?

- Complexity classes for optimization problems / Stefan Kugele

eo:Diskreta optimumigo

|