Quasi-Newton method

Quasi-Newton methods are used to find either zeroes or local maxima and minima of functions, as an alternative to Newton's method. They can be used if the Jacobian or Hessian is unavailable or is too expensive to compute at every iteration. The "full" Newton's method requires the Jacobian in order to search for zeroes, or the Hessian for finding extrema.

Search for zeros: root finding

Newton's method to find zeroes of a function $g$ of multiple variables is given by $x_{n+1} = x_n - [J_g(x_n)]^{-1} g(x_n)$, where $[J_g(x_n)]^{-1}$ is the left inverse of the Jacobian matrix $J_g(x_n)$ of $g$ evaluated for $x_n$.

Strictly speaking, any method that replaces the exact Jacobian $J_g(x_n)$ with an approximation is a quasi-Newton method.[1] For instance, the chord method (where $J_g(x_n)$ is replaced by $J_g(x_0)$ for all iterations) is a simple example. The methods given below for optimization refer to an important subclass of quasi-Newton methods, secant methods.[2]
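The difference between the full Newton iteration and the chord method can be sketched as follows. This is a minimal illustration assuming NumPy; the test system `g`, its Jacobian, and the function name `newton_or_chord` are hypothetical choices made for the example, not part of any library.

```python
import numpy as np

def g(x):
    """Hypothetical test system: a circle of radius 2 intersected with the line x0 = x1."""
    return np.array([x[0] ** 2 + x[1] ** 2 - 4.0, x[0] - x[1]])

def jacobian(x):
    """Exact Jacobian of the test system g."""
    return np.array([[2.0 * x[0], 2.0 * x[1]],
                     [1.0, -1.0]])

def newton_or_chord(g, jac, x0, chord=False, tol=1e-10, max_iter=100):
    """Iterate x <- x - J^{-1} g(x); with chord=True the Jacobian at x0 is reused."""
    x = np.asarray(x0, dtype=float)
    J = jac(x)                      # Jacobian at the starting point
    for k in range(max_iter):
        gx = g(x)
        if np.linalg.norm(gx) < tol:
            return x, k
        if not chord:
            J = jac(x)              # full Newton: re-evaluate at every iterate
        # Solve J * step = g(x) instead of forming the inverse explicitly.
        x = x - np.linalg.solve(J, gx)
    raise RuntimeError("no convergence within max_iter iterations")

root_newton, iters_newton = newton_or_chord(g, jacobian, [1.0, 2.0])
root_chord, iters_chord = newton_or_chord(g, jacobian, [1.0, 2.0], chord=True)
```

Both variants approach the same root here; the chord method avoids re-evaluating the Jacobian at the price of slower (linear rather than quadratic) convergence.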

Using methods developed to find extrema in order to find zeroes is not always a good idea, as the majority of the methods used to find extrema require that the matrix that is used is symmetrical. While this holds in the context of the search for extrema, it rarely holds when searching for zeroes. Broyden's "good" and "bad" methods are two methods commonly used to find extrema that can also be applied to find zeroes. Other methods that can be used are the column-updating method, the inverse column-updating method, the quasi-Newton least squares method and the quasi-Newton inverse least squares method.
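As an illustration of a root-finding update that does not assume symmetry, the following sketch applies Broyden's "good" method, in which the Jacobian estimate receives the rank-one correction $(\Delta g - J \Delta x)\Delta x^T / (\Delta x^T \Delta x)$ after each step. It assumes NumPy; the test system, the starting matrix, and the name `broyden_good` are hypothetical.

```python
import numpy as np

def g(x):
    """Hypothetical test system: a circle of radius 2 intersected with the line x0 = x1."""
    return np.array([x[0] ** 2 + x[1] ** 2 - 4.0, x[0] - x[1]])

def broyden_good(g, x0, J0, tol=1e-10, max_iter=100):
    """Find a zero of g, updating a Jacobian estimate J by Broyden's "good" formula."""
    x = np.asarray(x0, dtype=float)
    J = np.array(J0, dtype=float)   # copy, so the caller's matrix is not modified
    gx = g(x)
    for _ in range(max_iter):
        if np.linalg.norm(gx) < tol:
            return x, J
        dx = np.linalg.solve(J, -gx)        # quasi-Newton step
        x = x + dx
        g_new = g(x)
        dg = g_new - gx
        # Rank-one update so that the new estimate satisfies J_new @ dx == dg.
        J += np.outer(dg - J @ dx, dx) / (dx @ dx)
        gx = g_new
    raise RuntimeError("no convergence within max_iter iterations")

# Start from the exact Jacobian at x0 = (1, 2); it is never re-evaluated afterwards.
J_start = [[2.0, 4.0], [1.0, -1.0]]
root, J_final = broyden_good(g, [1.0, 2.0], J_start)
```

Note that the updated matrix is in general not symmetric, which is acceptable for root finding but not what Hessian-based updates assume.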

More recently quasi-Newton methods have been applied to find the solution of multiple coupled systems of equations (e.g. fluid–structure interaction problems or interaction problems in physics). They allow the solution to be found by solving each constituent system separately (which is simpler than the global system) in a cyclic, iterative fashion until the solution of the global system is found.[2][3]

Search for extrema: optimization

The search for a minimum or maximum of a scalar-valued function is nothing else than the search for the zeroes of the gradient of that function. Therefore, quasi-Newton methods can be readily applied to find extrema of a function. In other words, if $g$ is the gradient of $f$, then searching for the zeroes of the vector-valued function $g$ corresponds to the search for the extrema of the scalar-valued function $f$; the Jacobian of $g$ now becomes the Hessian of $f$. The main difference is that the Hessian matrix is a symmetric matrix, unlike the Jacobian when searching for zeroes. Most quasi-Newton methods used in optimization exploit this property.

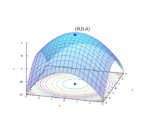

In optimization, quasi-Newton methods (a special case of variable-metric methods) are algorithms for finding local maxima and minima of functions. Quasi-Newton methods are based on Newton's method to find the stationary point of a function, where the gradient is 0. Newton's method assumes that the function can be locally approximated as a quadratic in the region around the optimum, and uses the first and second derivatives to find the stationary point. In higher dimensions, Newton's method uses the gradient and the Hessian matrix of second derivatives of the function to be minimized.

In quasi-Newton methods the Hessian matrix does not need to be computed. The Hessian is updated by analyzing successive gradient vectors instead. Quasi-Newton methods are a generalization of the secant method to find the root of the first derivative for multidimensional problems. In multiple dimensions the secant equation is under-determined, and quasi-Newton methods differ in how they constrain the solution, typically by adding a simple low-rank update to the current estimate of the Hessian.
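In one dimension the idea reduces to the secant method applied to the first derivative: the second derivative is never evaluated, only estimated from two successive derivative values. A minimal sketch, in which the test function $f(x) = e^x - 2x$ and the name `secant_stationary_point` are assumptions made for the example:

```python
import math

def secant_stationary_point(df, x_prev, x_curr, tol=1e-10, max_iter=100):
    """Find a zero of the derivative df using only derivative values (secant method)."""
    g_prev, g_curr = df(x_prev), df(x_curr)
    for _ in range(max_iter):
        if abs(g_curr) < tol:
            return x_curr
        # Secant estimate of the second derivative from two successive gradients.
        b = (g_curr - g_prev) / (x_curr - x_prev)
        x_prev, g_prev = x_curr, g_curr
        x_curr = x_curr - g_curr / b        # Newton step with b in place of f''
        g_curr = df(x_curr)
    raise RuntimeError("no convergence within max_iter iterations")

# f(x) = exp(x) - 2x has derivative exp(x) - 2 and its minimum at x = ln 2.
x_star = secant_stationary_point(lambda x: math.exp(x) - 2.0, 0.0, 1.0)
```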

The first quasi-Newton algorithm was proposed in 1959 by William C. Davidon, a physicist working at Argonne National Laboratory. His method, now known as the DFP updating formula, was popularized by Fletcher and Powell in 1963 but is rarely used today. The most common quasi-Newton algorithms are currently the SR1 formula (for "symmetric rank-one"), the BHHH method, the widespread BFGS method (suggested independently by Broyden, Fletcher, Goldfarb, and Shanno, in 1970), and its low-memory extension L-BFGS. Broyden's class is a linear combination of the DFP and BFGS methods.

The SR1 formula does not guarantee that the updated matrix remains positive-definite, so it can be used for indefinite problems. Broyden's method does not require the update matrix to be symmetric and is used to find the root of a general system of equations (rather than the gradient) by updating the Jacobian (rather than the Hessian).

One of the chief advantages of quasi-Newton methods over Newton's method is that the Hessian matrix (or, in the case of quasi-Newton methods, its approximation) $B$ does not need to be inverted. Newton's method, and its derivatives such as interior point methods, require the Hessian to be inverted, which is typically implemented by solving a system of linear equations and is often quite costly. In contrast, quasi-Newton methods usually generate an estimate of $B^{-1}$ directly.
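One way to see why the inverse can be maintained directly is the Sherman–Morrison formula: when a matrix changes by a rank-one term $u v^T$, its inverse can be corrected in $O(n^2)$ operations instead of being recomputed. The sketch below assumes NumPy; the matrix and vectors are arbitrary illustrative values, and `sherman_morrison` is a hypothetical helper name.

```python
import numpy as np

def sherman_morrison(A_inv, u, v):
    """Return the inverse of (A + u v^T) given A_inv, assuming 1 + v^T A_inv u != 0."""
    A_inv_u = A_inv @ u
    v_A_inv = v @ A_inv
    return A_inv - np.outer(A_inv_u, v_A_inv) / (1.0 + v @ A_inv_u)

B = np.array([[4.0, 1.0, 0.0],
              [1.0, 3.0, 1.0],
              [0.0, 1.0, 2.0]])
u = np.array([1.0, 0.0, 1.0])
v = np.array([0.5, 1.0, 0.0])

B_inv = np.linalg.inv(B)                        # computed once, up front
updated_inv = sherman_morrison(B_inv, u, v)     # cheap correction of the inverse
reference = np.linalg.inv(B + np.outer(u, v))   # full re-inversion, for comparison only
```

Rank-one and rank-two quasi-Newton updates fit this pattern, which is why the inverse estimate can be carried along from one iteration to the next.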

As in Newton's method, one uses a second-order approximation to find the minimum of a function $f(x)$. The Taylor series of $f(x)$ around an iterate $x_k$ is

$$f(x_k + \Delta x) \approx f(x_k) + \nabla f(x_k)^T \Delta x + \frac{1}{2} \Delta x^T B \, \Delta x,$$

where $\nabla f$ is the gradient, and $B$ an approximation to the Hessian matrix.[4] The gradient of this approximation (with respect to $\Delta x$) is

$$\nabla f(x_k + \Delta x) \approx \nabla f(x_k) + B \, \Delta x,$$

and setting this gradient to zero (which is the goal of optimization) provides the Newton step:

$$\Delta x = -B^{-1} \nabla f(x_k).$$

The Hessian approximation $B$ is chosen to satisfy

$$\nabla f(x_k + \Delta x) = \nabla f(x_k) + B \, \Delta x,$$

which is called the secant equation (the Taylor series of the gradient itself). In more than one dimension $B$ is underdetermined. In one dimension, solving for $B$ and applying the Newton step with the updated value is equivalent to the secant method. The various quasi-Newton methods differ in their choice of the solution to the secant equation (in one dimension, all the variants are equivalent). Most methods (but with exceptions, such as Broyden's method) seek a symmetric solution ($B^T = B$); furthermore, the variants listed below can be motivated by finding an update $B_{k+1}$ that is as close as possible to $B_k$ in some norm; that is, $B_{k+1} = \operatorname{argmin}_B \|B - B_k\|_V$, where $V$ is some positive-definite matrix that defines the norm. An approximate initial value $B_0 = \beta I$ is often sufficient to achieve rapid convergence, although there is no general strategy to choose $\beta$.[5] Note that $B_0$ should be positive-definite. The unknown $x_k$ is updated by applying the Newton step calculated using the current approximate Hessian matrix $B_k$:

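- $\Delta x_k = -\alpha_k B_k^{-1} \nabla f(x_k)$, with $\alpha_k$ chosen to satisfy the Wolfe conditions;

- $x_{k+1} = x_k + \Delta x_k$;

- The gradient computed at the new point, $\nabla f(x_{k+1})$, and

$$y_k = \nabla f(x_{k+1}) - \nabla f(x_k)$$

are used to update the approximate Hessian $B_{k+1}$, or directly its inverse $H_{k+1} = B_{k+1}^{-1}$ using the Sherman–Morrison formula.

- A key property of the BFGS and DFP updates is that if $B_k$ is positive-definite, and $\alpha_k$ is chosen to satisfy the Wolfe conditions, then $B_{k+1}$ is also positive-definite.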
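The iteration described by these steps can be sketched as follows, using the BFGS update of the inverse approximation. This is an illustration under stated assumptions rather than a reference implementation: it assumes NumPy, starts from the identity matrix, and replaces a full Wolfe line search with simple backtracking on the sufficient-decrease (Armijo) condition, skipping the update whenever the curvature $y_k^T \Delta x_k$ is not positive. The convex test function and the name `bfgs_minimize` are hypothetical.

```python
import numpy as np

def f(x):
    """Hypothetical convex test function with its minimum at (1, 2)."""
    return np.exp(x[0] - 1.0) - x[0] + (x[1] - 2.0 * x[0]) ** 2

def grad_f(x):
    r = x[1] - 2.0 * x[0]
    return np.array([np.exp(x[0] - 1.0) - 1.0 - 4.0 * r, 2.0 * r])

def bfgs_minimize(f, grad, x0, tol=1e-6, max_iter=200):
    x = np.asarray(x0, dtype=float)
    identity = np.eye(x.size)
    H = identity.copy()                 # inverse Hessian estimate, H_0 = I
    gx = grad(x)
    for k in range(max_iter):
        if np.linalg.norm(gx) < tol:
            return x, k
        p = -H @ gx                     # search direction -B_k^{-1} grad f(x_k)
        fx, slope, alpha = f(x), gx @ p, 1.0
        # Backtracking on the Armijo condition (a simplification of a Wolfe search).
        while alpha > 1e-12 and f(x + alpha * p) > fx + 1e-4 * alpha * slope:
            alpha *= 0.5
        dx = alpha * p
        x_new = x + dx
        g_new = grad(x_new)
        y = g_new - gx
        curvature = y @ dx
        if curvature > 1e-12:           # keeps H positive-definite
            rho = 1.0 / curvature
            V = identity - rho * np.outer(dx, y)
            H = V @ H @ V.T + rho * np.outer(dx, dx)   # BFGS inverse update
        x, gx = x_new, g_new
    raise RuntimeError("no convergence within max_iter iterations")

x_min, n_iter = bfgs_minimize(f, grad_f, [0.0, 0.0])
```

Updating `H` rather than the Hessian approximation itself means that no linear system has to be solved inside the loop.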

The most popular update formulas are:

- BFGS

- Broyden

- Broyden family

- DFP

- SR1
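For reference, the updates of the Hessian approximation for these methods are commonly written as follows, with $\Delta x_k$ the step and $y_k$ the gradient difference. These are the standard textbook forms, given here as an aid; each one satisfies the secant equation $B_{k+1} \Delta x_k = y_k$.

```latex
\begin{align*}
\text{BFGS:} \quad
  & B_{k+1} = B_k + \frac{y_k y_k^T}{y_k^T \Delta x_k}
    - \frac{B_k \Delta x_k \, \Delta x_k^T B_k}{\Delta x_k^T B_k \Delta x_k} \\
\text{Broyden:} \quad
  & B_{k+1} = B_k + \frac{(y_k - B_k \Delta x_k)\, \Delta x_k^T}{\Delta x_k^T \Delta x_k} \\
\text{Broyden family:} \quad
  & B_{k+1} = (1 - \varphi_k)\, B_{k+1}^{\mathrm{BFGS}} + \varphi_k\, B_{k+1}^{\mathrm{DFP}},
    \qquad \varphi_k \in [0, 1] \\
\text{DFP:} \quad
  & B_{k+1} = \left(I - \frac{y_k \Delta x_k^T}{y_k^T \Delta x_k}\right) B_k
    \left(I - \frac{\Delta x_k y_k^T}{y_k^T \Delta x_k}\right)
    + \frac{y_k y_k^T}{y_k^T \Delta x_k} \\
\text{SR1:} \quad
  & B_{k+1} = B_k + \frac{(y_k - B_k \Delta x_k)(y_k - B_k \Delta x_k)^T}
    {(y_k - B_k \Delta x_k)^T \Delta x_k}
\end{align*}
```

Broyden's update is the only one in this list that does not preserve symmetry, which matches its use for general systems of equations.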

Other methods are Pearson's method, McCormick's method, the Powell symmetric Broyden (PSB) method and Greenstadt's method.[2]

Relationship to matrix inversion

When $f$ is a convex quadratic function with positive-definite Hessian $B$, one would expect the matrices $H_k$ generated by a quasi-Newton method to converge to the inverse Hessian $H = B^{-1}$. This is indeed the case for the class of quasi-Newton methods based on least-change updates.[6]
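A small numerical check of this behaviour, assuming NumPy and exact line searches (which are available in closed form for a quadratic): on an $n$-dimensional strictly convex quadratic, $n$ BFGS steps from the identity matrix reproduce the inverse Hessian up to rounding. The matrix `A` and vector `b` below are arbitrary illustrative values.

```python
import numpy as np

# f(x) = 0.5 x^T A x - b^T x, with symmetric positive-definite Hessian A.
A = np.array([[4.0, 1.0, 0.0],
              [1.0, 3.0, 1.0],
              [0.0, 1.0, 2.0]])
b = np.array([1.0, -2.0, 0.5])

def grad(x):
    return A @ x - b

n = 3
x = np.zeros(n)
H = np.eye(n)                   # initial estimate of the inverse Hessian
gx = grad(x)
for _ in range(n):
    p = -H @ gx
    alpha = -(gx @ p) / (p @ A @ p)     # exact minimizer of f along p
    dx = alpha * p
    x = x + dx
    g_new = grad(x)
    y = g_new - gx
    rho = 1.0 / (y @ dx)
    V = np.eye(n) - rho * np.outer(dx, y)
    H = V @ H @ V.T + rho * np.outer(dx, dx)    # BFGS inverse update
    gx = g_new

error = np.linalg.norm(H - np.linalg.inv(A))    # distance to the true inverse
```

With inexact line searches or non-quadratic functions the agreement is only approximate, and the rate at which $H_k$ approaches the inverse depends on the update used.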

Notable implementations

Implementations of quasi-Newton methods are available in many programming languages.

Notable open source implementations include:

- GNU Octave uses a form of BFGS in its fsolve function, with trust region extensions.

- GNU Scientific Library implements the Broyden–Fletcher–Goldfarb–Shanno (BFGS) algorithm.

- ALGLIB implements (L)BFGS in C++ and C#.

- R's optim general-purpose optimizer routine uses the BFGS method when called with method="BFGS".[7]

- SciPy's scipy.optimize module has fmin_bfgs, and its scipy.optimize.minimize function includes, among other methods, a BFGS implementation.[8]
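As a usage illustration for one of the implementations listed above, the following sketch calls SciPy's BFGS solver on the Rosenbrock test function. It assumes SciPy and NumPy are installed; the starting point is an arbitrary choice, and `rosen` and `rosen_der` are the test function and its gradient shipped with `scipy.optimize`.

```python
import numpy as np
from scipy.optimize import minimize, rosen, rosen_der

x0 = np.array([-1.2, 1.0])      # conventional starting point for this test function

# method="BFGS" selects the quasi-Newton solver; jac supplies the analytic gradient.
result = minimize(rosen, x0, method="BFGS", jac=rosen_der)

x_opt = result.x                # approximate minimizer, close to (1, 1)
n_iterations = result.nit       # number of iterations performed
```

If `jac` is omitted, SciPy falls back to a finite-difference estimate of the gradient, which usually costs more function evaluations.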

Notable proprietary implementations include:

- Mathematica includes quasi-Newton solvers.[9]

- The NAG Library contains several routines[10] for minimizing or maximizing a function[11] which use quasi-Newton algorithms.

- In MATLAB's Optimization Toolbox, the fminunc function uses (among other methods) the BFGS quasi-Newton method.[12] Many of the constrained methods of the Optimization Toolbox use BFGS and the variant L-BFGS.[13]

See also

- BFGS method

- L-BFGS

- OWL-QN

- Broyden's method

- DFP updating formula

- Newton's method

- Newton's method in optimization

- SR1 formula

References

1. Broyden, C. G. (1972). "Quasi-Newton Methods". In Murray, W. (ed.). Numerical Methods for Unconstrained Optimization. London: Academic Press. pp. 87–106. ISBN 0-12-512250-0.

2. Haelterman, Rob (2009). "Analytical study of the Least Squares Quasi-Newton method for interaction problems". PhD Thesis, Ghent University. https://lib.ugent.be/catalog/rug01:001333190.

3. Rob Haelterman, Dirk Van Eester, Daan Verleyen (2015). "Accelerating the solution of a physics model inside a tokamak using the (Inverse) Column Updating Method". Journal of Computational and Applied Mathematics 279: 133–144. doi:10.1016/j.cam.2014.11.005.

4. "Introduction to Taylor's theorem for multivariable functions - Math Insight". https://mathinsight.org/taylors_theorem_multivariable_introduction. Retrieved November 11, 2021.

5. Nocedal, Jorge; Wright, Stephen J. (2006). Numerical Optimization. New York: Springer. p. 142. ISBN 0-387-98793-2. https://archive.org/details/numericaloptimiz00noce_990.

6. Robert Mansel Gower; Peter Richtarik (2015). "Randomized Quasi-Newton Updates are Linearly Convergent Matrix Inversion Algorithms". arXiv:1602.01768 [math.NA].

7. "optim function - RDocumentation". https://www.rdocumentation.org/packages/stats/versions/3.6.2/topics/optim.

8. "Scipy.optimize.minimize — SciPy v1.7.1 Manual". http://docs.scipy.org/doc/scipy/reference/generated/scipy.optimize.minimize.html.

9. "Unconstrained Optimization: Methods for Local Minimization—Wolfram Language Documentation". https://reference.wolfram.com/language/tutorial/UnconstrainedOptimizationMethodsForLocalMinimization.html.en.

10. The Numerical Algorithms Group. "Keyword Index: Quasi-Newton". NAG Library Manual, Mark 23. http://www.nag.co.uk/numeric/fl/nagdoc_fl23/html/INDEXES/KWIC/quasi-newton.html.

11. The Numerical Algorithms Group. "E04 – Minimizing or Maximizing a Function". NAG Library Manual, Mark 23. http://www.nag.co.uk/numeric/fl/nagdoc_fl23/pdf/E04/e04intro.pdf.

12. "Find minimum of unconstrained multivariable function - MATLAB fminunc". http://www.mathworks.com/help/toolbox/optim/ug/fminunc.html.

13. "Constrained Nonlinear Optimization Algorithms - MATLAB & Simulink". https://www.mathworks.com/help/optim/ug/constrained-nonlinear-optimization-algorithms.html.

Further reading

- Bonnans, J. F.; Gilbert, J. Ch.; Lemaréchal, C.; Sagastizábal, C. A. (2006). Numerical Optimization: Theoretical and Numerical Aspects (Second ed.). Springer. ISBN 3-540-35445-X.

- Fletcher, Roger (1987), Practical methods of optimization (2nd ed.), New York: John Wiley & Sons, ISBN 978-0-471-91547-8, https://archive.org/details/practicalmethods0000flet.

- Nocedal, Jorge; Wright, Stephen J. (1999). "Quasi-Newton Methods". Numerical Optimization. New York: Springer. pp. 192–221. ISBN 0-387-98793-2. https://books.google.com/books?id=7wDpBwAAQBAJ&pg=PA192.

- Press, W. H.; Teukolsky, S. A.; Vetterling, W. T.; Flannery, B. P. (2007). "Section 10.9. Quasi-Newton or Variable Metric Methods in Multidimensions". Numerical Recipes: The Art of Scientific Computing (3rd ed.). New York: Cambridge University Press. ISBN 978-0-521-88068-8. http://apps.nrbook.com/empanel/index.html#pg=521.

- Scales, L. E. (1985). Introduction to Non-Linear Optimization. New York: MacMillan. pp. 84–106. ISBN 0-333-32552-4. https://books.google.com/books?id=AEJdDwAAQBAJ&pg=PA84.