Criss-cross algorithm

In mathematical optimization, the criss-cross algorithm is any of a family of algorithms for linear programming. Variants of the criss-cross algorithm also solve more general problems with linear inequality constraints and nonlinear objective functions; there are criss-cross algorithms for linear-fractional programming problems,[1][2] quadratic-programming problems, and linear complementarity problems.[3]

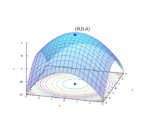

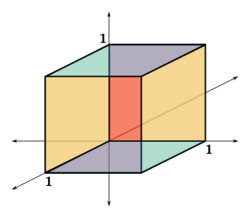

Like the simplex algorithm of George B. Dantzig, the criss-cross algorithm is not a polynomial-time algorithm for linear programming. Both algorithms visit all 2^D corners of a (perturbed) cube in dimension D, the Klee–Minty cube (after Victor Klee and George J. Minty), in the worst case.[4][5] However, when it is started at a random corner, the criss-cross algorithm on average visits only D additional corners.[6][7][8] Thus, for the three-dimensional cube, the algorithm visits all 8 corners in the worst case and exactly 3 additional corners on average.

History

The criss-cross algorithm was published independently by Tamás Terlaky[9] and by Zhe-Min Wang;[10] related algorithms appeared in unpublished reports by other authors.[3]

Comparison with the simplex algorithm for linear optimization

In linear programming, the criss-cross algorithm pivots between a sequence of bases but differs from the simplex algorithm. The simplex algorithm first finds a (primal-) feasible basis by solving a "phase-one problem"; in "phase two", the simplex algorithm pivots between a sequence of basic feasible solutions so that the objective function is non-decreasing with each pivot, terminating with an optimal solution (also finally finding a "dual feasible" solution).[3][11]

The criss-cross algorithm is simpler than the simplex algorithm, because the criss-cross algorithm has only one phase. Its pivoting rules are similar to the least-index pivoting rule of Bland.[12] Bland's rule uses only the signs of coefficients, rather than their (real-number) order, when deciding which pivots are eligible. However, when choosing among the eligible pivots, Bland's rule still compares real-number values, as in the minimum-ratio test of the simplex algorithm.[12][13] Unlike Bland's rule, the criss-cross algorithm is "purely combinatorial", selecting an entering variable and a leaving variable by considering only the signs of coefficients rather than their real-number ordering.[3][11] The criss-cross algorithm has been applied to furnish constructive proofs of basic results in linear algebra, such as the lemma of Farkas.[14]

While most simplex variants are monotonic in the objective (strictly in the non-degenerate case), most variants of the criss-cross algorithm lack a monotone merit function, which can be a disadvantage in practice.

Description

The criss-cross algorithm works on a standard pivot tableau (or on parts of a tableau calculated on the fly, if implemented like the revised simplex method). In a general step, if the tableau is primal or dual infeasible, it selects one of the infeasible rows or columns as the pivot row or column using an index selection rule. An important property is that the selection is made on the union of the infeasible indices: the standard version of the algorithm does not distinguish column indices from row indices (that is, from the column indices basic in the rows). If a row is selected, the algorithm uses the index selection rule to identify a column position for a dual-type pivot; if a column is selected, it uses the index selection rule to find a row position and carries out a primal-type pivot.
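The general step described above can be sketched in Python. This is a minimal illustration rather than a reference implementation: it assumes the problem is given as maximize c·x subject to Ax ≤ b, x ≥ 0, it uses the least-index rule as the index selection rule, and it keeps a full tableau in exact rational arithmetic. The names `criss_cross` and `pivot`, the status strings, and the `max_pivots` guard are hypothetical choices made for this sketch.

```python
from fractions import Fraction


def pivot(T, obj, basis, r, s):
    """Pivot on row r, column s: variable s becomes basic in row r."""
    p = T[r][s]
    T[r] = [v / p for v in T[r]]
    for i in range(len(T)):
        if i != r and T[i][s] != 0:
            f = T[i][s]
            T[i] = [a - f * b for a, b in zip(T[i], T[r])]
    f = obj[s]
    obj[:] = [a - f * b for a, b in zip(obj, T[r])]
    basis[r] = s


def criss_cross(A, b, c, max_pivots=10_000):
    """Least-index criss-cross sketch for: maximize c.x s.t. A x <= b, x >= 0.

    Variables 0..n-1 are the original ones, n..n+m-1 are slacks.
    Returns (status, x, objective_value).
    """
    m, n = len(A), len(c)
    # Constraint rows [A | I | b]; the slack basis may be infeasible.
    T = [[Fraction(v) for v in A[i]]
         + [Fraction(int(i == k)) for k in range(m)]
         + [Fraction(b[i])] for i in range(m)]
    # Objective row: z + sum(obj[j] * x[j]) = obj[-1]; obj[j] < 0 means
    # column j is dual infeasible.
    obj = [-Fraction(v) for v in c] + [Fraction(0)] * (m + 1)
    basis = list(range(n, n + m))

    for _ in range(max_pivots):
        row_of = {var: i for i, var in enumerate(basis)}
        # Smallest index that is infeasible, taken over the union of
        # basic (primal) and nonbasic (dual) indices.
        k = next((j for j in range(n + m)
                  if (j in row_of and T[row_of[j]][-1] < 0)
                  or (j not in row_of and obj[j] < 0)), None)
        if k is None:
            x = [Fraction(0)] * (n + m)
            for i, var in enumerate(basis):
                x[var] = T[i][-1]
            return "optimal", x[:n], obj[-1]
        if k in row_of:
            # Infeasible row: dual-type pivot, only signs are inspected.
            r = row_of[k]
            s = next((j for j in range(n + m)
                      if j not in row_of and T[r][j] < 0), None)
            if s is None:
                return "primal_infeasible", None, None
        else:
            # Infeasible column: primal-type pivot, only signs are inspected.
            s = k
            candidates = [var for i, var in enumerate(basis) if T[i][s] > 0]
            if not candidates:
                return "dual_infeasible", None, None
            r = row_of[min(candidates)]
        pivot(T, obj, basis, r, s)
    raise RuntimeError("pivot limit reached")
```

For example, `criss_cross([[1, 1], [1, 3], [-1, -1]], [4, 6, -1], [3, 2])` starts from a slack basis that is neither primal nor dual feasible, needs no phase-one problem, and returns the optimum x = (4, 0) with objective value 12. Note that no ratio test appears anywhere: both selections look only at signs and indices.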

Computational complexity: Worst and average cases

The time complexity of an algorithm counts the number of arithmetic operations sufficient for the algorithm to solve the problem. For example, Gaussian elimination requires on the order of D^3 operations, and so it is said to have polynomial time-complexity, because its complexity is bounded by a cubic polynomial. There are examples of algorithms that do not have polynomial-time complexity. For example, a generalization of Gaussian elimination called Buchberger's algorithm has for its complexity an exponential function of the problem data (the degree of the polynomials and the number of variables of the multivariate polynomials). Because exponential functions eventually grow much faster than polynomial functions, an exponential complexity implies that an algorithm has slow performance on large problems.
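The gap between polynomial and exponential growth can be made concrete with a few lines of Python. The sketch below simply compares the cubic bound D^3 with the exponential count 2^D; the function names are hypothetical, and the numbers are plain operation counts, not measured running times.

```python
def growth_table(dims):
    """Return (D, D**3, 2**D) for each dimension D in dims."""
    return [(d, d ** 3, 2 ** d) for d in dims]


def last_dimension_where_cubic_wins(max_d=64):
    """Largest D <= max_d for which D**3 is still at least 2**D."""
    return max(d for d in range(1, max_d + 1) if d ** 3 >= 2 ** d)


for d, cubic, exponential in growth_table([3, 10, 20, 30]):
    print(f"D={d:2d}  D^3={cubic:6d}  2^D={exponential}")
```

From D = 10 onward the exponential count is the larger of the two, and at D = 30 it exceeds the cubic count by a factor of tens of thousands.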

Several algorithms for linear programming—Khachiyan's ellipsoidal algorithm, Karmarkar's projective algorithm, and central-path algorithms—have polynomial time-complexity (in the worst case and thus on average). The ellipsoidal and projective algorithms were published before the criss-cross algorithm.

However, like the simplex algorithm of Dantzig, the criss-cross algorithm is not a polynomial-time algorithm for linear programming. Terlaky's criss-cross algorithm visits all the 2^D corners of a (perturbed) cube in dimension D, according to a paper of Roos; Roos's paper modifies the Klee–Minty construction of a cube on which the simplex algorithm takes 2^D steps.[3][4][5] Like the simplex algorithm, the criss-cross algorithm visits all 8 corners of the three-dimensional cube in the worst case.

According to a 1994 paper by Fukuda and Namiki, however, when the criss-cross algorithm is initialized at a random corner of the cube, it visits only D additional corners on average.[6][7] Trivially, the simplex algorithm takes on average D steps for a cube.[8][15] Like the simplex algorithm, the criss-cross algorithm visits exactly 3 additional corners of the three-dimensional cube on average.

Variants

The criss-cross algorithm has been extended to solve more general problems than linear programming problems.

Other optimization problems with linear constraints

There are variants of the criss-cross algorithm for linear programming, for quadratic programming, and for the linear-complementarity problem with "sufficient matrices";[3][6][16][17][18][19] conversely, for linear complementarity problems, the criss-cross algorithm terminates finitely only if the matrix is a sufficient matrix.[18][19] A sufficient matrix is a generalization both of a positive-definite matrix and of a P-matrix, whose principal minors are each positive.[18][19][20] The criss-cross algorithm has been adapted also for linear-fractional programming.[1][2]
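The P-matrix condition mentioned above (every principal minor is positive) can be checked directly for small matrices. The following Python sketch only illustrates that definition; it does not test the more general sufficiency property, and the helper names `det` and `is_p_matrix` are assumptions introduced here. Because an n-by-n matrix has 2^n − 1 principal minors, this brute-force check is practical only for small n.

```python
from fractions import Fraction
from itertools import combinations


def det(M):
    """Determinant by Gaussian elimination in exact rational arithmetic."""
    M = [[Fraction(v) for v in row] for row in M]
    n = len(M)
    result = Fraction(1)
    for k in range(n):
        # Find a row at or below k with a nonzero entry in column k.
        p = next((i for i in range(k, n) if M[i][k] != 0), None)
        if p is None:
            return Fraction(0)
        if p != k:
            M[k], M[p] = M[p], M[k]
            result = -result  # a row swap flips the sign
        result *= M[k][k]
        for i in range(k + 1, n):
            f = M[i][k] / M[k][k]
            M[i] = [a - f * b for a, b in zip(M[i], M[k])]
    return result


def is_p_matrix(M):
    """True if every principal minor of the square matrix M is positive."""
    n = len(M)
    for size in range(1, n + 1):
        for idx in combinations(range(n), size):
            minor = det([[M[i][j] for j in idx] for i in idx])
            if minor <= 0:
                return False
    return True
```

For instance, `is_p_matrix([[2, 1], [1, 2]])` is true (the matrix is also positive definite), `is_p_matrix([[1, 2], [2, 1]])` is false because the determinant is −3, and `is_p_matrix([[1, -3], [0, 1]])` is true even though the quadratic form of that matrix takes the value −1 at (1, 1).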

Vertex enumeration

The criss-cross algorithm was used in an algorithm for enumerating all the vertices of a polytope, which was published by David Avis and Komei Fukuda in 1992.[21] Avis and Fukuda presented an algorithm which finds the v vertices of a polyhedron defined by a nondegenerate system of n linear inequalities in D dimensions (or, dually, the v facets of the convex hull of n points in D dimensions, where each facet contains exactly D given points) in time O(nDv) and O(nD) space.[22]

Oriented matroids

The criss-cross algorithm is often studied using the theory of oriented matroids (OMs), which is a combinatorial abstraction of linear-optimization theory.[17][23] Indeed, Bland's pivoting rule was based on his previous papers on oriented-matroid theory. However, Bland's rule exhibits cycling on some oriented-matroid linear-programming problems.[17] The first purely combinatorial algorithm for linear programming was devised by Michael J. Todd.[17][24] Todd's algorithm was developed not only for linear programming in the setting of oriented matroids, but also for quadratic-programming problems and linear-complementarity problems.[17][24] Unfortunately, Todd's algorithm is complicated even to state, and its finite-convergence proofs are somewhat complicated.[17]

The criss-cross algorithm and its proof of finite termination can be simply stated and readily extend to the setting of oriented matroids. The algorithm can be further simplified for linear feasibility problems, that is, for linear systems with nonnegative variables; these problems can be formulated for oriented matroids.[14] The criss-cross algorithm has been adapted for problems that are more complicated than linear programming: there are oriented-matroid variants also for the quadratic-programming problem and for the linear-complementarity problem.[3][16][17]

Summary

The criss-cross algorithm is a simply stated algorithm for linear programming. It was the second fully combinatorial algorithm for linear programming. The partially combinatorial simplex algorithm of Bland cycles on some (nonrealizable) oriented matroids. The first fully combinatorial algorithm was published by Todd, and it is also like the simplex algorithm in that it preserves feasibility after the first feasible basis is generated; however, Todd's rule is complicated. The criss-cross algorithm is not a simplex-like algorithm, because it need not maintain feasibility. The criss-cross algorithm does not have polynomial time-complexity, however.

Researchers have extended the criss-cross algorithm to many optimization problems, including linear-fractional programming. The criss-cross algorithm can solve quadratic programming problems and linear complementarity problems, even in the setting of oriented matroids. Even when generalized, the criss-cross algorithm remains simply stated.

See also

- Jack Edmonds (pioneer of combinatorial optimization and oriented-matroid theorist; doctoral advisor of Komei Fukuda)

Notes

- ↑ 1.0 1.1 (Illés, Szirmai & Terlaky 1999)

- ↑ 2.0 2.1 Stancu-Minasian, I. M. (August 2006). "A sixth bibliography of fractional programming". Optimization 55 (4): 405–428. doi:10.1080/02331930600819613.

- ↑ 3.0 3.1 3.2 3.3 3.4 3.5 3.6 (Fukuda & Terlaky 1997)

- ↑ 4.0 4.1 (Roos 1990)

- ↑ 5.0 5.1 Klee, Victor; Minty, George J. (1972). "How good is the simplex algorithm?". in Shisha, Oved. Inequalities III (Proceedings of the Third Symposium on Inequalities held at the University of California, Los Angeles, Calif., September 1–9, 1969, dedicated to the memory of Theodore S. Motzkin). New York-London: Academic Press. pp. 159–175.

- ↑ 6.0 6.1 6.2 (Fukuda & Terlaky 1997)

- ↑ 7.0 7.1 (Fukuda & Namiki 1994)

- ↑ 8.0 8.1 The simplex algorithm takes on average D steps for a cube. (Borgwardt 1987): Borgwardt, Karl-Heinz (1987). The simplex method: A probabilistic analysis. Algorithms and Combinatorics (Study and Research Texts). 1. Berlin: Springer-Verlag. pp. xii+268. ISBN 978-3-540-17096-9.

- ↑ (Terlaky 1985) and (Terlaky 1987)

- ↑ (Wang 1987)

- ↑ 11.0 11.1 (Terlaky & Zhang 1993)

- ↑ 12.0 12.1 Bland, Robert G. (May 1977). "New finite pivoting rules for the simplex method". Mathematics of Operations Research 2 (2): 103–107. doi:10.1287/moor.2.2.103.

- ↑ Bland's rule is also related to an earlier least-index rule, which was proposed by Katta G. Murty for the linear complementarity problem, according to (Fukuda & Namiki 1994).

- ↑ 14.0 14.1 (Klafszky & Terlaky 1991)

- ↑ More generally, for the simplex algorithm, the expected number of steps is proportional to D for linear-programming problems that are randomly drawn from the Euclidean unit sphere, as proved by Borgwardt and by Smale.

- ↑ 16.0 16.1 (Fukuda & Namiki 1994)

- ↑ 17.0 17.1 17.2 17.3 17.4 17.5 17.6 Björner, Anders; Las Vergnas, Michel; Sturmfels, Bernd; White, Neil; Ziegler, Günter (1999). "10 Linear programming". Oriented Matroids. Cambridge University Press. pp. 417–479. doi:10.1017/CBO9780511586507. ISBN 978-0-521-77750-6.

- ↑ 18.0 18.1 18.2 den Hertog, D.; Roos, C.; Terlaky, T. (1 July 1993). "The linear complementarity problem, sufficient matrices, and the criss-cross method". Linear Algebra and Its Applications 187: 1–14. doi:10.1016/0024-3795(93)90124-7. http://core.ac.uk/download/pdf/6714737.pdf.

- ↑ 19.0 19.1 19.2 Csizmadia, Zsolt; Illés, Tibor (2006). "New criss-cross type algorithms for linear complementarity problems with sufficient matrices" (pdf). Optimization Methods and Software 21 (2): 247–266. doi:10.1080/10556780500095009. http://www.cs.elte.hu/opres/orr/download/ORR03_1.pdf. Retrieved 30 August 2011.

- ↑ Cottle, R. W.; Pang, J.-S.; Venkateswaran, V. (March–April 1989). "Sufficient matrices and the linear complementarity problem". Linear Algebra and Its Applications 114–115: 231–249. doi:10.1016/0024-3795(89)90463-1.

- ↑ (Avis & Fukuda 1992)

- ↑ The v vertices in a simple arrangement of n hyperplanes in D dimensions can be found in O(n^2 D v) time and O(nD) space complexity.

- ↑ The theory of oriented matroids was initiated by R. Tyrrell Rockafellar. (Rockafellar 1969):

Rockafellar, R. T. (1969). "The elementary vectors of a subspace of R^N (1967)". in R. C. Bose and T. A. Dowling. Combinatorial Mathematics and its Applications. The University of North Carolina Monograph Series in Probability and Statistics. Chapel Hill, North Carolina: University of North Carolina Press. pp. 104–127. PDF reprint. http://www.math.washington.edu/~rtr/papers/rtr-ElemVectors.pdf.

Rockafellar was influenced by the earlier studies of Albert W. Tucker and George J. Minty. Tucker and Minty had studied the sign patterns of the matrices arising through the pivoting operations of Dantzig's simplex algorithm.

- ↑ 24.0 24.1 Todd, Michael J. (1985). "Linear and quadratic programming in oriented matroids". Journal of Combinatorial Theory. Series B 39 (2): 105–133. doi:10.1016/0095-8956(85)90042-5.

References

- Avis, David; Fukuda, Komei (December 1992). "A pivoting algorithm for convex hulls and vertex enumeration of arrangements and polyhedra". Discrete and Computational Geometry 8 (ACM Symposium on Computational Geometry (North Conway, NH, 1991) number 1): 295–313. doi:10.1007/BF02293050.

- Csizmadia, Zsolt; Illés, Tibor (2006). "New criss-cross type algorithms for linear complementarity problems with sufficient matrices" (pdf). Optimization Methods and Software 21 (2): 247–266. doi:10.1080/10556780500095009. http://www.cs.elte.hu/opres/orr/download/ORR03_1.pdf. Retrieved 30 August 2011.

- Fukuda, Komei; Namiki, Makoto (March 1994). "On extremal behaviors of Murty's least index method". Mathematical Programming 64 (1): 365–370. doi:10.1007/BF01582581.

- Fukuda, Komei; Terlaky, Tamás (1997). Liebling, Thomas M.; de Werra, Dominique. eds. "Criss-cross methods: A fresh view on pivot algorithms". Mathematical Programming, Series B 79 (Papers from the 16th International Symposium on Mathematical Programming held in Lausanne, 1997, number 1–3): 369–395. doi:10.1007/BF02614325. Postscript preprint.

- den Hertog, D.; Roos, C.; Terlaky, T. (1 July 1993). "The linear complementarity problem, sufficient matrices, and the criss-cross method". Linear Algebra and Its Applications 187: 1–14. doi:10.1016/0024-3795(93)90124-7. http://core.ac.uk/download/pdf/6714737.pdf.

- Illés, Tibor; Szirmai, Ákos; Terlaky, Tamás (1999). "The finite criss-cross method for hyperbolic programming". European Journal of Operational Research 114 (1): 198–214. doi:10.1016/S0377-2217(98)00049-6. Postscript preprint. http://www.sciencedirect.com/science/article/B6VCT-3W3DFHB-M/2/4b0e2fcfc2a71e8c14c61640b32e805a.

- Klafszky, Emil; Terlaky, Tamás (June 1991). "The role of pivoting in proving some fundamental theorems of linear algebra". Linear Algebra and Its Applications 151: 97–118. doi:10.1016/0024-3795(91)90356-2.

- Roos, C. (1990). "An exponential example for Terlaky's pivoting rule for the criss-cross simplex method". Mathematical Programming. Series A 46 (1): 79–84. doi:10.1007/BF01585729.

- Terlaky, T. (1985). "A convergent criss-cross method". Optimization: A Journal of Mathematical Programming and Operations Research 16 (5): 683–690. doi:10.1080/02331938508843067. ISSN 0233-1934.

- Terlaky, Tamás (1987). "A finite crisscross method for oriented matroids". Journal of Combinatorial Theory. Series B 42 (3): 319–327. doi:10.1016/0095-8956(87)90049-9. ISSN 0095-8956.

- Terlaky, Tamás; Zhang, Shu Zhong (1993). "Pivot rules for linear programming: A Survey on recent theoretical developments". Annals of Operations Research 46–47 (Degeneracy in optimization problems, number 1): 203–233. doi:10.1007/BF02096264. ISSN 0254-5330.

- Wang, Zhe Min (1987). "A finite conformal-elimination free algorithm over oriented matroid programming". Chinese Annals of Mathematics (Shuxue Niankan B Ji). Series B 8 (1): 120–125. ISSN 0252-9599.

External links

- Komei Fukuda (ETH Zentrum, Zurich) with publications

- Tamás Terlaky (Lehigh University) with publications
