Physics:Energy

| Energy | |
|---|---|
| Common symbols | E |
| SI unit | joule[1] (J) |
| Other units | kWh, BTU, calorie, eV, erg, foot-pound[2] |
| In SI base units | J = kg⋅m²⋅s⁻²[3] |
| Extensive? | yes[4] |
| Conserved? | yes |
| Dimension | M L² T⁻²[5] |

Energy (from Ancient Greek ἐνέργεια (enérgeia) 'activity') is the quantitative property that is transferred to a body or to a physical system, recognizable in the performance of work and in the form of heat and light. Energy is a conserved quantity: the law of conservation of energy states that energy can be converted in form, but not created or destroyed. The unit of measurement for energy in the International System of Units (SI) is the joule (J).

Forms of energy include the kinetic energy of a moving object, the potential energy stored by an object (for instance due to its position in a field), the elastic energy stored in a solid object, chemical energy associated with chemical reactions, the radiant energy carried by electromagnetic radiation, the internal energy contained within a thermodynamic system, and rest energy associated with an object's rest mass. These are not mutually exclusive.

All living organisms constantly take in and release energy. The Earth's climate and ecosystem processes are driven primarily by radiant energy from the Sun.[6]

Forms

The total energy of a system can be subdivided and classified into potential energy, kinetic energy, or combinations of the two in various ways. Kinetic energy is determined by the movement of an object – or the composite motion of the object's components – while potential energy reflects the potential of an object to have motion, generally being based upon the object's position within a field or what is stored within the field itself.[7]

While these two categories are sufficient to describe all forms of energy, it is often convenient to refer to a particular combination of potential and kinetic energy as its own form. For example, the sum of translational and rotational kinetic and potential energy within a system is referred to as mechanical energy, whereas nuclear energy refers to the combined potentials within an atomic nucleus from either the nuclear force or the weak force.[8]

| Type of energy | Description |
|---|---|
| Chemical | potential energy due to chemical bonds |
| Chromodynamic | potential energy that binds quarks to form hadrons |
| Elastic | potential energy due to the deformation of a material (or its container) exhibiting a restorative force as it returns to its original shape |
| Electric | potential energy due to or stored in electric fields |
| Gravitational | potential energy due to or stored in gravitational fields |
| Ionization | potential energy that binds an electron to its atom or molecule |
| Magnetic | potential energy due to or stored in magnetic fields |
| Mechanical | the sum of macroscopic translational and rotational kinetic and potential energies |
| Mechanical wave | kinetic and potential energy in an elastic material due to a propagating oscillation of matter |
| Nuclear | potential energy that binds nucleons to form the atomic nucleus (and nuclear reactions) |
| Radiant | potential energy stored in the fields of waves propagated by electromagnetic radiation, including light |
| Rest | potential energy due to an object's rest mass |
| Rotational | kinetic energy due to the rotation of an object |
| Sound wave | kinetic and potential energy in a material due to a propagated sound wave (a particular type of mechanical wave) |
| Thermal | kinetic energy of the microscopic motion of particles, a kind of disordered equivalent of mechanical energy |

History

The word energy derives from the Ancient Greek: ἐνέργεια, romanized: energeia, lit. 'activity, operation',[11] which possibly appears for the first time in the work of Aristotle in the 4th century BC. In contrast to the modern definition, energeia was a qualitative philosophical concept, broad enough to include ideas such as happiness and pleasure.[12]

In the late 17th century, Gottfried Leibniz proposed the idea of vis viva (Latin for "living force"), which he defined as the product of the mass of an object and its velocity squared; he believed that total vis viva was conserved. To account for slowing due to friction, Leibniz theorized that thermal energy consisted of the motions of the constituent parts of matter, although it would be more than a century until this was generally accepted. The modern analog of this property, kinetic energy, differs from vis viva only by a factor of two.[13] Writing in the early 18th century, Émilie du Châtelet proposed the concept of conservation of energy in the marginalia of her French-language translation of Newton's Principia Mathematica, which represented the first formulation of a conserved measurable quantity that was distinct from momentum, and which would later be called "energy".[14]

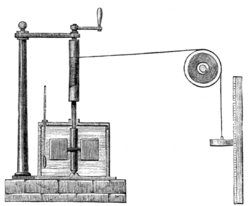

In 1807, Thomas Young was possibly the first to use the term "energy" instead of vis viva, in its modern sense.[15] Gustave-Gaspard Coriolis described "kinetic energy" in 1829 in its modern sense,[16] and in 1853, William Rankine coined the term "potential energy".[17] The law of conservation of energy was also first postulated in the early 19th century, and applies to any isolated system.[18] It was argued for some years whether heat was a physical substance, dubbed the caloric, or merely a physical quantity, such as momentum. In 1845 James Prescott Joule discovered the link between mechanical work and the generation of heat.[19]

These developments led to the theory of conservation of energy, formalized largely by William Thomson (Lord Kelvin) as the field of thermodynamics.[20] Thermodynamics aided the rapid development of explanations of chemical processes by Rudolf Clausius, Josiah Willard Gibbs, Walther Nernst, and others.[21] It also led to a mathematical formulation of the concept of entropy by Clausius[22] and to the introduction of laws of radiant energy by Jožef Stefan.[23] According to Noether's theorem, the conservation of energy is a consequence of the fact that the laws of physics do not change over time.[24] Thus, since 1918, theorists have understood that the law of conservation of energy is the direct mathematical consequence of the translational symmetry of the quantity conjugate to energy, namely time.[25]

Albert Einstein's 1905 theory of special relativity showed that rest mass corresponds to an equivalent amount of rest energy. This means that rest mass can be converted to or from equivalent amounts of (non-material) forms of energy, for example, kinetic energy, potential energy, and electromagnetic radiant energy. When this happens, rest mass is not conserved, unlike the total mass or total energy. All forms of energy contribute to the total mass and total energy. Thus, conservation of energy (total, including material or rest energy) and conservation of mass (total, not just rest) are one (equivalent) law. In the 18th century, these had appeared as two seemingly-distinct laws.[26][27]

The first evidence of quantization in atoms was the observation of spectral lines in light from the sun in the early 1800s by Joseph von Fraunhofer and William Hyde Wollaston. The notion of quantized energy levels was proposed in 1913 by Danish physicist Niels Bohr in the Bohr theory of the atom. The modern quantum mechanical theory giving an explanation of these energy levels in terms of the Schrödinger equation was advanced by Erwin Schrödinger and Werner Heisenberg in 1926.[28] Noether's theorem shows that the symmetry of this equation is equivalent to a conservation of probability.[29] At the quantum level, mass-energy interactions are all subject to this principle.[30] During wave function collapse, the conservation of energy does not hold at the local level, although statistically the principle holds on average for sufficiently large numbers of collapses.[31] Conservation of energy does apply during wave function collapse in H. Everett's many-worlds interpretation of quantum mechanics.[32]

Units of measure

In dimensional analysis, the dimensions of energy follow from Work = Force × Distance = M L² T⁻², with the fundamental dimensions of mass M, length L, and time T.[5] In the International System of Units (SI), the unit of energy is the joule. It is a derived unit that is equal to the energy expended, or work done, in applying a force of one newton through a distance of one metre.[1]

The SI unit of power, defined as energy per unit of time, is the watt, which is one joule per second.[3] Thus, a kilowatt-hour (kWh), which can be realized as the energy delivered by one kilowatt of power for an hour, is equal to 3.6 million joules.[33] The CGS energy unit is the erg and the imperial and US customary unit is the foot-pound.[34]

Other energy units such as the electronvolt, food calorie, thermodynamic kilocalorie and BTU are used in specific areas of science and commerce.[35][2]
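The relationships between these units can be sketched as a small conversion table. The kilowatt-hour factor is the one quoted above; the remaining factors are standard values added here for illustration, and the names `JOULES_PER_UNIT` and `convert_energy` are hypothetical.

```python
# Conversion factors to joules. The kWh value is quoted in the text;
# the other factors are standard values added for illustration.
JOULES_PER_UNIT = {
    "J": 1.0,
    "kWh": 3.6e6,            # one kilowatt sustained for 3600 seconds
    "erg": 1.0e-7,           # CGS unit of energy
    "eV": 1.602176634e-19,   # electronvolt (exact in the 2019 SI)
    "cal": 4.184,            # thermochemical calorie
    "kcal": 4184.0,          # food calorie (kilocalorie)
    "ft_lbf": 1.3558179483,  # foot-pound force
}


def convert_energy(value, from_unit, to_unit):
    """Convert an energy value between two units in JOULES_PER_UNIT."""
    joules = value * JOULES_PER_UNIT[from_unit]
    return joules / JOULES_PER_UNIT[to_unit]


if __name__ == "__main__":
    print(convert_energy(1.0, "kWh", "J"))            # kilowatt-hour in joules
    print(convert_energy(2000.0, "kcal", "J") / 1e6)  # food energy in MJ
```

Routing every conversion through joules keeps the table linear in the number of units instead of requiring a factor for every pair.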

Scientific use

Classical mechanics

In classical mechanics, energy is a conceptually and mathematically useful property, as it is a conserved quantity. Several formulations of mechanics have been developed using energy as a core concept.

Work, a function of energy, is force times distance.[36]

$$W = \int_C \mathbf{F} \cdot \mathrm{d}\mathbf{s}$$

This says that the work ($W$) is equal to the line integral of the force F along a path C; for details see the mechanical work article. Work, and thus energy, is frame dependent. For example, consider a ball being hit by a bat. In the center-of-mass reference frame, the bat does no work on the ball. But, in the reference frame of the person swinging the bat, considerable work is done on the ball.[37]
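As a rough numerical illustration of work as a line integral of force along a path, the sketch below approximates the integral with the midpoint rule in two dimensions. The function names, the 2 kg mass, and the two example paths are assumptions chosen for illustration, not part of the source.

```python
import math


def work_along_path(force, path, n_steps=10_000):
    """Approximate the line integral of F . ds with the midpoint rule.

    force: function (x, y) -> (Fx, Fy), in newtons
    path:  function t -> (x, y) for t in [0, 1], in metres
    """
    total = 0.0
    x_prev, y_prev = path(0.0)
    for i in range(1, n_steps + 1):
        x, y = path(i / n_steps)
        # Evaluate the force at the midpoint of this small segment.
        fx, fy = force((x + x_prev) / 2, (y + y_prev) / 2)
        total += fx * (x - x_prev) + fy * (y - y_prev)
        x_prev, y_prev = x, y
    return total


def gravity(x, y, m=2.0, g=9.81):
    """Uniform gravity on a hypothetical 2 kg mass (points in -y)."""
    return (0.0, -m * g)


def quarter_circle(t):
    """Curved path from (1, 0) to (0, 1)."""
    angle = t * math.pi / 2
    return (math.cos(angle), math.sin(angle))


def straight_line(t):
    """Straight path between the same two end points."""
    return (1.0 - t, t)


if __name__ == "__main__":
    print(work_along_path(gravity, quarter_circle))
    print(work_along_path(gravity, straight_line))
```

Both paths give the same result, about −19.62 J (that is, −mgΔy), because uniform gravity is a conservative force: the work depends only on the end points.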

The total energy of a system is sometimes called the Hamiltonian, after William Rowan Hamilton. The classical equations of motion can be written in terms of the Hamiltonian, even for highly complex or abstract systems.[38] These classical equations have direct analogs in nonrelativistic quantum mechanics.[39]

Another energy-related concept is called the Lagrangian, after Joseph-Louis Lagrange. This formalism is as fundamental as the Hamiltonian, and both can be used to derive the equations of motion or be derived from them. It was invented in the context of classical mechanics, but is generally useful in modern physics. The Lagrangian is defined as the kinetic energy minus the potential energy. Usually, the Lagrange formalism is mathematically more convenient than the Hamiltonian for non-conservative systems (such as systems with friction).[40]

Noether's theorem (1918) states that any differentiable symmetry of the action of a physical system has a corresponding conservation law. Noether's theorem has become a fundamental tool of modern theoretical physics and the calculus of variations. A generalisation of the seminal formulations on constants of motion in Lagrangian and Hamiltonian mechanics (1788 and 1833, respectively), it does not apply to systems that cannot be modeled with a Lagrangian;[41] for example, dissipative systems with continuous symmetries need not have a corresponding conservation law.

Chemistry

In the context of chemistry, energy is an attribute of a substance as a consequence of its atomic, molecular, or aggregate structure. Since a chemical transformation is accompanied by a change in one or more of these kinds of structure, it is usually accompanied by a decrease, and sometimes an increase, of the total energy of the substances involved. Some energy may be transferred between the surroundings and the reactants in the form of heat or light; thus the products of a reaction have sometimes more but usually less energy than the reactants. A reaction is said to be exothermic or exergonic if the final state is lower on the energy scale than the initial state; in the less common case of endothermic reactions the situation is the reverse.[42]

Chemical reactions are usually not possible unless the reactants surmount an energy barrier known as the activation energy. The speed of a chemical reaction (at a given temperature T) is related to the activation energy E by the Boltzmann population factor $e^{-E/kT}$; that is, the probability that a molecule has energy greater than or equal to E at a given temperature T. This exponential dependence of a reaction rate on temperature is known as the Arrhenius equation. The activation energy necessary for a chemical reaction can be provided in the form of thermal energy.[43]
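The strength of this exponential dependence can be seen in a short sketch. It evaluates only the Boltzmann factor and ignores the pre-exponential factor of the full Arrhenius equation; the 0.5 eV activation energy and the two temperatures are illustrative assumptions.

```python
import math

K_B = 1.380649e-23    # Boltzmann constant in J/K (exact in the 2019 SI)
EV = 1.602176634e-19  # one electronvolt in joules


def boltzmann_factor(activation_energy_j, temperature_k):
    """Return exp(-E / kT) for an activation energy E in joules."""
    return math.exp(-activation_energy_j / (K_B * temperature_k))


def rate_ratio(activation_energy_j, t1_k, t2_k):
    """Ratio of Boltzmann factors at t2_k and t1_k.

    The pre-exponential factor is assumed to be the same at both
    temperatures, so it cancels out of the ratio.
    """
    return (boltzmann_factor(activation_energy_j, t2_k)
            / boltzmann_factor(activation_energy_j, t1_k))


if __name__ == "__main__":
    e_act = 0.5 * EV  # hypothetical activation energy
    print(boltzmann_factor(e_act, 300.0))
    print(rate_ratio(e_act, 300.0, 310.0))
```

Under these assumptions a 10 K rise near room temperature increases the factor by several tens of percent, which is why modest heating can noticeably speed up a reaction.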

Biology

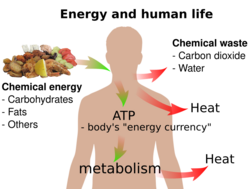

In biology, energy is an attribute of all biological systems, from the biosphere to the smallest living organism. It enables the growth, development, and functioning of a biological cell or organelle in an organism. All living creatures rely on an external source of energy to be able to grow and reproduce – radiant energy from the Sun in the case of green plants and chemical energy (in some form) in the case of animals. Energy provided through cellular respiration is stored in nutrients such as carbohydrates (including sugars), lipids, and proteins by cells.[44]

Sunlight's radiant energy is captured by plants as chemical potential energy in photosynthesis, when carbon dioxide and water (two low-energy compounds) are converted into carbohydrates, lipids, proteins, and oxygen.[45] Release of the energy stored during photosynthesis as heat or light may be triggered suddenly by a spark in a forest fire, or it may be made available more slowly for animal or human metabolism when organic molecules are ingested and catabolism is triggered by enzyme action.[46]

Humans

The basal metabolic rate measures the food energy expenditure per unit time by endothermic animals at rest.[47] In other words, it is the energy required by body organs to perform normally. For humans, the metabolic equivalent of task (MET) compares the energy expenditure per unit mass while performing a physical activity, relative to a baseline. By convention, this baseline is 3.5 mL of oxygen consumed per kg per minute, which is the energy consumed by a typical individual when sitting quietly.[48]

For example, if our bodies run (on average) at 80 watts, then a light bulb running at 100 watts is running at 1.25 human equivalents (100 ÷ 80), i.e. 1.25 H-e. For a difficult task of only a few seconds' duration, a person can put out thousands of watts, many times the 746 watts in one official horsepower. For tasks lasting a few minutes, a fit human can generate perhaps 1,000 watts. For an activity that must be sustained for an hour, output drops to around 300 watts; for an activity kept up all day, 150 watts is about the maximum.[49] The human equivalent assists understanding of energy flows in physical and biological systems by expressing energy units in human terms: it provides a "feel" for the use of a given amount of energy.[50]

The daily 6–8 MJ of food energy recommended for a human adult is taken in as food molecules,[51] mostly carbohydrates and fats. Only a tiny fraction of the original chemical energy is used for work:[note 1]

- gain in kinetic energy of a sprinter during a 100 m race: 4 kJ

- gain in gravitational potential energy of a 150 kg weight lifted through 2 metres: 3 kJ

- daily food intake of a normal adult: 6–8 MJ

It would appear that living organisms are remarkably inefficient (in the physical sense) in their use of the energy they receive (chemical or radiant energy); most machines manage higher efficiencies.
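The figures in the list above can be checked with a line of arithmetic. The sketch below recomputes the weight-lifting example and compares it with the lower end of the daily intake; the value g = 9.81 m/s² and the variable names are assumptions.

```python
G = 9.81  # standard gravitational acceleration in m/s^2 (assumed value)


def gravitational_pe(mass_kg, height_m, g=G):
    """Gain in gravitational potential energy, m * g * h, in joules."""
    return mass_kg * g * height_m


# Figures taken from the list above.
lift_j = gravitational_pe(150.0, 2.0)  # 150 kg lifted through 2 m
daily_intake_j = 6.0e6                 # lower end of the 6-8 MJ range
fraction = lift_j / daily_intake_j

if __name__ == "__main__":
    print(f"lift: {lift_j / 1000:.1f} kJ")
    print(f"share of daily intake: {fraction:.3%}")
```

A single heavy lift therefore corresponds to roughly 3 kJ, well under a tenth of a percent of the daily food energy.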

In growing organisms the energy that is converted to heat serves a vital purpose, as it allows the organism's tissue to be highly ordered with regard to the molecules it is built from. The second law of thermodynamics states that energy (and matter) tends to become more evenly spread out across the universe: to concentrate energy (or matter) in one specific place, it is necessary to spread out a greater amount of energy (as heat) across the remainder of the universe ("the surroundings").[note 2] Simpler organisms can achieve higher energy efficiencies than more complex ones, but the complex organisms can occupy ecological niches that are not available to their simpler brethren. The conversion of a portion of the chemical energy to heat at each step in a metabolic pathway is the physical reason behind the pyramid of biomass observed in ecology. As an example, to take just the first step in the food chain: of the estimated 124.7 Pg/a of carbon that is fixed by photosynthesis, 64.3 Pg/a (52%) are used for the metabolism of green plants,[52] i.e. reconverted into carbon dioxide and heat.

Cell metabolism

Multicellular organisms such as humans have cells that are classified as eukaryotic. These cells include organelles called mitochondria that generate chemical energy for the rest of the host cell. Ninety percent of the oxygen intake by humans is utilized by the mitochondria, especially for nutrient processing.[53] The molecule adenosine triphosphate (ATP) is the primary energy transporter in living cells, providing an energy source for cellular processes. It is continually being broken down and synthesized as a component of cellular respiration.[54]

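Two examples of nutrients consumed by animals are glucose (C₆H₁₂O₆) and stearin (C₅₇H₁₁₀O₆). These food molecules are oxidized to carbon dioxide and water in the mitochondria:[55]

$$\mathrm{C_6H_{12}O_6 + 6\,O_2 \rightarrow 6\,CO_2 + 6\,H_2O}$$

$$\mathrm{C_{57}H_{110}O_6 + 81\tfrac{1}{2}\,O_2 \rightarrow 57\,CO_2 + 55\,H_2O}$$

and some of the energy is used to convert ADP into ATP:[56][53]

$$\mathrm{ADP + HPO_4^{2-} \rightarrow ATP + H_2O}$$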

The rest of the chemical energy of the nutrients is converted into heat: the ATP is used as a sort of "energy currency", and some of the chemical energy it contains is used for other metabolism when ATP reacts with OH groups and eventually splits into ADP and phosphate (at each stage of a metabolic pathway, some chemical energy is converted into heat).

Earth sciences

In geology, continental drift, mountain ranges, volcanoes, and earthquakes are phenomena that can be explained in terms of energy transformations in the Earth's interior,[57] while meteorological phenomena like wind, rain, hail, snow, lightning, tornadoes, and hurricanes are all a result of energy transformations in our atmosphere brought about by solar energy.

Sunlight is the main input to Earth's energy budget, which accounts for its temperature and climate stability, after accounting for interaction with the atmosphere.[58] Sunlight may be stored as gravitational potential energy after it strikes the Earth, for example when water evaporates from oceans and is deposited upon mountains (where, after being released at a hydroelectric dam, it can be used to drive turbines or generators to produce electricity).[59] An example of a solar-mediated weather event is a hurricane, which occurs when large unstable areas of warm ocean, heated over months, suddenly give up some of their thermal energy to power a few days of violent air movement.[60]

In a slower process, radioactive decay of atoms in the core of the Earth releases heat, which supplies more than half of the planet's internal heat budget.[61] In the present day, this radiogenic heat production is driven primarily by the decay of uranium-235, potassium-40, and thorium-232.[62] This thermal energy drives plate tectonics and may lift mountains, via orogenesis. This slow lifting represents a kind of gravitational potential energy storage of the thermal energy, which may later be transformed into active kinetic energy during landslides, after a triggering event. Earthquakes also release stored elastic potential energy in rocks, a store that has been produced ultimately from the same radioactive heat sources. Thus, according to present understanding, familiar events such as landslides and earthquakes release energy that has been stored as potential energy in the Earth's gravitational field or elastic strain (mechanical potential energy) in rocks.[63] Prior to this, they represent release of energy that has been stored in heavy atoms since the collapse of long-destroyed supernova stars (which created these atoms).[64]

Early in a planet's history, the accretion process provides impact energy that can partially or completely melt the body. This allows a planet to become differentiated by chemical element. Chemical phase changes of minerals during formation provide additional internal heating. Over time the internal heat is brought to the surface then radiated away into space, cooling the body. Accreted radiogenic heat sources settle toward the core, providing thermal energy to the planet on a geologic time scale.[65] Ongoing sedimentation provides a persistent internal energy source for gas giant planets like Jupiter and Saturn.[66]

Cosmology

In cosmology and astronomy the phenomena of stars, novae, supernovae, quasars, and gamma-ray bursts are the universe's highest-output energy transformations of matter. All stellar phenomena (including solar activity) are driven by various kinds of energy transformations. Energy in such transformations is either from gravitational collapse of matter (usually molecular hydrogen) into various classes of astronomical objects (stars, black holes, etc.), or from nuclear fusion (of lighter elements, primarily hydrogen).[67]

The nuclear fusion of hydrogen in the Sun also releases another store of potential energy which was created at the time of the Big Bang. At that time, according to theory, space expanded and the universe cooled too rapidly for hydrogen to completely fuse into heavier elements. This means that hydrogen represents a store of potential energy that can be released by fusion. Such a fusion process is triggered by heat and pressure generated from gravitational collapse of hydrogen clouds when they produce stars, and some of the fusion energy is then transformed into sunlight.[68]

The accretion of matter onto a compact object is a very efficient means of generating energy from gravitational potential. This behavior is responsible for some of the universe's brightest persistent energy sources.[69] The Penrose process is a theoretical method by which energy could be extracted from a rotating black hole.[70] Hawking radiation is the emission of black-body radiation from a black hole, which results in a steady loss of mass and rotational energy. As the object evaporates, the temperature of this radiation is predicted to increase, speeding up the process.[71]

Quantum mechanics

In quantum mechanics, energy is defined in terms of the energy operator (Hamiltonian) as a time derivative of the wave function. The Schrödinger equation equates the energy operator to the full energy of a particle or a system. Its results can be considered as a definition of measurement of energy in quantum mechanics. The Schrödinger equation describes the space- and time-dependence of a slowly changing (non-relativistic) wave function of quantum systems. The solution of this equation for a bound system is discrete (a set of permitted states, each characterized by an energy level) which results in the concept of quanta.[72]

In the solution of the Schrödinger equation for any oscillator (vibrator) and for electromagnetic waves in a vacuum, the resulting energy states are related to the frequency by the Planck relation: $E = h\nu$, where $h$ is the Planck constant and $\nu$ the frequency. In the case of an electromagnetic wave these energy states are called quanta of light or photons. For matter waves, the de Broglie relation yields $\lambda = h/p$, where $\lambda$ is the wavelength and $p$ is the momentum.[73]
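To give a sense of scale for the Planck relation, the following sketch computes the energy of a single photon from its frequency or wavelength, and the de Broglie wavelength of a matter wave from its momentum. The de Broglie relation is used here in its standard form (wavelength equals the Planck constant divided by momentum); the function names and the 550 nm example are illustrative assumptions.

```python
H = 6.62607015e-34    # Planck constant in J s (exact in the 2019 SI)
C = 299_792_458.0     # speed of light in vacuum in m/s (exact)
EV = 1.602176634e-19  # one electronvolt in joules


def photon_energy(frequency_hz):
    """Planck relation: energy of one quantum at the given frequency."""
    return H * frequency_hz


def photon_energy_from_wavelength(wavelength_m):
    """Same relation with the frequency written as c / wavelength."""
    return H * C / wavelength_m


def de_broglie_wavelength(momentum_kg_m_s):
    """Standard de Broglie relation: wavelength = h / p."""
    return H / momentum_kg_m_s


if __name__ == "__main__":
    e_green = photon_energy_from_wavelength(550e-9)  # green light
    print(f"{e_green:.3e} J = {e_green / EV:.2f} eV")
```

A visible-light photon carries a few electronvolts, comparable to the energies of chemical bonds.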

Relativity

When calculating kinetic energy (work to accelerate a massive body from zero speed to some finite speed) relativistically – using Lorentz transformations instead of Newtonian mechanics – Einstein discovered an unexpected by-product of these calculations to be an energy term which does not vanish at zero speed. He called it rest energy: energy which every massive body must possess even when being at rest. The amount of energy is directly proportional to the mass of the body:[74]

$$E_0 = m_0 c^2,$$

where

- $m_0$ is the rest mass of the body,

- $c$ is the speed of light in vacuum,

- $E_0$ is the rest energy.

For example, consider electron–positron annihilation, in which the rest energy of these two individual particles (equivalent to their rest mass) is converted to the radiant energy of the photons produced in the process. In this system the matter and antimatter (electrons and positrons) are destroyed and changed to non-matter (the photons). However, the total mass and total energy do not change during this interaction. The photons each have no rest mass but nonetheless have radiant energy which exhibits the same inertia as did the two original particles. This is a reversible process – the inverse process is called pair creation – in which the rest mass of the particles is created from a sufficiently energetic photon near a nucleus.[75]

Energy and mass are manifestations of one and the same underlying physical property of a system. This property is responsible for the inertia and strength of gravitational interaction of the system ("mass manifestations"),[76] and is also responsible for the potential ability of the system to perform work or heating ("energy manifestations"), subject to the limitations of other physical laws.

In classical physics, energy is a scalar quantity, the canonical conjugate to time. In special relativity energy is also a scalar (although not a Lorentz scalar but a time component of the energy–momentum 4-vector).[77] In other words, energy is invariant with respect to rotations of space, but not invariant with respect to rotations of spacetime (= boosts).

Transformation

| Type of transfer process | Description |
|---|---|
| Heat | equal amount of thermal energy in transit spontaneously towards a lower-temperature object |
| Work | equal amount of energy in transit due to a displacement in the direction of an applied force |
| Transfer of material | equal amount of energy carried by matter that is moving from one system to another |

Energy may be transformed between different forms at various efficiencies. Devices that usefully transform between these forms are called transducers. Examples of transducers include a battery (from chemical energy to electric energy), a dam (from gravitational potential energy to the kinetic energy of water spinning the blades of a turbine, and ultimately to electric energy through an electric generator), and a heat engine (from heat to work).[78][79]

Examples of energy transformation include generating electric energy from heat energy via a steam turbine,[79] or lifting an object against gravity using electrical energy driving a crane motor. Lifting against gravity performs mechanical work on the object and stores gravitational potential energy in the object. If the object falls to the ground, gravity does mechanical work on the object which transforms the potential energy in the gravitational field to the kinetic energy released as heat on impact with the ground.[80] The Sun transforms nuclear potential energy to other forms of energy; its total mass does not decrease due to that itself (since it still contains the same total energy even in different forms) but its mass does decrease when the energy escapes out to its surroundings, largely as radiant energy.[81]

There are strict limits to how efficiently heat can be converted into work in a cyclic process, e.g. in a heat engine, as described by Carnot's theorem and the second law of thermodynamics.[82] However, some energy transformations can be quite efficient.[83] The direction of transformations in energy (what kind of energy is transformed to what other kind) is often determined by entropy (equal energy spread among all available degrees of freedom) considerations. In practice all energy transformations are permitted on a sufficiently small scale, but certain larger transformations are highly improbable because it is statistically unlikely that energy or matter will randomly move into more concentrated forms or smaller spaces.[84]
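The limit set by Carnot's theorem can be made concrete with the standard expression for the maximum efficiency of a heat engine working between a hot and a cold reservoir, one minus the ratio of the cold to the hot absolute temperature. That formula is not stated in the text above and is supplied here as an assumption, as are the example temperatures.

```python
def carnot_efficiency(t_hot_k, t_cold_k):
    """Upper bound on heat-engine efficiency between two reservoirs.

    Temperatures are absolute (kelvin). Uses the standard Carnot
    expression 1 - T_cold / T_hot.
    """
    if t_cold_k <= 0 or t_hot_k <= t_cold_k:
        raise ValueError("require 0 < t_cold_k < t_hot_k (in kelvin)")
    return 1.0 - t_cold_k / t_hot_k


if __name__ == "__main__":
    # Hypothetical engine: 800 K heat source, 300 K surroundings.
    print(carnot_efficiency(800.0, 300.0))
```

Even an ideal engine between these two temperatures could convert no more than 62.5% of the heat it takes in into work; real engines achieve less.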

Energy transformations in the universe over time are characterized by various kinds of potential energy, available since the Big Bang, being "released" (transformed to more active types of energy such as kinetic or radiant energy) when a triggering mechanism is available.[85] Familiar examples of such processes include nucleosynthesis, a process ultimately using the gravitational potential energy released from the gravitational collapse of supernovae to "store" energy in the creation of heavy isotopes (such as uranium and thorium), and nuclear decay, a process in which energy is released that was originally stored in these heavy elements, before they were incorporated into the Solar System and the Earth.[86] This energy is triggered and released in nuclear fission bombs or in civil nuclear power generation. Similarly, in the case of a chemical explosion, chemical potential energy is transformed to kinetic and thermal energy in a very short time.[87]

Yet another example of energy transformation is that of a simple gravity pendulum. At its highest points the kinetic energy is zero and the gravitational potential energy is at its maximum. At its lowest point the kinetic energy is at its maximum and is equal to the decrease in potential energy. If one (unrealistically) assumes that there is no friction or other losses, the conversion of energy between these processes would be perfect, and the pendulum would continue swinging forever. Energy is transferred from potential energy ($E_p$) to kinetic energy ($E_k$) and then back to potential energy constantly. This is referred to as conservation of energy.

In this isolated system, energy cannot be created or destroyed; therefore, the initial energy and the final energy will be equal to each other. This can be demonstrated by the following:

$$E_{pi} + E_{ki} = E_{pF} + E_{kF}$$

The equation can then be simplified further since $E_p = mgh$ (mass times acceleration due to gravity times the height) and $E_k = \frac{1}{2}mv^2$ (half mass times velocity squared). Then the total amount of energy can be found by adding $E_p + E_k = E_\text{total}$.[88]
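The pendulum bookkeeping described above can be sketched in a few lines, assuming an ideal pendulum with no friction. The bob mass, release height, and the value g = 9.81 m/s² are illustrative assumptions.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2 (assumed value)


def potential_energy(mass_kg, height_m, g=G):
    """Gravitational potential energy m * g * h, in joules."""
    return mass_kg * g * height_m


def kinetic_energy(mass_kg, speed_m_s):
    """Kinetic energy (1/2) * m * v^2, in joules."""
    return 0.5 * mass_kg * speed_m_s ** 2


def speed_at_bottom(release_height_m, g=G):
    """Speed at the lowest point, from m*g*h = (1/2)*m*v^2."""
    return math.sqrt(2.0 * g * release_height_m)


if __name__ == "__main__":
    m, h = 1.0, 0.2                     # hypothetical 1 kg bob, 0.2 m rise
    e_top = potential_energy(m, h)      # all potential, bob at rest
    v = speed_at_bottom(h)
    e_bottom = kinetic_energy(m, v)     # all kinetic, height taken as zero
    print(e_top, e_bottom, v)
```

The total at the top (all potential) matches the total at the bottom (all kinetic), and the mass cancels out of the expression for the speed.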

Conservation of energy and mass in transformation

Within a gravitational field, both mass and energy give rise to a measurable weight when trapped in a system with zero momentum. The formula E = mc², derived by Albert Einstein (1905), quantifies this mass–energy equivalence between relativistic mass and energy within the concept of special relativity. In different theoretical frameworks, similar formulas were derived by J. J. Thomson (1881), Henri Poincaré (1900), Friedrich Hasenöhrl (1904), and others (see Mass–energy equivalence for further information).

Part of the rest energy (equivalent to rest mass) of matter may be converted to other forms of energy (still exhibiting mass), but neither energy nor mass can be destroyed; rather, both remain constant during any process. However, since $c^2$ is extremely large relative to ordinary human scales, the conversion of an everyday amount of rest mass from rest energy to other forms of energy (such as kinetic energy, thermal energy, or the radiant energy carried by light and other radiation) can liberate tremendous amounts of energy, as can be seen in nuclear reactors and nuclear weapons.[89] For example, 1 kg of rest mass equals about 9 × 10¹⁶ joules, equivalent to 21.5 megatonnes of TNT.[90]

Conversely, the mass equivalent of an everyday amount of energy is minuscule. Examples of large-scale transformations between the rest energy of matter and other forms of energy are found in nuclear physics and particle physics. The complete conversion of matter, such as atoms, to non-matter, such as photons, occurs during interaction with antimatter.[91]
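Both directions of the mass–energy equivalence can be checked numerically. The sketch below assumes the conventional definition of one megatonne of TNT as 4.184 × 10¹⁵ J; the function names and the one-kilowatt-hour example are illustrative.

```python
C = 299_792_458.0               # speed of light in vacuum in m/s (exact)
J_PER_MEGATONNE_TNT = 4.184e15  # conventional TNT equivalent (assumed)


def rest_energy(mass_kg):
    """Energy equivalent of a rest mass, E = m * c^2, in joules."""
    return mass_kg * C ** 2


def mass_equivalent(energy_j):
    """Mass equivalent of an amount of energy, m = E / c^2, in kg."""
    return energy_j / C ** 2


if __name__ == "__main__":
    e_1kg = rest_energy(1.0)
    print(f"{e_1kg:.3e} J = {e_1kg / J_PER_MEGATONNE_TNT:.1f} Mt of TNT")
    # An everyday amount of energy: one kilowatt-hour (3.6e6 J).
    print(f"{mass_equivalent(3.6e6):.2e} kg")
```

One kilogram corresponds to roughly 9 × 10¹⁶ J, while a kilowatt-hour corresponds to a few hundredths of a microgram, which is why mass changes go unnoticed in everyday energy transformations.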

Reversible and non-reversible transformations

Thermodynamics divides energy transformation into two kinds: reversible processes and irreversible processes. An irreversible process is one in which energy is dissipated (spread) into empty energy states available in a volume, from which it cannot be recovered into more concentrated forms (fewer quantum states), without degradation of even more energy. A reversible process is one in which this sort of dissipation does not happen. For example, conversion of energy from one type of potential field to another is reversible, as in the pendulum system described above.[92]

At the atomic scale, thermal energy is present in the form of motion and vibrations of individual atoms and molecules. When heat is generated, radiation excites lower energy states of these atoms and their surrounding fields. This heating process acts as a reservoir for part of the applied energy, from which it cannot be converted with 100% efficiency into other forms of energy.[93] According to the second law of thermodynamics, this heat can only be completely recovered as usable energy at the price of an increase in some other kind of heat-like disorder in quantum states.

As the universe evolves with time, more and more of its energy becomes trapped in irreversible states (i.e., as heat or as other kinds of increases in disorder). This has led to the hypothesis of the inevitable thermodynamic heat death of the universe. In this heat death the energy of the universe does not change, but the fraction of energy which is available to do work through a heat engine, or be transformed to other usable forms of energy (through the use of generators attached to heat engines), continues to decrease.[94]

Conservation of energy

The fact that energy can be neither created nor destroyed is called the law of conservation of energy. In the form of the first law of thermodynamics, this states that a closed system's energy is constant unless energy is transferred in or out as work or heat, and that no energy is lost in transfer. The total inflow of energy into a system must equal the total outflow of energy from the system, plus the change in the energy contained within the system. Whenever one measures (or calculates) the total energy of a system of particles whose interactions do not depend explicitly on time, it is found that the total energy of the system always remains constant.[95]

While heat can always be fully converted into work in a reversible isothermal expansion of an ideal gas, for cyclic processes of practical interest in heat engines the second law of thermodynamics states that the system doing work always loses some energy as waste heat. This creates a limit to the amount of heat energy that can do work in a cyclic process, a limit called the available energy. Mechanical and other forms of energy can be transformed in the other direction into thermal energy without such limitations.[96] The total energy of a system can be calculated by adding up all forms of energy in the system.
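The text does not give a formula for this limit. One standard way to quantify it, assumed here, is the Carnot efficiency of an ideal reversible engine working between a hot reservoir at temperature T_hot and a cold reservoir at T_cold (both in kelvin). The sketch below uses that bound; real engines fall below it, and the function names and temperatures are illustrative.

```python
def carnot_efficiency(t_hot_k, t_cold_k):
    """Upper bound on the fraction of heat convertible to work in a cycle: 1 - T_cold / T_hot."""
    if t_cold_k <= 0 or t_hot_k <= t_cold_k:
        raise ValueError("require 0 < t_cold_k < t_hot_k (temperatures in kelvin)")
    return 1.0 - t_cold_k / t_hot_k


def max_work_and_waste_heat(heat_in_j, t_hot_k, t_cold_k):
    """Split the heat input into the largest possible work and the unavoidable waste heat."""
    work = heat_in_j * carnot_efficiency(t_hot_k, t_cold_k)
    return work, heat_in_j - work


# Illustrative values: 1000 J of heat drawn at 600 K, rejected at 300 K.
work, waste = max_work_and_waste_heat(1000.0, 600.0, 300.0)
print(f"maximum work: {work:.1f} J, waste heat: {waste:.1f} J")
```

The work and waste heat always add up to the heat input, so energy is conserved even though only part of it is available to do work.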

Richard Feynman said during a 1961 lecture:[97]

There is a fact, or if you wish, a law, governing all natural phenomena that are known to date. There is no known exception to this law – it is exact so far as we know. The law is called the conservation of energy. It states that there is a certain quantity, which we call energy, that does not change in manifold changes which nature undergoes. That is a most abstract idea, because it is a mathematical principle; it says that there is a numerical quantity which does not change when something happens. It is not a description of a mechanism, or anything concrete; it is just a strange fact that we can calculate some number and when we finish watching nature go through her tricks and calculate the number again, it is the same.

Most kinds of energy (with gravitational energy being a notable exception)[98] are subject to strict local conservation laws as well. In this case, energy can only be exchanged between adjacent regions of space, and all observers agree as to the volumetric density of energy in any given space. There is also a global law of conservation of energy, stating that the total energy of the universe cannot change; this is a corollary of the local law, but not vice versa.[96][97]

This law is a fundamental principle of physics. As shown rigorously by Noether's theorem, the conservation of energy is a mathematical consequence of translational symmetry of time,[99] a property of most phenomena below the cosmic scale that makes them independent of their locations on the time coordinate. Put differently, yesterday, today, and tomorrow are physically indistinguishable. This is because energy is the quantity which is canonically conjugate to time. This mathematical entanglement of energy and time also results in the uncertainty principle: it is impossible to define the exact amount of energy during any definite time interval (though this is practically significant only for very short time intervals). The uncertainty principle should not be confused with energy conservation; rather, it provides mathematical limits to which energy can in principle be defined and measured.

Each of the basic forces of nature is associated with a different type of potential energy, and all types of potential energy (like all other types of energy) appear as system mass, whenever present. For example, a compressed spring will be slightly more massive than before it was compressed. Likewise, whenever energy is transferred between systems by any mechanism, an associated mass is transferred with it.[100]

In quantum mechanics energy is expressed using the Hamiltonian operator. On any time scale, the uncertainty in the energy is given by

$$\Delta E \, \Delta t \geq \frac{\hbar}{2}$$

which is similar in form to the Heisenberg uncertainty principle,[101] but not really mathematically equivalent thereto, since E and t are not dynamically conjugate variables, neither in classical nor in quantum mechanics.[102]

In particle physics, this inequality permits a qualitative understanding of virtual particles, which carry momentum.[102] The exchange of virtual particles with real particles is responsible for the creation of all known fundamental forces (more accurately known as fundamental interactions).[103][101] Virtual photons are also responsible for the electrostatic interaction between electric charges (which results in Coulomb's law),[103][336] for spontaneous radiative decay of excited atomic and nuclear states, for the Casimir force,[104] for the Van der Waals force,[105] and some other observable phenomena.[106]
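To give a feel for the magnitudes involved, the sketch below assumes the energy–time relation takes the standard form ΔE Δt ≥ ħ/2 and evaluates the smallest energy spread compatible with a few time intervals. The chosen intervals and the function name are illustrative assumptions.

```python
HBAR = 1.054_571_817e-34  # reduced Planck constant, J*s (CODATA 2018 value)
EV = 1.602_176_634e-19    # joules per electronvolt (exact by SI definition)


def min_energy_uncertainty(delta_t_s):
    """Smallest energy spread allowed by dE * dt >= hbar / 2, in joules."""
    if delta_t_s <= 0:
        raise ValueError("delta_t_s must be positive")
    return HBAR / (2.0 * delta_t_s)


# Illustrative time intervals: a nanosecond, a femtosecond, a zeptosecond.
for dt in (1e-9, 1e-15, 1e-21):
    de = min_energy_uncertainty(dt)
    print(f"dt = {dt:.0e} s  ->  dE >= {de:.3e} J = {de / EV:.3e} eV")
```

The bound only becomes comparable to atomic energy scales (around an electronvolt) for intervals near a femtosecond or shorter, consistent with the remark that the effect matters in practice only for very short times.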

Energy transfer

Closed systems

Energy transfer can be considered for the special case of systems which are closed to transfers of matter. The portion of the energy which is transferred by conservative forces over a distance is measured as the work the source system does on the receiving system. The portion of the energy which does not do work during the transfer is called heat.[note 3] Energy can be transferred between systems in a variety of ways. Examples include the transmission of electromagnetic energy via photons, physical collisions which transfer kinetic energy,[note 4] tidal interactions,[107] and the conductive transfer of thermal energy.[108]

Energy is strictly conserved and is also locally conserved wherever it can be defined. In thermodynamics, for closed systems, the process of energy transfer is described by the first law:[note 5][108]

$$\Delta E = W + Q$$

where $\Delta E$ is the amount of energy transferred, $W$ represents the work done on or by the system, and $Q$ represents the heat flow into or out of the system. As a simplification, the heat term, $Q$, can sometimes be ignored, especially for fast processes involving gases, which are poor conductors of heat, or when the thermal efficiency of the transfer is high. For such adiabatic processes,

$$\Delta E = W$$

This simplified equation is the one used to define the joule, for example.

Open systems

Beyond the constraints of closed systems, open systems can gain or lose energy in association with matter transfer. This process is illustrated by the injection of an air-fuel mixture into a car engine, a system which thereby gains energy without the addition of either work or heat. Denoting this energy by $E_\text{matter}$, one may write:[109]

$$\Delta E = W + Q + E_\text{matter}$$
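The bookkeeping for closed, adiabatic, and open systems can be sketched in a few lines. The example assumes the balance has the form ΔE = W + Q + E_matter, with work done on the system and heat flowing into it counted as positive, and with the matter term set to zero for a closed system. The function name and the numbers are illustrative.

```python
def energy_change(work_on_system_j, heat_into_system_j, energy_with_matter_j=0.0):
    """Change in a system's energy: dE = W + Q + E_matter (inputs to the system are positive)."""
    return work_on_system_j + heat_into_system_j + energy_with_matter_j


# Closed system: 150 J of work done on it while it loses 40 J as heat.
closed = energy_change(150.0, -40.0)

# Adiabatic process: the heat term is neglected, so the change equals the work.
adiabatic = energy_change(150.0, 0.0)

# Open system: the same work and heat, plus 500 J carried in with incoming matter.
open_system = energy_change(150.0, -40.0, energy_with_matter_j=500.0)

print(f"closed: {closed} J, adiabatic: {adiabatic} J, open: {open_system} J")
```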

Thermodynamics

Internal energy

Internal energy is the sum of all microscopic forms of energy of a system. It is the energy needed to create the system. It is related to the potential energy, e.g., molecular structure, crystal structure, and other geometric aspects, as well as the motion of the particles, in the form of kinetic energy. Thermodynamics is chiefly concerned with changes in internal energy and not its absolute value, which is impossible to determine with thermodynamics alone.[110]

First law of thermodynamics

The first law of thermodynamics asserts that the total energy of a system and its surroundings (but not necessarily thermodynamic free energy) is always conserved[111] and that heat flow is a form of energy transfer. For homogeneous systems, with a well-defined temperature and pressure, a commonly used corollary of the first law is that, for a system subject only to pressure forces and heat transfer (e.g., a cylinder-full of gas) without chemical changes, the differential change in the internal energy of the system (with a gain in energy signified by a positive quantity) is given as:[112]

$$\mathrm{d}E = T\,\mathrm{d}S - P\,\mathrm{d}V$$

where the first term on the right is the heat transferred into the system, expressed in terms of temperature T and entropy S (in which entropy increases and its change dS is positive when heat is added to the system), and the last term on the right hand side is identified as work done on the system, where pressure is P and volume V (the negative sign results since compression of the system requires work to be done on it and so the volume change, dV, is negative when work is done on the system).

This equation is highly specific: it ignores all chemical, electrical, nuclear, and gravitational forces, as well as effects such as advection of any form of energy other than heat and PV-work. The general formulation of the first law (i.e., conservation of energy) is valid even in situations in which the system is not homogeneous. For these cases the change in internal energy of a closed system is expressed in a general form by:[108]

$$\mathrm{d}E = \delta Q + \delta W$$

where $\delta Q$ is the heat supplied to the system and $\delta W$ is the work applied to the system.
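As a worked instance of the heat and work terms, the sketch below takes a reversible isothermal volume change of an ideal gas, an assumption chosen because both terms then have closed forms. With P = nRT/V, the heat supplied is T ΔS = nRT ln(V_f/V_i) and the work done on the gas is the negative of that, so the internal energy change is zero. The function name and the numerical values are illustrative.

```python
import math

R = 8.314_462_618  # molar gas constant, J/(mol*K)


def isothermal_ideal_gas(n_mol, temp_k, v_initial, v_final):
    """Reversible isothermal volume change of an ideal gas (assumed model).

    Returns (heat_in, work_on, delta_e) in joules, with heat into the gas
    and work done on the gas counted as positive.
    """
    log_ratio = math.log(v_final / v_initial)
    delta_s = n_mol * R * log_ratio             # entropy change, J/K
    heat_in = temp_k * delta_s                  # integral of T dS at constant T
    work_on = -n_mol * R * temp_k * log_ratio   # integral of -P dV with P = nRT/V
    return heat_in, work_on, heat_in + work_on


# Illustrative case: 1 mol at 300 K doubling its volume.
heat_in, work_on, delta_e = isothermal_ideal_gas(1.0, 300.0, 1.0, 2.0)
print(f"heat in: {heat_in:.1f} J, work on gas: {work_on:.1f} J, dE: {delta_e:.1e} J")
```

Only the ratio of the two volumes matters, so the volumes can be given in any consistent unit. The computed change in internal energy is zero up to floating-point rounding.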

Equipartition of energy

The energy of a mechanical harmonic oscillator (a mass on a spring) is alternately kinetic and potential energy. At two points in the oscillation cycle it is entirely kinetic, and at two points it is entirely potential.[88] Over a whole cycle, or over many cycles, average energy is equally split between kinetic and potential. This is an example of the equipartition principle: the total energy of a system with many degrees of freedom is equally split among all available degrees of freedom, on average.[113]
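The equal split can be checked numerically for an ideal, undamped mass on a spring. The sketch below samples one full cycle of the motion x(t) = A cos(ωt) and averages the kinetic and potential energies. The mass, spring constant, amplitude, and number of samples are illustrative assumptions.

```python
import math


def cycle_averages(mass_kg=1.0, spring_k=4.0, amplitude_m=0.1, steps=10_000):
    """Average kinetic and potential energy over one cycle of an undamped oscillator."""
    omega = math.sqrt(spring_k / mass_kg)  # angular frequency, rad/s
    period = 2.0 * math.pi / omega
    ke_sum = 0.0
    pe_sum = 0.0
    for i in range(steps):
        t = period * i / steps
        x = amplitude_m * math.cos(omega * t)           # displacement
        v = -amplitude_m * omega * math.sin(omega * t)  # velocity
        ke_sum += 0.5 * mass_kg * v * v
        pe_sum += 0.5 * spring_k * x * x
    return ke_sum / steps, pe_sum / steps


avg_ke, avg_pe = cycle_averages()
total = 0.5 * 4.0 * 0.1 ** 2  # total energy (1/2) k A^2 for the default values

print(f"average kinetic:   {avg_ke:.5f} J")
print(f"average potential: {avg_pe:.5f} J")
print(f"total energy:      {total:.5f} J")
```

Each average comes out at half of the total energy, while at any single instant the split ranges from entirely kinetic to entirely potential.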

This principle is vitally important to understanding the behavior of a quantity closely related to energy, called entropy. Entropy is a measure of evenness of a distribution of energy between parts of a system. When an isolated system is given more degrees of freedom (i.e., given new available energy states that are the same as existing states), then total energy spreads over all available degrees equally without distinction between "new" and "old" degrees. This mathematical result is part of the second law of thermodynamics. The second law of thermodynamics is simple only for systems which are near or in a physical equilibrium state. For non-equilibrium systems, the laws governing the systems' behavior are still debated. One of the guiding principles for these systems is the principle of maximum entropy production.[114][115] It states that nonequilibrium systems behave in such a way as to maximize their entropy production.[116]

See also

- Combustion

- Efficient energy use

- Energy democracy

- Energy crisis

- Energy recovery

- Energy recycling

- Index of energy articles

- Index of wave articles

- List of low-energy building techniques

- Orders of magnitude (energy)

- Power station

- Sustainable energy

- Transfer energy

- Waste-to-energy

- Waste-to-energy plant

- Zero-energy building

Notes

- ↑ These examples are solely for illustration, as it is not the energy available for work which limits the performance of the athlete but the power output (in case of a sprinter) and the force (in case of a weightlifter).

- ↑ Crystals are another example of highly ordered systems that exist in nature: in this case too, the order is associated with the transfer of a large amount of heat (known as the lattice energy) to the surroundings.

- ↑ Although heat is "wasted" energy for a specific energy transfer (see: waste heat), it can often be harnessed to do useful work in subsequent interactions. However, the maximum energy that can be "recycled" from such recovery processes is limited by the second law of thermodynamics.

- ↑ The mechanism for most macroscopic physical collisions is actually electromagnetic, but it is very common to simplify the interaction by ignoring the mechanism of collision and just calculate the beginning and end result.

- ↑ There are several sign conventions for this equation. Here, the signs in this equation follow the IUPAC convention.

References

- ↑ 1.0 1.1 Soman, K. (2010). International System Of Units: A Handbook On S.I. Unit For Scientists And Engineers. PHI Learning Pvt. Ltd.. p. 15. ISBN 978-81-203-3653-7. https://books.google.com/books?id=d-euEMU2cX8C&pg=PA15.

- ↑ 2.0 2.1 Dougherty, Heather N.; Schissler, Andrew P., eds (2020). SME Mining Reference Handbook (2nd ed.). Society for Mining, Metallurgy & Exploration. pp. 2–3. ISBN 978-0-87335-435-6. https://books.google.com/books?id=sVTHDwAAQBAJ&pg=PA2.

- ↑ 3.0 3.1 Mechtly, E. A. (1964). The International System of Units: Physical Constants and Conversion Factors. NASA SP. 7012. Scientific and Technical Information Division, National Aeronautics and Space Administration. p. 3. https://books.google.com/books?id=xFUCAAAAIAAJ&pg=PA3.

- ↑ Chandra, Sanjeev (2016). Energy, Entropy and Engines: An Introduction to Thermodynamics. John Wiley & Sons. p. 34. ISBN 978-1-119-01318-1. https://books.google.com/books?id=AKfQCwAAQBAJ&pg=PA34.

- ↑ 5.0 5.1 Fuller, A. J. Baden (2014). Hammon, P.. ed. Engineering Field Theory. The Commonwealth and international library. Applied electricity and electronics division. Elsevier. ISBN 978-1-4831-8700-6. https://books.google.com/books?id=IJKjBQAAQBAJ&pg=PA7.

- ↑ "Earth's energy flow". https://energyeducation.ca/encyclopedia/Earth%27s_energy_flow.

- ↑ Bobrowsky, Matt (2021). "SCIENCE 101: Q: What Is Energy?" (in en). Science and Children 59 (1): 61–65. doi:10.1080/19434812.2021.12291716. ISSN 0036-8148. https://www.jstor.org/stable/27133353. Retrieved February 5, 2024.

- ↑ "Nuclear Energy | Definition, Formula & Examples | nuclear-power.com" (in en-us). https://www.nuclear-power.com/nuclear-power/nuclear-energy/.

- ↑ Rosen, Marc A.; Dincer, Ibrahim (2007). Exergy: Energy, Environment and Sustainable Development. Elsevier. p. 3. ISBN 978-0-08-053135-9. https://books.google.com/books?id=ruR7U3IjrR0C&pg=PA3.

- ↑ Goel, Anup (2021). Systems in Mechanical Engineering: Fundamentals and Applications. Technical Publications. ISBN 978-93-332-2183-2. https://books.google.com/books?id=XjcfEAAAQBAJ&pg=SA1-PA20.

- ↑ Harper, Douglas. "Energy". Online Etymology Dictionary. http://www.etymonline.com/index.php?term=energy.

- ↑ Chen, Chung-Hwan (September 1956). "Different meanings of the term Energeia in the philosophy of Aristotle". Philosophy and Phenomenological Research 17 (1): 56–65. doi:10.2307/2104687.

- ↑ McDonough, Jeffrey K. (2021). "Leibniz's Philosophy of Physics". in Zalta, Edward N.. The Stanford Encyclopedia of Philosophy (Fall 2021 ed.). Metaphysics Research Lab, Stanford University. https://plato.stanford.edu/archives/fall2021/entries/leibniz-physics/. Retrieved 2025-07-25.

- ↑ "December 1706: Birth of Émilie du Châtelet". APS News. American Physical Society. December 1, 2008. https://www.aps.org/apsnews/2008/12/emilie-du-chatelet.

- ↑ Smith, Crosbie (1998). The Science of Energy – a Cultural History of Energy Physics in Victorian Britain. The University of Chicago Press. ISBN 978-0-226-76420-7.

- ↑ "Gustave-Gaspard Coriolis". Oxford Reference. https://www.oxfordreference.com/display/10.1093/oi/authority.20110803095639380.

- ↑ Young, John (April 13, 2015). "Heat, work and subtle fluids: a commentary on Joule (1850) 'On the mechanical equivalent of heat'". Philosophical Transactions A 373 (2039): 20140348. doi:10.1098/rsta.2014.0348. PMID 25750152. Bibcode: 2015RSPTA.37340348Y.

- ↑ Sarton, G. et al. (September 1929). "The Discovery of the Law of Conservation of Energy". Isis 13 (1): 18–44. doi:10.1086/346430.

- ↑ Williams, Richard (June 1, 2015). "June 1849: James Prescott Joule and the Mechanical Equivalent of Heat". APS News. American Physical Society. https://www.aps.org/apsnews/2015/06/joule-mechanical-equivalent-heat.

- ↑ McEvoy, Paul (2002). Classical Theory. Theory of interacting systems. 2. Microanalytix. p. 151. ISBN 978-1-930832-02-2. https://books.google.com/books?id=dj0wFIxn-PoC&pg=PA151.

- ↑ Fegley, Bruce; Osborne, Rose (2013). Practical Chemical Thermodynamics for Geoscientists. Academic Press. p. 1. ISBN 978-0-12-251100-4. https://books.google.com/books?id=Z8VNZNLNsTcC&pg=PA1.

- ↑ Grossinger, Richard (2012). Embryos, Galaxies, and Sentient Beings: How the Universe Makes Life. North Atlantic Books. p. 22. ISBN 978-1-58394-698-5. https://books.google.com/books?id=NThvbCJiqFUC&pg=PA22.

- ↑ "Josef Stefan". Catholic scientists of the past. Society of Catholic Scientists. https://catholicscientists.org/scientists-of-the-past/josef-stefan/.

- ↑ Lofts, G. et al. (2004). "11 – Mechanical Interactions". Jacaranda Physics 1 (2 ed.). Milton, Queensland, Australia: John Wiley & Sons Australia Limited. p. 286. ISBN 978-0-7016-3777-4.

- ↑ Foot, Robert (2002). Shadowlands: Quest for Mirror Matter in the Universe. Universal-Publishers. p. 114. ISBN 978-1-58112-645-7. https://books.google.com/books?id=3evE2K-ylVIC&pg=PA114.

- ↑ Egdall, Ira Mark (2014). Einstein Relatively Simple: Our Universe Revealed In Everyday Language. World Scientific. p. 99. ISBN 978-981-4525-61-9. https://books.google.com/books?id=KUG7CgAAQBAJ&pg=PA99.

- ↑ Kolek, Erik (2024). On the general, the special and the general-special relativity theory. Chronicles of Business Informatics Physics (CBIP). 1 (2nd ed.). BoD – Books on Demand. pp. 126–128. ISBN 978-3-7597-1218-9. https://books.google.com/books?id=85wWEQAAQBAJ&pg=PA127.

- ↑ Ruedenberg, Klaus; Schwarz, W. H. Eugen (February 13, 2013). "Three Millennia of Atoms and Molecules". Pioneers of Quantum Chemistry. ACS Symposium Series. 1122. American Chemical Society. pp. 1–45. doi:10.1021/bk-2013-1122.ch001. ISBN 978-0-8412-2716-3.

- ↑ Phillips, Lee (2024). Einstein's Tutor: The Story of Emmy Noether and the Invention of Modern Physics. PublicAffairs. ISBN 978-1-5417-0297-4. https://books.google.com/books?id=sbDrEAAAQBAJ&pg=PT280.

- ↑ Lipkin, Harry J. (2014). Quantum Mechanics: New Approaches to Selected Topics. Dover books on physics. Courier Corporation. ISBN 978-0-486-15185-4. https://books.google.com/books?id=pUpMBAAAQBAJ&pg=PA205.

- ↑ Pearle, P. (August 2000). "Wavefunction Collapse and Conservation Laws". Foundations of Physics 30 (8): 1145–1160. doi:10.1023/A:1003677103804. Bibcode: 2000FoPh...30.1145P.

- ↑ Carroll, S. M.; Lodman, J. (2021). "Energy Non-conservation in Quantum Mechanics". Foundations of Physics 51 (83). doi:10.1007/s10701-021-00490-5. Bibcode: 2021FoPh...51...83C.

- ↑ Thompson, Ambler; Taylor, Barry N. (March 2008). Guide for the Use of the International System of Units (SI). National Institute of Standards and Technology. https://physics.nist.gov/cuu/pdf/sp811.pdf. Retrieved 2025-09-16.

- ↑ Patience, Gregory S. (2013). Experimental Methods and Instrumentation for Chemical Engineers. Newnes. p. 9. ISBN 978-0-444-53805-5. https://books.google.com/books?id=nY-MQXBmZBAC&pg=PA9.

- ↑ Orecchini, Fabio; Naso, Vincenzo (2011). Energy Systems in the Era of Energy Vectors: A Key to Define, Analyze and Design Energy Systems Beyond Fossil Fuels. Springer Science & Business Media. pp. 6–8. ISBN 978-0-85729-244-5. https://books.google.com/books?id=vGiBKQ40c7QC&pg=PA7.

- ↑ Greiner, Walter (2006). Classical Mechanics: Point Particles and Relativity. Classical Theoretical Physics. Springer Science & Business Media. p. 109. ISBN 978-0-387-21851-9. https://books.google.com/books?id=CynrBwAAQBAJ&pg=PA109.

- ↑ Obodovskiy, Ilya (2019). Radiation: Fundamentals, Applications, Risks, and Safety. Elsevier. ISBN 978-0-444-63986-8. https://books.google.com/books?id=xmOMDwAAQBAJ&pg=PA74.

- ↑ "Chapter 16.3 – The Hamiltonian". MIT OpenCourseWare website 18.013A. https://ocw.mit.edu/ans7870/18/18.013a/textbook/HTML/chapter16/section03.html.

- ↑ Marchildon, Louis (2013). Quantum Mechanics: From Basic Principles to Numerical Methods and Applications. Advanced Texts in Physics. Springer Science & Business Media. p. 38. ISBN 978-3-662-04750-7. https://books.google.com/books?id=XibsCAAAQBAJ&pg=PA38.

- ↑ Widnall, S. (2009). "Lecture L20 - Energy Methods: Lagrange's Equations". MIT OpenCourseWare website 16.07 Dynamics. https://ocw.mit.edu/courses/16-07-dynamics-fall-2009/b39e882f1524a0f6a98553ee33ea6f35_MIT16_07F09_Lec20.pdf.

- ↑ Berman, Jules J. (2025). Proofs and Logical Arguments Supporting the Foundational Laws of Physics: A Handy Guide for Students and Scientists. CRC Press. pp. 144–146. ISBN 978-1-040-30073-2. https://books.google.com/books?id=LbM1EQAAQBAJ&pg=PA144.

- ↑ See chemical change in: Lewis, Robert A. (2016). Larrañaga, Michael D.; Lewis, Sr., Richard J.. eds. Hawley's Condensed Chemical Dictionary (16 ed.). John Wiley & Sons. ISBN 978-1-119-26784-3. https://books.google.com/books?id=KPrfCwAAQBAJ&pg=PA295.

- ↑ Kondepudi, Dilip; Prigogine, Ilya (2014). Modern Thermodynamics: From Heat Engines to Dissipative Structures (2nd ed.). John Wiley & Sons. pp. 248–250. ISBN 978-1-118-69870-9. https://books.google.com/books?id=SPU8BQAAQBAJ&pg=PA249.

- ↑ Hayamizu, Kohsuke (2017). "Amino Acids and Energy Metabolism: An Overview". in Bagchi, Debasis. Sustained Energy for Enhanced Human Functions and Activity. Academic Press. p. 339. ISBN 978-0-12-809332-0. https://books.google.com/books?id=5epGDgAAQBAJ&pg=PA339.

- ↑ Dimmitt, Mark A. (2016). "Plant ecology of the Sonoran desert region". in Malloy, Richard; Brock, John; Floyd, Anthony et al.. Design with the Desert: Conservation and Sustainable Development. CRC Press. pp. 154–155. ISBN 978-1-000-21884-8. https://books.google.com/books?id=Vef5DwAAQBAJ&pg=PA154.

- ↑ Sinn, Hans-Werner (2012). The Green Paradox: A Supply-Side Approach to Global Warming. MIT Press. ISBN 978-0-262-30058-2. https://books.google.com/books?id=NnwCVOso9vgC&pg=PA85.

- ↑ McNab, Brian K. (1997). "On the Utility of Uniformity in the Definition of Basal Rate of Metabolism". Physiological Zoology 70 (6): 718–720. doi:10.1086/515881. PMID 9361146.

- ↑ Byrne, Nuala M. et al. (September 2005). "Metabolic equivalent: one size does not fit all". Applied Physiology 99 (3): 1112–1119. doi:10.1152/japplphysiol.00023.2004. PMID 15831804.

- ↑ "Human Energy". Uic.edu. http://www.uic.edu/aa/college/gallery400/notions/human%20energy.htm.

- ↑ Bicycle calculator – speed, weight, wattage etc. "Bike Calculator". http://bikecalculator.com/..

- ↑ "How many calories should I eat in a day?". 12 February 2018. https://www.medicalnewstoday.com/articles/245588.

- ↑ Ito, Akihito; Oikawa, Takehisa (2004). "Global Mapping of Terrestrial Primary Productivity and Light-Use Efficiency with a Process-Based Model". in Shiyomi, Masae; Kawahata, Hodaka; Koizumi, Hiroshi et al.. Global Environmental Change in the Ocean and on Land. pp. 343–58. http://www.terrapub.co.jp/e-library/kawahata/pdf/343.pdf. Retrieved 2006-10-02.

- ↑ 53.0 53.1 Liesa, Marc et al. (2020). Arias, Irwin M.; Alter, Harvey J.; Boyer, James L. et al.. eds. The Liver: Biology and Pathobiology (6th ed.). John Wiley & Sons. pp. 86–87. ISBN 978-1-119-43682-9. https://books.google.com/books?id=80bLEAAAQBAJ&pg=PA87.

- ↑ Papachristodoulou, Despo et al. (2014). Biochemistry and Molecular Biology. OUP Oxford. ISBN 978-0-19-960949-9. https://books.google.com/books?id=oPtzBAAAQBAJ&pg=PA5.

- ↑ Cohen, Barbara Janson; Hull, Kerry L. (2020). Memmler's Structure & Function of the Human Body, Enhanced Edition (12th ed.). Jones & Bartlett Learning. p. 375. ISBN 978-1-284-59160-6.

- ↑ Lehninger, Albert L. (1960). "The Enzymic and Morphological Organization of the Mitochondria". Pediatrics 26 (3): 466–475. doi:10.1542/peds.26.3.466.

- ↑ "Earth's Energy Budget". Okfirst.ocs.ou.edu. http://okfirst.ocs.ou.edu/train/meteorology/EnergyBudget.html.

- ↑ Jackson, Richard E. (2019). Earth Science for Civil and Environmental Engineers. Cambridge University Press. pp. 123–125. ISBN 978-1-108-61581-5. https://books.google.com/books?id=VJiHDwAAQBAJ&pg=PA123.

- ↑ Demirel, Yaşar (2012). Energy: Production, Conversion, Storage, Conservation, and Coupling. Green Energy and Technology. Springer Science & Business Media. p. 305. ISBN 978-1-4471-2371-2. https://books.google.com/books?id=TsY8gJP7b58C&pg=PA305.

- ↑ Ahmadi, Pouria; Dincer, Ibrahim (2018). "Energy Optimization". in Dincer, Ibrahim. Comprehensive Energy Systems. 1. Elsevier. pp. 1139–1140. ISBN 978-0-12-814925-6. https://books.google.com/books?id=foxODwAAQBAJ&pg=RA1-PA1139.

- ↑ Dye, S. T. (September 2012). "Geoneutrinos and the radioactive power of the Earth". Reviews of Geophysics 50 (3). doi:10.1029/2012RG000400. Bibcode: 2012RvGeo..50.3007D.

- ↑ Nédélec, Anne (2025). Earth and Life: A History of Four Billion Years. Oxford University Press. pp. 64–66. ISBN 978-0-19-894543-7. https://books.google.com/books?id=ojZpEQAAQBAJ&pg=PA65.

- ↑ Kennett, Brian (2009). Seismic Wave Propagation in Stratified Media. DOAB Directory of Open Access Books. ANU E Press. p. 59. ISBN 978-1-921536-73-1. https://books.google.com/books?id=Gn89socs0RcC&pg=PA59.

- ↑ Manuel, Oliver K. (2007). Origin of Elements in the Solar System: Implications of Post-1957 Observations. Springer Science & Business Media. pp. 589–634. doi:10.1007/0-306-46927-8_44. ISBN 978-0-306-46927-5. https://books.google.com/books?id=h-zxBwAAQBAJ&pg=PA626.

- ↑ Condie, Kent C. (2005). Earth as an Evolving Planetary System. Elsevier. pp. 393–394. ISBN 978-0-08-049458-6. https://books.google.com/books?id=I_t-hUWi5I8C&pg=PA394.

- ↑ Mankovich, C.; Fortney, J. J. (December 2019). "Evidence for a Dichotomy in the Interior Structures of Jupiter and Saturn from Helium Phase Separation". The Astrophysical Journal 889 (1): 51. doi:10.3847/1538-4357/ab6210. Bibcode: 2019AGUFM.P24B..02M.

- ↑ Hogan, Craig J. (December 6, 2012). "Energy flow in the universe". in Crittenden, Robert G.; Turok, Neil G.. Structure Formation in the Universe. NASA Science Series. Kluwer Academic Publishers. pp. 283–292. ISBN 978-94-010-0540-1. https://books.google.com/books?id=HC7yCAAAQBAJ&pg=PA290.

- ↑ Rolfs, Claus E.; Rodney, William S. (1988). Cauldrons in the Cosmos: Nuclear Astrophysics. Theoretical Astrophysics. University of Chicago Press. p. 153. ISBN 978-0-226-72457-7. https://books.google.com/books?id=BHKLFPUS1RcC&pg=PA153.

- ↑ Longair, Malcolm S. (1996). Our Evolving Universe. CUP Archive. pp. 86−88. ISBN 978-0-521-55091-8. https://books.google.com/books?id=qyA4AAAAIAAJ&pg=PA86.

- ↑ Penrose, R.; Floyd, R. M. (February 1971). "Extraction of Rotational Energy from a Black Hole" (in en). Nature Physical Science 229 (6): 177–179. doi:10.1038/physci229177a0. ISSN 0300-8746. Bibcode: 1971NPhS..229..177P.

- ↑ Kreitler, Paul V. (2006). Trends in Black Hole Research. Nova Publishers. ISBN 978-1-59454-475-0. https://books.google.com/books?id=DGwYf8cOCq4C&pg=PA34.

- ↑ Parker, Michael A. (2018). Physics of Optoelectronics. Optical Science and Engineering. CRC Press. p. 299. ISBN 978-1-4200-2771-6. https://books.google.com/books?id=ReXLBQAAQBAJ&pg=PA299.

- ↑ Alenitsyn, Alexander G. et al. (2020). Concise Handbook of Mathematics and Physics. CRC Press. p. 462. ISBN 978-1-000-16152-6. https://books.google.com/books?id=51gMEAAAQBAJ&pg=PA462.

- ↑ Rahaman, Farook (2022). The Special Theory of Relativity: A Mathematical Approach (2 ed.). Springer Nature. p. 143. ISBN 978-981-19-0497-4. https://books.google.com/books?id=GL9pEAAAQBAJ&pg=PA143.

- ↑ Norton, Andrew (2021). Understanding the Universe: The Physics of the Cosmos from Quasars to Quarks. CRC Press. ISBN 978-1-000-38391-1. https://books.google.com/books?id=N1kkEAAAQBAJ&pg=PA119.

- ↑ Cao, Tian Yu (1998). Conceptual Developments of 20th Century Field Theories. Cambridge University Press. pp. 60–63. ISBN 978-0-521-63420-5. https://books.google.com/books?id=l4PtgYXpb_oC&pg=PA60.

- ↑ Misner, Charles W. et al. (1973). Gravitation. San Francisco: W. H. Freeman. ISBN 978-0-7167-0344-0.

- ↑ "Introduction to Vibration Energy Harvesting". Nonlinearity in Energy Harvesting Systems: Micro- and Nanoscale Applications. Springer. 2016. pp. 7–8. ISBN 978-3-319-20355-3. https://books.google.com/books?id=Zc15DQAAQBAJ&pg=PA8.

- ↑ 79.0 79.1 Stonier, Tom (2012). Information and the Internal Structure of the Universe: An Exploration into Information Physics. Springer Science & Business Media. pp. 96–98. ISBN 978-1-4471-3265-3. https://books.google.com/books?id=5FPlBwAAQBAJ&pg=PA96.

- ↑ Smith, K. M.; Holroyd, P. (2013). Hiller, N.. ed. Engineering Principles for Electrical Technicians. The Commonwealth and International Library: Electrical Engineering Division. Elsevier. pp. 63–64. ISBN 978-1-4831-4030-8. https://books.google.com/books?id=-D4fAwAAQBAJ&pg=PA63.

- ↑ Pinsonneault, Marc; Ryden, Barbara (2023). Stellar Structure and Evolution. The Ohio State astrophysics series. 2. Cambridge University Press. pp. 30. ISBN 978-1-108-83581-7. https://books.google.com/books?id=Fv-xEAAAQBAJ&pg=PA30.

- ↑ Fleck, Robert (2023). Entropy and the Second Law of Thermodynamics: ... or Why Things Tend to Go Wrong and Seem to Get Worse. Springer Nature. ISBN 978-3-031-34950-8. https://books.google.com/books?id=aDfZEAAAQBAJ&pg=PA64.

- ↑ Wald, Robert M. (1992). Space, Time, and Gravity: The Theory of the Big Bang and Black Holes. University of Chicago Press. ISBN 978-0-226-87029-8. https://books.google.com/books?id=sk5a8ieI91kC&pg=PA113.

- ↑ Dincer, Ibrahim; Rosen, Marc (2002). Thermal Energy Storage: Systems and Applications. John Wiley & Sons. ISBN 978-0-471-49573-4. https://books.google.com/books?id=EsfcWE5lX40C&pg=PA20.

- ↑ Neubauer, Raymond L. (2011). Evolution and the Emergent Self: The Rise of Complexity and Behavioral Versatility in Nature. Columbia University Press. pp. 263–266. ISBN 978-0-231-52168-0. https://books.google.com/books?id=5VH_hCSHMCkC&pg=PA263.

- ↑ Shahvisi, Arianne (2021). "Entropy Assymetry". in Knox, Eleanor; Wilson, Alastair. The Routledge Companion to Philosophy of Physics. Routledge Philosophy Companions. Routledge. ISBN 978-1-317-22713-7. https://books.google.com/books?id=dvc8EAAAQBAJ&pg=PT626.

- ↑ Baum, F. A.; Stanyukovich, K. P.; Shekhter, B. I. (December 1959). "Physics of an explosion". Arlington, VA: Defense Technical Information Center. https://apps.dtic.mil/sti/pdfs/AD0400151.pdf.

- ↑ 88.0 88.1 Vázquez, A. L.; Corona-Corona, G. (2018). "Period of the Simple Pendulum without Differential Equations". American Scientific Research Journal for Engineering, Technology, and Sciences 40: 125–131. https://core.ac.uk/download/pdf/235050526.pdf. Retrieved 2025-08-02.

- ↑ Ristinen, Robert A. et al. (2022). Energy and the Environment (4th ed.). John Wiley & Sons. pp. 8–9. ISBN 978-1-119-80025-5. https://books.google.com/books?id=o8V6EAAAQBAJ&pg=PA8.

- ↑ The energy from the rest mass is given by the mass-energy equivalence:

- E = mc² = 1 kg × (299,792,458 m/s)² ≈ 8.988×10¹⁶ J

- TNT energy = 4.184×10⁹ J per tonne of TNT

- E = (8.988×10¹⁶ J)/(4.184×10⁹ J/t) ≈ 21.5 megatonnes

- ↑ Schmidt, G. R. et al. (September 2000). "Antimatter Requirements and Energy Costs for Near-Term Propulsion Applications". Journal of Propulsion and Power 16 (5): 923. doi:10.2514/2.5661.

- ↑ Eu, Byung Chan; Al-ghoul, Mazen (2018). Chemical Thermodynamics: Reversible And Irreversible Thermodynamics (Second ed.). World Scientific Publishing Company. ISBN 978-981-322-607-4. https://books.google.com/books?id=uWdhDwAAQBAJ&pg=PT45.

- ↑ Avison, John (2014). The World of Physics (2nd ed.). Nelson Thornes. p. 414. ISBN 978-0-17-438733-6. https://books.google.com/books?id=DojwZzKAvN8C&pg=PA414.

- ↑ Luscombe, James (2018). Thermodynamics. CRC Press. pp. 60–62. ISBN 978-0-429-01788-9. https://books.google.com/books?id=fFwPEAAAQBAJ&pg=PA60.

- ↑ Kittel, Charles; Knight, Walter D.; Ruderman, Malvin A. (1965). Berkeley Physics Course. 1. McGraw-Hill.

- ↑ 96.0 96.1 The Laws of Thermodynamics, including careful definitions of energy, free energy, et cetera.

- ↑ 97.0 97.1 Feynman, Richard (1964). "Ch. 4: Conservation of Energy". The Feynman Lectures on Physics; Volume 1. US: Addison Wesley. ISBN 978-0-201-02115-8. https://feynmanlectures.caltech.edu/I_04.html#Ch4-S1-p2. Retrieved 2022-05-04.

- ↑ Byers, Nina (December 1996). "E. Noether's Discovery of the Deep Connection Between Symmetries and Conservation Laws". UCLA Physics & Astronomy. http://www.physics.ucla.edu/~cwp/articles/noether.asg/noether.html.

- ↑ "Time Invariance". EECS20N. Ptolemy Project. http://ptolemy.eecs.berkeley.edu/eecs20/week9/timeinvariance.html.

- ↑ Schmitz, Wouter (2019). Particles, Fields and Forces: A Conceptual Guide to Quantum Field Theory and the Standard Model. The Frontiers Collection. Springer. p. 245. ISBN 978-3-030-12878-4. https://books.google.com/books?id=wXeUDwAAQBAJ&pg=PA245.

- ↑ Wigner, E. P. (1997). "On the Time–Energy Uncertainty Relation". in Wightman, Arthur S. Part I: Particles and Fields. Part II: Foundations of Quantum Mechanics. Berlin, Heidelberg: Springer Berlin Heidelberg. pp. 538–548. doi:10.1007/978-3-662-09203-3_58. ISBN 978-3-642-08179-8. http://link.springer.com/10.1007/978-3-662-09203-3_58.

- ↑ 102.0 102.1 Desai, Bipin R. (2010). Quantum Mechanics with Basic Field Theory. Cambridge University Press. pp. 63–66. ISBN 978-0-521-87760-2. https://books.google.com/books?id=cScBCJ6wLpYC&pg=PA65.

- ↑ 103.0 103.1 Braibant, Sylvie; Giacomelli, Giorgio; Spurio, Maurizio (2011). Particles and Fundamental Interactions: An Introduction to Particle Physics. Undergraduate Lecture Notes in Physics. Springer Science & Business Media. ISBN 978-94-007-2463-1. https://books.google.com/books?id=0Pp-f0G9_9sC&pg=PA101.

- ↑ Madou, Marc J. (2011). Solid-State Physics, Fluidics, and Analytical Techniques in Micro- and Nanotechnology. CRC Press. p. 542. ISBN 978-1-4398-9534-4. https://books.google.com/books?id=sRvSBQAAQBAJ&pg=PA542.

- ↑ Volokitin, A. I.; Persson, B. N. J. (2007). "Theory of Noncontact Friction". in Gnecco, Enrico; Meyer, Ernst. Fundamentals of Friction and Wear on the Nanoscale. NanoScience and Technology. Springer Science & Business Media. p. 394. ISBN 978-3-540-36807-6. https://books.google.com/books?id=v2Pe5thhNiwC&pg=PA394.

- ↑ Scully, M.; Sokolov, A.; Svidzinsky, A. (2018). "Virtual photons: From the Lamb shift to black holes". Optics and Photonics News 29 (2): 34–40. doi:10.1364/OPN.29.2.000034. https://www.fonlo.org/2018/additionalreading/Scully-Marlan/OPN-VirtualPhotonsBH.pdf. Retrieved 2025-08-04.

- ↑ Jaffe, Robert L.; Taylor, Washington (2018). The Physics of Energy. Cambridge University Press. p. 611. ISBN 978-1-107-01665-1. https://books.google.com/books?id=drZDDwAAQBAJ&pg=PA611.

- ↑ 108.0 108.1 108.2 Borel, Lucien; Favrat, Daniel (2010). Thermodynamics and Energy Systems Analysis: From Energy to Exergy. Engineering Sciences. EPFL Press. ISBN 978-1-4398-3516-6. https://books.google.com/books?id=bnyCpHkqQ_0C&pg=PA16.

- ↑ Rathakrishnan, Ethirajan (2019). Applied Gas Dynamics (2nd ed.). John Wiley & Sons. pp. 12–13. ISBN 978-1-119-50038-4. https://books.google.com/books?id=esaKDwAAQBAJ&pg=PA12.

- ↑ Klotz, I.; Rosenberg, R. (2008). Chemical Thermodynamics – Basic Concepts and Methods (7th ed.). Wiley. p. 39.

- ↑ Kittel, Charles; Kroemer, Herbert (1980). Thermal Physics. New York: W. H. Freeman. ISBN 978-0-7167-1088-2.

- ↑ Chen, Long-Qing (2022). Thermodynamic Equilibrium and Stability of Materials. Springer Nature. pp. 30–31. ISBN 978-981-13-8691-6. https://books.google.com/books?id=82RXEAAAQBAJ&pg=PA30.

- ↑ Dill, Ken; Bromberg, Sarina (2010). Molecular Driving Forces: Statistical Thermodynamics in Biology, Chemistry, Physics, and Nanoscience (2nd ed.). Garland Science. pp. 212–213. ISBN 978-1-1366-7299-6. https://books.google.com/books?id=1gYPBAAAQBAJ&pg=PA212.

- ↑ Onsager, L. (1931). "Reciprocal relations in irreversible processes". Physical Review 37 (4): 405–26. doi:10.1103/PhysRev.37.405. Bibcode: 1931PhRv...37..405O.

- ↑ Martyushev, L. M.; Seleznev, V. D. (2006). "Maximum entropy production principle in physics, chemistry and biology". Physics Reports 426 (1): 1–45. doi:10.1016/j.physrep.2005.12.001. Bibcode: 2006PhR...426....1M.

- ↑ Belkin, A.; Hubler, A.; Bezryadin, A. (2015). "Self-Assembled Wiggling Nano-Structures and the Principle of Maximum Entropy Production". Scientific Reports 5 (1): 8323. doi:10.1038/srep08323. PMID 25662746. Bibcode: 2015NatSR...5.8323B.

External links

- Differences between Heat and Thermal energy – BioCab

- The Journal of Energy History / Revue d'histoire de l'énergie (JEHRHE), 2018–
