Double descent

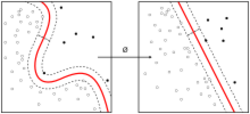

Double descent in statistics and machine learning is the phenomenon where a model's error rate on the test set initially decreases with the number of parameters, then peaks, then decreases again.[2] This phenomenon has been considered surprising, as it contradicts assumptions about overfitting in classical machine learning.[3]

The increase usually occurs near the interpolation threshold, where the number of parameters equals the number of training data points, so that the model is just large enough to fit the training data. More precisely, the threshold lies where the number of training samples matches the maximum number of samples on which the model and training procedure achieve, on average, approximately zero training error.[4]
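The interpolation threshold can be illustrated with a minimal Python sketch. It assumes a plain least-squares model fitted on the first `p` of several random Gaussian features, with pure-noise labels so that fitting the training set amounts to memorizing it. The sizes, the tolerance `tol`, and the helper names `training_mse` and `interpolation_threshold` are hypothetical choices made for illustration only.

```python
import numpy as np


def training_mse(p, X, y):
    """Training MSE of least squares restricted to the first p features."""
    # lstsq returns the ordinary least-squares fit when p <= n and the
    # minimum-norm interpolating solution when p > n.
    coef, *_ = np.linalg.lstsq(X[:, :p], y, rcond=None)
    return float(np.mean((X[:, :p] @ coef - y) ** 2))


def interpolation_threshold(X, y, tol=1e-10):
    """Smallest number of features whose training MSE falls below tol."""
    for p in range(1, X.shape[1] + 1):
        if training_mse(p, X, y) < tol:
            return p
    return None  # the model never interpolates the training data


rng = np.random.default_rng(0)
n_train, n_features = 30, 60            # illustrative sizes (assumption)
X = rng.standard_normal((n_train, n_features))
y = rng.standard_normal(n_train)        # pure-noise labels (assumption)

threshold = interpolation_threshold(X, y)
print("training points:", n_train, "interpolation threshold:", threshold)
```

For generic data of this kind, the reported threshold is expected to coincide with the number of training points, since `n_train` linearly independent features suffice to fit `n_train` arbitrary labels exactly.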

History

Early observations of what would later be called double descent in specific models date back to 1989.[5][6]

The term "double descent" was coined by Belkin et al.[7] in 2019,[3] when the phenomenon gained popularity as a broader concept exhibited by many models.[8][9] The latter development was prompted by a perceived contradiction between the conventional wisdom that too many parameters in the model result in a significant overfitting error (an extrapolation of the bias–variance tradeoff),[10] and the empirical observations in the 2010s that some modern machine learning techniques tend to perform better with larger models.[7][11]

Theoretical models

Double descent occurs in linear regression with isotropic Gaussian covariates and isotropic Gaussian noise.[12]
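As an illustration of this setting, the following Python sketch simulates minimum-norm least squares with isotropic Gaussian covariates and noise while the number of training samples `n` varies. It compares the simulated excess risk with a closed-form expression commonly quoted for this model. The dimension `d = 50`, the noise level `0.2`, the unit-norm signal, the number of trials, and both function names are assumptions chosen for illustration; they are not taken from the cited work, and the closed form should be checked against the literature before being relied upon.

```python
import numpy as np


def simulated_excess_risk(n, d=50, noise_std=0.2, n_trials=200, seed=0):
    """Average excess risk of minimum-norm least squares over random draws."""
    rng = np.random.default_rng(seed)
    beta = np.zeros(d)
    beta[0] = 1.0  # unit-norm signal; its direction is irrelevant by symmetry
    risks = []
    for _ in range(n_trials):
        X = rng.standard_normal((n, d))                  # isotropic covariates
        y = X @ beta + noise_std * rng.standard_normal(n)
        beta_hat = np.linalg.pinv(X) @ y                 # minimum-norm solution
        # With identity covariance, the population excess risk of a linear
        # predictor equals the squared distance between the coefficients.
        risks.append(np.sum((beta_hat - beta) ** 2))
    return float(np.mean(risks))


def theoretical_excess_risk(n, d=50, noise_std=0.2, beta_norm=1.0):
    """Closed-form expected excess risk (assumed form for Gaussian designs)."""
    if n <= d - 2:   # more parameters than samples: bias plus variance
        return beta_norm**2 * (1 - n / d) + noise_std**2 * n / (d - n - 1)
    if n >= d + 2:   # more samples than parameters: variance only
        return noise_std**2 * d / (n - d - 1)
    return float("inf")  # the expectation is not finite when |n - d| <= 1


if __name__ == "__main__":
    for n in [10, 25, 40, 50, 60, 100, 200]:
        print(n, simulated_excess_risk(n), theoretical_excess_risk(n))
```

Under these assumptions, the risk falls as `n` grows toward `d`, rises sharply near `n = d`, and falls again for `n > d`. Because the expected risk is not finite at `n = d`, the simulated average there is large and depends strongly on the seed.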

A model of double descent at the thermodynamic limit has been analyzed using the replica trick, and the result has been confirmed numerically.[13]

A number of works[14][15] have suggested that double descent can be explained using the concept of effective dimension: while a network may have a large number of parameters, in practice only a subset of those parameters is relevant for generalization performance, as measured by the local Hessian curvature. This explanation is formalized through PAC-Bayes compression-based generalization bounds,[16] which show that less complex models are expected to generalize better under a Solomonoff prior.
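The idea of an effective dimension can be sketched in Python. The sketch assumes one common definition, in which each Hessian eigenvalue λ contributes λ / (λ + α) to the count. It uses an overparameterized linear model as a stand-in for a network, because the Hessian of its squared-error loss has a simple closed form. The regularization constant `alpha`, the problem sizes, and the function name `effective_dimensionality` are illustrative assumptions rather than values from the cited works.

```python
import numpy as np


def effective_dimensionality(hessian, alpha=1.0):
    """Sum of lambda / (lambda + alpha) over the Hessian eigenvalues."""
    eigvals = np.linalg.eigvalsh(hessian)    # Hessian is symmetric
    eigvals = np.clip(eigvals, 0.0, None)    # remove tiny negative round-off
    return float(np.sum(eigvals / (eigvals + alpha)))


rng = np.random.default_rng(0)
n_samples, n_params = 20, 200                # overparameterized (assumption)
X = rng.standard_normal((n_samples, n_params))

# Hessian of the loss (1 / (2 n)) * ||X w - y||^2 with respect to w.
hessian = X.T @ X / n_samples

n_eff = effective_dimensionality(hessian)
print("parameters:", n_params, "effective dimensionality:", n_eff)
```

In this example the Hessian has at most `n_samples` non-zero eigenvalues, so the effective dimensionality stays below the number of training points even though the model has ten times as many parameters.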

See also

- Grokking (machine learning)

References

- ↑ Rocks, Jason W. (2022). "Memorizing without overfitting: Bias, variance, and interpolation in overparameterized models". Physical Review Research 4 (1). doi:10.1103/PhysRevResearch.4.013201. PMID 36713351. Bibcode: 2022PhRvR...4a3201R.

- ↑ "Deep Double Descent" (in en). 2019-12-05. https://openai.com/blog/deep-double-descent/.

- ↑ 3.0 3.1 Schaeffer, Rylan; Khona, Mikail; Robertson, Zachary; Boopathy, Akhilan; Pistunova, Kateryna; Rocks, Jason W.; Fiete, Ila Rani; Koyejo, Oluwasanmi (2023-03-24). "Double Descent Demystified: Identifying, Interpreting & Ablating the Sources of a Deep Learning Puzzle". arXiv:2303.14151v1 [cs.LG].

- ↑ Nakkiran, Preetum; Kaplun, Gal; Bansal, Yamini; Yang, Tristan; Barak, Boaz; Sutskever, Ilya (2019-12-04). "Deep Double Descent: Where Bigger Models and More Data Hurt". arXiv:1912.02292 [cs.LG].

- ↑ Vallet, F.; Cailton, J.-G.; Refregier, Ph (June 1989). "Linear and Nonlinear Extension of the Pseudo-Inverse Solution for Learning Boolean Functions" (in en). Europhysics Letters 9 (4): 315. doi:10.1209/0295-5075/9/4/003. ISSN 0295-5075. Bibcode: 1989EL......9..315V. https://dx.doi.org/10.1209/0295-5075/9/4/003.

- ↑ Loog, Marco; Viering, Tom; Mey, Alexander; Krijthe, Jesse H.; Tax, David M. J. (2020-05-19). "A brief prehistory of double descent" (in en). Proceedings of the National Academy of Sciences 117 (20): 10625–10626. doi:10.1073/pnas.2001875117. ISSN 0027-8424. PMID 32371495. Bibcode: 2020PNAS..11710625L.

- ↑ 7.0 7.1 Belkin, Mikhail; Hsu, Daniel; Ma, Siyuan; Mandal, Soumik (2019-08-06). "Reconciling modern machine learning practice and the bias-variance trade-off". Proceedings of the National Academy of Sciences 116 (32): 15849–15854. doi:10.1073/pnas.1903070116. ISSN 0027-8424. PMID 31341078.

- ↑ Spigler, Stefano; Geiger, Mario; d'Ascoli, Stéphane; Sagun, Levent; Biroli, Giulio; Wyart, Matthieu (2019-11-22). "A jamming transition from under- to over-parametrization affects loss landscape and generalization". Journal of Physics A: Mathematical and Theoretical 52 (47): 474001. doi:10.1088/1751-8121/ab4c8b. ISSN 1751-8113.

- ↑ Viering, Tom; Loog, Marco (2023-06-01). "The Shape of Learning Curves: A Review". IEEE Transactions on Pattern Analysis and Machine Intelligence 45 (6): 7799–7819. doi:10.1109/TPAMI.2022.3220744. ISSN 0162-8828. PMID 36350870. Bibcode: 2023ITPAM..45.7799V.

- ↑ Geman, Stuart; Bienenstock, Élie; Doursat, René (1992). "Neural networks and the bias/variance dilemma". Neural Computation 4: 1–58. doi:10.1162/neco.1992.4.1.1. http://web.mit.edu/6.435/www/Geman92.pdf.

- ↑ Nakkiran, Preetum; Kaplun, Gal; Bansal, Yamini; Yang, Tristan; Barak, Boaz; Sutskever, Ilya (2021-12-29). "Deep double descent: where bigger models and more data hurt". Journal of Statistical Mechanics: Theory and Experiment (IOP Publishing Ltd and SISSA Medialab srl) 2021 (12): 124003. doi:10.1088/1742-5468/ac3a74. Bibcode: 2021JSMTE2021l4003N.

- ↑ Nakkiran, Preetum (2019-12-16). "More Data Can Hurt for Linear Regression: Sample-wise Double Descent". arXiv:1912.07242v1 [stat.ML].

- ↑ Advani, Madhu S.; Saxe, Andrew M.; Sompolinsky, Haim (2020-12-01). "High-dimensional dynamics of generalization error in neural networks". Neural Networks 132: 428–446. doi:10.1016/j.neunet.2020.08.022. ISSN 0893-6080. PMID 33022471.

- ↑ Maddox, Wesley J.; Benton, Gregory W.; Wilson, Andrew Gordon (2020). "Rethinking Parameter Counting in Deep Models: Effective Dimensionality Revisited". arXiv:2003.02139 [cs.LG].

- ↑ Wilson, Andrew Gordon (2025). "Deep Learning is Not So Mysterious or Different". arXiv:2503.02113 [cs.LG].

- ↑ Lotfi, Sanae; Finzi, Marc; Kapoor, Sanyam; Potapczynski, Andres; Goldblum, Micah; Wilson, Andrew G. (2022). "PAC-Bayes Compression Bounds So Tight That They Can Explain Generalization". Advances in Neural Information Processing Systems. 35. pp. 31459–31473. https://proceedings.neurips.cc/paper_files/paper/2022/file/cbeec55c50c3367024bafab2438a021b-Paper-Conference.pdf.

Further reading

- Mikhail Belkin; Daniel Hsu; Ji Xu (2020). "Two Models of Double Descent for Weak Features". SIAM Journal on Mathematics of Data Science 2 (4): 1167–1180. doi:10.1137/20M1336072.

- Mount, John (3 April 2024). "The m = n Machine Learning Anomaly". https://win-vector.com/2024/04/03/the-m-n-machine-learning-anomaly/.

- Preetum Nakkiran; Gal Kaplun; Yamini Bansal; Tristan Yang; Boaz Barak; Ilya Sutskever (29 December 2021). "Deep double descent: where bigger models and more data hurt". Journal of Statistical Mechanics: Theory and Experiment (IOP Publishing Ltd and SISSA Medialab srl) 2021 (12): 124003. doi:10.1088/1742-5468/ac3a74. Bibcode: 2021JSMTE2021l4003N.

- Song Mei; Andrea Montanari (April 2022). "The Generalization Error of Random Features Regression: Precise Asymptotics and the Double Descent Curve". Communications on Pure and Applied Mathematics 75 (4): 667–766. doi:10.1002/cpa.22008.

- Xiangyu Chang; Yingcong Li; Samet Oymak; Christos Thrampoulidis (2021). "Provable Benefits of Overparameterization in Model Compression: From Double Descent to Pruning Neural Networks". Proceedings of the AAAI Conference on Artificial Intelligence 35 (8).

- Manuchehr Aminian (December 2025). "Characterizations of Double Descent". SIAM News 58 (10).

External links

- "Double Descent: Part 1: A Visual Introduction". https://mlu-explain.github.io/double-descent/.

- "Double Descent: Part 2: A Mathematical Explanation". https://mlu-explain.github.io/double-descent2/.

- Understanding "Deep Double Descent" at evhub.
