Moving average

In statistics, a moving average (also called a rolling average, running average, moving mean[1] or rolling mean) is a calculation to analyze data points by creating a series of averages of different selections of the full data set. Variations include simple, cumulative, and weighted forms.

Mathematically, a moving average is a type of convolution. Thus in signal processing it is viewed as a low-pass finite impulse response filter. Because the boxcar function outlines its filter coefficients, it is called a boxcar filter. It is sometimes followed by downsampling.

Given a series of numbers and a fixed subset size, the first element of the moving average is obtained by taking the average of the initial fixed subset of the number series. Then the subset is modified by "shifting forward"; that is, excluding the first number of the series and including the next value in the series.

A moving average is commonly used with time series data to smooth out short-term fluctuations and highlight longer-term trends or cycles; in this case the calculation is sometimes called a time average. The threshold between short-term and long-term depends on the application, and the parameters of the moving average will be set accordingly. It is also used in economics to examine gross domestic product, employment or other macroeconomic time series. When used with non-time series data, a moving average filters higher frequency components without any specific connection to time, although typically some kind of ordering is implied. Viewed simplistically it can be regarded as smoothing the data.

History

The technique of moving averages was invented by the Bank of England in 1833 to conceal the state of its bullion reserves.[2]

Simple moving average

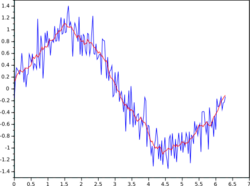

In financial applications a simple moving average (SMA) is the unweighted mean of the previous $k$ data-points. However, in science and engineering, the mean is normally taken from an equal number of data on either side of a central value. This ensures that variations in the mean are aligned with the variations in the data rather than being shifted in time. An example of a simple equally weighted running mean is the mean over the last $k$ entries of a data-set containing $n$ entries. Let those data-points be $p_1, p_2, \dots, p_n$. This could be closing prices of a stock. The mean over the last $k$ data-points (days in this example) is denoted as $\mathit{SMA}_k$ and calculated as:

$$\mathit{SMA}_k = \frac{p_{n-k+1} + p_{n-k+2} + \cdots + p_n}{k} = \frac{1}{k}\sum_{i=n-k+1}^{n} p_i$$

When calculating the next mean $\mathit{SMA}_{k,\text{next}}$ with the same sampling width $k$, the range from $n-k+2$ to $n+1$ is considered. A new value $p_{n+1}$ comes into the sum and the oldest value $p_{n-k+1}$ drops out. This simplifies the calculations by reusing the previous mean $\mathit{SMA}_{k,\text{prev}}$:

$$\mathit{SMA}_{k,\text{next}} = \frac{1}{k}\sum_{i=n-k+2}^{n+1} p_i = \mathit{SMA}_{k,\text{prev}} + \frac{1}{k}\left(p_{n+1} - p_{n-k+1}\right)$$

This means that the moving average filter can be computed quite cheaply on real time data with a FIFO / circular buffer and only 3 arithmetic steps.

During the initial filling of the FIFO / circular buffer the sampling window is equal to the data-set size, thus $k = n$, and the average calculation is performed as a cumulative moving average.
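The buffer-based update described above can be sketched in Python. This is a minimal illustration, not a reference implementation: the class name `StreamingSMA` and its `update` method are hypothetical, and `collections.deque` stands in for the FIFO / circular buffer.

```python
from collections import deque


class StreamingSMA:
    """Simple moving average over the last k values of a stream (illustrative)."""

    def __init__(self, k):
        if k <= 0:
            raise ValueError("window size k must be positive")
        self.k = k
        self.window = deque()  # FIFO buffer holding at most k values
        self.mean = 0.0

    def update(self, value):
        """Add one value and return the mean of the values currently buffered."""
        if len(self.window) < self.k:
            # Buffer still filling: the window equals the data seen so far,
            # so the mean is updated as a cumulative average.
            self.window.append(value)
            self.mean += (value - self.mean) / len(self.window)
        else:
            # Buffer full: one subtraction, one division, one addition.
            oldest = self.window.popleft()
            self.window.append(value)
            self.mean += (value - oldest) / self.k
        return self.mean


sma = StreamingSMA(3)
means = [sma.update(p) for p in [10, 20, 30, 40, 50]]
print(means)  # [10.0, 15.0, 20.0, 30.0, 40.0]
```

With floating-point data the incremental update accumulates rounding error over long streams, so an implementation may want to recompute the mean from the buffer from time to time.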

The period selected ($k$, the number of data points in the window) depends on the type of movement of interest, such as short, intermediate, or long-term.

If the data used are not centered around the mean, a simple moving average lags behind the latest datum by half the sample width. An SMA can also be disproportionately influenced by old data dropping out or new data coming in. One characteristic of the SMA is that if the data have a periodic fluctuation, then applying an SMA of that period will eliminate that variation (the average always containing one complete cycle). But a perfectly regular cycle is rarely encountered.[3]

For a number of applications, it is advantageous to avoid the shifting induced by using only "past" data. Hence a central moving average can be computed, using data equally spaced on either side of the point in the series where the mean is calculated.[4] This requires using an odd number of points in the sample window.
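As a small illustration of the central moving average, and of the earlier remark that an SMA whose width equals the period of a fluctuation removes that fluctuation, consider the following sketch. The function name `centered_moving_average` and the toy series are assumptions made for the example.

```python
def centered_moving_average(data, k):
    """Centered SMA of odd width k.

    result[j] is the mean of the k values centered on data[j + k // 2],
    so the output is shorter than the input by k - 1 points.
    """
    if k <= 0 or k % 2 == 0:
        raise ValueError("k must be a positive odd integer")
    half = k // 2
    return [
        sum(data[i - half:i + half + 1]) / k
        for i in range(half, len(data) - half)
    ]


# A series repeating with period 3; a window of width 3 always spans one cycle.
periodic = [1, 2, 3] * 4
smoothed = centered_moving_average(periodic, 3)
print(smoothed)  # ten values, all equal to 2.0
```

Because each output value is aligned with the middle of its window, the smoothed series is not shifted in time relative to the data, at the cost of losing `k // 2` points at each end.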

A major drawback of the SMA is that it lets through a significant amount of the signal shorter than the window length. This can lead to unexpected artifacts, such as peaks in the smoothed result appearing where there were troughs in the data. It also leads to the result being less smooth than expected since some of the higher frequencies are not properly removed.

Its frequency response is a type of low-pass filter called sinc-in-frequency.
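The peak-inversion artifact can be checked numerically. For a centered SMA of odd width $k$ applied to a sinusoid, the output is the same sinusoid scaled by the average of $\cos(\omega j)$ over the window offsets $j$. The sketch below evaluates that gain; the function name `sma_gain` and the chosen periods are illustrative assumptions.

```python
import math


def sma_gain(k, period):
    """Gain of a centered SMA of odd width k for a sinusoid of the given period.

    The period is measured in samples. A negative gain means the sinusoid
    passes through inverted, so peaks become troughs.
    """
    if k <= 0 or k % 2 == 0:
        raise ValueError("k must be a positive odd integer")
    omega = 2 * math.pi / period
    half = k // 2
    return sum(math.cos(omega * j) for j in range(-half, half + 1)) / k


gain_at_window = sma_gain(5, 5.0)  # period equal to the window: removed
gain_short = sma_gain(5, 3.5)      # shorter period: passed with the sign flipped
gain_long = sma_gain(5, 50.0)      # long period: passed almost unchanged
```

In this example the component with a period of 3.5 samples is neither removed nor merely attenuated: roughly a quarter of its amplitude survives with the opposite sign.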

Continuous moving average

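The continuous moving average of an integrable function $f:\mathbb{R}\to\mathbb{R}$ is defined via integration as:

$$\mathit{MA}_f(x) = \frac{1}{2\varepsilon}\int_{x-\varepsilon}^{x+\varepsilon} f(t)\,dt$$

where the $\varepsilon$-environment $[x-\varepsilon, x+\varepsilon]$ around $x$ defines the intensity of smoothing of the graph of the integrable function. A larger $\varepsilon > 0$ smooths the source graph of the function $f$ (blue) more. The animations below show the moving average for different values of $\varepsilon > 0$. The fraction $\frac{1}{2\varepsilon}$ is used because $2\varepsilon$ is the interval width for the integral. Naturally, $\lim_{\varepsilon\to 0}\mathit{MA}_f(x) = f(x)$ at every point $x$ where $f$ is continuous, by the fundamental theorem of calculus and L'Hôpital's rule.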

- Continuous moving average of a sine and a polynomial: visualization of the smoothing with a small interval for integration.

- Continuous moving average of a sine and a polynomial: visualization of the smoothing with a larger interval for integration.

- Animation showing the impact of interval width on smoothing by moving average.
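A numerical sketch of the continuous moving average follows. It averages a function over an interval of half-width `eps` centered on `x`, approximating the integral with the midpoint rule. The function name, the `steps` parameter and the choice of the midpoint rule are assumptions made for illustration.

```python
def continuous_moving_average(f, x, eps, steps=1000):
    """Approximate (1 / (2*eps)) * integral of f over [x - eps, x + eps].

    Uses the midpoint rule with `steps` equal subintervals.
    """
    if eps <= 0 or steps <= 0:
        raise ValueError("eps and steps must be positive")
    width = 2 * eps / steps
    total = sum(f(x - eps + (i + 0.5) * width) for i in range(steps))
    return total * width / (2 * eps)


def square(t):
    return t * t


# For f(t) = t**2 the exact moving average is x**2 + eps**2 / 3.
wide = continuous_moving_average(square, 1.0, 0.5)
narrow = continuous_moving_average(square, 1.0, 0.001)
```

For this test function the wide window gives a value close to $1 + 0.25/3$, while the narrow window gives a value close to $f(1) = 1$, consistent with the average approaching the function value as the interval shrinks.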

Cumulative average

In a cumulative average (CA), the data arrive in an ordered datum stream, and the user would like to get the average of all of the data up until the current datum. For example, an investor may want the average price of all of the stock transactions for a particular stock up until the current time. As each new transaction occurs, the average price at the time of the transaction can be calculated for all of the transactions up to that point using the cumulative average, typically an equally weighted average of the sequence of $n$ values $x_1, \ldots, x_n$ up to the current time:

$$\mathit{CA}_n = \frac{x_1 + \cdots + x_n}{n}$$

The brute-force method to calculate this would be to store all of the data and calculate the sum and divide by the number of points every time a new datum arrived. However, it is possible to simply update the cumulative average as a new value, $x_{n+1}$, becomes available, using the formula

$$\mathit{CA}_{n+1} = \frac{x_{n+1} + n \cdot \mathit{CA}_n}{n+1}$$

Thus the current cumulative average for a new datum is equal to the previous cumulative average, times n, plus the latest datum, all divided by the number of points received so far, n+1. When all of the data arrive (n = N), then the cumulative average will equal the final average. It is also possible to store a running total of the data as well as the number of points and divide the total by the number of points to get the CA each time a new datum arrives.

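The derivation of the cumulative average formula is straightforward. Using

$$x_1 + \cdots + x_n = n \cdot \mathit{CA}_n$$

and similarly for $n + 1$, it is seen that

$$x_{n+1} = (x_1 + \cdots + x_{n+1}) - (x_1 + \cdots + x_n) = (n+1)\,\mathit{CA}_{n+1} - n\,\mathit{CA}_n$$

Solving this equation for $\mathit{CA}_{n+1}$ results in

$$\mathit{CA}_{n+1} = \frac{x_{n+1} + n\,\mathit{CA}_n}{n+1} = \mathit{CA}_n + \frac{x_{n+1} - \mathit{CA}_n}{n+1}$$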
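The update rule (previous average times the count, plus the new datum, divided by the new count) can be sketched as follows. The function name `update_cumulative_average` and the sample prices are made up for the example.

```python
def update_cumulative_average(ca, n, x):
    """Return the average of n + 1 values, given the average `ca` of the first n."""
    return (x + n * ca) / (n + 1)


prices = [10.0, 12.0, 11.0, 15.0]  # hypothetical transaction prices
ca = 0.0  # placeholder; ignored on the first update because n == 0
history = []
for n, price in enumerate(prices):
    ca = update_cumulative_average(ca, n, price)
    history.append(ca)

print(history)  # [10.0, 11.0, 11.0, 12.0]
```

Only the current average and the count need to be stored, rather than the whole data stream.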

Weighted moving average

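In the financial field, and more specifically in the analyses of financial data, a weighted moving average (WMA) has the specific meaning of weights that decrease in arithmetical progression.[5] In an $n$-day WMA the latest day has weight $n$, the second latest $n-1$, etc., down to one:

$$\text{WMA}_M = \frac{n p_M + (n-1) p_{M-1} + \cdots + 2 p_{M-n+2} + p_{M-n+1}}{n + (n-1) + \cdots + 2 + 1}$$

The denominator is a triangle number equal to $\frac{n(n+1)}{2}$. In the more general case the denominator will always be the sum of the individual weights.

When calculating the WMA across successive values, the difference between the numerators of $\text{WMA}_{M+1}$ and $\text{WMA}_M$ is $n p_{M+1} - p_M - \cdots - p_{M-n+1}$. If we denote the sum $p_M + \cdots + p_{M-n+1}$ by $\text{Total}_M$, then

$$\begin{aligned}
\text{Total}_{M+1} &= \text{Total}_M + p_{M+1} - p_{M-n+1} \\
\text{Numerator}_{M+1} &= \text{Numerator}_M + n p_{M+1} - \text{Total}_M \\
\text{WMA}_{M+1} &= \frac{\text{Numerator}_{M+1}}{n + (n-1) + \cdots + 2 + 1}
\end{aligned}$$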

The graph at the right shows how the weights decrease, from highest weight for the most recent data, down to zero. It can be compared to the weights in the exponential moving average which follows.
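A sketch of the linearly weighted moving average, computed incrementally by keeping a running plain sum and a running weighted sum of the window, is given below. The function name and variable names are illustrative assumptions, and values are only produced once the window holds `n` points.

```python
from collections import deque


def weighted_moving_averages(prices, n):
    """Yield the n-point linearly weighted moving average once the window is full.

    The newest value in the window has weight n and the oldest has weight 1.
    """
    if n <= 0:
        raise ValueError("n must be positive")
    denominator = n * (n + 1) // 2  # triangle number: 1 + 2 + ... + n
    window = deque()
    total = 0      # plain sum of the values in the window
    numerator = 0  # weighted sum of the values in the window
    for p in prices:
        if len(window) < n:
            # Filling phase: the i-th value to arrive gets weight i.
            window.append(p)
            total += p
            numerator += len(window) * p
        else:
            # Every old weight drops by one (subtract the old total),
            # and the new value enters with weight n.
            numerator += n * p - total
            total += p - window.popleft()
            window.append(p)
        if len(window) == n:
            yield numerator / denominator


wma = list(weighted_moving_averages([1, 2, 3, 4, 5], 3))
# Direct check for the first window: (1*1 + 2*2 + 3*3) / 6
```

Each step costs a constant number of operations regardless of `n`, since the weighted sum is updated from the previous one instead of being recomputed.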

Exponential moving average

An exponential moving average (EMA), also known as an exponentially weighted moving average (EWMA),[6] is a first-order infinite impulse response filter that applies weighting factors which decrease exponentially. The weighting for each older datum decreases exponentially, never reaching zero. This formulation is according to Hunter (1986).[7]

There is also a multivariate implementation of EWMA, known as MEWMA.[8]
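For the univariate case, one common recursive form of the exponential moving average can be sketched as below. The smoothing factor `alpha`, the initialization with the first observation, and the use of the current observation in each step are assumptions made for this example; conventions differ between sources, and some formulations use the previous observation instead.

```python
def exponential_moving_average(values, alpha):
    """EMA with s[0] = values[0] and s[t] = alpha * values[t] + (1 - alpha) * s[t-1].

    alpha is an assumed smoothing factor in (0, 1]; larger values weight
    recent data more heavily.
    """
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    result = []
    s = None
    for y in values:
        s = y if s is None else alpha * y + (1 - alpha) * s
        result.append(s)
    return result


ema = exponential_moving_average([10.0, 20.0, 30.0], alpha=0.5)
print(ema)  # [10.0, 15.0, 22.5]
```

Unrolling the recursion shows that an observation $j$ steps in the past carries weight $\alpha(1-\alpha)^j$, which decreases exponentially but never reaches zero.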

Other weightings

Other weighting systems are used occasionally – for example, in share trading a volume weighting will weight each time period in proportion to its trading volume.

A further weighting, used by actuaries, is Spencer's 15-Point Moving Average[9] (a central moving average). Its symmetric weight coefficients are [−3, −6, −5, 3, 21, 46, 67, 74, 67, 46, 21, 3, −5, −6, −3], which factors as [1, 1, 1, 1]×[1, 1, 1, 1]×[1, 1, 1, 1, 1]×[−3, 3, 4, 3, −3]/320 and leaves samples of any quadratic or cubic polynomial unchanged.[10][11]
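Both claims about Spencer's weights can be checked with integer arithmetic. The sketch below multiplies out the stated factors by discrete convolution and applies the weights to samples of an arbitrary cubic; the helper `convolve` and the particular polynomial are assumptions chosen for the example.

```python
def convolve(a, b):
    """Discrete convolution of two coefficient lists (polynomial multiplication)."""
    out = [0] * (len(a) + len(b) - 1)
    for i, x in enumerate(a):
        for j, y in enumerate(b):
            out[i + j] += x * y
    return out


SPENCER = [-3, -6, -5, 3, 21, 46, 67, 74, 67, 46, 21, 3, -5, -6, -3]

# Multiply out [1,1,1,1] x [1,1,1,1] x [1,1,1,1,1] x [-3,3,4,3,-3].
product = convolve(convolve([1] * 4, [1] * 4), convolve([1] * 5, [-3, 3, 4, 3, -3]))


def cubic(x):
    """An arbitrary cubic polynomial with integer coefficients."""
    return 2 * x**3 - x**2 + 5 * x + 7


# Weighted sum of 15 samples centered on x = 10; dividing by 320 gives the average.
center = 10
weighted_sum = sum(w * cubic(center + j) for j, w in zip(range(-7, 8), SPENCER))

print(product == SPENCER)                  # True
print(weighted_sum == 320 * cubic(center)) # True
```

The cubic is reproduced exactly because the weights sum to 320 and their first, second and third moments about the center are all zero.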

Outside the world of finance, weighted running means have many forms and applications. Each weighting function or "kernel" has its own characteristics. In engineering and science the frequency and phase response of the filter is often of primary importance in understanding the desired and undesired distortions that a particular filter will apply to the data.

A mean does not just "smooth" the data; it is a form of low-pass filter. The effects of the particular filter used should be understood in order to make an appropriate choice.

Moving median

From a statistical point of view, the moving average, when used to estimate the underlying trend in a time series, is susceptible to rare events such as rapid shocks or other anomalies. A more robust estimate of the trend is the simple moving median over $n$ time points:

$$\tilde{p}_{\text{SM}} = \operatorname{Median}(p_M, p_{M-1}, \ldots, p_{M-n+1})$$

where the median is found by, for example, sorting the values inside the brackets and finding the value in the middle. For larger values of $n$, the median can be efficiently computed by updating an indexable skiplist.[12]

Statistically, the moving average is optimal for recovering the underlying trend of the time series when the fluctuations about the trend are normally distributed. However, the normal distribution does not place high probability on very large deviations from the trend, which explains why such deviations will have a disproportionately large effect on the trend estimate. It can be shown that if the fluctuations are instead assumed to be Laplace distributed, then the moving median is statistically optimal.[13] For a given variance, the Laplace distribution places higher probability on rare events than does the normal, which explains why the moving median tolerates shocks better than the moving mean.

When the simple moving median above is central, the smoothing is identical to the median filter, which has applications in, for example, image signal processing.
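The difference in robustness can be seen on a small series containing one shock. The sketch below keeps the window in a sorted list maintained with `bisect`, a simpler substitute for the indexable skiplist mentioned above that costs time linear in the window size per update. The function name and the data are assumptions made for illustration.

```python
from bisect import bisect_left, insort
from collections import deque


def moving_median(values, n):
    """Yield the median of the last n values (odd n) once the window is full."""
    if n <= 0 or n % 2 == 0:
        raise ValueError("n must be a positive odd integer")
    window = deque()  # values in arrival order
    ordered = []      # the same values, kept sorted
    for v in values:
        window.append(v)
        insort(ordered, v)
        if len(window) > n:
            old = window.popleft()
            del ordered[bisect_left(ordered, old)]
        if len(window) == n:
            yield ordered[n // 2]


series = [1, 2, 3, 100, 5, 6, 7]  # 100 is a one-off shock
medians = list(moving_median(series, 3))
means = [sum(series[i:i + 3]) / 3 for i in range(len(series) - 2)]

print(medians)  # [2, 3, 5, 6, 6]
print(means)    # [2.0, 35.0, 36.0, 37.0, 6.0]
```

The shock pulls three consecutive moving means far from the trend, while the moving median never reports it.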

Moving average regression model

In a moving average regression model, a variable of interest is assumed to be a weighted moving average of unobserved independent error terms; the weights in the moving average are parameters to be estimated.

The moving average model and the moving average described in this article are often confused because of their similar names. Although they share many similarities, they are distinct methods used in very different contexts.
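To make the distinction concrete, the sketch below generates a series from a moving average model for given error terms. The notation (a constant `mu` and weights `thetas`, with the current error term given weight one) follows a common textbook convention and is an assumption, not something stated above. In practice the error terms are unobserved and the weights are estimated from data, which this example does not attempt.

```python
def moving_average_process(errors, mu, thetas):
    """Return X_t = mu + e_t + theta_1 * e_{t-1} + ... + theta_q * e_{t-q}.

    Values are produced for t >= q only, where q = len(thetas), so that
    every lagged error term is available.
    """
    q = len(thetas)
    series = []
    for t in range(q, len(errors)):
        x = mu + errors[t]
        for lag, theta in enumerate(thetas, start=1):
            x += theta * errors[t - lag]
        series.append(x)
    return series


errors = [1.0, -1.0, 2.0, 0.0]  # hypothetical error terms
series = moving_average_process(errors, mu=5.0, thetas=[0.5])
print(series)  # [4.5, 6.5, 6.0]
```

Here the weighted average is taken over the error terms and defines the series, whereas the smoothing methods above take averages of the observed data.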

See also

- Exponential smoothing

- Local regression (LOESS and LOWESS)

- Kernel smoothing

- Moving average convergence/divergence indicator

- Martingale (probability theory)

- Moving average crossover

- Moving least squares

- Rising moving average

- Rolling hash

- Running total

- Savitzky–Golay filter

- Window function

- Zero lag exponential moving average

References

- [1] Booth et al. (2006). "Hydrologic Variability of the Cosumnes River Floodplain". San Francisco Estuary and Watershed Science 4 (2).

- [2] Klein, Judy L. (1997-10-28). Statistical Visions in Time. Cambridge University Press. p. 88. ISBN 9780521420464.

- [3] Chou, Ya-lun (1975). Statistical Analysis. Holt International. ISBN 0-03-089422-0. Section 17.9.

- [4] The derivation and properties of the simple central moving average are given in full at Savitzky–Golay filter.

- [5] "Weighted Moving Averages: The Basics". Investopedia. http://www.investopedia.com/articles/technical/060401.asp.

- [6] "DEALING WITH MEASUREMENT NOISE - Averaging Filter". http://lorien.ncl.ac.uk/ming/filter/filewma.htm.

- [7] NIST/SEMATECH e-Handbook of Statistical Methods: Single Exponential Smoothing. National Institute of Standards and Technology.

- [8] Yeh, A.; Lin, D.; Zhou, H.; Venkataramani, C. (2003). "A multivariate exponentially weighted moving average control chart for monitoring process variability". Journal of Applied Statistics 30 (5): 507–536. doi:10.1080/0266476032000053655. ISSN 0266-4763. Bibcode: 2003JApSt..30..507Y. https://www.stat.purdue.edu/~dkjlin/documents/publications/2003/2003_AppStat1.pdf. Retrieved 16 January 2025.

- [9] "Spencer's 15-Point Moving Average". Wolfram MathWorld.

- [10] Hyndman, Rob J. "Moving averages". 2009-11-08. Accessed 2020-08-20.

- [11] Guntuboyina, Aditya. "Statistics 153 (Time Series): Lecture Three". 2012-01-24. Accessed 2024-01-07.

- [12] "Efficient Running Median using an Indexable Skiplist". Python recipes, ActiveState Code. http://code.activestate.com/recipes/576930/.

- [13] Arce, G. R. (2005). Nonlinear Signal Processing: A Statistical Approach. Wiley, New Jersey, US.
