Simple linear regression

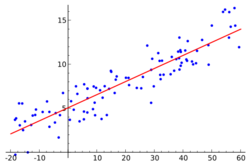

In statistics, simple linear regression (SLR) is a linear regression model with a single explanatory variable.[1][2][3][4][5] That is, it concerns two-dimensional sample points with one independent variable and one dependent variable (conventionally, the x and y coordinates in a Cartesian coordinate system) and finds a linear function (a non-vertical straight line) that, as accurately as possible, predicts the dependent variable values as a function of the independent variable. The adjective simple refers to the fact that the outcome variable is related to a single predictor.

It is common to make the additional stipulation that the ordinary least squares (OLS) method should be used: the accuracy of each predicted value is measured by its squared residual (the residual is the vertical distance between the data point and the fitted line), and the goal is to make the sum of these squared deviations as small as possible. In this case, the slope of the fitted line is equal to the correlation between y and x multiplied by the ratio of the standard deviations of y and x. The intercept of the fitted line is such that the line passes through the center of mass [math]\displaystyle{ (\bar x,\, \bar y) }[/math] of the data points.

Formulation and computation

Consider the model function

- [math]\displaystyle{ y = \alpha + \beta x, }[/math]

which describes a line with slope β and y-intercept α. In general such a relationship may not hold exactly for the largely unobserved population of values of the independent and dependent variables; we call the unobserved deviations from the above equation the errors. Suppose we observe n data pairs and call them [math]\displaystyle{ \{(x_i, y_i),\ i = 1, \ldots, n\} }[/math]. We can describe the underlying relationship between [math]\displaystyle{ y_i }[/math] and [math]\displaystyle{ x_i }[/math] involving this error term [math]\displaystyle{ \varepsilon_i }[/math] by

- [math]\displaystyle{ y_i = \alpha + \beta x_i + \varepsilon_i. }[/math]

This relationship between the true (but unobserved) underlying parameters α and β and the data points is called a linear regression model.

The goal is to find estimated values [math]\displaystyle{ \widehat\alpha }[/math] and [math]\displaystyle{ \widehat\beta }[/math] for the parameters α and β which would provide the "best" fit in some sense for the data points. As mentioned in the introduction, in this article the "best" fit will be understood as in the least-squares approach: a line that minimizes the sum of squared residuals (see also Errors and residuals) [math]\displaystyle{ \widehat\varepsilon_i }[/math] (differences between actual and predicted values of the dependent variable y), each of which is given by, for any candidate parameter values [math]\displaystyle{ \alpha }[/math] and [math]\displaystyle{ \beta }[/math],

- [math]\displaystyle{ \widehat\varepsilon_i =y_i-\alpha -\beta x_i. }[/math]

In other words, [math]\displaystyle{ \widehat\alpha }[/math] and [math]\displaystyle{ \widehat\beta }[/math] solve the following minimization problem:

- [math]\displaystyle{ (\hat\alpha,\, \hat\beta) = \operatorname{argmin}\left( Q(\alpha, \beta) \right), }[/math]

where the objective function Q is:

- [math]\displaystyle{ Q(\alpha, \beta) = \sum_{i=1}^n\widehat\varepsilon_i^{\,2} = \sum_{i=1}^n (y_i -\alpha - \beta x_i)^2\ . }[/math]

By expanding to get a quadratic expression in [math]\displaystyle{ \alpha }[/math] and [math]\displaystyle{ \beta, }[/math] we can derive minimizing values of the function arguments, denoted [math]\displaystyle{ \widehat{\alpha} }[/math] and [math]\displaystyle{ \widehat{\beta} }[/math]:[6]

[math]\displaystyle{ \begin{align} \widehat\alpha & = \bar{y} - ( \widehat\beta\,\bar{x}), \\[5pt] \widehat\beta &= \frac{ \sum_{i=1}^n (x_i - \bar{x})(y_i - \bar{y}) }{ \sum_{i=1}^n (x_i - \bar{x})^2 } = \frac{ \sum_{i=1}^n \Delta x_i \Delta y_i }{ \sum_{i=1}^n \Delta x_i^2 } \end{align} }[/math]

Here we have introduced

- [math]\displaystyle{ \bar x }[/math] and [math]\displaystyle{ \bar y }[/math] as the averages of the [math]\displaystyle{ x_i }[/math] and [math]\displaystyle{ y_i }[/math], respectively

- [math]\displaystyle{ \Delta x_i }[/math] and [math]\displaystyle{ \Delta y_i }[/math] as the deviations of [math]\displaystyle{ x_i }[/math] and [math]\displaystyle{ y_i }[/math] from their respective means.
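As a minimal sketch of these closed-form estimators, the computation can be written in plain Python. The function name `fit_line` and the toy data are illustrative assumptions, not part of the source.

```python
def fit_line(xs, ys):
    """Return (alpha_hat, beta_hat) for the least-squares line y = alpha + beta * x."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    # Deviations from the means (the Delta x_i and Delta y_i of the text).
    dx = [x - x_bar for x in xs]
    dy = [y - y_bar for y in ys]
    sum_dx2 = sum(d * d for d in dx)
    if sum_dx2 == 0:
        raise ValueError("all x values are identical; the slope is undefined")
    beta_hat = sum(a * b for a, b in zip(dx, dy)) / sum_dx2
    alpha_hat = y_bar - beta_hat * x_bar
    return alpha_hat, beta_hat


# Illustrative data lying exactly on the line y = 1 + 2x.
alpha_hat, beta_hat = fit_line([0.0, 1.0, 2.0, 3.0], [1.0, 3.0, 5.0, 7.0])
print(alpha_hat, beta_hat)
```

Because the toy points lie exactly on a line, the fit recovers that line's intercept and slope; with noisy data the same function returns the least-squares compromise.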

Expanded Formulas

The above equations are efficient to use if the means of the x and y variables ([math]\displaystyle{ \bar{x} \text{ and } \bar{y} }[/math]) are known. If the means are not known at the time of calculation, it may be more efficient to use the expanded version of the [math]\displaystyle{ \widehat\alpha\text{ and }\widehat\beta }[/math] equations. These expanded equations may be derived from the more general polynomial regression equations[7][8] by defining the regression polynomial to be of order 1, as follows.

[math]\displaystyle{ \begin{bmatrix} n & \sum_{i=1}^n x_i \\ \sum_{i=1}^n x_i & \sum_{i=1}^n x_i^{2} \end{bmatrix} \begin{bmatrix} \widehat\alpha \\ \widehat\beta \end{bmatrix} = \begin{bmatrix} \sum_{i=1}^n y_i \\ \sum_{i=1}^n y_i x_i \end{bmatrix} }[/math]

The above system of linear equations may be solved directly, or stand-alone equations for [math]\displaystyle{ \widehat\alpha\text{ and }\widehat\beta }[/math] may be derived by expanding the matrix equations above. The resultant equations are algebraically equivalent to the ones shown in the prior paragraph, and are shown below without proof.[9][7]

[math]\displaystyle{ \begin{align} \widehat\alpha &= \frac{\sum_{i=1}^n y_i \sum_{i=1}^n x_i^2 - \sum_{i=1}^n x_i \sum_{i=1}^n x_i y_i}{n\sum_{i=1}^n x_i^2 - \left(\sum_{i=1}^n x_i\right)^2} \\[5pt] \widehat\beta &= \frac{n \sum_{i=1}^n x_i y_i - \sum_{i=1}^n x_i \sum_{i=1}^n y_i}{n\sum_{i=1}^n x_i^2 - \left(\sum_{i=1}^n x_i\right)^2} \end{align} }[/math]
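The expanded formulas need only running sums, so they can be evaluated in a single pass over the data without knowing the means in advance. The following is a sketch under that reading; the name `fit_line_from_sums` and the sample pairs are hypothetical.

```python
def fit_line_from_sums(pairs):
    """Single-pass least-squares fit using only n, sum x, sum y, sum x^2, sum xy."""
    n = 0
    s_x = s_y = s_xx = s_xy = 0.0
    for x, y in pairs:
        n += 1
        s_x += x
        s_y += y
        s_xx += x * x
        s_xy += x * y
    # Common denominator of both expanded formulas.
    denom = n * s_xx - s_x ** 2
    if denom == 0:
        raise ValueError("need at least two distinct x values")
    alpha_hat = (s_y * s_xx - s_x * s_xy) / denom
    beta_hat = (n * s_xy - s_x * s_y) / denom
    return alpha_hat, beta_hat


# Hypothetical sample pairs (x_i, y_i).
alpha_hat, beta_hat = fit_line_from_sums([(1.0, 2.0), (2.0, 3.0), (3.0, 5.0), (4.0, 4.0)])
print(alpha_hat, beta_hat)
```

One design caveat: the denominator subtracts two numbers of similar size, so for data with a large mean and a small spread the deviation-based formulas are usually the numerically safer choice.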

Interpretation

Relationship with the sample covariance matrix

The solution can be reformulated using elements of the covariance matrix: [math]\displaystyle{ \widehat\beta = \frac{ s_{x, y} }{ s^2_{x} } = r_{xy} \frac{s_y}{s_x} }[/math]

where

- [math]\displaystyle{ r_{xy} }[/math] is the sample correlation coefficient between x and y

- [math]\displaystyle{ s_x }[/math] and [math]\displaystyle{ s_y }[/math] are the uncorrected sample standard deviations of x and y

- [math]\displaystyle{ s^2_x }[/math] and [math]\displaystyle{ s_{x, y} }[/math] are the sample variance and sample covariance, respectively

Substituting the above expressions for [math]\displaystyle{ \widehat{\alpha} }[/math] and [math]\displaystyle{ \widehat{\beta} }[/math] into the fitted line [math]\displaystyle{ \hat{y} = \widehat\alpha + \widehat\beta x }[/math] yields

- [math]\displaystyle{ \frac{ \hat{y} - \bar{y}}{s_y} = r_{xy} \frac{ x - \bar{x}}{s_x} . }[/math]

This shows that [math]\displaystyle{ r_{xy} }[/math] is the slope of the regression line of the standardized data points (and that this line passes through the origin). Since [math]\displaystyle{ -1 \leq r_{xy} \leq 1 }[/math], if x is some measurement and y is a follow-up measurement of the same item, then we expect y (on average, in standardized units) to be closer to its mean than x was to its own mean. This phenomenon is known as regression toward the mean.

Generalizing the [math]\displaystyle{ \bar x }[/math] notation, we can write a horizontal bar over an expression to indicate the average value of that expression over the set of samples. For example:

- [math]\displaystyle{ \overline{xy} = \frac{1}{n} \sum_{i=1}^n x_i y_i. }[/math]

This notation allows us a concise formula for [math]\displaystyle{ r_{xy} }[/math]:

- [math]\displaystyle{ r_{xy} = \frac{ \overline{xy} - \bar{x}\bar{y} }{ \sqrt{ \left(\overline{x^2} - \bar{x}^2\right)\left(\overline{y^2} - \bar{y}^2\right)} } . }[/math]

The coefficient of determination ("R squared") is equal to [math]\displaystyle{ r_{xy}^2 }[/math] when the model is linear with a single independent variable. See sample correlation coefficient for additional details.
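The bar notation translates directly into code. The sketch below computes the correlation from the averages in the formula above and then recovers the slope as the correlation times the ratio of the uncorrected standard deviations. The helper names and the data are illustrative assumptions.

```python
from math import sqrt


def mean(values):
    """Average of a list, i.e. the horizontal bar of the text."""
    return sum(values) / len(values)


def correlation(xs, ys):
    """Sample correlation via (mean(xy) - mean(x)mean(y)) / sqrt(var_x * var_y)."""
    x_bar, y_bar = mean(xs), mean(ys)
    xy_bar = mean([x * y for x, y in zip(xs, ys)])
    x2_bar = mean([x * x for x in xs])
    y2_bar = mean([y * y for y in ys])
    return (xy_bar - x_bar * y_bar) / sqrt((x2_bar - x_bar ** 2) * (y2_bar - y_bar ** 2))


# Hypothetical data.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 3.0, 5.0, 4.0]

r_xy = correlation(xs, ys)
# Uncorrected (divide-by-n) standard deviations.
s_x = sqrt(mean([x * x for x in xs]) - mean(xs) ** 2)
s_y = sqrt(mean([y * y for y in ys]) - mean(ys) ** 2)
slope = r_xy * s_y / s_x
r_squared = r_xy ** 2
print(r_xy, slope, r_squared)
```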

Interpretation about the slope

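By multiplying each term of the sum in the numerator by [math]\displaystyle{ \begin{align}\frac{(x_i - \bar{x})}{(x_i - \bar{x})} = 1\end{align} }[/math] (which leaves it unchanged for every term with [math]\displaystyle{ x_i \neq \bar{x} }[/math]; terms with [math]\displaystyle{ x_i = \bar{x} }[/math] contribute zero to the numerator anyway), we obtain: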

- [math]\displaystyle{ \begin{align} \widehat\beta &= \frac{ \sum_{i=1}^n (x_i - \bar{x})(y_i - \bar{y}) }{ \sum_{i=1}^n (x_i - \bar{x})^2 } = \frac{ \sum_{i=1}^n (x_i - \bar{x})^2\frac{(y_i - \bar{y})}{(x_i - \bar{x})} }{ \sum_{i=1}^n (x_i - \bar{x})^2 } = \sum_{i=1}^n \frac{ (x_i - \bar{x})^2}{ \sum_{j=1}^n (x_j - \bar{x})^2 } \frac{(y_i - \bar{y})}{(x_i - \bar{x})} \\[6pt] \end{align} }[/math]

We can see that the slope (tangent of angle) of the regression line is the weighted average of [math]\displaystyle{ \frac{(y_i - \bar{y})}{(x_i - \bar{x})} }[/math], that is, of the slope (tangent of angle) of the line that connects the i-th point to the average of all points, weighted by [math]\displaystyle{ (x_i - \bar{x})^2 }[/math]. The farther a point lies horizontally from the center, the more "important" it is, since small errors in its position affect the slope of the line connecting it to the center point less.
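The weighted-average reading of the slope can be checked numerically. In this sketch (function name and data are hypothetical), points with [math]\displaystyle{ x_i = \bar{x} }[/math] are skipped because their weight is zero and their individual slope is undefined.

```python
def slope_as_weighted_average(xs, ys):
    """Slope of the regression line as a weighted average of point-to-center slopes."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    total = sum((x - x_bar) ** 2 for x in xs)
    slope = 0.0
    for x, y in zip(xs, ys):
        if x == x_bar:
            continue  # zero weight; the individual slope would divide by zero
        weight = (x - x_bar) ** 2 / total          # weights sum to 1
        point_slope = (y - y_bar) / (x - x_bar)    # slope from the center to this point
        slope += weight * point_slope
    return slope


# Hypothetical data; the ordinary least-squares slope for it is 0.8.
weighted_slope = slope_as_weighted_average([1.0, 2.0, 3.0, 4.0], [2.0, 3.0, 5.0, 4.0])
print(weighted_slope)
```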

Interpretation about the intercept

- [math]\displaystyle{ \begin{align} \widehat\alpha & = \bar{y} - \widehat\beta\,\bar{x}, \\[5pt] \end{align} }[/math]

Given [math]\displaystyle{ \widehat\beta = \tan(\theta) = dy / dx \rightarrow dy = dx\times\widehat\beta }[/math] with [math]\displaystyle{ \theta }[/math] the angle the line makes with the positive x axis, and taking [math]\displaystyle{ dx = \bar{x} }[/math] (the horizontal distance from the y axis to the center of mass), we have [math]\displaystyle{ y_{\rm intersection} = \bar{y} - dx\times\widehat\beta = \bar{y} - dy }[/math]

Interpretation about the correlation

In the above formulation, notice that each [math]\displaystyle{ x_i }[/math] is a constant ("known upfront") value, while the [math]\displaystyle{ y_i }[/math] are random variables that depend on the linear function of [math]\displaystyle{ x_i }[/math] and the random term [math]\displaystyle{ \varepsilon_i }[/math]. This assumption is used when deriving the standard error of the slope and showing that it is unbiased.

In this framing, when [math]\displaystyle{ x_i }[/math] is not actually a random variable, what type of parameter does the empirical correlation [math]\displaystyle{ r_{xy} }[/math] estimate? The issue is that for each value i we have [math]\displaystyle{ E(x_i)=x_i }[/math] and [math]\displaystyle{ Var(x_i)=0 }[/math]. A possible interpretation of [math]\displaystyle{ r_{xy} }[/math] is to imagine that [math]\displaystyle{ x_i }[/math] defines a random variable drawn from the empirical distribution of the x values in our sample. For example, if x took the 10 values [1, 2, 3, ..., 10], then we can imagine x to follow a discrete uniform distribution. Under this interpretation all [math]\displaystyle{ x_i }[/math] have the same expectation and some positive variance. With this interpretation we can think of [math]\displaystyle{ r_{xy} }[/math] as the estimator of the Pearson correlation between the random variable y and the random variable x (as we just defined it).

Numerical properties

- The regression line goes through the center of mass point, [math]\displaystyle{ (\bar x,\, \bar y) }[/math], if the model includes an intercept term (i.e., not forced through the origin).

- The sum of the residuals is zero if the model includes an intercept term:

- [math]\displaystyle{ \sum_{i=1}^n \widehat{\varepsilon}_i = 0. }[/math]

- The residuals and x values are uncorrelated (whether or not there is an intercept term in the model), meaning:

- [math]\displaystyle{ \sum_{i=1}^n x_i \widehat{\varepsilon}_i \;=\; 0 }[/math]

- The relationship between [math]\displaystyle{ \rho_{xy} }[/math] (the correlation coefficient for the population), the population variance of [math]\displaystyle{ y }[/math] ([math]\displaystyle{ \sigma_y^2 }[/math]) and the variance of the error term [math]\displaystyle{ \epsilon }[/math] ([math]\displaystyle{ \sigma_\epsilon^2 }[/math]) is:[10]:401

- [math]\displaystyle{ \sigma_\epsilon^2 = (1-\rho_{xy}^2)\sigma_y^2 }[/math]

For extreme values of [math]\displaystyle{ \rho_{xy} }[/math] this is self-evident: when [math]\displaystyle{ \rho_{xy} = 0 }[/math], [math]\displaystyle{ \sigma_\epsilon^2 = \sigma_y^2 }[/math], and when [math]\displaystyle{ \rho_{xy} = \pm 1 }[/math], [math]\displaystyle{ \sigma_\epsilon^2 = 0 }[/math].
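The first three properties are easy to confirm numerically for a fit that includes an intercept. The following sketch uses hypothetical data and an illustrative helper `fit_line`; the sums are zero only up to floating-point rounding.

```python
def fit_line(xs, ys):
    """Least-squares intercept and slope for y = alpha + beta * x."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    beta_hat = (sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
                / sum((x - x_bar) ** 2 for x in xs))
    return y_bar - beta_hat * x_bar, beta_hat


# Hypothetical data.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 3.0, 5.0, 4.0]
alpha_hat, beta_hat = fit_line(xs, ys)

residuals = [y - (alpha_hat + beta_hat * x) for x, y in zip(xs, ys)]
sum_residuals = sum(residuals)                                   # should be ~0
sum_x_residuals = sum(x * e for x, e in zip(xs, residuals))      # should be ~0

# The fitted line evaluated at x_bar should equal y_bar.
x_bar = sum(xs) / len(xs)
y_bar = sum(ys) / len(ys)
line_at_x_bar = alpha_hat + beta_hat * x_bar
print(sum_residuals, sum_x_residuals, line_at_x_bar - y_bar)
```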

Statistical properties

Describing the statistical properties of the simple linear regression estimators requires a statistical model. The following is based on assuming the validity of a model under which the estimates are optimal. It is also possible to evaluate the properties under other assumptions, such as inhomogeneity, but this is discussed elsewhere.

Unbiasedness

The estimators [math]\displaystyle{ \widehat{\alpha} }[/math] and [math]\displaystyle{ \widehat{\beta} }[/math] are unbiased.

To formalize this assertion we must define a framework in which these estimators are random variables. We consider the errors [math]\displaystyle{ \varepsilon_i }[/math] as random variables drawn independently from some distribution with mean zero. In other words, for each value of x, the corresponding value of y is generated as a mean response α + βx plus an additional random variable ε called the error term, equal to zero on average. Under this interpretation, the least-squares estimators [math]\displaystyle{ \widehat\alpha }[/math] and [math]\displaystyle{ \widehat\beta }[/math] will themselves be random variables whose means will equal the "true values" α and β. This is the definition of an unbiased estimator.
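Unbiasedness can be illustrated, though not proved, by simulation: generate many data sets from known α and β, refit each time, and average the estimates. The sketch below assumes Gaussian errors, fixed x values and a fixed random seed; all names and parameter values are illustrative choices.

```python
import random


def fit_line(xs, ys):
    """Least-squares intercept and slope for y = alpha + beta * x."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    beta_hat = (sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
                / sum((x - x_bar) ** 2 for x in xs))
    return y_bar - beta_hat * x_bar, beta_hat


def average_estimates(alpha, beta, xs, sigma, trials, seed=0):
    """Average alpha_hat and beta_hat over repeated samples with N(0, sigma^2) errors."""
    rng = random.Random(seed)
    sum_alpha = sum_beta = 0.0
    for _ in range(trials):
        ys = [alpha + beta * x + rng.gauss(0.0, sigma) for x in xs]
        a_hat, b_hat = fit_line(xs, ys)
        sum_alpha += a_hat
        sum_beta += b_hat
    return sum_alpha / trials, sum_beta / trials


# Assumed "true" parameters alpha = 1, beta = 2; x fixed at 0, 1, ..., 9.
xs = [float(i) for i in range(10)]
mean_alpha, mean_beta = average_estimates(1.0, 2.0, xs, sigma=1.0, trials=2000)
print(mean_alpha, mean_beta)
```

Individual estimates scatter around the true values; it is their average over many samples that settles near α and β.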

Confidence intervals

The formulas given in the previous section allow one to calculate the point estimates of α and β — that is, the coefficients of the regression line for the given set of data. However, those formulas don't tell us how precise the estimates are, i.e., how much the estimators [math]\displaystyle{ \widehat{\alpha} }[/math] and [math]\displaystyle{ \widehat{\beta} }[/math] vary from sample to sample for the specified sample size. Confidence intervals were devised to give a plausible set of values to the estimates one might have if one repeated the experiment a very large number of times.

The standard method of constructing confidence intervals for linear regression coefficients relies on the normality assumption, which is justified if either:

- the errors in the regression are normally distributed (the so-called classic regression assumption), or

- the number of observations n is sufficiently large, in which case the estimator is approximately normally distributed.

The latter case is justified by the central limit theorem.

Normality assumption

Under the first assumption above, that of the normality of the error terms, the estimator of the slope coefficient will itself be normally distributed with mean β and variance [math]\displaystyle{ \sigma^2\left/\sum(x_i - \bar{x})^2\right., }[/math] where [math]\displaystyle{ \sigma^2 }[/math] is the variance of the error terms (see Proofs involving ordinary least squares). At the same time the sum of squared residuals Q is distributed proportionally to [math]\displaystyle{ \chi^2 }[/math] with n − 2 degrees of freedom, and independently from [math]\displaystyle{ \widehat{\beta} }[/math]. This allows us to construct a t-value

- [math]\displaystyle{ t = \frac{\widehat\beta - \beta}{s_{\widehat\beta}}\ \sim\ t_{n - 2}, }[/math]

where

- [math]\displaystyle{ s_{\widehat\beta} = \sqrt{ \frac{\frac{1}{n - 2}\sum_{i=1}^n \widehat{\varepsilon}_i^{\,2}} {\sum_{i=1}^n (x_i -\bar{x})^2} } }[/math]

is the standard error of the estimator [math]\displaystyle{ \widehat{\beta} }[/math].

This t-value has a Student's t-distribution with n − 2 degrees of freedom. Using it we can construct a confidence interval for β:

- [math]\displaystyle{ \beta \in \left[\widehat\beta - s_{\widehat\beta} t^*_{n - 2},\ \widehat\beta + s_{\widehat\beta} t^*_{n - 2}\right], }[/math]

at confidence level (1 − γ), where [math]\displaystyle{ t^*_{n - 2} }[/math] is the [math]\displaystyle{ \scriptstyle \left(1 \;-\; \frac{\gamma}{2}\right)\text{-th} }[/math] quantile of the [math]\displaystyle{ t_{n-2} }[/math] distribution. For example, if γ = 0.05 then the confidence level is 95%.

Similarly, the confidence interval for the intercept coefficient α is given by

- [math]\displaystyle{ \alpha \in \left[ \widehat\alpha - s_{\widehat\alpha} t^*_{n - 2},\ \widehat\alpha + s_{\widehat\alpha} t^*_{n - 2}\right], }[/math]

at confidence level (1 − γ), where

- [math]\displaystyle{ s_{\widehat\alpha} = s_{\widehat\beta}\sqrt{\frac{1}{n} \sum_{i=1}^n x_i^2} = \sqrt{\frac{1}{n(n - 2)} \left(\sum_{i=1}^n \widehat{\varepsilon}_i^{\,2} \right) \frac{\sum_{i=1}^n x_i^2} {\sum_{i=1}^n (x_i - \bar{x})^2} } }[/math]

The confidence intervals for α and β give us the general idea where these regression coefficients are most likely to be. For example, in the Okun's law regression shown here the point estimates are

- [math]\displaystyle{ \widehat{\alpha} = 0.859, \qquad \widehat{\beta} = -1.817. }[/math]

The 95% confidence intervals for these estimates are

- [math]\displaystyle{ \alpha \in \left[\,0.76, 0.96\right], \qquad \beta \in \left[-2.06, -1.58 \,\right]. }[/math]

In order to represent this information graphically, in the form of the confidence bands around the regression line, one has to proceed carefully and account for the joint distribution of the estimators. It can be shown[11] that at confidence level (1 − γ) the confidence band has hyperbolic form given by the equation

- [math]\displaystyle{ (\alpha + \beta \xi) \in \left[ \,\widehat{\alpha} + \widehat{\beta} \xi \pm t^*_{n - 2} \sqrt{ \left(\frac{1}{n - 2} \sum\widehat{\varepsilon}_i^{\,2} \right) \cdot \left(\frac{1}{n} + \frac{(\xi - \bar{x})^2}{\sum(x_i - \bar{x})^2}\right)}\,\right]. }[/math]
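The band formula can be evaluated pointwise. The sketch below computes the half-width of the band at a given ξ, using the height and mass data and the quantile 2.1604 (the 0.975 quantile of Student's t with 13 degrees of freedom) that this article quotes in its numerical example; the function name `band_half_width` is an illustrative assumption, and the quantile is passed in rather than computed.

```python
from math import sqrt


def band_half_width(xs, ys, xi, t_star):
    """Half-width of the confidence band for alpha + beta * xi at the point xi."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sum_dx2 = sum((x - x_bar) ** 2 for x in xs)
    beta_hat = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sum_dx2
    alpha_hat = y_bar - beta_hat * x_bar
    # Residual variance estimate with n - 2 degrees of freedom.
    ssr = sum((y - alpha_hat - beta_hat * x) ** 2 for x, y in zip(xs, ys))
    s2 = ssr / (n - 2)
    return t_star * sqrt(s2 * (1.0 / n + (xi - x_bar) ** 2 / sum_dx2))


heights = [1.47, 1.50, 1.52, 1.55, 1.57, 1.60, 1.63, 1.65,
           1.68, 1.70, 1.73, 1.75, 1.78, 1.80, 1.83]
masses = [52.21, 53.12, 54.48, 55.84, 57.20, 58.57, 59.93, 61.29,
          63.11, 64.47, 66.28, 68.10, 69.92, 72.19, 74.46]
T_STAR_13 = 2.1604  # quoted in the article's numerical example, not computed here

x_bar = sum(heights) / len(heights)
width_at_mean = band_half_width(heights, masses, x_bar, T_STAR_13)
width_at_min = band_half_width(heights, masses, min(heights), T_STAR_13)
width_at_max = band_half_width(heights, masses, max(heights), T_STAR_13)
print(width_at_mean, width_at_min, width_at_max)
```

The band is narrowest at the mean of x and widens toward the ends of the data, which is the hyperbolic shape described above.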

When the model assumes that the intercept is fixed and equal to 0 ([math]\displaystyle{ \alpha = 0 }[/math]), the standard error of the slope becomes:

- [math]\displaystyle{ s_{\widehat\beta} = \sqrt{ \frac{1}{n - 1} \frac{\sum_{i=1}^n \widehat{\varepsilon}_i^{\,2}} {\sum_{i=1}^n x_i^2} } }[/math]

With: [math]\displaystyle{ \hat{\varepsilon}_i = y_i - \hat y_i }[/math]

Asymptotic assumption

The alternative second assumption states that when the number of points in the dataset is "large enough", the law of large numbers and the central limit theorem become applicable, and then the distribution of the estimators is approximately normal. Under this assumption all formulas derived in the previous section remain valid, with the only exception that the quantile [math]\displaystyle{ t^*_{n-2} }[/math] of Student's t-distribution is replaced with the quantile [math]\displaystyle{ q^* }[/math] of the standard normal distribution. Occasionally the fraction [math]\displaystyle{ \tfrac{1}{n-2} }[/math] is replaced with [math]\displaystyle{ \tfrac{1}{n} }[/math]. When n is large such a change does not alter the results appreciably.
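Under the asymptotic assumption the only change to the interval is the quantile. A small sketch using the standard library's `statistics.NormalDist` follows; the function name `normal_interval` and the estimate and standard error passed to it are hypothetical values chosen for illustration.

```python
from statistics import NormalDist


def normal_interval(estimate, std_error, gamma=0.05):
    """Large-sample confidence interval at level 1 - gamma using the normal quantile q*."""
    q_star = NormalDist().inv_cdf(1.0 - gamma / 2.0)
    return estimate - q_star * std_error, estimate + q_star * std_error


# Normal quantile for a 95% interval (gamma = 0.05).
q_star = NormalDist().inv_cdf(0.975)

# Hypothetical estimate 10.0 with standard error 2.0.
low, high = normal_interval(10.0, 2.0)
print(q_star, low, high)
```

Since the normal quantile is smaller than the corresponding Student's t quantile for any finite number of degrees of freedom, the asymptotic interval is slightly narrower than the exact one; the gap shrinks as n grows.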

Numerical example

This data set gives average masses for women as a function of their height in a sample of American women of age 30–39. Although the OLS article argues that it would be more appropriate to run a quadratic regression for this data, the simple linear regression model is applied here instead.

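| Height (m), [math]\displaystyle{ x_i }[/math] | 1.47 | 1.50 | 1.52 | 1.55 | 1.57 | 1.60 | 1.63 | 1.65 | 1.68 | 1.70 | 1.73 | 1.75 | 1.78 | 1.80 | 1.83 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Mass (kg), [math]\displaystyle{ y_i }[/math] | 52.21 | 53.12 | 54.48 | 55.84 | 57.20 | 58.57 | 59.93 | 61.29 | 63.11 | 64.47 | 66.28 | 68.10 | 69.92 | 72.19 | 74.46 |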

| [math]\displaystyle{ i }[/math] | [math]\displaystyle{ x_i }[/math] | [math]\displaystyle{ y_i }[/math] | [math]\displaystyle{ x^2_i }[/math] | [math]\displaystyle{ x_{i} y_{i} }[/math] | [math]\displaystyle{ y_i^2 }[/math] |

|---|---|---|---|---|---|

| 1 | 1.47 | 52.21 | 2.1609 | 76.7487 | 2725.8841 |

| 2 | 1.50 | 53.12 | 2.2500 | 79.6800 | 2821.7344 |

| 3 | 1.52 | 54.48 | 2.3104 | 82.8096 | 2968.0704 |

| 4 | 1.55 | 55.84 | 2.4025 | 86.5520 | 3118.1056 |

| 5 | 1.57 | 57.20 | 2.4649 | 89.8040 | 3271.8400 |

| 6 | 1.60 | 58.57 | 2.5600 | 93.7120 | 3430.4449 |

| 7 | 1.63 | 59.93 | 2.6569 | 97.6859 | 3591.6049 |

| 8 | 1.65 | 61.29 | 2.7225 | 101.1285 | 3756.4641 |

| 9 | 1.68 | 63.11 | 2.8224 | 106.0248 | 3982.8721 |

| 10 | 1.70 | 64.47 | 2.8900 | 109.5990 | 4156.3809 |

| 11 | 1.73 | 66.28 | 2.9929 | 114.6644 | 4393.0384 |

| 12 | 1.75 | 68.10 | 3.0625 | 119.1750 | 4637.6100 |

| 13 | 1.78 | 69.92 | 3.1684 | 124.4576 | 4888.8064 |

| 14 | 1.80 | 72.19 | 3.2400 | 129.9420 | 5211.3961 |

| 15 | 1.83 | 74.46 | 3.3489 | 136.2618 | 5544.2916 |

| [math]\displaystyle{ \Sigma }[/math] | 24.76 | 931.17 | 41.0532 | 1548.2453 | 58498.5439 |

There are n = 15 points in this data set. Hand calculations would be started by finding the following five sums:

- [math]\displaystyle{ \begin{align} S_{x} &= \sum x_i \, = 24.76, \qquad S_{y} = \sum y_i \, = 931.17, \\[5pt] S_{xx} &= \sum x_i^2 = 41.0532, \;\;\, S_{yy} = \sum y_i^2 = 58498.5439, \\[5pt] S_{xy} &= \sum x_iy_i = 1548.2453 \end{align} }[/math]

These quantities would be used to calculate the estimates of the regression coefficients, and their standard errors.

- [math]\displaystyle{ \begin{align} \widehat\beta &= \frac{nS_{xy} - S_xS_y}{nS_{xx} - S_x^2} = 61.272 \\[8pt] \widehat\alpha &= \frac{1}{n}S_y - \widehat{\beta} \frac{1}{n}S_x = -39.062 \\[8pt] s_\varepsilon^2 &= \frac{1}{n(n - 2)} \left[ nS_{yy} - S_y^2 - \widehat\beta^2(nS_{xx} - S_x^2) \right] = 0.5762 \\[8pt] s_{\widehat\beta}^2 &= \frac{n s_\varepsilon^2}{nS_{xx} - S_x^2} = 3.1539 \\[8pt] s_{\widehat\alpha}^2 &= s_{\widehat\beta}^2 \frac{1}{n} S_{xx} = 8.63185 \end{align} }[/math]

The 0.975 quantile of Student's t-distribution with 13 degrees of freedom is [math]\displaystyle{ t^*_{13} = 2.1604 }[/math], and thus the 95% confidence intervals for α and β are

- [math]\displaystyle{ \begin{align} & \alpha \in [\,\widehat{\alpha} \pm t^*_{13} s_{\widehat\alpha} \,] = [\,{-45.4},\ {-32.7}\,] \\[5pt] & \beta \in [\,\widehat{\beta} \pm t^*_{13} s_{\widehat\beta} \,] = [\, 57.4,\ 65.1 \,] \end{align} }[/math]

The product-moment correlation coefficient might also be calculated:

- [math]\displaystyle{ \widehat{r} = \frac{nS_{xy} - S_xS_y}{\sqrt{(nS_{xx} - S_x^2)(nS_{yy} - S_y^2)}} = 0.9946 }[/math]
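The hand calculation above can be reproduced in a few lines of Python. This sketch follows the same five sums and formulas; the variable names are illustrative, and the t quantile is taken from the text rather than computed.

```python
from math import sqrt

heights = [1.47, 1.50, 1.52, 1.55, 1.57, 1.60, 1.63, 1.65,
           1.68, 1.70, 1.73, 1.75, 1.78, 1.80, 1.83]
masses = [52.21, 53.12, 54.48, 55.84, 57.20, 58.57, 59.93, 61.29,
          63.11, 64.47, 66.28, 68.10, 69.92, 72.19, 74.46]
T_STAR_13 = 2.1604  # 0.975 quantile of Student's t with 13 df, as quoted in the text

n = len(heights)
# The five sums used in the hand calculation.
s_x = sum(heights)
s_y = sum(masses)
s_xx = sum(x * x for x in heights)
s_yy = sum(y * y for y in masses)
s_xy = sum(x * y for x, y in zip(heights, masses))

d = n * s_xx - s_x ** 2
beta_hat = (n * s_xy - s_x * s_y) / d
alpha_hat = s_y / n - beta_hat * s_x / n

# Residual variance and squared standard errors of the coefficients.
s2_eps = (n * s_yy - s_y ** 2 - beta_hat ** 2 * d) / (n * (n - 2))
s2_beta = n * s2_eps / d
s2_alpha = s2_beta * s_xx / n

ci_alpha = (alpha_hat - T_STAR_13 * sqrt(s2_alpha), alpha_hat + T_STAR_13 * sqrt(s2_alpha))
ci_beta = (beta_hat - T_STAR_13 * sqrt(s2_beta), beta_hat + T_STAR_13 * sqrt(s2_beta))

# Product-moment correlation coefficient.
r = (n * s_xy - s_x * s_y) / sqrt(d * (n * s_yy - s_y ** 2))

print(f"beta_hat = {beta_hat:.3f}, alpha_hat = {alpha_hat:.3f}, r = {r:.4f}")
print(f"95% CI alpha: [{ci_alpha[0]:.1f}, {ci_alpha[1]:.1f}]")
print(f"95% CI beta:  [{ci_beta[0]:.1f}, {ci_beta[1]:.1f}]")
```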

Alternatives

In SLR, there is an underlying assumption that only the dependent variable contains measurement error; if the explanatory variable is also measured with error, then simple regression is not appropriate for estimating the underlying relationship because it will be biased due to regression dilution.

Other estimation methods that can be used in place of ordinary least squares include least absolute deviations (minimizing the sum of absolute values of residuals) and the Theil–Sen estimator (which chooses a line whose slope is the median of the slopes determined by pairs of sample points).
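To illustrate how the Theil–Sen slope differs from the OLS slope, the sketch below computes the median of the pairwise slopes and compares it with the least-squares slope on hypothetical data containing one outlier. The function names are illustrative, and pairs with equal x values are skipped since their slope is undefined.

```python
from itertools import combinations
from statistics import median


def theil_sen_slope(xs, ys):
    """Median of the slopes determined by all pairs of points with distinct x."""
    slopes = [
        (y2 - y1) / (x2 - x1)
        for (x1, y1), (x2, y2) in combinations(zip(xs, ys), 2)
        if x2 != x1
    ]
    if not slopes:
        raise ValueError("need at least two points with distinct x values")
    return median(slopes)


def ols_slope(xs, ys):
    """Ordinary least-squares slope, for comparison."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    return (sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
            / sum((x - x_bar) ** 2 for x in xs))


# Hypothetical data: four points on y = x plus one gross outlier.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [0.0, 1.0, 2.0, 3.0, 40.0]

robust_slope = theil_sen_slope(xs, ys)
least_squares_slope = ols_slope(xs, ys)
print(robust_slope, least_squares_slope)
```

On this data the median slope stays at the slope of the four aligned points, while the least-squares slope is pulled strongly toward the outlier.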

Deming regression (total least squares) also finds a line that fits a set of two-dimensional sample points, but (unlike ordinary least squares, least absolute deviations, and median slope regression) it is not really an instance of simple linear regression, because it does not separate the coordinates into one dependent and one independent variable and could potentially return a vertical line as its fit.

Line fitting

Line fitting is the process of constructing a straight line that has the best fit to a series of data points.

Several methods exist, considering:

- Vertical distance: Simple linear regression

- Resistance to outliers: Robust simple linear regression

- Perpendicular distance: Orthogonal regression

- Weighted geometric distance: Deming regression

- Scale invariance: Major axis regression

Simple linear regression without the intercept term (single regressor)

Sometimes it is appropriate to force the regression line to pass through the origin, because x and y are assumed to be proportional. For the model without the intercept term, y = βx, the OLS estimator for β simplifies to

- [math]\displaystyle{ \widehat{\beta} = \frac{ \sum_{i=1}^n x_i y_i }{ \sum_{i=1}^n x_i^2 } = \frac{\overline{x y}}{\overline{x^2}} }[/math]

Substituting (x − h, y − k) in place of (x, y) gives the regression through (h, k):

- [math]\displaystyle{ \begin{align} \widehat\beta &= \frac{ \sum_{i=1}^n (x_i - h) (y_i - k) }{ \sum_{i=1}^n (x_i - h)^2 } = \frac{\overline{(x - h) (y - k)}}{\overline{(x - h)^2}} \\[6pt] &= \frac{\overline{x y} - k \bar{x} - h \bar{y} + h k }{\overline{x^2} - 2 h \bar{x} + h^2} \\[6pt] &= \frac{\overline{x y} - \bar{x} \bar{y} + (\bar{x} - h)(\bar{y} - k)}{\overline{x^2} - \bar{x}^2 + (\bar{x} - h)^2} \\[6pt] &= \frac{\operatorname{Cov}(x,y) + (\bar{x} - h)(\bar{y}-k)}{\operatorname{Var}(x) + (\bar{x} - h)^2}, \end{align} }[/math]

where Cov and Var refer to the covariance and variance of the sample data (uncorrected for bias). The last form above demonstrates how moving the line away from the center of mass of the data points affects the slope.
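The constrained slope is straightforward to compute. In this sketch (function name and data are hypothetical), the default point (0, 0) gives regression through the origin, and passing the center of mass recovers the ordinary least-squares slope.

```python
def slope_through_point(xs, ys, h=0.0, k=0.0):
    """Least-squares slope of the line forced to pass through the point (h, k)."""
    num = sum((x - h) * (y - k) for x, y in zip(xs, ys))
    den = sum((x - h) ** 2 for x in xs)
    if den == 0:
        raise ValueError("all x values equal h; the slope is undefined")
    return num / den


# Hypothetical data.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 3.0, 5.0, 4.0]

slope_origin = slope_through_point(xs, ys)  # line forced through (0, 0)

x_bar = sum(xs) / len(xs)
y_bar = sum(ys) / len(ys)
slope_center = slope_through_point(xs, ys, x_bar, y_bar)  # equals the OLS slope

# The same origin-constrained slope via the last form of the derivation,
# with uncorrected (divide-by-n) covariance and variance and h = k = 0.
cov_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / len(xs)
var_x = sum((x - x_bar) ** 2 for x in xs) / len(xs)
slope_origin_alt = (cov_xy + x_bar * y_bar) / (var_x + x_bar ** 2)
print(slope_origin, slope_center, slope_origin_alt)
```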

See also

- Design matrix

- Linear trend estimation

- Linear segmented regression

- Proofs involving ordinary least squares—derivation of all formulas used in this article in general multidimensional case

References

- Seltman, Howard J. (2008-09-08). Experimental Design and Analysis. p. 227. http://www.stat.cmu.edu/~hseltman/309/Book/Book.pdf.

- "Statistical Sampling and Regression: Simple Linear Regression". Columbia University. http://ci.columbia.edu/ci/premba_test/c0331/s7/s7_6.html. "When one independent variable is used in a regression, it is called a simple regression;(...)"

- Lane, David M. Introduction to Statistics. p. 462. http://onlinestatbook.com/Online_Statistics_Education.pdf.

- Zou KH; Tuncali K; Silverman SG (2003). "Correlation and simple linear regression" (in English). Radiology 227 (3): 617–22. doi:10.1148/radiol.2273011499. ISSN 0033-8419. OCLC 110941167. PMID 12773666. https://repositorio.unal.edu.co/handle/unal/81200.

- Altman, Naomi; Krzywinski, Martin (2015). "Simple linear regression" (in English). Nature Methods 12 (11): 999–1000. doi:10.1038/nmeth.3627. ISSN 1548-7091. OCLC 5912005539. PMID 26824102.

- Kenney, J. F. and Keeping, E. S. (1962) "Linear Regression and Correlation." Ch. 15 in Mathematics of Statistics, Pt. 1, 3rd ed. Princeton, NJ: Van Nostrand, pp. 252–285

- Muthukrishnan, Gowri (17 Jun 2018). "Maths behind Polynomial regression, Muthukrishnan". https://muthu.co/maths-behind-polynomial-regression/.

- "Mathematics of Polynomial Regression". http://polynomialregression.drque.net/math.html.

- "Numeracy, Maths and Statistics - Academic Skills Kit, Newcastle University". https://www.ncl.ac.uk/webtemplate/ask-assets/external/maths-resources/statistics/regression-and-correlation/simple-linear-regression.html.

- Valliant, Richard, Jill A. Dever, and Frauke Kreuter. Practical tools for designing and weighting survey samples. New York: Springer, 2013.

- Casella, G. and Berger, R. L. (2002), "Statistical Inference" (2nd Edition), Cengage, ISBN:978-0-534-24312-8, pp. 558–559.

External links

- Wolfram MathWorld's explanation of Least Squares Fitting, and how to calculate it

- Mathematics of simple regression (Robert Nau, Duke University)
